Metrics Server is a scalable, efficient source of container resource metrics for Kubernetes built-in autoscaling pipelines.

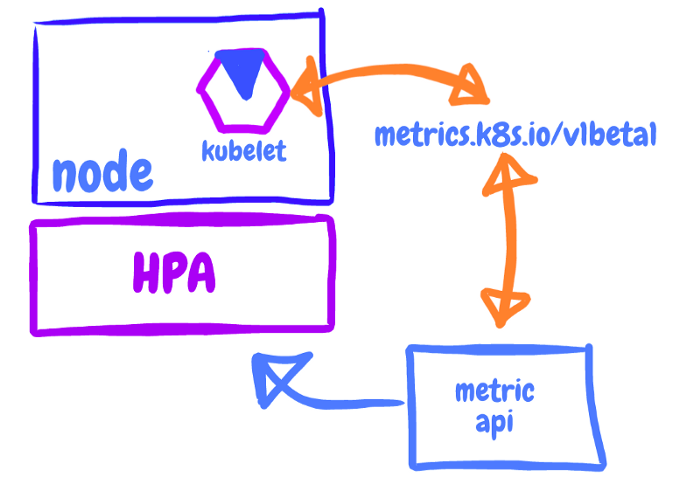

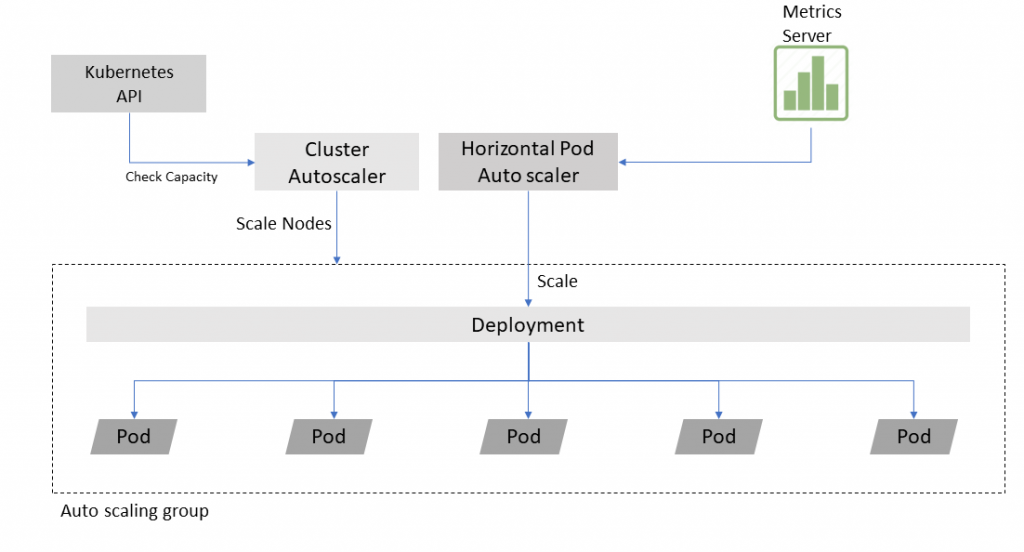

Metrics Server collects resource metrics from Kubelets and exposes them in the Kubernetes API server through the Metrics API, for use by the Horizontal Pod Autoscaler and Vertical Pod Autoscaler. The Metrics API can also be accessed by `kubectl top`, which makes it easier to debug autoscaling pipelines.
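The Metrics API is an ordinary API group (`metrics.k8s.io`), so what `kubectl top` and the autoscalers read is plain JSON. You can inspect it yourself, for example with `kubectl get --raw /apis/metrics.k8s.io/v1beta1/nodes`. The Python sketch below is a minimal illustration under assumptions: it parses a hand-written `NodeMetricsList` (the node names and usage values are invented, not captured output) and converts the Kubernetes quantity strings into a table roughly like `kubectl top nodes`. The helpers `cpu_to_millicores`, `memory_to_mib` and `top_nodes` are hypothetical names, and only the quantity suffixes shown are handled.

```python
import json

# Hypothetical sample of a GET /apis/metrics.k8s.io/v1beta1/nodes response.
# Node names and usage values are made up for illustration.
SAMPLE_NODE_METRICS = """
{
  "kind": "NodeMetricsList",
  "apiVersion": "metrics.k8s.io/v1beta1",
  "items": [
    {"metadata": {"name": "node-a"}, "timestamp": "2024-01-01T00:00:00Z",
     "window": "20s", "usage": {"cpu": "250000000n", "memory": "1048576Ki"}},
    {"metadata": {"name": "node-b"}, "timestamp": "2024-01-01T00:00:00Z",
     "window": "20s", "usage": {"cpu": "1500m", "memory": "2Gi"}}
  ]
}
"""

# Binary suffixes for memory quantities (decimal ones such as "M" are not handled).
_MEM_SUFFIXES = {"Ki": 1024, "Mi": 1024**2, "Gi": 1024**3}


def cpu_to_millicores(quantity: str) -> float:
    """Convert a CPU quantity such as '250000000n', '500u', '1500m' or '2' to millicores."""
    if quantity.endswith("n"):  # nanocores: 1 millicore = 1,000,000n
        return int(quantity[:-1]) / 1_000_000
    if quantity.endswith("u"):  # microcores: 1 millicore = 1,000u
        return int(quantity[:-1]) / 1_000
    if quantity.endswith("m"):  # already millicores
        return float(quantity[:-1])
    return float(quantity) * 1000  # whole cores


def memory_to_mib(quantity: str) -> float:
    """Convert a memory quantity such as '1048576Ki', '2Gi' or plain bytes to MiB."""
    for suffix, factor in _MEM_SUFFIXES.items():
        if quantity.endswith(suffix):
            return int(quantity[: -len(suffix)]) * factor / 1024**2
    return int(quantity) / 1024**2  # no suffix means bytes


def top_nodes(raw_json: str) -> list[tuple[str, int, int]]:
    """Return (name, CPU millicores, memory MiB) rows, roughly like `kubectl top nodes`."""
    rows = []
    for item in json.loads(raw_json)["items"]:
        usage = item["usage"]
        rows.append((
            item["metadata"]["name"],
            round(cpu_to_millicores(usage["cpu"])),
            round(memory_to_mib(usage["memory"])),
        ))
    return rows


if __name__ == "__main__":
    for name, cpu_m, mem_mi in top_nodes(SAMPLE_NODE_METRICS):
        print(f"{name:<10} {cpu_m}m {mem_mi}Mi")
```

Run against the sample, it reports `node-a` at 250m CPU and 1024Mi memory and `node-b` at 1500m and 2048Mi. Decimal memory suffixes such as `M` or `G` would need extra cases.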

Metrics Server is not meant for non-autoscaling purposes. For example, don’t use it to forward metrics to monitoring solutions, or as the data source for a monitoring solution.

Metrics Server offers:

- A single deployment that works on most clusters

- Scalable support for clusters of up to 5,000 nodes

- Resource efficiency: Metrics Server uses 0.5 millicores (0.5m) of CPU and 4 MB of memory per node
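To put the per-node figures in perspective, the sketch below multiplies them out for a few cluster sizes. It assumes, purely for illustration, that usage grows linearly with node count; real consumption will also depend on factors such as pod density and scrape interval. `estimate_footprint` is a hypothetical helper, not part of Metrics Server.

```python
# Per-node figures quoted above; linear scaling is an illustrative assumption.
CPU_MILLICORES_PER_NODE = 0.5
MEMORY_MB_PER_NODE = 4


def estimate_footprint(node_count: int) -> tuple[float, int]:
    """Return (CPU cores, memory MB) at the quoted per-node rates."""
    cpu_cores = node_count * CPU_MILLICORES_PER_NODE / 1000  # millicores -> cores
    memory_mb = node_count * MEMORY_MB_PER_NODE
    return cpu_cores, memory_mb


if __name__ == "__main__":
    for nodes in (100, 1_000, 5_000):
        cores, mb = estimate_footprint(nodes)
        print(f"{nodes:>5} nodes -> {cores:.2f} CPU cores, {mb:,} MB memory")
```

At the 5,000-node upper bound this works out to 2.5 CPU cores and 20,000 MB (about 20 GB) of memory.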

The way it works is relatively simple:

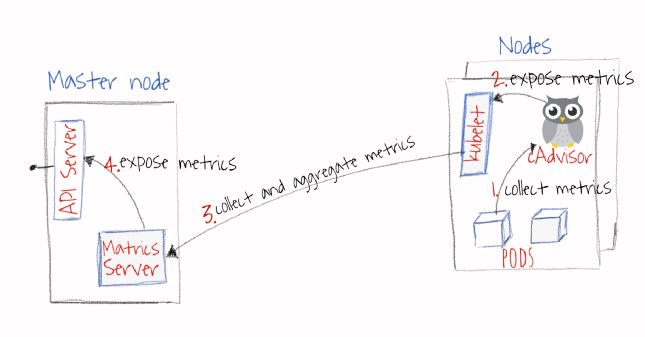

- cAdvisor collects metrics about the containers and the node on which it runs. Note: cAdvisor is built into the Kubelet, so it runs on every cluster node by default.

- The Kubelet exposes these metrics (with a default resolution of one minute) through the Kubelet API.

- Metrics Server discovers all available nodes and calls each node's Kubelet API to get container and node resource usage.

- Metrics Server exposes these metrics as the Metrics API through the Kubernetes API aggregation layer.
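The steps above can be sketched as a single scrape cycle. This is a simplified Python model, not Metrics Server's actual implementation (which is written in Go): `KubeletSample`, `fetch_kubelet_sample` and `scrape` are hypothetical names, the Kubelet API call is replaced by a dictionary lookup, and the node list is passed in rather than discovered. It illustrates one assumed detail: CPU is reported as a cumulative counter, so usage in cores is computed as the change in CPU seconds divided by the time between two samples, while memory is taken as a point-in-time value.

```python
from dataclasses import dataclass


@dataclass
class KubeletSample:
    """One reading from a node's Kubelet (hypothetical shape, for illustration)."""
    timestamp: float                # seconds since epoch
    cpu_usage_seconds_total: float  # cumulative CPU time, a counter that only grows
    memory_working_set_bytes: int   # point-in-time memory usage


def fetch_kubelet_sample(node: str, fake_kubelets: dict[str, KubeletSample]) -> KubeletSample:
    """Stand-in for calling the Kubelet API on `node`; here it only reads a dict."""
    return fake_kubelets[node]


def scrape(
    nodes: list[str],
    fake_kubelets: dict[str, KubeletSample],
    previous: dict[str, KubeletSample],
) -> dict[str, dict]:
    """Run one scrape cycle and return NodeMetrics-like dicts keyed by node name."""
    results: dict[str, dict] = {}
    for node in nodes:
        sample = fetch_kubelet_sample(node, fake_kubelets)
        prev = previous.get(node)
        if prev is not None and sample.timestamp > prev.timestamp:
            window = sample.timestamp - prev.timestamp
            # CPU is a cumulative counter, so usage (in cores) is its rate of change.
            cores = (sample.cpu_usage_seconds_total - prev.cpu_usage_seconds_total) / window
            results[node] = {
                "metadata": {"name": node},
                "window": f"{window:g}s",
                "usage": {
                    "cpu": f"{round(cores * 1e9)}n",  # nanocores
                    "memory": f"{sample.memory_working_set_bytes // 1024}Ki",
                },
            }
        # Remember this sample; a node seen for the first time is reported next cycle.
        previous[node] = sample
    return results


if __name__ == "__main__":
    previous: dict[str, KubeletSample] = {}
    # Invented readings: node-a used 3 CPU-seconds over 15 seconds.
    first = {"node-a": KubeletSample(0.0, 100.0, 512 * 1024**2)}
    second = {"node-a": KubeletSample(15.0, 103.0, 520 * 1024**2)}
    scrape(["node-a"], first, previous)  # primes the counters, returns {}
    print(scrape(["node-a"], second, previous))
```

In this model, a node only appears in the results from its second scrape onward, because the first cycle has nothing to compare the CPU counter against.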

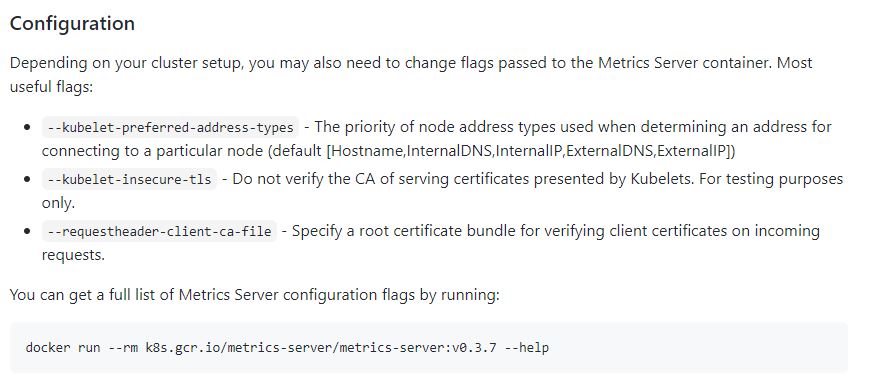

How to install it?

Reference

I’m a DevOps/SRE/DevSecOps/Cloud Expert passionate about sharing knowledge and experiences. I have worked at Cotocus. I share tech blogs at DevOps School, travel stories at Holiday Landmark, stock market tips at Stocks Mantra, health and fitness guidance at My Medic Plus, product reviews at TrueReviewNow, and SEO strategies at Wizbrand.


Please find my social handles below:

Rajesh Kumar Personal Website

Rajesh Kumar at YOUTUBE

Rajesh Kumar at INSTAGRAM

Rajesh Kumar at X

Rajesh Kumar at FACEBOOK

Rajesh Kumar at LINKEDIN

Rajesh Kumar at WIZBRAND
