Multi-cluster Ingress (MCI) lets you manage ingress, the entry point for external traffic into a network, across multiple Kubernetes clusters from one place. It is most useful for applications deployed globally across several regions or clusters, because it gives them a single, unified way to control access. MCI routes external traffic to the appropriate cluster, which improves both the reliability and the scalability of applications.

Here are key features and benefits of Multi-cluster Ingress:

- Global Load Balancing: MCI can intelligently route traffic to different clusters based on factors like region, latency, and health of the service. This ensures users are directed to the nearest or best-performing cluster, improving the overall user experience.

- Centralized Management: You configure ingress rules from a single point, even though they are applied across multiple clusters. This reduces the complexity of managing global applications.

- High Availability and Redundancy: By spreading resources across multiple clusters, MCI enhances the availability and fault tolerance of applications. If one cluster goes down, traffic can be automatically rerouted to another healthy cluster.

- Cross-Region Failover: In the event of a regional outage or a significant drop in performance within one cluster, MCI can perform automatic failover to another cluster in a different region, ensuring continuous availability of services.

- Cost Efficiency: MCI helps optimize resource utilization across clusters, potentially leading to cost savings. Traffic can be routed to clusters where resources are less expensive or more abundant.

- Simplified DNS Management: MCI solutions typically reduce DNS work, either by updating DNS records automatically based on the health and location of clusters or, as in GKE's Multi Cluster Ingress, by exposing a single anycast IP address so that one DNS record serves every cluster. Either way, there is no per-cluster DNS to manage by hand.

How does Multi-cluster Ingress (MCI) work?

Multi-cluster Ingress (MCI) works by managing and routing external traffic into applications running across multiple Kubernetes clusters. This process involves several components and steps to ensure that traffic is efficiently and securely routed to the appropriate destination based on predefined rules and policies. Here’s a high-level overview of how MCI operates:

1. Deployment Across Multiple Clusters

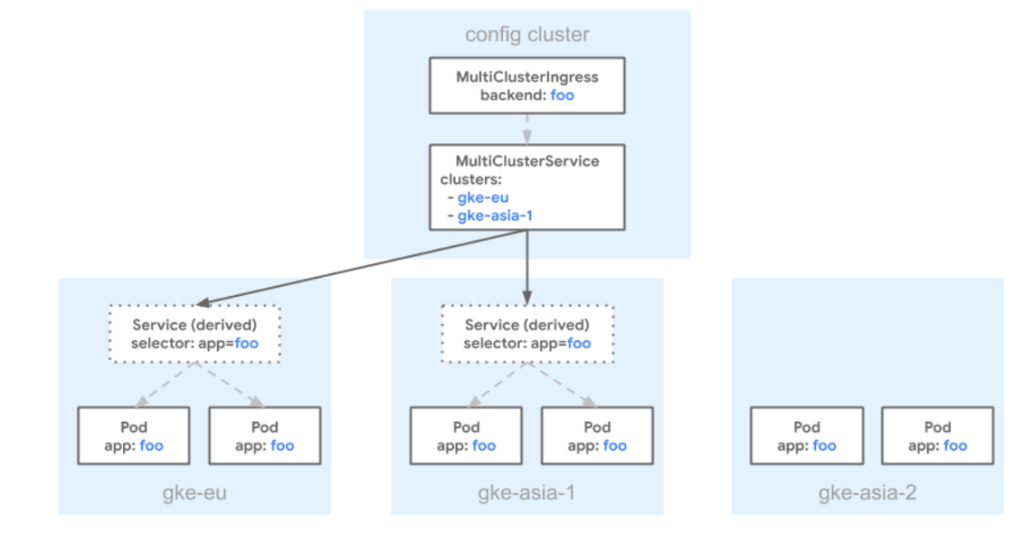

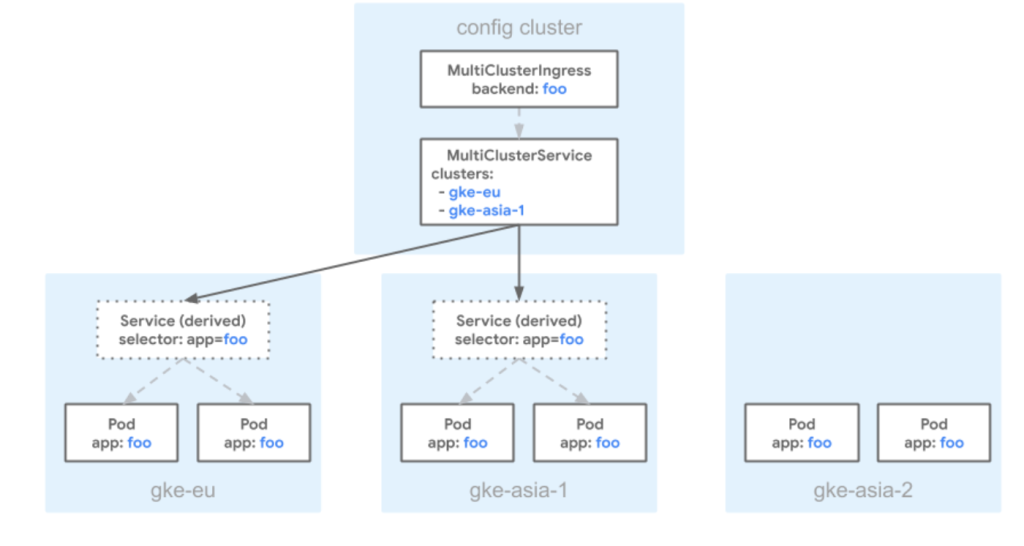

- Cluster Preparation: You deploy your application across multiple Kubernetes clusters, often spread across different geographical locations or cloud regions, to ensure high availability and resilience.

- Ingress Configuration: Each cluster has its own set of resources and services that need to be exposed externally. With MCI, you configure ingress resources that are aware of the multi-cluster environment.
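As one concrete illustration of a multi-cluster-aware resource, here is a minimal sketch that assumes Google Kubernetes Engine's Multi Cluster Ingress, the implementation that provides the `MultiClusterService` kind. It is not a definitive configuration: the `web` names, the port numbers, and the two cluster links are placeholders chosen for illustration.

```yaml
# Sketch only: applied to the config cluster, assuming GKE Multi Cluster Ingress.
apiVersion: networking.gke.io/v1
kind: MultiClusterService
metadata:
  name: web-mcs            # hypothetical name
  namespace: web           # hypothetical namespace
spec:
  template:
    spec:
      selector:
        app: web           # must match the Pod labels in each member cluster
      ports:
      - name: http
        protocol: TCP
        port: 8080
        targetPort: 8080
  # Optional: restrict the service to specific member clusters.
  # Links take the form "<location>/<cluster-name>"; both entries are placeholders.
  clusters:
  - link: "us-central1-a/gke-us"
  - link: "europe-west1-c/gke-eu"
```

Leaving out the `clusters` field should make the service span every member cluster rather than a named subset.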

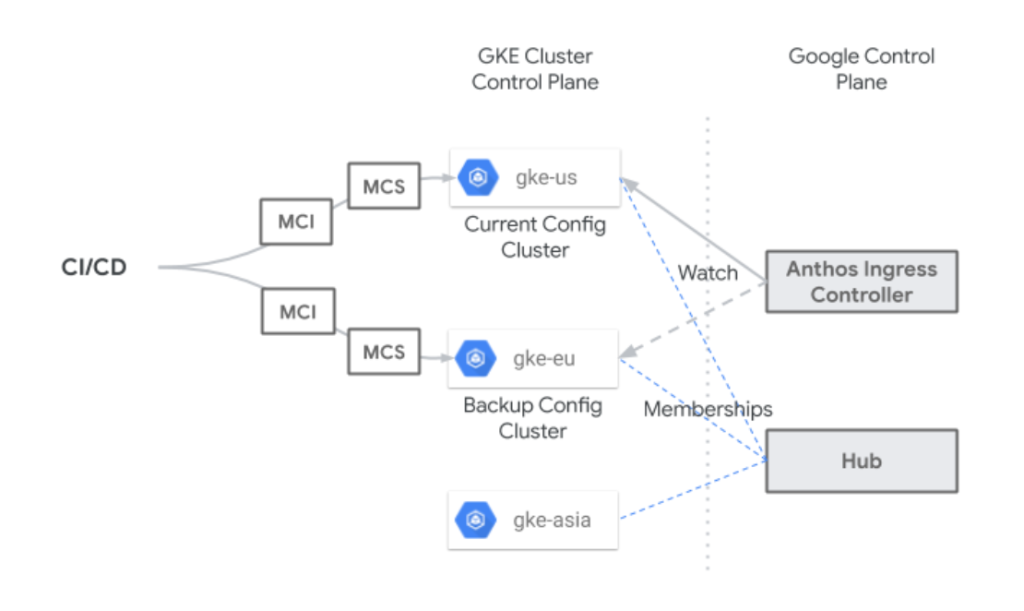

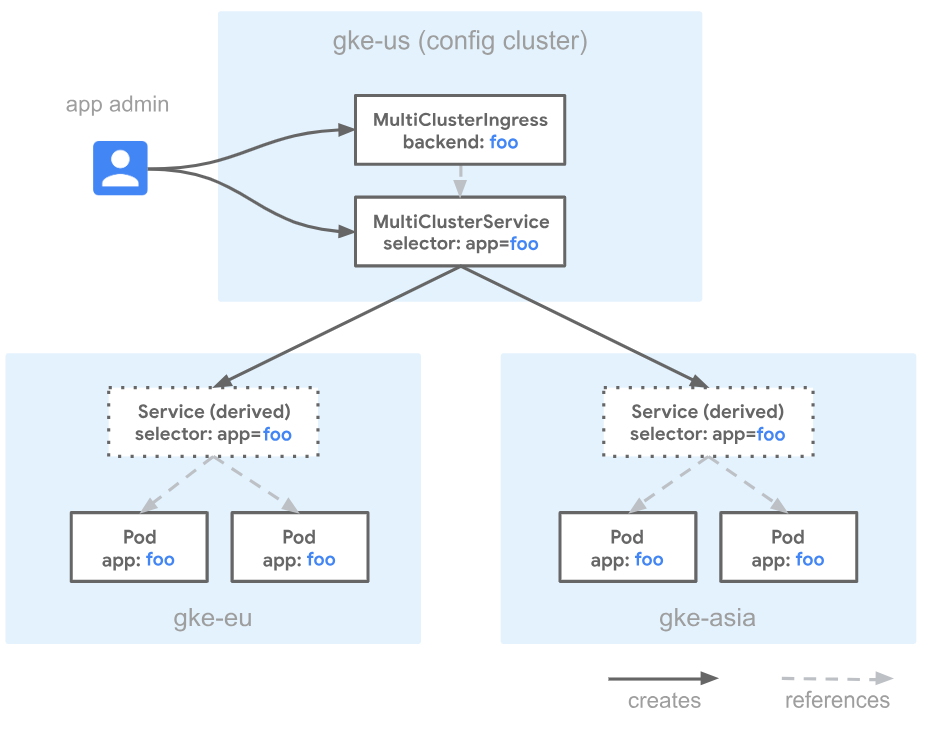

2. Central Management and Configuration

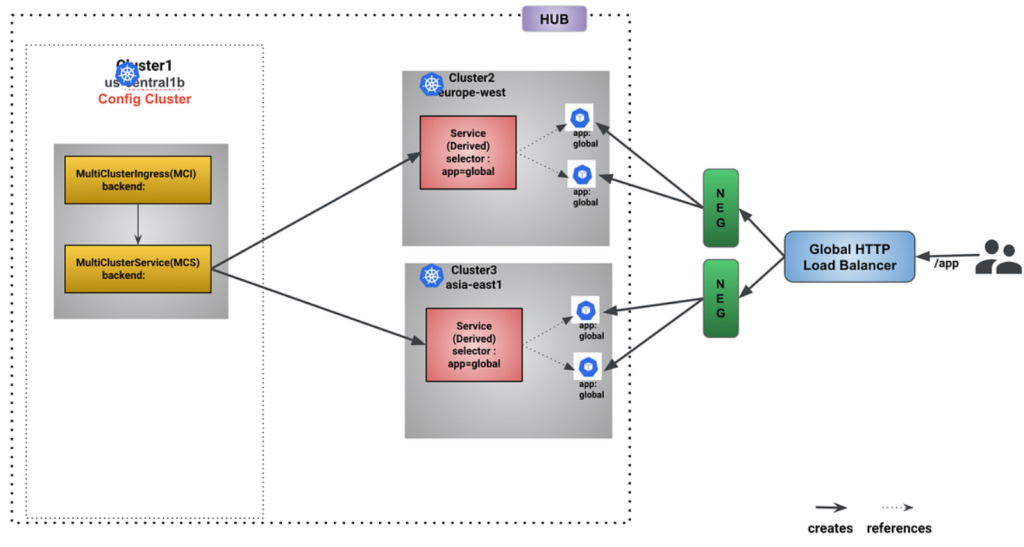

- Unified Ingress Control: A central control plane is used to manage the ingress resources across all participating clusters. This is where you define the rules for how external traffic should be routed to your services.

- DNS and Global Load Balancer Setup: MCI integrates with global load balancers and DNS systems to direct users to the closest or most appropriate cluster based on various criteria like location, latency, and the health of the clusters.

3. Traffic Routing

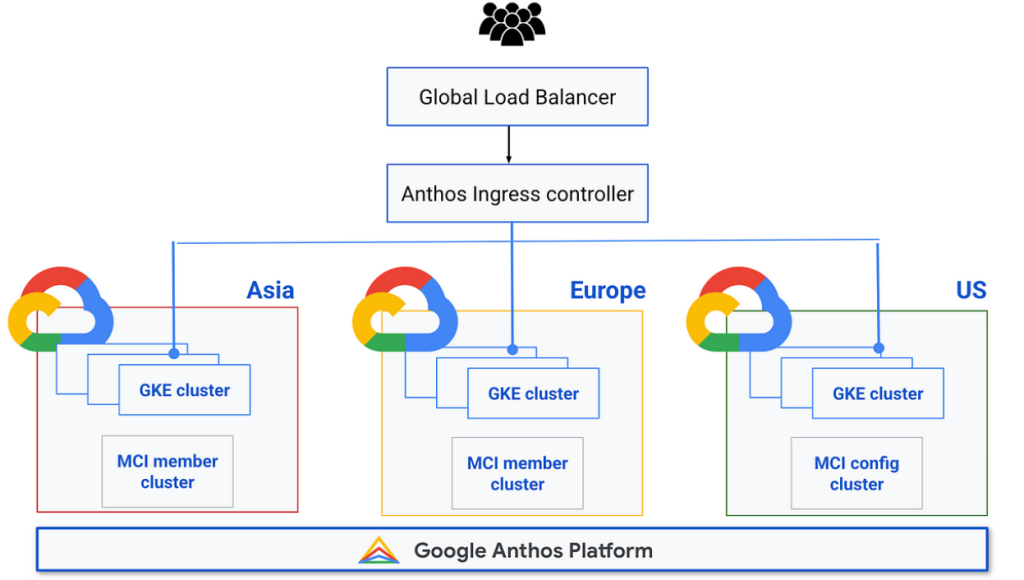

- Initial Request: When a user makes a request to access the application, the DNS resolution directs the request to the global load balancer.

- Global Load Balancing: The global load balancer evaluates the request against the configured routing rules and the current state of the clusters (e.g., load, health). It then selects the optimal cluster to handle the request.

- Cluster Selection: The criteria for cluster selection can include geographic proximity to the user, the health and capacity of the clusters, and other custom rules defined in the MCI configuration.

- Request Forwarding: Once the optimal cluster is selected, the global load balancer forwards the request to an ingress controller in that cluster.

- Service Routing: The ingress controller within the chosen cluster then routes the request to the appropriate service based on the path, host, or other headers in the request.
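The host- and path-based routing in the last step can be sketched as a `MultiClusterIngress` manifest. This assumes GKE's Multi Cluster Ingress API (`networking.gke.io/v1`); the hostname, paths, and the `frontend-mcs` and `api-mcs` service names are hypothetical and would each need a matching `MultiClusterService`.

```yaml
# Sketch only, assuming GKE Multi Cluster Ingress; names and hosts are placeholders.
apiVersion: networking.gke.io/v1
kind: MultiClusterIngress
metadata:
  name: shop-ingress
  namespace: shop
spec:
  template:
    spec:
      # Default backend for requests that match no rule below.
      backend:
        serviceName: frontend-mcs
        servicePort: 8080
      rules:
      - host: shop.example.com
        http:
          paths:
          # Requests under /api/ go to the API service.
          - path: /api/*
            backend:
              serviceName: api-mcs
              servicePort: 8080
          # Everything else on this host goes to the frontend.
          - path: /*
            backend:
              serviceName: frontend-mcs
              servicePort: 8080
```

The rules say nothing about which cluster serves a request. That choice is made earlier by the global load balancer, and the same rules then apply in whichever cluster is selected.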

4. Health Checks and Failover

- Continuous Monitoring: MCI continuously monitors the health and performance of all clusters and their services. This includes performing health checks and monitoring metrics to ensure each service is functioning correctly.

- Failover and Redundancy: In case a cluster becomes unhealthy or is unable to handle additional traffic, MCI automatically reroutes traffic to another healthy cluster, ensuring uninterrupted access to the application.
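On GKE, the health checks that drive this failover can be tuned with a `BackendConfig`. The following is a minimal sketch under that assumption; the resource name, the `/healthz` path, the port, and the thresholds are illustrative values, not recommendations.

```yaml
# Sketch only, assuming GKE; all values are illustrative.
apiVersion: cloud.google.com/v1
kind: BackendConfig
metadata:
  name: web-health-check   # hypothetical name
  namespace: web
spec:
  healthCheck:
    type: HTTP
    port: 8080
    requestPath: /healthz  # hypothetical health endpoint served by the app
    checkIntervalSec: 10   # probe every 10 seconds
    timeoutSec: 5          # must not exceed checkIntervalSec
    healthyThreshold: 2    # consecutive successes before marking healthy
    unhealthyThreshold: 3  # consecutive failures before marking unhealthy
```

A `MultiClusterService` picks up this configuration through the `cloud.google.com/backend-config` annotation, for example `'{"default": "web-health-check"}'`. Backends that fail the check stop receiving traffic, which is what allows requests to shift to a healthy cluster.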

5. Scalability and Maintenance

- Dynamic Scaling: As traffic patterns change or as clusters are added or removed, MCI dynamically adjusts routing rules and load balancing to optimize performance and resource utilization.

- Configuration Updates: Changes to the application or its deployment across clusters can be managed centrally through the MCI configuration, simplifying updates and maintenance.

Example Deployment YAML for Multi-cluster Ingress with FrontendConfig and BackendConfig

This example includes:

- A simple web application deployment.

- A service to expose the application within the cluster.

- A `MultiClusterService` to expose the service across clusters.

- A `MultiClusterIngress` to expose the service externally with `FrontendConfig` and `BackendConfig`.
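The manifests below are a hedged sketch of such a deployment, assuming GKE's Multi Cluster Ingress and an existing `web` namespace. Every name, the `nginx` image, the port numbers, the timeout values, and the `web-cert` certificate are placeholders introduced for illustration, not values from a real environment.

```yaml
# Sketch only, assuming GKE Multi Cluster Ingress. All names and values are placeholders.

# 1. Web application, applied to every member cluster.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web
  namespace: web
spec:
  replicas: 2
  selector:
    matchLabels:
      app: web
  template:
    metadata:
      labels:
        app: web
    spec:
      containers:
      - name: web
        image: nginx:1.27          # placeholder image
        ports:
        - containerPort: 80
---
# 2. Service exposing the application inside each cluster.
apiVersion: v1
kind: Service
metadata:
  name: web
  namespace: web
spec:
  selector:
    app: web
  ports:
  - port: 80
    targetPort: 80
---
# 3. BackendConfig: load balancer settings for the backends.
apiVersion: cloud.google.com/v1
kind: BackendConfig
metadata:
  name: web-backend-config
  namespace: web
spec:
  timeoutSec: 30
  connectionDraining:
    drainingTimeoutSec: 60
---
# 4. MultiClusterService: exposes the Pods across clusters (config cluster).
apiVersion: networking.gke.io/v1
kind: MultiClusterService
metadata:
  name: web-mcs
  namespace: web
  annotations:
    cloud.google.com/backend-config: '{"default": "web-backend-config"}'
spec:
  template:
    spec:
      selector:
        app: web
      ports:
      - name: http
        protocol: TCP
        port: 80
        targetPort: 80
---
# 5. FrontendConfig: client-facing settings for the load balancer.
apiVersion: networking.gke.io/v1beta1
kind: FrontendConfig
metadata:
  name: web-frontend-config
  namespace: web
spec:
  redirectToHttps:
    enabled: true
---
# 6. MultiClusterIngress: the external entry point (config cluster).
apiVersion: networking.gke.io/v1
kind: MultiClusterIngress
metadata:
  name: web-ingress
  namespace: web
  annotations:
    networking.gke.io/frontend-config: "web-frontend-config"
    networking.gke.io/pre-shared-certs: "web-cert"   # hypothetical certificate name
spec:
  template:
    spec:
      backend:
        serviceName: web-mcs
        servicePort: 80
```

The HTTPS redirect in the `FrontendConfig` only makes sense when the load balancer also serves HTTPS, which is why the sketch references a pre-shared certificate. Without a certificate, drop both the redirect and the certificate annotation.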

