Nowadays, big data keeps growing in both volume and variety. Companies want to explore this information quickly to find answers to strategic business questions. Google BigQuery emerged to overcome the difficulty traditional database management systems have with such large volumes of data. It runs in the cloud and executes SQL queries against massive quantities of data, providing real-time insights into that data.

BigQuery is a serverless, highly scalable data warehouse with a built-in query engine. It was designed for analyzing data on the order of billions of rows using SQL. It runs on Google’s cloud infrastructure and can be accessed through a REST-oriented application programming interface (API).

The simplest definition comes from Google itself: “BigQuery is Google’s serverless cloud storage platform designed for large data sets.”

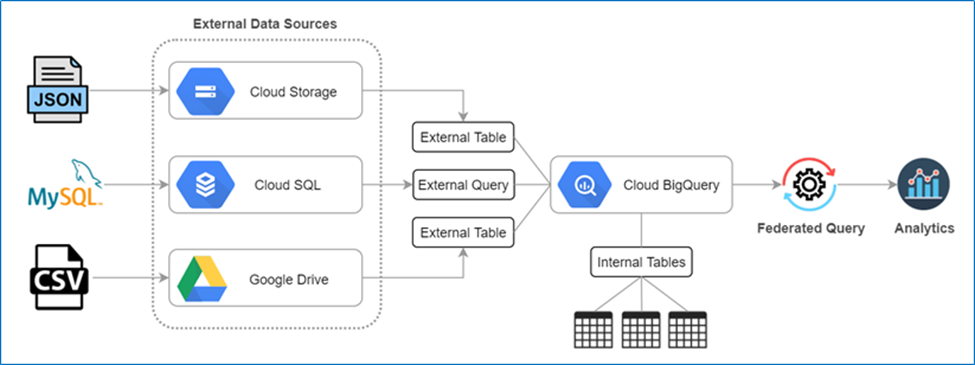

The query engine can run SQL queries on terabytes of data in a matter of seconds, and on petabytes in only minutes. You get this performance without managing any infrastructure and without creating or rebuilding indexes. BigQuery stores data in a columnar format, which achieves a high compression ratio and high scan throughput. You can also use BigQuery to query data stored in other Google Cloud services, such as Bigtable, Cloud Storage, Cloud SQL, and Google Drive.
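To make the columnar idea concrete, here is a minimal Python sketch on a made-up six-row table. It is a toy model, not BigQuery’s actual storage format: it only counts how many values a row store versus a column store must read to answer `SUM(revenue)`, and it shows how run-length encoding compresses a column with repeated values. The table, column names, and helper functions are all hypothetical.

```python
from itertools import groupby

# Hypothetical sample table, one dict per row.
ROWS = [
    {"country": "US", "device": "mobile", "revenue": 12},
    {"country": "US", "device": "desktop", "revenue": 30},
    {"country": "US", "device": "mobile", "revenue": 7},
    {"country": "DE", "device": "mobile", "revenue": 15},
    {"country": "DE", "device": "desktop", "revenue": 22},
    {"country": "FR", "device": "mobile", "revenue": 9},
]


def to_columns(rows):
    """Pivot row-oriented records into one list of values per column."""
    return {name: [row[name] for row in rows] for name in rows[0]}


def values_read_row_store(rows):
    """A row store reads every field of every row, even unused ones."""
    return sum(len(row) for row in rows)


def values_read_column_store(columns, needed):
    """A column store reads only the columns the query references."""
    return sum(len(columns[name]) for name in needed)


def run_length_encode(values):
    """Collapse runs of identical adjacent values into (value, count) pairs."""
    return [(value, len(list(group))) for value, group in groupby(values)]


columns = to_columns(ROWS)
print("SUM(revenue) =", sum(columns["revenue"]))
print("values read, row store:   ", values_read_row_store(ROWS))
print("values read, column store:", values_read_column_store(columns, ["revenue"]))
print("RLE of country column:    ", run_length_encode(columns["country"]))
```

Because similar values sit next to each other within a column, even a simple encoding like this one shrinks the data, which is one reason columnar storage combines high compression with fast scans.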

There are three main ways to use BigQuery:

- Loading and exporting data: You can quickly load your data into BigQuery, and you can export tables or query results, for example to Cloud Storage, for further analysis elsewhere.

- Querying and viewing data: BigQuery lets you run interactive queries as well as batch queries, and you can create views (virtual tables) over your data.

- Managing data: BigQuery lets you list projects, jobs, datasets, and tables, get information about each of them, and update or patch your datasets. You can also delete and manage any data you load.
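Querying can also be done programmatically through the REST API. The Python sketch below only builds, and does not send, a `jobs.insert` request against the BigQuery API v2 endpoint using the standard library, to show how the same query can be submitted with interactive or batch priority. The project ID and access token are hypothetical placeholders; a real call needs valid OAuth 2.0 credentials, and in practice the official `google-cloud-bigquery` client library is usually more convenient.

```python
import json
import urllib.request

# Sketch only: builds (but does not send) a BigQuery API v2 jobs.insert request.
API_ROOT = "https://bigquery.googleapis.com/bigquery/v2"


def build_query_job_request(project_id, sql, access_token, batch=False):
    """Return a urllib Request that would submit `sql` as a query job."""
    body = {
        "configuration": {
            "query": {
                "query": sql,
                "useLegacySql": False,  # use GoogleSQL (standard SQL)
                # INTERACTIVE starts as soon as possible; BATCH is queued
                # until resources are available.
                "priority": "BATCH" if batch else "INTERACTIVE",
            }
        }
    }
    return urllib.request.Request(
        url=f"{API_ROOT}/projects/{project_id}/jobs",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_query_job_request(
    "my-project",  # hypothetical project ID
    "SELECT word, SUM(word_count) AS n "
    "FROM `bigquery-public-data.samples.shakespeare` GROUP BY word",
    access_token="ACCESS_TOKEN",  # hypothetical placeholder, not a real token
    batch=True,
)
print(req.get_method(), req.full_url)
```

With a real token, passing `req` to `urllib.request.urlopen` would submit the job; the response describes a job that you then poll for results.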

History

BigQuery, which was released as V2 in 2011, is what Google calls an “externalized version” of its home-grown Dremel query service. Both Dremel and BigQuery use columnar storage for fast data scanning and a tree architecture for dispatching queries and aggregating results across huge computer clusters. In early 2013, joins and timestamps were added to the service, and later that year, streaming inserts were added.
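The tree architecture can be illustrated with a simplified Python sketch, assuming a query like `SELECT AVG(x)`: each leaf computes a partial `(sum, count)` over its own shard, intermediate levels merge partials in groups of `fanout`, and the root produces the final average. This illustrates the general serving-tree idea described for Dremel; it is not Google’s implementation, and the shard data and `fanout` parameter are hypothetical.

```python
from typing import List, Sequence, Tuple

# A partial aggregate for AVG: (sum of values, number of values).
Partial = Tuple[float, int]


def leaf_partial(shard: Sequence[float]) -> Partial:
    """Leaf server: scan its own shard and return a partial aggregate."""
    return (sum(shard), len(shard))


def merge_partials(partials: Sequence[Partial]) -> Partial:
    """Intermediate or root server: combine its children's partials."""
    return (sum(p[0] for p in partials), sum(p[1] for p in partials))


def tree_average(shards: List[Sequence[float]], fanout: int = 2) -> float:
    """Compute AVG over all shards by merging partials level by level."""
    if not shards or fanout < 2:
        raise ValueError("need at least one shard and fanout >= 2")
    level = [leaf_partial(shard) for shard in shards]
    # Each pass models one level of the tree, moving up toward the root.
    while len(level) > 1:
        level = [merge_partials(level[i:i + fanout])
                 for i in range(0, len(level), fanout)]
    total, count = level[0]
    if count == 0:
        raise ValueError("no rows to average")
    return total / count


shards = [[1, 2, 3], [4, 5], [6], [7, 8, 9, 10]]
print(tree_average(shards))
```

Passing `(sum, count)` up the tree, rather than per-shard averages, is what keeps the result correct: averaging the shard averages would weight the one-row shard as heavily as the four-row shard.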

How Can I Use BigQuery?

With an architecture tailored for big data, BigQuery works best when it has very large datasets, up to several petabytes, to analyze. The use cases best suited to BigQuery are those where people need to run interactive, ad hoc queries over read-only datasets. Typically, BigQuery is used at the end of a big data ETL pipeline, on top of processed data, or in scenarios where complex analytical queries against a relational database take several seconds or more. Because it has a built-in cache, BigQuery works especially well when the data does not change often. Conversely, fairly small datasets don’t really benefit from BigQuery, since even a simple query can take a few seconds. For this reason, it shouldn’t be used as a regular OLTP (Online Transaction Processing) database.
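Why rarely changing data benefits most from caching can be sketched in Python as a toy model, assuming a cache keyed by the query text plus a version number for each table the query reads: repeating a query against unchanged tables returns the stored result, while any change to a referenced table forces a re-run. This is a hypothetical illustration, not how BigQuery’s cache is implemented; a real cache would also expire entries over time.

```python
from typing import Any, Callable, Dict, Iterable, Tuple

CacheKey = Tuple[str, Tuple[Tuple[str, int], ...]]


class ToyResultCache:
    """Caches results keyed by SQL text and the versions of the tables it reads."""

    def __init__(self) -> None:
        self._entries: Dict[CacheKey, Any] = {}
        self.hits = 0
        self.misses = 0

    def run(self, sql: str, tables: Iterable[str],
            table_versions: Dict[str, int], execute: Callable[[], Any]) -> Any:
        # Modifying a referenced table bumps its version, which changes the key.
        key = (sql, tuple(sorted((t, table_versions[t]) for t in tables)))
        if key in self._entries:
            self.hits += 1
            return self._entries[key]
        self.misses += 1
        result = execute()  # the expensive scan happens only on a miss
        self._entries[key] = result
        return result


versions = {"sales": 1}  # hypothetical table-version bookkeeping
cache = ToyResultCache()
query = "SELECT SUM(amount) FROM sales"
cache.run(query, ["sales"], versions, lambda: 100)  # miss: executes the query
cache.run(query, ["sales"], versions, lambda: 100)  # hit: served from cache
versions["sales"] += 1                              # the table is modified
cache.run(query, ["sales"], versions, lambda: 120)  # miss again
print(cache.hits, cache.misses)
```

The more often the underlying tables change, the more often keys change and the lower the hit rate, which matches the advice above.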

BigQuery is really meant for big data and analytics. As a fully managed service, it works out of the box, with no infrastructure to install, configure, or operate. Customers are charged based on the queries they run and the volume of data they store. However, being a black box has its disadvantages: you have very little control over where and how your data is stored and processed.

Productivity Benefits of Using BigQuery

BigQuery has immense potential to democratize insights through a scalable, secure platform with built-in machine learning. It can power the data-driven business decisions your organization makes.

- Getting Visibility into Insights with Predictive & Real-Time Analytics

You can query streaming data in real time, which gives you up-to-date information on all of the processes associated with your business. With that real-time visibility, you can use the built-in machine learning to predict business outcomes without having to move your data.

- Accessing Data & Sharing Insights with Ease

BigQuery lets you implement secure access to your data and easily share analytical insights with designated members of your organization. You can also create dashboards and reports with popular business intelligence tools. Together, these capabilities let you access data, prepare insights, and share them across your organization to support better business decisions.

- Implementing Data Protection with BigQuery

BigQuery provides strong built-in security. Its data governance controls help protect data from unauthorized access and other threats. On the reliability side, BigQuery offers a 99.99% uptime SLA and high availability. Data is encrypted by default, and you can optionally protect it with customer-managed encryption keys (CMEK) instead of Google-managed keys.

What are the Core Concepts of BigQuery?

The core concepts of BigQuery work together to help you store and use organizational data productively. They include:

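- BigQuery ML: create and run machine learning models using SQL.

- BigQuery Omni: analyze data stored in other clouds, such as AWS and Azure, from BigQuery.

- BigQuery BI Engine: an in-memory analysis service that accelerates business intelligence queries.

- BigQuery GIS: geospatial analysis using the GEOGRAPHY data type.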

What about security?

BigQuery offers built-in data protection at scale. It provides security and governance tools to efficiently govern data and democratize insights within your organization.

Within BigQuery, you can assign dataset-level and project-level permissions to govern data access. Secure data sharing lets you collaborate and operate your business with trust. Data is automatically encrypted both in transit and at rest, which helps protect it from intrusion, theft, and attacks.
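How project-level and dataset-level grants combine can be sketched with a simplified Python model, assuming (as in IAM) that a role granted on a project is inherited by every dataset in it, while a dataset-level grant applies only to that dataset. `roles/bigquery.dataViewer` and `roles/bigquery.dataEditor` are real predefined BigQuery roles, but the permission sets below are trimmed to two permissions for illustration, the model has no deny rules, and the principals, project, and dataset names are hypothetical.

```python
from typing import Dict, Set, Tuple

# Trimmed-down permission sets for two real predefined roles (simplified).
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "roles/bigquery.dataViewer": {"bigquery.tables.getData"},
    "roles/bigquery.dataEditor": {"bigquery.tables.getData",
                                  "bigquery.tables.updateData"},
}

# Hypothetical project-level grants: project -> principal -> roles.
PROJECT_GRANTS: Dict[str, Dict[str, Set[str]]] = {
    "analytics-project": {"user:alice@example.com": {"roles/bigquery.dataViewer"}},
}

# Hypothetical dataset-level grants: (project, dataset) -> principal -> roles.
DATASET_GRANTS: Dict[Tuple[str, str], Dict[str, Set[str]]] = {
    ("analytics-project", "marketing"): {
        "user:bob@example.com": {"roles/bigquery.dataEditor"},
    },
}


def has_permission(principal: str, project: str, dataset: str,
                   permission: str) -> bool:
    """True if a project-level or dataset-level role grants `permission`."""
    # Project-level roles are inherited by every dataset in the project.
    roles = set(PROJECT_GRANTS.get(project, {}).get(principal, set()))
    # Dataset-level roles add to (never remove) what the project grants.
    roles |= DATASET_GRANTS.get((project, dataset), {}).get(principal, set())
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in roles)


print(has_permission("user:alice@example.com", "analytics-project",
                     "finance", "bigquery.tables.getData"))
print(has_permission("user:bob@example.com", "analytics-project",
                     "finance", "bigquery.tables.getData"))
```

In this model, Alice can read every dataset in the project through her project-level role, while Bob can read and edit only the `marketing` dataset.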

Cloud DLP helps you discover and classify sensitive data assets. Cloud IAM provides access control and visibility into security policies. Data Catalog helps you discover and manage data.

Some of the benefits of using BigQuery

Now that you know more about how to use BigQuery and what you can do with it, it’s time to look at how this tool will benefit your business.

- You can set it up fast

When you’re busy running your business, you don’t want to spend hours setting up a data tool to aggregate all your information. A major benefit of BigQuery is that it is fast and easy to set up: you can set up a data warehouse in seconds.

As soon as your data warehouse is set up, you can start querying your data. BigQuery can process billions of rows in seconds, and it can ingest real-time data and process it as it arrives. This speed makes BigQuery an attractive option for managing your data.

- It’s easy to use

Another significant benefit of BigQuery is that it’s easy to use. Building your own data center is not only expensive but also time-consuming and hard to scale. It can leave you frustrated, spending time on infrastructure instead of understanding your data.

BigQuery makes the process simple. You load your data into the tool and only pay for what you use. It’s an efficient way to help you process your data and understand it without the complications of building your own data center.

- It scales seamlessly

One of the biggest problems with growing data volumes is scaling. Many companies struggle to size their infrastructure properly for the data they have. With BigQuery, the scaling work is done for you.

BigQuery separates storage from compute, so each can scale independently. This elastic scaling keeps performance high as your data grows, and it works well for real-time analytics.

- You’ll get accelerated insights

With BigQuery, you get a full view of your data. You can use additional tools to further digest and break down your data: tools like Tableau and Data Studio (now Looker Studio) work seamlessly with BigQuery to help you better understand your information.

You can create reports and dashboards with these supplemental tools, which connect directly to BigQuery and visualize the data it processes.

- Your data is protected

Your data is precious to your business. BigQuery protects your data and maintains strong security over it. This reduces the burden of handling disaster recovery yourself in case your data is compromised or lost, although you should still always have a disaster recovery plan.

- It’s affordable

BigQuery’s pricing works with your business: you pay only for the resources you use. Whether it’s storage or compute, Google charges your company based on how much of the service you use. Looking at BigQuery pricing, you’ll see that storage, query processing, and streaming inserts are charged separately, while copying and exporting data comes at no charge.

For storage, the fee is:

- $0.02 per GB, per month for active storage

- $0.01 per GB, per month for long-term storage (tables or partitions not modified for 90 consecutive days)

For streaming inserts, the fee is:

- $0.01 per 200 MB

As this pricing shows, you pay for what you use in BigQuery. For example, if you store 100 GB of active data, you pay $2 a month (100 GB × $0.02 per GB). You won’t pay for storage or processing you don’t use.
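The pricing arithmetic above can be wrapped in a small Python calculator. It is a sketch that hard-codes the rates quoted in this post, which may differ by region and change over time; it assumes streaming inserts are billed pro rata per 200 MB, and it ignores query costs, free tiers, and minimum row sizes. The function name and parameters are hypothetical.

```python
from decimal import Decimal

# Rates as quoted in this post (USD).
ACTIVE_STORAGE_PER_GB_MONTH = Decimal("0.02")
LONG_TERM_STORAGE_PER_GB_MONTH = Decimal("0.01")
STREAMING_PER_200_MB = Decimal("0.01")


def estimate_monthly_cost(active_gb, long_term_gb=0, streamed_mb=0):
    """Estimate one month's storage plus streaming-insert cost in USD."""
    active = Decimal(str(active_gb)) * ACTIVE_STORAGE_PER_GB_MONTH
    long_term = Decimal(str(long_term_gb)) * LONG_TERM_STORAGE_PER_GB_MONTH
    # Assumption: streaming is charged proportionally per 200 MB.
    streaming = Decimal(str(streamed_mb)) / 200 * STREAMING_PER_200_MB
    return active + long_term + streaming


print(estimate_monthly_cost(100))           # the 100 GB example from the text
print(estimate_monthly_cost(100, 50, 400))  # plus 50 GB long-term, 400 MB streamed
```

Using `Decimal` instead of floats keeps the currency arithmetic exact, so the 100 GB example comes out to exactly 2.00.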

Summary

Google is continuing to scale its product offering and BigQuery is no exception. Having the capability to leverage this type of query service provides new flexibility to teams to tailor their data workflows to fit their needs. BigQuery is a highly scalable data warehouse that provides fast SQL analytics over large datasets in a serverless way.

I hope you liked this blog post. Thank you!

I’m a DevOps/SRE/DevSecOps/Cloud Expert passionate about sharing knowledge and experiences. I have worked at Cotocus. I share tech blogs at DevOps School, travel stories at Holiday Landmark, stock market tips at Stocks Mantra, health and fitness guidance at My Medic Plus, product reviews at TrueReviewNow, and SEO strategies at Wizbrand.

Please find my social handles below:

Rajesh Kumar Personal Website

Rajesh Kumar at YOUTUBE

Rajesh Kumar at INSTAGRAM

Rajesh Kumar at X

Rajesh Kumar at FACEBOOK

Rajesh Kumar at LINKEDIN

Rajesh Kumar at WIZBRAND
