What is DataOps?

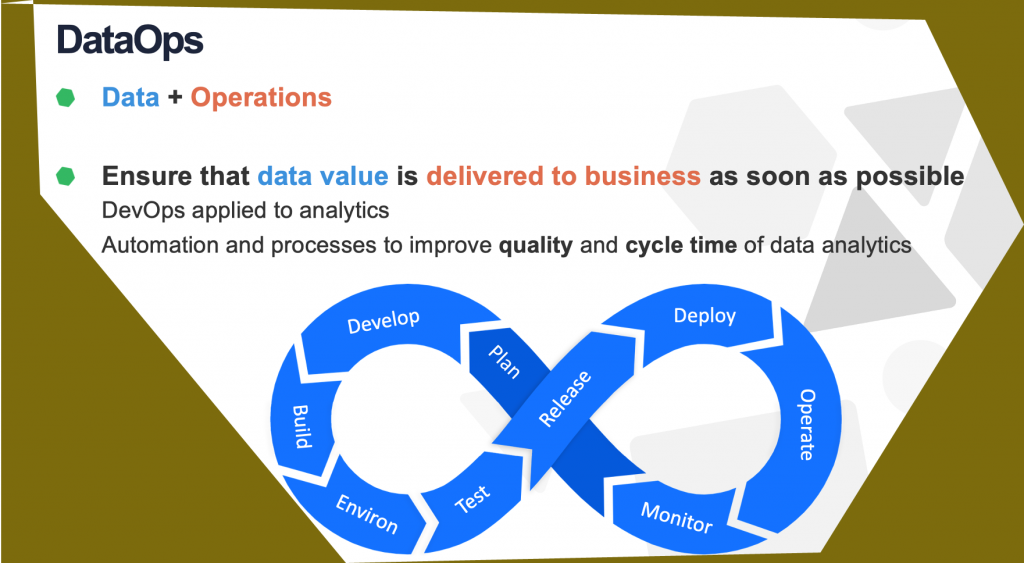

DataOps is a set of practices, processes and technologies that combines an integrated, process-oriented perspective on data with automation and methods from agile software engineering. Its goals are to improve quality, speed, and collaboration, and to promote a culture of continuous improvement in data analytics.

According to Gartner, DataOps is a collaborative data management practice focused on improving the communication, integration and automation of data flows between data managers and data consumers across an organization.

What are the top DataOps tools?

- K2View Data Fabric

K2View provides an operational data fabric dedicated to making every customer experience personalized and profitable.

The K2View platform continually ingests all customer data from all systems, enriches it with real-time insights, and transforms it into a patented Micro-Database – one for every customer.

To maximize performance, scale, and security, every micro-DB is compressed and individually encrypted.

It is then delivered in milliseconds to fuel quick, effective, and pleasing customer experiences.

- HighByte Intelligence Hub

HighByte Intelligence Hub is presented by its vendor as the first DataOps solution purpose-built for industrial data. It provides industrial companies with an off-the-shelf software solution to accelerate and scale the usage of operational data throughout the extended enterprise by contextualizing, standardizing, and securing this valuable information.

HighByte Intelligence Hub runs at the edge, scales from embedded to server-grade computing platforms, connects devices and applications via a wide range of open standards and native connections, processes streaming data through standard models, and delivers contextualized and correlated information to the applications that require it.

Use HighByte Intelligence Hub to model and deliver your data more efficiently, stop writing custom scripts and troubleshooting broken integrations, and reduce time spent preparing data for analysis.

Starting Price: $5000 per year

- Tengu

Tengu enables companies to become data-driven and grow their business by making data useful and accessible at exactly the right moment, and by increasing the reliability of that data.

Tengu is a DataOps platform for data-driven companies that improves the efficiency of data scientists and engineers in executing their tasks, which shortens the data-to-insights cycle. It also helps them understand and manage the complexity of building and operating a data-driven company.

- Superb AI

Superb AI offers a new-generation machine learning data platform that helps AI teams build better AI in much less time. The Superb AI Suite is an enterprise SaaS platform created to help ML engineers, product teams, researchers and data annotators build efficient training data workflows, saving time and money.

According to Superb AI, most ML teams spend more than 50% of their time managing training datasets, and its customers have on average reduced the time it takes to start training models by 80%.

Features include a fully managed workforce, powerful labeling tools, training data quality control, pre-trained model predictions, advanced auto-labeling, dataset filtering and search, data source integration, robust developer tools, ML workflow integrations, and much more.

Training data management just got easier with Superb AI. Superb AI offers enterprise-level features for every layer in an ML organization.

- Unravel

Unravel makes data work anywhere, on Azure, AWS, GCP or in your own data center, by optimizing performance, automating troubleshooting and keeping costs in check.

Unravel helps you monitor, manage, and improve your data pipelines in the cloud and on-premises to drive more reliable performance in the applications that power your business. Get a unified view of your entire data stack: Unravel collects performance data from every platform, system, and application on any cloud, then uses agentless technologies and machine learning to model your data pipelines from end to end.

Explore, correlate, and analyze everything in your modern data and cloud environment. Unravel’s data model reveals dependencies, issues, and opportunities, showing how apps and resources are being used and what’s working and what’s not. Don’t just monitor performance: quickly troubleshoot and rapidly remediate issues.

- Delphix

Delphix positions itself as the industry leader in DataOps and provides an intelligent data platform that accelerates digital transformation for leading companies around the world.

The Delphix DataOps Platform supports a broad spectrum of systems, from mainframes to Oracle databases, ERP applications, and Kubernetes containers.

Delphix supports a comprehensive range of data operations to enable modern CI/CD workflows and automates data compliance for privacy regulations, including GDPR.

- Zaloni Arena

Arena offers end-to-end DataOps built on an agile platform that improves and safeguards your data assets.

Arena is positioned as the premier augmented data management platform. Our active data catalog enables self-service data enrichment and consumption to quickly control complex data environments, and customizable workflows increase the accuracy and reliability of every data set.

Use machine-learning to identify and align master data assets for better data decisioning. Complete lineage with detailed visualizations alongside masking and tokenization for superior security.

We make data management easy. Arena catalogs your data wherever it is, and our extensible connections enable analytics to happen across your preferred tools. Conquer data sprawl challenges: our software drives business and analytics success while providing the controls and extensibility needed across today’s decentralized, multi-cloud data complexity.

- StreamSets

The StreamSets DataOps Platform is a data integration engine for flowing data from myriad batch and streaming sources to your modern analytics platforms. It offers collaborative, visual pipeline design; deployment and scaling on-edge, on-prem or in the cloud; mapping and monitoring of dataflows for end-to-end visibility; and enforcement of data SLAs for availability, quality and privacy.

Replace specialized coding skills with visual pipeline design, testing and deployment. Get projects up and running in a fraction of the time. Don’t let brittle pipelines and lost data cripple your applications. Handle unexpected changes automatically. Get a live map with metrics, alerting and drill-down.

- Brevo

Brevo’s suite of data analytics and business intelligence features fully integrates with any existing data infrastructure and securely simplifies complex business decisions, so that you have the insights you need without the wait.

No more reporting delays: Brevo delivers insights at your fingertips and activates intelligence on your cell phone.

Democratization of data starts with easy-to-digest visualizations and easy-to-access insights. Brevo is a hybrid, multi-cloud platform that fully integrates with any system architecture. Delve deeper into data than ever before with the Brevo advanced analytics engine. Embed Brevo into your systems and processes.

We bring native intelligence to business with a cloud-based self-service platform that provides interactive reporting through enhanced visualizations and real-time analytics using any data source.

- Apache Airflow

Airflow is a platform created by the community to programmatically author, schedule and monitor workflows. It has a modular architecture and uses a message queue to orchestrate an arbitrary number of workers, so it is designed to scale out. Airflow pipelines are defined in Python, which allows you to write code that instantiates pipelines dynamically.

Easily define your own operators and extend libraries to fit the level of abstraction that suits your environment. Airflow pipelines are lean and explicit. Parametrization is built into its core using the powerful Jinja templating engine.

No more command-line or XML black-magic! Use standard Python features to create your workflows, including date time formats for scheduling and loops to dynamically generate tasks. This allows you to maintain full flexibility when building your workflows.
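The idea of generating tasks with an ordinary loop can be sketched without Airflow itself. The block below is a plain-Python stand-in, not Airflow's API: the `Task` class, the `build_pipeline` and `execution_order` helpers, and the table names are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter


@dataclass
class Task:
    """Hypothetical stand-in for a workflow task (not an Airflow operator)."""
    task_id: str
    upstream: list[str] = field(default_factory=list)


def build_pipeline(tables: list[str]) -> dict[str, Task]:
    """Generate one extract task and one load task per table with a plain loop."""
    tasks: dict[str, Task] = {}
    for table in tables:
        extract = Task(f"extract_{table}")
        load = Task(f"load_{table}", upstream=[extract.task_id])
        tasks[extract.task_id] = extract
        tasks[load.task_id] = load
    # A final task that waits for every load task.
    load_ids = [task_id for task_id in tasks if task_id.startswith("load_")]
    tasks["report"] = Task("report", upstream=load_ids)
    return tasks


def execution_order(tasks: dict[str, Task]) -> list[str]:
    """Return one valid run order that respects the upstream dependencies."""
    graph = {task.task_id: set(task.upstream) for task in tasks.values()}
    return list(TopologicalSorter(graph).static_order())


# Hypothetical table names; adding a table adds two tasks without other changes.
pipeline = build_pipeline(["orders", "customers"])
order = execution_order(pipeline)
```

Because the task list is built by code, supporting a new table means adding one string to the input list rather than hand-writing two more task definitions. In a real Airflow DAG file the same loop would create operators inside a DAG definition instead of these stand-in objects.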

- Lentiq

Lentiq is a collaborative data-lake-as-a-service environment that’s built to enable small teams to do big things. Quickly run data science, machine learning and data analysis at scale in the cloud of your choice. With Lentiq, your teams can ingest data in real time and then process, clean and share it.

From there, Lentiq makes it possible to build, train and share models internally. Simply put, data teams can collaborate with Lentiq and innovate with no restrictions. Data lakes are storage and processing environments, which provide ML, ETL, schema-on-read querying capabilities and so much more.

Are you working on some data science magic? You definitely need a data lake. In the Post-Hadoop era, the big, centralized data lake is a thing of the past.

With Lentiq, we use data pools, which are multi-cloud, interconnected mini-data lakes. They work together to give you a stable, secure and fast data science environment.

- Naveego

Naveego is a software company that offers a product of the same name. Naveego is business intelligence software that includes features such as dashboards, data analysis, and predictive analytics. A free version is available.

Naveego is available as SaaS software. Costs start at $99.00/month. Some alternative products to Naveego include Salesforce Analytics Cloud, Domo, and AnswerRocket.

- Composable DataOps Platform

Composable Analytics is a software organization based in the United States that offers a piece of software called Composable DataOps Platform.

Composable DataOps Platform offers online, business-hours, and 24/7 live support. Training is available via documentation, webinars, live online sessions, and in-person sessions. The software suite is available as SaaS and as Windows software.

Composable DataOps Platform is business intelligence software that includes features such as ad hoc reports, benchmarking, budgeting and forecasting, dashboards, data analysis, key performance indicators, OLAP, performance metrics, predictive analytics, profitability analysis, strategic planning, trend and problem indicators, and visual analytics.

Some competitor software products to Composable DataOps Platform include Blueshift ONE, Innovaccer – Healthcare Data Platform, and ImagineIntelligence.

- Piperr

Saturam is a software business formed in 2014 in the United States that publishes a software suite called Piperr. Piperr includes training via documentation, live online sessions, and in-person sessions.

The Piperr product is SaaS software. Piperr includes online and business-hours support. Piperr is data management software that includes features such as customer data, data analysis, data capture, data integration, data migration, data quality control, data security, information governance, and master data.

- DataBuck

Big Data quality must be validated to ensure the sanctity, accuracy and completeness of data as it moves through multiple IT platforms or is stored in data lakes, so that the data is trustworthy and fit for use. Common challenges include:

- Undetected errors in incoming data

- Multiple data sources that get out of sync over time

- Structural changes to data in upstream processes that are not expected downstream

- The presence of multiple IT platforms
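One of the challenges listed above, a structural change upstream that the downstream step does not expect, can be illustrated with a small schema check. This is a minimal sketch and not DataBuck's implementation; the `schema_drift` function, the `EXPECTED` schema, and the sample record are hypothetical.

```python
def schema_drift(expected: dict[str, type], record: dict) -> dict[str, list[str]]:
    """Compare one incoming record against the schema the downstream step expects."""
    missing = sorted(name for name in expected if name not in record)
    unexpected = sorted(name for name in record if name not in expected)
    wrong_type = sorted(
        name
        for name, expected_type in expected.items()
        if name in record and not isinstance(record[name], expected_type)
    )
    return {"missing": missing, "unexpected": unexpected, "wrong_type": wrong_type}


# Hypothetical schema and record, for illustration only.
EXPECTED = {"order_id": int, "amount": float, "currency": str}
incoming = {"order_id": 17, "amount": "12.50", "region": "EU"}

drift = schema_drift(EXPECTED, incoming)
# "currency" is missing, "region" is unexpected, and "amount" arrived as a string.
```

Running a check like this at the boundary between two systems turns a silent structural change into an explicit report that can fail a pipeline run or raise an alert.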

- RightData

RightData is an intuitive, flexible, efficient and scalable data testing, reconciliation and validation suite that helps stakeholders identify issues related to data consistency, quality, completeness, and gaps. It empowers users to analyze, design, build, execute and automate reconciliation and validation scenarios with no programming.

It helps highlight data issues in production, thereby preventing compliance and credibility damage and minimizing the financial risk to your organization.

RightData is targeted at improving your organization’s data quality, consistency, reliability, and completeness.

It also helps accelerate test cycles, thereby reducing the cost of delivery by enabling Continuous Integration and Continuous Deployment (CI/CD).
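To make the idea of reconciliation concrete, the following sketch compares a source and a target dataset by key. It is an illustration of the general technique only, not RightData's product behavior; the `reconcile` function and the sample rows are hypothetical.

```python
def reconcile(source: list[dict], target: list[dict], key: str) -> dict[str, list]:
    """Report keys missing on either side and keys whose rows differ."""
    source_by_key = {row[key]: row for row in source}
    target_by_key = {row[key]: row for row in target}
    shared_keys = source_by_key.keys() & target_by_key.keys()
    return {
        "missing_in_target": sorted(source_by_key.keys() - target_by_key.keys()),
        "missing_in_source": sorted(target_by_key.keys() - source_by_key.keys()),
        "mismatched": sorted(
            k for k in shared_keys if source_by_key[k] != target_by_key[k]
        ),
    }


# Hypothetical rows: id 2 differs, id 3 was never loaded, id 4 has no source row.
source_rows = [{"id": 1, "total": 100}, {"id": 2, "total": 250}, {"id": 3, "total": 75}]
target_rows = [{"id": 1, "total": 100}, {"id": 2, "total": 205}, {"id": 4, "total": 30}]

report = reconcile(source_rows, target_rows, key="id")
```

A check of this kind can run as a step in a CI/CD pipeline and fail the build when any of the three lists is non-empty.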

- Badook

Badook allows data scientists to write automated tests for data used in training and testing AI models (and much more).

Validate data automatically and over time.

Reduce time to insights. Free data scientists to do more meaningful work.
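Automated tests for training data can be as simple as a set of named checks that run every time the dataset changes. The sketch below uses plain Python and does not reflect Badook's own API; the check functions, thresholds, and sample rows are hypothetical.

```python
from collections import Counter


def check_no_missing(rows: list[dict], column: str) -> bool:
    """Every row must have a non-null value in the column."""
    return all(row.get(column) is not None for row in rows)


def check_in_range(rows: list[dict], column: str, low: float, high: float) -> bool:
    """Every non-null value in the column must lie within [low, high]."""
    return all(
        low <= row[column] <= high for row in rows if row.get(column) is not None
    )


def check_min_class_share(rows: list[dict], label: str, min_share: float) -> bool:
    """The rarest class must make up at least min_share of the rows."""
    counts = Counter(row[label] for row in rows)
    return min(counts.values()) / len(rows) >= min_share


# Hypothetical training rows; the age of 140 is a deliberate outlier.
training_rows = [
    {"age": 34, "label": "churn"},
    {"age": 51, "label": "stay"},
    {"age": 29, "label": "stay"},
    {"age": 140, "label": "stay"},
]

results = {
    "no_missing_label": check_no_missing(training_rows, "label"),
    "age_in_range": check_in_range(training_rows, "age", 0, 120),
    "min_class_share": check_min_class_share(training_rows, "label", 0.2),
}
failed = [name for name, passed in results.items() if not passed]
```

Here only the range check fails, which points directly at the outlier before it reaches model training.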

- DataKitchen

Reclaim control of your data pipelines and deliver value instantly, without errors. The DataKitchen DataOps platform automates and coordinates all the people, tools, and environments in your entire data analytics organization, covering everything from orchestration, testing, and monitoring to development and deployment.

You’ve already got the tools you need. The platform automatically orchestrates your end-to-end multi-tool, multi-environment pipelines, from data access to value delivery.

Best institute for learning DataOps

In my view, the best institute is DevOpsSchool. Why do I say this? Because it has proven itself in a very short time, building a strong track record of successfully training many participants, whether students, individual professionals, or company teams. Its trainers have 15+ years of IT experience and are well skilled in their domains. The institute's USP is live, instructor-led online training with many benefits that support learning. So if you are looking for a specific institute for training and certification, this is the one to consider.

I’m a DevOps/SRE/DevSecOps/Cloud Expert passionate about sharing knowledge and experiences. I have worked at Cotocus. I share tech blog at DevOps School, travel stories at Holiday Landmark, stock market tips at Stocks Mantra, health and fitness guidance at My Medic Plus, product reviews at TrueReviewNow , and SEO strategies at Wizbrand.
