Introduction

Agent Test & Replay Frameworks are platforms that enable AI teams to validate, debug, and stress-test agent workflows in controlled environments. These frameworks allow teams to record agent actions, replay workflows, test reasoning, evaluate tool usage, and verify memory or RAG interactions. Replay frameworks help identify errors, unsafe behaviors, and performance bottlenecks before agents are deployed into production environments.
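The record-and-replay idea described above can be sketched in a few lines of plain Python. This is a minimal illustration and not the API of any framework listed in this guide: `ToolRecorder`, `ToolReplayer`, `get_weather`, and `agent` are all hypothetical names invented for the example.

```python
import json
from typing import Any, Callable, Dict, List


class ToolRecorder:
    """Wraps tool functions and logs every call so the run can be replayed."""

    def __init__(self) -> None:
        self.calls: List[Dict[str, Any]] = []

    def wrap(self, name: str, fn: Callable[..., Any]) -> Callable[..., Any]:
        def recorded(**kwargs: Any) -> Any:
            result = fn(**kwargs)
            self.calls.append({"tool": name, "args": kwargs, "result": result})
            return result

        return recorded


class ToolReplayer:
    """Serves recorded results in order instead of calling the real tools."""

    def __init__(self, calls: List[Dict[str, Any]]) -> None:
        self._calls = list(calls)
        self._cursor = 0

    def wrap(self, name: str) -> Callable[..., Any]:
        def replayed(**kwargs: Any) -> Any:
            if self._cursor >= len(self._calls):
                raise RuntimeError("replay exhausted: agent made an extra tool call")
            expected = self._calls[self._cursor]
            if expected["tool"] != name or expected["args"] != kwargs:
                raise RuntimeError(
                    f"replay diverged at step {self._cursor}: "
                    f"expected {expected['tool']}({expected['args']}), "
                    f"got {name}({kwargs})"
                )
            self._cursor += 1
            return expected["result"]

        return replayed


def get_weather(city: str) -> dict:
    # Stand-in for a real external API call (hypothetical tool).
    return {"city": city, "temp_c": 21}


def agent(tools: Dict[str, Callable[..., Any]]) -> str:
    # Toy agent: one tool call, then a formatted answer.
    report = tools["get_weather"](city="Oslo")
    return f"{report['city']}: {report['temp_c']} C"


# Live run: the real tool is called and the call is recorded.
recorder = ToolRecorder()
live_answer = agent({"get_weather": recorder.wrap("get_weather", get_weather)})

# The trace survives a JSON round trip, so it can be stored alongside test fixtures.
trace = json.loads(json.dumps(recorder.calls))

# Replay run: no real tool is called; recorded results are served instead.
replayer = ToolReplayer(trace)
replayed_answer = agent({"get_weather": replayer.wrap("get_weather")})
```

Raising on divergence is a deliberate choice here: if a changed prompt or model makes the agent call a different tool or pass different arguments, the replay fails loudly instead of silently returning stale data.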

These tools are critical for enterprise AI, multi-agent orchestration, RAG pipelines, tool-calling validation, memory workflow testing, regulatory compliance, and risk mitigation. Buyers should evaluate workflow recording fidelity, multi-agent support, tool and API emulation, memory and RAG integration, human-in-the-loop testing, latency and cost tracking, policy validation, observability, versioning and rollback, synthetic scenario simulation, and integration with orchestration systems.

Best for: AI platform engineers, enterprise AI teams, research labs, and regulated industries deploying complex agent workflows.

Not ideal for: single-turn chatbots or stateless agents without tool access, memory usage, or multi-step reasoning.

What’s Changed in Agent Test & Replay Frameworks

- End-to-end multi-agent workflow replay is now standard.

- Tool calls, memory, and RAG interactions can be replayed for testing.

- Human-in-the-loop checkpoints are integrated for sensitive actions.

- Observability dashboards track replayed workflows and unsafe behavior.

- Model-agnostic support allows BYO, proprietary, and open-source LLMs.

- Versioning and rollback of workflows ensure reproducibility.

- Latency, token usage, and cost metrics are recorded for replayed scenarios.

- Red-teaming and regression frameworks are integrated into replay pipelines.

- Synthetic data and sandboxed scenarios allow stress-testing of agents.

- Low-code replay visualizers complement code-first frameworks.

- Alerts and anomaly detection trigger during replay testing.

- Compliance and audit logs are automatically captured during test runs.
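To make the latency, token, and cost item in the list above concrete, here is a rough sketch of per-step metric capture during a replay. The class names, the `fake_llm_step` stub, and the per-token prices are all assumptions made for illustration; real prices and token counts would come from your model provider.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class StepMetrics:
    name: str
    latency_s: float
    prompt_tokens: int
    completion_tokens: int


@dataclass
class RunMetrics:
    # Hypothetical prices in USD per 1,000 tokens; substitute real rates.
    prompt_price_per_1k: float = 0.5
    completion_price_per_1k: float = 1.5
    steps: List[StepMetrics] = field(default_factory=list)

    def record(self, name: str, step: Callable[[], dict]) -> dict:
        """Run one step, time it, and read token counts from its result."""
        start = time.perf_counter()
        result = step()
        elapsed = time.perf_counter() - start
        self.steps.append(
            StepMetrics(
                name,
                elapsed,
                result["prompt_tokens"],
                result["completion_tokens"],
            )
        )
        return result

    @property
    def total_tokens(self) -> int:
        return sum(s.prompt_tokens + s.completion_tokens for s in self.steps)

    @property
    def total_cost(self) -> float:
        prompt = sum(s.prompt_tokens for s in self.steps)
        completion = sum(s.completion_tokens for s in self.steps)
        return (
            prompt / 1000 * self.prompt_price_per_1k
            + completion / 1000 * self.completion_price_per_1k
        )


def fake_llm_step() -> dict:
    # Stand-in for a model call that reports its own token usage.
    return {"text": "ok", "prompt_tokens": 1000, "completion_tokens": 2000}


metrics = RunMetrics()
metrics.record("plan", fake_llm_step)
metrics.record("answer", fake_llm_step)
```

With the assumed prices, the two steps above total 6,000 tokens and a cost of 7.0 USD; comparing these totals between a baseline replay and a candidate replay is one simple way to spot cost regressions.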

Quick Buyer Checklist

- Full workflow recording and replay

- Multi-agent workflow support

- Tool and API execution replay

- Memory and RAG testing

- Human-in-the-loop checkpoints

- Guardrails and policy validation

- Observability dashboards

- Latency, cost, and token monitoring

- Versioning and rollback

- Synthetic environment testing

- Regression and red-team testing

- Integration with orchestration and monitoring systems
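The human-in-the-loop and policy items on this checklist can be pictured as a gate in front of sensitive tool calls. The sketch below is one possible shape, assuming a hypothetical `Action` record and a scripted `reviewer` that stands in for a person so the replay can run unattended.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Set


@dataclass(frozen=True)
class Action:
    tool: str
    argument: str


def run_with_checkpoints(
    actions: List[Action],
    sensitive_tools: Set[str],
    approve: Callable[[Action], bool],
) -> Dict[str, List[Action]]:
    """Replay actions, asking for approval before any sensitive tool call."""
    executed: List[Action] = []
    blocked: List[Action] = []
    for action in actions:
        if action.tool in sensitive_tools and not approve(action):
            blocked.append(action)  # kept so the audit log shows what was stopped
            continue
        executed.append(action)
    return {"executed": executed, "blocked": blocked}


recorded = [
    Action("search_docs", "refund policy"),
    Action("issue_refund", "order-123"),
    Action("send_email", "customer notice"),
]


def reviewer(action: Action) -> bool:
    # Scripted stand-in for a human reviewer: rejects refunds, approves the rest.
    return action.tool != "issue_refund"


outcome = run_with_checkpoints(recorded, {"issue_refund", "send_email"}, reviewer)
```

In an interactive session, `approve` could prompt a person instead; keeping it as a plain callable means the same replay can run in CI with a scripted policy.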

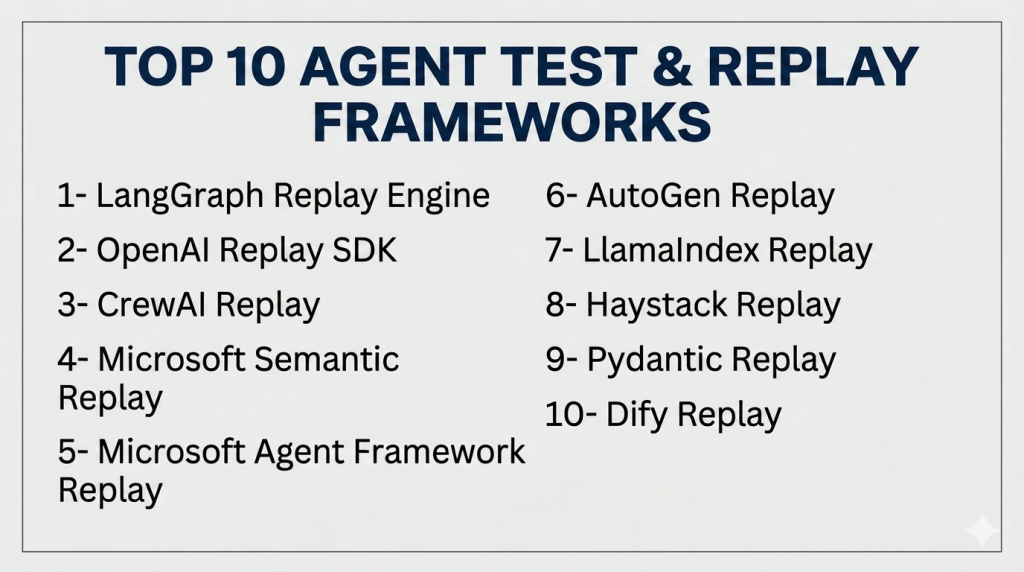

Top 10 Agent Test & Replay Frameworks

1- LangGraph Replay Engine

One-line verdict: Enterprise-grade replay framework for multi-agent workflows with tool, memory, and RAG testing.

Short description:

LangGraph Replay Engine allows recording, replaying, and debugging multi-agent workflows safely, supporting memory, tool, and RAG evaluation.

Standout Capabilities

- Multi-agent workflow recording and replay

- Tool and API emulation

- Memory and RAG usage replay

- Human-in-the-loop checkpoints

- Observability dashboards

- Versioned workflow replay

- Fault injection and error simulation
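Fault injection, the last capability listed above, is a general testing technique rather than something tied to one product. The following generic sketch does not use any LangGraph API; `with_faults`, `call_with_retry`, and `lookup_order` are hypothetical helpers showing how a tool can be wrapped so that it fails on demand during a replay.

```python
import random
from typing import Any, Callable


class InjectedFault(Exception):
    """Raised by the wrapper to simulate a tool or API outage."""


def with_faults(
    fn: Callable[..., Any], failure_rate: float, rng: random.Random
) -> Callable[..., Any]:
    """Return a version of fn that fails with the given probability."""

    def wrapper(*args: Any, **kwargs: Any) -> Any:
        if rng.random() < failure_rate:
            raise InjectedFault(f"injected failure in {fn.__name__}")
        return fn(*args, **kwargs)

    return wrapper


def call_with_retry(fn: Callable[[], Any], attempts: int) -> Any:
    """The behaviour under test: retry a flaky tool a fixed number of times."""
    last_error = None
    for _ in range(attempts):
        try:
            return fn()
        except InjectedFault as exc:
            last_error = exc
    raise RuntimeError("tool failed after retries") from last_error


def lookup_order() -> dict:
    # Stand-in for a real tool call.
    return {"status": "shipped"}


rng = random.Random(42)  # seeded so a failing replay can be reproduced
always_fail = with_faults(lookup_order, 1.0, rng)
never_fail = with_faults(lookup_order, 0.0, rng)
```

A rate of 1.0 checks that the agent gives up cleanly, a rate of 0.0 confirms the wrapper is transparent, and intermediate rates with a fixed seed exercise the retry path in a repeatable way.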

AI-Specific Depth

- Model support: proprietary / BYO / multi-model

- RAG / knowledge integration: vector DB connectors

- Evaluation: regression and workflow correctness tests

- Guardrails: policy enforcement visibility

- Observability: latency, token metrics, blocked action logs

Pros

- Enterprise-ready replay

- Multi-agent workflow debugging

- RAG and memory testing

Cons

- Complex setup

- Requires engineering expertise

- Learning curve

Deployment & Platforms

Cloud / hybrid; Python-based

Integrations & Ecosystem

APIs, RAG connectors, LangChain ecosystem

Pricing Model

Open-source; enterprise support available

Best-Fit Scenarios

- Production multi-agent workflow testing

- RAG-heavy pipelines

- Human-in-the-loop debugging

2- OpenAI Replay SDK

One-line verdict: Replay and test OpenAI agents with tool, memory, and RAG workflow validation.

Short description:

OpenAI Replay SDK enables teams to record and replay agent workflows, evaluate tool usage, memory, and retrieval pipelines in isolated environments.

Standout Capabilities

- Multi-agent workflow replay

- Tool and API execution testing

- Memory and RAG replay

- Human-in-the-loop checkpoints

- Observability dashboards

AI-Specific Depth

- Model support: OpenAI / BYO / multi-model

- RAG / knowledge integration: API connectors

- Evaluation: workflow regression tests

- Guardrails: policy enforcement visibility

- Observability: latency, token usage, unsafe action logs

Pros

- Developer-friendly

- Integrated with OpenAI agents

- Multi-agent workflow testing

Cons

- Limited outside OpenAI ecosystem

- Enterprise governance may require setup

- Premium features may be required

Deployment & Platforms

Cloud; Python-based

Integrations & Ecosystem

OpenAI APIs, workflow connectors, RAG pipelines

Pricing Model

Usage-based tiers

Best-Fit Scenarios

- Rapid prototyping

- Tool-driven workflow evaluation

- Multi-agent testing

3- CrewAI Replay

One-line verdict: Role-based replay framework for multi-agent workflows and tool validation.

Short description:

CrewAI Replay enables role-specific workflow replay, allowing multi-agent interaction testing, memory, and tool execution monitoring.

Standout Capabilities

- Role-based workflow replay

- Multi-agent coordination simulation

- Tool and API execution replay

- Memory and RAG metrics

- Observability dashboards

AI-Specific Depth

- Model support: BYO / multi-model

- RAG / knowledge integration: connectors

- Evaluation: workflow correctness and regression

- Guardrails: access enforcement

- Observability: unsafe actions, latency, token metrics

Pros

- Flexible role-based replay

- Multi-agent workflow testing

- Human-in-the-loop checkpoints

Cons

- Complexity grows with workflow size

- Less code-first control

- Learning curve

Deployment & Platforms

Cloud / self-hosted; Python-based

Integrations & Ecosystem

APIs, RAG connectors, workflow tools

Pricing Model

Open-source with enterprise support

Best-Fit Scenarios

- Enterprise workflow replay

- Multi-agent coordination testing

- Knowledge-intensive workflows

4- Microsoft Semantic Replay

One-line verdict: Enterprise replay framework for multi-agent workflows with tool, memory, and RAG evaluation.

Short description:

Semantic Replay allows recording, replaying, and analyzing agent workflows in complex enterprise environments, including RAG pipelines, memory usage, and tool calls.

Standout Capabilities

- Multi-agent workflow replay and monitoring

- Tool and API execution testing

- Memory and RAG pipeline replay

- Human-in-the-loop checkpoints

- Observability dashboards with latency, cost, and token metrics

- Versioning and rollback for workflow tests

- Anomaly detection and alerting

AI-Specific Depth

- Model support: BYO / multi-model

- RAG / knowledge integration: vector DB connectors

- Evaluation: regression and workflow tests

- Guardrails: policy enforcement visibility

- Observability: latency, token usage, unsafe action logs

Pros

- Enterprise-ready replay

- Multi-agent workflow debugging

- RAG and memory evaluation

Cons

- Requires Microsoft ecosystem

- Low-code support is limited

- Complex setup

Deployment & Platforms

Cloud / hybrid; Windows, Linux

Integrations & Ecosystem

Microsoft apps, APIs, RAG connectors

Pricing Model

Enterprise license

Best-Fit Scenarios

- Enterprise workflow testing

- Production multi-agent pipelines

- Compliance-focused evaluation

5- Microsoft Agent Framework Replay

One-line verdict: Unified framework for replaying multi-agent workflows and tool execution.

Short description:

Agent Framework Replay tracks agent workflows, monitors tool and memory usage, and enables RAG pipeline replay for enterprise deployments.

Standout Capabilities

- Multi-agent workflow replay

- Tool and API monitoring

- Memory and RAG evaluation

- Human-in-the-loop checkpoints

- Observability dashboards

AI-Specific Depth

- Model support: BYO / multi-model

- RAG / knowledge integration: connectors

- Evaluation: regression and workflow correctness

- Guardrails: access and policy enforcement

- Observability: blocked actions, latency, token metrics

Pros

- Enterprise-grade replay

- Multi-agent workflow tracking

- RAG and tool monitoring

Cons

- Microsoft ecosystem required

- Low-code dashboards limited

- Complexity for small teams

Deployment & Platforms

Cloud / hybrid; Web, Windows, Linux

Integrations & Ecosystem

Microsoft apps, APIs, RAG pipelines

Pricing Model

Enterprise license

Best-Fit Scenarios

- Enterprise multi-agent replay

- Production workflow testing

- Compliance-sensitive RAG pipelines

6- AutoGen Replay

One-line verdict: Open-source framework for testing and replaying multi-agent workflows.

Short description:

AutoGen Replay allows teams to record and replay agent interactions with memory, tools, and RAG retrieval safely in research or prototype environments.

Standout Capabilities

- Multi-agent workflow replay

- Tool and API execution testing

- Memory and RAG monitoring

- Human-in-the-loop checkpoints

- Observability dashboards

AI-Specific Depth

- Model support: BYO / multi-model

- RAG / knowledge integration: connectors

- Evaluation: regression and correctness testing

- Guardrails: sandboxed workflow policies

- Observability: latency, token usage, unsafe actions

Pros

- Flexible for research workflows

- Multi-agent testing

- Open-source

Cons

- Limited production readiness

- Technical expertise required

- Minimal enterprise governance

Deployment & Platforms

Python, cloud / local

Integrations & Ecosystem

APIs, RAG connectors, memory stores

Pricing Model

Open-source

Best-Fit Scenarios

- Research workflows

- Multi-agent prototyping

- Experimental AI testing

7- LlamaIndex Replay

One-line verdict: Replay framework for RAG-intensive multi-agent workflows.

Short description:

LlamaIndex Replay monitors and replays multi-agent workflows, tool usage, memory, and retrieval for RAG-heavy enterprise or research pipelines.

Standout Capabilities

- Multi-agent RAG workflow replay

- Tool and API monitoring

- Memory usage replay

- Human-in-the-loop checkpoints

- Observability dashboards

AI-Specific Depth

- Model support: BYO / multi-model

- RAG / knowledge integration: vector DB connectors

- Evaluation: retrieval and workflow tests

- Guardrails: policy enforcement visibility

- Observability: latency, token usage

Pros

- Knowledge-driven workflow replay

- RAG and tool observability

- Enterprise-ready

Cons

- Technical expertise required

- Less low-code support

- Governance outside RAG may need customization

Deployment & Platforms

Python, cloud / hybrid

Integrations & Ecosystem

Vector DBs, APIs, RAG pipelines

Pricing Model

Open-source

Best-Fit Scenarios

- Knowledge-intensive workflows

- Multi-agent RAG pipelines

- Enterprise testing

8- Haystack Replay

One-line verdict: Modular replay framework for multi-agent workflows and RAG pipelines.

Short description:

Haystack Replay allows teams to replay multi-agent workflows in modular environments, testing tool execution, memory usage, and RAG retrieval safely.

Standout Capabilities

- Modular workflow replay

- Tool and API execution replay

- Multi-agent reasoning tests

- Memory and RAG monitoring

- Alerting dashboards

AI-Specific Depth

- Model support: BYO / multi-model

- RAG / knowledge integration: connectors

- Evaluation: workflow and reasoning tests

- Guardrails: policy enforcement

- Observability: latency, token metrics

Pros

- Flexible modular replay

- Multi-agent RAG testing

- Open-source

Cons

- Complex pipelines require engineering

- Multi-agent collaboration limited

- Guardrails may need customization

Deployment & Platforms

Python, cloud / hybrid

Integrations & Ecosystem

Vector DBs, APIs, RAG pipelines

Pricing Model

Open-source

Best-Fit Scenarios

- Knowledge-driven workflows

- Multi-agent RAG pipelines

- Enterprise replay testing

9- Pydantic Replay

One-line verdict: Python-first replay framework for structured multi-agent workflows.

Short description:

Pydantic Replay validates agent outputs, replays memory and tool actions, and provides structured multi-agent workflow testing with observability.

Standout Capabilities

- Structured workflow replay

- Tool and memory action testing

- Multi-agent supervision

- Human-in-the-loop checkpoints

- Observability dashboards

AI-Specific Depth

- Model support: BYO / multi-model

- RAG / knowledge integration: connectors

- Evaluation: regression tests

- Guardrails: schema validation and workflow policies

- Observability: latency, token usage

Pros

- Type-safe workflow replay

- Python developer-friendly

- Production-ready multi-agent testing

Cons

- Python expertise required

- Less visual/low-code support

- Complex orchestration may need custom dashboards

Deployment & Platforms

Python, cloud / hybrid

Integrations & Ecosystem

Python apps, RAG pipelines, APIs

Pricing Model

Open-source

Best-Fit Scenarios

- Structured reasoning workflows

- Python-first multi-agent replay

- Enterprise workflow testing

10- Dify Replay

One-line verdict: Low-code replay framework for multi-agent workflows with memory, tool, and RAG testing.

Short description:

Dify Replay provides a visual environment for replaying multi-agent workflows, testing tool execution, memory usage, and RAG pipelines safely.

Standout Capabilities

- Visual workflow replay

- Tool and memory testing

- Multi-agent metrics

- RAG pipeline replay

- Alerting dashboards

AI-Specific Depth

- Model support: Hosted / BYO

- RAG / knowledge integration: connectors

- Evaluation: workflow and tool replay tests

- Guardrails: policy enforcement

- Observability: latency, token usage

Pros

- Low-code rapid deployment

- Multi-agent workflow testing

- Visual dashboards for replay

Cons

- Less control for complex workflows

- Governance depends on setup

- Complex scenarios may need engineering

Deployment & Platforms

Web, cloud / self-hosted

Integrations & Ecosystem

LLMs, APIs, RAG pipelines, workflow tools

Pricing Model

Open-source / tiered

Best-Fit Scenarios

- Rapid prototyping

- Multi-agent RAG workflows

- Enterprise workflow replay

Comparison Table

| Tool | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| LangGraph Replay Engine | Enterprise workflows | Cloud / Hybrid | Multi-model / BYO | Durable multi-agent replay | Complexity | N/A |
| OpenAI Replay SDK | OpenAI agents | Cloud | OpenAI / BYO | Workflow & tool replay | Limited outside OpenAI | N/A |
| CrewAI Replay | Role-based workflows | Cloud / Self-hosted | BYO / Multi-model | Role-based replay | Complexity | N/A |
| Microsoft Semantic Replay | Enterprise AI | Cloud / Hybrid | Multi-model / BYO | Enterprise-grade replay | Microsoft ecosystem | N/A |
| Microsoft Agent Framework Replay | Enterprise orchestration | Cloud / Hybrid | Multi-model | Unified workflow replay | Microsoft-centric | N/A |
| AutoGen Replay | Research workflows | Cloud / Local | BYO / Multi-model | Multi-agent experimentation | Production readiness | N/A |
| LlamaIndex Replay | Knowledge-heavy workflows | Cloud / Hybrid | BYO / Multi-model | RAG-focused replay | Engineering skill | N/A |
| Haystack Replay | Modular workflows | Cloud / Hybrid | BYO / Multi-model | Modular replay | Multi-agent collaboration | N/A |
| Pydantic Replay | Structured outputs | Cloud / Hybrid | BYO / Multi-model | Type-safe workflow replay | Python-dependent | N/A |
| Dify Replay | Low-code workflows | Cloud / Self-hosted | Hosted / BYO | Rapid visual replay | Governance setup | N/A |

Scoring & Evaluation

| Tool | Core | Reliability | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| LangGraph Replay Engine | 9 | 8 | 9 | 9 | 7 | 8 | 8 | 8 | 8.4 |
| OpenAI Replay SDK | 8 | 8 | 8 | 8 | 8 | 7 | 7 | 8 | 7.8 |
| CrewAI Replay | 8 | 7 | 8 | 8 | 8 | 7 | 7 | 8 | 7.7 |
| Microsoft Semantic Replay | 8 | 8 | 8 | 8 | 7 | 7 | 8 | 8 | 7.8 |
| Microsoft Agent Framework Replay | 8 | 8 | 8 | 8 | 7 | 7 | 8 | 8 | 7.8 |
| AutoGen Replay | 7 | 6 | 6 | 7 | 7 | 7 | 6 | 7 | 6.6 |
| LlamaIndex Replay | 8 | 7 | 8 | 9 | 7 | 7 | 7 | 8 | 7.7 |
| Haystack Replay | 8 | 7 | 7 | 8 | 7 | 7 | 7 | 8 | 7.4 |
| Pydantic Replay | 7 | 8 | 8 | 7 | 8 | 7 | 7 | 7 | 7.4 |
| Dify Replay | 7 | 6 | 7 | 8 | 9 | 7 | 7 | 7 | 7.2 |

Top 3 for Enterprise: LangGraph Replay Engine, Microsoft Semantic Replay, Microsoft Agent Framework Replay

Top 3 for SMB: Dify Replay, CrewAI Replay, OpenAI Replay SDK

Top 3 for Developers: LangGraph Replay Engine, Pydantic Replay, LlamaIndex Replay
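The article does not state the weights behind the Weighted Total column. The sketch below assumes a 25/15/15/10/10/10/10/5 percent split across the eight criteria, which reproduces the published totals when rounded half up to one decimal. Treat the weights as an inference, not the author's stated method, and adjust them to reflect your own priorities.

```python
from typing import Dict

# Assumed weights in percent (not given in the article); they sum to 100.
WEIGHTS: Dict[str, int] = {
    "core": 25,
    "reliability": 15,
    "guardrails": 15,
    "integrations": 10,
    "ease": 10,
    "perf_cost": 10,
    "security_admin": 10,
    "support": 5,
}


def weighted_total(scores: Dict[str, int]) -> float:
    """Weighted score on a 0-10 scale, rounded half up to one decimal."""
    # score (0-10) times weight (percent) gives hundredths of a point.
    hundredths = sum(scores[name] * weight for name, weight in WEIGHTS.items())
    # Integer arithmetic avoids float rounding surprises on values like 7.65.
    return ((hundredths + 5) // 10) / 10


# Rows copied from the scoring table above.
langgraph = {
    "core": 9, "reliability": 8, "guardrails": 9, "integrations": 9,
    "ease": 7, "perf_cost": 8, "security_admin": 8, "support": 8,
}
crewai = {
    "core": 8, "reliability": 7, "guardrails": 8, "integrations": 8,
    "ease": 8, "perf_cost": 7, "security_admin": 7, "support": 8,
}
dify = {
    "core": 7, "reliability": 6, "guardrails": 7, "integrations": 8,
    "ease": 9, "perf_cost": 7, "security_admin": 7, "support": 7,
}

totals = {
    "LangGraph Replay Engine": weighted_total(langgraph),  # 8.4
    "CrewAI Replay": weighted_total(crewai),               # 7.7
    "Dify Replay": weighted_total(dify),                   # 7.2
}
```

A team that cares more about ease of use than guardrails, for example, can change the two weights and re-rank the tools without re-scoring them.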

Which Agent Test & Replay Framework Is Right for You

Solo / Freelancer

Dify Replay and Pydantic Replay are good fits for prototyping and small-scale agent workflows. The former offers low-code replay and the latter Python-first replay, and neither requires heavy infrastructure.

SMB

CrewAI Replay, Dify Replay, and OpenAI Replay SDK provide practical multi-agent replay and monitoring for small and mid-sized teams.

Mid-Market

LangGraph Replay Engine, LlamaIndex Replay, and Haystack Replay offer advanced replay, observability, and RAG workflow validation suitable for growing teams.

Enterprise

Microsoft Semantic Replay, Microsoft Agent Framework Replay, and LangGraph Replay Engine are best for large-scale multi-agent workflow replay with enterprise-grade monitoring and compliance features.

Regulated Industries

Finance, healthcare, insurance, and legal teams should focus on human-in-the-loop checks, audit logs, and replaying critical workflows. Microsoft and LangGraph frameworks are particularly well-suited.

Budget vs Premium

Budget-conscious teams: Dify Replay, AutoGen Replay, Pydantic Replay

Premium / enterprise: LangGraph Replay Engine, Microsoft frameworks

Build vs Buy

Build if workflows require highly customized replay and testing rules. Buy or adopt platforms for enterprise-ready dashboards, low-code integration, and prebuilt monitoring.

Implementation Playbook 30 / 60 / 90 Days

30 Days: Identify high-risk workflows, record initial agent actions, and replay basic multi-agent interactions. Add human-in-the-loop checkpoints and logs.

60 Days: Expand replay to all active agents, integrate memory and RAG pipeline replay, establish dashboards for token usage, latency, and cost, and run regression tests.

90 Days: Optimize workflow replay performance, scale replay across departments, implement governance for replay policies, and validate all workflows with red-teaming and anomaly detection.
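The regression tests mentioned in the 60-day step usually come down to comparing a fresh replay against a stored baseline. A minimal sketch, assuming each trace has already been reduced to an ordered list of `(tool, argument)` pairs; `first_divergence` is a hypothetical helper name.

```python
from typing import List, Optional, Tuple

Step = Tuple[str, str]  # (tool name, canonical argument string)


def first_divergence(baseline: List[Step], candidate: List[Step]) -> Optional[int]:
    """Return the index of the first differing step, or None if traces match."""
    for index, (expected, actual) in enumerate(zip(baseline, candidate)):
        if expected != actual:
            return index
    if len(baseline) != len(candidate):
        # One trace is a strict prefix of the other.
        return min(len(baseline), len(candidate))
    return None


baseline = [("search", "q"), ("summarize", "doc-1"), ("reply", "draft")]
changed = [("search", "q"), ("summarize", "doc-2"), ("reply", "draft")]
truncated = baseline[:2]

same_result = first_divergence(baseline, list(baseline))  # None
changed_result = first_divergence(baseline, changed)      # 1
truncated_result = first_divergence(baseline, truncated)  # 2
```

Reporting the first divergent step, not just pass or fail, tells the reviewer where in the workflow a prompt or model change altered the agent's behaviour.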

Common Mistakes

- Replaying only single-agent workflows and ignoring multi-agent interactions

- Not tracking tool or API execution during replay

- Ignoring memory or RAG pipeline interactions

- Skipping human-in-the-loop checkpoints for sensitive workflows

- Not capturing latency, token usage, and cost metrics

- Failing to version and roll back workflows for reproducibility

- Overlooking regression tests during replay

- Not integrating replay frameworks with policy or guardrail systems

- Scaling replay before validation

- Underestimating governance and compliance requirements

- Failing to red-team workflows

- Assuming one replay setup fits all agent types

- Ignoring blocked or unsafe actions

- Not monitoring workflow performance during replay
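For the versioning and rollback mistake in the list above, one lightweight approach is to derive a version identifier from the workflow definition itself. The sketch below assumes the definition is a JSON-serializable dictionary; `workflow_version` and `VersionStore` are hypothetical names, not part of any listed framework.

```python
import hashlib
import json
from typing import Dict, List


def workflow_version(definition: dict) -> str:
    """Content hash of a workflow definition; key order does not matter."""
    canonical = json.dumps(definition, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


class VersionStore:
    """Keeps every committed definition so a replay can pin or roll back."""

    def __init__(self) -> None:
        self._versions: Dict[str, dict] = {}
        self._history: List[str] = []

    def commit(self, definition: dict) -> str:
        version = workflow_version(definition)
        # JSON round trip gives a deep copy, so later edits cannot alter history.
        self._versions[version] = json.loads(json.dumps(definition))
        self._history.append(version)
        return version

    def rollback(self) -> dict:
        """Drop the latest version and return the previous definition."""
        if len(self._history) < 2:
            raise ValueError("no earlier version to roll back to")
        self._history.pop()
        return self._versions[self._history[-1]]


store = VersionStore()
v1_definition = {"model": "model-a", "tools": ["search"], "temperature": 0}
v2_definition = {"model": "model-a", "tools": ["search", "email"], "temperature": 0}

v1 = store.commit(v1_definition)
v2 = store.commit(v2_definition)
restored = store.rollback()
```

Storing the version string with each recorded trace makes it possible to tell whether a replay failure comes from the agent's behaviour or from a workflow definition that changed underneath it.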

FAQs

1. What are agent test & replay frameworks?

Platforms that record and replay AI agent workflows, including tool calls, memory, and RAG pipelines, for testing and validation.

2. Why are they important?

They help detect unsafe behaviors, logic errors, and performance issues before agents impact production systems.

3. Can multiple agents be replayed together?

Yes, modern frameworks support multi-agent workflows and coordinated replay for complex interactions.

4. Do these tools support RAG pipelines?

Yes, they allow replaying retrieval-augmented generation pipelines and monitoring memory or tool usage.

5. Can human-in-the-loop checks be added?

Yes, checkpoints can approve or review agent actions during replay, especially for critical workflows.

6. Are these frameworks model-agnostic?

Most support BYO, open-source, proprietary, and multi-model agent workflows.

7. How do these frameworks measure performance?

They track latency, token usage, cost, tool execution, workflow completion, and anomalies.

8. Can they help with compliance?

Yes, audit logs, human review, and workflow traceability are included for regulated environments.

9. Do they increase latency?

Logging and monitoring can add a small amount of latency, but the overhead is usually an acceptable trade for safer, easier-to-debug workflows.

10. Are open-source frameworks enough for enterprise use?

Open-source can be used for prototyping, but enterprises may require dashboards, alerts, and full human-in-the-loop integration.

Conclusion

Agent Test & Replay Frameworks are essential for safely validating multi-agent workflows, tool calls, memory usage, and RAG pipelines. LangGraph Replay Engine, Microsoft Semantic Replay, and Microsoft Agent Framework Replay excel in enterprise and regulated environments, while Dify Replay, Pydantic Replay, and AutoGen Replay are ideal for prototyping and smaller teams. The best framework depends on workflow complexity, multi-agent coordination, compliance requirements, and budget.
