Introduction

Large Language Model (LLM) Hosting Platforms are infrastructure systems that allow developers and enterprises to deploy, run, scale, and manage large language models without building or maintaining complex machine learning infrastructure from scratch. Instead of worrying about GPUs, distributed inference, scaling logic, or model serving pipelines, teams can use these platforms to access optimized, production-ready LLM endpoints.

In simple terms, these platforms are the “runtime layer” for modern AI applications. They power chatbots, AI agents, coding assistants, enterprise search systems, document automation tools, and multimodal AI workflows.

Today, LLM hosting is not just about serving a model. It includes orchestration, model routing, fine-tuning support, evaluation frameworks, observability, safety guardrails, and cost optimization systems. In production environments, the hosting layer can matter as much as the model itself, because it largely determines the reliability, latency, and scalability that users actually experience.

Common real-world use cases include:

- Hosting chat-based AI assistants for enterprises

- Running customer support automation systems

- Deploying AI copilots for software tools

- Powering RAG-based knowledge systems

- Serving fine-tuned domain-specific models

- Running autonomous AI agents in workflows

When evaluating LLM hosting platforms, buyers typically focus on:

- Inference latency and throughput

- Model compatibility (open-source, proprietary, fine-tuned models)

- Scalability under heavy load

- Cost efficiency per token or request

- GPU availability and optimization

- Deployment flexibility (cloud, hybrid, self-hosted)

- Observability and monitoring tools

- Security and access control

- Evaluation and testing support

- Vendor lock-in risk
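
Cost efficiency per token is easier to compare across platforms with a small back-of-the-envelope calculation. The sketch below estimates monthly spend from request volume and token counts. The `TokenPricing` type, the prices, and the traffic figures are hypothetical placeholders chosen for illustration, not quotes from any platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPricing:
    """Hypothetical per-million-token prices in USD (not real vendor rates)."""
    input_per_million: float
    output_per_million: float


def monthly_cost(pricing: TokenPricing, requests_per_day: int,
                 input_tokens: int, output_tokens: int, days: int = 30) -> float:
    """Estimate monthly spend for a workload with a fixed request shape."""
    total_requests = requests_per_day * days
    input_cost = total_requests * input_tokens / 1_000_000 * pricing.input_per_million
    output_cost = total_requests * output_tokens / 1_000_000 * pricing.output_per_million
    return round(input_cost + output_cost, 2)


# Assumed workload: 10,000 requests/day, 1,000 input and 500 output tokens each.
example_pricing = TokenPricing(input_per_million=0.50, output_per_million=1.50)
estimate = monthly_cost(example_pricing, requests_per_day=10_000,
                        input_tokens=1_000, output_tokens=500)
print(f"Estimated monthly cost: ${estimate:,.2f}")  # prints $375.00 for these inputs
```

Platforms that bill by GPU time rather than by token need a different model (hours of compute multiplied by an hourly rate), so compare like with like.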

Best for: AI engineers, platform teams, startups building AI products, and enterprises deploying production-grade AI systems.

Not ideal for: casual users or teams that only need basic chat interfaces without scaling or infrastructure control.

What’s Changed in LLM Hosting Platforms

Modern LLM hosting platforms have evolved significantly and now include full AI infrastructure capabilities:

- Shift from simple model serving to AI orchestration platforms

- Native support for agent execution and tool calling

- Integration of multi-model routing systems

- Strong focus on low-latency inference optimization

- Built-in auto-scaling GPU infrastructure

- Expansion of serverless LLM inference offerings

- Increased adoption of open-source model hosting

- Built-in evaluation and regression testing frameworks

- Advanced prompt-injection defenses and safety guardrails

- Deep observability with traces, logs, and token metrics

- Support for fine-tuning pipelines and LoRA adapters

- Hybrid deployments combining cloud + private inference

- Cost optimization using batching and caching systems

- Enterprise-grade RBAC, audit logs, and compliance controls

Quick Buyer Checklist (Scan-Friendly)

Before selecting an LLM hosting platform, evaluate:

- Latency and throughput performance

- GPU availability and autoscaling capabilities

- Support for open-source and proprietary models

- Fine-tuning and adapter support

- Multi-model routing capabilities

- RAG compatibility and vector DB integration

- Observability (logs, traces, metrics)

- Cost optimization tools (caching, batching)

- Security controls (RBAC, SSO, audit logs)

- Deployment flexibility (cloud, hybrid, self-hosted)

- API stability and versioning strategy

- Lock-in risk and portability options

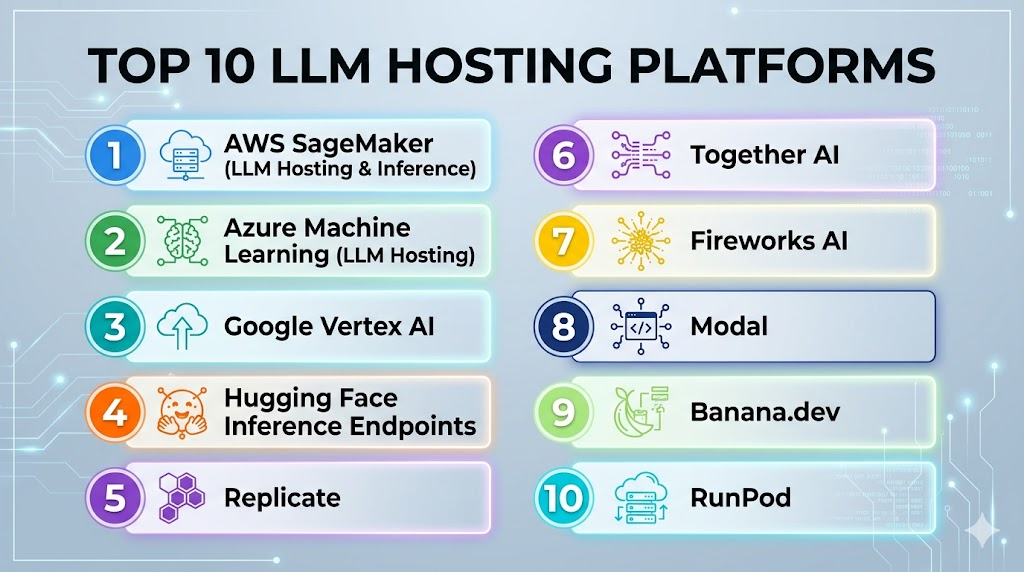

Top 10 LLM Hosting Platforms

#1 — AWS SageMaker (LLM Hosting & Inference)

One-line verdict: Best for enterprise-grade, scalable LLM deployment within the AWS ecosystem.

Short description:

AWS SageMaker provides a full machine learning hosting stack, including LLM inference endpoints, GPU scaling, and model deployment pipelines.

Standout Capabilities

- Managed GPU inference endpoints

- Auto-scaling model serving

- Integration with AWS ecosystem

- Support for custom and open-source models

- MLOps pipeline integration

AI-Specific Depth

- Model support: Open-source + custom + proprietary via integrations

- RAG: Native AWS ecosystem support

- Evaluation: External or SageMaker tools

- Guardrails: AWS security layers + optional controls

- Observability: CloudWatch metrics and logs

Pros

- Highly scalable infrastructure

- Strong enterprise reliability

- Deep AWS integration

Cons

- Complex setup and configuration

- Higher operational learning curve

Security & Compliance

- Enterprise-grade AWS security controls

Deployment & Platforms

- Cloud (AWS)

Integrations & Ecosystem

- S3, Lambda, Bedrock, Redshift

Pricing Model

Usage-based (compute + GPU time)

Best-Fit Scenarios

- Enterprise AI systems

- Large-scale LLM deployments

- Cloud-native AI platforms

#2 — Azure Machine Learning (LLM Hosting)

One-line verdict: Best for enterprise LLM deployment inside the Microsoft ecosystem.

Short description:

Provides managed LLM hosting with strong governance, security, and integration with Azure services.

Standout Capabilities

- Managed inference endpoints

- Enterprise governance controls

- GPU cluster management

- Integration with Azure OpenAI ecosystem

AI-Specific Depth

- Model support: Custom + open-source + Azure-hosted models

- RAG: Azure AI Search integration

- Evaluation: External or Azure tooling

- Guardrails: Enterprise policy controls

- Observability: Azure Monitor

Pros

- Strong enterprise compliance

- Secure deployment options

- Microsoft ecosystem integration

Cons

- Complex configuration

- Slower iteration cycles

Security & Compliance

- Full Azure enterprise security stack

Deployment & Platforms

- Cloud (Azure)

Integrations & Ecosystem

- Microsoft 365, Power BI, Azure Data services

Pricing Model

Usage-based (compute + endpoints)

Best-Fit Scenarios

- Enterprise AI platforms

- Regulated industries

- Microsoft-centric organizations

#3 — Google Vertex AI

One-line verdict: Best for scalable multimodal and LLM hosting in cloud-native environments.

Short description:

Provides managed model hosting with strong support for multimodal and large-scale AI workloads.

Standout Capabilities

- Managed model endpoints

- GPU autoscaling

- Multimodal model support

- Integrated ML pipeline tools

AI-Specific Depth

- Model support: Gemini + custom models

- RAG: Native integration tools

- Evaluation: Platform evaluation tools

- Guardrails: Safety filters included

- Observability: Cloud logging systems

Pros

- Strong scalability

- Multimodal support

- Cloud-native design

Cons

- Complex ecosystem

- Learning curve for new users

Security & Compliance

- Enterprise Google Cloud security

Deployment & Platforms

- Cloud (Google Cloud)

Integrations & Ecosystem

- BigQuery, Cloud Storage, Dataflow

Pricing Model

Usage-based

Best-Fit Scenarios

- Multimodal AI systems

- Data-heavy AI applications

- Enterprise ML pipelines

#4 — Hugging Face Inference Endpoints

One-line verdict: Best for deploying open-source LLMs quickly with minimal infrastructure overhead.

Short description:

Provides managed hosting for open-source LLMs with easy deployment and scaling.

Standout Capabilities

- One-click model deployment

- Wide open-source model library

- Autoscaling inference endpoints

- GPU-backed hosting

AI-Specific Depth

- Model support: Open-source models

- RAG: External integration required

- Evaluation: External tools

- Guardrails: Basic filters

- Observability: Usage metrics

Pros

- Easy deployment

- Large model ecosystem

- Developer-friendly

Cons

- Limited enterprise governance

- Performance varies by model

Security & Compliance

- Not fully standardized across tiers

Deployment & Platforms

- Cloud + private endpoints

Integrations & Ecosystem

- Hugging Face Hub ecosystem

Pricing Model

Usage-based (compute time)

Best-Fit Scenarios

- Open-source LLM deployment

- Prototyping AI apps

- Research workloads

#5 — Replicate

One-line verdict: Best for fast deployment and experimentation with diverse LLMs.

Short description:

Provides simple API-based hosting for a wide range of AI models.

Standout Capabilities

- Wide model catalog

- Simple API deployment

- Rapid prototyping support

- Community-driven models

AI-Specific Depth

- Model support: Open-source + community models

- RAG: External systems

- Evaluation: Not built-in

- Guardrails: Minimal

- Observability: Basic logs

Pros

- Very easy to use

- Fast experimentation

- Large model variety

Cons

- Not enterprise-grade

- Limited control and governance

Security & Compliance

- Not fully detailed publicly

Deployment & Platforms

- Cloud API

Integrations & Ecosystem

- Developer experimentation ecosystem

Pricing Model

Usage-based per model

Best-Fit Scenarios

- Prototyping

- Research experiments

- Model testing

#6 — Together AI

One-line verdict: Best for scalable open-source LLM hosting and fine-tuning.

Short description:

Specializes in hosting and serving open-source models at scale.

Standout Capabilities

- Open-source model hosting

- Fine-tuning support

- High-performance inference

- Scalable API endpoints

AI-Specific Depth

- Model support: Open-source models

- RAG: External

- Evaluation: External tools

- Guardrails: Limited

- Observability: Basic metrics

Pros

- Flexible model control

- Cost-effective scaling

- Strong OSS support

Cons

- Limited enterprise features

- Requires engineering effort

Security & Compliance

- Not fully publicly stated

Deployment & Platforms

- Cloud API

Integrations & Ecosystem

- Hugging Face compatible workflows

Pricing Model

Usage-based

Best-Fit Scenarios

- Open-source LLM hosting

- Custom AI pipelines

- Research environments

#7 — Fireworks AI

One-line verdict: Best for high-speed optimized LLM inference.

Short description:

Focuses on low-latency, high-throughput model serving infrastructure.

Standout Capabilities

- Ultra-fast inference engine

- Optimized GPU usage

- Scalable model endpoints

- Real-time AI performance

AI-Specific Depth

- Model support: Mixed models

- RAG: External

- Evaluation: Limited

- Guardrails: Basic

- Observability: Performance metrics

Pros

- Very fast inference

- Efficient infrastructure

- Developer-friendly APIs

Cons

- Limited governance tools

- Smaller ecosystem

Security & Compliance

- Not fully detailed publicly

Deployment & Platforms

- Cloud API

Integrations & Ecosystem

- LLM orchestration tools

Pricing Model

Usage-based

Best-Fit Scenarios

- Real-time AI systems

- Chat applications

- High-throughput workloads

#8 — Modal

One-line verdict: Best serverless GPU platform for scalable LLM workloads.

Short description:

Provides serverless GPU infrastructure for running LLMs and AI workloads.

Standout Capabilities

- Serverless GPU execution

- Auto-scaling workloads

- Python-first deployment

- Flexible compute model

AI-Specific Depth

- Model support: Custom + open-source

- RAG: External

- Evaluation: External tools

- Guardrails: Minimal

- Observability: Execution logs

Pros

- Flexible serverless model

- Easy scaling

- Developer-friendly

Cons

- Requires engineering setup

- Not a plug-and-play enterprise solution

Security & Compliance

- Not fully publicly stated

Deployment & Platforms

- Cloud serverless

Integrations & Ecosystem

- Python ML ecosystem

Pricing Model

Compute-based

Best-Fit Scenarios

- Dynamic workloads

- AI pipelines

- Custom LLM services

#9 — Banana.dev

One-line verdict: Best for simple GPU-based LLM hosting APIs.

Short description:

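Provides straightforward GPU-based model deployment with API access. Banana announced in early 2024 that it was shutting down its serverless GPU service, so verify current availability before planning around it.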

Standout Capabilities

- Simple deployment model

- GPU-backed inference

- API-first design

- Fast setup

AI-Specific Depth

- Model support: Custom models

- RAG: External

- Evaluation: Not built-in

- Guardrails: Minimal

- Observability: Basic logs

Pros

- Easy deployment

- Fast setup

- Lightweight system

Cons

- Limited scalability features

- Minimal enterprise tooling

Security & Compliance

- Not publicly detailed

Deployment & Platforms

- Cloud API

Integrations & Ecosystem

- Basic API ecosystem

Pricing Model

Usage-based

Best-Fit Scenarios

- Small AI apps

- Prototypes

- Lightweight inference

#10 — RunPod

One-line verdict: Best for flexible GPU hosting and custom LLM deployments.

Short description:

Provides GPU cloud infrastructure for hosting and running LLM workloads.

Standout Capabilities

- GPU instance hosting

- Flexible model deployment

- Serverless GPU options

- Cost-efficient scaling

AI-Specific Depth

- Model support: Custom + open-source

- RAG: External

- Evaluation: External tools

- Guardrails: Minimal

- Observability: Basic metrics

Pros

- Flexible infrastructure

- Cost-effective GPU access

- Developer control

Cons

- Requires setup effort

- Limited enterprise tooling

Security & Compliance

- Not standardized publicly

Deployment & Platforms

- Cloud + self-managed

Integrations & Ecosystem

- ML frameworks and Docker support

Pricing Model

Usage-based GPU pricing

Best-Fit Scenarios

- Custom LLM hosting

- Experimental AI systems

- GPU-heavy workloads

Comparison Table

| Platform | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| AWS SageMaker | Enterprise hosting | Cloud | High | Scalability | Complexity | N/A |
| Azure ML | Enterprise AI | Cloud | High | Security | Setup complexity | N/A |
| Vertex AI | Multimodal AI | Cloud | High | Cloud integration | Learning curve | N/A |
| Hugging Face | OSS deployment | Cloud | Open-source | Ease of use | Limited governance | N/A |
| Replicate | Experimentation | Cloud | Mixed | Simplicity | Not enterprise-ready | N/A |
| Together AI | OSS scaling | Cloud | Open-source | Flexibility | Limited governance | N/A |
| Fireworks AI | Fast inference | Cloud | Mixed | Speed | Smaller ecosystem | N/A |
| Modal | Serverless GPU | Cloud | Custom | Flexibility | Setup effort | N/A |
| Banana.dev | Simple hosting | Cloud | Custom | Ease of use | Limited scaling | N/A |
| RunPod | GPU hosting | Cloud / self-managed | Custom | Cost control | Manual setup | N/A |

Scoring & Evaluation (Transparent Rubric)

| Platform | Core | Reliability | Guardrails | Integrations | Ease | Perf/Cost | Security | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| AWS SageMaker | 10 | 9 | 8 | 10 | 7 | 8 | 10 | 9 | 8.7 |
| Azure ML | 9 | 9 | 9 | 10 | 7 | 8 | 10 | 9 | 8.6 |
| Vertex AI | 9 | 8 | 8 | 10 | 7 | 8 | 9 | 9 | 8.4 |
| Hugging Face | 8 | 8 | 7 | 9 | 9 | 8 | 7 | 8 | 8.0 |
| Replicate | 7 | 7 | 6 | 7 | 10 | 8 | 6 | 7 | 7.2 |
| Together AI | 8 | 8 | 6 | 8 | 8 | 9 | 7 | 7 | 7.8 |
| Fireworks AI | 8 | 8 | 6 | 7 | 8 | 10 | 7 | 7 | 7.8 |
| Modal | 8 | 7 | 6 | 8 | 8 | 9 | 7 | 7 | 7.7 |
| Banana.dev | 7 | 7 | 5 | 6 | 9 | 8 | 6 | 6 | 7.0 |
| RunPod | 8 | 7 | 6 | 7 | 8 | 9 | 7 | 7 | 7.6 |
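
The Weighted Total column implies a set of per-criterion weights, but the weights themselves are not listed here. The sketch below shows how such a total is typically computed so you can re-score the platforms with your own priorities. The `WEIGHTS` values are purely hypothetical, so the result will not reproduce the totals in the table.

```python
# Hypothetical weights (they sum to 1.0); the rubric's actual weights are not published here.
WEIGHTS = {
    "core": 0.20, "reliability": 0.15, "guardrails": 0.10, "integrations": 0.10,
    "ease": 0.10, "perf_cost": 0.15, "security": 0.10, "support": 0.10,
}


def weighted_total(scores: dict, weights: dict = WEIGHTS) -> float:
    """Weighted average of criterion scores, normalized by the sum of weights."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same criteria")
    total_weight = sum(weights.values())
    return round(sum(scores[name] * weights[name] for name in weights) / total_weight, 2)


# Criterion scores copied from the AWS SageMaker row of the table.
sagemaker = {"core": 10, "reliability": 9, "guardrails": 8, "integrations": 10,
             "ease": 7, "perf_cost": 8, "security": 10, "support": 9}
print(weighted_total(sagemaker))
```

Raising the weight on `ease` or `perf_cost` shifts the ranking toward the developer-focused platforms, which is why it is worth re-running the numbers against your own priorities.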

Which LLM Hosting Platform Is Right for You?

Solo / Developer

- Replicate

- Banana.dev

- RunPod

Startup / SMB

- Fireworks AI

- Together AI

- Hugging Face

Mid-Market

- Vertex AI

- AWS SageMaker

- Modal

Enterprise

- Azure ML

- AWS SageMaker

- Vertex AI

Regulated Industries

- Azure ML

- AWS SageMaker

- Vertex AI

Implementation Playbook (30 / 60 / 90 Days)

30 Days

- Deploy initial LLM endpoint

- Benchmark latency and cost

- Set up basic logging

- Test 1–2 models
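
For the benchmarking step, a small harness that records per-request latency and reports percentiles is usually enough to start. The sketch below is one minimal way to do that. `fake_endpoint` is a hypothetical stand-in for a real client call, and the nearest-rank percentile method is an assumption made for simplicity.

```python
import math
import statistics
import time
from typing import Callable


def percentile(samples: list, pct: float) -> float:
    """Nearest-rank percentile of a non-empty list (pct between 0 and 100)."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def benchmark(call: Callable[[str], str], prompts: list) -> dict:
    """Call the endpoint once per prompt and summarize latency in seconds."""
    latencies = []
    for prompt in prompts:
        start = time.perf_counter()
        call(prompt)
        latencies.append(time.perf_counter() - start)
    return {
        "p50": percentile(latencies, 50),
        "p95": percentile(latencies, 95),
        "mean": statistics.mean(latencies),
    }


def fake_endpoint(prompt: str) -> str:
    """Hypothetical stand-in; replace with a call to your platform's client."""
    time.sleep(0.001)
    return prompt.upper()


report = benchmark(fake_endpoint, ["hello"] * 20)
print({name: round(seconds * 1000, 2) for name, seconds in report.items()})  # milliseconds
```

This measures sequential latency only. Throughput under concurrency needs parallel requests, and results should be collected against realistic prompt and output lengths.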

60 Days

- Add autoscaling and load balancing

- Introduce evaluation pipeline

- Implement observability dashboards

- Add guardrails

90 Days

- Optimize cost and GPU usage

- Implement model routing

- Add governance and RBAC

- Scale to production workloads

Common Mistakes & How to Avoid Them

- Ignoring GPU cost optimization

- No observability setup

- Over-reliance on one model provider

- No evaluation framework

- Poor scaling strategy

- Missing fallback models

- Not testing under load

- Weak security controls

- No prompt/version tracking

- Underestimating latency requirements

- Skipping caching strategies

- No governance or audit logs

- Poor RAG optimization

- No disaster recovery plan
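
Several of these mistakes, such as missing fallback models and over-reliance on one provider, can be mitigated with a thin wrapper that tries providers in order. The sketch below illustrates the idea under simplifying assumptions. `primary` and `backup` are hypothetical stand-ins for real client calls, and production code would catch the client's specific error types rather than `Exception`.

```python
from typing import Callable, Sequence, Tuple


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider raised an error."""


def complete_with_fallback(
    prompt: str,
    providers: Sequence[Tuple[str, Callable[[str], str]]],
) -> Tuple[str, str]:
    """Try each provider in order; return (provider_name, response) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # assumption: real code narrows this to client errors
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


def primary(prompt: str) -> str:
    """Hypothetical primary endpoint that is currently failing."""
    raise TimeoutError("primary endpoint timed out")


def backup(prompt: str) -> str:
    """Hypothetical backup endpoint."""
    return f"echo: {prompt}"


used, answer = complete_with_fallback("ping", [("primary", primary), ("backup", backup)])
print(used, answer)  # prints: backup echo: ping
```

Logging which provider served each request also feeds the observability and audit needs listed above.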

FAQs

1. What is LLM hosting?

It is the process of deploying and serving large language models through scalable infrastructure.

2. Why not self-host LLMs?

Self-hosting requires managing GPUs, scaling, and optimization, which hosting platforms simplify.

3. What is serverless LLM hosting?

Serverless hosting runs models on provider-managed infrastructure that scales automatically with demand, so you do not provision or manage GPU servers yourself.

4. Can I host open-source models?

Yes, most platforms support open-source models like Llama variants.

5. What is the cheapest hosting option?

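There is no single cheapest option. GPU marketplaces tend to be more cost-efficient for steady, high-utilization workloads, while serverless platforms tend to be cheaper for bursty or low-volume traffic because you pay only while requests are running.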

6. Do I need GPUs for LLM hosting?

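In most cases, yes. Production LLM hosting generally relies on GPU acceleration, although small or heavily quantized models can sometimes run acceptably on CPUs.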

7. What is model routing?

Model routing automatically selects a model for each request based on cost, speed, or quality requirements.
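
As a rough illustration, a router can be as simple as "pick the cheapest model that meets the latency and quality constraints". The sketch below assumes a hypothetical catalog. The model names, prices, latencies, and quality scores are invented for the example and would come from your own benchmarks and evaluations.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class ModelOption:
    name: str                  # hypothetical model identifier
    cost_per_1k_tokens: float  # hypothetical USD price
    p95_latency_ms: float      # assumed value; measure this on your own traffic
    quality: float             # 0..1 score from your own evaluation suite


def route(options: Sequence[ModelOption], max_latency_ms: float,
          min_quality: float) -> ModelOption:
    """Return the cheapest model that satisfies both constraints."""
    eligible = [
        m for m in options
        if m.p95_latency_ms <= max_latency_ms and m.quality >= min_quality
    ]
    if not eligible:
        raise LookupError("no model satisfies the constraints")
    return min(eligible, key=lambda m: m.cost_per_1k_tokens)


CATALOG = [
    ModelOption("small-fast", 0.0002, 300, 0.70),
    ModelOption("medium", 0.0010, 800, 0.82),
    ModelOption("large", 0.0100, 2500, 0.93),
]

print(route(CATALOG, max_latency_ms=1000, min_quality=0.8).name)  # prints: medium
```

Real routers often add per-request signals such as prompt length or task type, but the constraint-then-cost pattern stays the same.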

8. Can I fine-tune models on hosting platforms?

Yes, many platforms support fine-tuning or adapters like LoRA.

9. Is LLM hosting secure?

Enterprise platforms provide strong security, but configuration matters.

10. What is inference optimization?

Inference optimization covers techniques such as batching, quantization, and caching that improve speed and reduce cost.
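
Of these techniques, caching is the simplest to sketch at the application level. The example below is a minimal exact-match LRU cache keyed on model name, prompt, and parameters. The `ResponseCache` class and `fake_model` function are hypothetical, and the approach assumes deterministic generation settings (for example, temperature 0), since cached answers are replayed verbatim.

```python
import hashlib
import json
from collections import OrderedDict
from typing import Callable


class ResponseCache:
    """Exact-match LRU cache keyed on model name, prompt, and parameters."""

    def __init__(self, max_entries: int = 1024) -> None:
        self._entries = OrderedDict()
        self._max_entries = max_entries
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str, params: dict) -> str:
        payload = json.dumps({"model": model, "prompt": prompt, "params": params},
                             sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get_or_call(self, model: str, prompt: str, params: dict,
                    call: Callable[[str], str]) -> str:
        key = self._key(model, prompt, params)
        if key in self._entries:
            self.hits += 1
            self._entries.move_to_end(key)  # mark as most recently used
            return self._entries[key]
        self.misses += 1
        result = call(prompt)
        self._entries[key] = result
        if len(self._entries) > self._max_entries:
            self._entries.popitem(last=False)  # evict least recently used
        return result


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for an expensive inference call."""
    return prompt[::-1]


cache = ResponseCache(max_entries=2)
first = cache.get_or_call("demo-model", "hello", {"temperature": 0}, fake_model)
second = cache.get_or_call("demo-model", "hello", {"temperature": 0}, fake_model)
print(first, second, cache.hits, cache.misses)  # prints: olleh olleh 1 1
```

Batching and quantization, by contrast, are usually handled inside the serving stack rather than in application code.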

11. Can I switch hosting platforms later?

Yes. Placing an abstraction layer between your application and the provider's API reduces the effort of migrating later.
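
One way to build such a layer is to have application code depend on a small interface rather than on a vendor SDK. The sketch below uses Python's `typing.Protocol` for this. `ChatBackend`, `EchoBackend`, and `ReverseBackend` are hypothetical names, and real backends would wrap a specific platform's client behind the same method.

```python
from typing import Protocol


class ChatBackend(Protocol):
    """Minimal interface the application depends on (hypothetical)."""

    def complete(self, prompt: str, max_tokens: int) -> str: ...


class EchoBackend:
    """Stand-in backend; a real one would call a hosting platform's API."""

    def complete(self, prompt: str, max_tokens: int) -> str:
        return prompt[:max_tokens]


class ReverseBackend:
    """Second stand-in, used to show that backends are interchangeable."""

    def complete(self, prompt: str, max_tokens: int) -> str:
        return prompt[::-1][:max_tokens]


def summarize(backend: ChatBackend, text: str) -> str:
    """Application code sees only the ChatBackend interface."""
    return backend.complete(f"Summarize: {text}", max_tokens=64)


print(summarize(EchoBackend(), "hi"))     # prints: Summarize: hi
print(summarize(ReverseBackend(), "hi"))  # prints: ih :ezirammuS
```

Switching platforms then means writing one new backend class instead of touching every call site.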

12. Do hosting platforms support AI agents?

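Many do. Tool calling and agent execution workflows are increasingly common, but the depth of support varies by platform, so confirm it for the models you plan to run.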

Conclusion

LLM hosting platforms are the backbone of modern AI infrastructure, enabling scalable, efficient, production-ready deployment of large language models. The right platform depends on your priorities, whether that is enterprise security, cost efficiency, open-source flexibility, or ultra-low latency. Long-term success, however, depends on strong observability, evaluation systems, and scalable architecture rather than on model selection alone.
