Introduction

Multimodal Model Platforms are AI systems that allow models to understand and generate information across multiple types of data—such as text, images, audio, video, and documents—within a single unified workflow. Instead of treating each input type separately, these platforms combine them into one reasoning system, enabling more human-like understanding.

In practical terms, these platforms power applications like visual assistants, real-time voice agents, document intelligence systems, video analysis tools, and advanced AI copilots that can “see, hear, and read” at the same time. Modern multimodal platforms are no longer experimental—they are production-grade infrastructure used in enterprise AI systems.

Leading models now support combinations of text + image + audio + video in a single API call, enabling unified reasoning across formats instead of fragmented pipelines.

Common real-world use cases include:

- AI assistants that analyze screenshots and explain them

- Voice-based copilots with real-time responses

- Document + image + chart analysis systems

- Video summarization and understanding tools

- Customer support bots that process screenshots and voice messages

- Medical, legal, and enterprise document interpretation systems

When evaluating multimodal platforms, buyers typically focus on:

- Supported input types (text, image, audio, video)

- Cross-modal reasoning quality

- Latency across modalities

- Context window size

- Model accuracy for vision/audio tasks

- Integration with RAG systems

- Cost per multimodal request

- Tool calling and agent capabilities

- Safety and moderation systems

- Enterprise deployment flexibility

Best for: AI product teams, enterprise automation teams, developers building intelligent assistants, and startups building next-gen AI interfaces.

Not ideal for: simple text-only chatbot use cases or lightweight applications where multimodal input is not required.

What’s Changed in Multimodal Model Platforms

Multimodal platforms have rapidly evolved from simple “vision add-ons” into deeply integrated intelligence systems:

- Shift from text-only LLMs to native multimodal foundation models

- True text + image + audio + video fusion in single models

- Growth of real-time voice AI and conversational agents

- Expansion of video-native understanding models

- Large context windows enabling long document + video reasoning

- Strong improvements in cross-modal reasoning accuracy

- Integration of agentic workflows with multimodal inputs

- Rise of multimodal tool calling (vision + actions)

- Increased focus on latency optimization for real-time apps

- Better OCR + document + diagram understanding

- Enterprise adoption of multimodal RAG pipelines

- Improved evaluation benchmarks for vision/audio reasoning

- Stronger safety filters for visual and audio content

Modern frontier systems like Gemini and GPT-class models now support multimodal reasoning at scale with native architecture design rather than patchwork encoders.

Quick Buyer Checklist (Scan-Friendly)

Before choosing a multimodal platform, evaluate:

- Supported modalities (text, image, audio, video)

- Native vs. add-on multimodal architecture

- Vision reasoning accuracy (charts, diagrams, screenshots)

- Audio understanding and transcription quality

- Video processing capability

- Context window size for multimodal inputs

- Latency under mixed input workloads

- Cost per multimodal request

- RAG and external knowledge integration

- Tool calling and agent support

- Safety filters for image/audio content

- Deployment options (cloud, hybrid, self-hosted)

- API consistency across modalities

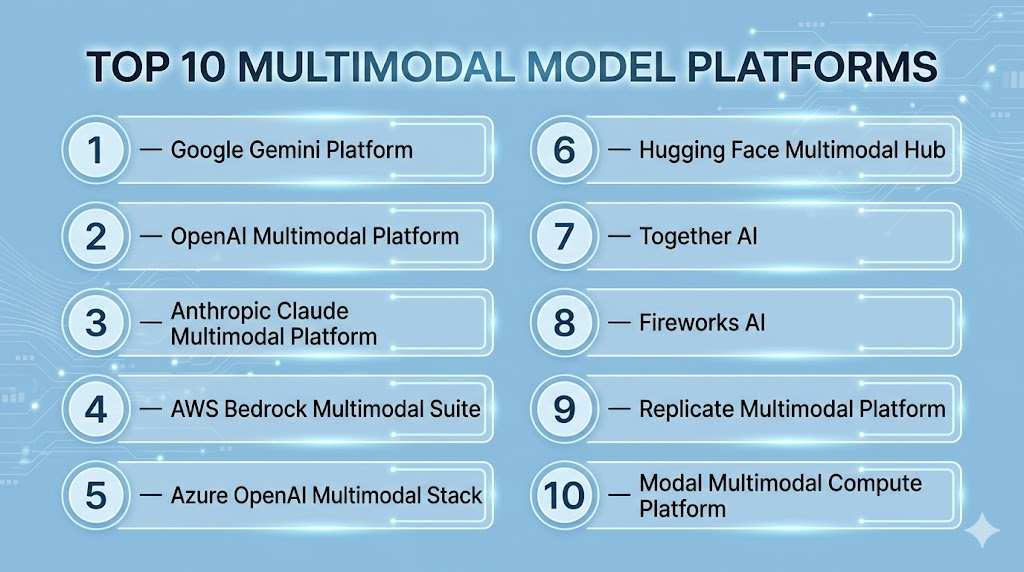

Top 10 Multimodal Model Platforms

#1 — Google Gemini Platform

One-line verdict: Best overall multimodal platform with native text, image, audio, and video understanding.

Short description:

Gemini is designed as a native multimodal system that processes multiple input types in a unified architecture, making it one of the most advanced platforms for cross-modal reasoning.

Standout Capabilities

- Native multimodal architecture (text, image, audio, video)

- Strong long-context reasoning

- Excellent video understanding

- High-quality document and diagram analysis

- Real-time multimodal interaction capabilities

AI-Specific Depth

- Model support: Native multimodal Gemini models

- Vision + audio + video: Fully supported

- RAG: Strong integration with cloud tools

- Evaluation: Built-in benchmarking tools

- Observability: Cloud-native monitoring

Pros

- True multimodal integration (not stitched)

- Strong performance on mixed inputs

- Excellent scalability

Cons

- Complex ecosystem

- Requires cloud dependency

Security & Compliance

- Enterprise-grade cloud security controls

Deployment & Platforms

- Cloud only

Integrations & Ecosystem

- Google Cloud, Vertex AI, BigQuery

Pricing Model

Usage-based cloud pricing

Best-Fit Scenarios

- Video intelligence systems

- Enterprise multimodal AI

- Real-time assistant applications

#2 — OpenAI Multimodal Platform

One-line verdict: Best for high-quality multimodal reasoning and developer-friendly AI APIs.

Short description:

OpenAI platforms support vision, text, and audio capabilities with strong reasoning and agent integration.

Standout Capabilities

- Strong vision reasoning (screenshots, diagrams)

- Audio-based interaction support

- Tool/function calling for agents

- High reasoning accuracy

- Strong ecosystem adoption

AI-Specific Depth

- Model support: Proprietary multimodal models

- Vision/audio: Supported

- Video: Limited/native support varies

- RAG: External integration required

- Observability: Token + request logs

Pros

- High reasoning quality

- Strong developer ecosystem

- Easy API integration

Cons

- Limited full video-native support

- Potential cost scaling

Security & Compliance

- Enterprise controls available

Deployment & Platforms

- Cloud API

Integrations & Ecosystem

- Broad SDK ecosystem and agent frameworks

Pricing Model

Usage-based

Best-Fit Scenarios

- AI copilots

- Vision-based assistants

- Multimodal chat applications

#3 — Anthropic Claude Multimodal Platform

One-line verdict: Best for document + image reasoning with strong safety alignment.

Short description:

Claude excels in analyzing documents, diagrams, and images with high reliability and structured reasoning.

Standout Capabilities

- Strong document + image interpretation

- High-context reasoning

- Safety-focused design

- Reliable structured outputs

AI-Specific Depth

- Model support: Proprietary multimodal models

- Vision: Strong

- Audio/video: Limited support

- RAG: External integration

- Guardrails: Strong built-in alignment

Pros

- Very reliable reasoning

- Excellent for enterprise documents

- Safe outputs

Cons

- Limited multimodal breadth

- No native video-first design

Security & Compliance

- Enterprise-grade offerings available

Deployment & Platforms

- Cloud API

Integrations & Ecosystem

- Enterprise workflow tools

Pricing Model

Usage-based

Best-Fit Scenarios

- Legal and compliance systems

- Document intelligence

- Enterprise assistants

#4 — AWS Bedrock Multimodal Suite

One-line verdict: Best enterprise multimodal platform inside AWS ecosystem.

Short description:

Provides access to multiple multimodal models with enterprise-grade infrastructure and governance.

Standout Capabilities

- Multi-model access

- Enterprise governance controls

- AWS-native integration

- Scalable inference infrastructure

AI-Specific Depth

- Model support: Multiple providers

- Vision/audio: Model-dependent

- RAG: AWS-native

- Observability: CloudWatch

- Guardrails: AWS Guardrails

Pros

- Enterprise-ready

- Flexible model selection

- Strong governance

Cons

- Complex configuration

- Fragmented model behavior

Security & Compliance

- AWS enterprise security stack

Deployment & Platforms

- Cloud (AWS)

Integrations & Ecosystem

- S3, Lambda, SageMaker

Pricing Model

Usage-based

Best-Fit Scenarios

- Enterprise AI systems

- Multi-model multimodal pipelines

- AWS-native applications

#5 — Azure OpenAI Multimodal Stack

One-line verdict: Best for secure enterprise multimodal AI in Microsoft ecosystem.

Short description:

Provides multimodal AI capabilities integrated with Azure’s enterprise infrastructure.

Standout Capabilities

- Vision + text reasoning

- Enterprise-grade governance

- Secure deployment options

- Microsoft ecosystem integration

AI-Specific Depth

- Model support: OpenAI models via Azure

- Vision/audio: Supported

- RAG: Azure AI Search

- Observability: Azure Monitor

Pros

- Strong compliance

- Enterprise security

- Deep integration with Microsoft tools

Cons

- Slower iteration

- Complex setup

Security & Compliance

- Azure enterprise security standards

Deployment & Platforms

- Cloud (Azure)

Integrations & Ecosystem

- Microsoft 365, Power Platform

Pricing Model

Usage-based

Best-Fit Scenarios

- Enterprise workflows

- Regulated industries

- Microsoft-heavy organizations

#6 — Hugging Face Multimodal Hub

One-line verdict: Best open-source multimodal ecosystem for experimentation and deployment.

Short description:

Provides access to a large collection of multimodal models and deployment tools.

Standout Capabilities

- Wide open-source model support

- Vision + language models

- Easy deployment endpoints

- Strong community ecosystem

AI-Specific Depth

- Model support: Open-source multimodal models

- Vision/audio/video: Varies by model

- RAG: External

- Evaluation: External tools

Pros

- Huge model ecosystem

- Flexible experimentation

- Easy prototyping

Cons

- Inconsistent performance

- Limited enterprise governance

Security & Compliance

- Varies by deployment setup

Deployment & Platforms

- Cloud + self-host

Integrations & Ecosystem

- Hugging Face ecosystem tools

Pricing Model

Usage-based or self-hosted

Best-Fit Scenarios

- Research

- Prototyping multimodal apps

- Open-source deployments

#7 — Together AI

One-line verdict: Best for scalable open-source multimodal model hosting.

Short description:

Focuses on hosting and scaling open multimodal models efficiently.

Standout Capabilities

- Open-source multimodal hosting

- Fine-tuning support

- Scalable inference

- API-first architecture

AI-Specific Depth

- Model support: Open-source models

- Vision/audio: Model-dependent

- RAG: External

- Observability: Basic metrics

Pros

- Flexible deployment

- Strong OSS support

- Cost-efficient scaling

Cons

- Limited enterprise tooling

- Requires engineering setup

Security & Compliance

- Not fully standardized publicly

Deployment & Platforms

- Cloud API

Integrations & Ecosystem

- Hugging Face compatible

Pricing Model

Usage-based

Best-Fit Scenarios

- Open-source multimodal systems

- Custom AI pipelines

- Research applications

#8 — Fireworks AI

One-line verdict: Best for fast multimodal inference optimization.

Short description:

Optimized for low-latency multimodal model serving.

Standout Capabilities

- High-speed inference

- Optimized GPU usage

- Real-time multimodal performance

- Scalable APIs

AI-Specific Depth

- Model support: Mixed models

- Vision/audio: Supported depending on model

- RAG: External

- Observability: Performance metrics

Pros

- Very fast inference

- Efficient infrastructure

- Developer-friendly

Cons

- Limited governance tools

- Smaller ecosystem

Security & Compliance

- Not fully publicly stated

Deployment & Platforms

- Cloud API

Integrations & Ecosystem

- LLM orchestration tools

Pricing Model

Usage-based

Best-Fit Scenarios

- Real-time multimodal apps

- Chat + vision systems

- High-throughput workloads

#9 — Replicate Multimodal Platform

One-line verdict: Best for rapid multimodal experimentation and prototyping.

Short description:

Provides API access to a wide variety of multimodal models.

Standout Capabilities

- Large model variety

- Simple API access

- Fast experimentation

- Community models

AI-Specific Depth

- Model support: Open-source + community

- Vision/audio/video: Varies

- RAG: External

- Observability: Basic logs

Pros

- Very easy to use

- Wide experimentation scope

- Fast prototyping

Cons

- Not enterprise-grade

- Limited control

Security & Compliance

- Not standardized

Deployment & Platforms

- Cloud API

Integrations & Ecosystem

- Developer experimentation ecosystem

Pricing Model

Usage-based

Best-Fit Scenarios

- Prototyping multimodal apps

- Research experiments

- Model testing

#10 — Modal Multimodal Compute Platform

One-line verdict: Best serverless GPU platform for multimodal workloads.

Short description:

Serverless GPU platform for running multimodal AI pipelines.

Standout Capabilities

- Serverless GPU execution

- Auto-scaling workloads

- Flexible multimodal pipelines

- Python-native deployment

AI-Specific Depth

- Model support: Custom/open-source

- Vision/audio/video: User-defined

- RAG: External

- Observability: Execution logs

Pros

- Flexible compute

- Easy scaling

- Developer-friendly

Cons

- Requires setup effort

- Not plug-and-play

Security & Compliance

- Not fully publicly detailed

Deployment & Platforms

- Serverless cloud

Integrations & Ecosystem

- Python ML ecosystem

Pricing Model

Compute-based

Best-Fit Scenarios

- Custom multimodal pipelines

- AI infrastructure workloads

- Dynamic workloads

Comparison Table

| Platform | Best For | Deployment | Modalities | Strength | Watch-Out | Public Rating |

|---|---|---|---|---|---|---|

| Gemini | Full multimodal AI | Cloud | Text/Image/Audio/Video | Native multimodal | Ecosystem complexity | N/A |

| OpenAI | General multimodal apps | Cloud | Text/Image/Audio | Reasoning quality | Limited video | N/A |

| Claude | Document + image reasoning | Cloud | Text/Image | Safety + accuracy | Limited modalities | N/A |

| AWS Bedrock | Enterprise multimodal | Cloud | Multi-model | Governance | Complexity | N/A |

| Azure OpenAI | Enterprise AI | Cloud | Text/Image/Audio | Security | Slower updates | N/A |

| Hugging Face | OSS multimodal | Cloud/self | Mixed | Flexibility | Inconsistency | N/A |

| Together AI | OSS scaling | Cloud | Mixed | Cost efficiency | Limited governance | N/A |

| Fireworks AI | Fast inference | Cloud | Mixed | Speed | Smaller ecosystem | N/A |

| Replicate | Experimentation | Cloud | Mixed | Simplicity | Not enterprise-ready | N/A |

| Modal | Serverless compute | Cloud | Custom | Flexibility | Setup complexity | N/A |

Scoring & Evaluation (Transparent Rubric)

| Platform | Core | Reliability | Guardrails | Integrations | Ease | Perf/Cost | Security | Support | Weighted Total |

|---|---|---|---|---|---|---|---|---|---|

| Gemini | 10 | 9 | 9 | 9 | 8 | 8 | 9 | 9 | 8.9 |

| OpenAI | 9 | 9 | 8 | 9 | 9 | 8 | 8 | 9 | 8.7 |

| Claude | 9 | 9 | 9 | 8 | 9 | 8 | 9 | 8 | 8.8 |

| AWS Bedrock | 9 | 8 | 8 | 10 | 7 | 8 | 10 | 9 | 8.6 |

| Azure OpenAI | 9 | 8 | 9 | 10 | 7 | 8 | 10 | 9 | 8.6 |

| Hugging Face | 8 | 7 | 7 | 9 | 9 | 8 | 7 | 8 | 8.0 |

| Together AI | 8 | 7 | 6 | 8 | 8 | 9 | 7 | 7 | 7.8 |

| Fireworks AI | 8 | 8 | 6 | 7 | 8 | 10 | 7 | 7 | 7.9 |

| Replicate | 7 | 6 | 5 | 7 | 10 | 8 | 6 | 6 | 7.0 |

| Modal | 8 | 7 | 6 | 8 | 8 | 9 | 7 | 7 | 7.7 |

Which Multimodal Platform Is Right for You

Solo / Developers

- Replicate

- Hugging Face

- OpenAI

Startups / SMBs

- Fireworks AI

- Together AI

- OpenAI

Mid-Market

- AWS Bedrock

- Gemini

- Modal

Enterprise

- Azure OpenAI

- AWS Bedrock

- Gemini

Regulated Industries

- Azure OpenAI

- AWS Bedrock

- Claude

Implementation Playbook (30 / 60 / 90 Days)

30 Days

- Test multimodal APIs (text + image first)

- Define use cases (vision, audio, video)

- Build baseline evaluation set

- Measure latency and cost

60 Days

- Add RAG pipelines

- Introduce observability and tracing

- Implement safety filters

- Run multimodal stress tests

90 Days

- Optimize routing and cost

- Deploy production workloads

- Add governance and RBAC

- Scale multimodal agents

Common Mistakes & How to Avoid Them

- Treating multimodal as “just vision + text”

- Ignoring video cost explosion

- No evaluation benchmarks for images/audio

- Poor latency planning for multimodal inputs

- Missing fallback models

- No safety filters for images/audio

- Overloading single model for all modalities

- Weak observability setup

- No RAG optimization

- Lack of agent orchestration design

- Ignoring token cost spikes in vision

- No production stress testing

- Skipping data governance

- Not separating modalities in pipelines

FAQs

1. What is a multimodal model platform?

A platform that supports multiple input types like text, images, audio, and video in one AI system.

2. Why are multimodal models important?

They enable AI to understand real-world data more like humans by combining different sensory inputs.

3. Which is the most advanced multimodal platform?

Platforms like Gemini and OpenAI currently lead in native multimodal reasoning.

4. Do all models support video?

No, only some platforms support native video understanding.

5. What is native multimodal AI?

It means the model is trained on multiple modalities together, not added later as separate systems.

6. Is multimodal AI expensive?

Yes, especially video and high-resolution image processing.

7. Can multimodal models do real-time voice?

Yes, many support real-time audio interaction.

8. What is multimodal RAG?

It combines retrieval systems with text, images, and other inputs.

9. Are multimodal platforms secure?

Enterprise platforms provide strong security, but configuration is critical.

10. Can I build agents with multimodal models?

Yes, most modern platforms support agent workflows.

11. What industries use multimodal AI?

Healthcare, finance, education, customer support, and media.

12. What is the biggest challenge in multimodal AI?

Cost, latency, and cross-modal reasoning consistency.

Conclusion

Multimodal Model Platforms represent the next evolution of AI systems, enabling unified reasoning across text, images, audio, and video. The most advanced platforms are now natively multimodal, meaning they are designed from the ground up to process multiple data types together rather than stitching them externally. The best platform depends on your use case—whether it is enterprise governance, developer flexibility, or real-time multimodal intelligence—but long-term success depends on balancing performance, cost, and true cross-modal understanding.

Find Trusted Cardiac Hospitals

Compare heart hospitals by city and services — all in one place.

Explore Hospitals