Introduction

AI Inference API Management Platforms are the control layer that sits between your applications and AI models. They help teams route requests, monitor usage, manage costs, enforce safety policies, and orchestrate multiple models through a single interface. Instead of hardcoding calls to one model provider, these platforms give you flexibility, visibility, and control over how AI is used in production.

This category matters more than ever as AI systems become more complex, involving agents, multimodal inputs (text, image, voice), and real-time decision-making. Managing inference is no longer just about API calls—it’s about reliability, governance, and cost efficiency at scale.

Common use cases include:

- AI-powered customer support bots with fallback models

- Real-time content moderation and safety filtering

- Multi-model routing for cost and latency optimization

- Retrieval-augmented generation (RAG) applications

- AI copilots for internal enterprise tools

- Automated document processing and analysis

What to evaluate when choosing a platform:

- Model flexibility (hosted vs BYO vs open-source)

- Latency and cost optimization features

- Observability (logs, traces, token usage)

- Guardrails and safety controls

- Evaluation and testing capabilities

- Data privacy and retention policies

- Integration ecosystem and APIs

- Deployment options (cloud vs self-hosted)

- Scalability and reliability

- Vendor lock-in risk

Best for: AI engineers, CTOs, platform teams, and enterprises deploying production-grade AI systems across industries like fintech, SaaS, healthcare, and e-commerce.

Not ideal for: Solo developers or small projects experimenting with a single model API—direct model provider APIs or lightweight SDKs may be simpler and cheaper in those cases.

What’s Changed in AI Inference API Management Platforms

- Agentic workflows are now standard with tool calling and orchestration

- Multi-model routing has become a default expectation

- Built-in evaluation frameworks for testing reliability and hallucinations

- Guardrails like prompt injection detection are integrated

- Enterprise privacy controls with data residency and retention options

- Advanced observability with tracing, token usage, and latency insights

- Cost optimization through dynamic routing and budget controls

- Support for BYO models alongside proprietary models

- Native RAG integrations with vector databases

- Hybrid deployments for enterprise and regulated environments

- Prompt versioning and workflow lifecycle management

Quick Buyer Checklist (Scan-Friendly)

- Does it support multiple model providers and BYO models?

- Can you enforce data privacy and retention policies?

- Are logs, traces, and token usage metrics available?

- Does it include evaluation and testing tools?

- Are there guardrails for prompt injection and unsafe outputs?

- Does it integrate with RAG pipelines and vector databases?

- Can you control latency and cost dynamically?

- Are there role-based access controls and audit logs?

- How easy is it to switch providers (avoid lock-in)?

- Does it support agent workflows and tool calling?

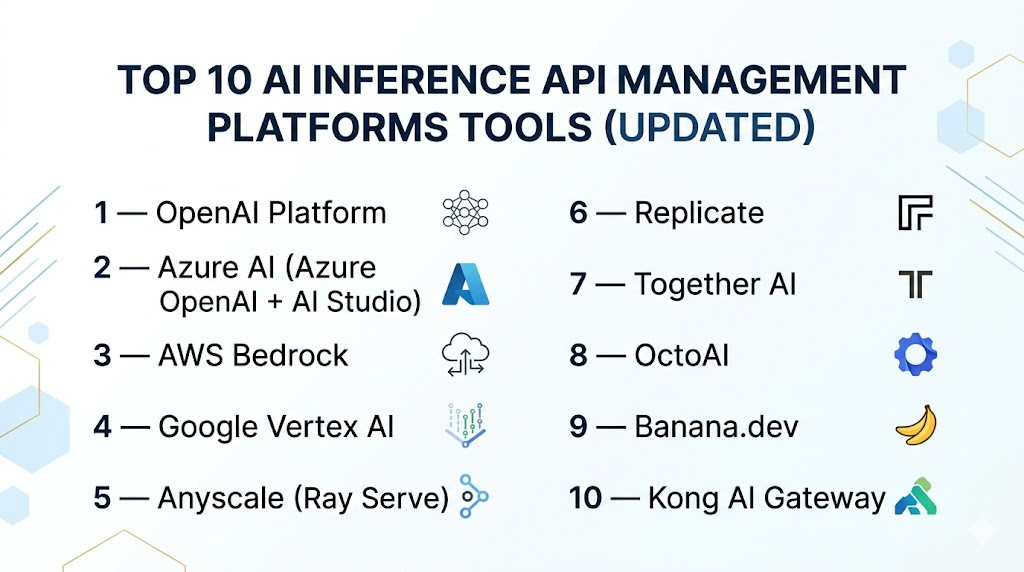

Top 10 AI Inference API Management Platforms Tools (Updated)

#1 — OpenAI Platform

One-line verdict: Best for teams wanting reliable, scalable AI APIs with strong ecosystem and ease of use.

Short description:

A widely adopted AI platform offering access to advanced models with built-in tooling for inference management, monitoring, and scaling. Used by startups and enterprises alike for production AI applications.

Standout Capabilities

- High-quality proprietary models

- Built-in function calling and agent workflows

- Scalable infrastructure with global availability

- Strong developer ecosystem

- Integrated safety tooling

AI-Specific Depth

- Model support: Proprietary + limited multi-model routing

- RAG / knowledge integration: Basic support, external tools needed

- Evaluation: Limited native tools, improving

- Guardrails: Built-in moderation APIs

- Observability: Basic usage metrics, improving

Pros

- Easy to get started

- High model performance

- Strong documentation

Cons

- Vendor lock-in risk

- Limited deep observability

- Cost can scale quickly

Security & Compliance

SSO, encryption, and enterprise controls available. Certifications: Not publicly stated.

Deployment & Platforms

Cloud-based

Integrations & Ecosystem

Strong API ecosystem with SDKs and integrations across major frameworks.

- REST APIs

- Python/JS SDKs

- Integration with orchestration tools

Pricing Model

Usage-based

Best-Fit Scenarios

- AI copilots

- Chatbots

- Rapid prototyping

#2 — Azure AI (Azure OpenAI + AI Studio)

One-line verdict: Best for enterprises needing compliance, security, and deep ecosystem integration.

Short description:

An enterprise AI platform offering managed access to models with governance, security, and integration with a broader cloud ecosystem.

Standout Capabilities

- Enterprise-grade security

- Deep ecosystem integration

- Hybrid deployment options

- Advanced monitoring tools

- Compliance-focused features

AI-Specific Depth

- Model support: Proprietary + hosted

- RAG / knowledge integration: Strong

- Evaluation: Built-in tools

- Guardrails: Policy controls

- Observability: Advanced

Pros

- Strong compliance

- Scalable infrastructure

- Deep integrations

Cons

- Complex setup

- Higher cost

- Platform dependency

Security & Compliance

Strong enterprise controls. Certifications: Varies.

Deployment & Platforms

Cloud + Hybrid

Integrations & Ecosystem

- Cloud services

- Data pipelines

- Identity systems

Pricing Model

Usage-based + enterprise tiers

Best-Fit Scenarios

- Regulated industries

- Enterprise AI deployments

- Internal copilots

#3 — AWS Bedrock

One-line verdict: Best for multi-model flexibility within a cloud-native ecosystem.

Short description:

A managed service providing access to multiple foundation models with unified API and governance controls.

Standout Capabilities

- Multi-model access

- Scalable infrastructure

- Integrated security controls

- Cloud-native architecture

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Strong

- Evaluation: Basic

- Guardrails: Available

- Observability: Strong

Pros

- Flexible model choice

- Scalable

- Strong ecosystem

Cons

- Platform dependency

- Learning curve

- Pricing complexity

Security & Compliance

Enterprise-grade. Certifications: Varies.

Deployment & Platforms

Cloud

Integrations & Ecosystem

- Cloud services

- Data tools

- APIs

Pricing Model

Usage-based

Best-Fit Scenarios

- Multi-model apps

- Scalable AI systems

- Cloud-native teams

#4 — Google Vertex AI

One-line verdict: Best for ML teams needing unified AI, data, and inference management.

Short description:

A comprehensive AI platform combining model development, deployment, and inference management with strong data integration.

Standout Capabilities

- End-to-end ML + AI workflows

- Strong data integration

- Multi-model support

- Scalable infrastructure

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Strong

- Evaluation: Advanced

- Guardrails: Available

- Observability: Strong

Pros

- Unified platform

- Advanced ML tooling

- Scalable

Cons

- Complex

- Learning curve

- Platform dependency

Security & Compliance

Enterprise-grade. Certifications: Varies.

Deployment & Platforms

Cloud

Integrations & Ecosystem

- Data pipelines

- APIs

- ML tools

Pricing Model

Usage-based

Best-Fit Scenarios

- Data-heavy applications

- ML teams

- Enterprise AI

#5 — Anyscale (Ray Serve)

One-line verdict: Best for teams deploying open-source models with full control and scalability.

Short description:

A platform built on Ray for scalable serving of AI models, especially open-source and custom deployments.

Standout Capabilities

- Distributed model serving

- Open-source flexibility

- High scalability

- Developer control

AI-Specific Depth

- Model support: Open-source + BYO

- RAG / knowledge integration: Varies

- Evaluation: N/A

- Guardrails: N/A

- Observability: Moderate

Pros

- Full control

- Scalable architecture

- Flexible

Cons

- Requires expertise

- Limited built-in safety tools

- Setup complexity

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud + Self-hosted

Integrations & Ecosystem

- Python ecosystem

- APIs

- Ray tools

Pricing Model

Open-source + enterprise

Best-Fit Scenarios

- Custom AI infrastructure

- Research teams

- Large-scale deployments

#6 — Replicate

One-line verdict: Best for developers deploying models quickly with minimal infrastructure.

Short description:

A developer-friendly platform for running and scaling machine learning models through simple APIs.

Standout Capabilities

- Simple deployment

- Model marketplace

- Fast setup

- Developer-focused

AI-Specific Depth

- Model support: Open-source

- RAG / knowledge integration: N/A

- Evaluation: N/A

- Guardrails: N/A

- Observability: Basic

Pros

- Easy to use

- Fast deployment

- Accessible

Cons

- Limited enterprise features

- Basic monitoring

- Less governance

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud

Integrations & Ecosystem

- APIs

- Developer tools

- Model hosting

Pricing Model

Usage-based

Best-Fit Scenarios

- Prototyping

- Indie developers

- Small apps

#7 — Together AI

One-line verdict: Best for cost-efficient inference using open-source models.

Short description:

A platform focused on affordable, high-performance inference for open-source and custom models.

Standout Capabilities

- Cost optimization

- Open-source support

- High performance

- Flexible usage

AI-Specific Depth

- Model support: Open-source + BYO

- RAG / knowledge integration: Varies

- Evaluation: N/A

- Guardrails: N/A

- Observability: Moderate

Pros

- Cost-effective

- Flexible

- Scalable

Cons

- Limited enterprise tooling

- Fewer safety features

- Smaller ecosystem

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud

Integrations & Ecosystem

- APIs

- Developer tools

- Model hosting

Pricing Model

Usage-based

Best-Fit Scenarios

- Startups

- Cost-sensitive apps

- Open-source AI

#8 — OctoAI

One-line verdict: Best for high-performance inference with GPU optimization.

Short description:

A platform designed for optimized AI workloads using GPU acceleration for low-latency applications.

Standout Capabilities

- GPU optimization

- Low latency

- Scalable infrastructure

- Developer-friendly tools

AI-Specific Depth

- Model support: Open-source + BYO

- RAG / knowledge integration: N/A

- Evaluation: N/A

- Guardrails: N/A

- Observability: Moderate

Pros

- High performance

- Efficient scaling

- Flexible

Cons

- Limited ecosystem

- Fewer enterprise features

- Less mature

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud

Integrations & Ecosystem

- APIs

- SDKs

- GPU tools

Pricing Model

Usage-based

Best-Fit Scenarios

- Real-time AI

- Performance-critical apps

- ML workloads

#9 — Banana.dev

One-line verdict: Best for simple serverless model deployment with minimal overhead.

Short description:

A lightweight platform focused on easy deployment and scaling of machine learning models.

Standout Capabilities

- Serverless deployment

- Simple APIs

- Fast setup

- Lightweight infrastructure

AI-Specific Depth

- Model support: Open-source

- RAG / knowledge integration: N/A

- Evaluation: N/A

- Guardrails: N/A

- Observability: Basic

Pros

- Simple

- Fast

- Beginner-friendly

Cons

- Limited features

- Not enterprise-ready

- Basic tooling

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud

Integrations & Ecosystem

- APIs

- Developer tools

Pricing Model

Usage-based

Best-Fit Scenarios

- Small projects

- Prototyping

- Indie development

#10 — Kong AI Gateway

One-line verdict: Best for extending API gateway control into AI inference governance.

Short description:

A platform that brings API gateway capabilities into AI inference, focusing on governance, routing, and security.

Standout Capabilities

- Policy enforcement

- Traffic routing

- Observability

- Security controls

AI-Specific Depth

- Model support: Multi-model routing

- RAG / knowledge integration: N/A

- Evaluation: N/A

- Guardrails: Policy-based

- Observability: Strong

Pros

- Strong governance

- Flexible routing

- Enterprise-ready

Cons

- Requires setup

- Not AI-native

- Learning curve

Security & Compliance

Enterprise-grade. Certifications: Varies.

Deployment & Platforms

Cloud + Self-hosted

Integrations & Ecosystem

- APIs

- Plugins

- Enterprise tools

Pricing Model

Open-core + enterprise

Best-Fit Scenarios

- API-first organizations

- Governance-heavy environments

- Hybrid systems

Comparison Table

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |

|---|---|---|---|---|---|---|

| OpenAI Platform | General AI apps | Cloud | Proprietary | Ease of use | Lock-in | N/A |

| Azure AI | Enterprise | Cloud/Hybrid | Hosted | Compliance | Complexity | N/A |

| AWS Bedrock | Cloud-native teams | Cloud | Multi-model | Flexibility | Dependency | N/A |

| Vertex AI | ML teams | Cloud | Multi-model | End-to-end | Complexity | N/A |

| Anyscale | Open-source control | Hybrid | BYO | Flexibility | Setup | N/A |

| Replicate | Developers | Cloud | Open-source | Simplicity | Limited features | N/A |

| Together AI | Startups | Cloud | Open-source | Cost | Maturity | N/A |

| OctoAI | Performance apps | Cloud | BYO | Speed | Ecosystem | N/A |

| Banana.dev | Small apps | Cloud | Open-source | Simplicity | Limited | N/A |

| Kong AI Gateway | Governance | Hybrid | Multi-model | Control | Complexity | N/A |

Scoring & Evaluation (Transparent Rubric)

Scoring is comparative, not absolute. Each tool is evaluated across key criteria weighted by importance for real-world AI deployments.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |

|---|---|---|---|---|---|---|---|---|---|

| OpenAI | 9 | 7 | 8 | 9 | 9 | 7 | 8 | 8 | 8.2 |

| Azure AI | 9 | 8 | 9 | 9 | 7 | 7 | 9 | 8 | 8.4 |

| AWS Bedrock | 9 | 7 | 8 | 9 | 7 | 8 | 9 | 8 | 8.3 |

| Vertex AI | 9 | 8 | 8 | 9 | 7 | 8 | 9 | 8 | 8.4 |

| Anyscale | 8 | 6 | 5 | 7 | 6 | 9 | 7 | 7 | 7.3 |

| Replicate | 7 | 5 | 5 | 6 | 9 | 7 | 5 | 6 | 6.6 |

| Together AI | 7 | 5 | 5 | 6 | 8 | 9 | 5 | 6 | 6.9 |

| OctoAI | 8 | 6 | 5 | 6 | 7 | 9 | 6 | 6 | 7.2 |

| Banana.dev | 6 | 4 | 4 | 5 | 9 | 7 | 4 | 5 | 6.0 |

| Kong AI Gateway | 8 | 6 | 8 | 8 | 6 | 7 | 9 | 7 | 7.8 |

Top 3 for Enterprise: Azure AI, Vertex AI, AWS Bedrock

Top 3 for SMB: OpenAI Platform, Together AI, Replicate

Top 3 for Developers: OpenAI Platform, Replicate, Anyscale

Which AI Inference API Management Platforms Tool Is Right for You

Solo / Freelancer

Choose simple platforms like OpenAI or Replicate. Avoid complex infrastructure-heavy tools.

SMB

Use OpenAI or Together AI for a balance of cost, performance, and ease of use.

Mid-Market

AWS Bedrock or Vertex AI offer scalability with flexibility and integrations.

Enterprise

Azure AI or Vertex AI are strong choices for governance, compliance, and large-scale deployment.

Regulated industries (finance/healthcare/public sector)

Opt for Azure AI or AWS Bedrock with strict security and hybrid deployment support.

Budget vs premium

- Budget: Together AI, Replicate

- Premium: Azure AI, Vertex AI

Build vs buy (when to DIY)

Choose DIY (Anyscale or open-source stack) if you need full control and have strong engineering resources.

Implementation Playbook (30 / 60 / 90 Days)

30 Days:

- Identify 1–2 high-impact use cases

- Define metrics (latency, accuracy, cost)

- Set up pilot environment

- Enable basic logging and monitoring

60 Days:

- Build evaluation pipelines

- Add guardrails and safety filters

- Implement prompt/version control

- Roll out to limited users

90 Days:

- Optimize cost and latency

- Add governance and access controls

- Scale across teams

- Implement incident handling and monitoring

Common Mistakes & How to Avoid Them

- Skipping evaluation frameworks

- Ignoring prompt injection risks

- Not managing data retention policies

- Lack of observability

- Unexpected cost spikes

- Over-automation without human review

- Vendor lock-in without abstraction

- No fallback models

- Poor latency planning

- Weak access controls

- No audit logs

- Ignoring compliance needs

- Lack of prompt versioning

FAQs

1. What is an AI inference API management platform?

It’s a system that manages how applications interact with AI models, including routing, monitoring, and governance.

2. Do these platforms store my data?

It varies by provider. Some offer strict controls, while others may retain data for improvements.

3. Can I use my own models?

Yes, many platforms support bring-your-own-model (BYO) setups or open-source integrations.

4. Are these platforms secure?

Enterprise platforms offer strong security, but you should verify features like encryption and access control.

5. What is multi-model routing?

It allows systems to choose the best model for each request based on cost, speed, or quality.

6. Do I need evaluation tools?

Yes, they help measure accuracy, detect hallucinations, and ensure reliability.

7. What are guardrails?

Guardrails are safety mechanisms that prevent harmful, biased, or incorrect outputs.

8. Can I self-host these platforms?

Some tools support self-hosting or hybrid deployments, especially for enterprises.

9. How much do these platforms cost?

Most use usage-based pricing, but costs vary widely depending on scale and models used.

10. Are these platforms vendor lock-in risks?

Yes, unless you use abstraction layers or multi-model strategies.

11. What alternatives exist?

You can directly use model APIs or build your own infrastructure using open-source tools.

12. How easy is it to switch platforms?

Switching can be complex, so it’s best to design systems with flexibility from the start.

Conclusion

AI inference API management platforms have become a core layer in modern AI systems, enabling teams to control cost, improve reliability, and enforce governance across increasingly complex workflows. The right choice depends on your scale, technical expertise, and compliance needs—there is no one-size-fits-all solution. Some tools excel in enterprise security, while others focus on developer simplicity or cost efficiency. The smartest approach is to shortlist a few relevant platforms, run a focused pilot to validate performance and reliability, and ensure strong evaluation and guardrails before scaling. Start by identifying your priorities, test in real conditions, and build with flexibility in mind to avoid long-term lock-in.

Find Trusted Cardiac Hospitals

Compare heart hospitals by city and services — all in one place.

Explore Hospitals