Producing high-quality video is shifting from a manual creative process to a programmable infrastructure task. For engineering teams and DevOps architects, the main challenge is scaling media production without the latency and manual bottlenecks of traditional editing environments. The Kling 3.0 API lets organizations treat video synthesis as a standardized, code-driven service. By integrating it into automated pipelines, developers can build media infrastructure that prioritizes technical precision and high-throughput delivery.

Technical Specifications of the Unified Multimodal Architecture

Reliable video infrastructure requires an engine that maintains structural coherence across varied generation requests. The architecture behind Kling AI 3.0 addresses this with a unified multimodal system designed for high-fidelity output and stable synthesis. Industry benchmarks suggest that the shift toward transformer-based diffusion models has substantially reduced temporal flickering in AI-generated video. That stability matters for automation: outputs produced without frame-by-frame human review must stay consistent enough to meet enterprise quality standards.

Core Features for Enterprise-Grade Video Synthesis

Native 4K Synthesis and Visual Standards

A key feature of the Kling 3.0 engine is its support for native 4K resolution. Systems that rely on post-generation upscaling often introduce visual artifacts. The Kling 3.0 API instead generates the full pixel density from the initial processing stage. With the Kling AI API, technical teams can go from raw prompts to broadcast-quality assets without a manual post-production pass.
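In an automated pipeline, the 4K requirement is best enforced as a gate, so that an under-resolution asset is caught before it reaches publishing. The sketch below assumes a hypothetical `AssetMetadata` record with pixel dimensions. It is not the Kling API's response schema, so map it from whatever fields your delivery step actually exposes. The check only confirms the delivered resolution meets 4K UHD (3840x2160). It cannot tell native generation apart from upscaling.

```python
from dataclasses import dataclass

# 4K UHD delivery target: 3840 x 2160 pixels.
UHD_4K = (3840, 2160)


@dataclass(frozen=True)
class AssetMetadata:
    """Hypothetical metadata record for a generated clip (not the Kling API schema)."""
    asset_id: str
    width: int
    height: int


def meets_4k(meta: AssetMetadata, target: tuple[int, int] = UHD_4K) -> bool:
    """True if the clip reaches the target in both dimensions.

    Orientation-agnostic, so vertical 2160x3840 deliverables also pass.
    """
    long_side = max(meta.width, meta.height)
    short_side = min(meta.width, meta.height)
    return long_side >= max(target) and short_side >= min(target)


def gate(assets: list[AssetMetadata]) -> tuple[list[str], list[str]]:
    """Split asset IDs into (accepted, rejected) before they move downstream."""
    accepted = [a.asset_id for a in assets if meets_4k(a)]
    rejected = [a.asset_id for a in assets if not meets_4k(a)]
    return accepted, rejected


if __name__ == "__main__":
    batch = [
        AssetMetadata("clip-a", 3840, 2160),
        AssetMetadata("clip-b", 1920, 1080),
        AssetMetadata("clip-c", 2160, 3840),
    ]
    print(gate(batch))  # (['clip-a', 'clip-c'], ['clip-b'])
```

Rejected IDs can be routed to a retry queue instead of failing the whole batch.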

Granular Parameterization and Motion Control

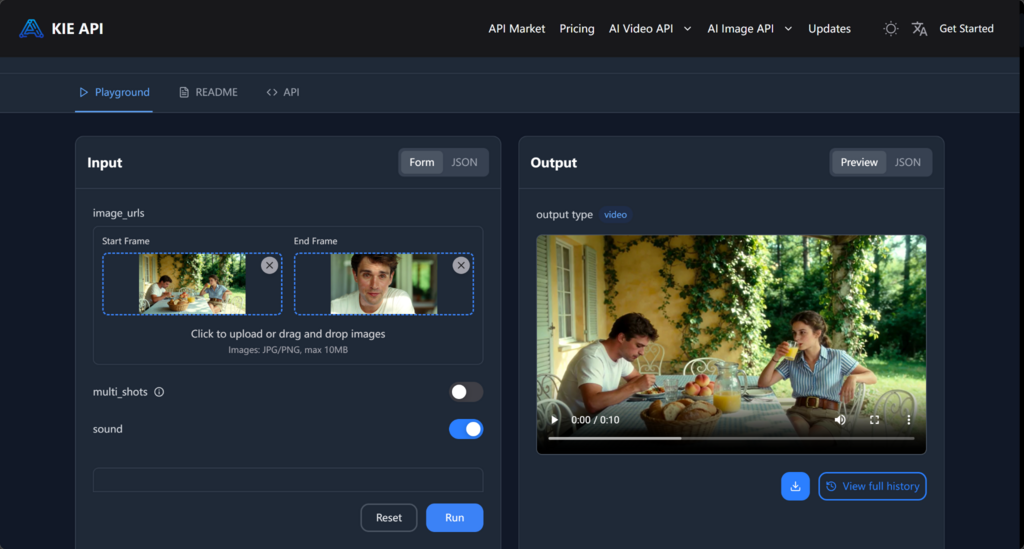

Much of the Kling Video 3.0 API's utility lies in its granular parameterization. Rather than relying solely on natural-language descriptions, the API accepts specific motion vectors and camera dynamics as structured inputs. An automated workflow can therefore dictate exactly how a shot unfolds, so the final output matches a predefined storyboard or programmatic template.
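A storyboard can be expressed as data, with each shot carrying its own camera block. The sketch below assumes hypothetical field names (`pan`, `tilt`, `zoom`, `duration_s`) and an assumed range of -10 to 10 for camera values. Check the official Kling API reference for the real parameter names and bounds before sending anything. Validating locally means a malformed shot fails in CI rather than after a paid generation call.

```python
import json
from dataclasses import asdict, dataclass, field

# Assumed bound for camera parameters; replace with the documented range.
CAMERA_RANGE = (-10.0, 10.0)


@dataclass
class CameraControl:
    """Hypothetical camera-motion block; field names are illustrative only."""
    pan: float = 0.0   # horizontal sweep (negative = left)
    tilt: float = 0.0  # vertical sweep (negative = down)
    zoom: float = 0.0  # positive = push in, negative = pull out

    def validate(self) -> None:
        low, high = CAMERA_RANGE
        for name, value in asdict(self).items():
            if not low <= value <= high:
                raise ValueError(f"camera.{name}={value} is outside [{low}, {high}]")


@dataclass
class ShotRequest:
    """One storyboard shot expressed as a structured generation request."""
    prompt: str
    duration_s: int
    camera: CameraControl = field(default_factory=CameraControl)

    def to_payload(self) -> str:
        """Validate, then serialize to JSON; the nested dataclass becomes a nested object."""
        if self.duration_s <= 0:
            raise ValueError("duration_s must be positive")
        self.camera.validate()
        return json.dumps(asdict(self), sort_keys=True)


storyboard = [
    ShotRequest("Wide establishing shot of a harbor at dawn", 5, CameraControl(pan=3.0)),
    ShotRequest("Slow push-in on the lighthouse", 5, CameraControl(zoom=2.5)),
]
payloads = [shot.to_payload() for shot in storyboard]
```

Because the storyboard is plain data, it can be versioned and reviewed like any other configuration file.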

Managing Subject Integrity via Subject Reference Logic

The Kling V3.0 API addresses identity drift (a subject's appearance changing from one clip to the next) through subject reference logic. Developers can programmatically define and lock a subject's physical attributes across a batch of generation requests. Because the core subject stays visually consistent as the environment changes, the API provides the consistency that professional deployments require.
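On the pipeline side, locking a subject means every request in a batch carries the same reference, and drift in that reference is detectable before submission. The sketch below assumes a hypothetical `subject_reference` field holding an ID and an attribute map. The real API may instead reference an uploaded image or a server-side subject ID. The ID here is a hash of the canonical JSON of the attributes. Key order therefore does not matter, but any edited value produces a different ID.

```python
import copy
import hashlib
import json


def fingerprint_subject(attributes: dict) -> str:
    """Stable short ID from a canonical JSON encoding of the subject attributes."""
    canonical = json.dumps(attributes, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def build_batch(subject: dict, scenes: list[str]) -> list[dict]:
    """Pair each scene prompt with an identical (hypothetical) subject_reference block."""
    ref = {"id": fingerprint_subject(subject), "attributes": subject}
    # Deep-copy per request so editing one request cannot silently change the others.
    return [{"prompt": scene, "subject_reference": copy.deepcopy(ref)} for scene in scenes]


def check_consistency(batch: list[dict]) -> str:
    """Return the shared subject ID, or raise if the batch references several subjects."""
    if not batch:
        raise ValueError("empty batch")
    ids = {fingerprint_subject(req["subject_reference"]["attributes"]) for req in batch}
    if len(ids) != 1:
        raise ValueError(f"batch references {len(ids)} different subjects")
    return ids.pop()


batch = build_batch({"hair": "black", "jacket": "red"}, ["beach at noon", "office at night"])
subject_id = check_consistency(batch)
```

Running `check_consistency` as a pre-submit step keeps identity drift from entering the batch through edited requests.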

Industrializing Video Workflows through API Integration

The primary value of the Kling 3 API for automation-focused teams is its ability to industrialize the creative process by treating video generation as an asynchronous task within a larger DevOps pipeline.
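Treating generation as an asynchronous task usually means submit-then-poll: the pipeline gets a task ID back and checks its status until it reaches a terminal state. The sketch below assumes a caller-supplied `get_status(task_id)` function that wraps whatever status endpoint the provider documents. It also assumes that endpoint returns a dict whose `"status"` is `"succeeded"` or `"failed"` when finished; both status strings are assumptions. Polling uses capped exponential backoff with an overall timeout. `sleep` and `clock` are injectable so the loop can be tested without real waiting.

```python
import time
from typing import Callable

# Assumed terminal status values; map these to the provider's documented states.
TERMINAL = {"succeeded", "failed"}


def wait_for_task(
    task_id: str,
    get_status: Callable[[str], dict],
    *,
    initial_delay: float = 2.0,
    max_delay: float = 30.0,
    timeout: float = 600.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> dict:
    """Poll until the task is terminal, doubling the delay up to max_delay."""
    deadline = clock() + timeout
    delay = initial_delay
    while True:
        status = get_status(task_id)
        if status.get("status") in TERMINAL:
            return status
        # Give up rather than sleep past the deadline.
        if clock() + delay > deadline:
            raise TimeoutError(f"task {task_id} not finished within {timeout}s")
        sleep(delay)
        delay = min(delay * 2, max_delay)
```

In a CI/CD job, a `TimeoutError` can fail the stage cleanly while the task keeps running server-side, so a later job can still collect the result.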

Batch Processing and Content Scalability

When a growth strategy calls for testing many creative variants, manual production becomes an operational bottleneck. The Kling 3 API supports batch processing of video assets, so teams can drive creative parameters from their existing metadata and content libraries.
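A common batch pattern expands a parameter grid into variants, attaches catalog metadata to each one, and submits them with bounded concurrency so that rate limits are respected. The sketch below is generic. The `submit` callable is a hypothetical wrapper around the generation endpoint that returns a task ID, and keys such as `campaign_id`, `aspect_ratio`, and `style` are illustrative only. `ThreadPoolExecutor.map` returns results in input order, so task IDs line up with their variants.

```python
import itertools
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def expand_variants(base: dict, grid: dict[str, list]) -> list[dict]:
    """Cartesian product of the grid, each combination merged onto the base metadata."""
    keys = sorted(grid)  # fixed key order keeps variant ordering reproducible
    variants = []
    for values in itertools.product(*(grid[k] for k in keys)):
        variant = dict(base)
        variant.update(zip(keys, values))
        variants.append(variant)
    return variants


def submit_all(
    variants: list[dict],
    submit: Callable[[dict], str],
    max_workers: int = 4,
) -> list[str]:
    """Submit variants with at most max_workers in flight; results keep input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(submit, variants))


variants = expand_variants(
    {"campaign_id": "spring-launch", "prompt": "Product hero shot"},
    {"aspect_ratio": ["16:9", "9:16"], "style": ["studio", "outdoor", "night"]},
)
```

Two aspect ratios and three styles yield six variants. Keeping `max_workers` small is a simple way to stay under a provider's concurrency limit.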

Automated Localization and Audio Sync

Expanding into international markets requires localized assets that keep audiences engaged. The Kling AI API supports this through integrated lip-syncing and native audio synchronization. For global development teams, this reduces reliance on expensive reshoots and lets a centralized pipeline maintain a worldwide visual presence with local-level precision.
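Localization fits naturally as a fan-out step: one approved master clip plus a set of dubbed audio tracks becomes one lip-sync job per locale. The sketch below is a planning step only. `LocalizedJob` and its `lip_sync` flag are hypothetical stand-ins for whatever request the API actually takes. Skipping locales that were already rendered makes the step idempotent, so a rerun after a partial failure does not pay for duplicate renders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LocalizedJob:
    """Hypothetical lip-sync job: align a rendered clip's mouth movement to dubbed audio."""
    source_video_id: str
    locale: str      # BCP 47 tag, e.g. "de-DE"
    audio_uri: str   # location of the dubbed voice track
    lip_sync: bool = True


def plan_localization(
    source_video_id: str,
    dubbed_audio: dict[str, str],
    already_rendered: frozenset[str] = frozenset(),
) -> list[LocalizedJob]:
    """One job per locale, skipping locales a previous pipeline run already produced."""
    if not dubbed_audio:
        raise ValueError("no dubbed audio tracks supplied")
    return [
        LocalizedJob(source_video_id, locale, dubbed_audio[locale])
        for locale in sorted(dubbed_audio)
        if locale not in already_rendered
    ]


jobs = plan_localization(
    "vid-42",
    {"ja-JP": "audio/ja.wav", "de-DE": "audio/de.wav", "es-ES": "audio/es.wav"},
    already_rendered=frozenset({"es-ES"}),
)
```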

Conclusion: Strategic Value of Video as Code

Integrating a programmable infrastructure via the Kling 3.0 API provides a practical framework for managing high-fidelity assets within a modern developer environment. By standardizing output and reducing operational friction, technical teams can align production capabilities with the speed of software deployment. To explore the full range of supported generative models and integration options, developers can access the centralized model market. This approach ensures that technical precision remains a core component of the enterprise media stack.

I’m a DevOps/SRE/DevSecOps/Cloud expert passionate about sharing knowledge and experiences. I have worked at Cotocus. I share tech blogs at DevOps School, travel stories at Holiday Landmark, stock market tips at Stocks Mantra, health and fitness guidance at My Medic Plus, product reviews at TrueReviewNow, and SEO strategies at Wizbrand.


Please find my social handles below:

Rajesh Kumar Personal Website

Rajesh Kumar at YOUTUBE

Rajesh Kumar at INSTAGRAM

Rajesh Kumar at X

Rajesh Kumar at FACEBOOK

Rajesh Kumar at LINKEDIN

Rajesh Kumar at WIZBRAND
