Introduction

Open-source model hub platforms are centralized repositories where developers, researchers, and organizations can discover, share, host, and deploy machine learning models, especially large language models (LLMs), vision models, and multimodal systems. Think of them as the “GitHub for AI models”: they enable collaboration, reproducibility, and faster AI development.

These platforms go beyond simple storage: they provide versioning, datasets, demos, APIs, and, increasingly, deployment and evaluation tools. The rise of open-source AI has made these hubs critical infrastructure for building modern AI systems.

Real-world use cases include:

- Discovering and downloading pretrained models for NLP, vision, and audio

- Sharing fine-tuned models within teams or communities

- Hosting private enterprise model registries

- Rapid prototyping with public datasets and demos

- Building retrieval-augmented generation (RAG) systems with open datasets

- Benchmarking and evaluating models across domains

When evaluating these platforms, buyers should consider:

- Model catalog size and diversity

- Open-source licensing transparency

- Dataset availability and integration

- Versioning and collaboration features

- Inference and deployment capabilities

- Evaluation and benchmarking tools

- Security, access control, and governance

- API and SDK support

- Community activity and ecosystem

- Vendor lock-in risk

Open-source model hubs are now foundational to AI development; Hugging Face alone hosts more than a million models and hundreds of thousands of datasets across domains.

Best for: AI engineers, ML researchers, startups, and enterprises building AI with open-source models.

Not ideal for: teams needing fully managed proprietary AI solutions with strict SLAs and minimal customization.

What’s Changed in Open-Source Model Hub Platforms

- Explosion of community-contributed models and datasets

- Rise of multimodal model hubs (text, vision, audio, video)

- Growth of private model registries for enterprises

- Integration of inference APIs directly inside hubs

- Emergence of model versioning and lineage tracking

- Built-in evaluation and benchmarking pipelines

- Support for agent workflows and tool chaining

- Increasing focus on model governance and licensing clarity

- Expansion of fine-tuning and training pipelines

- Growth of regional hubs (e.g., Asia-focused platforms)

- Adoption of OpenAI-compatible APIs

- Integration with CI/CD pipelines for ML workflows

Quick Buyer Checklist (Scan-Friendly)

- Does it support your model types (LLM, vision, multimodal)?

- Are licensing and usage rights clearly defined?

- Can you host private models securely?

- Does it support datasets and RAG workflows?

- Are evaluation tools built-in?

- Can you deploy models directly from the hub?

- Does it integrate with your ML stack?

- Is there strong community support?

- What are the governance and access controls?

- How easy is model versioning and collaboration?

- Is there risk of vendor lock-in?

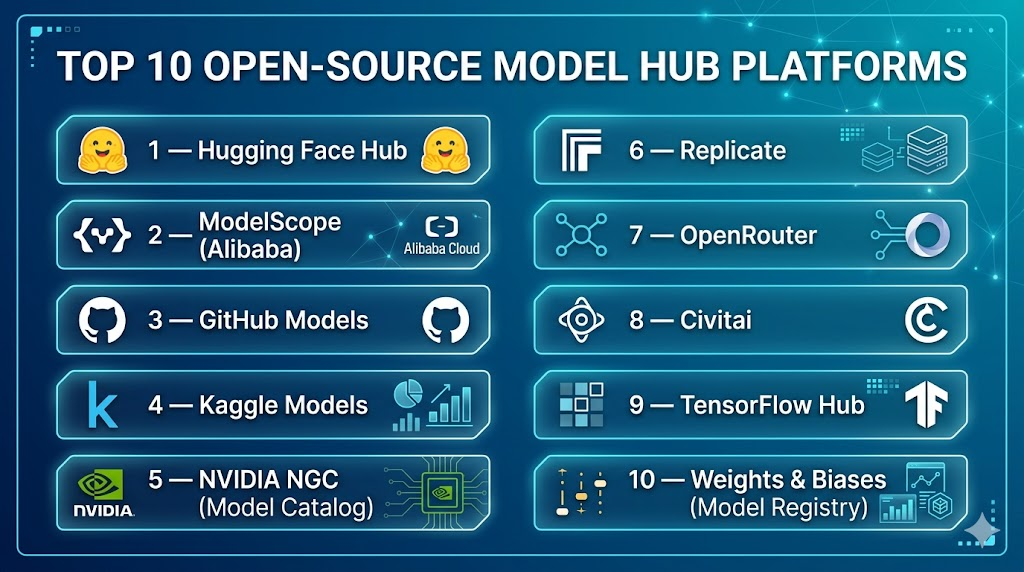

Top 10 Open-Source Model Hub Platforms

#1 — Hugging Face Hub

One-line verdict: Best overall open-source model hub with unmatched ecosystem and community adoption.

Short description:

The largest and most widely used platform for hosting, sharing, and deploying AI models, datasets, and applications.

Standout Capabilities

- Massive model and dataset library (over a million models, hundreds of thousands of datasets)

- Supports NLP, vision, audio, and multimodal models

- Spaces for deploying demos and apps

- Integrated inference APIs

- Strong community and documentation

- Model versioning and collaboration tools

- Enterprise features (private hubs, governance)

AI-Specific Depth

- Model support: Open-source + some proprietary

- RAG / knowledge integration: Strong dataset hub

- Evaluation: Community benchmarks + tools

- Guardrails: Basic + external integrations

- Observability: API metrics

Pros

- Largest ecosystem

- Easy to use

- Strong community

Cons

- Can be overwhelming

- Some enterprise features paid

Security & Compliance

- SSO, private repos, enterprise controls (details vary)

Deployment & Platforms

- Web, APIs, cloud-hosted

Integrations & Ecosystem

- Transformers, Gradio, PyTorch, TensorFlow, APIs

Pricing Model

Freemium + enterprise

Best-Fit Scenarios

- Model discovery

- Open-source collaboration

- Rapid prototyping
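To make the versioning point concrete: Hub repositories are Git-based, so a file can be fetched at a specific revision (a branch, tag, or commit hash) rather than whatever is newest. The sketch below only builds the download URL with the standard library; the repository id and filename are placeholders, and `resolve_url` is a hypothetical helper name. In practice, the `huggingface_hub` library's `hf_hub_download(repo_id=..., filename=..., revision=...)` covers the same pinned fetch and adds caching.

```python
from urllib.parse import quote

HUB_BASE = "https://huggingface.co"


def resolve_url(repo_id: str, filename: str, revision: str = "main") -> str:
    """Build the URL for one file in a Hub repository at a given revision."""
    # quote() keeps "/" by default, so "org/name" and nested file paths survive.
    # The revision is quoted with safe="" so refs such as "refs/pr/1" stay a
    # single path segment.
    return (
        f"{HUB_BASE}/{quote(repo_id)}"
        f"/resolve/{quote(revision, safe='')}/{quote(filename)}"
    )


# Placeholder repository and tag, for illustration only.
pinned = resolve_url("example-org/example-model", "config.json", revision="v1.0")
print(pinned)
```

Pinning to a tag or commit hash instead of `main` keeps results reproducible when the repository owner later pushes new weights.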

#2 — ModelScope (Alibaba)

One-line verdict: Best for large-scale multilingual and Asia-focused model ecosystems.

Short description:

A comprehensive model hub offering diverse AI models across NLP, vision, and speech.

Standout Capabilities

- Strong multilingual model support

- Large model repository

- Integrated training and deployment tools

- Active developer ecosystem

AI-Specific Depth

- Model support: Open-source

- RAG: Dataset integration

- Evaluation: Platform tools

- Guardrails: Limited

- Observability: Basic

Pros

- Strong in Asian markets

- Diverse model support

- Growing ecosystem

Cons

- Smaller global adoption

- Documentation varies

Deployment & Platforms

- Web + APIs

Integrations & Ecosystem

- Alibaba Cloud ecosystem

Pricing Model

Not publicly stated

Best-Fit Scenarios

- Multilingual AI

- Asia-focused deployments

- Research

#3 — GitHub Models

One-line verdict: Best for developers wanting model access integrated with Git workflows.

Short description:

A model catalog integrated into GitHub with APIs and developer tooling.

Standout Capabilities

- Git-based versioning

- OpenAI-compatible APIs

- Integration with repositories

- Developer-first experience

AI-Specific Depth

- Model support: Open + hosted

- RAG: External

- Evaluation: Limited

- Guardrails: Basic

- Observability: Usage metrics

Pros

- Familiar workflow

- Strong developer ecosystem

- Easy collaboration

Cons

- Newer platform

- Limited advanced features

Deployment & Platforms

- Web + APIs

Integrations & Ecosystem

- GitHub ecosystem, CI/CD

Pricing Model

Not publicly stated

Best-Fit Scenarios

- Dev teams

- CI/CD workflows

- Model versioning

#4 — Kaggle Models

One-line verdict: Best for dataset discovery and experimentation-driven AI workflows.

Short description:

A platform for datasets, models, and notebooks with strong community engagement.

Standout Capabilities

- Massive dataset library

- Notebook-based experimentation

- Community competitions

- Easy prototyping

AI-Specific Depth

- Model support: Open-source

- RAG: Strong dataset integration

- Evaluation: Competition benchmarks

- Guardrails: N/A

- Observability: Limited

Pros

- Great for learning

- Strong community

- Easy experimentation

Cons

- Not production-focused

- Limited deployment features

Deployment & Platforms

- Web

Integrations & Ecosystem

- Google ecosystem

Pricing Model

Free

Best-Fit Scenarios

- Learning AI

- Prototyping

- Dataset exploration

#5 — NVIDIA NGC (Model Catalog)

One-line verdict: Best for GPU-optimized models and enterprise AI deployments.

Short description:

A curated catalog of models and containers optimized for NVIDIA hardware.

Standout Capabilities

- GPU-optimized models

- Containerized deployments

- Enterprise-ready

- Integration with NVIDIA stack

AI-Specific Depth

- Model support: Open + proprietary

- RAG: External

- Evaluation: Limited

- Guardrails: N/A

- Observability: Enterprise tools

Pros

- High performance

- Enterprise-ready

- Optimized for GPUs

Cons

- NVIDIA dependency

- Smaller community

Deployment & Platforms

- Cloud + on-prem

Integrations & Ecosystem

- CUDA, TensorRT

Pricing Model

Varies / N/A

Best-Fit Scenarios

- GPU-heavy workloads

- Enterprise AI

- Production systems

#6 — Replicate

One-line verdict: Best for simple API-based access to open models without infrastructure overhead.

Short description:

A platform for running open-source models via simple APIs.

Standout Capabilities

- One-call model execution

- Large model catalog

- Hosted inference

- Easy integration

AI-Specific Depth

- Model support: Open-source

- RAG: External

- Evaluation: Limited

- Guardrails: Basic

- Observability: Usage metrics

Pros

- Simple API

- No infrastructure needed

- Fast setup

Cons

- Less control

- Usage-based cost

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- APIs, SDKs

Pricing Model

Usage-based

Best-Fit Scenarios

- Rapid prototyping

- API-based apps

- Startups

#7 — OpenRouter

One-line verdict: Best for unified access to multiple LLMs via a single API.

Short description:

A model routing and catalog platform that aggregates multiple LLM providers.

Standout Capabilities

- Multi-model routing

- Unified API

- Access to open + closed models

- Cost optimization

AI-Specific Depth

- Model support: Multi-model

- RAG: External

- Evaluation: Limited

- Guardrails: Basic

- Observability: Usage tracking

Pros

- Flexibility

- Easy switching

- Cost optimization

Cons

- Not a pure model hub

- Depends on providers

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- APIs, developer tools

Pricing Model

Usage-based

Best-Fit Scenarios

- Multi-model apps

- Cost optimization

- API abstraction
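The "unified API" idea can be sketched as an OpenAI-style chat completion request, which is the request shape OpenRouter and other OpenAI-compatible endpoints accept. This minimal example builds the request without sending it; the API key and model identifier are placeholders, and `build_chat_request` is a hypothetical helper, not part of any SDK.

```python
import json
import urllib.request


def build_chat_request(
    base_url: str, api_key: str, model: str, prompt: str
) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


req = build_chat_request(
    base_url="https://openrouter.ai/api/v1",
    api_key="YOUR_API_KEY",                # placeholder
    model="example-vendor/example-model",  # hypothetical model id
    prompt="Summarize the benefits of model hubs in one sentence.",
)
# To send it: urllib.request.urlopen(req), then JSON-decode the response body.
```

Because only `base_url` and `model` change between providers, application code written against this shape is easier to move, which is the main hedge against the provider dependency listed under Cons.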

#8 — Civitai

One-line verdict: Best for community-driven generative image and diffusion models.

Short description:

A platform focused on sharing and discovering diffusion models and fine-tuned variants.

Standout Capabilities

- Large diffusion model library

- Community contributions

- LoRA and embeddings support

- Visual model previews

AI-Specific Depth

- Model support: Open-source (image models)

- RAG: N/A

- Evaluation: Community-driven

- Guardrails: Minimal

- Observability: N/A

Pros

- Strong community

- Visual focus

- Easy discovery

Cons

- Limited to image models

- Less enterprise-ready

Deployment & Platforms

- Web

Integrations & Ecosystem

- Stable Diffusion ecosystem

Pricing Model

Free

Best-Fit Scenarios

- Generative art

- Diffusion workflows

- Creative AI

#9 — TensorFlow Hub

One-line verdict: Best for TensorFlow-native model discovery and integration.

Short description:

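A repository of reusable machine learning models designed for TensorFlow workflows. Its model catalog has since moved to Kaggle Models, where the former tfhub.dev listings are now hosted.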

Standout Capabilities

- Pretrained TensorFlow models

- Easy integration into pipelines

- Google-backed ecosystem

- Reliable performance

AI-Specific Depth

- Model support: Open-source

- RAG: External

- Evaluation: Limited

- Guardrails: N/A

- Observability: Limited

Pros

- Stable ecosystem

- Easy integration

- Trusted platform

Cons

- Less flexible outside TensorFlow

- Smaller community vs Hugging Face

Deployment & Platforms

- Web + APIs

Integrations & Ecosystem

- TensorFlow ecosystem

Pricing Model

Free

Best-Fit Scenarios

- TensorFlow projects

- Production pipelines

- ML engineering

#10 — Weights & Biases (Model Registry)

One-line verdict: Best for experiment tracking combined with model registry capabilities.

Short description:

A platform focused on experiment tracking, evaluation, and model lifecycle management.

Standout Capabilities

- Experiment tracking

- Model registry

- Evaluation tools

- Collaboration features

AI-Specific Depth

- Model support: Custom + open

- RAG: External

- Evaluation: Strong

- Guardrails: N/A

- Observability: Advanced metrics

Pros

- Strong evaluation tools

- Great for teams

- Mature platform

Cons

- Not a pure model hub

- Requires setup

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- ML frameworks, pipelines

Pricing Model

Freemium

Best-Fit Scenarios

- ML teams

- Experiment tracking

- Model lifecycle

Comparison Table

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| Hugging Face | General use | Cloud | High | Largest ecosystem | Complexity | N/A |
| ModelScope | Multilingual AI | Cloud | High | Asia focus | Adoption | N/A |
| GitHub Models | Dev workflows | Cloud | Medium | Git integration | New platform | N/A |
| Kaggle | Learning | Cloud | Medium | Datasets | Not production | N/A |
| NVIDIA NGC | Enterprise AI | Hybrid | Medium | GPU optimization | Lock-in | N/A |
| Replicate | API access | Cloud | Medium | Simplicity | Cost | N/A |
| OpenRouter | Multi-model | Cloud | High | Flexibility | Dependency | N/A |
| Civitai | Image models | Web | Low | Community | Narrow focus | N/A |
| TensorFlow Hub | TF workflows | Cloud | Medium | Stability | Ecosystem limit | N/A |
| Weights & Biases | ML ops | Cloud | Medium | Evaluation | Not hub-first | N/A |

Scoring & Evaluation (Transparent Rubric)

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| Hugging Face | 10 | 9 | 7 | 10 | 9 | 8 | 9 | 10 | 9.1 |
| ModelScope | 9 | 8 | 6 | 8 | 7 | 8 | 7 | 8 | 7.9 |
| GitHub Models | 8 | 7 | 6 | 9 | 9 | 8 | 8 | 9 | 8.2 |
| Kaggle | 8 | 7 | 5 | 8 | 10 | 9 | 7 | 9 | 8.1 |
| NVIDIA NGC | 9 | 8 | 6 | 8 | 7 | 9 | 9 | 8 | 8.3 |
| Replicate | 8 | 7 | 6 | 8 | 9 | 7 | 7 | 8 | 7.8 |
| OpenRouter | 8 | 7 | 6 | 9 | 8 | 8 | 7 | 8 | 7.9 |
| Civitai | 7 | 6 | 5 | 6 | 9 | 8 | 6 | 7 | 7.1 |
| TensorFlow Hub | 8 | 7 | 5 | 8 | 8 | 8 | 7 | 8 | 7.8 |
| Weights & Biases | 8 | 9 | 6 | 9 | 7 | 8 | 9 | 9 | 8.4 |
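The table does not state the weights behind its Weighted Total column, so the sketch below shows only how such a total could be computed. The weights are hypothetical, chosen purely for illustration, and will not necessarily reproduce the published totals; replace them with weights that reflect your own priorities.

```python
# Hypothetical weights (they sum to 1.0); the rubric above does not publish its own.
WEIGHTS = {
    "Core": 0.25,
    "Reliability/Eval": 0.15,
    "Guardrails": 0.10,
    "Integrations": 0.15,
    "Ease": 0.10,
    "Perf/Cost": 0.10,
    "Security/Admin": 0.10,
    "Support": 0.05,
}


def weighted_total(scores: dict, weights: dict = WEIGHTS) -> float:
    """Weighted average of per-criterion scores, normalized by the weight sum."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same criteria")
    total_weight = sum(weights.values())
    return sum(scores[name] * weights[name] for name in weights) / total_weight


# Per-criterion scores copied from the Hugging Face row of the table.
hugging_face = {
    "Core": 10, "Reliability/Eval": 9, "Guardrails": 7, "Integrations": 10,
    "Ease": 9, "Perf/Cost": 8, "Security/Admin": 9, "Support": 10,
}
print(f"{weighted_total(hugging_face):.2f}")
```

Under these assumed weights the Hugging Face row comes to about 9.15, close to but not identical to the 9.1 in the table. Re-running the calculation with your own weights is a quick way to see whether the ranking still holds for your priorities.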

Top 3 for Enterprise

- Hugging Face

- NVIDIA NGC

- Weights & Biases

Top 3 for SMB

- Kaggle

- Replicate

- GitHub Models

Top 3 for Developers

- Hugging Face

- GitHub Models

- OpenRouter

Which Open-Source Model Hub Platform Is Right for You

Solo / Freelancer

- Kaggle

- Civitai

- Hugging Face

SMB

- Replicate

- GitHub Models

- Hugging Face

Mid-Market

- Weights & Biases

- ModelScope

- OpenRouter

Enterprise

- Hugging Face

- NVIDIA NGC

- Weights & Biases

Regulated industries (finance/healthcare/public sector)

- Hugging Face (private hubs)

- NVIDIA NGC

- Weights & Biases

Budget vs premium

- Budget: Kaggle, TensorFlow Hub

- Premium: Hugging Face Enterprise, NGC

Build vs buy (when to DIY)

- Build when full control is required

- Use hubs when speed and collaboration matter

Implementation Playbook (30 / 60 / 90 Days)

30 Days

- Select platform

- Identify use cases

- Test model discovery and downloads

- Define evaluation metrics
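As one possible starting point for "define evaluation metrics", the sketch below computes exact-match accuracy for any callable that maps a prompt to a text answer. The `toy_model` function and the three examples are stand-ins invented for illustration; a real evaluation would call the candidate model and use a task-specific test set.

```python
from typing import Callable, Iterable, Tuple


def exact_match_accuracy(
    model: Callable[[str], str], examples: Iterable[Tuple[str, str]]
) -> float:
    """Fraction of prompts whose answer matches the expected text.

    Comparison ignores case and surrounding whitespace.
    """
    pairs = list(examples)
    if not pairs:
        raise ValueError("need at least one example")
    hits = sum(
        model(prompt).strip().lower() == expected.strip().lower()
        for prompt, expected in pairs
    )
    return hits / len(pairs)


def toy_model(prompt: str) -> str:
    """Stand-in for a real model call (illustration only)."""
    return {"2+2": "4", "capital of France": "Paris"}.get(prompt, "unknown")


examples = [("2+2", "4"), ("capital of France", "paris"), ("3+3", "6")]
score = exact_match_accuracy(toy_model, examples)
print(f"exact match: {score:.2f}")
```

Fixing the metric and the test set before comparing hubs or models keeps later comparisons consistent.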

60 Days

- Integrate datasets (RAG)

- Implement model versioning

- Set up evaluation workflows

- Add access controls
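One way to approach "implement model versioning" is to record exactly which artifact a project depends on and verify it after download. The sketch below is a minimal illustration, assuming a pin made of a repository id, a fixed revision, and a SHA-256 digest. The `PinnedModel` class and its field names are hypothetical, not a hub's own format.

```python
import hashlib
import tempfile
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class PinnedModel:
    repo_id: str    # placeholder such as "example-org/example-model"
    revision: str   # tag or commit hash, not a moving branch
    filename: str
    sha256: str     # expected digest of the downloaded file


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large weight files need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify(pin: PinnedModel, local_file: Path) -> bool:
    """True when the local file matches the digest recorded in the pin."""
    return sha256_of(local_file) == pin.sha256


# Demonstration with a throwaway file standing in for real weights.
with tempfile.TemporaryDirectory() as tmp:
    weights = Path(tmp) / "model.bin"
    weights.write_bytes(b"fake weights for illustration")
    pin = PinnedModel("example-org/example-model", "v1.0", "model.bin",
                      sha256_of(weights))
    ok_before = verify(pin, weights)
    weights.write_bytes(b"tampered")
    ok_after = verify(pin, weights)
print(ok_before, ok_after)
```

Storing pins like this in version control gives a simple audit trail of which model version each release used.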

90 Days

- Scale usage across teams

- Optimize deployment pipelines

- Implement governance policies

- Monitor usage and performance

Common Mistakes & How to Avoid Them

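- Ignoring model licensing: check each model's license and usage terms before adopting it

- No evaluation pipeline: define metrics and test candidate models against them before rollout

- Poor dataset integration: plan how datasets feed training, evaluation, and RAG from the start

- Lack of version control: pin model and dataset versions so results are reproducible

- Over-reliance on public models: validate public models on your own data before depending on them

- No governance controls: set policies for who can publish, approve, and deploy models

- Ignoring security risks: restrict access and review downloaded artifacts before running them

- No observability: track usage and performance once models are in use

- Poor documentation practices: record each model's purpose, training data, and known limitations

- Vendor lock-in: favor portable formats and standard APIs so you can switch platforms

- No collaboration workflows: agree on how teams share, review, and promote models

- Skipping validation before deployment: test every model against your evaluation metrics first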
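The first mistake on this list, ignoring model licensing, can be caught with an automated gate. The sketch below flags candidate models whose declared license is missing or not on an allowlist. The allowlist is an example policy, not legal advice, and the model ids and metadata layout are invented for illustration; real metadata would come from the hub's model cards or API.

```python
# Example policy only; decide the real allowlist with your legal team.
ALLOWED_LICENSES = {"apache-2.0", "mit", "bsd-3-clause"}


def license_violations(models: list) -> list:
    """Return ids of models whose license is missing or not allowlisted."""
    flagged = []
    for model in models:
        license_id = (model.get("license") or "").strip().lower()
        if license_id not in ALLOWED_LICENSES:
            flagged.append(model["id"])
    return flagged


# Hypothetical metadata records.
candidates = [
    {"id": "example-org/model-a", "license": "Apache-2.0"},
    {"id": "example-org/model-b", "license": "cc-by-nc-4.0"},
    {"id": "example-org/model-c"},  # no license declared
]
flagged = license_violations(candidates)
print(flagged)
```

Treating a missing license as a violation is a deliberate choice here: an undeclared license grants no usage rights by default.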

FAQs

1. What is a model hub platform?

A platform for hosting, sharing, and discovering AI models and datasets.

2. Are all models open-source?

No. Some hubs host models under a mix of open-source, restricted, and proprietary licenses.

3. Can I host private models?

Yes, many platforms support private repositories.

4. Do model hubs support deployment?

Some provide built-in inference APIs.

5. What is RAG in model hubs?

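Retrieval-augmented generation (RAG): retrieving relevant documents or dataset entries at query time and passing them to the model as context for its response.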

6. Are these platforms free?

Most have free tiers with paid enterprise features.

7. Which is the most popular?

Hugging Face is the most widely used.

8. Can enterprises use open-source hubs?

Yes, with private and secure deployments.

9. Do they support multimodal models?

Yes, most modern hubs do.

10. What is the biggest risk?

Licensing and compliance issues.

11. Can I fine-tune models on these platforms?

Some platforms support it directly.

12. Are alternatives available?

Yes, several specialized hubs exist.

Conclusion

Open-source model hub platforms are the backbone of modern AI development, enabling rapid innovation, collaboration, and deployment. The right choice depends on your use case (experimentation, production, or enterprise governance), but success comes from combining the right platform with strong evaluation, security, and integration practices.
