Introduction

Model fine-tuning platforms are tools and services that allow you to customize pre-trained AI models—especially large language models (LLMs)—using your own data. Instead of building models from scratch, these platforms adapt existing models to perform better on specific tasks, domains, or company-specific use cases.

In simple terms, fine-tuning turns a general-purpose AI into a specialized one. For example, you can train a model to understand legal contracts, medical terminology, customer support tone, or internal company knowledge.

This category has become essential as organizations move from generic AI outputs to domain-specific, high-accuracy AI systems. Fine-tuning can improve reliability on targeted tasks, reduce some domain-specific errors and hallucinations, and make behavior (format, tone, terminology) consistent across applications.

Common real-world use cases include:

- Customer support automation with brand-specific tone

- Legal, financial, or healthcare document processing

- Code generation tailored to internal systems

- AI copilots trained on proprietary company data

- Personalized marketing and content generation

- Domain-specific chatbots and assistants

When evaluating model fine-tuning platforms, consider:

- Model compatibility (open-source vs proprietary)

- Parameter-efficient fine-tuning (PEFT) methods such as LoRA and QLoRA

- Dataset handling and preprocessing tools

- Evaluation and benchmarking capabilities

- Guardrails and alignment controls

- Infrastructure (GPU scaling, distributed training)

- Cost efficiency and training speed

- Deployment and inference integration

- Observability and experiment tracking

- Security, data privacy, and retention policies
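Infrastructure and cost criteria are easier to compare with a rough number in hand. The sketch below estimates only the memory needed to hold model weights at different precisions (2 bytes per parameter for 16-bit, 0.5 bytes for 4-bit weights as used in QLoRA-style setups). It deliberately ignores activations, gradients, optimizer states, and quantization overhead, so treat the result as a lower bound rather than a sizing guide; the function name is illustrative.

```python
# Approximate bytes per parameter for common weight precisions.
BYTES_PER_PARAM = {
    "fp32": 4.0,
    "fp16": 2.0,  # bf16 is the same size
    "int8": 1.0,
    "4bit": 0.5,  # e.g. 4-bit weights in QLoRA-style setups
}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Return memory for the weights alone, in GB (1 GB = 1e9 bytes).

    Lower bound only: excludes activations, gradients, optimizer
    states, and quantization metadata.
    """
    if precision not in BYTES_PER_PARAM:
        raise ValueError(f"unknown precision: {precision}")
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    seven_b = 7e9  # a 7B-parameter model
    for p in ("fp32", "fp16", "4bit"):
        print(f"7B model, {p}: ~{weight_memory_gb(seven_b, p):.1f} GB")
```

For a 7B model this gives about 28 GB (fp32), 14 GB (fp16), and 3.5 GB (4-bit), which is why 4-bit quantization combined with LoRA adapters is often used on single GPUs with limited memory.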

Best for: AI engineers, ML teams, enterprises building domain-specific AI, and startups creating differentiated AI products.

Not ideal for: simple prompt-based applications, teams without suitable training data, or teams without ML expertise (unless they use a fully managed API).

What’s Changed in Model Fine-Tuning Platforms

- Shift toward parameter-efficient fine-tuning (PEFT) to reduce cost and compute

- Rise of LoRA and QLoRA-based workflows for large models

- Emergence of no-code and low-code fine-tuning interfaces

- Integration of evaluation pipelines and benchmarking tools

- Growth of multimodal fine-tuning (text, image, audio)

- Adoption of RLHF and alignment training frameworks

- Increasing support for open-source models (LLaMA, Mistral, etc.)

- Expansion of managed GPU infrastructure platforms

- Built-in model deployment pipelines after training

- Focus on data privacy and enterprise compliance

- Integration with LLMOps and MLOps ecosystems

- Automation of dataset generation and augmentation

Quick Buyer Checklist (Scan-Friendly)

- Does it support your target models (open-source or proprietary)?

- Can it handle your dataset size and format?

- Does it support LoRA/QLoRA or other efficient methods?

- Are evaluation tools included?

- Can you track experiments and versions?

- Does it provide deployment/inference pipelines?

- How scalable is the GPU infrastructure?

- Are guardrails and alignment tools available?

- What are the data privacy and retention policies?

- Does it integrate with your AI stack?

- Is there vendor lock-in risk?

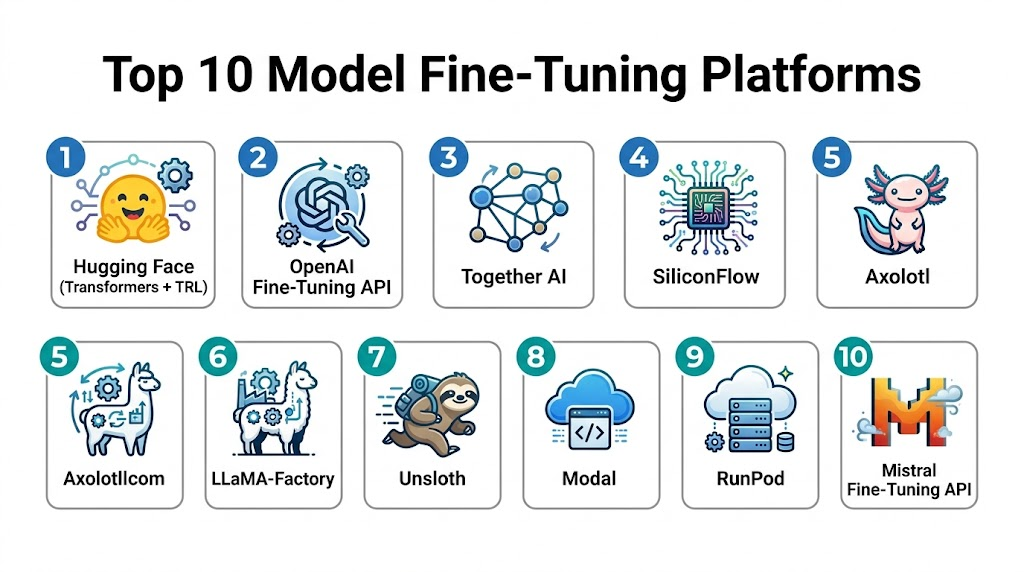

Top 10 Model Fine-Tuning Platforms

#1 — Hugging Face (Transformers + TRL)

One-line verdict: Best overall platform for flexible, open-source fine-tuning with massive ecosystem support.

Short description:

A leading open-source ecosystem offering libraries and tools for fine-tuning LLMs, including Transformers for loading and training models, PEFT for parameter-efficient methods, and TRL for supervised fine-tuning and alignment training.

Standout Capabilities

- Supports thousands of models (LLaMA, Mistral, Falcon, etc.)

- Parameter-efficient fine-tuning (LoRA, QLoRA) via the PEFT library

- TRL library for supervised fine-tuning and preference/RLHF-style training (e.g., DPO, PPO)

- Dataset and model hub integration

- Strong community and documentation

- PyTorch-first (TRL is PyTorch-based; Transformers has also offered TensorFlow and JAX support for some models)

- Scalable from local to distributed training
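To see why LoRA is parameter-efficient: for a frozen weight matrix of shape d x k, LoRA trains two low-rank factors B (d x r) and A (r x k), so the trainable count per adapted matrix is r(d + k) instead of dk. A minimal sketch of that arithmetic, assuming a square 4096 x 4096 projection and rank 8 (illustrative values, not defaults of any library):

```python
def full_params(d: int, k: int) -> int:
    """Parameters updated when fine-tuning a d x k weight matrix directly."""
    return d * k


def lora_params(d: int, k: int, r: int) -> int:
    """Trainable parameters for LoRA factors B (d x r) and A (r x k)."""
    return r * (d + k)


if __name__ == "__main__":
    d = k = 4096  # illustrative hidden size
    r = 8         # illustrative LoRA rank
    full = full_params(d, k)
    lora = lora_params(d, k, r)
    # Prints: full: 16,777,216  lora: 65,536  ratio: 0.39%
    print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.2%}")
```

In Hugging Face's PEFT library, the rank and the modules to adapt are set through `LoraConfig` (for example its `r` and `target_modules` arguments); the savings above apply to each adapted matrix.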

AI-Specific Depth

- Model support: Open-source + custom

- RAG / knowledge integration: Strong dataset tooling (Datasets library and Hub); retrieval pipelines typically come from external frameworks

- Evaluation: Benchmarking tools and community evals

- Guardrails: External integrations

- Observability: Integration with external tools

Pros

- Highly flexible

- Large ecosystem

- Free and open-source

Cons

- Requires ML expertise

- Setup complexity

Security & Compliance

Varies / N/A

Deployment & Platforms

- Local, cloud, hybrid

Integrations & Ecosystem

- PyTorch, TensorFlow, datasets, APIs, MLOps tools

Pricing Model

Free + enterprise options

Best-Fit Scenarios

- Open-source fine-tuning

- Research and experimentation

- Custom AI pipelines

#2 — OpenAI Fine-Tuning API

One-line verdict: Best for simple, managed fine-tuning of proprietary models with minimal setup.

Short description:

A fully managed API for fine-tuning OpenAI models using custom datasets.

Standout Capabilities

- Fully managed training pipeline

- Easy dataset upload and configuration

- No infrastructure management

- Integrated deployment

- Consistent performance and reliability
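Uploading is easy, but it still pays to validate the file locally first. The sketch below assumes the chat-style JSONL format OpenAI documents for fine-tuning chat models: one JSON object per line with a `messages` list of `role`/`content` pairs. It checks only basic structure, accepts only `system`, `user`, and `assistant` roles with plain string content (tool-calling examples are not covered), and does not enforce size or token limits; the function name and checks are illustrative, so confirm the current schema in the official documentation.

```python
import json

VALID_ROLES = {"system", "user", "assistant"}  # assumption: no tool messages


def validate_chat_jsonl(lines):
    """Return a list of (line_number, problem) tuples; empty means no problems found."""
    problems = []
    for n, raw in enumerate(lines, start=1):
        try:
            record = json.loads(raw)
        except json.JSONDecodeError as exc:
            problems.append((n, f"invalid JSON: {exc.msg}"))
            continue
        messages = record.get("messages") if isinstance(record, dict) else None
        if not isinstance(messages, list) or not messages:
            problems.append((n, "missing or empty 'messages' list"))
            continue
        for m in messages:
            if not isinstance(m, dict) or m.get("role") not in VALID_ROLES:
                problems.append((n, f"bad role in message: {m!r}"))
            elif not isinstance(m.get("content"), str):
                problems.append((n, "message content must be a string"))
        # Each example needs at least one assistant turn to learn from.
        if not any(isinstance(m, dict) and m.get("role") == "assistant" for m in messages):
            problems.append((n, "no assistant message to train on"))
    return problems


if __name__ == "__main__":
    sample = [
        '{"messages": [{"role": "user", "content": "Hi"}, {"role": "assistant", "content": "Hello!"}]}',
        '{"messages": [{"role": "user", "content": "No answer"}]}',
    ]
    print(validate_chat_jsonl(sample))  # [(2, 'no assistant message to train on')]
```

In practice you would pass the lines of the file (for example `open("train.jsonl", encoding="utf-8")`) and fix every reported line before uploading.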

AI-Specific Depth

- Model support: Proprietary

- RAG: External

- Evaluation: Basic tools

- Guardrails: Built-in policies

- Observability: Usage metrics

Pros

- Easy to use

- No DevOps required

- Fast setup

Cons

- Limited control

- Vendor lock-in

Security & Compliance

Varies / N/A

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- APIs, SDKs

Pricing Model

Usage-based

Best-Fit Scenarios

- Quick customization

- SaaS applications

- Non-ML teams

#3 — Together AI

One-line verdict: Best managed platform for fine-tuning open models with scalable infrastructure.

Short description:

Provides APIs and infrastructure to fine-tune and deploy open-source LLMs at scale.

Standout Capabilities

- Managed GPU infrastructure

- Open model support

- Fast training pipelines

- API-based deployment

- Multi-model support

AI-Specific Depth

- Model support: Open-source

- RAG: External

- Evaluation: Limited

- Guardrails: Basic

- Observability: Metrics and logs

Pros

- Scalable

- Developer-friendly

- Open model flexibility

Cons

- Less mature ecosystem

- Limited advanced tooling

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- APIs, ML pipelines

Pricing Model

Usage-based

Best-Fit Scenarios

- Startups

- Open model fine-tuning

- API-first applications

#4 — SiliconFlow

One-line verdict: Best all-in-one platform combining fine-tuning and high-performance deployment.

Short description:

A managed platform that simplifies fine-tuning and deployment with strong performance optimization.

Standout Capabilities

- End-to-end pipeline (train → deploy)

- High-performance inference engine

- Multi-modal support

- GPU infrastructure management

- OpenAI-compatible APIs
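"OpenAI-compatible" generally means the platform accepts the same request shape as OpenAI's Chat Completions endpoint, so existing client code can often be pointed at a different base URL. A minimal standard-library sketch that builds (but does not send) such a request; the base URL, API key, and model name are hypothetical placeholders, not SiliconFlow's actual values, so take the real ones from the provider's documentation.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str, user_text: str):
    """Build an OpenAI-style POST {base_url}/chat/completions request without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_text}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # Hypothetical placeholders; replace with real values from your provider.
    req = build_chat_request(
        "https://api.example.com/v1", "YOUR_API_KEY", "your-fine-tuned-model", "Hello"
    )
    print(req.full_url)  # https://api.example.com/v1/chat/completions
    # urllib.request.urlopen(req) would actually send it.
```

Keeping the base URL and model name as parameters is what makes switching between OpenAI-compatible providers a configuration change rather than a code change.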

AI-Specific Depth

- Model support: Open + multimodal

- RAG: Integrated pipelines

- Evaluation: Built-in tools

- Guardrails: Policy-based

- Observability: Full metrics

Pros

- Unified platform

- Strong performance

- Enterprise-ready

Cons

- Learning curve

- Pricing complexity

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- APIs, AI pipelines

Pricing Model

Not publicly stated

Best-Fit Scenarios

- Enterprise AI systems

- Multimodal AI

- High-performance workloads

#5 — Axolotl

One-line verdict: Best open-source toolkit for reproducible and scalable fine-tuning pipelines.

Short description:

A community-driven framework for fine-tuning LLMs across various architectures with flexible configurations.

Standout Capabilities

- Broad model compatibility

- Scales from single GPU to clusters

- Config-driven workflows

- Active open-source community
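Config-driven tools describe a run in a single file that can move from one GPU to a cluster. One value worth re-checking when you scale is the effective (global) batch size, which under plain data parallelism is the per-device micro-batch times the gradient-accumulation steps times the number of devices. A sketch in Python; the dictionary keys mirror option names used in Axolotl-style configs, but that mapping is an assumption for illustration, not a validated Axolotl config, and tensor or pipeline parallelism would change the arithmetic.

```python
def effective_batch_size(config: dict, num_devices: int) -> int:
    """Global batch size for data-parallel training.

    effective = micro_batch_size * gradient_accumulation_steps * num_devices
    """
    micro = config["micro_batch_size"]
    accum = config.get("gradient_accumulation_steps", 1)
    if micro < 1 or accum < 1 or num_devices < 1:
        raise ValueError("all factors must be positive")
    return micro * accum * num_devices


def accum_steps_for_target(target: int, micro: int, num_devices: int) -> int:
    """Gradient-accumulation steps that keep the global batch at `target`."""
    per_step = micro * num_devices
    if target % per_step:
        raise ValueError(f"{target} is not divisible by {per_step}")
    return target // per_step


if __name__ == "__main__":
    cfg = {"micro_batch_size": 2, "gradient_accumulation_steps": 16}
    print(effective_batch_size(cfg, num_devices=1))  # 32 on one GPU
    print(effective_batch_size(cfg, num_devices=8))  # 256 on eight GPUs
    # To keep a global batch of 32 on eight GPUs, lower accumulation:
    print(accum_steps_for_target(32, micro=2, num_devices=8))  # 2
```

If the same config is reused on more GPUs without adjusting accumulation, the global batch grows, which can change training dynamics and usually calls for revisiting the learning rate.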

AI-Specific Depth

- Model support: Open-source

- RAG: External

- Evaluation: External

- Guardrails: N/A

- Observability: Limited

Pros

- Highly flexible

- Free and open-source

- Scalable

Cons

- No UI

- Requires expertise

Deployment & Platforms

- Local, cloud

Integrations & Ecosystem

- Hugging Face, PyTorch

Pricing Model

Free

Best-Fit Scenarios

- Research

- Custom pipelines

- Advanced users

#6 — LLaMA-Factory

One-line verdict: Best beginner-friendly platform with GUI for fine-tuning many models.

Short description:

A toolkit with UI support that simplifies fine-tuning across 100+ models.

Standout Capabilities

- GUI-based training (LLaMA Board)

- 100+ supported LLMs and vision-language models

- Multi-GPU scaling

- Easy configuration

AI-Specific Depth

- Model support: Open-source

- RAG: External

- Evaluation: Basic

- Guardrails: N/A

- Observability: Limited

Pros

- Beginner-friendly

- Wide model support

- GUI interface

Cons

- Limited enterprise features

- Requires setup

Deployment & Platforms

- Local, cloud

Integrations & Ecosystem

- Hugging Face ecosystem

Pricing Model

Free

Best-Fit Scenarios

- Beginners

- Experimentation

- Multi-model testing

#7 — Unsloth

One-line verdict: Best for ultra-fast, cost-efficient single-GPU fine-tuning.

Short description:

A lightweight toolkit optimized for speed and efficiency in fine-tuning LLMs.

Standout Capabilities

- Fast training on single GPU

- Memory-efficient pipelines

- Easy setup

- Works with open models

AI-Specific Depth

- Model support: Open-source

- RAG: External

- Evaluation: Limited

- Guardrails: N/A

- Observability: Minimal

Pros

- Very fast

- Cost-efficient

- Lightweight

Cons

- Limited scalability

- Fewer enterprise features

Deployment & Platforms

- Local, cloud

Integrations & Ecosystem

- Open-source ecosystem

Pricing Model

Free

Best-Fit Scenarios

- Small teams

- Cost-sensitive projects

- Fast experimentation

#8 — Modal

One-line verdict: Best for developer-first GPU workflows with scalable fine-tuning pipelines.

Short description:

A platform that simplifies running GPU workloads, including fine-tuning models.

Standout Capabilities

- Serverless GPU execution

- Python-first workflows

- Scalable infrastructure

- Easy deployment

AI-Specific Depth

- Model support: Custom/open

- RAG: External

- Evaluation: External

- Guardrails: N/A

- Observability: Logs and metrics

Pros

- Developer-friendly

- Scalable

- Flexible

Cons

- Requires coding

- Not fine-tuning specific

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- Python ecosystem

Pricing Model

Usage-based

Best-Fit Scenarios

- Developers

- Custom pipelines

- Scalable workloads

#9 — RunPod

One-line verdict: Best for affordable GPU infrastructure for fine-tuning workloads.

Short description:

Provides on-demand GPU infrastructure for training and fine-tuning AI models.

Standout Capabilities

- Low-cost GPU access

- Flexible infrastructure

- Supports multiple frameworks

- Easy scaling
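Low hourly prices matter most when you estimate total spend up front. A back-of-the-envelope sketch: training time from token count, epochs, and throughput, then cost from GPU count and hourly price. The throughput and price below are hypothetical inputs, not RunPod figures; measure throughput on a short pilot run and use the provider's current price list.

```python
def training_hours(tokens: int, epochs: float, tokens_per_sec_per_gpu: float,
                   num_gpus: int) -> float:
    """Wall-clock hours, assuming throughput scales linearly with GPU count."""
    total_tokens = tokens * epochs
    return total_tokens / (tokens_per_sec_per_gpu * num_gpus) / 3600


def training_cost(hours: float, num_gpus: int, price_per_gpu_hour: float) -> float:
    """Compute cost only; ignores storage, egress, idle time, and failed runs."""
    return hours * num_gpus * price_per_gpu_hour


if __name__ == "__main__":
    # Hypothetical inputs for illustration only.
    hours = training_hours(tokens=50_000_000, epochs=3,
                           tokens_per_sec_per_gpu=2_500, num_gpus=2)
    cost = training_cost(hours, num_gpus=2, price_per_gpu_hour=2.00)
    print(f"~{hours:.1f} h, ~${cost:.2f}")  # ~8.3 h, ~$33.33
```

Under the linear-scaling assumption, adding GPUs shortens the run without changing its cost; in practice communication overhead usually makes multi-GPU runs somewhat more expensive per token.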

AI-Specific Depth

- Model support: Any

- RAG: External

- Evaluation: External

- Guardrails: N/A

- Observability: Basic

Pros

- Cost-effective

- Flexible

- Easy scaling

Cons

- Infrastructure-focused

- Requires setup

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- ML frameworks

Pricing Model

Usage-based

Best-Fit Scenarios

- GPU workloads

- Budget teams

- Custom training

#10 — Mistral Fine-Tuning API

One-line verdict: Best for optimizing models within the Mistral ecosystem.

Short description:

A managed API for fine-tuning Mistral models with optimized performance.

Standout Capabilities

- Optimized for Mistral models

- Managed training pipeline

- API-based workflow

- High performance

AI-Specific Depth

- Model support: Proprietary (Mistral)

- RAG: External

- Evaluation: Basic

- Guardrails: Built-in

- Observability: Metrics

Pros

- High performance

- Easy to use

- Integrated deployment

Cons

- Limited model choice

- Ecosystem lock-in

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- APIs

Pricing Model

Usage-based

Best-Fit Scenarios

- Mistral-based apps

- API-first development

- Fast deployment

Comparison Table

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| Hugging Face | Open-source tuning | Hybrid | High | Ecosystem | Complexity | N/A |
| OpenAI API | Simplicity | Cloud | Low | Ease of use | Lock-in | N/A |
| Together AI | Open model infra | Cloud | High | Scalability | Ecosystem | N/A |
| SiliconFlow | End-to-end AI | Cloud | High | Performance | Complexity | N/A |
| Axolotl | Advanced pipelines | Hybrid | High | Flexibility | No UI | N/A |
| LLaMA-Factory | Beginners | Hybrid | High | GUI | Setup | N/A |
| Unsloth | Fast training | Hybrid | Medium | Speed | Scale limits | N/A |
| Modal | Dev workflows | Cloud | High | Flexibility | Coding | N/A |
| RunPod | GPU infra | Cloud | High | Cost | DIY setup | N/A |
| Mistral API | Mistral models | Cloud | Low | Performance | Lock-in | N/A |

Scoring & Evaluation (Transparent Rubric)

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| Hugging Face | 10 | 9 | 7 | 10 | 8 | 9 | 8 | 10 | 9.0 |
| OpenAI API | 8 | 8 | 9 | 8 | 10 | 7 | 9 | 9 | 8.6 |
| Together AI | 8 | 7 | 6 | 8 | 8 | 8 | 7 | 8 | 7.8 |
| SiliconFlow | 9 | 8 | 8 | 9 | 7 | 9 | 8 | 8 | 8.5 |
| Axolotl | 9 | 7 | 6 | 8 | 6 | 8 | 7 | 8 | 7.7 |
| LLaMA-Factory | 8 | 7 | 6 | 8 | 9 | 8 | 7 | 8 | 7.9 |
| Unsloth | 8 | 6 | 5 | 7 | 8 | 9 | 7 | 7 | 7.5 |
| Modal | 8 | 7 | 6 | 9 | 8 | 8 | 7 | 8 | 7.9 |
| RunPod | 7 | 6 | 5 | 7 | 7 | 9 | 7 | 7 | 7.3 |
| Mistral API | 8 | 7 | 8 | 7 | 9 | 8 | 8 | 8 | 8.1 |
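The rubric does not state the weights behind the Weighted Total column, so the totals cannot be recomputed exactly from the scores. The sketch below shows how such a total is computed for any set of weights. The equal weights in the example are an assumption; they give 8.875 for the Hugging Face row rather than the listed 9.0, so the weights behind the published totals evidently differ from equal weighting.

```python
CRITERIA = ["Core", "Reliability/Eval", "Guardrails", "Integrations",
            "Ease", "Perf/Cost", "Security/Admin", "Support"]


def weighted_total(scores: dict, weights: dict) -> float:
    """Weighted average of 0-10 scores; weights are normalized to sum to 1."""
    total_weight = sum(weights[c] for c in CRITERIA)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[c] * weights[c] for c in CRITERIA) / total_weight


if __name__ == "__main__":
    # Scores for the Hugging Face row from the table above.
    hugging_face = dict(zip(CRITERIA, [10, 9, 7, 10, 8, 9, 8, 10]))
    equal = {c: 1.0 for c in CRITERIA}  # assumption: equal weights
    print(weighted_total(hugging_face, equal))  # 8.875
```

Publishing the weights dictionary alongside the table would let readers re-rank the tools with weights that match their own priorities.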

Top 3 for Enterprise

- Hugging Face

- SiliconFlow

- OpenAI API

Top 3 for SMB

- LLaMA-Factory

- Unsloth

- Together AI

Top 3 for Developers

- Hugging Face

- Axolotl

- Modal

Which Model Fine-Tuning Platform Is Right for You

Solo / Freelancer

- LLaMA-Factory

- Unsloth

- Hugging Face

SMB

- Together AI

- LLaMA-Factory

- Modal

Mid-Market

- Hugging Face

- SiliconFlow

- RunPod

Enterprise

- Hugging Face

- OpenAI API

- SiliconFlow

Regulated industries (finance/healthcare/public sector)

- Hugging Face (private deployments)

- SiliconFlow

- OpenAI API

Budget vs premium

- Budget: Unsloth, Axolotl, RunPod

- Premium: OpenAI API, SiliconFlow

Build vs buy (when to DIY)

- Build if you need full control over data and training

- Buy if you want speed and simplicity

Implementation Playbook (30 / 60 / 90 Days)

30 Days

- Define use case and success metrics

- Prepare and clean dataset

- Run pilot fine-tuning

- Benchmark baseline vs tuned model
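The benchmarking step works best on a held-out set that neither model saw during training, scored identically for both. A minimal sketch using exact-match accuracy after light normalization; exact match is an assumption that suits classification-style or short-answer tasks, while open-ended generation usually needs other metrics or human review. The labels and predictions are made-up placeholders for illustration.

```python
def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences don't count."""
    return " ".join(text.lower().split())


def exact_match_accuracy(predictions, references) -> float:
    if len(predictions) != len(references) or not references:
        raise ValueError("need equal-length, non-empty lists")
    hits = sum(normalize(p) == normalize(r) for p, r in zip(predictions, references))
    return hits / len(references)


def compare(baseline_preds, tuned_preds, references) -> dict:
    """Score both models on the same references and report the difference."""
    base = exact_match_accuracy(baseline_preds, references)
    tuned = exact_match_accuracy(tuned_preds, references)
    return {"baseline": base, "tuned": tuned, "delta": tuned - base}


if __name__ == "__main__":
    # Placeholder outputs on a tiny held-out set (illustrative only).
    refs = ["refund", "escalate", "refund", "close"]
    baseline = ["Refund", "close", "escalate", "close"]
    tuned = ["refund", "escalate", "refund ", "Close"]
    print(compare(baseline, tuned, refs))
    # {'baseline': 0.5, 'tuned': 1.0, 'delta': 0.5}
```

A four-example set is only for illustration; a real comparison needs enough examples for the difference to be meaningful, and the same prompt template for both models.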

60 Days

- Implement evaluation framework

- Optimize hyperparameters and LoRA configs

- Add guardrails and alignment checks

- Deploy staging model

90 Days

- Optimize cost and latency

- Implement monitoring and logging

- Add governance and version control

- Scale across applications

Common Mistakes to Avoid

- Using poor-quality training data

- Skipping evaluation pipelines

- Overfitting models

- Ignoring cost of GPU training

- No version control for models

- Lack of observability

- No fallback models

- Weak guardrails

- Ignoring bias and fairness

- Over-automation without review

- Vendor lock-in without abstraction

- Poor deployment planning
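Overfitting usually shows up as validation loss rising while training loss keeps falling. A common guard is early stopping: keep the checkpoint with the lowest validation loss and stop once it has failed to improve for a set number of evaluations (the patience). A framework-agnostic sketch with hypothetical loss values; training libraries typically provide a built-in equivalent, such as the `EarlyStoppingCallback` in Hugging Face Transformers.

```python
def early_stopping(val_losses, patience: int = 2, min_delta: float = 0.0):
    """Return (best_index, stop_index) for a sequence of validation losses.

    best_index: evaluation with the lowest loss (the checkpoint to keep).
    stop_index: evaluation at which training would stop, or None if
    patience never runs out.
    """
    best_loss = float("inf")
    best_index = None
    bad_evals = 0
    for i, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            # Improvement: remember this checkpoint and reset patience.
            best_loss, best_index, bad_evals = loss, i, 0
        else:
            bad_evals += 1
            if bad_evals >= patience:
                return best_index, i
    return best_index, None


if __name__ == "__main__":
    # Hypothetical validation losses from periodic evaluations.
    losses = [1.20, 0.95, 0.88, 0.90, 0.93, 0.97]
    print(early_stopping(losses, patience=2))  # (2, 4)
```

Here the loss bottoms out at the third evaluation and then rises twice in a row, so training stops at the fifth evaluation and the third checkpoint is the one to keep.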

FAQs

1. What is model fine-tuning?

Customizing a pre-trained AI model using domain-specific data.

2. Is fine-tuning better than prompting?

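Often, for repetitive tasks that need consistent format, tone, or domain-specific behavior. For many tasks, prompting or retrieval is cheaper and good enough, so it is worth trying first.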

3. Do I need GPUs?

For self-hosted fine-tuning, yes, in most cases. Managed APIs (such as OpenAI's or Mistral's) run the GPUs for you.

4. What is LoRA?

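A parameter-efficient technique that freezes the base model's weights and trains small low-rank matrices added to selected layers, so only a small fraction of parameters is updated. This cuts memory use and training cost.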
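Concretely, LoRA keeps a pre-trained weight matrix W frozen and learns a low-rank update, so an adapted layer computes h = W x + (alpha / r) * B A x, where B is d x r, A is r x k, and r is much smaller than d and k. A dependency-free sketch of that forward pass with tiny illustrative matrices; B starts at zero (the usual LoRA initialization), so the adapted layer initially behaves exactly like the base layer. The "trained" values of B are hypothetical, and real implementations do this with GPU tensors.

```python
def matvec(M, x):
    """Multiply matrix M (a list of rows) by vector x."""
    return [sum(m * v for m, v in zip(row, x)) for row in M]


def lora_forward(W, A, B, x, alpha: float, r: int):
    """h = W x + (alpha / r) * B (A x); W is frozen, only A and B are trained."""
    base = matvec(W, x)                  # frozen path: d outputs
    low_rank = matvec(B, matvec(A, x))   # x -> r dims -> d dims
    scale = alpha / r
    return [b + scale * u for b, u in zip(base, low_rank)]


if __name__ == "__main__":
    W = [[1.0, 0.0, 0.0],
         [0.0, 1.0, 0.0]]        # d=2, k=3 frozen weights
    A = [[0.5, 0.5, 0.5]]        # r=1, k=3
    B_init = [[0.0], [0.0]]      # d=2, r=1, zero at initialization
    B_trained = [[0.2], [-0.2]]  # hypothetical values after training
    x = [1.0, 2.0, 3.0]
    print(lora_forward(W, A, B_init, x, alpha=2.0, r=1))  # [1.0, 2.0]
    print(lora_forward(W, A, B_trained, x, alpha=2.0, r=1))
```

After training, B A can be added into W once, so the adapted model needs no extra computation at inference time.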

5. Is fine-tuning expensive?

It can be, but PEFT methods reduce cost significantly.

6. Can I fine-tune open-source models?

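Yes. Most platforms on this list support them; the OpenAI and Mistral APIs are the exceptions, since they fine-tune only their own vendors' models.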

7. Is data privacy a concern?

Yes, especially with cloud platforms.

8. What is RLHF?

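Reinforcement learning from human feedback: humans compare or rank model outputs, a reward model learns those preferences, and the model is then optimized against that reward model.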
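In the common RLHF recipe, the reward model is trained on pairs of responses so that the human-preferred one scores higher, typically with the pairwise loss -log(sigmoid(r_chosen - r_rejected)); a reinforcement-learning step such as PPO then optimizes the model against that reward. A standard-library sketch of just the pairwise loss, with hypothetical reward values:

```python
import math


def pairwise_reward_loss(r_chosen: float, r_rejected: float) -> float:
    """-log(sigmoid(r_chosen - r_rejected)), computed in a numerically stable way.

    Small when the chosen response already scores well above the
    rejected one; large when the reward model ranks them the wrong way.
    """
    margin = r_chosen - r_rejected
    # -log(sigmoid(m)) == log(1 + exp(-m)); branch to avoid overflow in exp.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


if __name__ == "__main__":
    print(pairwise_reward_loss(2.0, 0.0))  # correct ranking, low loss
    print(pairwise_reward_loss(0.0, 2.0))  # wrong ranking, high loss
    print(pairwise_reward_loss(1.0, 1.0))  # tie: log(2), about 0.693
```

Because only the difference between the two rewards enters the loss, the reward model learns relative preferences rather than absolute scores.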

9. Can I deploy after fine-tuning?

Most platforms support deployment pipelines.

10. How long does fine-tuning take?

From minutes to days, depending on model size, dataset size, method (full vs. parameter-efficient), and hardware.

11. What is the biggest challenge?

Data quality and evaluation.

12. Are no-code tools available?

Yes, some platforms offer UI-based workflows.

Conclusion

Model fine-tuning platforms are essential for transforming general AI models into high-performing, domain-specific systems. The best choice depends on your technical expertise, budget, and need for control—but success ultimately comes from combining high-quality data, strong evaluation practices, and the right platform for your workflow.
