Introduction

Secure Enclave Inference Platforms help organizations run AI inference workloads inside protected execution environments where data, prompts, models, and computations remain isolated from unauthorized access. These platforms use technologies such as trusted execution environments, confidential virtual machines, hardware-backed enclaves, encrypted memory, and attestation systems to protect AI inference while it is actively running.

Why It Matters

AI inference workloads increasingly process highly sensitive information, including healthcare records, financial transactions, customer conversations, legal documents, source code, and enterprise knowledge. Traditional security methods protect data at rest and in transit, but data must be decrypted in memory to be processed, so inference-time exposure remains a major risk. Secure enclave inference platforms reduce this risk by isolating workloads from cloud operators, malicious insiders, compromised hypervisors, and runtime attacks. As enterprises adopt AI copilots, AI agents, RAG systems, and customer-facing AI applications, confidential AI inference is becoming essential for privacy, governance, and compliance.

Real-World Use Cases

- Secure AI healthcare diagnostics

- Privacy-preserving financial AI systems

- Protected enterprise AI copilots

- Secure government and defense AI workloads

- Confidential AI inference for legal documents

- Protected customer support AI systems

- Secure multi-party AI collaboration

- Confidential RAG and vector database processing

Evaluation Criteria for Buyers

- Trusted execution environment support

- GPU enclave compatibility

- AI framework support

- Confidential container support

- Runtime encryption capabilities

- Remote attestation features

- Kubernetes orchestration support

- Multi-cloud deployment flexibility

- Latency and inference performance

- Auditability and governance controls

- AI observability capabilities

- Scalability for large AI workloads

Best for: enterprises, regulated industries, AI infrastructure teams, healthcare providers, financial organizations, government agencies, cloud-native AI teams, and businesses deploying privacy-sensitive AI systems.

Not ideal for: lightweight public AI applications, hobby AI projects, or organizations without sensitive data processing requirements. Simpler encryption and access controls may be sufficient in low-risk environments.

What’s Changed in Secure Enclave Inference Platforms

- Confidential GPU inference is becoming a major enterprise requirement.

- AI agents increasingly need protected runtime execution environments.

- Confidential inference is expanding into edge AI environments.

- Secure enclave orchestration for Kubernetes is improving rapidly.

- Hardware-backed AI attestation is becoming standard.

- RAG security and confidential vector retrieval are growing priorities.

- Enterprises increasingly demand encrypted inference pipelines.

- Cloud providers are expanding confidential AI infrastructure offerings.

- AI model theft prevention is driving infrastructure investments.

- Multi-party secure AI collaboration is becoming more practical.

- Observability for confidential AI workloads is improving.

- Privacy-preserving AI is becoming a competitive differentiator.

Quick Buyer Checklist

- Confirm support for secure enclaves or confidential VMs.

- Verify GPU confidential inference compatibility.

- Check Kubernetes and container orchestration support.

- Review AI framework integrations.

- Test performance overhead during inference.

- Validate remote attestation capabilities.

- Check workload portability across clouds.

- Review audit logs and governance features.

- Confirm AI observability support.

- Evaluate scalability for large models.

- Verify secure API and inference endpoints.

- Avoid excessive infrastructure lock-in.
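The checklist item on testing performance overhead can be made concrete with a small benchmark. The following is a minimal sketch, not a vendor tool: it assumes you can wrap one inference request to a baseline deployment and one to a confidential deployment in plain Python callables, and every function name here (`measure_latencies`, `summarize`, `overhead_percent`) is hypothetical.

```python
import statistics
import time
from typing import Callable, Dict, List


def measure_latencies(call: Callable[[], object], runs: int = 50, warmup: int = 5) -> List[float]:
    """Return per-request latencies in milliseconds for `call`."""
    for _ in range(warmup):
        call()  # warm caches and connections before timing
    samples: List[float] = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def summarize(samples: List[float]) -> Dict[str, float]:
    """Median and approximate 95th-percentile latency in milliseconds."""
    ordered = sorted(samples)
    p95_index = max(0, int(round(0.95 * len(ordered))) - 1)
    return {"p50": statistics.median(ordered), "p95": ordered[p95_index]}


def overhead_percent(baseline: Dict[str, float], confidential: Dict[str, float]) -> Dict[str, float]:
    """Relative slowdown of the confidential path, per percentile."""
    return {
        key: (confidential[key] - baseline[key]) / baseline[key] * 100.0
        for key in baseline
    }
```

In practice, `call` would send a representative prompt to each endpoint. Comparing p95 as well as the median matters because encrypted memory and attestation handshakes may affect tail latency more than typical latency.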

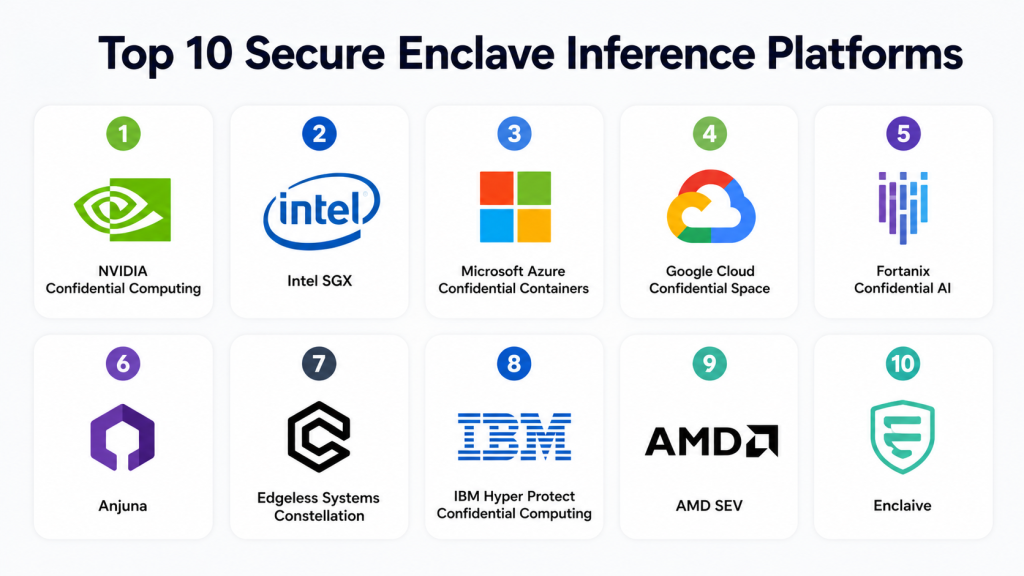

Top 10 Secure Enclave Inference Platforms

1- NVIDIA Confidential Computing

One-line verdict: Best for high-performance GPU-based confidential AI inference in enterprise environments.

Short description:

NVIDIA Confidential Computing provides secure AI inference using hardware-isolated GPU memory protection and encrypted runtime processing. It is widely used for enterprise AI workloads that require strong confidentiality and high performance.

Standout Capabilities

- Confidential GPU inference

- Encrypted GPU memory

- Hardware-backed isolation

- Secure AI acceleration

- Confidential containers

- High-performance inference

- GPU attestation support

- AI infrastructure integration

AI-Specific Depth

- Model support: Proprietary and open-source AI models

- RAG / knowledge integration: Secure inference pipeline support

- Evaluation: Hardware-level attestation validation

- Guardrails: GPU workload isolation

- Observability: GPU telemetry and runtime monitoring

Pros

- Excellent AI inference performance

- Strong GPU ecosystem support

- Enterprise-grade security capabilities

Cons

- Requires compatible hardware infrastructure

- Premium infrastructure investment

- Complex deployment environments

Security & Compliance

Supports encrypted memory, workload isolation, attestation, and enterprise security controls. Certifications vary by deployment provider.

Deployment & Platforms

- Linux support

- Cloud and hybrid deployment

- Kubernetes compatibility

Integrations & Ecosystem

NVIDIA integrates deeply into AI infrastructure ecosystems and accelerated computing environments.

- CUDA

- Kubernetes

- AI orchestration tools

- Container platforms

- AI frameworks

- Cloud GPU environments

Pricing Model

Infrastructure and enterprise licensing model. Exact pricing varies.

Best-Fit Scenarios

- GPU-protected AI inference

- Enterprise confidential AI

- High-performance AI serving

2- Intel SGX

One-line verdict: Best for CPU-based secure enclave AI inference and trusted execution workloads.

Short description:

Intel Software Guard Extensions provides trusted execution environments for protecting sensitive workloads during runtime. It is commonly used for secure inference, encrypted computation, and confidential application processing.

Standout Capabilities

- Secure enclaves

- Trusted execution environments

- Runtime memory isolation

- Hardware-backed attestation

- Secure application execution

- Encrypted computation

- CPU-level protection

- Enterprise infrastructure support

AI-Specific Depth

- Model support: CPU-based AI workloads

- RAG / knowledge integration: Varies / N/A

- Evaluation: Hardware-backed workload validation

- Guardrails: Enclave-based runtime protection

- Observability: Infrastructure telemetry visibility

Pros

- Mature confidential computing ecosystem

- Strong hardware isolation

- Broad enterprise adoption

Cons

- Limited GPU acceleration support

- Performance overhead varies

- Requires enclave-aware development

Security & Compliance

Supports attestation, memory encryption, secure enclaves, and workload isolation.

Deployment & Platforms

- Linux environments

- Enterprise infrastructure

- Hybrid deployment support

Integrations & Ecosystem

Intel SGX integrates into enterprise infrastructure and confidential computing ecosystems.

- Cloud providers

- Kubernetes

- Virtualization platforms

- APIs

- Infrastructure tooling

- Enterprise servers

Pricing Model

Infrastructure and hardware ecosystem pricing.

Best-Fit Scenarios

- Secure CPU-based inference

- Trusted execution environments

- Privacy-sensitive enterprise AI

3- Microsoft Azure Confidential Containers

One-line verdict: Best for enterprises deploying confidential AI inference inside Azure cloud environments.

Short description:

Azure Confidential Containers provides hardware-backed container isolation for AI workloads running in cloud-native environments. It helps protect inference pipelines and sensitive runtime processing.

Standout Capabilities

- Confidential containers

- Trusted execution environments

- Cloud-native orchestration

- Secure Kubernetes support

- Hardware-backed isolation

- Runtime encryption

- Enterprise governance

- Secure workload deployment

AI-Specific Depth

- Model support: Hosted and BYO AI models

- RAG / knowledge integration: Azure AI ecosystem integrations

- Evaluation: Attestation workflows

- Guardrails: Container-level runtime isolation

- Observability: Azure monitoring integrations

Pros

- Strong Kubernetes integration

- Enterprise cloud support

- Useful for containerized AI inference

Cons

- Best suited for Azure users

- Cloud dependency considerations

- Advanced setup complexity

Security & Compliance

Supports attestation, RBAC, audit logging, encryption, and enterprise governance features.

Deployment & Platforms

- Cloud deployment

- Kubernetes environments

- Hybrid integrations

Integrations & Ecosystem

Azure Confidential Containers integrates deeply into Microsoft cloud ecosystems.

- Azure Kubernetes Service

- Azure AI services

- Cloud storage

- Monitoring tools

- APIs

- Security services

Pricing Model

Usage-based cloud pricing. Exact pricing varies.

Best-Fit Scenarios

- Secure containerized AI inference

- Confidential cloud-native AI

- Enterprise Kubernetes AI

4- Google Cloud Confidential Space

One-line verdict: Best for collaborative confidential AI processing across cloud-native environments.

Short description:

Google Cloud Confidential Space enables secure collaborative computation using hardware-backed trusted execution environments for AI and sensitive enterprise workloads.

Standout Capabilities

- Confidential collaborative processing

- Trusted execution environments

- Secure cloud orchestration

- Runtime workload isolation

- Confidential APIs

- Cloud-native integration

- Secure data sharing

- Workload attestation

AI-Specific Depth

- Model support: Multi-model cloud AI support

- RAG / knowledge integration: Google cloud AI ecosystem

- Evaluation: Secure workload attestation

- Guardrails: Runtime workload isolation

- Observability: Cloud-native monitoring support

Pros

- Strong cloud-native architecture

- Good for collaborative AI workloads

- Flexible cloud deployment

Cons

- Best for Google Cloud users

- Multi-cloud governance may require extra tooling

- Enterprise setup can be technical

Security & Compliance

Supports encryption, attestation, runtime isolation, and enterprise cloud security features.

Deployment & Platforms

- Cloud deployment

- Kubernetes compatibility

- Cloud-native orchestration

Integrations & Ecosystem

Google integrates Confidential Space into its cloud and AI infrastructure ecosystem.

- Google Kubernetes Engine

- Cloud AI services

- APIs

- Monitoring tools

- Cloud storage

- Container services

Pricing Model

Cloud consumption pricing model.

Best-Fit Scenarios

- Collaborative confidential AI

- Secure cloud inference

- Multi-party AI processing

5- Fortanix Confidential AI

One-line verdict: Best for centralized governance and management of confidential AI inference workloads.

Short description:

Fortanix provides confidential computing orchestration, secure enclave management, and runtime protection for AI applications and enterprise inference environments.

Standout Capabilities

- Confidential workload orchestration

- Secure enclave management

- Runtime AI protection

- Multi-cloud governance

- Secure inference deployment

- Attestation management

- Enterprise policy controls

- Centralized monitoring

AI-Specific Depth

- Model support: Enterprise AI and BYO models

- RAG / knowledge integration: Varies by deployment

- Evaluation: Workload validation workflows

- Guardrails: Runtime policy controls

- Observability: Centralized workload visibility

Pros

- Strong centralized management

- Useful multi-cloud support

- Good enterprise governance capabilities

Cons

- Enterprise-focused complexity

- Requires enclave-compatible infrastructure

- Technical deployment process

Security & Compliance

Supports RBAC, encryption, attestation, audit logging, and enterprise governance controls.

Deployment & Platforms

- Hybrid deployment

- Kubernetes compatibility

- Linux environments

Integrations & Ecosystem

Fortanix integrates into enterprise confidential computing and AI ecosystems.

- Cloud providers

- Kubernetes

- Security tools

- APIs

- Container platforms

- Governance systems

Pricing Model

Enterprise subscription pricing. Exact pricing is not publicly stated.

Best-Fit Scenarios

- Centralized confidential AI governance

- Multi-cloud secure inference

- Enterprise enclave management

6- Anjuna

One-line verdict: Best for cloud-native confidential inference with minimal application changes.

Short description:

Anjuna helps organizations secure AI workloads and applications using hardware-backed runtime isolation and confidential computing technologies.

Standout Capabilities

- Secure AI runtime isolation

- Confidential application execution

- Minimal code changes

- Hardware-backed protection

- Cloud-native deployment

- Secure workload portability

- Confidential orchestration

- Enterprise security support

AI-Specific Depth

- Model support: Enterprise AI inference workloads

- RAG / knowledge integration: Varies / N/A

- Evaluation: Secure workload verification

- Guardrails: Runtime isolation controls

- Observability: Workload telemetry visibility

Pros

- Easier workload migration

- Strong cloud-native security

- Useful enterprise protections

Cons

- Smaller ecosystem than hyperscalers

- Advanced configurations require expertise

- AI-native tooling still evolving

Security & Compliance

Supports workload isolation, encryption, attestation, and runtime governance features.

Deployment & Platforms

- Cloud deployment

- Hybrid support

- Kubernetes environments

Integrations & Ecosystem

Anjuna integrates with cloud-native confidential computing ecosystems.

- Kubernetes

- Containers

- APIs

- Cloud providers

- Enterprise applications

- Security tooling

Pricing Model

Enterprise subscription pricing.

Best-Fit Scenarios

- Cloud-native secure inference

- Confidential AI applications

- Enterprise runtime isolation

7- Edgeless Systems Constellation

One-line verdict: Best for open-source confidential Kubernetes AI inference environments.

Short description:

Edgeless Systems Constellation provides confidential Kubernetes infrastructure for secure AI inference and cloud-native workloads.

Standout Capabilities

- Confidential Kubernetes

- Secure container orchestration

- Trusted execution environments

- Open-source architecture

- Secure cloud-native AI

- Workload attestation

- Confidential containers

- Infrastructure isolation

AI-Specific Depth

- Model support: Open-source and enterprise AI models

- RAG / knowledge integration: Kubernetes-based support

- Evaluation: Infrastructure validation workflows

- Guardrails: Confidential runtime isolation

- Observability: Kubernetes telemetry integration

Pros

- Strong open-source flexibility

- Useful for Kubernetes-heavy environments

- Good cloud-native deployment support

Cons

- Requires infrastructure expertise

- Smaller commercial ecosystem

- Enterprise support varies

Security & Compliance

Supports secure enclaves, attestation, workload isolation, and confidential container capabilities.

Deployment & Platforms

- Linux support

- Kubernetes environments

- Cloud-native deployment

Integrations & Ecosystem

Edgeless Systems integrates into cloud-native and open-source ecosystems.

- Kubernetes

- Containers

- APIs

- Cloud platforms

- Infrastructure tooling

- Open-source environments

Pricing Model

Open-source and enterprise support models.

Best-Fit Scenarios

- Open-source confidential AI

- Secure Kubernetes inference

- Cloud-native secure AI

8- IBM Hyper Protect Confidential Computing

One-line verdict: Best for highly regulated enterprise AI inference workloads and secure cloud processing.

Short description:

IBM Hyper Protect Confidential Computing provides encrypted runtime environments and confidential cloud services designed for enterprise and regulated AI deployments.

Standout Capabilities

- Secure enclaves

- Hardware-backed isolation

- Runtime encryption

- Compliance-focused architecture

- Confidential cloud services

- Enterprise governance

- Secure workload hosting

- Trusted execution environments

AI-Specific Depth

- Model support: Enterprise AI workload support

- RAG / knowledge integration: Varies / N/A

- Evaluation: Secure workload validation

- Guardrails: Hardware-backed runtime protection

- Observability: Enterprise monitoring integrations

Pros

- Strong compliance positioning

- Useful for regulated industries

- Enterprise governance alignment

Cons

- Enterprise-scale complexity

- Advanced infrastructure setup

- AI ecosystem flexibility varies

Security & Compliance

Supports encryption, attestation, secure execution, governance controls, and enterprise security workflows.

Deployment & Platforms

- Hybrid deployment

- Enterprise cloud environments

- Linux support

Integrations & Ecosystem

IBM integrates confidential services into enterprise cloud and governance ecosystems.

- Security systems

- Cloud infrastructure

- APIs

- Monitoring platforms

- Governance tools

- Hybrid cloud systems

Pricing Model

Enterprise pricing model. Exact pricing is not publicly stated.

Best-Fit Scenarios

- Regulated AI inference

- Confidential enterprise AI

- Secure government workloads

9- AMD SEV

One-line verdict: Best for secure virtualized AI inference workloads in AMD-based environments.

Short description:

AMD Secure Encrypted Virtualization protects AI inference workloads using encrypted virtual machine memory and hardware-backed workload isolation.

Standout Capabilities

- Secure encrypted virtualization

- Memory encryption

- Trusted execution support

- Runtime isolation

- Secure virtual machines

- Cloud infrastructure compatibility

- Hardware-backed workload security

- Enterprise virtualization support

AI-Specific Depth

- Model support: Infrastructure-level AI support

- RAG / knowledge integration: Varies / N/A

- Evaluation: Hardware-backed validation

- Guardrails: Secure VM isolation

- Observability: Infrastructure telemetry visibility

Pros

- Strong virtualization security

- Broad cloud provider compatibility

- Useful hybrid infrastructure support

Cons

- Requires compatible hardware

- AI-native tooling depends on integrations

- Performance overhead varies

Security & Compliance

Supports encrypted virtualization, workload isolation, and secure runtime execution.

Deployment & Platforms

- Linux support

- Cloud deployment

- Hybrid enterprise infrastructure

Integrations & Ecosystem

AMD SEV integrates into virtualization and cloud infrastructure ecosystems.

- Hypervisors

- Kubernetes

- Cloud platforms

- APIs

- Infrastructure management tools

- Enterprise servers

Pricing Model

Infrastructure ecosystem pricing model.

Best-Fit Scenarios

- Secure virtualized AI inference

- Hybrid AI infrastructure

- Confidential cloud workloads

10- Enclaive

One-line verdict: Best for confidential containerized AI inference and privacy-focused cloud-native deployments.

Short description:

Enclaive provides secure confidential container technologies designed to protect cloud-native AI workloads using trusted execution environments and encrypted runtime processing.

Standout Capabilities

- Confidential containers

- Trusted execution environments

- Runtime encryption

- Secure workload portability

- Cloud-native deployment

- Privacy-focused architecture

- Secure AI container execution

- Enterprise deployment flexibility

AI-Specific Depth

- Model support: Containerized AI workloads

- RAG / knowledge integration: Varies / N/A

- Evaluation: Runtime integrity validation

- Guardrails: Confidential container isolation

- Observability: Infrastructure monitoring support

Pros

- Strong container-focused security

- Flexible cloud-native deployment

- Useful workload portability

Cons

- Smaller ecosystem

- Enterprise adoption still growing

- Advanced deployment expertise required

Security & Compliance

Supports trusted execution environments, encrypted runtime processing, and secure workload isolation.

Deployment & Platforms

- Linux support

- Containerized deployment

- Hybrid cloud environments

Integrations & Ecosystem

Enclaive integrates into cloud-native confidential container ecosystems.

- Kubernetes

- Containers

- Cloud platforms

- APIs

- Infrastructure management tools

- Runtime orchestration systems

Pricing Model

Enterprise and infrastructure-based pricing.

Best-Fit Scenarios

- Confidential AI containers

- Privacy-sensitive inference

- Cloud-native secure AI

Comparison Table

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| NVIDIA Confidential Computing | GPU AI inference | Hybrid | Multi-model | GPU-level protection | Hardware dependency | N/A |
| Intel SGX | CPU secure inference | Hybrid | Infrastructure-level | Trusted enclaves | Limited GPU support | N/A |
| Azure Confidential Containers | Enterprise cloud AI | Cloud/Hybrid | Hosted and BYO | Kubernetes integration | Azure dependency | N/A |
| Google Cloud Confidential Space | Collaborative AI | Cloud | Multi-model | Secure collaboration | Google Cloud focus | N/A |
| Fortanix Confidential AI | Governance and orchestration | Hybrid | BYO support | Centralized management | Enterprise complexity | N/A |
| Anjuna | Cloud-native secure AI | Hybrid | Enterprise AI | Minimal code changes | Smaller ecosystem | N/A |
| Edgeless Systems Constellation | Open-source secure AI | Cloud-native | Open-source support | Confidential Kubernetes | Requires expertise | N/A |
| IBM Hyper Protect | Regulated AI workloads | Hybrid | Enterprise AI | Compliance alignment | Complex deployment | N/A |
| AMD SEV | Secure virtualization | Hybrid | Infrastructure-level | VM memory encryption | Hardware dependency | N/A |
| Enclaive | Confidential containers | Hybrid | Containerized AI | Runtime isolation | Smaller ecosystem | N/A |

Scoring & Evaluation

The scoring below compares secure enclave inference platforms across confidential computing capabilities, AI inference protection, deployment flexibility, ecosystem maturity, operational usability, and enterprise readiness. Organizations should prioritize workload sensitivity, infrastructure compatibility, AI scale, and governance requirements when evaluating platforms.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| NVIDIA Confidential Computing | 10 | 9 | 9 | 9 | 7 | 8 | 10 | 9 | 9.0 |
| Intel SGX | 8 | 8 | 9 | 8 | 6 | 7 | 10 | 8 | 8.0 |
| Azure Confidential Containers | 9 | 8 | 8 | 9 | 8 | 7 | 10 | 9 | 8.5 |
| Google Cloud Confidential Space | 9 | 8 | 8 | 9 | 8 | 7 | 9 | 8 | 8.3 |
| Fortanix Confidential AI | 9 | 8 | 8 | 8 | 7 | 7 | 9 | 8 | 8.1 |
| Anjuna | 8 | 7 | 8 | 8 | 7 | 7 | 8 | 7 | 7.7 |
| Edgeless Systems Constellation | 8 | 7 | 8 | 8 | 6 | 8 | 8 | 7 | 7.6 |
| IBM Hyper Protect | 8 | 8 | 9 | 7 | 6 | 7 | 10 | 8 | 7.9 |
| AMD SEV | 8 | 8 | 8 | 8 | 7 | 7 | 9 | 8 | 7.9 |
| Enclaive | 7 | 7 | 8 | 7 | 7 | 7 | 8 | 7 | 7.3 |
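Because priorities differ between organizations, it can help to re-rank the platforms with your own weights. The sketch below copies the per-criterion scores from the scoring table and applies a hypothetical weighting. The weights behind the published "Weighted Total" column are not stated, so the `WEIGHTS` values are an assumption and the results are not expected to match that column.

```python
from typing import Dict, List, Tuple

CRITERIA: List[str] = [
    "Core", "Reliability/Eval", "Guardrails", "Integrations",
    "Ease", "Perf/Cost", "Security/Admin", "Support",
]

# Hypothetical weights chosen for illustration only; they must sum to 1.0.
WEIGHTS: Dict[str, float] = {
    "Core": 0.20, "Reliability/Eval": 0.10, "Guardrails": 0.10,
    "Integrations": 0.15, "Ease": 0.10, "Perf/Cost": 0.15,
    "Security/Admin": 0.15, "Support": 0.05,
}

# Per-criterion scores copied from the scoring table, in CRITERIA order.
SCORES: Dict[str, List[int]] = {
    "NVIDIA Confidential Computing": [10, 9, 9, 9, 7, 8, 10, 9],
    "Intel SGX": [8, 8, 9, 8, 6, 7, 10, 8],
    "Azure Confidential Containers": [9, 8, 8, 9, 8, 7, 10, 9],
    "Google Cloud Confidential Space": [9, 8, 8, 9, 8, 7, 9, 8],
    "Fortanix Confidential AI": [9, 8, 8, 8, 7, 7, 9, 8],
    "Anjuna": [8, 7, 8, 8, 7, 7, 8, 7],
    "Edgeless Systems Constellation": [8, 7, 8, 8, 6, 8, 8, 7],
    "IBM Hyper Protect": [8, 8, 9, 7, 6, 7, 10, 8],
    "AMD SEV": [8, 8, 8, 8, 7, 7, 9, 8],
    "Enclaive": [7, 7, 8, 7, 7, 7, 8, 7],
}


def weighted_total(row: List[int], weights: Dict[str, float]) -> float:
    """Weighted sum of one platform's scores."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    return sum(weights[name] * score for name, score in zip(CRITERIA, row))


def rank(scores: Dict[str, List[int]], weights: Dict[str, float]) -> List[Tuple[str, float]]:
    """Platforms sorted from highest to lowest weighted total."""
    totals = {name: round(weighted_total(row, weights), 2) for name, row in scores.items()}
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)
```

A team that cares mostly about cost and ease of use could raise the `Perf/Cost` and `Ease` weights, lower the others so the sum stays at 1.0, and call `rank(SCORES, WEIGHTS)` again to see how the ordering shifts.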

Top 3 for Enterprise

- NVIDIA Confidential Computing

- Azure Confidential Containers

- Fortanix Confidential AI

Top 3 for SMB

- Anjuna

- Edgeless Systems Constellation

- Enclaive

Top 3 for Developers

- NVIDIA Confidential Computing

- Edgeless Systems Constellation

- Google Cloud Confidential Space

Which Secure Enclave Inference Platform Is Right for You

Solo / Freelancer

Most solo developers only need lightweight confidential cloud services unless they handle highly sensitive AI inference workloads. Managed confidential VM services may be sufficient.

SMB

SMBs should prioritize ease of deployment, cloud-native integrations, and lower operational complexity. Anjuna and Edgeless Systems are practical starting points for secure AI inference.

Mid-Market

Mid-market organizations should focus on Kubernetes compatibility, governance visibility, and scalable confidential infrastructure. Fortanix and Azure Confidential Containers are strong options.

Enterprise

Large enterprises should prioritize GPU confidentiality, attestation, governance, auditability, and hybrid deployment flexibility. NVIDIA, Azure, and IBM are strong enterprise choices.

Regulated Industries

Healthcare, finance, legal, insurance, defense, and government organizations should prioritize runtime isolation, attestation, encryption during computation, and governance controls.

Budget vs Premium

Budget-focused teams may prefer open-source confidential Kubernetes solutions and managed confidential cloud services. Premium buyers often require GPU isolation, centralized governance, and advanced orchestration.

Build vs Buy

Organizations with strong infrastructure engineering teams can build confidential AI environments internally, but commercial platforms typically offer a faster path to governance, orchestration, and enterprise support.

Implementation Playbook 30 / 60 / 90 Days

First 30 Days

- Identify sensitive AI inference workloads.

- Map data flows and runtime exposure risks.

- Select pilot inference environments.

- Benchmark workload performance.

- Enable attestation and monitoring.

- Validate framework compatibility.

- Define security success metrics.

- Review infrastructure readiness.

First 60 Days

- Expand confidential inference coverage.

- Integrate Kubernetes orchestration.

- Add governance and audit workflows.

- Test failover and scaling strategies.

- Optimize workload isolation policies.

- Review latency overhead.

- Train infrastructure and AI teams.

- Expand observability coverage.

First 90 Days

- Scale secure enclave inference into production.

- Standardize deployment templates.

- Optimize cost and runtime performance.

- Expand confidential RAG architectures.

- Conduct red-team testing.

- Improve governance reporting.

- Review AI workload inventory.

- Establish long-term confidential AI operations.

Common Mistakes and How to Avoid Them

- Assuming encryption at rest fully protects AI inference.

- Ignoring runtime memory exposure.

- Not validating enclave compatibility early.

- Underestimating performance overhead.

- Failing to test GPU confidential inference support.

- Ignoring Kubernetes orchestration needs.

- Deploying confidential AI without observability.

- Forgetting AI workload attestation.

- Not securing vector retrieval systems.

- Using unsupported infrastructure environments.

- Ignoring multi-cloud governance complexity.

- Relying only on perimeter security controls.

- Not planning for scaling secure inference workloads.

- Failing to train infrastructure teams on enclave operations.
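Validating enclave compatibility early can start with a simple host check. The sketch below is one rough way to do it on Linux: it parses the `flags` line of `/proc/cpuinfo` and looks for the CPU feature flags `sgx`, `sev`, and `sev_es`. The flag names are an assumption about how recent Linux kernels report these features, and the helper names are hypothetical. A present flag is only a hint. Firmware settings, kernel configuration, and cloud instance type still decide whether a workload can actually run in a protected environment.

```python
from pathlib import Path
from typing import Dict, Set

# Assumed /proc/cpuinfo flag names mapped to the technology they hint at.
TEE_FLAGS: Dict[str, str] = {
    "sgx": "Intel SGX",
    "sev": "AMD SEV",
    "sev_es": "AMD SEV-ES",
}


def parse_cpu_flags(cpuinfo_text: str) -> Set[str]:
    """Return the feature flags from the first 'flags' line, if any."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            return set(value.split())
    return set()


def detect_tee_support(cpuinfo_text: str) -> Dict[str, bool]:
    """Map each technology name to whether its flag is present."""
    flags = parse_cpu_flags(cpuinfo_text)
    return {label: flag in flags for flag, label in TEE_FLAGS.items()}


def read_cpuinfo(path: str = "/proc/cpuinfo") -> str:
    """Read cpuinfo text, or return an empty string on non-Linux hosts."""
    cpuinfo = Path(path)
    return cpuinfo.read_text() if cpuinfo.exists() else ""
```

Running `detect_tee_support(read_cpuinfo())` on a candidate host gives a quick first signal before committing to a pilot. Treat it as a prompt for a proper vendor-supported compatibility check, not a replacement for one.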

FAQs

1. What is a secure enclave inference platform?

A secure enclave inference platform protects AI inference workloads inside isolated trusted execution environments where data and computations remain protected during runtime.

2. Why are secure enclaves important for AI?

Secure enclaves help reduce risks from runtime attacks, insider threats, cloud infrastructure compromise, and unauthorized access to sensitive AI workloads.

3. Can secure enclaves protect AI models?

Yes. Secure enclaves can help protect both the model (its weights and logic) and sensitive data during inference execution.

4. Do these platforms support GPUs?

Some platforms support confidential GPU inference, while others focus primarily on CPU-based secure execution environments.

5. What is attestation in confidential computing?

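Attestation verifies that a workload is running in a genuine, trusted execution environment before sensitive data is processed. Typically the hardware produces signed evidence about the environment and the loaded code, and a verifier checks that evidence before releasing data or keys.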

6. Are secure enclave platforms cloud-only?

No. Many platforms support hybrid and enterprise infrastructure deployments in addition to cloud environments.

7. Can these platforms secure RAG pipelines?

Yes. Some confidential computing environments can help protect retrieval workflows, vector databases, and sensitive enterprise knowledge.

8. Is there performance overhead?

Yes. The amount depends on workload type, infrastructure, enclave technology, and AI framework compatibility.

9. Are these platforms only for enterprises?

No, but enterprises and regulated industries benefit most because they handle larger volumes of sensitive data and AI workloads.

10. Can secure enclaves stop insider threats?

They help reduce insider exposure risks by isolating workloads and encrypting sensitive runtime memory regions.

11. Do developers need to rewrite applications?

Some platforms require enclave-aware development, while others support minimal code changes depending on architecture.

12. What should buyers evaluate first?

Organizations should first evaluate workload sensitivity, infrastructure compatibility, GPU requirements, performance impact, and deployment flexibility.
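The attestation idea from FAQ 5 can be illustrated with a small policy check that releases a data key only to a workload whose reported measurement is on an allowlist. This is a simplified sketch under stated assumptions: `AttestationReport` is a hypothetical structure, and the code assumes the hardware vendor's signature on the evidence has already been verified elsewhere. Real platforms define their own evidence formats and verification services.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class AttestationReport:
    """Hypothetical attestation evidence, assumed to be signature-verified already."""
    measurement: str     # hex digest of the launched workload image
    nonce: str           # freshness value chosen by the verifier
    debug_enabled: bool  # debug mode usually weakens isolation guarantees


def measure(image_bytes: bytes) -> str:
    """Stand-in for a hardware launch measurement: SHA-256 of the image."""
    return hashlib.sha256(image_bytes).hexdigest()


def is_trusted(report: AttestationReport, expected_nonce: str,
               allowed_measurements: FrozenSet[str]) -> bool:
    """Accept only fresh, non-debug reports with an allowlisted measurement."""
    if report.debug_enabled:
        return False
    if not hmac.compare_digest(report.nonce, expected_nonce):
        return False  # stale or replayed evidence
    return any(hmac.compare_digest(report.measurement, allowed)
               for allowed in allowed_measurements)


def release_key(report: AttestationReport, expected_nonce: str,
                allowed_measurements: FrozenSet[str],
                data_key: bytes) -> Optional[bytes]:
    """Return the data key only when the report passes the policy."""
    if is_trusted(report, expected_nonce, allowed_measurements):
        return data_key
    return None
```

The design point is that secrets such as model decryption keys or customer data keys are withheld by default and handed over only after the environment proves what it is running.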

Conclusion

Secure Enclave Inference Platforms are becoming a critical layer of enterprise AI security. As organizations deploy larger AI models, customer-facing AI applications, confidential RAG systems, and AI agents, protecting runtime inference environments is now essential for privacy, governance, and compliance. Traditional encryption and access controls alone cannot fully protect sensitive AI workloads during active computation.

The best platform depends on AI scale, infrastructure strategy, cloud alignment, GPU requirements, and compliance needs. NVIDIA leads for GPU-heavy confidential AI inference, Azure and Google provide strong confidential cloud ecosystems, and Fortanix, Anjuna, and Edgeless Systems offer flexible orchestration and cloud-native security approaches.
