Introduction

Retrieval-Augmented Generation systems are becoming a core part of enterprise AI infrastructure, but building a successful RAG application is no longer only about connecting a language model to a vector database. Organizations now need reliable methods to evaluate retrieval quality, grounding accuracy, hallucination rates, answer relevance, latency, and overall user experience. Without proper benchmarking and evaluation, even advanced AI systems can generate misleading, inaccurate, or low-quality responses.

RAG evaluation and benchmarking tools help AI teams systematically measure the performance of retrieval pipelines, reranking systems, chunking strategies, embeddings, prompts, and generated outputs. These platforms provide automated testing, synthetic dataset generation, observability, experimentation frameworks, and production monitoring for enterprise AI applications.

Why It Matters

- Improves answer quality and grounding accuracy

- Reduces hallucinations in AI systems

- Validates retrieval performance

- Helps optimize prompts and chunking

- Measures enterprise AI reliability

- Enables scalable AI governance

Real-World Use Cases

- Enterprise AI copilots

- Customer support automation

- AI-powered internal search

- Legal document assistants

- Healthcare knowledge retrieval

- AI research assistants

- Financial compliance automation

- Developer documentation search

Evaluation Criteria for Buyers

When selecting a RAG evaluation platform, buyers should focus on:

- Retrieval benchmarking quality

- Hallucination detection

- Automated evaluation workflows

- Synthetic test dataset generation

- Observability and monitoring

- Multi-model evaluation support

- Scalability and enterprise readiness

- Integration flexibility

- Human feedback workflows

- Production monitoring capabilities

Best For

Organizations deploying production-grade RAG systems that require measurable reliability, retrieval optimization, and AI quality governance.

Not Ideal For

Small experimental AI projects that do not yet require automated evaluation pipelines or enterprise-scale observability.

What’s Changing in RAG Evaluation & Benchmarking

- Automated evaluation pipelines are replacing manual testing

- Hallucination monitoring is becoming a core AI metric

- Synthetic dataset generation is accelerating benchmarking

- AI observability platforms now include retrieval analytics

- Multi-model evaluation frameworks are growing rapidly

- Continuous evaluation is replacing static benchmarking

- Context relevance scoring is becoming standardized

- Agent evaluation capabilities are expanding

- Human-in-the-loop feedback systems are seeing wider adoption

- Real-time AI quality monitoring is becoming essential

Quick Buyer Checklist

Before choosing a RAG evaluation platform, confirm:

- Retrieval evaluation support

- Hallucination detection capabilities

- Synthetic dataset generation

- AI observability features

- Benchmarking automation

- Integration compatibility

- Experiment tracking

- Multi-model testing support

- Enterprise scalability

- Security and governance controls

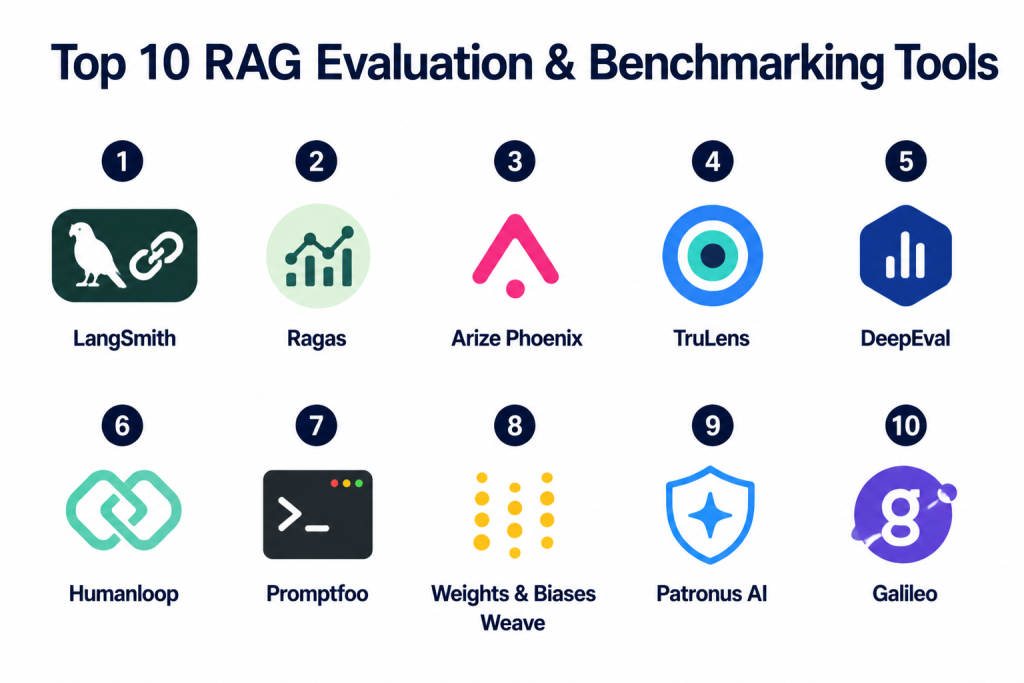

Top 10 RAG Evaluation & Benchmarking Tools

1. LangSmith

2. Ragas

3. Arize Phoenix

4. TruLens

5. DeepEval

6. Humanloop

7. Promptfoo

8. Weights & Biases Weave

9. Patronus AI

10. Galileo

1. LangSmith

One-line Verdict

Best for end-to-end RAG tracing, debugging, and evaluation workflows.

Short Description

LangSmith is a comprehensive AI observability and evaluation platform developed for monitoring, testing, and optimizing large language model applications. It provides deep visibility into retrieval pipelines, prompts, chains, agents, and generation quality within modern RAG systems.

The platform is heavily adopted by teams building production AI applications that require debugging, benchmarking, and continuous evaluation across complex workflows.

Standout Capabilities

- End-to-end tracing

- Prompt evaluation

- Retrieval debugging

- Experiment tracking

- Dataset management

- Human feedback workflows

- AI observability

- Performance analytics

AI-Specific Depth

LangSmith enables developers to evaluate retrieval quality, compare prompts, benchmark model outputs, and trace failures across multi-step RAG pipelines.

Pros

- Strong observability features

- Excellent debugging workflows

- Tight LangChain integration

Cons

- Works best within the LangChain ecosystem

- Large enterprise deployments may need additional configuration

- Pricing can scale with usage

Security & Compliance

Enterprise deployment options available.

Deployment & Platforms

- Cloud

- Enterprise deployment

Integrations & Ecosystem

LangSmith integrates deeply with AI orchestration and retrieval ecosystems.

- LangChain

- OpenAI

- Anthropic

- Vector databases

- RAG frameworks

- AI monitoring tools

Pricing Model

Usage-based SaaS pricing.

Best-Fit Scenarios

- Production RAG systems

- AI observability

- Prompt optimization

2. Ragas

One-line Verdict

Best open-source framework for automated RAG evaluation metrics.

Short Description

Ragas is one of the most popular open-source frameworks for evaluating Retrieval-Augmented Generation systems using automated metrics. It helps teams measure answer relevance, context precision, faithfulness, and retrieval quality without extensive manual review.

The framework is widely used for benchmarking RAG pipelines during experimentation and production optimization.

Standout Capabilities

- Automated RAG metrics

- Faithfulness evaluation

- Context precision scoring

- Retrieval benchmarking

- Synthetic test generation

- Open-source flexibility

- Multi-model compatibility

- Lightweight integration

AI-Specific Depth

Ragas focuses heavily on grounding quality and retrieval accuracy, helping organizations benchmark how well generated answers align with retrieved context.
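
To make "context precision" concrete, here is a minimal sketch of one common rank-weighted formulation: the mean of precision@k taken at every rank that holds a relevant chunk. It is plain Python, not the Ragas API. The function name `context_precision` and the hand-written relevance labels are assumptions for illustration; in practice the labels typically come from an LLM judge or human annotation.

```python
from typing import Sequence


def context_precision(relevance: Sequence[bool]) -> float:
    """Mean of precision@k over each rank k that holds a relevant chunk.

    `relevance[i]` says whether the chunk retrieved at rank i + 1 was
    judged useful for answering the question.
    """
    hits = 0
    total = 0.0
    for k, is_relevant in enumerate(relevance, start=1):
        if is_relevant:
            hits += 1
            total += hits / k  # precision@k at this relevant rank
    return total / hits if hits else 0.0


# Hypothetical labels: relevant chunks at ranks 1 and 3, noise at rank 2.
ranked_labels = [True, False, True]
score = context_precision(ranked_labels)
```

The score rewards retrievers that place useful chunks early: moving the rank-3 chunk up to rank 2 would raise it to 1.0.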

Pros

- Strong evaluation metrics

- Open-source flexibility

- Lightweight implementation

Cons

- Requires engineering integration

- Limited enterprise UI tooling

- Advanced workflows may require customization

Security & Compliance

Depends on deployment architecture.

Deployment & Platforms

- Self-hosted

- Python environments

- Cloud infrastructure

Integrations & Ecosystem

- LangChain

- LlamaIndex

- OpenAI

- Hugging Face

- Custom RAG stacks

Pricing Model

Open-source.

Best-Fit Scenarios

- Retrieval benchmarking

- Experimental RAG systems

- AI evaluation pipelines

3. Arize Phoenix

One-line Verdict

Best for enterprise AI observability and RAG performance monitoring.

Short Description

Arize Phoenix provides observability, evaluation, and monitoring capabilities for modern AI systems. The platform focuses on retrieval analytics, hallucination detection, embedding drift monitoring, and production AI quality management.

Organizations use Phoenix to gain visibility into large-scale RAG pipelines and continuously improve AI reliability.

Standout Capabilities

- AI observability

- Embedding monitoring

- Hallucination detection

- Retrieval analytics

- Experiment comparison

- Production monitoring

- Drift detection

- Evaluation dashboards

AI-Specific Depth

Phoenix provides detailed visibility into retrieval behavior, response quality, and embedding performance within production RAG environments.

Pros

- Strong enterprise observability

- Excellent analytics dashboards

- Retrieval-focused monitoring

Cons

- Enterprise complexity

- Advanced setup requirements

- Infrastructure considerations

Security & Compliance

Enterprise-grade governance controls supported.

Deployment & Platforms

- Cloud

- Self-hosted

- Hybrid deployment

Integrations & Ecosystem

- OpenAI

- LangChain

- Vector databases

- AI observability ecosystems

- ML monitoring platforms

Pricing Model

Open-source core (Phoenix); Arize's commercial platform is sold separately under enterprise subscription pricing.

Best-Fit Scenarios

- Enterprise AI monitoring

- Large-scale RAG systems

- Production observability

4. TruLens

One-line Verdict

Best for explainable evaluation and feedback scoring in RAG systems.

Short Description

TruLens is an open-source evaluation framework focused on transparency, explainability, and feedback-driven benchmarking for language model applications. It enables developers to evaluate groundedness, answer relevance, and retrieval quality using customizable feedback functions.

The platform is popular among teams prioritizing explainable AI evaluation.

Standout Capabilities

- Explainable evaluation

- Feedback functions

- Groundedness scoring

- Retrieval quality metrics

- Hallucination analysis

- Open-source architecture

- Transparent evaluation workflows

- Multi-model support

AI-Specific Depth

TruLens helps teams understand why responses fail by providing interpretable evaluation signals across retrieval and generation pipelines.

Pros

- Strong explainability

- Flexible feedback workflows

- Open-source ecosystem

Cons

- Requires technical expertise

- UI capabilities still evolving

- Advanced scaling may require engineering effort

Security & Compliance

Varies by deployment model.

Deployment & Platforms

- Self-hosted

- Python environments

- Cloud infrastructure

Integrations & Ecosystem

- LangChain

- OpenAI

- Hugging Face

- LlamaIndex

- Custom AI stacks

Pricing Model

Open-source.

Best-Fit Scenarios

- Explainable AI evaluation

- Retrieval quality analysis

- Experimental benchmarking

5. DeepEval

One-line Verdict

Best for lightweight automated AI testing and benchmarking workflows.

Short Description

DeepEval is a testing and evaluation framework built specifically for large language model applications. It allows teams to automate RAG testing, benchmark prompts, validate outputs, and continuously evaluate AI systems.

The framework emphasizes developer productivity and streamlined AI testing workflows.

Standout Capabilities

- Automated AI testing

- Benchmark pipelines

- Prompt evaluation

- Retrieval testing

- CI/CD integration

- Lightweight setup

- Multi-model support

- Regression testing

AI-Specific Depth

DeepEval enables automated validation of retrieval quality and generated outputs across evolving RAG systems.

Pros

- Easy to implement

- Strong developer workflows

- Lightweight architecture

Cons

- Smaller enterprise ecosystem

- Limited advanced observability

- Customization may be required

Security & Compliance

Depends on deployment architecture.

Deployment & Platforms

- Self-hosted

- Cloud infrastructure

- Developer environments

Integrations & Ecosystem

- OpenAI

- LangChain

- CI/CD pipelines

- AI testing frameworks

Pricing Model

Open-source with enterprise options.

Best-Fit Scenarios

- AI regression testing

- Continuous benchmarking

- Developer evaluation workflows

6. Humanloop

One-line Verdict

Best for human feedback and collaborative AI evaluation workflows.

Short Description

Humanloop focuses on evaluation workflows that combine automated AI benchmarking with human review and collaborative feedback systems. It enables organizations to align RAG quality evaluation with real-world business expectations.

The platform is commonly used for enterprise AI governance and iterative model improvement.

Standout Capabilities

- Human feedback loops

- Prompt evaluation

- AI experimentation

- Collaborative review

- Dataset management

- Model comparison

- AI governance workflows

- Continuous optimization

AI-Specific Depth

Humanloop helps organizations combine automated retrieval evaluation with expert review for grounded and trustworthy RAG outputs.

Pros

- Strong human evaluation workflows

- Collaborative interface

- Enterprise governance support

Cons

- Premium enterprise focus

- Advanced workflows may require onboarding

- Smaller open-source ecosystem

Security & Compliance

Enterprise governance and security controls supported.

Deployment & Platforms

- Cloud

- Enterprise deployment

Integrations & Ecosystem

- OpenAI

- LangChain

- AI orchestration frameworks

- Workflow systems

Pricing Model

Enterprise SaaS pricing.

Best-Fit Scenarios

- Human-in-the-loop evaluation

- Enterprise AI governance

- Prompt optimization

7. Promptfoo

One-line Verdict

Best for prompt benchmarking and automated AI test suites.

Short Description

Promptfoo is a developer-focused evaluation framework designed for testing prompts, comparing models, and benchmarking AI application outputs. It supports automated testing pipelines for RAG systems and AI assistants.

Its simplicity and automation capabilities make it attractive for developer teams.

Standout Capabilities

- Prompt testing

- Model comparison

- Regression evaluation

- AI benchmarking

- Automated test suites

- Lightweight setup

- CI/CD compatibility

- Open-source workflows

AI-Specific Depth

Promptfoo helps developers validate prompt performance and retrieval behavior across changing AI models and datasets.

Pros

- Developer-friendly

- Fast implementation

- Strong testing automation

Cons

- Limited enterprise analytics

- Smaller observability feature set

- UI tooling limited

Security & Compliance

Depends on deployment architecture.

Deployment & Platforms

- Self-hosted

- Developer environments

- CI/CD systems

Integrations & Ecosystem

- OpenAI

- Anthropic

- LangChain

- GitHub Actions

Pricing Model

Open-source with enterprise capabilities.

Best-Fit Scenarios

- Prompt benchmarking

- AI regression testing

- Lightweight evaluation workflows

8. Weights & Biases Weave

One-line Verdict

Best for ML-native AI experimentation and evaluation tracking.

Short Description

Weights & Biases Weave extends experiment tracking into modern AI applications, enabling developers to monitor prompts, retrieval pipelines, model outputs, and evaluation workflows.

The platform is especially valuable for AI engineering teams already using ML experimentation infrastructure.

Standout Capabilities

- Experiment tracking

- Prompt analytics

- Retrieval evaluation

- AI workflow monitoring

- Model comparison

- Visualization dashboards

- Dataset management

- Collaboration tools

AI-Specific Depth

Weave helps AI teams track RAG experiments, retrieval behavior, and generation quality across iterative optimization cycles.

Pros

- Strong ML ecosystem

- Excellent experiment tracking

- Powerful visualization capabilities

Cons

- ML-oriented learning curve

- Advanced setup requirements

- Enterprise pricing considerations

Security & Compliance

Enterprise governance features supported.

Deployment & Platforms

- Cloud

- Enterprise deployment

Integrations & Ecosystem

- PyTorch

- TensorFlow

- OpenAI

- LangChain

- ML pipelines

Pricing Model

Usage-based enterprise pricing.

Best-Fit Scenarios

- AI experimentation

- RAG optimization

- ML engineering workflows

9. Patronus AI

One-line Verdict

Best for hallucination detection and AI safety benchmarking.

Short Description

Patronus AI specializes in evaluating trustworthiness, hallucination risk, and response reliability in AI systems. It focuses heavily on enterprise AI governance and safe deployment practices.

Organizations use Patronus AI to improve AI reliability and reduce risky outputs in production systems.

Standout Capabilities

- Hallucination detection

- AI safety evaluation

- Reliability scoring

- Compliance workflows

- Groundedness evaluation

- Enterprise governance

- Risk analysis

- Continuous monitoring

AI-Specific Depth

Patronus AI evaluates how accurately RAG responses align with retrieved context while monitoring hallucination risk and unsafe outputs.

Pros

- Strong AI safety focus

- Enterprise governance support

- Reliable hallucination monitoring

Cons

- Enterprise-focused pricing

- Narrower tooling scope

- Advanced setup requirements

Security & Compliance

Enterprise-grade governance and monitoring controls supported.

Deployment & Platforms

- Cloud

- Enterprise deployment

Integrations & Ecosystem

- OpenAI

- Enterprise AI systems

- RAG pipelines

- Monitoring platforms

Pricing Model

Enterprise subscription pricing.

Best-Fit Scenarios

- AI governance

- Hallucination monitoring

- Compliance-sensitive AI deployments

10. Galileo

One-line Verdict

Best for production AI evaluation and continuous quality monitoring.

Short Description

Galileo provides AI observability, evaluation, and monitoring capabilities for large language model applications and RAG systems. It helps organizations measure groundedness, detect hallucinations, and optimize AI performance in production environments.

The platform emphasizes continuous quality management and enterprise AI reliability.

Standout Capabilities

- AI observability

- Hallucination detection

- Retrieval analytics

- Continuous evaluation

- Production monitoring

- AI debugging

- Workflow analytics

- Enterprise governance

AI-Specific Depth

Galileo continuously evaluates retrieval quality and generated responses across production RAG pipelines to maintain reliability and trustworthiness.

Pros

- Strong production monitoring

- Enterprise AI governance

- Continuous evaluation support

Cons

- Enterprise-oriented complexity

- Premium pricing considerations

- Advanced onboarding needs

Security & Compliance

Enterprise governance and compliance controls available.

Deployment & Platforms

- Cloud

- Enterprise deployment

Integrations & Ecosystem

- OpenAI

- LangChain

- Retrieval systems

- AI observability ecosystems

Pricing Model

Enterprise SaaS pricing.

Best-Fit Scenarios

- Production AI systems

- Enterprise RAG governance

- Continuous quality monitoring

Comparison Table

| Tool | Best For | Deployment | Core Strength | Hallucination Detection | Enterprise Scale |
|---|---|---|---|---|---|
| LangSmith | AI observability | Cloud | End-to-end tracing | Partial | High |
| Ragas | Automated RAG metrics | Self-hosted | Retrieval evaluation | Partial | Medium |
| Arize Phoenix | Enterprise monitoring | Hybrid | Observability | Yes | Very High |
| TruLens | Explainable evaluation | Self-hosted | Transparency | Yes | Medium |
| DeepEval | AI testing workflows | Hybrid | Automated testing | Partial | Medium |
| Humanloop | Human feedback workflows | Cloud | Collaborative evaluation | Partial | High |
| Promptfoo | Prompt benchmarking | Self-hosted | Regression testing | Partial | Medium |
| Weights & Biases Weave | Experiment tracking | Cloud | AI experimentation | Partial | High |
| Patronus AI | AI safety evaluation | Cloud | Hallucination monitoring | Yes | High |
| Galileo | Continuous monitoring | Cloud | Production evaluation | Yes | Very High |

Scoring & Evaluation Table

| Tool | Core Features | Ease of Use | Integrations | Security | Performance | Support | Value | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| LangSmith | 9.4 | 8.8 | 9.3 | 8.9 | 9.0 | 8.8 | 8.4 | 9.0 |
| Ragas | 8.9 | 8.1 | 8.5 | 8.0 | 8.5 | 7.9 | 9.3 | 8.6 |
| Arize Phoenix | 9.2 | 7.9 | 8.9 | 9.1 | 9.2 | 8.7 | 8.3 | 8.9 |
| TruLens | 8.8 | 7.8 | 8.4 | 8.3 | 8.4 | 7.8 | 9.0 | 8.4 |
| DeepEval | 8.6 | 8.7 | 8.1 | 7.9 | 8.5 | 8.0 | 9.1 | 8.5 |
| Humanloop | 8.9 | 8.3 | 8.4 | 8.8 | 8.6 | 8.5 | 8.0 | 8.5 |
| Promptfoo | 8.4 | 8.8 | 8.0 | 7.8 | 8.3 | 7.9 | 9.2 | 8.3 |
| Weights & Biases Weave | 9.0 | 7.9 | 9.0 | 8.7 | 8.9 | 8.5 | 8.1 | 8.7 |
| Patronus AI | 8.8 | 7.7 | 8.2 | 9.4 | 8.8 | 8.4 | 7.9 | 8.5 |
| Galileo | 9.1 | 8.0 | 8.7 | 9.0 | 9.0 | 8.6 | 8.2 | 8.8 |
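
The table does not state how the Weighted Total column is derived. As an illustration only, a weighted total can be computed as a weighted average of the criterion scores. The weights in `WEIGHTS` below are hypothetical, chosen for this sketch, and its results need not match the published column; teams adapting the table would substitute weights that reflect their own priorities.

```python
from typing import Dict

# Hypothetical weights (not stated in the article); they sum to 1.0.
WEIGHTS: Dict[str, float] = {
    "core_features": 0.25,
    "ease_of_use": 0.15,
    "integrations": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}


def weighted_total(scores: Dict[str, float], weights: Dict[str, float] = WEIGHTS) -> float:
    """Weighted average of per-criterion scores on a shared 0-10 scale."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same criteria")
    return sum(scores[name] * weight for name, weight in weights.items())


# Criterion scores copied from the LangSmith row of the table.
langsmith = {
    "core_features": 9.4,
    "ease_of_use": 8.8,
    "integrations": 9.3,
    "security": 8.9,
    "performance": 9.0,
    "support": 8.8,
    "value": 8.4,
}
total = weighted_total(langsmith)
```

Raising the weight on security or value, for example, shifts the ranking toward tools that score well on those columns, so the weights deserve as much scrutiny as the scores.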

Top 3 Recommendations

Best for Enterprise

- Arize Phoenix

- Galileo

- LangSmith

Best for SMBs

- Ragas

- DeepEval

- Promptfoo

Best for Developers

- TruLens

- LangSmith

- Ragas

Which RAG Evaluation & Benchmarking Tool Is Right for You?

For Solo Developers

Ragas and Promptfoo are excellent lightweight options for developers who want quick evaluation workflows without heavy enterprise infrastructure.

For SMBs

DeepEval and LangSmith provide strong automation, benchmarking, and debugging capabilities suitable for growing AI applications.

For Mid-Market Organizations

Humanloop and Weights & Biases Weave support collaborative AI evaluation and scalable experimentation workflows.

For Enterprise AI Programs

Arize Phoenix, Galileo, and Patronus AI are ideal for organizations requiring enterprise observability, governance, compliance monitoring, and continuous AI quality management.

Budget vs Premium

Open-source frameworks like Ragas and TruLens reduce licensing costs but may require more engineering effort. Enterprise observability platforms offer richer governance and monitoring at higher operational cost.

Feature Depth vs Ease of Use

LangSmith balances strong observability with usability, while Arize Phoenix provides deeper enterprise analytics but requires more operational maturity.

Integrations & Scalability

Organizations with complex AI ecosystems should prioritize platforms with strong orchestration, vector database, and AI monitoring integrations.

Security & Compliance Needs

Highly regulated industries should prioritize platforms with governance, auditability, monitoring, and deployment flexibility.

Implementation Playbook

First 30 Days

- Define evaluation KPIs

- Create benchmark datasets

- Establish hallucination metrics

- Measure retrieval precision

- Build baseline performance reports

Days 30–60

- Deploy automated testing pipelines

- Add prompt benchmarking

- Implement retrieval analytics

- Introduce human review workflows

- Optimize chunking and reranking

Days 60–90

- Scale continuous monitoring

- Automate regression testing

- Expand production observability

- Improve governance controls

- Continuously refresh evaluation datasets
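
The "automate regression testing" step can be sketched as a gate that compares the current run's metric scores against the stored baseline and reports any metric that dropped by more than a tolerance. The function name `find_regressions`, the 0.02 tolerance, and all scores below are hypothetical values for illustration, not output from any tool in this list.

```python
from typing import Dict, List


def find_regressions(
    baseline: Dict[str, float],
    current: Dict[str, float],
    tolerance: float = 0.02,
) -> List[str]:
    """List metrics whose current score fell more than `tolerance` below baseline."""
    regressions = []
    for name, base_score in baseline.items():
        new_score = current.get(name)
        if new_score is None:
            # A metric that silently disappears is treated as a failure too.
            regressions.append(f"{name}: missing from current run")
        elif new_score < base_score - tolerance:
            regressions.append(f"{name}: {base_score:.2f} -> {new_score:.2f}")
    return regressions


# Hypothetical scores from a baseline run and from a candidate change.
baseline_scores = {"faithfulness": 0.91, "context_precision": 0.84, "answer_relevance": 0.88}
current_scores = {"faithfulness": 0.92, "context_precision": 0.79, "answer_relevance": 0.87}

failures = find_regressions(baseline_scores, current_scores)
```

In a CI job, a non-empty `failures` list would typically fail the build, for example by exiting with a non-zero status after printing the list.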

Common Mistakes and How to Avoid Them

- Evaluating only generated responses

- Ignoring retrieval precision metrics

- Using weak benchmark datasets

- Skipping hallucination monitoring

- Not testing multi-turn workflows

- Overlooking latency measurements

- Ignoring human feedback loops

- Failing to benchmark prompts

- Poor observability implementation

- Not validating grounding quality

- Underestimating governance needs

- Lack of continuous monitoring
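
Latency, one of the items above, is usually reported as percentiles because a mean hides slow outliers. The following is a minimal sketch using only the Python standard library. `measure_latencies`, `summarize`, and the stand-in `fake_pipeline` are hypothetical names; a real measurement would call the actual RAG endpoint with a representative query set.

```python
import statistics
import time
from typing import Callable, Dict, List


def measure_latencies(pipeline: Callable[[str], str], queries: List[str]) -> List[float]:
    """Time one end-to-end call per query and return durations in seconds."""
    latencies = []
    for query in queries:
        start = time.perf_counter()
        pipeline(query)
        latencies.append(time.perf_counter() - start)
    return latencies


def summarize(latencies: List[float]) -> Dict[str, float]:
    """Return p50, p95, and max; needs at least two samples."""
    # quantiles(n=100) returns 99 cut points; index i - 1 is the i-th percentile.
    cuts = statistics.quantiles(latencies, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "max": max(latencies)}


def fake_pipeline(query: str) -> str:
    """Stand-in for a real retrieve-then-generate call."""
    return query.upper()


report = summarize(measure_latencies(fake_pipeline, ["q1", "q2", "q3", "q4"]))
```

Tracking p95 alongside p50 makes it easier to notice when a reranker or a larger context window slows down only a subset of queries.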

Frequently Asked Questions

1. What are RAG evaluation tools?

RAG evaluation tools measure retrieval quality, answer relevance, hallucination rates, and grounding accuracy in AI systems using automated and human-driven testing workflows.

2. Why is benchmarking important in RAG systems?

Benchmarking helps organizations compare retrieval strategies, prompts, chunking methods, and model outputs to improve reliability and AI quality.

3. What is hallucination detection in RAG?

Hallucination detection identifies responses that are unsupported, inaccurate, or disconnected from retrieved context and source documents.
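
As a rough illustration of the idea, the sketch below flags answer sentences that share few words with any retrieved context. This is a deliberately simple lexical heuristic and an assumption of this example; the tools in this list generally rely on LLM judges or trained classifiers instead. The function name `unsupported_sentences` and the 0.6 threshold are hypothetical.

```python
import re
from typing import List, Set


def _tokens(text: str) -> Set[str]:
    """Lowercased alphanumeric word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def unsupported_sentences(answer: str, contexts: List[str], min_overlap: float = 0.6) -> List[str]:
    """Return answer sentences whose best token overlap with any context is below min_overlap."""
    context_tokens = [_tokens(c) for c in contexts]
    flagged = []
    for sentence in re.split(r"(?<=[.!?])\s+", answer.strip()):
        sent_tokens = _tokens(sentence)
        if not sent_tokens:
            continue
        # Share of the sentence's tokens found in the best-matching context.
        best = max(
            (len(sent_tokens & ctx) / len(sent_tokens) for ctx in context_tokens),
            default=0.0,
        )
        if best < min_overlap:
            flagged.append(sentence)
    return flagged


# Hypothetical retrieved context and generated answer.
contexts = ["The refund window is 30 days from the delivery date."]
answer = "The refund window is 30 days. Refunds are paid in cryptocurrency only."
flagged = unsupported_sentences(answer, contexts)
```

A lexical check like this misses paraphrases and negations, so it is better suited as a cheap first-pass filter than as a final hallucination metric.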

4. Which tool is best for AI observability?

LangSmith, Arize Phoenix, and Galileo are strong choices for production AI monitoring and observability.

5. Are open-source RAG evaluation tools reliable?

Yes. Frameworks like Ragas and TruLens are widely used for benchmarking and retrieval evaluation across AI development teams.

6. What metrics are commonly used in RAG evaluation?

Common metrics include faithfulness, answer relevance, context precision, retrieval recall, groundedness, and hallucination rates.
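
The retrieval-side metrics can be computed directly from ranked results and a labeled set of relevant chunks. Below is a minimal sketch of precision@k, recall@k, and reciprocal rank in plain Python. The chunk IDs and relevance labels are hypothetical, and the function names are chosen for this example rather than taken from any tool above.

```python
from typing import Sequence, Set


def precision_at_k(retrieved: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the top-k retrieved chunk IDs that are labeled relevant."""
    top_k = list(retrieved)[:k]
    if not top_k:
        return 0.0
    return sum(1 for chunk_id in top_k if chunk_id in relevant) / len(top_k)


def recall_at_k(retrieved: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of all relevant chunk IDs that appear in the top-k results."""
    if not relevant:
        return 0.0
    return len(set(list(retrieved)[:k]) & relevant) / len(relevant)


def reciprocal_rank(retrieved: Sequence[str], relevant: Set[str]) -> float:
    """1 / rank of the first relevant result, or 0.0 if none was retrieved."""
    for rank, chunk_id in enumerate(retrieved, start=1):
        if chunk_id in relevant:
            return 1.0 / rank
    return 0.0


# Hypothetical retriever output and hand-labeled ground truth for one query.
retrieved_ids = ["c7", "c2", "c9", "c4"]
relevant_ids = {"c2", "c4", "c5"}

p_at_3 = precision_at_k(retrieved_ids, relevant_ids, k=3)
r_at_3 = recall_at_k(retrieved_ids, relevant_ids, k=3)
rr = reciprocal_rank(retrieved_ids, relevant_ids)
```

Averaging these per-query values over a benchmark set gives the baseline numbers that later runs are compared against.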

7. Can these tools evaluate multiple language models?

Most modern evaluation platforms support testing across multiple LLMs, embeddings, and retrieval architectures.

8. Which tool is easiest for developers?

Promptfoo and DeepEval are often easier for developers because of lightweight setup and automation-friendly workflows.

9. What industries benefit most from RAG benchmarking?

Healthcare, finance, legal, e-commerce, education, and enterprise knowledge management benefit most from RAG evaluation systems.

10. What should organizations prioritize first?

Organizations should first establish retrieval quality baselines, hallucination monitoring, and automated benchmarking workflows before scaling AI deployments.

Conclusion

RAG evaluation and benchmarking platforms are rapidly becoming essential components of enterprise AI infrastructure. As organizations deploy AI copilots, intelligent search systems, customer support assistants, and knowledge automation platforms, measuring retrieval quality and generation reliability is now critical for trust, governance, and long-term scalability. Modern platforms such as LangSmith, Arize Phoenix, Ragas, Galileo, and TruLens help teams continuously monitor hallucinations, benchmark retrieval pipelines, optimize prompts, and improve grounded generation quality across production AI systems. The right solution ultimately depends on your engineering maturity, governance needs, deployment scale, and evaluation complexity. Start by identifying the most critical AI quality metrics for your business, build repeatable benchmarking workflows, pilot evaluation frameworks in controlled environments, and gradually scale continuous monitoring and observability as your RAG systems mature in production.
