Introduction

Parameter-Efficient Fine-Tuning (PEFT) tooling refers to the frameworks and libraries that let you customize large language models without updating all of their parameters. Instead of retraining billions of weights, PEFT techniques such as LoRA, QLoRA, adapters, and prefix tuning train only a small number of parameters (often newly added ones) while the base model stays frozen.

This approach is critical because full fine-tuning is expensive, slow, and requires large-scale GPU infrastructure. PEFT makes model adaptation practical for startups, enterprises, and developers working with limited resources while still achieving high-quality results.
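To make "a small number of parameters" concrete, here is a back-of-the-envelope count in Python. It assumes hypothetical dimensions roughly in the shape of a 7B-class decoder (hidden size 4096, 32 layers) with LoRA rank 8 applied to two 4096 x 4096 attention projections per layer. Real models and target-module choices vary, and the comparison covers only the targeted matrices, not the whole model.

```python
# Hypothetical shapes, roughly like a 7B-class decoder: hidden size 4096,
# 32 layers, LoRA on two 4096 x 4096 attention projections per layer, rank 8.
HIDDEN = 4096
LAYERS = 32
TARGETS_PER_LAYER = 2  # assumed targets, e.g. query and value projections
RANK = 8


def full_params(d: int, k: int) -> int:
    """Weights updated when a d x k matrix is fine-tuned directly."""
    return d * k


def lora_params(d: int, k: int, r: int) -> int:
    """Trainable weights LoRA adds for a d x k matrix: B (d x r) plus A (r x k)."""
    return r * (d + k)


def count(layers: int, targets: int, d: int, k: int, r: int) -> tuple[int, int]:
    """Return (full, lora) trainable-parameter counts over all targeted matrices."""
    n = layers * targets
    return n * full_params(d, k), n * lora_params(d, k, r)


if __name__ == "__main__":
    full, lora = count(LAYERS, TARGETS_PER_LAYER, HIDDEN, HIDDEN, RANK)
    print(f"targeted matrices, full fine-tuning: {full:,}")
    print(f"targeted matrices, LoRA r={RANK}:      {lora:,}")
    print(f"LoRA / full: {lora / full:.2%}")
```

Under these assumptions the trainable share comes out well under 1%. The frozen base weights still have to be loaded, which is why memory-saving techniques such as quantization matter as well.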

PEFT tools are widely used in:

- Enterprise AI assistants and copilots

- Domain-specific chatbots (legal, healthcare, finance)

- Retrieval-augmented AI systems

- Model personalization and alignment

- Lightweight deployment for edge devices

- Rapid experimentation with foundation models

Key evaluation criteria include efficiency, scalability, model compatibility, evaluation support, observability, and security.

Best for:

AI engineers, ML researchers, startups, and enterprise AI teams building production-grade LLM systems.

Not ideal for:

Teams that only use API-based AI tools without any model training or customization needs.

What’s Changed in PEFT Tooling

- Shift from full fine-tuning to LoRA and QLoRA

- Strong adoption of low-memory GPU training methods

- Integration with distributed training frameworks

- Expansion of modular adapter-based architectures

- Improved evaluation and benchmarking systems

- Growth of LLMOps pipelines (train → evaluate → deploy)

- Stronger support for multimodal models

- Increased use of quantization techniques

- Better observability and experiment tracking

- Strong focus on cost optimization

- Improved RAG + PEFT hybrid workflows

- Enterprise-grade governance and security controls

Quick Buyer Checklist

- Supports LoRA / QLoRA / adapters

- Compatible with target model architecture

- GPU memory efficiency

- Distributed training support

- Built-in or external evaluation tools

- Observability and logging support

- RAG integration capability

- Security and privacy controls

- Deployment flexibility (cloud/self-hosted)

- Ease of use and setup speed

- Ecosystem maturity

- Risk of vendor lock-in

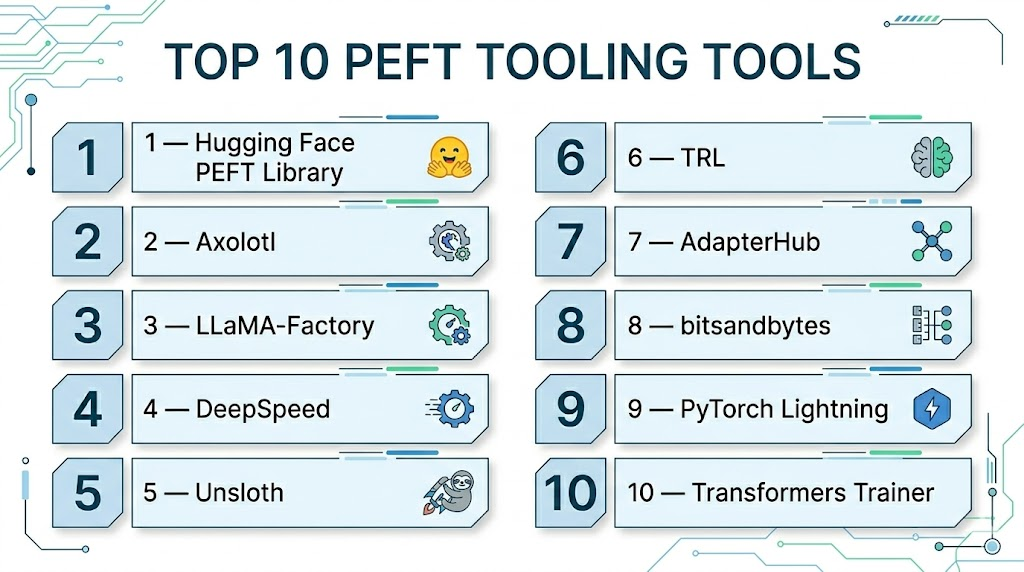

Top 10 PEFT Tools

1 — Hugging Face PEFT Library

One-line verdict: Most widely adopted standard library for PEFT workflows.

Short description:

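A core open-source library from Hugging Face that implements PEFT methods such as LoRA, prefix tuning, and prompt tuning, and supports QLoRA when combined with bitsandbytes quantization.

It integrates deeply with Hugging Face Transformers models and is the default choice for most fine-tuning workflows.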

It supports both research experimentation and production deployment.
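As an illustration of what a LoRA layer computes, the pure-Python sketch below keeps a frozen weight matrix W and adds a trainable low-rank update B·A scaled by alpha / r. It is not the PEFT library's API: the class name `LoRALinear` and its arguments are hypothetical. B starts at zero, so the wrapped layer initially behaves exactly like the base layer.

```python
import random


def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


class LoRALinear:
    """Hypothetical sketch: frozen W (d_out x d_in) plus trainable B (d_out x r) @ A (r x d_in).

    Forward pass: y = W x + (alpha / r) * B (A x)
    """

    def __init__(self, weight, r=4, alpha=8, seed=0):
        rng = random.Random(seed)
        d_out, d_in = len(weight), len(weight[0])
        self.weight = weight          # frozen base weights, never updated
        self.scale = alpha / r
        # A: small random values; B: zeros, so the adapter is a no-op at initialization.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(r)]
        self.B = [[0.0] * r for _ in range(d_out)]

    def forward(self, x):
        base = matvec(self.weight, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]

    def merged_weight(self):
        """Fold the adapter into W (W + scale * B @ A) so inference needs no extra matmuls."""
        r = len(self.A)
        return [
            [w + self.scale * sum(self.B[i][t] * self.A[t][j] for t in range(r))
             for j, w in enumerate(row)]
            for i, row in enumerate(self.weight)
        ]


if __name__ == "__main__":
    W = [[1.0, 0.0], [0.0, 1.0], [2.0, -1.0]]
    layer = LoRALinear(W, r=1, alpha=2)
    print(layer.forward([3.0, 4.0]))  # same as W x while B is still zero
```

Because the update is linear, the adapter can be merged into W after training, as `merged_weight` does here; in the PEFT library the corresponding step for LoRA models is `merge_and_unload()`.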

Standout Capabilities

- LoRA, QLoRA, prefix tuning, adapters

- Transformer ecosystem integration

- Lightweight modular architecture

- Broad model compatibility

- Active community support

AI-Specific Depth

- Model support: Open-source LLMs

- RAG: Not native

- Evaluation: External tools required

- Guardrails: Not included

- Observability: Basic

Pros

- Industry standard

- Highly flexible

- Strong ecosystem

Cons

- Requires expertise

- Not end-to-end platform

2 — Axolotl

One-line verdict: Best configuration-based PEFT training framework.

Short description:

Axolotl simplifies fine-tuning using YAML configuration files.

It supports LoRA and QLoRA workflows with multi-GPU optimization.

It is designed for fast experimentation and reproducibility.
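Multi-GPU recipes are easier to reproduce when you reason about the effective batch size. Under plain data parallelism, one optimizer step sees micro-batch size x gradient-accumulation steps x number of GPUs sequences. Axolotl configs expose the first two as `micro_batch_size` and `gradient_accumulation_steps`; the helper functions below are hypothetical and only do the arithmetic.

```python
def effective_batch_size(micro_batch_size: int, grad_accum_steps: int, num_gpus: int) -> int:
    """Sequences that contribute to one optimizer step under data parallelism."""
    return micro_batch_size * grad_accum_steps * num_gpus


def accum_steps_for(target_batch: int, micro_batch_size: int, num_gpus: int) -> int:
    """Gradient-accumulation steps needed to keep a target effective batch size."""
    per_pass = micro_batch_size * num_gpus
    if target_batch % per_pass != 0:
        raise ValueError(f"target {target_batch} is not a multiple of {per_pass}")
    return target_batch // per_pass


if __name__ == "__main__":
    # Hypothetical recipe tuned on 1 GPU, then moved to 4 GPUs.
    single = effective_batch_size(micro_batch_size=2, grad_accum_steps=16, num_gpus=1)
    steps = accum_steps_for(single, micro_batch_size=2, num_gpus=4)
    print(f"effective batch {single}; use {steps} accumulation steps on 4 GPUs")
```

Keeping the effective batch size fixed when the GPU count changes avoids silently altering training dynamics between runs.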

Standout Capabilities

- YAML-based setup

- QLoRA support

- Multi-GPU training

- Prebuilt model recipes

- Fast iteration cycles

AI-Specific Depth

- Model support: Open-source LLMs

- RAG: Limited

- Evaluation: External

- Guardrails: None

- Observability: Basic logs

Pros

- Easy setup

- Fast training

- Lightweight

Cons

- Limited extensibility

- Smaller ecosystem

3 — LLaMA-Factory

One-line verdict: Best all-in-one PEFT tool for LLaMA models.

Short description:

Provides a web GUI and a CLI for fine-tuning LLaMA-style models, along with many other open model families, using PEFT techniques.

It simplifies dataset preparation, training, and export workflows.

It is beginner-friendly and highly automated.

Standout Capabilities

- GUI + CLI

- LoRA/QLoRA support

- Dataset tools

- Training templates

- Export pipelines

AI-Specific Depth

- Model support: LLaMA family plus many other open models (e.g., Mistral, Qwen, Gemma)

- RAG: Partial

- Evaluation: Basic

- Guardrails: None

- Observability: Training logs

Pros

- Easy to use

- Good automation

- Beginner-friendly

Cons

- Limited enterprise scalability

- Narrow ecosystem

4 — DeepSpeed

One-line verdict: Best for large-scale distributed PEFT training.

Short description:

DeepSpeed is a high-performance training optimization framework for large models.

It enables memory-efficient distributed training using ZeRO and parallelism strategies.

It is widely used in enterprise-scale AI systems.
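The memory savings from ZeRO can be estimated with the accounting used in the ZeRO paper: mixed-precision Adam keeps about 16 bytes of model state per parameter (2 for fp16 weights, 2 for fp16 gradients, 12 for fp32 master weights, momentum, and variance), and each ZeRO stage shards more of that across the N data-parallel GPUs. The sketch below reproduces that arithmetic for full fine-tuning; it ignores activations and buffers, and the function name is hypothetical.

```python
def zero_model_state_gb(num_params: float, num_gpus: int, stage: int,
                        optimizer_bytes: int = 12) -> float:
    """Per-GPU memory (decimal GB) for weights, gradients and Adam state under ZeRO.

    Mixed-precision Adam keeps 2 bytes (fp16 weights) + 2 bytes (fp16 gradients)
    + `optimizer_bytes` (fp32 master weights, momentum, variance) per parameter.
    Activations, temporary buffers and fragmentation are ignored.
    """
    psi, n, k = num_params, num_gpus, optimizer_bytes
    if stage == 0:      # plain data parallelism: everything replicated
        total = (2 + 2 + k) * psi
    elif stage == 1:    # shard optimizer states
        total = (2 + 2) * psi + k * psi / n
    elif stage == 2:    # also shard gradients
        total = 2 * psi + (2 + k) * psi / n
    elif stage == 3:    # also shard the fp16 weights
        total = (2 + 2 + k) * psi / n
    else:
        raise ValueError("stage must be 0, 1, 2 or 3")
    return total / 1e9


if __name__ == "__main__":
    # 7.5B parameters on 64 GPUs, the example size used in the ZeRO paper.
    for stage in range(4):
        print(f"ZeRO stage {stage}: {zero_model_state_gb(7.5e9, 64, stage):7.2f} GB per GPU")
```

With PEFT, only the adapter parameters carry gradients and optimizer state, so the gradient and optimizer terms shrink to the small adapter while ZeRO can still shard what remains.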

Standout Capabilities

- ZeRO optimization

- Distributed training

- Memory offloading

- Pipeline parallelism

- Scaling to very large models and GPU clusters

AI-Specific Depth

- Model support: Broad

- RAG: Not included

- Evaluation: External

- Guardrails: None

- Observability: Metrics only

Pros

- Extremely scalable

- High performance

- Enterprise-ready

Cons

- Complex setup

- Steep learning curve

5 — Unsloth

One-line verdict: Fastest low-memory PEFT training tool.

Short description:

Unsloth is optimized for fast LoRA and QLoRA training with low GPU memory usage.

It uses custom kernels to speed up training while reducing memory requirements.

It is well suited to developers with limited hardware.

Standout Capabilities

- Ultra-fast training

- Low VRAM usage

- Optimized kernels

- LoRA/QLoRA support

- Simple workflow

AI-Specific Depth

- Model support: LLaMA, Mistral, Gemma, Qwen, and other popular open models

- RAG: None

- Evaluation: External

- Guardrails: None

- Observability: Minimal

Pros

- Very fast

- Cost-efficient

- Easy setup

Cons

- Limited features

- Smaller ecosystem

6 — TRL

One-line verdict: Best tool for RLHF and model alignment.

Short description:

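TRL (Transformer Reinforcement Learning) is Hugging Face's library for post-training language models, covering supervised fine-tuning, reward modeling, and reinforcement learning from human feedback (RLHF).

It aligns models using reward-based and preference-based optimization techniques such as PPO and DPO.

It is widely used to improve the helpfulness and instruction following of chat models.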
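TRL includes a `DPOTrainer` for Direct Preference Optimization (DPO), a preference-tuning method that trains directly on chosen/rejected response pairs without a separate reward model or PPO loop. The function below is a hand-written sketch of the per-pair DPO loss, not TRL's implementation. Inputs are summed token log-probabilities of each response under the policy being trained and a frozen reference model; `beta` controls how far the policy may drift from the reference.

```python
import math


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    if x > 0:
        return x + math.log1p(math.exp(-x))
    return math.log1p(math.exp(x))


def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """Per-pair DPO loss: -log sigmoid(beta * (chosen log-ratio - rejected log-ratio))."""
    chosen_logratio = policy_chosen_logp - ref_chosen_logp
    rejected_logratio = policy_rejected_logp - ref_rejected_logp
    margin = beta * (chosen_logratio - rejected_logratio)
    # -log(sigmoid(m)) == log(1 + exp(-m)) == softplus(-m)
    return softplus(-margin)


if __name__ == "__main__":
    print(dpo_loss(-10.0, -10.0, -10.0, -10.0))  # policy == reference
    print(dpo_loss(-5.0, -10.0, -10.0, -10.0))   # policy now prefers the chosen answer
```

When the policy matches the reference the loss is log 2; raising the policy's log-probability of the chosen response relative to the reference lowers it.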

Standout Capabilities

- RLHF pipelines

- PPO training

- Reward modeling

- Preference tuning

- Alignment workflows

AI-Specific Depth

- Model support: Transformers

- RAG: None

- Evaluation: RL-based

- Guardrails: Partial

- Observability: Logs

Pros

- Strong alignment tools

- Hugging Face integration

- Research-grade

Cons

- Complex

- Requires RL knowledge

7 — AdapterHub

One-line verdict: Best modular adapter-based research framework.

Short description:

Focuses on adapter-based PEFT methods for multi-task learning.

It allows efficient parameter sharing across tasks.

Mostly used in academic research.
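The adapter idea that AdapterHub builds on can be sketched as a small bottleneck module inserted into each transformer layer: project the hidden state down to a small dimension m, apply a nonlinearity, project back up, and add a residual connection. Only the two projection matrices (about 2·d·m weights per adapter) are trained, and each task gets its own pair while the base model is shared. This is a simplified pure-Python illustration (no biases, ReLU as the nonlinearity) with hypothetical function names, not AdapterHub's API.

```python
def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def relu(v):
    return [max(0.0, x) for x in v]


def bottleneck_adapter(h, w_down, w_up):
    """h + W_up @ relu(W_down @ h); w_down is m x d, w_up is d x m."""
    z = relu(matvec(w_down, h))
    return [hi + ui for hi, ui in zip(h, matvec(w_up, z))]


def run_task(h, adapters, task):
    """Apply one task's adapter; the frozen base model (not shown) is shared by all tasks."""
    w_down, w_up = adapters[task]
    return bottleneck_adapter(h, w_down, w_up)


if __name__ == "__main__":
    h = [1.0, -2.0, 3.0]  # toy hidden state, d = 3
    adapters = {  # hypothetical per-task adapters with bottleneck size m = 1
        "sentiment": ([[1.0, 0.0, 0.0]], [[1.0], [1.0], [1.0]]),
        "ner": ([[0.0, 0.0, 1.0]], [[0.0], [0.0], [0.0]]),  # zero up-projection: identity
    }
    for task in adapters:
        print(task, run_task(h, adapters, task))
```

Initializing the up-projection near zero makes a fresh adapter behave almost like the identity, so inserting it does not disturb the pretrained model before training starts.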

Standout Capabilities

- Adapter-based training

- Multi-task learning

- Modular architecture

- Lightweight updates

AI-Specific Depth

- Model support: Transformers

- RAG: None

- Evaluation: External

- Guardrails: None

- Observability: Basic

Pros

- Modular

- Research-friendly

- Efficient

Cons

- Limited production use

- Smaller ecosystem

8 — bitsandbytes

One-line verdict: Essential quantization library for PEFT efficiency.

Short description:

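Provides 4-bit and 8-bit quantization for memory-efficient training and inference.

It is widely used in QLoRA workflows, where it loads the frozen base model in 4-bit precision.

Storing weights in 4 bits instead of 16 cuts their memory footprint to roughly a quarter, which significantly reduces GPU requirements.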
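The core trick behind this kind of memory saving is to store weights or optimizer states as low-bit integer codes plus a per-block scale. The sketch below shows simplified block-wise absmax quantization to int8 in pure Python. bitsandbytes' actual kernels, and the NF4/FP4 4-bit formats used in QLoRA, are more sophisticated, so treat this only as an illustration of the idea; the function names are hypothetical.

```python
def quantize_blockwise(values, block_size=64):
    """Absmax int8 quantization: per block, int codes in [-127, 127] plus one float scale."""
    codes, scales = [], []
    for start in range(0, len(values), block_size):
        block = values[start:start + block_size]
        absmax = max(abs(v) for v in block) or 1.0  # all-zero block: any scale works
        scale = absmax / 127.0
        scales.append(scale)
        codes.extend(round(v / scale) for v in block)
    return codes, scales


def dequantize_blockwise(codes, scales, block_size=64):
    """Recover approximate values: code * scale of the block it belongs to."""
    return [c * scales[i // block_size] for i, c in enumerate(codes)]


if __name__ == "__main__":
    vals = [((i * 37) % 101 - 50) / 50.0 for i in range(300)]  # toy data in [-1, 1]
    codes, scales = quantize_blockwise(vals)
    restored = dequantize_blockwise(codes, scales)
    worst = max(abs(v - r) for v, r in zip(vals, restored))
    print(f"{len(codes)} codes, {len(scales)} scales, max abs error {worst:.4f}")
```

Each value now needs one byte plus a small share of its block's scale, and the rounding error per value is at most half that block's scale. Smaller blocks keep the error local to outliers at the cost of storing more scales.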

Standout Capabilities

- 4-bit quantization

- 8-bit optimizers

- Memory efficiency

- GPU cost reduction

AI-Specific Depth

- Model support: Transformers

- RAG: None

- Evaluation: None

- Guardrails: None

- Observability: Minimal

Pros

- Huge memory savings

- Enables large model training

- Widely used

Cons

- Not standalone

- Needs integration

9 — PyTorch Lightning

One-line verdict: Best structured training framework for scalable pipelines.

Short description:

Simplifies PyTorch training by structuring workflows for scalability and reproducibility.

It integrates well with PEFT pipelines and production systems.

It reduces engineering boilerplate by handling device placement, multi-GPU strategies, checkpointing, and logging.

Standout Capabilities

- Structured training loops

- Multi-GPU support

- Experiment tracking

- Modular design

AI-Specific Depth

- Model support: Any PyTorch

- RAG: None

- Evaluation: External

- Guardrails: None

- Observability: Strong

Pros

- Clean structure

- Scalable

- Reliable

Cons

- Adds abstraction

- Not PEFT-specific

10 — Transformers Trainer

One-line verdict: Best general-purpose fine-tuning API.

Short description:

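High-level Hugging Face Transformers API for training transformer models with minimal setup.

Works naturally with PEFT workflows: a model wrapped with the PEFT library can be passed to it like any other model.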

Widely used for fast experimentation.

Standout Capabilities

- Simple training API

- Built-in evaluation

- Distributed training

- Dataset support

AI-Specific Depth

- Model support: Transformers

- RAG: None

- Evaluation: Built-in

- Guardrails: None

- Observability: Basic

Pros

- Easy setup

- Fast workflow

- Strong ecosystem

Cons

- Limited control

- Less flexible

Comparison Table

| Tool | Best For | Deployment | Flexibility | Strength | Watch-Out |
|---|---|---|---|---|---|
| HF PEFT | Standard PEFT | Cloud/Local | High | Flexibility | Complexity |
| Axolotl | Fast training | Cloud/Local | Medium | Simplicity | Limited scale |
| LLaMA-Factory | LLaMA tuning | Cloud/Local | Medium | Ease | Narrow scope |
| DeepSpeed | Scaling | Cluster | High | Performance | Complexity |
| Unsloth | Speed | Local/Cloud | Medium | Efficiency | Limited features |
| TRL | RLHF | Cloud | High | Alignment | Complexity |
| AdapterHub | Research | Local | High | Modularity | Low adoption |
| bitsandbytes | Quantization | Cloud/Local | High | Memory saving | Not standalone |
| Lightning | ML pipelines | Cloud/Local | High | Structure | Overhead |
| Trainer | General use | Cloud/Local | High | Simplicity | Limited control |

Scoring & Evaluation (PEFT Tooling)

Scores below use a comparative 1–10 scale across key production and research dimensions; a higher score means stronger capability in that area.

| Tool | Core Capability | Reliability | Guardrails | Integrations | Ease of Use | Performance & Cost Efficiency | Security/Admin | Community Support | Weighted Score |
|---|---|---|---|---|---|---|---|---|---|
| Hugging Face PEFT | 9 | 8 | 5 | 9 | 7 | 8 | 6 | 9 | 7.8 |
| Axolotl | 8 | 8 | 5 | 7 | 9 | 9 | 6 | 7 | 7.7 |
| LLaMA-Factory | 8 | 7 | 5 | 7 | 9 | 8 | 6 | 6 | 7.3 |
| DeepSpeed | 10 | 9 | 6 | 8 | 5 | 10 | 7 | 8 | 8.1 |
| Unsloth | 8 | 7 | 5 | 7 | 9 | 10 | 5 | 6 | 7.5 |
| TRL | 9 | 8 | 7 | 8 | 6 | 7 | 6 | 8 | 7.6 |
| AdapterHub | 7 | 7 | 5 | 7 | 8 | 7 | 5 | 6 | 7.0 |
| bitsandbytes | 8 | 8 | 5 | 8 | 7 | 10 | 6 | 8 | 7.8 |
| PyTorch Lightning | 9 | 8 | 6 | 9 | 7 | 8 | 7 | 8 | 8.0 |
| Transformers Trainer | 9 | 8 | 6 | 9 | 9 | 7 | 7 | | |

Top 3 for Enterprise

- DeepSpeed

- Lightning

- Hugging Face PEFT

Top 3 for SMB

- Axolotl

- Unsloth

- LLaMA-Factory

Top 3 for Developers

- Hugging Face PEFT

- Transformers Trainer

- Axolotl

Which PEFT Tooling Is Right for You

Solo / Freelancer

Axolotl or Unsloth for speed and simplicity.

SMB

LLaMA-Factory or Hugging Face PEFT for a balance of control and usability.

Mid-Market

Lightning + PEFT for scalable workflows.

Enterprise

DeepSpeed + PEFT ecosystem for distributed training and governance.

Regulated industries

Prefer DeepSpeed or Lightning with strict internal deployment control.

Budget vs premium

- Budget: Unsloth, bitsandbytes

- Premium: DeepSpeed, Lightning

Build vs buy

- Build if you need customization and control

- Use frameworks if speed and reliability matter more

Implementation Playbook (30 / 60 / 90 Days)

30 Days

- Select PEFT method (LoRA/QLoRA)

- Run baseline fine-tuning

- Establish evaluation metrics

60 Days

- Add distributed training if needed

- Implement evaluation harness

- Introduce logging & observability

90 Days

- Optimize cost & latency

- Add governance policies

- Scale to production workloads

Common Mistakes & How to Avoid Them

- Skipping evaluation frameworks

- Ignoring data quality issues

- Overfitting small datasets

- Not tracking experiments

- Poor GPU resource management

- Using full fine-tuning unnecessarily

- Lack of version control for models

- No guardrails for outputs

- Underestimating deployment complexity

- Vendor/tool lock-in risks

- Missing cost monitoring

- No rollback strategy

FAQs

1. What is PEFT in simple terms?

PEFT is a method of fine-tuning large language models by updating only a small portion of parameters instead of retraining the entire model.

2. Why is PEFT important?

It reduces training cost, GPU usage, and time while still delivering strong model customization performance.

3. What is LoRA in PEFT?

LoRA is a technique that adds low-rank matrices to model layers so only a small number of parameters are trained.
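In symbols (a sketch following the usual LoRA notation), a frozen weight matrix W_0 of shape d x k receives a trainable low-rank update, and only B and A are trained:

```latex
W' = W_0 + \Delta W = W_0 + \frac{\alpha}{r} B A,
\qquad B \in \mathbb{R}^{d \times r},\; A \in \mathbb{R}^{r \times k},\; r \ll \min(d, k)

\text{trainable parameters per matrix: } r(d + k) \quad \text{vs.} \quad dk \text{ for full fine-tuning}
```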

4. What is QLoRA?

QLoRA extends LoRA by quantizing the frozen base model to 4 bits (using the NF4 data type) and training LoRA adapters on top of it, which reduces GPU memory usage even further.

5. Do I need powerful GPUs for PEFT?

Not necessarily. PEFT can run on consumer GPUs depending on model size and optimization techniques used.
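A rough way to check whether a model fits on a given GPU is to multiply parameter count by bytes per parameter. The sketch below covers only the frozen base weights and the adapter's training state (assuming fp32 Adam at 16 bytes per trainable parameter: weight, gradient, and two moments). Activations, the KV cache, quantization constants, and framework overhead come on top, so real requirements are higher. The 7B model size and roughly 4.2M-parameter adapter in the usage example are hypothetical.

```python
def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Memory for the model weights alone, in decimal gigabytes."""
    return num_params * bits_per_param / 8 / 1e9


def adapter_state_gb(trainable_params: int, bytes_per_param: int = 16) -> float:
    """fp32 Adam training state: weight + gradient + two moments, 4 bytes each."""
    return trainable_params * bytes_per_param / 1e9


if __name__ == "__main__":
    base = 7e9  # hypothetical 7B-parameter model
    for bits in (16, 8, 4):
        print(f"{bits:>2}-bit base weights: {weight_memory_gb(base, bits):5.1f} GB")
    print(f"LoRA adapter training state: {adapter_state_gb(4_194_304):.3f} GB")
```

The comparison shows why 4-bit loading plus a small adapter can bring 7B-class fine-tuning within reach of a single consumer GPU, while the adapter's own optimizer state is comparatively negligible.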

6. Is PEFT suitable for production use?

Yes, many enterprise AI systems use PEFT for cost-efficient model customization.

7. What is the difference between PEFT and full fine-tuning?

PEFT trains only part of the model, while full fine-tuning updates all parameters.

8. Can PEFT be combined with RAG?

Yes, combining PEFT with RAG is common for improving both knowledge and behavior.

9. Which PEFT method is most popular?

LoRA and QLoRA are currently the most widely used methods.

10. Is PEFT better than prompt engineering?

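PEFT can change model behavior more deeply because it updates model weights, while prompt engineering only changes the inputs. In exchange, PEFT needs training data and compute, so prompt engineering is usually the cheaper first step.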

11. Can PEFT be used for small datasets?

Yes, but careful evaluation is needed to avoid overfitting.
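One common guard against overfitting a small dataset is to hold out a validation split and stop training once validation loss stops improving. The helper below is a minimal, framework-free sketch of patience-based early stopping; the name `best_epoch` and its `patience`/`min_delta` parameters are illustrative, and the loss values in the usage example are made-up illustrative numbers.

```python
def best_epoch(val_losses, patience=2, min_delta=0.0):
    """Index of the checkpoint to keep under patience-based early stopping.

    Training stops once validation loss fails to improve by more than
    `min_delta` for `patience` consecutive epochs.
    """
    best_idx, best_loss, stale = 0, float("inf"), 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_idx, best_loss, stale = epoch, loss, 0
        else:
            stale += 1
            if stale >= patience:
                break  # stop training here
    return best_idx


if __name__ == "__main__":
    losses = [2.0, 1.5, 1.2, 1.25, 1.3, 1.1]  # illustrative validation losses
    print(best_epoch(losses, patience=2))  # 2
```

With `patience=2`, training stops after two epochs without improvement and keeps the epoch-2 checkpoint, even though a later epoch would have scored lower; a larger patience trades extra compute for a chance to recover from noisy validation curves.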

12. What is the biggest risk in PEFT?

Poor dataset quality and lack of evaluation can lead to unstable or biased models.

Conclusion

PEFT tooling has transformed how large language models are adapted by making training faster, cheaper, and more accessible. Instead of requiring full model retraining, developers can now fine-tune only small parameter sets while maintaining strong performance. The ecosystem includes lightweight libraries, scalable distributed systems, and optimization tools suitable for both research and enterprise use. The right choice depends on your scale, expertise, and production needs, but when combined with proper evaluation and governance, PEFT enables highly efficient and production-ready AI systems.
