Introduction

MLOps Lifecycle Management Platforms are software systems designed to manage the end‑to‑end lifecycle of machine learning models, from data preparation and experimentation through deployment, monitoring, governance, and model retraining. These platforms help bridge the gap between data science and production operations, enabling teams to scale ML workflows reliably and efficiently.

Modern ML initiatives require repeatability, robust versioning, governance controls, automated pipelines, collaboration features, and integration with CI/CD systems. Common use cases include scalable experiment tracking, reproducible pipelines, automated model testing and validation, continuous deployment of models into production, drift detection and monitoring, automated retraining, and compliance reporting.

Buyers should focus on automation, scalability, model governance, integration with existing infrastructure, collaboration features, observability, monitoring and alerting, cost and performance management, security controls, and support for both open‑source and enterprise workflows.

Best for: data science and ML engineering teams in mid‑market and enterprise organizations building production AI systems

Not ideal for: teams doing only research or proofs of concept without deployment needs, or groups that rely solely on manual model deployment

What’s Changed in MLOps Lifecycle Management Platforms

- Standardization of ML lifecycle protocols and pipelines across platforms

- Support for agentic workflows that automate retraining and monitoring

- Model governance frameworks baked into pipelines

- Built‑in drift detection and automated retraining triggers

- Advanced observability and telemetry for model performance

- Multi‑cloud and hybrid deployment support

- Integration with feature stores, data catalogs, and data quality tools

- Cost and latency optimization via dynamic resource allocation

- Experiment tracking with lineage and traceability

- Built‑in security guardrails and access controls

- Seamless integration with CI/CD and DevOps toolchains

- Support for open‑source standards to reduce vendor lock‑in
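
Two of the items above, built‑in drift detection and automated retraining triggers, usually come down to a small policy evaluated on each monitoring window. The following is a minimal sketch of such a policy, not any platform's API: `RetrainPolicy`, `should_retrain`, and every threshold value are hypothetical names and numbers chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RetrainPolicy:
    """Hypothetical thresholds; tune them per model and business risk."""
    max_drift_score: float = 0.2      # e.g. a PSI-style drift score
    max_accuracy_drop: float = 0.05   # absolute drop versus the baseline
    min_new_samples: int = 1_000      # avoid retraining on too little data


def should_retrain(policy, drift_score, baseline_accuracy,
                   current_accuracy, new_samples):
    """Return (decision, reasons) for a single monitoring window."""
    reasons = []
    if drift_score > policy.max_drift_score:
        reasons.append("input drift above threshold")
    if baseline_accuracy - current_accuracy > policy.max_accuracy_drop:
        reasons.append("accuracy dropped below tolerance")
    # Retrain only when there is a reason AND enough fresh data to learn from.
    decision = bool(reasons) and new_samples >= policy.min_new_samples
    return decision, reasons


if __name__ == "__main__":
    decision, reasons = should_retrain(
        RetrainPolicy(), drift_score=0.31,
        baseline_accuracy=0.92, current_accuracy=0.90, new_samples=5_000,
    )
    print(decision, reasons)
```

Returning the reasons alongside the decision keeps the trigger auditable, which matters when retraining is part of a governed pipeline.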

Quick Buyer Checklist (Scan‑Friendly)

- End‑to‑end pipeline automation

- Experiment tracking and model versioning

- Deployment targets and orchestration flexibility

- Monitoring, alerting, and drift detection

- Model governance, audit trails, and access control

- Integration with CI/CD and data infrastructure

- Support for hybrid and multi‑cloud environments

- Cost and resource optimization features

- Collaboration features for teams

- Ease of use and onboarding support

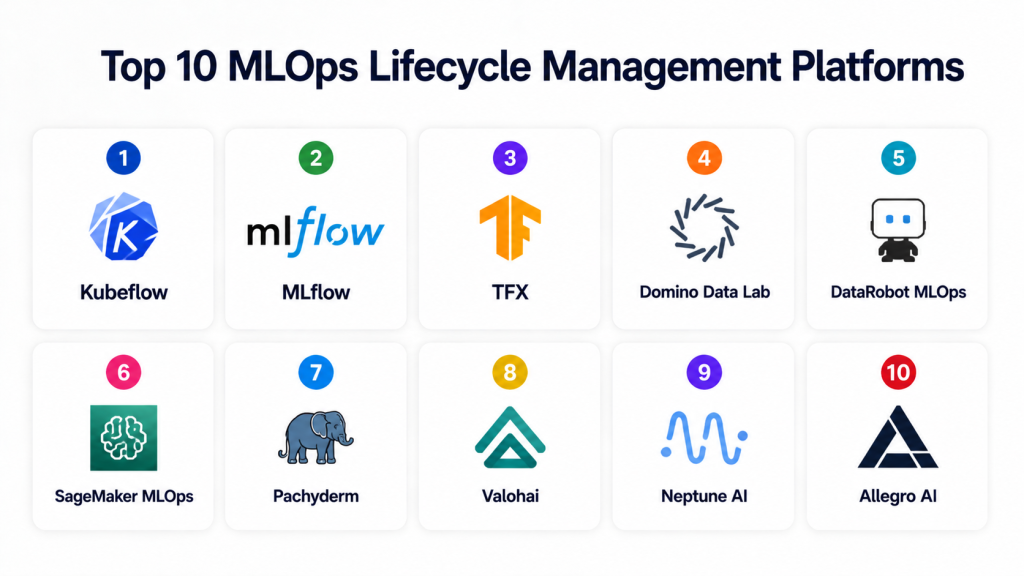

Top 10 MLOps Lifecycle Management Platforms

1 — Kubeflow

One‑line verdict: Best for Kubernetes‑native MLOps workflows with strong pipeline orchestration.

Short description: Kubeflow provides a machine learning toolkit for building, deploying, and managing scalable end‑to‑end ML workflows on Kubernetes.

Standout Capabilities

- Kubernetes‑native orchestration of ML pipelines

- Experiment tracking and model versioning

- Multi‑framework support

- Portable across cloud and on‑prem environments

- Pipeline visualization dashboards

AI‑Specific Depth

- Model support: Open‑source, BYO models

- RAG / knowledge integration: N/A

- Evaluation: Pipeline testing and reproducibility

- Guardrails: RBAC and policy controls via Kubernetes

- Observability: Metrics and logs through Kubernetes tooling

Pros

- Highly flexible and extensible

- Strong community support

- Portable across environments

Cons

- Can be complex to install and manage

- Requires Kubernetes expertise

- User experience can be inconsistent across tools

Security & Compliance

- RBAC, encryption at rest and in transit

- Certifications: Varies

Deployment & Platforms

- Linux, Kubernetes

- Cloud / On‑prem

Integrations & Ecosystem

- Kubernetes ecosystem

- CI/CD toolchains

- Data storage backends

- Metrics and logging tools

Pricing Model

Open‑source

Best‑Fit Scenarios

- Kubernetes‑centric organizations

- Teams with cloud and on‑prem mix

- Highly customizable MLOps requirements

2 — MLflow

One‑line verdict: Best for experiment tracking and model registry with platform flexibility.

Short description: MLflow provides tools for tracking experiments, packaging code, and deploying models across environments.
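
To make "tracking experiments" concrete, here is a minimal, standard‑library‑only sketch of what an experiment tracker records for each run. It deliberately does not use MLflow's API; `log_run`, `best_run`, and the JSON file layout are hypothetical and exist only to show the idea of storing parameters, metrics, and tags per run and then comparing runs.

```python
import json
import tempfile
import time
import uuid
from pathlib import Path


def log_run(root, params, metrics, tags=None):
    """Write one run record as a JSON file and return its run ID."""
    run_id = uuid.uuid4().hex
    record = {
        "run_id": run_id,
        "started_at": time.time(),
        "params": params,     # hyperparameters and configuration
        "metrics": metrics,   # final evaluation metrics
        "tags": tags or {},   # e.g. git commit or dataset version
    }
    root = Path(root)
    root.mkdir(parents=True, exist_ok=True)
    (root / f"{run_id}.json").write_text(json.dumps(record, indent=2))
    return run_id


def best_run(root, metric, higher_is_better=True):
    """Load all runs and return the one with the best value of `metric`."""
    runs = [json.loads(p.read_text()) for p in Path(root).glob("*.json")]
    runs = [r for r in runs if metric in r["metrics"]]
    if not runs:
        return None
    pick = max if higher_is_better else min
    return pick(runs, key=lambda r: r["metrics"][metric])


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as root:
        log_run(root, {"lr": 0.1}, {"auc": 0.81})
        log_run(root, {"lr": 0.01}, {"auc": 0.87}, tags={"dataset": "v2"})
        print(best_run(root, "auc")["params"])
```

A real tracking server adds a UI, artifact storage, and concurrent access on top of this basic record‑and‑compare loop.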

Standout Capabilities

- Experiment and metric tracking

- Model registry and versioning

- Flexible deployment options

- Integrations with popular ML libraries

- UI for model lifecycle visibility

AI‑Specific Depth

- Model support: Open‑source, BYO

- RAG / knowledge integration: N/A

- Evaluation: Model performance metrics tracking

- Guardrails: User roles and access controls

- Observability: Tracking UI and metric logs

Pros

- Lightweight and easy to adopt

- Supports many ML frameworks

- Strong ecosystem integrations

Cons

- Limited orchestration and pipeline features

- Requires external tools for full pipeline automation

- Monitoring and drift detection are basic

Security & Compliance

- User authentication controls

- Certifications: Varies

Deployment & Platforms

- Linux, macOS, Windows

- Cloud / On‑prem

Integrations & Ecosystem

- Python libraries, Spark

- CI/CD systems

- Model deployment runtimes

Pricing Model

Open‑source

Best‑Fit Scenarios

- Experiment management

- Teams needing model registry

- Framework‑agnostic ML workflows

3 — TFX

One‑line verdict: Great for production ML pipelines optimized for TensorFlow workloads.

Short description: TensorFlow Extended provides components for building reliable, repeatable ML pipelines with validation and monitoring.
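
The validation step in such a pipeline can be pictured as checking incoming records against a declared schema before training proceeds. The sketch below is plain Python and does not use TFX or TensorFlow Data Validation APIs; `validate_rows` and the `{column: (type, required)}` schema format are hypothetical, chosen only to illustrate the idea.

```python
def validate_rows(rows, schema):
    """Check each row against a {column: (type, required)} schema.

    Returns a list of human-readable anomaly strings (empty means valid).
    """
    anomalies = []
    for i, row in enumerate(rows):
        for column, (expected_type, required) in schema.items():
            if column not in row or row[column] is None:
                if required:
                    anomalies.append(f"row {i}: missing '{column}'")
                continue
            if not isinstance(row[column], expected_type):
                anomalies.append(
                    f"row {i}: '{column}' expected {expected_type.__name__}, "
                    f"got {type(row[column]).__name__}"
                )
        # Columns the schema does not know about are reported as well.
        for column in sorted(row.keys() - schema.keys()):
            anomalies.append(f"row {i}: unexpected column '{column}'")
    return anomalies


if __name__ == "__main__":
    schema = {"age": (int, True), "income": (float, False)}
    rows = [
        {"age": 31, "income": 52000.0},
        {"age": "31"},
        {"income": 1.0, "zip": "x"},
    ]
    for problem in validate_rows(rows, schema):
        print(problem)
```

A pipeline would typically halt, or route the batch for review, when the returned list is not empty.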

Standout Capabilities

- Data validation and preprocessing tools

- Pipelines with metadata tracking

- Model analysis and evaluation

- Scalable pipeline orchestration

- TensorFlow ecosystem integration

AI‑Specific Depth

- Model support: TensorFlow‑centric

- RAG / knowledge integration: N/A

- Evaluation: Integrated validation and analysis

- Guardrails: Schema and validation checks

- Observability: Metadata and lineage tracking

Pros

- Strong production pipeline tooling

- Built‑in validation

- Deep TensorFlow support

Cons

- Best suited for TensorFlow

- Steeper learning curve

- Less flexible for non‑TensorFlow models

Security & Compliance

- Access control inherited from the orchestration platform (for example, Kubernetes RBAC); lineage recorded in ML Metadata

- Certifications: Varies

Deployment & Platforms

- Kubernetes, Linux

- Cloud / On‑prem

Integrations & Ecosystem

- TensorFlow ecosystem

- Apache Beam

- Monitoring systems

Pricing Model

Open‑source

Best‑Fit Scenarios

- TensorFlow‑powered production workflows

- Teams needing data validation

- High‑scale pipeline automation

4 — Domino Data Lab

One‑line verdict: Enterprise‑grade MLOps with strong collaboration and governance.

Short description: Domino provides an enterprise platform for model development, deployment, and lifecycle governance.

Standout Capabilities

- Centralized model registry

- Collaboration workspaces

- Automated deployment and monitoring

- Governance and audit trails

- Strong role‑based access control

AI‑Specific Depth

- Model support: Proprietary with open‑model support

- RAG / knowledge integration: Connectors to data sources

- Evaluation: Experiment comparison and validation

- Guardrails: Policy enforcement and audit logs

- Observability: Monitoring dashboards

Pros

- Full lifecycle support

- Enterprise security features

- Collaboration and governance

Cons

- Premium pricing

- Can be complex to onboard

- Less flexible for DIY toolchains

Security & Compliance

- SSO, RBAC, encryption

- Certifications: Varies by deployment

Deployment & Platforms

- Cloud / On‑prem

Integrations & Ecosystem

- Data sources, CI/CD

- Feature stores

- Monitoring tools

Pricing Model

Enterprise subscription

Best‑Fit Scenarios

- Regulated industries

- Large data science teams

- Enterprise governance needs

5 — DataRobot MLOps

One‑line verdict: Full enterprise MLOps with automated deployment and monitoring tools.

Short description: DataRobot’s MLOps suite provides model deployment, monitoring, drift detection, and governance.

Standout Capabilities

- Automated deployment pipelines

- Model monitoring and drift detection

- Governance and lineage

- Integration with DataRobot models

- Alerts and performance dashboards

AI‑Specific Depth

- Model support: Proprietary, broad model support

- RAG / knowledge integration: Data source hooks

- Evaluation: Automated model performance tracking

- Guardrails: Policy and compliance enforcement

- Observability: Dashboards for monitoring

Pros

- End‑to‑end automation

- Excellent monitoring

- Governance built‑in

Cons

- Tightly integrated with DataRobot stack

- Premium cost

- Less flexibility for external tools

Security & Compliance

- RBAC, encryption, audit trails

- Certifications: Varies by deployment

Deployment & Platforms

- Cloud / Managed

Integrations & Ecosystem

- Data sources

- CI/CD systems

- Enterprise security

Pricing Model

Enterprise subscription

Best‑Fit Scenarios

- Enterprise scale

- Production monitoring focus

- Governance heavy workflows

6 — SageMaker MLOps

One‑line verdict: Best for AWS‑native deployment with integrated CI/CD and model management.

Short description: Amazon SageMaker’s MLOps capabilities include pipelines, deployment, monitoring, and automated retraining.

Standout Capabilities

- Managed pipelines

- Deployment options including real‑time endpoints, serverless inference, and batch transform

- Model monitoring

- CI/CD integration

- Scalability

AI‑Specific Depth

- Model support: Managed AWS models, BYO supported

- RAG / knowledge integration: AWS data sources

- Evaluation: Integrated validation workflows

- Guardrails: AWS security controls

- Observability: CloudWatch metrics, logs

Pros

- Deep AWS integration

- Scalable managed infrastructure

- Strong monitoring

Cons

- AWS lock‑in

- Cost can grow with usage

- Less portable outside AWS

Security & Compliance

- IAM, encryption, compliance controls

- Certifications: Cloud provider compliance

Deployment & Platforms

- AWS Cloud Native

Integrations & Ecosystem

- AWS services

- CI/CD

- Data lakes and storage

Pricing Model

Usage‑based

Best‑Fit Scenarios

- AWS‑centric teams

- Managed infrastructure

- Scalable production needs

7 — Pachyderm

One‑line verdict: Best for data lineage and reproducible data pipelines in MLOps.

Short description: Pachyderm provides a data pipelining platform with versioned data, lineage, and reproducibility for ML workflows.
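
The idea behind versioned data with lineage can be sketched as content‑addressed commits: each commit ID is derived from the hashes of the files it contains and from its parent commit. This is an illustration of the general technique, not Pachyderm's implementation or API; `commit_dataset` and `changed_paths` are hypothetical helpers.

```python
import hashlib
import json


def commit_dataset(history, files, message):
    """Append a commit whose ID is derived from file contents and its parent.

    `files` maps a path to its bytes; `history` is a list of prior commits.
    """
    file_hashes = {
        path: hashlib.sha256(data).hexdigest()
        for path, data in sorted(files.items())
    }
    parent = history[-1]["id"] if history else None
    payload = json.dumps({"parent": parent, "files": file_hashes},
                         sort_keys=True).encode()
    commit = {
        "id": hashlib.sha256(payload).hexdigest(),
        "parent": parent,
        "files": file_hashes,
        "message": message,
    }
    history.append(commit)
    return commit


def changed_paths(old, new):
    """Paths added, removed, or modified between two commits."""
    paths = old["files"].keys() | new["files"].keys()
    return sorted(p for p in paths
                  if old["files"].get(p) != new["files"].get(p))


if __name__ == "__main__":
    history = []
    first = commit_dataset(history, {"a.csv": b"1,2", "b.csv": b"3"}, "init")
    second = commit_dataset(
        history, {"a.csv": b"1,2", "b.csv": b"4", "c.csv": b"5"}, "update")
    print(changed_paths(first, second))
```

Because the ID depends only on content and parent, identical data always yields the same commit ID, which is what lets a pipeline skip reprocessing unchanged inputs.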

Standout Capabilities

- Versioned data pipelines

- Data lineage tracking

- Reproducible workflows

- Git‑like data model

- Scalable processing

AI‑Specific Depth

- Model support: BYO

- RAG / knowledge integration: Data store integration

- Evaluation: Data validation and lineage tests

- Guardrails: Policy controls

- Observability: Pipeline metrics

Pros

- Strong data lineage

- Reproducibility

- Scales with data

Cons

- Focuses on data rather than the complete model lifecycle

- Requires expertise

- UI is basic

Security & Compliance

- Encryption, access control

- Certifications: Varies

Deployment & Platforms

- Cloud / On‑prem

Integrations & Ecosystem

- Storage backends

- Pipeline tools

- Data sources

Pricing Model

Subscription / Open options

Best‑Fit Scenarios

- Data lineage focus

- Reproducible pipelines

- Data‑heavy environments

8 — Valohai

One‑line verdict: Flexible enterprise MLOps with strong workflow orchestration.

Short description: Valohai provides pipeline automation, experiment tracking, deployment, and monitoring.

Standout Capabilities

- Pipeline orchestration

- Experiment versioning

- Model deployment

- Monitoring

- Team collaboration

AI‑Specific Depth

- Model support: BYO models

- RAG / knowledge integration: Data connectors

- Evaluation: Versioned tracking

- Guardrails: RBAC, policy controls

- Observability: Metrics and logs

Pros

- Flexible orchestration

- Good visibility

- Team collaboration

Cons

- Requires setup

- Enterprise tiers carry additional cost

- Some features require scripting

Security & Compliance

- SSO, encryption

- Certifications: Varies

Deployment & Platforms

- Cloud / Hybrid

Integrations & Ecosystem

- CI/CD

- Data sources

- Monitoring and alerting tools

Pricing Model

Tiered subscription

Best‑Fit Scenarios

- Cross‑team workflows

- Pipeline automation

- Hybrid environments

9 — Neptune AI

One‑line verdict: Best for experiment tracking and metadata management in MLOps.

Short description: Neptune provides a centralized store for experiments, metrics, and model metadata.

Standout Capabilities

- Experiment metadata store

- Metric tracking

- Model registry

- Visualization dashboards

- Collaboration tools

AI‑Specific Depth

- Model support: BYO

- RAG / knowledge integration: N/A

- Evaluation: Experiment comparison

- Guardrails: Access controls

- Observability: Dashboards

Pros

- Strong metadata management

- Easy tracking

- Team collaboration

Cons

- Not a full pipeline tool

- Requires complementing tools

- Limited deployment automation

Security & Compliance

- RBAC, encryption

- Certifications: Varies

Deployment & Platforms

- Cloud / Hybrid

Integrations & Ecosystem

- Python SDK

- CI/CD

- Data storage

Pricing Model

Subscription

Best‑Fit Scenarios

- Experiment‑focused teams

- Metadata tracking

- Collaborative labs

10 — Allegro AI

One‑line verdict: Best for computer vision MLOps with data labeling and model management.

Short description: Allegro AI focuses on vision workflows with dataset versioning, labeling, experiment tracking, and deployment.

Standout Capabilities

- Vision dataset management

- Versioning and labeling

- Experiment tracking

- Model deployment support

- Monitoring

AI‑Specific Depth

- Model support: Vision‑optimized, BYO

- RAG / knowledge integration: N/A

- Evaluation: Visual model validation

- Guardrails: Policy controls

- Observability: Dashboards

Pros

- Excellent vision support

- Dataset versioning

- Built‑in labeling tools

Cons

- Focused on vision

- Less general pipeline tooling

- Enterprise pricing

Security & Compliance

- Access control, encryption

- Certifications: Varies

Deployment & Platforms

- Cloud / Hybrid

Integrations & Ecosystem

- Vision libraries

- Storage backends

- CI/CD

Pricing Model

Subscription

Best‑Fit Scenarios

- Computer vision workflows

- Dataset‑centric teams

- Vision model deployment

Comparison Table

| Tool | Best For | Deployment | Model Flexibility | Strength | Watch‑Out | Public Rating |
|---|---|---|---|---|---|---|
| Kubeflow | Kubernetes workflows | Cloud / On‑prem | Open / BYO | Native pipelines | Requires expertise | N/A |
| MLflow | Experiment tracking | Cloud / On‑prem | Open / BYO | Model registry | Pipeline limits | N/A |
| TFX | TensorFlow pipelines | Cloud / On‑prem | TensorFlow | Validation & pipelines | TF‑centric | N/A |
| Domino Data Lab | Enterprise governance | Cloud / On‑prem | Broad | Enterprise features | Premium cost | N/A |
| DataRobot MLOps | Automated prod | Cloud | Proprietary | Monitoring & governance | Stack tied | N/A |
| SageMaker MLOps | AWS native | Cloud | AWS models | Managed workflows | AWS lock‑in | N/A |
| Pachyderm | Data pipelines | Cloud / On‑prem | BYO | Data lineage | Less model focus | N/A |
| Valohai | Workflow automation | Cloud / Hybrid | BYO | Orchestration | Setup required | N/A |
| Neptune AI | Metadata & experiments | Cloud / Hybrid | BYO | Tracking | Not full‑stack | N/A |
| Allegro AI | Vision MLOps | Cloud / Hybrid | BYO | Vision workflows | Focused niche | N/A |

Scoring & Evaluation

| Tool | Core | Reliability | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Total |
|---|---|---|---|---|---|---|---|---|---|
| Kubeflow | 9 | 8 | 8 | 8 | 7 | 7 | 8 | 7 | 7.9 |
| MLflow | 8 | 7 | 7 | 8 | 8 | 7 | 7 | 7 | 7.4 |
| TFX | 8 | 8 | 8 | 7 | 7 | 7 | 7 | 7 | 7.6 |
| Domino Data Lab | 9 | 8 | 9 | 9 | 7 | 7 | 9 | 8 | 8.3 |
| DataRobot MLOps | 9 | 9 | 9 | 8 | 7 | 8 | 9 | 8 | 8.5 |
| SageMaker MLOps | 9 | 9 | 9 | 9 | 7 | 8 | 9 | 8 | 8.7 |
| Pachyderm | 7 | 7 | 7 | 7 | 7 | 7 | 7 | 7 | 7.0 |
| Valohai | 8 | 8 | 8 | 8 | 7 | 7 | 8 | 7 | 7.7 |
| Neptune AI | 7 | 7 | 7 | 7 | 8 | 7 | 7 | 7 | 7.2 |
| Allegro AI | 7 | 8 | 7 | 7 | 7 | 7 | 7 | 7 | 7.3 |
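
The table does not state how Total is derived from the eight criteria. Teams that want to re‑rank the tools against their own priorities could compute a weighted average along the following lines. The weights here are hypothetical, so the results will not necessarily match the Total column; `weighted_total` and `rank` are illustrative helper names.

```python
CRITERIA = ["core", "reliability", "guardrails", "integrations",
            "ease", "perf_cost", "security_admin", "support"]

# Hypothetical weights (must sum to 1.0); adjust to your own priorities.
WEIGHTS = {
    "core": 0.20, "reliability": 0.15, "guardrails": 0.15,
    "integrations": 0.15, "ease": 0.10, "perf_cost": 0.10,
    "security_admin": 0.10, "support": 0.05,
}


def weighted_total(scores, weights=WEIGHTS):
    """Weighted average of per-criterion scores on a 0-10 scale."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores: {sorted(missing)}")
    return sum(scores[name] * weight for name, weight in weights.items())


def rank(tools, weights=WEIGHTS):
    """Return (tool, total) pairs sorted from highest to lowest total."""
    totals = {name: weighted_total(s, weights) for name, s in tools.items()}
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Per-criterion scores copied from two rows of the table above.
    tools = {
        "Kubeflow": dict(zip(CRITERIA, [9, 8, 8, 8, 7, 7, 8, 7])),
        "Pachyderm": dict(zip(CRITERIA, [7, 7, 7, 7, 7, 7, 7, 7])),
    }
    for name, total in rank(tools):
        print(f"{name}: {total:.2f}")
```

Changing the weights is the point of the exercise: a regulated team might raise `guardrails` and `security_admin`, while a small team might raise `ease`.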

Top 3 for Enterprise: SageMaker MLOps, DataRobot MLOps, Domino Data Lab

Top 3 for SMB: MLflow, Valohai, Neptune AI

Top 3 for Developers: Kubeflow, TFX, Pachyderm

Which MLOps Lifecycle Management Platform Is Right for You

Solo / Freelancer

MLflow and Neptune AI provide lightweight tracking and experiment management without heavy infrastructure.

SMB

Valohai, MLflow, and Pachyderm balance automation and cost for growing teams.

Mid‑Market

Kubeflow and TFX add pipeline automation with flexibility for hybrid environments.

Enterprise

SageMaker MLOps, DataRobot MLOps, and Domino Data Lab support end‑to‑end production, governance, and monitoring.

Regulated Industries

Platforms with strong audit trails, governance, and access control such as Domino and DataRobot suit compliance needs.

Budget vs Premium

Open‑source tools like MLflow and Kubeflow reduce cost; premium options provide turn‑key automation.

Build vs Buy

DIY is feasible with open‑source stacks; production reliability often benefits from enterprise platforms.

Implementation Playbook

30 Days: Pilot a representative workflow with tracking, pipelines, and validation.

60 Days: Harden security, integrate monitoring, enforce guardrails, and expand automation.

90 Days: Optimize cost and performance, implement governance policies, and scale across teams.

Common Mistakes & How to Avoid Them

- No experiment tracking or versioning

- Ignoring drift monitoring

- No guardrails or policy enforcement

- Siloed data and model repositories

- Manual deployment processes

- Lack of observability and alerting

- No CI/CD integration

- Weak access controls

- No cost monitoring

- Ignoring governance and audit trails

- Inconsistent testing workflows

- No retraining triggers

- Training without production readiness

- Lacking rollback capabilities
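
Several of these mistakes (no versioning, manual deployment, no rollback) share one remedy: a registry that numbers every model version and remembers what was promoted. The following is a minimal in‑memory sketch of that idea, not the API of any platform listed here; `ModelRegistry` and the artifact paths are hypothetical.

```python
class ModelRegistry:
    """In-memory registry: numbered versions plus a production pointer."""

    def __init__(self):
        self._versions = []             # index i holds version i + 1
        self._production_history = []   # stack of promoted version numbers

    def register(self, artifact_uri, metrics):
        """Store a new model version and return its version number."""
        self._versions.append({"uri": artifact_uri, "metrics": metrics})
        return len(self._versions)

    def promote(self, version):
        """Mark a registered version as the current production model."""
        if not 1 <= version <= len(self._versions):
            raise ValueError(f"unknown version {version}")
        self._production_history.append(version)

    def rollback(self):
        """Revert production to the previously promoted version."""
        if len(self._production_history) < 2:
            raise RuntimeError("no earlier production version to roll back to")
        self._production_history.pop()
        return self._production_history[-1]

    @property
    def production(self):
        """Current production version number, or None if nothing is promoted."""
        if not self._production_history:
            return None
        return self._production_history[-1]


if __name__ == "__main__":
    registry = ModelRegistry()
    v1 = registry.register("models/churn/1", {"auc": 0.90})  # placeholder path
    v2 = registry.register("models/churn/2", {"auc": 0.92})  # placeholder path
    registry.promote(v1)
    registry.promote(v2)
    print("rolled back to version", registry.rollback())
```

Keeping the promotion history as a stack makes rollback a single, well‑defined operation instead of a manual redeploy.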

FAQs

1. What is MLOps Lifecycle Management?

MLOps Lifecycle Management encompasses automation of ML workflows from experimentation to deployment, monitoring, and governance.

2. Do these tools handle monitoring?

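Many do. The enterprise platforms include performance monitoring, drift detection, and alerts, while tracking‑focused tools such as MLflow and Neptune AI usually need a complementary monitoring tool.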

3. Can I use these platforms with any model?

Support varies; open‑source tools are flexible, while enterprise tools may favor their own ecosystems.

4. How do I start?

Pilot with experiment tracking, then scale to pipelines and deployment automation.

5. Are open‑source tools production‑ready?

With proper integration and governance, yes; enterprise options simplify operations.

6. Do these tools support CI/CD?

Most integrate with CI/CD systems for automated workflows.
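
As an illustration of what such an automated workflow might check, a CI job can compare a candidate model's metrics against fixed floors and against the current production model before allowing promotion. This is a sketch under stated assumptions: `evaluate_gate`, the metric names, and the thresholds are hypothetical, and all metrics are assumed to be higher‑is‑better.

```python
def evaluate_gate(candidate, production, min_metrics, max_regression=0.01):
    """Decide whether a candidate model may be promoted.

    candidate / production: metric name -> value (higher is better).
    min_metrics: absolute floors every candidate must meet.
    max_regression: allowed drop relative to the production model.
    Returns a list of failure messages; an empty list means the gate passes.
    """
    failures = []
    for name, floor in min_metrics.items():
        value = candidate.get(name)
        if value is None:
            failures.append(f"{name}: metric missing")
        elif value < floor:
            failures.append(f"{name}: {value:.3f} below floor {floor:.3f}")
    for name, prod_value in production.items():
        value = candidate.get(name)
        if value is not None and prod_value - value > max_regression:
            failures.append(
                f"{name}: regressed from {prod_value:.3f} to {value:.3f}")
    return failures


if __name__ == "__main__":
    problems = evaluate_gate(
        candidate={"auc": 0.90, "recall": 0.70},
        production={"auc": 0.92},
        min_metrics={"auc": 0.85, "recall": 0.75},
    )
    for problem in problems:
        print("FAIL:", problem)
```

In a pipeline, the job would exit with a non‑zero status whenever the returned list is not empty, which blocks the deployment stage.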

7. How is security handled?

RBAC, encryption, and compliance controls are common.

8. Can I use my own cloud?

Yes, many support hybrid and multi‑cloud deployments.

9. What is drift detection?

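Detecting changes over time in input data or prediction distributions (data drift) or in the relationship between inputs and outcomes (concept drift) that can degrade model accuracy, and alerting when they occur.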
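
One common way to quantify drift in a single numeric feature is the Population Stability Index (PSI), which compares the binned distribution of a reference sample with that of a recent sample. The sketch below is a simple standard‑library version under stated assumptions: equal‑width bins taken from the reference sample, and a small floor for empty bins. The function name and defaults are illustrative, not taken from any platform above.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a reference sample and a recent sample of one feature."""
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("reference sample has no spread")
    width = (hi - lo) / bins

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Clamp out-of-range values into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    reference = [i / 100 for i in range(100)]
    shifted = [v + 0.5 for v in reference]
    print(f"PSI vs. itself:  {population_stability_index(reference, reference):.3f}")
    print(f"PSI vs. shifted: {population_stability_index(reference, shifted):.3f}")
```

A frequently cited rule of thumb treats PSI below roughly 0.1 as stable and above roughly 0.25 as a significant shift, but suitable thresholds depend on the feature and the use case.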

10. Are these tools expensive?

Costs vary; open‑source tools carry no license fee but still incur infrastructure and maintenance costs, while enterprise tiers add features and support.

11. What languages do they support?

Python is the most common; support for other languages depends on the platform.

12. Do they replace data science?

No; they augment workflows but require human oversight.

Conclusion

MLOps Lifecycle Management Platforms automate and govern the full model lifecycle, reducing operational friction and helping teams scale machine learning reliably. For enterprises, SageMaker MLOps, DataRobot MLOps, and Domino Data Lab provide robust automation and governance. Open‑source options like MLflow, Kubeflow, and TFX suit flexible, customizable pipelines. Evaluate platform needs, pilot workflows early, and embed monitoring and governance before scaling. Human oversight remains necessary to keep models aligned with business goals and responsible AI use.
