Introduction

Hallucination detection tools are platforms and frameworks that identify, evaluate, and reduce incorrect, fabricated, misleading, or ungrounded outputs from large language models and other generative AI systems. They help organizations improve the trustworthiness, factuality, reliability, and safety of AI applications by catching hallucinated responses before they create operational, legal, financial, or reputational risk.

As enterprises increasingly deploy LLMs for customer support, legal analysis, healthcare assistance, coding copilots, enterprise search, and autonomous agents, hallucination detection has become critical infrastructure rather than an optional enhancement. Modern hallucination detection systems use semantic similarity analysis, grounding validation, retrieval verification, chain-of-thought analysis, confidence estimation, statistical scoring, LLM-as-a-judge workflows, and embedding consistency techniques to evaluate AI outputs.

Real-world use cases include validating RAG responses against source documents, detecting fabricated legal citations, preventing false financial advice, monitoring customer support hallucinations, securing AI coding assistants, and enforcing factual consistency in enterprise AI systems.
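
To make the first use case concrete, here is a minimal sketch of validating a RAG answer against its source documents. It uses plain token overlap as a crude stand-in for the semantic similarity or entailment models that real tools rely on. The function names and the 0.6 threshold are illustrative assumptions, not any vendor's API.

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercase word tokens; a crude proxy for semantic matching."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after ., ! or ? when followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]

def support_score(sentence: str, sources: list[str]) -> float:
    """Fraction of the sentence's tokens found in the best-matching source."""
    tokens = tokenize(sentence)
    if not tokens:
        return 1.0  # nothing to verify
    return max(
        (len(tokens & tokenize(src)) / len(tokens) for src in sources),
        default=0.0,  # no sources at all means no support
    )

def ungrounded_sentences(
    answer: str, sources: list[str], threshold: float = 0.6
) -> list[str]:
    """Return answer sentences whose support falls below the (assumed) threshold."""
    return [s for s in split_sentences(answer) if support_score(s, sources) < threshold]
```

Given a source stating "The refund window is 30 days from the date of purchase." and an answer that adds "Refunds are paid in bitcoin within one hour.", only the second sentence is returned as ungrounded. Lexical overlap misses paraphrases and can be fooled by word reuse, so treat this as a baseline to compare stronger detectors against.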

Key evaluation criteria include factuality scoring accuracy, latency overhead, integration flexibility, explainability, observability, governance controls, regression testing support, scalability, multi-model compatibility, alerting systems, and cost efficiency.

Best for: enterprise AI teams, LLMOps engineers, AI governance groups, regulated industries, and organizations deploying production generative AI systems

Not ideal for: lightweight prototypes, experimental hobby projects, or applications where factual correctness is not business critical

What’s Changed in Hallucination Detection Tools

- LLM hallucination detection became production infrastructure rather than experimental tooling

- Real-time hallucination screening with sub-200ms latency emerged for production systems

- Multi-method detection combining embeddings, CoT analysis, and semantic evaluation gained popularity

- Increased focus on RAG grounding verification and retrieval consistency

- LLM-as-a-judge architectures became mainstream for semantic evaluation

- Drift monitoring now extends to hallucination trends over time

- Enterprise governance and auditability requirements expanded rapidly

- Statistical uncertainty estimation techniques improved hallucination detection robustness

- Open-source hallucination evaluation frameworks matured significantly

- Integration with CI/CD and automated testing workflows accelerated

- Hallucination monitoring expanded into multimodal AI systems

- Awareness grew of security risks such as package hallucination and slopsquatting, where attackers register package names that models tend to invent
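
The package hallucination risk in the last item can be screened with a simple check on generated code before anything is installed. The sketch below parses imports from model-generated Python and reports any top-level module that is neither in the standard library nor on a team-maintained allowlist. The allowlist and function names are hypothetical, and import names do not always match distribution names (for example `yaml` is installed as `PyYAML`), so a real check would also need that mapping.

```python
import ast
import sys

def imported_top_level_modules(source_code: str) -> set[str]:
    """Collect top-level module names from import statements in the code."""
    modules: set[str] = set()
    for node in ast.walk(ast.parse(source_code)):
        if isinstance(node, ast.Import):
            for alias in node.names:
                modules.add(alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom):
            # Skip relative imports (level > 0); they refer to local code.
            if node.level == 0 and node.module:
                modules.add(node.module.split(".")[0])
    return modules

def unvetted_imports(source_code: str, approved: set[str]) -> set[str]:
    """Modules that are neither standard library nor on the approved list."""
    # sys.stdlib_module_names exists on Python 3.10+; fall back to empty otherwise.
    stdlib = getattr(sys, "stdlib_module_names", frozenset())
    return {
        name
        for name in imported_top_level_modules(source_code)
        if name not in approved and name not in stdlib
    }
```

Anything this returns should be reviewed by a person before installation, since a hallucinated name may already have been registered by an attacker.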

Quick Buyer Checklist

- Hallucination and factuality scoring accuracy

- Real-time detection latency

- RAG grounding validation support

- Explainability and root-cause analysis

- Multi-LLM compatibility

- CI/CD and regression testing integration

- Embedding and vector observability

- Governance and audit controls

- Alerting and remediation workflows

- Scalability for high inference volumes

- Cost and token usage visibility

- Hybrid or on-prem deployment support

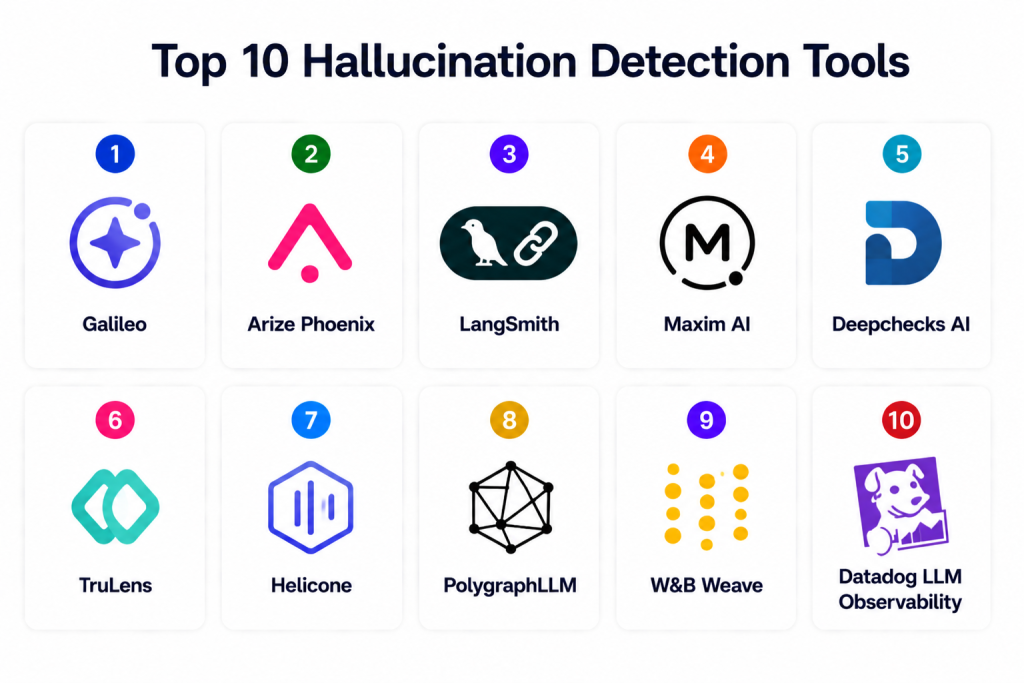

Top 10 Hallucination Detection Tools

1 — Galileo

One-line verdict: Best overall hallucination detection platform for enterprise production AI reliability.

Short description: Galileo provides enterprise hallucination detection, factuality scoring, evaluation workflows, runtime guardrails, and production observability for LLM applications. Its Luna-2 system emphasizes real-time hallucination protection with low latency.

Standout Capabilities

- Multi-method hallucination detection

- Embedding similarity scoring

- Chain-of-thought evaluation

- Runtime hallucination guardrails

- Automated root-cause analysis

- Production observability

- Real-time detection workflows

AI-Specific Depth

- Model support: Hosted / BYO / multi-model

- RAG / knowledge integration: Retrieval validation support

- Evaluation: G-Eval and semantic scoring

- Guardrails: Runtime hallucination blocking

- Observability: Full lifecycle dashboards

Pros

- Enterprise-grade detection stack

- Strong real-time protection

- Comprehensive observability

Cons

- Premium pricing

- Enterprise-focused onboarding

- Advanced workflows require tuning

Security & Compliance

- RBAC, encryption, governance workflows

- Certifications: Varies / Not publicly stated

Deployment & Platforms

- Cloud / Hybrid / On-prem

Integrations & Ecosystem

- LLM pipelines

- RAG systems

- CI/CD workflows

- Evaluation frameworks

- Data platforms

Pricing Model

Enterprise subscription

Best-Fit Scenarios

- Enterprise AI governance

- Real-time hallucination blocking

- Large-scale LLM production systems

2 — Arize Phoenix

One-line verdict: Best for open-source hallucination analysis and LLM observability.

Short description: Arize Phoenix combines observability, embedding analysis, tracing, and hallucination evaluation into a lightweight but scalable platform for monitoring production LLM systems.

Standout Capabilities

- Embedding analysis

- Hallucination evaluators

- Trace visualization

- Semantic drift monitoring

- Prompt and output observability

- Root-cause debugging

- Open-source ecosystem

AI-Specific Depth

- Model support: Multi-model / BYO

- RAG / knowledge integration: Embedding tracing

- Evaluation: Hallucination evaluators

- Guardrails: Alerting and policies

- Observability: Trace dashboards

Pros

- Strong open-source adoption

- Excellent tracing workflows

- Good RAG observability

Cons

- Requires engineering setup

- Enterprise features require scaling

- Less turnkey than premium SaaS

Security & Compliance

- Depends on deployment model

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud / On-prem / Hybrid

Integrations & Ecosystem

- Vector DBs

- RAG pipelines

- LLM frameworks

- Experiment tracking

Pricing Model

Open-source / enterprise support

Best-Fit Scenarios

- RAG hallucination analysis

- Open-source LLMOps

- Developer-centric observability

3 — LangSmith

One-line verdict: Ideal for prompt tracing, debugging, and hallucination regression testing.

Short description: LangSmith helps teams monitor chains, prompts, hallucinations, and output quality through tracing, evaluation pipelines, and experiment comparison workflows.

Standout Capabilities

- Prompt and chain tracing

- Hallucination regression analysis

- Workflow debugging

- Experiment comparison

- Multi-model evaluation

- Prompt/output lineage

- Evaluation dashboards

AI-Specific Depth

- Model support: BYO / hosted

- RAG / knowledge integration: Connector support

- Evaluation: Human and automated evaluation

- Guardrails: Policy workflows

- Observability: Traces and dashboards

Pros

- Excellent debugging workflows

- Chain visualization

- Flexible evaluation systems

Cons

- Premium pricing

- Requires engineering maturity

- Learning curve for advanced use

Security & Compliance

- RBAC and API security

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud / SaaS

Integrations & Ecosystem

- LangChain

- LLM APIs

- Vector databases

- Experiment frameworks

Pricing Model

Subscription

Best-Fit Scenarios

- Prompt chain debugging

- Regression testing

- Multi-agent observability

4 — Maxim AI

One-line verdict: Strong enterprise platform for hallucination monitoring and evaluation workflows.

Short description: Maxim AI focuses on hallucination monitoring, cross-functional evaluation workflows, simulation testing, and enterprise collaboration for AI teams.

Standout Capabilities

- Hallucination simulation testing

- Prompt/output evaluations

- Cross-team workflows

- Real-time monitoring

- Regression tracking

- Evaluation automation

- Metrics dashboards

AI-Specific Depth

- Model support: Hosted / BYO

- RAG / knowledge integration: Retrieval validation

- Evaluation: Automated hallucination metrics

- Guardrails: Alerting and policy enforcement

- Observability: Evaluation dashboards

Pros

- Strong collaboration workflows

- Enterprise evaluation tooling

- Good simulation capabilities

Cons

- Newer ecosystem

- Enterprise-focused pricing

- Advanced setup required

Security & Compliance

- Enterprise access controls

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud / Hybrid

Integrations & Ecosystem

- Evaluation systems

- Prompt testing

- LLM APIs

- CI/CD

Pricing Model

Enterprise subscription

Best-Fit Scenarios

- Enterprise evaluation pipelines

- Hallucination simulation

- Multi-team AI governance

5 — Deepchecks AI

One-line verdict: Best open-source-first validation framework for hallucination testing.

Short description: Deepchecks provides validation pipelines, hallucination testing, regression workflows, and evaluation tooling for production AI systems.

Standout Capabilities

- Hallucination validation checks

- CI/CD integrations

- Automated regression testing

- Quality scoring

- Custom evaluation workflows

- Batch and streaming support

- Open-source extensibility

AI-Specific Depth

- Model support: Framework agnostic

- RAG / knowledge integration: Custom workflows

- Evaluation: Validation pipelines

- Guardrails: Automated checks

- Observability: Reports and dashboards

Pros

- Flexible open-source stack

- Strong testing workflows

- CI/CD friendly

Cons

- Engineering setup required

- Basic enterprise governance

- Less polished UI

Security & Compliance

- Depends on deployment

- Certifications: N/A

Deployment & Platforms

- Cloud / On-prem

Integrations & Ecosystem

- Python pipelines

- Testing frameworks

- Monitoring tools

Pricing Model

Open-source / enterprise tiers

Best-Fit Scenarios

- Validation-heavy workflows

- CI/CD hallucination testing

- Developer-focused monitoring

6 — TruLens

One-line verdict: Great for explainable hallucination evaluation in RAG systems.

Short description: TruLens focuses on evaluating groundedness, relevance, and hallucination risk in retrieval-augmented AI systems.

Standout Capabilities

- Groundedness scoring

- Relevance evaluation

- RAG quality analysis

- Explainable evaluations

- Open-source workflows

- Trace visualization

- Hallucination analysis

AI-Specific Depth

- Model support: Framework agnostic

- RAG / knowledge integration: Strong RAG focus

- Evaluation: Groundedness and relevance metrics

- Guardrails: Threshold-based controls

- Observability: Dashboards and traces

Pros

- Strong RAG support

- Open-source flexibility

- Explainable scoring

Cons

- Requires engineering expertise

- Limited enterprise workflows

- Setup complexity

Security & Compliance

- Depends on deployment

- Certifications: N/A

Deployment & Platforms

- Cloud / On-prem

Integrations & Ecosystem

- Vector databases

- LangChain

- LlamaIndex

- RAG frameworks

Pricing Model

Open-source

Best-Fit Scenarios

- RAG hallucination detection

- Explainable evaluation workflows

- Developer experimentation

7 — Helicone

One-line verdict: Best for lightweight hallucination analytics and observability.

Short description: Helicone combines analytics, observability, cost tracking, and prompt/output monitoring for production LLM applications.

Standout Capabilities

- Output analytics

- Hallucination trend monitoring

- Cost tracking

- Multi-model support

- Prompt tracing

- Regression dashboards

- API observability

AI-Specific Depth

- Model support: Hosted / BYO

- RAG / knowledge integration: Limited

- Evaluation: Metrics tracking

- Guardrails: Alerts and thresholds

- Observability: Usage dashboards

Pros

- Lightweight deployment

- Strong analytics

- Good cost visibility

Cons

- Limited governance

- Less semantic depth

- Enterprise features still maturing

Security & Compliance

- API access controls

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud / SaaS

Integrations & Ecosystem

- LLM APIs

- Dashboards

- Prompt pipelines

Pricing Model

Usage-based SaaS

Best-Fit Scenarios

- Lightweight observability

- Prompt analytics

- Cost-aware monitoring

8 — PolygraphLLM

One-line verdict: Best research-focused framework for customizable hallucination detection experimentation.

Short description: PolygraphLLM is an open-source library designed for hallucination detection experimentation and research workflows.

Standout Capabilities

- Open-source hallucination library

- Flexible detector architecture

- Research experimentation

- Custom evaluation methods

- Statistical analysis

- Token-level evaluation

- Framework extensibility

AI-Specific Depth

- Model support: Framework agnostic

- RAG / knowledge integration: Customizable

- Evaluation: Statistical and semantic analysis

- Guardrails: Custom implementations

- Observability: Research tooling

Pros

- Highly customizable

- Research-friendly

- Open-source flexibility

Cons

- Not enterprise-ready

- Requires advanced expertise

- Minimal UI and dashboards

Security & Compliance

- Depends on deployment

- Certifications: N/A

Deployment & Platforms

- On-prem / research environments

Integrations & Ecosystem

- Python

- Research frameworks

- LLM experimentation tools

Pricing Model

Open-source

Best-Fit Scenarios

- Academic research

- Experimental hallucination detection

- Custom detector development

9 — W&B Weave

One-line verdict: Excellent for developers needing hallucination scoring inside experimentation workflows.

Short description: Weights & Biases Weave supports evaluation, tracing, hallucination scoring, and monitoring inside AI experimentation environments.

Standout Capabilities

- Hallucination scoring

- Experiment tracking

- Trace analysis

- Regression workflows

- Evaluation dashboards

- Prompt comparison

- Collaborative experimentation

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Partial support

- Evaluation: Hallucination metrics

- Guardrails: Alert workflows

- Observability: Experiment dashboards

Pros

- Strong developer workflows

- Excellent experiment management

- Flexible integrations

Cons

- Requires engineering maturity

- Advanced features require setup

- Enterprise governance limited

Security & Compliance

- RBAC and access controls

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud / Hybrid

Integrations & Ecosystem

- W&B ecosystem

- ML experimentation

- Prompt pipelines

Pricing Model

Subscription / enterprise

Best-Fit Scenarios

- Experiment-heavy teams

- Hallucination benchmarking

- AI research workflows

10 — Datadog LLM Observability

One-line verdict: Best for infrastructure-centric hallucination monitoring and observability.

Short description: Datadog integrates hallucination detection into infrastructure monitoring, observability, tracing, and production AI telemetry.

Standout Capabilities

- LLM observability

- Hallucination detection workflows

- Infrastructure telemetry

- Prompt/output tracing

- RAG observability

- Alerting systems

- Enterprise dashboards

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Strong RAG observability

- Evaluation: LLM-as-a-judge workflows

- Guardrails: Alerting and thresholds

- Observability: Infrastructure + AI dashboards

Pros

- Strong observability stack

- Enterprise scalability

- Unified telemetry workflows

Cons

- Datadog ecosystem focus

- Pricing at scale

- Advanced setup complexity

Security & Compliance

- Enterprise controls and audit logging

- Certifications: Varies

Deployment & Platforms

- Cloud / Hybrid

Integrations & Ecosystem

- Infrastructure monitoring

- AI telemetry

- CI/CD

- Cloud platforms

Pricing Model

Usage-based enterprise pricing

Best-Fit Scenarios

- Infrastructure-centric AI monitoring

- Unified telemetry workflows

- Enterprise-scale observability

Comparison Table

| Tool | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| Galileo | Enterprise hallucination protection | Cloud/Hybrid/On-prem | Multi-model | Real-time detection | Premium pricing | N/A |
| Arize Phoenix | Open-source observability | Cloud/Hybrid/On-prem | Multi-model | Embedding tracing | Requires setup | N/A |
| LangSmith | Prompt tracing | Cloud | Multi-model | Chain debugging | Learning curve | N/A |
| Maxim AI | Enterprise evaluation | Cloud/Hybrid | BYO/Hosted | Simulation testing | Newer ecosystem | N/A |
| Deepchecks AI | Validation testing | Cloud/On-prem | Framework agnostic | CI/CD workflows | Engineering effort | N/A |
| TruLens | RAG evaluation | Cloud/On-prem | Framework agnostic | Groundedness scoring | Setup complexity | N/A |
| Helicone | Lightweight analytics | Cloud | BYO/Hosted | Cost visibility | Limited governance | N/A |
| PolygraphLLM | Research experimentation | On-prem | Framework agnostic | Custom detectors | Not enterprise-ready | N/A |
| W&B Weave | Experiment tracking | Cloud/Hybrid | Multi-model | Experiment workflows | Governance gaps | N/A |
| Datadog LLM Observability | Enterprise telemetry | Cloud/Hybrid | Multi-model | Unified monitoring | Cost at scale | N/A |

Scoring & Evaluation

Scoring is comparative and intended to help teams evaluate tradeoffs between enterprise readiness, flexibility, hallucination accuracy, observability depth, and governance. Enterprise tools typically score higher in governance and scalability, while open-source frameworks prioritize flexibility and developer customization. Teams should prioritize factuality accuracy, integration compatibility, and operational maturity over feature count alone.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| Galileo | 9 | 9 | 9 | 9 | 7 | 8 | 9 | 8 | 8.6 |
| Arize Phoenix | 9 | 8 | 8 | 9 | 8 | 8 | 8 | 8 | 8.2 |
| LangSmith | 8 | 8 | 8 | 9 | 8 | 8 | 8 | 8 | 8.0 |
| Maxim AI | 8 | 8 | 8 | 8 | 7 | 7 | 8 | 7 | 7.7 |
| Deepchecks AI | 8 | 8 | 7 | 8 | 8 | 9 | 7 | 7 | 7.9 |
| TruLens | 8 | 8 | 7 | 8 | 7 | 8 | 7 | 7 | 7.6 |
| Helicone | 7 | 7 | 7 | 8 | 9 | 8 | 7 | 7 | 7.5 |
| PolygraphLLM | 7 | 8 | 6 | 7 | 6 | 8 | 6 | 6 | 6.9 |
| W&B Weave | 8 | 8 | 7 | 9 | 8 | 8 | 7 | 8 | 7.9 |
| Datadog LLM Observability | 9 | 8 | 8 | 9 | 7 | 7 | 9 | 8 | 8.2 |
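
The weights behind the Weighted Total column are not stated here. An unweighted mean of Galileo's row gives 8.5 rather than the 8.6 shown, so the totals presumably use unequal weights. Teams that want to re-rank the tools by their own priorities can do so with a sketch like the one below. The criterion keys and the weights are hypothetical and will not reproduce the totals in the table.

```python
CRITERIA = (
    "core", "reliability_eval", "guardrails", "integrations",
    "ease", "perf_cost", "security_admin", "support",
)

# Hypothetical weights for illustration only; replace with your own priorities.
HYPOTHETICAL_WEIGHTS = {
    "core": 0.20, "reliability_eval": 0.20, "guardrails": 0.15,
    "integrations": 0.10, "ease": 0.10, "perf_cost": 0.10,
    "security_admin": 0.10, "support": 0.05,
}

def weighted_total(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted mean of per-criterion scores, rounded to one decimal place."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    weighted_sum = sum(scores[name] * w for name, w in weights.items())
    return round(weighted_sum / total_weight, 1)

# Galileo's row from the table above, keyed by criterion.
galileo = dict(zip(CRITERIA, (9, 9, 9, 9, 7, 8, 9, 8)))
```

Calling `weighted_total(galileo, HYPOTHETICAL_WEIGHTS)` applies the assumed weights. Dividing by the weight sum means the weights do not have to add up to exactly 1.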

Top 3 for Enterprise: Galileo, Datadog LLM Observability, Arize Phoenix

Top 3 for SMB: LangSmith, Deepchecks AI, Helicone

Top 3 for Developers: TruLens, PolygraphLLM, W&B Weave

Which Hallucination Detection Tool Is Right for You

Solo / Freelancer

Deepchecks AI, TruLens, and Helicone provide lightweight workflows suitable for experimentation, validation, and prompt evaluation without requiring enterprise infrastructure.

SMB

LangSmith, W&B Weave, and Arize Phoenix offer strong observability, debugging, and evaluation capabilities while remaining flexible enough for smaller engineering teams.

Mid-Market

Galileo and Datadog LLM Observability provide scalable hallucination detection and operational telemetry for organizations deploying production AI at growing scale.

Enterprise

Galileo, Arize Phoenix, Datadog LLM Observability, and Maxim AI provide governance, observability, scalability, and enterprise-grade hallucination monitoring.

Regulated Industries

Galileo and Datadog LLM Observability are strong options for governance-heavy environments due to auditability, observability, and policy workflows.

Budget vs Premium

Open-source frameworks such as TruLens, PolygraphLLM, and Deepchecks AI reduce software costs but require engineering investment. Enterprise SaaS platforms accelerate deployment and governance readiness.

Build vs Buy

Organizations with strong research teams may prefer open-source detector frameworks. Enterprises needing governance, support, dashboards, and operational workflows often benefit from managed commercial platforms.

Implementation Playbook

30 Days

- Identify critical hallucination risks

- Define factuality baselines and KPIs

- Integrate basic tracing and monitoring

- Establish alert thresholds

- Create evaluation datasets
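
As one way to establish alert thresholds, the sketch below tracks the share of flagged responses over a rolling window and signals when it exceeds a limit. The class name, window size, threshold, and minimum sample count are all assumptions to tune against your own baseline.

```python
from collections import deque

class HallucinationRateAlert:
    """Rolling-window alert on the fraction of responses flagged as hallucinated."""

    def __init__(self, window: int = 100, threshold: float = 0.05, min_samples: int = 20):
        self.flags: deque[bool] = deque(maxlen=window)  # oldest entries drop off
        self.threshold = threshold
        self.min_samples = min_samples

    def rate(self) -> float:
        """Current flagged fraction within the window (0.0 when empty)."""
        return sum(self.flags) / len(self.flags) if self.flags else 0.0

    def record(self, flagged: bool) -> bool:
        """Record one response; return True when the alert should fire."""
        self.flags.append(bool(flagged))
        if len(self.flags) < self.min_samples:
            return False  # too little data to judge
        return self.rate() > self.threshold
```

The minimum sample count keeps a single early false positive from paging anyone. Logging the rate over time also gives a simple view of hallucination trends.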

60 Days

- Implement regression testing workflows

- Add RAG grounding validation

- Integrate observability dashboards

- Configure governance and RBAC

- Validate evaluation quality
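
A regression testing workflow can start as a gate in CI that compares the hallucination rate of a candidate prompt or model against a baseline on a fixed evaluation set. In this sketch the `generate` callable stands in for your application, and `missing_required_fact` is a deliberately simple toy detector. Both, along with the case format, are assumptions for illustration.

```python
from typing import Callable

def hallucination_rate(
    cases: list[dict],
    generate: Callable[[str], str],
    is_hallucinated: Callable[[str, dict], bool],
) -> float:
    """Fraction of evaluation cases whose generated answer is flagged."""
    if not cases:
        raise ValueError("evaluation set is empty")
    flagged = sum(is_hallucinated(generate(case["question"]), case) for case in cases)
    return flagged / len(cases)

def missing_required_fact(answer: str, case: dict) -> bool:
    """Toy detector: flag the answer if the expected fact does not appear in it."""
    return case["required_fact"].lower() not in answer.lower()

def passes_regression_gate(
    baseline_rate: float, candidate_rate: float, tolerance: float = 0.0
) -> bool:
    """Pass only if the candidate is no worse than baseline plus tolerance."""
    return candidate_rate <= baseline_rate + tolerance
```

Running this after every prompt change catches cases where a wording tweak quietly raises the flagged rate. In practice the toy detector would be replaced with a grounding or judge-based check.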

90 Days

- Automate remediation workflows

- Expand hallucination monitoring across teams

- Optimize latency and cost controls

- Scale governance and audit workflows

- Continuously refresh evaluation datasets and recalibrate evaluators

Common Mistakes & How to Avoid Them

- Monitoring only accuracy without semantic evaluation

- Ignoring hallucinations in RAG systems

- Missing grounding validation workflows

- Over-reliance on one detection method

- No regression testing after prompt changes

- Lack of observability and traceability

- Ignoring latency introduced by detection pipelines

- Weak governance and auditability

- Missing human review for critical workflows

- Over-automation without fallback controls

- No hallucination benchmarks or datasets

- Ignoring multimodal hallucination risks

- Vendor lock-in without portability planning

- Failing to monitor hallucination trends over time

FAQs

1. What is hallucination detection in LLMs?

Hallucination detection identifies outputs generated by AI models that are fabricated, misleading, inconsistent, or not grounded in verified information.

2. Why are hallucination detection tools important?

These tools help organizations improve reliability, trust, governance, and factual correctness in production AI systems.

3. How do hallucination detection systems work?

Most use semantic analysis, retrieval validation, uncertainty estimation, or LLM-as-a-judge techniques to evaluate outputs.
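
Uncertainty estimation is often approximated by sampling the model several times on the same question and measuring how much the answers agree. The sketch below scores agreement by exact match after light normalization. That is a simplifying assumption, since production systems usually compare meaning rather than strings, and the sampling call itself is left out.

```python
from collections import Counter

def normalize(answer: str) -> str:
    """Lowercase, collapse whitespace, and drop trailing periods."""
    return " ".join(answer.lower().split()).rstrip(".")

def agreement_score(samples: list[str]) -> float:
    """Share of samples matching the most common normalized answer."""
    if not samples:
        raise ValueError("need at least one sample")
    counts = Counter(normalize(s) for s in samples)
    top_count = counts.most_common(1)[0][1]
    return top_count / len(samples)

def is_uncertain(samples: list[str], min_agreement: float = 0.7) -> bool:
    """Flag the question when samples disagree more than the assumed limit allows."""
    return agreement_score(samples) < min_agreement
```

Low agreement does not prove the answer is wrong, and high agreement does not prove it is right. It is one signal to combine with grounding checks.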

4. Can these tools detect hallucinations in RAG systems?

Yes. Many platforms specifically validate retrieval grounding and contextual consistency in RAG pipelines.

5. Are open-source hallucination detection tools available?

Yes. TruLens, PolygraphLLM, Deepchecks AI, and Arize Phoenix provide strong open-source workflows.

6. What industries need hallucination detection most?

Healthcare, finance, legal, cybersecurity, government, and customer support systems benefit heavily from hallucination prevention.

7. Do hallucination detection tools slow down inference?

Some introduce latency overhead, though modern systems increasingly optimize for near real-time detection.
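
To check the overhead on your own traffic, time the detector call separately and look at a tail percentile rather than the mean. The helper names below are hypothetical, and the percentile uses the simple nearest-rank method.

```python
import time
from typing import Any, Callable

def timed(fn: Callable[..., Any], *args: Any, **kwargs: Any) -> tuple[Any, float]:
    """Call fn and return (result, elapsed milliseconds)."""
    start = time.perf_counter()
    result = fn(*args, **kwargs)
    elapsed_ms = (time.perf_counter() - start) * 1000.0
    return result, elapsed_ms

def p95(values: list[float]) -> float:
    """Nearest-rank 95th percentile of the recorded latencies."""
    if not values:
        raise ValueError("no latency samples recorded")
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # integer ceiling of 0.95 * n
    return ordered[rank - 1]
```

Collecting `elapsed_ms` for each detector call and comparing `p95` against your latency budget shows whether detection can run inline or should move to an asynchronous path.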

8. Can hallucinations ever be eliminated completely?

No. Current LLM architectures are probabilistic and cannot guarantee zero hallucinations.

9. What is LLM-as-a-judge evaluation?

An LLM evaluates another model’s output for factuality, grounding, or quality using structured prompts.
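
A minimal sketch of that pattern: build a structured prompt, send it to a judge model, and parse a JSON verdict. The `call_model` parameter is a placeholder for whatever client you use, and the prompt wording and verdict schema are assumptions rather than any tool's actual format.

```python
import json
from typing import Callable

JUDGE_TEMPLATE = """You are a strict fact-checking judge.
Context:
{context}

Answer to evaluate:
{answer}

Reply with JSON only: {{"grounded": true or false, "reason": "<one sentence>"}}"""

def build_judge_prompt(context: str, answer: str) -> str:
    """Fill the structured prompt with the retrieved context and the answer."""
    return JUDGE_TEMPLATE.format(context=context, answer=answer)

def parse_verdict(raw: str) -> dict:
    """Parse the judge's reply; treat anything malformed as not grounded."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return {"grounded": False, "reason": "unparseable judge output"}
    if not isinstance(data, dict) or not isinstance(data.get("grounded"), bool):
        return {"grounded": False, "reason": "malformed judge output"}
    return {"grounded": data["grounded"], "reason": str(data.get("reason", ""))}

def judge(context: str, answer: str, call_model: Callable[[str], str]) -> dict:
    """Run one judge evaluation using the injected (hypothetical) model client."""
    return parse_verdict(call_model(build_judge_prompt(context, answer)))
```

Failing closed on malformed output is a design choice that suits high-risk workflows. Lower-risk applications might retry the judge instead. Judges can hallucinate too, so spot-check their verdicts against human review.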

10. Are hallucination detection tools compatible with all LLMs?

Most enterprise platforms support multiple hosted and BYO models.

11. How do teams benchmark hallucination detection quality?

Teams typically use evaluation datasets, regression tests, semantic similarity metrics, and human review workflows.

12. Do hallucination detection tools replace model monitoring?

No. They complement broader observability and MLOps systems by focusing specifically on factual reliability and grounding.

Conclusion

Hallucination detection tools have rapidly evolved into essential infrastructure for trustworthy generative AI systems. Open-source frameworks such as TruLens, Deepchecks AI, and PolygraphLLM provide flexibility for developers and research teams, while enterprise platforms like Galileo, Arize Phoenix, and Datadog LLM Observability deliver production-grade governance, observability, and scalability. As organizations increasingly rely on LLMs for critical workflows, hallucination monitoring must become deeply integrated into evaluation pipelines, CI/CD systems, and governance processes. The best solution depends on operational maturity, infrastructure ecosystem, latency requirements, and governance needs. Start by defining measurable hallucination KPIs, pilot evaluation workflows in high-risk systems, validate detection accuracy and latency, then scale observability and governance across all production AI applications.
