Introduction

Prompt Testing & Regression Suites are specialized platforms that allow teams to evaluate, test, and validate prompts for large language models (LLMs) and AI agents. These systems help ensure that prompt changes, updates, or new iterations do not degrade model performance, introduce biases, or produce unintended outputs. They are critical for teams deploying LLMs in production, where reliability, accuracy, and safety are essential.

Organizations use these suites to perform automated prompt regression tests, A/B testing, evaluation against benchmark datasets, and multi-scenario validation. Real-world use cases include:

- Validating prompts for virtual assistants or chatbots

- Regression testing after model or prompt updates

- Detecting hallucinations and output inconsistencies

- Ensuring multi-language prompt reliability

- Evaluating chained or complex prompt workflows

- Tracking prompt performance over time
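At its core, an automated prompt regression test runs a baseline prompt and a candidate prompt over the same fixed test cases, scores both, and flags the candidate if its score drops. The sketch below shows that loop in plain Python. The `fake_model` function, the substring check, and the 0.02 tolerance are all illustrative assumptions, not any vendor's API; a real suite would call an LLM and usually use richer scoring.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable

# Hypothetical model interface: any function that maps a rendered prompt to text.
Model = Callable[[str], str]


@dataclass
class TestCase:
    inputs: dict          # values substituted into the prompt template
    must_contain: str     # expected substring (a deliberately simple check)


def pass_rate(template: str, model: Model, cases: list[TestCase]) -> float:
    """Fraction of cases whose output contains the expected substring (case-insensitive)."""
    passed = sum(
        case.must_contain.lower() in model(template.format(**case.inputs)).lower()
        for case in cases
    )
    return passed / len(cases)


def is_regression(baseline: float, candidate: float, tolerance: float = 0.02) -> bool:
    """Flag a regression when the candidate scores more than `tolerance` below baseline."""
    return candidate < baseline - tolerance


def fake_model(prompt: str) -> str:
    # Stand-in for a real LLM call so the example runs offline.
    return "Paris is the capital of France." if "France" in prompt else "I am not sure."


cases = [TestCase({"country": "France"}, "Paris"), TestCase({"country": "Peru"}, "Lima")]
baseline = pass_rate("What is the capital of {country}?", fake_model, cases)
candidate = pass_rate("Name the capital of {country}.", fake_model, cases)
```

Both prompt versions score 0.5 here, so `is_regression(baseline, candidate)` is `False`. The tolerance keeps small, noisy score changes from failing a build.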

Key evaluation criteria include regression testing capabilities, automated evaluation pipelines, metrics dashboards, guardrails for safety, support for multi-model LLMs, integration with CI/CD, reproducibility, collaboration features, scalability, observability, and cost optimization.

Best for: AI/ML engineering teams, prompt engineers, and enterprises deploying LLMs in production

Not ideal for: teams using fixed prompts without frequent updates or those with minimal LLM experimentation

What’s Changed in Prompt Testing & Regression Suites

- Standardized regression test frameworks for LLM prompts

- Multi-scenario prompt testing for diverse outputs

- Automated metrics dashboards for prompt evaluation

- Guardrails to prevent unsafe or biased outputs

- Integration with CI/CD and LLM pipelines

- Multi-model support and versioned prompt libraries

- Observability for token usage, latency, and error tracking

- Reproducibility and rollback of prompt changes

- Support for chain-of-thought and multimodal prompts

- Alerting for regression failures

- Cost and latency monitoring for prompt tests

- Collaborative testing workflows for multiple teams
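Versioned prompt libraries with rollback, as listed above, usually come down to an append-only history plus a pointer to the live version. The following is a minimal in-memory sketch under that assumption; `PromptRegistry` and its methods are hypothetical names, and a real product would persist versions and record authors and timestamps.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class PromptRegistry:
    """Append-only prompt store: every save adds a new version; nothing is overwritten."""
    versions: dict = field(default_factory=dict)  # prompt name -> list of templates
    active: dict = field(default_factory=dict)    # prompt name -> index of the live version

    def save(self, name: str, template: str) -> int:
        history = self.versions.setdefault(name, [])
        history.append(template)
        self.active[name] = len(history) - 1  # newest version becomes active
        return self.active[name]

    def get(self, name: str) -> str:
        return self.versions[name][self.active[name]]

    def rollback(self, name: str, version: int) -> None:
        if not 0 <= version < len(self.versions[name]):
            raise ValueError(f"unknown version {version} for prompt {name!r}")
        # Rollback only moves the active pointer; newer versions stay in history.
        self.active[name] = version


registry = PromptRegistry()
registry.save("support-greeting", "Hello! How can I help you today?")
registry.save("support-greeting", "Hi there, what can I do for you?")
registry.rollback("support-greeting", 0)
```

Because rollback moves a pointer instead of deleting data, the rolled-back version remains available for later comparison or re-promotion.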

Quick Buyer Checklist

- Automated regression testing for prompts

- Metrics dashboards and performance tracking

- Multi-model and multi-LLM support

- Integration with CI/CD and LLM pipelines

- Guardrails and safety policies

- Versioning and rollback of prompts

- Observability and monitoring of outputs

- Multi-scenario and chain testing

- Collaboration and team management

- Cost and latency optimization

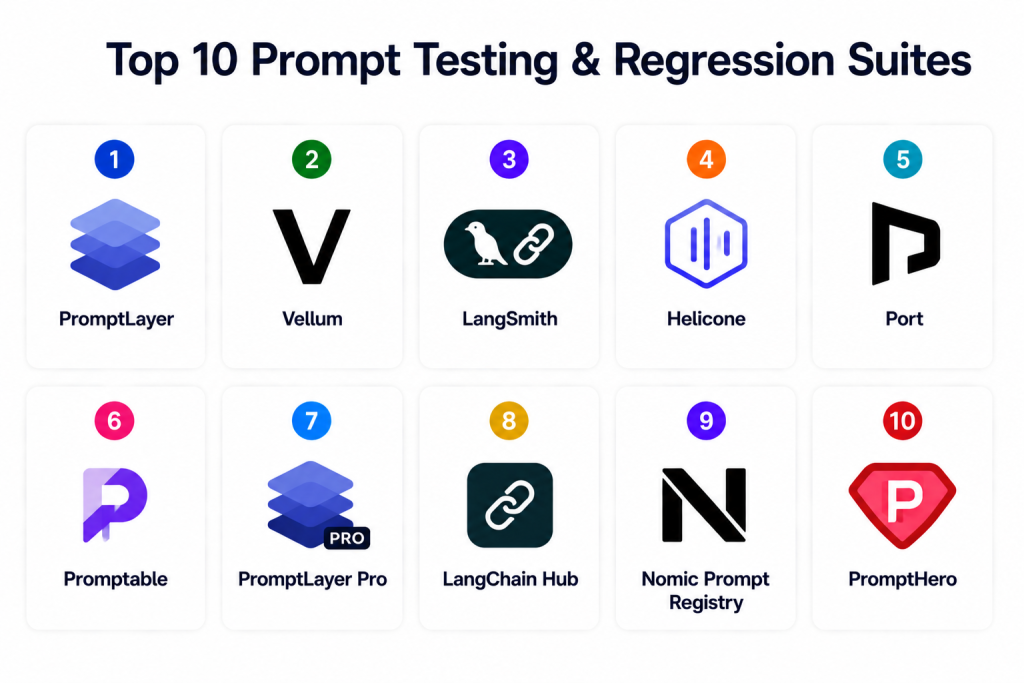

Top 10 Prompt Testing & Regression Suites

1 — PromptLayer

One-line verdict: Best for developers needing prompt logging, versioning, and regression tracking across LLM calls.

Short description: PromptLayer logs prompt executions, versions prompts, and enables regression testing for reproducibility and performance tracking.

Standout Capabilities

- Prompt logging and versioning

- Regression test history

- Performance metrics dashboard

- Multi-LLM API support

- Rollback capabilities

AI-Specific Depth

- Model support: BYO and hosted

- RAG / knowledge integration: N/A

- Evaluation: Prompt regression metrics

- Guardrails: Basic policy checks

- Observability: Logs and dashboards
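To make "basic policy checks" concrete, here is a generic sketch of an output guardrail: a regex blocklist plus a length limit, returning the reasons an output fails. This is not PromptLayer's implementation; the patterns and the 500-character limit are hypothetical examples.

```python
from __future__ import annotations

import re

# Hypothetical policy: a blocklist of regex patterns plus a maximum output length.
BLOCKED_PATTERNS = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),                                 # SSN-like number
    re.compile(r"ignore (all )?previous instructions", re.IGNORECASE),   # injection echo
]
MAX_CHARS = 500


def policy_violations(output: str) -> list[str]:
    """Return human-readable reasons the output fails the policy (empty list = pass)."""
    reasons = [f"matched {p.pattern!r}" for p in BLOCKED_PATTERNS if p.search(output)]
    if len(output) > MAX_CHARS:
        reasons.append(f"length {len(output)} exceeds {MAX_CHARS}")
    return reasons
```

Returning reasons rather than a bare boolean makes failed checks easier to log and review in a regression report.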

Pros

- Developer-friendly

- Easy integration with APIs

- Clear version history

Cons

- Limited enterprise governance

- No built-in retraining triggers

- Metrics may require additional setup

Security & Compliance

- API key access control

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud / SaaS

Integrations & Ecosystem

- LLM APIs

- Python SDK

- Experiment dashboards

Pricing Model

Tiered SaaS

Best-Fit Scenarios

- LLM experiment reproducibility

- Prompt regression testing

- Multi-LLM workflow tracking

2 — Vellum

One-line verdict: Enterprise-focused suite for visual prompt testing, versioning, and regression workflows.

Short description: Vellum provides visual workflows for prompts with regression testing, evaluation dashboards, and collaboration tools.

Standout Capabilities

- Visual workflow builder for prompts

- Regression testing across prompt versions

- Experiment metrics dashboards

- Multi-model support

- Approval and collaboration features

AI-Specific Depth

- Model support: BYO / hosted

- RAG / knowledge integration: Connectors

- Evaluation: Human-in-the-loop regression evaluation

- Guardrails: Policy enforcement

- Observability: Dashboards and logs
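One common way to aggregate human-in-the-loop regression reviews is pairwise preference: reviewers see the baseline and candidate outputs side by side and pick one, or call a tie. The sketch below computes the candidate's win rate under that assumption. It is not Vellum's method; the label names and the 0.5 promotion threshold are hypothetical.

```python
from __future__ import annotations

from collections import Counter


def win_rate(labels: list[str]) -> float:
    """Candidate win rate from pairwise human labels; ties count as half a win."""
    counts = Counter(labels)
    unknown = set(counts) - {"candidate", "baseline", "tie"}
    if unknown:
        raise ValueError(f"unexpected labels: {sorted(unknown)}")
    return (counts["candidate"] + 0.5 * counts["tie"]) / len(labels)


reviews = ["candidate", "baseline", "tie", "candidate"]
promote = win_rate(reviews) >= 0.5  # hypothetical promotion threshold
```

Here the candidate wins 2 of 4 comparisons and ties 1, for a win rate of 0.625.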

Pros

- Enterprise-grade

- Visual testing workflows

- Collaboration support

Cons

- Premium pricing

- Steep learning curve

- Integration setup required

Security & Compliance

- SSO, RBAC, encryption

- Certifications: Varies

Deployment & Platforms

- Cloud / SaaS

Integrations & Ecosystem

- LLM APIs

- CI/CD pipelines

- Knowledge connectors

Pricing Model

Enterprise subscription

Best-Fit Scenarios

- Enterprise prompt evaluation

- Multi-team collaboration

- Complex prompt pipelines

3 — LangSmith

One-line verdict: Ideal for debugging, regression testing, and evaluating multi-step prompt chains.

Short description: LangSmith enables prompt regression testing, debugging, and performance tracking for production LLM pipelines.

Standout Capabilities

- Regression testing of prompt outputs

- Trace visualization for multi-step chains

- Multi-model support

- Performance dashboards

- Version rollback and history
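Regression testing a multi-step chain is easier when each step's input and output is recorded as a trace, so a failure can be pinned to a specific step rather than only to the final answer. The sketch below illustrates that idea with toy steps; it is a generic illustration, not LangSmith's SDK, and every function and step name is hypothetical.

```python
from __future__ import annotations

from typing import Callable, Dict, List, Tuple

Step = Tuple[str, Callable[[str], str]]  # (step name, function applied to the previous output)


def run_chain(steps: List[Step], text: str) -> List[Dict[str, str]]:
    """Run steps in order and record each step's input and output as a trace."""
    trace = []
    for name, fn in steps:
        out = fn(text)
        trace.append({"step": name, "input": text, "output": out})
        text = out
    return trace


def failing_steps(trace: List[Dict[str, str]], checks: Dict[str, Callable[[str], bool]]) -> List[str]:
    """Names of steps whose output fails its check; steps without a check are skipped."""
    return [t["step"] for t in trace if t["step"] in checks and not checks[t["step"]](t["output"])]


# Toy two-step chain: normalize the question, then "answer" it.
steps = [
    ("normalize", lambda q: q.strip().lower()),
    ("answer", lambda q: "42" if "meaning" in q else ""),
]
checks = {"normalize": str.islower, "answer": lambda a: a != ""}
trace = run_chain(steps, "  What is the MEANING of life? ")
```

For this input every check passes; running the same chain on `"Why?"` makes `failing_steps` return `["answer"]`, which points directly at the step that regressed.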

AI-Specific Depth

- Model support: BYO / hosted

- RAG / knowledge integration: Connectors

- Evaluation: Regression metrics, human review

- Guardrails: Policy enforcement

- Observability: Logs and dashboards

Pros

- Chain visualization

- Multi-model workflows

- Debugging capabilities

Cons

- Premium pricing

- Setup effort for teams

- Learning curve

Security & Compliance

- RBAC and API controls

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud / SaaS

Integrations & Ecosystem

- LLM APIs

- Knowledge stores

- Experiment dashboards

Pricing Model

Subscription

Best-Fit Scenarios

- Complex multi-prompt workflows

- Regression tracking

- Multi-model evaluation
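Multi-model evaluation usually means running every prompt version against every model on the same test set and comparing the resulting grid. Below is a minimal sketch; the two "models" are toy lambdas with placeholder names, standing in for real provider calls, and the substring check is a simplifying assumption.

```python
from __future__ import annotations

from typing import Callable, Dict, List, Tuple


def eval_matrix(
    models: Dict[str, Callable[[str], str]],
    prompts: Dict[str, str],
    cases: List[Tuple[str, str]],  # (input text, expected substring)
) -> Dict[Tuple[str, str], float]:
    """Pass rate for every (model, prompt version) pair on the same test cases."""
    results = {}
    for model_name, model in models.items():
        for prompt_name, template in prompts.items():
            hits = sum(expected in model(template.format(input=text)) for text, expected in cases)
            results[(model_name, prompt_name)] = hits / len(cases)
    return results


# Toy stand-ins for two providers; the names are placeholders, not real models.
models = {"model-a": lambda p: p, "model-b": lambda p: p.upper()}
prompts = {"v1": "Repeat exactly: {input}", "v2": "Say: {input}"}
cases = [("hello", "hello"), ("goodbye", "goodbye")]
matrix = eval_matrix(models, prompts, cases)
```

Holding the test cases fixed across the grid is what makes a drop for one (model, prompt) pair attributable to that pair.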

4 — Helicone

One-line verdict: Analytics-focused suite for prompt performance and regression monitoring.

Short description: Helicone tracks prompt executions, evaluates performance metrics, and performs regression testing for cost and quality insights.

Standout Capabilities

- Prompt performance analytics

- Regression testing history

- Multi-LLM integration

- Cost and latency dashboards

- Experiment comparison
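Cost and latency dashboards of this kind aggregate per-call records by prompt version. The sketch below computes call count, total cost, and nearest-rank p95 latency for one version. It is a generic illustration, not Helicone's API, and the per-token prices are hypothetical placeholders.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class CallRecord:
    prompt_version: str
    input_tokens: int
    output_tokens: int
    latency_ms: float


# Hypothetical prices in USD per 1,000 tokens; substitute your provider's actual rates.
PRICE_PER_1K = {"input": 0.0005, "output": 0.0015}


def cost_usd(r: CallRecord) -> float:
    return (r.input_tokens * PRICE_PER_1K["input"] + r.output_tokens * PRICE_PER_1K["output"]) / 1000


def p95(values: list) -> float:
    """Nearest-rank 95th percentile of a non-empty list."""
    ordered = sorted(values)
    return ordered[math.ceil(0.95 * len(ordered)) - 1]


def summarize(records: list, version: str) -> dict:
    """Call count, total cost, and p95 latency for one prompt version."""
    rows = [r for r in records if r.prompt_version == version]
    return {
        "calls": len(rows),
        "total_cost_usd": sum(cost_usd(r) for r in rows),
        "p95_latency_ms": p95([r.latency_ms for r in rows]),
    }


records = [
    CallRecord("v1", 1000, 1000, 100.0),
    CallRecord("v1", 2000, 0, 300.0),
    CallRecord("v2", 10, 10, 50.0),
]
summary = summarize(records, "v1")
```

Comparing these summaries between two prompt versions turns "cost visibility" into a concrete regression check, for example failing a candidate whose p95 latency rises beyond an agreed budget.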

AI-Specific Depth

- Model support: Hosted / BYO

- RAG / knowledge integration: N/A

- Evaluation: Regression performance metrics

- Guardrails: Alerts for unsafe outputs

- Observability: Logs and dashboards

Pros

- Analytics-driven

- Cost visibility

- Multi-LLM support

Cons

- Focused on metrics

- Limited workflow management

- Not a full prompt editor

Security & Compliance

- API key access

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud / SaaS

Integrations & Ecosystem

- LLM APIs

- Experiment dashboards

Pricing Model

Usage-based SaaS

Best-Fit Scenarios

- Cost monitoring

- Performance regression

- Multi-model tracking

5 — Port

One-line verdict: Lightweight suite for prompt iteration, regression, and versioning.

Short description: Port focuses on prompt logging, versioning, and regression testing for rapid iteration and experimentation.

Standout Capabilities

- Prompt versioning

- Regression tracking

- Multi-LLM support

- Experiment dashboards

- Lightweight deployment

AI-Specific Depth

- Model support: BYO / hosted

- RAG / knowledge integration: N/A

- Evaluation: Regression metrics

- Guardrails: Basic access policies

- Observability: Logs

Pros

- Lightweight and easy to adopt

- Multi-LLM support

- Simple dashboards

Cons

- Limited enterprise features

- No chain or trace visualization

- Basic collaboration

Security & Compliance

- Access control

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud / SaaS

Integrations & Ecosystem

- LLM APIs

- Python SDK

Pricing Model

Tiered SaaS

Best-Fit Scenarios

- Small teams

- Iterative prompt testing

- Multi-model evaluation

6 — Promptable

One-line verdict: Collaborative regression suite with prompt evaluation and tracking.

Short description: Promptable centralizes prompt storage, enables regression tests, and supports collaborative review and experimentation.

Standout Capabilities

- Prompt repository

- Regression testing workflows

- Collaboration tools

- Multi-model tracking

- Version rollback

AI-Specific Depth

- Model support: BYO / hosted

- RAG / knowledge integration: N/A

- Evaluation: Regression metrics

- Guardrails: Access policies

- Observability: Dashboards

Pros

- Collaboration-focused

- Easy regression testing

- Multi-model support

Cons

- Limited enterprise governance

- Manual workflow required

- Premium cost

Security & Compliance

- RBAC

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud / SaaS

Integrations & Ecosystem

- LLM APIs

- Experiment dashboards

Pricing Model

Subscription

Best-Fit Scenarios

- Collaborative prompt engineering

- Regression testing

- Multi-team workflows

7 — PromptLayer Pro

One-line verdict: Enterprise-ready regression suite with governance, analytics, and multi-team support.

Short description: PromptLayer Pro extends PromptLayer with advanced analytics, approval workflows, and enterprise governance for prompt testing.

Standout Capabilities

- Regression testing with multi-team dashboards

- Approval and rollback workflows

- Metrics dashboards for evaluation

- Multi-model LLM support

- Enterprise-grade access control

AI-Specific Depth

- Model support: BYO / hosted

- RAG / knowledge integration: Connectors available

- Evaluation: Regression metrics and performance tracking

- Guardrails: Policy enforcement

- Observability: Usage dashboards

Pros

- Enterprise-ready

- Governance and analytics

- Multi-team collaboration

Cons

- Premium cost

- Setup complexity

- Less flexible for small teams

Security & Compliance

- RBAC, SSO, encryption

- Certifications: Varies

Deployment & Platforms

- Cloud / SaaS

Integrations & Ecosystem

- LLM APIs

- Knowledge connectors

- CI/CD

Pricing Model

Enterprise subscription

Best-Fit Scenarios

- Large prompt engineering teams

- Governance and audit workflows

- Multi-model pipelines

8 — LangChain Hub

One-line verdict: Best for chaining prompts and regression testing in collaborative workflows.

Short description: LangChain Hub enables prompt chain versioning, testing, and sharing across teams for complex LLM applications.

Standout Capabilities

- Versioned prompt chains

- Regression testing and comparisons

- Multi-team collaboration

- Integration with LangChain pipelines

- Metrics tracking and dashboards

AI-Specific Depth

- Model support: BYO / hosted

- RAG / knowledge integration: Vector DB connectors

- Evaluation: Regression metrics, human review

- Guardrails: Access control policies

- Observability: Dashboards and logs

Pros

- Chain-focused

- Team collaboration

- Integration with LangChain workflows

Cons

- LangChain-specific

- Learning curve

- Limited enterprise governance

Security & Compliance

- Access control

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud / SaaS

Integrations & Ecosystem

- LangChain

- Vector DBs

- Experiment dashboards

Pricing Model

Subscription

Best-Fit Scenarios

- LangChain teams

- Multi-model regression

- Collaborative testing

9 — Nomic Prompt Registry

One-line verdict: Lightweight prompt versioning and regression suite for small to mid-size teams.

Short description: Nomic stores prompts, tracks versions, and provides regression testing capabilities for iterative LLM development.

Standout Capabilities

- Prompt versioning and rollback

- Regression test logging

- Multi-LLM support

- Lightweight dashboards

- Experiment tracking

AI-Specific Depth

- Model support: BYO / hosted

- RAG / knowledge integration: N/A

- Evaluation: Regression metrics

- Guardrails: Access control

- Observability: Logs and dashboards

Pros

- Lightweight and easy to adopt

- Versioning support

- Metrics for regression

Cons

- Limited enterprise features

- Small community

- Basic dashboards

Security & Compliance

- Access control

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud / SaaS

Integrations & Ecosystem

- LLM APIs

- Experiment dashboards

Pricing Model

Subscription

Best-Fit Scenarios

- Iterative prompt testing

- Small teams

- Multi-model experimentation

10 — PromptHero

One-line verdict: Enterprise suite for prompt library management, regression, and collaboration.

Short description: PromptHero centralizes prompt storage, regression testing, versioning, and team collaboration for enterprise LLM deployments.

Standout Capabilities

- Centralized prompt library

- Regression testing workflows

- Multi-team collaboration

- Version rollback

- Metrics dashboards

AI-Specific Depth

- Model support: BYO / hosted

- RAG / knowledge integration: Connectors

- Evaluation: Regression metrics and evaluation

- Guardrails: Access control and policies

- Observability: Dashboards

Pros

- Enterprise features

- Collaboration tools

- Governance and auditability

Cons

- Premium pricing

- Setup required

- Platform-specific workflows

Security & Compliance

- RBAC, encryption, audit logs

- Certifications: Varies

Deployment & Platforms

- Cloud / SaaS

Integrations & Ecosystem

- LLM APIs

- Knowledge stores

- Experiment dashboards

Pricing Model

Enterprise subscription

Best-Fit Scenarios

- Enterprise teams

- Multi-team collaboration

- Governance-critical workflows

Comparison Table

| Tool | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| PromptLayer | Developer logging | Cloud | BYO/Hosted | Versioning | Limited enterprise | N/A |
| Vellum | Enterprise workflows | Cloud | BYO/Hosted | Visual pipelines | Premium | N/A |
| LangSmith | Chain debugging | Cloud | BYO/Hosted | Workflow visualization | Cost | N/A |
| Helicone | Analytics | Cloud | BYO/Hosted | Cost monitoring | Limited workflow | N/A |
| Port | Lightweight versioning | Cloud | BYO/Hosted | Simplicity | Limited governance | N/A |
| Promptable | Collaboration | Cloud | BYO/Hosted | Team workspace | Manual workflow | N/A |
| PromptLayer Pro | Enterprise | Cloud | BYO/Hosted | Governance | Premium | N/A |
| LangChain Hub | Chains & sharing | Cloud | BYO/Hosted | LangChain integration | LangChain-specific | N/A |
| Nomic | Lightweight registry | Cloud | BYO/Hosted | Metrics | Limited enterprise | N/A |
| PromptHero | Enterprise library | Cloud | BYO/Hosted | Governance & collaboration | Premium | N/A |

Scoring & Evaluation

| Tool | Core | Reliability | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Total |
|---|---|---|---|---|---|---|---|---|---|
| PromptLayer | 9 | 8 | 7 | 8 | 8 | 8 | 7 | 7 | 7.8 |
| Vellum | 9 | 8 | 8 | 8 | 7 | 7 | 8 | 7 | 7.8 |
| LangSmith | 9 | 9 | 8 | 8 | 7 | 8 | 8 | 7 | 7.9 |
| Helicone | 8 | 8 | 7 | 8 | 8 | 7 | 7 | 7 | 7.4 |
| Port | 7 | 7 | 7 | 7 | 8 | 7 | 7 | 7 | 7.1 |
| Promptable | 8 | 8 | 7 | 7 | 8 | 7 | 7 | 7 | 7.4 |
| PromptLayer Pro | 9 | 9 | 9 | 8 | 7 | 8 | 9 | 8 | 8.2 |
| LangChain Hub | 9 | 9 | 8 | 8 | 7 | 8 | 8 | 7 | 7.9 |
| Nomic | 7 | 7 | 7 | 7 | 8 | 7 | 7 | 7 | 7.1 |
| PromptHero | 9 | 9 | 9 | 8 | 7 | 8 | 9 | 8 | 8.2 |
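The table does not state how the Total column is derived. As a sketch, assuming it is a (possibly weighted) mean of the eight criteria, the helper below recomputes a total from one row. With equal weights, LangSmith's row averages 8.0 rather than the 7.9 shown, so the published totals may apply weights or rounding that are not listed. The weights in the usage are purely illustrative.

```python
from __future__ import annotations

CRITERIA = ["Core", "Reliability", "Guardrails", "Integrations",
            "Ease", "Perf/Cost", "Security/Admin", "Support"]


def weighted_total(scores: list, weights: list | None = None) -> float:
    """Weighted mean of the eight criterion scores, rounded to one decimal place."""
    if len(scores) != len(CRITERIA):
        raise ValueError(f"expected {len(CRITERIA)} scores, got {len(scores)}")
    weights = weights or [1.0] * len(CRITERIA)   # equal weights unless told otherwise
    if len(weights) != len(CRITERIA):
        raise ValueError("need one weight per criterion")
    return round(sum(s * w for s, w in zip(scores, weights)) / sum(weights), 1)


langsmith = [9, 9, 8, 8, 7, 8, 8, 7]   # LangSmith's row from the table above
```

Passing explicit weights, for example doubling Core with `[2, 1, 1, 1, 1, 1, 1, 1]`, lets a buyer re-rank the tools by the criteria that matter most to them.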

Top 3 for Enterprise: PromptLayer Pro, PromptHero, Vellum

Top 3 for SMB: LangSmith, LangChain Hub, Helicone

Top 3 for Developers: PromptLayer, Port, Nomic

Which Prompt Testing & Regression Suite Is Right for You

Solo / Freelancer

PromptLayer or Port for lightweight logging and regression testing.

SMB

LangSmith, LangChain Hub, or Helicone for multi-prompt evaluation workflows.

Mid-Market

Promptable or LangSmith for collaboration and regression analysis.

Enterprise

PromptLayer Pro, Vellum, or PromptHero for governance, metrics, and multi-team workflows.

Regulated Industries

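Enterprise platforms with access control and audit trails. Because most tools list certifications as "Not publicly stated" or "Varies", confirm compliance requirements directly with each vendor.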

Budget vs Premium

Open-source/lightweight for cost-conscious teams; managed suites for governance and collaboration.

Build vs Buy

Open-source registries for flexibility; enterprise platforms for production readiness.

Implementation Playbook

30 Days: Identify prompts, define regression tests, and log baseline metrics.

60 Days: Integrate pipelines, enforce guardrails, and automate testing.

90 Days: Scale multi-team usage, track performance, monitor regression results, and optimize workflows.
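Automating regression tests in a pipeline typically ends with a gate step that fails the build when a prompt change breaks previously passing cases. The sketch below assumes a hypothetical results format (a JSON object mapping test-case IDs to `true`/`false`) and a hypothetical script name; most CI systems treat a nonzero exit code as a failed job.

```python
from __future__ import annotations

import json
import sys


def load_results(path: str) -> dict:
    """Hypothetical file format: a JSON object mapping test-case IDs to true (pass) / false (fail)."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)


def gate(baseline: dict, candidate: dict) -> list:
    """IDs that passed on the baseline but fail, or are missing, on the candidate."""
    return sorted(t for t, ok in baseline.items() if ok and not candidate.get(t, False))


def main(baseline_path: str, candidate_path: str) -> int:
    regressions = gate(load_results(baseline_path), load_results(candidate_path))
    for test_id in regressions:
        print(f"REGRESSION: {test_id}")
    # A nonzero exit code is what makes most CI systems mark the job as failed.
    return 1 if regressions else 0


# Only runs when invoked as: python prompt_gate.py baseline.json candidate.json
if __name__ == "__main__" and len(sys.argv) == 3:
    sys.exit(main(sys.argv[1], sys.argv[2]))
```

Treating a missing result as a failure keeps a candidate from passing the gate simply by dropping test cases.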

Common Mistakes

- No prompt versioning

- Skipping regression tests

- Lack of guardrails

- Siloed prompt storage

- Ignoring multi-model evaluation

- No collaboration setup

- Limited metrics or dashboards

- Manual rollback

- Poor integration with pipelines

- Cost tracking omitted

- Overwriting previous prompts

- Weak observability

FAQs

1. What is a prompt regression suite?

A system for testing prompts to ensure new versions do not degrade model outputs.

2. Can these handle multiple LLMs?

Yes, most support BYO, hosted, or multi-model routing.

3. Are outputs reproducible?

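Partly. Versioning makes the inputs reproducible (prompt text, model, and parameters), but LLM outputs can still vary between runs because generation is often nondeterministic. Pinning the model version and lowering the temperature reduces that variation but does not guarantee identical outputs.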
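One way to make "same inputs" checkable is to fingerprint each run's configuration, so two runs can be compared only when their prompt, model, and parameters match. Below is a minimal sketch using a hash of canonical JSON; the model name is a hypothetical placeholder, and a fingerprint identifies the configuration, not the output.

```python
from __future__ import annotations

import hashlib
import json


def run_fingerprint(template: str, model: str, params: dict) -> str:
    """Stable ID for a run configuration: same prompt, model, and parameters give the same hash."""
    payload = json.dumps(
        {"template": template, "model": model, "params": params},
        sort_keys=True,          # key order must not change the hash
        separators=(",", ":"),   # fixed separators keep the serialization canonical
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:16]


params = {"temperature": 0, "max_tokens": 256}
fp = run_fingerprint("Summarize: {text}", "example-model-2024-01", params)
```

Storing this fingerprint alongside each test result makes it clear whether two differing outputs came from the same configuration (sampling variance) or from different ones (an actual change).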

4. Can I rollback a prompt?

Yes, version history allows rollback to prior iterations.

5. Do these suites include guardrails?

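Most include some guardrails, ranging from basic access policies to policy enforcement; enterprise suites typically add stronger safety policies and access control.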

6. Are metrics dashboards available?

Yes, performance, cost, and regression metrics are provided.

7. Do they integrate with CI/CD?

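Many do. Vellum and PromptLayer Pro list CI/CD integrations; with the others, tests can typically be triggered from a pipeline through their APIs or SDKs.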

8. Can chains of prompts be tested?

Yes, chain visualization and testing are supported in LangSmith and LangChain Hub.

9. Are enterprise compliance features included?

Yes, for enterprise suites like PromptLayer Pro, Vellum, and PromptHero.

10. Are these SaaS only?

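All ten tools covered here are listed as cloud/SaaS. Some vendors may offer self-hosted or hybrid options, so confirm deployment choices with each vendor.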

11. Can multiple teams collaborate?

Yes, enterprise suites include collaborative features.

12. Do these replace model monitoring?

No, they complement model monitoring with prompt lifecycle testing.

Conclusion

Prompt Testing & Regression Suites help ensure the reliability, reproducibility, and safety of prompts in LLM workflows. Lightweight tools like PromptLayer, Port, or Nomic suit developers and small teams, while enterprise solutions like Vellum, PromptLayer Pro, or PromptHero support governance and multi-team collaboration. Evaluate based on versioning, regression metrics, guardrails, and integration with LLM pipelines. Start with a pilot, enforce governance, and then scale across teams.
