Introduction

Model Serving Platforms are tools that deploy machine learning and AI models as scalable, reliable services for real‑time and batch inference. These platforms abstract away infrastructure complexity and handle load balancing, latency targets, monitoring, versioning, scaling, and other operational concerns, so teams can expose models to applications, APIs, and workflows.

As organizations build intelligent products and services, model serving becomes a crucial layer of the AI stack. Real‑world use cases include real‑time recommendation APIs, fraud detection services, personalized content generation, customer support automation, predictive maintenance, and AI‑driven user insights. Serving platforms make it possible to handle large volumes of inference traffic while ensuring high availability, performance, governance, and observability.

Buyers evaluating platforms should consider latency and throughput, batch vs real‑time capabilities, model versioning, scalability, ease of deployment, observability and metrics, security, CI/CD integration, hybrid/multi‑cloud support, and cost management.

Best for: ML engineers, AI platform teams, CTOs, data science teams in mid‑market and enterprise environments deploying models into production

Not ideal for: teams that only run offline batch scoring with no deployment needs, or whose inference demands are minimal

What’s Changed in Model Serving Platforms

- Standardized APIs and interfaces for serving diverse model types

- Support for multi‑modal inference workloads

- Auto‑scaling of serving endpoints based on demand

- Streamlined CI/CD integration for model deployments

- Built‑in A/B testing and canary releases

- Observability dashboards with latency, throughput, and error metrics

- Integration with feature stores and monitoring systems

- Security guardrails, including encryption, authentication, and authorization

- Support for serverless serving and microservices

- Cost optimization tools for dynamic resource allocation

- Batch and real‑time serving in unified pipelines

- Support for open‑source and proprietary model stacks
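One concrete form of the standardized serving APIs listed above is the Open Inference Protocol (also called the KServe V2 protocol), which NVIDIA Triton and MLServer (which Seldon Core can use) accept. The sketch below only builds the request path and JSON body for a single FP32 input tensor; the model name `iris-classifier` and input name `input-0` are hypothetical, and nothing is sent over the network.

```python
import json
from typing import Optional, Sequence, Tuple


def v2_infer_request(
    model_name: str,
    input_name: str,
    shape: Sequence[int],
    data: Sequence[float],
    version: Optional[str] = None,
) -> Tuple[str, str]:
    """Build the path and JSON body for an Open Inference Protocol (V2) infer call.

    Only one FP32 input tensor is modelled; real models may take several
    named inputs with other datatypes.
    """
    # Tensor data is sent flattened, so its length must match the product of shape.
    expected = 1
    for dim in shape:
        expected *= dim
    if expected != len(data):
        raise ValueError(f"shape {list(shape)} needs {expected} values, got {len(data)}")

    path = f"/v2/models/{model_name}"
    if version is not None:
        path += f"/versions/{version}"  # pin an explicit model version
    path += "/infer"

    body = {
        "inputs": [
            {
                "name": input_name,
                "shape": list(shape),
                "datatype": "FP32",
                "data": list(data),
            }
        ]
    }
    return path, json.dumps(body)


if __name__ == "__main__":
    # Hypothetical model with one 1x4 input tensor.
    path, body = v2_infer_request("iris-classifier", "input-0", [1, 4], [5.1, 3.5, 1.4, 0.2])
    print("POST", path)
    print(body)
```

Because the body shape does not depend on the framework behind the endpoint, the same client code can call a TensorFlow, PyTorch, or ONNX model served through this protocol.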

Quick Buyer Checklist

- Real‑time inference support

- Batch scoring capabilities

- Auto‑scaling and load balancing

- Model versioning

- Observability and monitoring

- Security: authentication, authorization, encryption

- CI/CD and deployment automation

- Support for open‑source and custom models

- Multi‑cloud and hybrid deployment

- Cost and latency optimization

- Guardrails for runtime safety

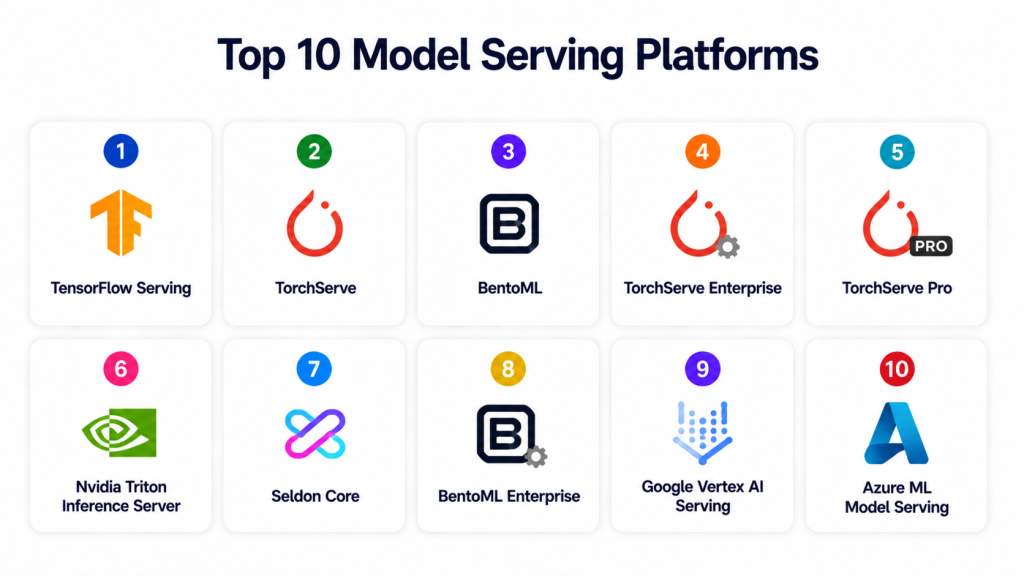

Top 10 Model Serving Platforms

1 — TensorFlow Serving

One‑line verdict: Best for TensorFlow model deployments with high performance and low overhead.

Short description: TensorFlow Serving is a high‑performance serving system designed to serve TensorFlow models with support for model versioning and efficient inference.

Standout Capabilities

- Optimized for TensorFlow models

- Model versioning and rollbacks

- High‑performance serving

- gRPC and REST APIs

- Efficient resource utilization
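TensorFlow Serving's REST API (conventionally exposed on port 8501) accepts predict calls at `/v1/models/<name>:predict`, with an optional `/versions/<n>` segment to pin a version; without it, the server uses the latest version it has loaded. A minimal standard‑library sketch that builds such a request, assuming a model named `half_plus_two` (the toy model from the TensorFlow Serving tutorials) running locally:

```python
import json
import urllib.request
from typing import Any, Optional, Sequence


def tf_serving_predict_request(
    host: str,
    model_name: str,
    instances: Sequence[Any],
    version: Optional[int] = None,
    port: int = 8501,
) -> urllib.request.Request:
    """Build (but do not send) a TensorFlow Serving REST predict request."""
    url = f"http://{host}:{port}/v1/models/{model_name}"
    if version is not None:
        # Pin a specific version; omit it to use the latest loaded version.
        url += f"/versions/{version}"
    url += ":predict"
    body = json.dumps({"instances": list(instances)}).encode("utf-8")
    return urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}, method="POST"
    )


if __name__ == "__main__":
    req = tf_serving_predict_request("localhost", "half_plus_two", [1.0, 2.0, 5.0], version=1)
    print(req.get_method(), req.full_url)
    # To actually call a running server: urllib.request.urlopen(req)
```

The response is JSON with a `predictions` field. Pinning the version in client code is one way to make a rollback explicit: point clients back at the previous version number.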

AI‑Specific Depth

- Model support: TensorFlow native, limited non‑TensorFlow

- RAG / knowledge integration: N/A

- Evaluation: Benchmarking and load testing

- Guardrails: None built in; rate limiting is typically handled by an API gateway or proxy

- Observability: Metrics via Prometheus, logs

Pros

- High performance for TensorFlow

- Simple setup

- Widely supported

Cons

- Limited non‑TensorFlow support

- Needs external orchestration (e.g., Kubernetes) to scale across nodes

- No built‑in UI

Security & Compliance

- Supports encryption/TLS

- Certifications: N/A

Deployment & Platforms

- Linux containers

- Cloud / On‑prem

Integrations & Ecosystem

- Prometheus

- Kubernetes

- CI/CD pipelines

Pricing Model

Open‑source

Best‑Fit Scenarios

- TensorFlow production workflows

- High‑performance serving clusters

- Lightweight serving infrastructure

2 — TorchServe

One‑line verdict: Ideal for serving PyTorch models with extensibility and multi‑model support.

Short description: TorchServe provides model serving for PyTorch with modular architecture and support for custom handlers.

Standout Capabilities

- PyTorch native support

- Custom handler support

- Multi‑model serving

- Metrics and logging

- REST and gRPC interfaces
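TorchServe exposes separate REST APIs for inference (port 8080 by default) and management (port 8081 by default). The sketch below builds a prediction URL (called with POST) and a management URL that resizes a model's worker pool (called with PUT), which is how TorchServe capacity is adjusted by hand. The host and model name are placeholders, and the min/max check is this sketch's own sanity check.

```python
from typing import Optional
from urllib.parse import quote, urlencode


def torchserve_predict_url(host: str, model: str, version: Optional[str] = None,
                           port: int = 8080) -> str:
    """Inference API: POST the model input (e.g. image bytes or JSON) to this URL."""
    url = f"http://{host}:{port}/predictions/{quote(model)}"
    if version is not None:
        url += f"/{quote(version)}"  # target a specific registered version
    return url


def torchserve_scale_url(host: str, model: str, min_worker: int, max_worker: int,
                         port: int = 8081) -> str:
    """Management API: send a PUT to this URL to change the model's worker pool."""
    if min_worker > max_worker:
        raise ValueError("min_worker must not exceed max_worker")
    query = urlencode({"min_worker": min_worker, "max_worker": max_worker})
    return f"http://{host}:{port}/models/{quote(model)}?{query}"


if __name__ == "__main__":
    print(torchserve_predict_url("localhost", "resnet-18"))
    print(torchserve_scale_url("localhost", "resnet-18", min_worker=2, max_worker=4))
```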

AI‑Specific Depth

- Model support: PyTorch

- RAG / knowledge integration: N/A

- Evaluation: Performance benchmarking

- Guardrails: Custom routing and limits

- Observability: Logging and metrics

Pros

- PyTorch native

- Custom behavior handlers

- Scales with cluster

Cons

- Limited non‑PyTorch support

- No built‑in autoscaling; worker counts are adjusted manually

- Lacks enterprise UI

Security & Compliance

- TLS support

- Certifications: N/A

Deployment & Platforms

- Cloud / On‑prem

Integrations & Ecosystem

- Kubernetes

- Monitoring tools

- CI/CD

Pricing Model

Open‑source

Best‑Fit Scenarios

- PyTorch model production

- Custom inference logic

- Scalable microservices

3 — BentoML

One‑line verdict: Best for framework‑agnostic model serving with strong packaging and deployment workflows.

Short description: BentoML is a model serving toolkit that packages models from diverse frameworks and deploys them as microservices with observability and CI/CD integration.

Standout Capabilities

- Framework‑agnostic model packaging

- Deployment to cloud, containers, serverless

- Built‑in metrics and logs

- REST and gRPC endpoints

- Model versioning

AI‑Specific Depth

- Model support: Multi‑framework (TensorFlow, PyTorch, Scikit‑Learn, etc.)

- RAG / knowledge integration: Custom connectors

- Evaluation: Load testing and canary

- Guardrails: API gateway integration

- Observability: Metrics dashboards

Pros

- Flexible deployment targets

- Simple packaging

- Good documentation

Cons

- Some features need orchestration layers

- Basic governance features

- Cost monitoring requires additional tooling

Security & Compliance

- TLS, authentication support

- Certifications: N/A

Deployment & Platforms

- Cloud, containers, serverless

Integrations & Ecosystem

- Kubernetes

- CI/CD pipelines

- Monitoring tools

Pricing Model

Open‑source + enterprise offerings

Best‑Fit Scenarios

- Framework‑diverse teams

- Microservice serving

- Cloud and serverless deployments

4 — TorchServe Enterprise

One‑line verdict: Enterprise‑ready serving with governance and management tooling.

Short description: TorchServe Enterprise extends open‑source TorchServe with governance, monitoring, security, and scaling features for enterprise usage.

Standout Capabilities

- Multi‑tenant serving

- Security and access control

- Scaling and automatic load balancing

- Monitoring and dashboards

- Enterprise support

AI‑Specific Depth

- Model support: PyTorch

- RAG / knowledge integration: Enterprise connectors

- Evaluation: Health and performance tests

- Guardrails: RBAC and policy controls

- Observability: Dashboards

Pros

- Enterprise support

- Governance and security

- Easy scaling

Cons

- Higher cost

- PyTorch focus

- Enterprise onboarding requires expertise

Security & Compliance

- SSO, role access

- Certifications: Varies

Deployment & Platforms

- Cloud / Hybrid

Integrations & Ecosystem

- CI/CD

- Monitoring systems

- Business systems

Pricing Model

Enterprise subscription

Best‑Fit Scenarios

- Large production teams

- Security and governance required

- Enterprise scale

5 — TorchServe Pro

One‑line verdict: Strong choice for managed PyTorch serving with advanced metrics.

Short description: TorchServe Pro builds on open‑source TorchServe with richer metrics, logging, and deployment automation for teams serving PyTorch at scale.

Standout Capabilities

- Enhanced observability

- Automated scaling

- Model lifecycle management

- Logging and metrics

- API gateway integration

AI‑Specific Depth

- Model support: PyTorch

- RAG / knowledge integration: N/A

- Evaluation: Benchmarks and live tests

- Guardrails: Policy controls

- Observability: Dashboards

Pros

- Richer metrics than open‑source TorchServe

- Easy scaling

- Integrated logging

Cons

- PyTorch focus

- Subscription cost

- Less flexible than framework‑agnostic tools

Security & Compliance

- Encryption and access controls

- Certifications: Varies

Deployment & Platforms

- Cloud / Hybrid

Integrations & Ecosystem

- Monitoring systems

- CI/CD

- Logging tools

Pricing Model

Subscription

Best‑Fit Scenarios

- Teams with PyTorch workloads

- Performance monitoring

- Managed scaling

6 — NVIDIA Triton Inference Server

One‑line verdict: Excellent for high‑performance, multi‑model GPU serving.

Short description: Triton Inference Server provides optimized serving for deep learning and machine learning models on GPU and CPU with multi‑framework support.

Standout Capabilities

- Multi‑framework support

- GPU‑optimized serving

- Dynamic batching

- Metrics and logging

- Model versioning
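Dynamic batching means the server briefly holds incoming requests and runs them through the model together, trading a small queueing delay for better GPU utilization. In Triton it is enabled per model through the `dynamic_batching` section of the model configuration (for example with `max_queue_delay_microseconds`). The simulation below is a simplified, hypothetical illustration of the idea, not Triton's scheduler, which also honors preferred batch sizes and other settings: a batch is dispatched once it is full or once a new request arrives after the oldest queued request's delay budget has run out.

```python
from typing import List, Sequence


def form_batches(arrivals_ms: Sequence[float], max_batch_size: int,
                 max_queue_delay_ms: float) -> List[List[int]]:
    """Group request indices into batches the way a simple dynamic batcher would.

    Arrival times must be sorted. A batch opens with its first request and is
    dispatched when it is full or when the next request arrives more than
    max_queue_delay_ms after the batch opened.
    """
    batches: List[List[int]] = []
    current: List[int] = []
    opened_at = 0.0
    for i, t in enumerate(arrivals_ms):
        if current and (len(current) == max_batch_size or t - opened_at > max_queue_delay_ms):
            batches.append(current)  # dispatch the pending batch to the model
            current = []
        if not current:
            opened_at = t  # the first request in a batch starts the delay timer
        current.append(i)
    if current:
        batches.append(current)  # flush whatever is still queued
    return batches


if __name__ == "__main__":
    print(form_batches([0, 1, 2, 10, 11, 30], max_batch_size=4, max_queue_delay_ms=5))
```

With `max_batch_size=4` and a 5 ms budget, arrivals at 0, 1, 2, 10, 11 and 30 ms form three batches, `[[0, 1, 2], [3, 4], [5]]`: a longer delay budget produces bigger batches at the cost of higher per‑request latency.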

AI‑Specific Depth

- Model support: TensorFlow, PyTorch, ONNX, XGBoost, etc.

- RAG / knowledge integration: N/A

- Evaluation: Load and performance tests

- Guardrails: API limits

- Observability: Prometheus metrics

Pros

- High throughput on GPU

- Multi‑framework

- Scalable

Cons

- GPU cost

- Complex configs

- Requires infrastructure knowledge

Security & Compliance

- TLS support

- Certifications: N/A

Deployment & Platforms

- Cloud / On‑prem

Integrations & Ecosystem

- GPUs, Kubernetes

- Monitoring systems

- CI/CD

Pricing Model

Open‑source

Best‑Fit Scenarios

- GPU‑intensive serving

- Multi‑framework stacks

- High‑volume inference

7 — Seldon Core

One‑line verdict: Great for Kubernetes‑native multi‑model serving with extensibility.

Short description: Seldon Core enables serving of many model types on Kubernetes with built‑in scaling, metrics, and monitoring.

Standout Capabilities

- Kubernetes‑native serving

- Multi‑model support

- Scaling and autoscaling

- Metrics and dashboards

- Canaries and A/B testing
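Seldon Core declares canaries in Kubernetes manifests. The sketch below builds one as a Python dict that splits traffic between a stable and a canary predictor. Field names are assumed to follow the v1 `SeldonDeployment` resource (Seldon Core v2 models experiments with separate resources), the `SKLEARN_SERVER` prepackaged server is just one option, and the bucket paths are hypothetical; check the schema of the version you run.

```python
import json
from typing import Any, Dict


def seldon_canary_manifest(name: str, stable_uri: str, canary_uri: str,
                           canary_percent: int) -> Dict[str, Any]:
    """Build a v1-style SeldonDeployment dict splitting traffic between two predictors."""
    if not 0 <= canary_percent <= 100:
        raise ValueError("canary_percent must be between 0 and 100")

    def predictor(pred_name: str, model_uri: str, traffic: int) -> Dict[str, Any]:
        return {
            "name": pred_name,
            "replicas": 1,
            "traffic": traffic,  # percentage of requests routed to this predictor
            "graph": {
                "name": "classifier",
                "implementation": "SKLEARN_SERVER",  # prepackaged scikit-learn server
                "modelUri": model_uri,
            },
        }

    return {
        "apiVersion": "machinelearning.seldon.io/v1",
        "kind": "SeldonDeployment",
        "metadata": {"name": name},
        "spec": {
            "predictors": [
                predictor("main", stable_uri, 100 - canary_percent),
                predictor("canary", canary_uri, canary_percent),
            ]
        },
    }


if __name__ == "__main__":
    manifest = seldon_canary_manifest(
        "iris", "gs://my-bucket/iris/v1", "gs://my-bucket/iris/v2", canary_percent=10
    )
    print(json.dumps(manifest, indent=2))
```

`kubectl apply -f` accepts JSON as well as YAML, so the printed output can be applied once the placeholders are replaced; promoting the canary is then a matter of shifting the two `traffic` values.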

AI‑Specific Depth

- Model support: Multi‑framework

- RAG / knowledge integration: Connectors

- Evaluation: Canary testing

- Guardrails: Policy control via Kubernetes

- Observability: Dashboards

Pros

- Flexible for many models

- Scales with Kubernetes

- Strong ecosystem

Cons

- Requires Kubernetes skills

- Setup complexity

- Monitoring setup needed

Security & Compliance

- RBAC, TLS

- Certifications: N/A

Deployment & Platforms

- Kubernetes

Integrations & Ecosystem

- Prometheus

- Grafana

- CI/CD

Pricing Model

Open‑source

Best‑Fit Scenarios

- Kubernetes infrastructures

- Multi‑model stacks

- Scalable deployments

8 — BentoML Enterprise

One‑line verdict: Best enterprise option for framework‑agnostic serving with governance.

Short description: BentoML Enterprise extends open‑source BentoML with governance, security, monitoring, and enterprise support.

Standout Capabilities

- Model governance

- Access controls

- Scalability

- Monitoring and metrics

- Deployment automation

AI‑Specific Depth

- Model support: Multi‑framework

- RAG / knowledge integration: Connectors

- Evaluation: Policy and performance tests

- Guardrails: Role‑based access

- Observability: Dashboards

Pros

- Enterprise features

- Framework flexibility

- Governance and security

Cons

- Enterprise cost

- Setup complexity

- Requires support

Security & Compliance

- RBAC, encryption, audit trails

- Certifications: Varies

Deployment & Platforms

- Cloud / Hybrid

Integrations & Ecosystem

- CI/CD

- Monitoring platforms

- Logging tools

Pricing Model

Enterprise subscription

Best‑Fit Scenarios

- Enterprise governance

- Multi‑framework teams

- Secure deployments

9 — Google Vertex AI Serving

One‑line verdict: Best for managed serving with auto‑scaling and cloud integration.

Short description: Vertex AI Serving provides model serving with auto‑scaling, monitoring, security, and integration with cloud services.

Standout Capabilities

- Auto‑scaling

- Managed monitoring

- Latency and throughput metrics

- Versioning

- Security controls
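Deployed Vertex AI endpoints are called through a REST `:predict` method scoped to a project, region and endpoint ID. A minimal standard‑library sketch of that request; the project, region, endpoint ID and access token are placeholders (a token can be obtained with `gcloud auth print-access-token`), and the official client libraries wrap the same call.

```python
import json
import urllib.request
from typing import Any, Dict, Optional, Sequence


def vertex_predict_request(project: str, location: str, endpoint_id: str,
                           instances: Sequence[Any], access_token: str,
                           parameters: Optional[Dict[str, Any]] = None) -> urllib.request.Request:
    """Build (but do not send) a Vertex AI online prediction request."""
    url = (f"https://{location}-aiplatform.googleapis.com/v1/projects/{project}"
           f"/locations/{location}/endpoints/{endpoint_id}:predict")
    payload: Dict[str, Any] = {"instances": list(instances)}
    if parameters:
        payload["parameters"] = parameters  # optional, model-specific settings
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = vertex_predict_request("my-project", "us-central1", "1234567890",
                                 [[5.1, 3.5, 1.4, 0.2]], access_token="PLACEHOLDER")
    print(req.get_method(), req.full_url)
```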

AI‑Specific Depth

- Model support: Hosted + BYO

- RAG / knowledge integration: Cloud data sources

- Evaluation: Performance tests

- Guardrails: Policy enforcement

- Observability: Cloud dashboards

Pros

- Managed service

- Auto‑scaling

- Easy integration

Cons

- Cloud lock‑in

- Cost

- Less flexibility than self‑hosted

Security & Compliance

- Cloud security suite

- Certifications: Inherited from the cloud provider

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- Cloud data sources

- CI/CD

- Monitoring tools

Pricing Model

Usage‑based

Best‑Fit Scenarios

- Managed deployments

- Cloud‑centric teams

- Auto‑scaling needs

10 — Azure ML Model Serving

One‑line verdict: Strong choice for enterprise cloud serving with integrated security and monitoring.

Short description: Azure ML serving provides scalable, secure model serving with cloud monitoring, CI/CD integration, and enterprise governance.

Standout Capabilities

- Managed endpoints

- Security and compliance

- Monitoring and logging

- Auto‑scaling

- Integration with cloud services
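Azure ML managed online endpoints are called by POSTing to the endpoint's scoring URI with a key or token in the `Authorization` header. The optional `azureml-model-deployment` header sends a request to one named deployment instead of following the endpoint's traffic split, which is useful for testing a new deployment before shifting traffic to it. In the sketch, the scoring URI, key, deployment name and payload are placeholders; the payload format is defined by your own scoring script.

```python
import json
import urllib.request
from typing import Any, Optional


def azureml_score_request(scoring_uri: str, api_key: str, payload: Any,
                          deployment: Optional[str] = None) -> urllib.request.Request:
    """Build (but do not send) a request to an Azure ML managed online endpoint."""
    headers = {
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }
    if deployment:
        # Bypass the endpoint's traffic split and hit one named deployment.
        headers["azureml-model-deployment"] = deployment
    return urllib.request.Request(scoring_uri, data=json.dumps(payload).encode("utf-8"),
                                  headers=headers, method="POST")


if __name__ == "__main__":
    req = azureml_score_request(
        "https://my-endpoint.eastus.inference.ml.azure.com/score",
        api_key="PLACEHOLDER",
        payload={"data": [[5.1, 3.5, 1.4, 0.2]]},
        deployment="green",
    )
    print(req.get_method(), req.full_url)
```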

AI‑Specific Depth

- Model support: BYO + hosted

- RAG / knowledge integration: Cloud datasets

- Evaluation: Latency and load testing

- Guardrails: Security and access control

- Observability: Dashboards

Pros

- Managed service

- Enterprise security

- Easy deployment

Cons

- Cloud lock‑in

- Cost at scale

- Less portable

Security & Compliance

- Enterprise controls

- Certifications: Inherited from the cloud provider

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- Cloud services

- CI/CD

- Monitoring

Pricing Model

Usage‑based

Best‑Fit Scenarios

- Enterprise cloud deployments

- Compliance needs

- Auto‑scaling and monitoring

Comparison Table

| Tool | Best For | Deployment | Model Flexibility | Strength | Watch‑Out | Public Rating |
|---|---|---|---|---|---|---|
| TensorFlow Serving | TF models | Cloud / On‑prem | TensorFlow | Performance | Framework limit | N/A |
| TorchServe | PyTorch apps | Cloud / On‑prem | PyTorch | Custom handlers | Limited frameworks | N/A |
| BentoML | Framework‑agnostic serving | Cloud / Serverless | Multi‑framework | Flexible deployment | Needs orchestration | N/A |
| TorchServe Enterprise | Enterprise PyTorch | Cloud / Hybrid | PyTorch | Governance | Cost | N/A |
| TorchServe Pro | Managed PyTorch | Cloud / Hybrid | PyTorch | Metrics | Less flexible | N/A |
| Triton | GPU serving | Cloud / On‑prem | Multi‑framework | GPU optimized | Complex configuration | N/A |
| Seldon Core | Kubernetes serving | Kubernetes | Multi‑framework | Scalability | Requires K8s | N/A |
| BentoML Enterprise | Enterprise multi‑framework | Cloud / Hybrid | Multi‑framework | Governance | Cost | N/A |
| Vertex AI Serving | Managed cloud | Cloud | Hosted + BYO | Auto‑scaling | Cloud lock‑in | N/A |
| Azure ML Serving | Enterprise cloud | Cloud | Hosted + BYO | Security | Cloud lock‑in | N/A |

Scoring & Evaluation

| Tool | Core | Reliability | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Total |
|---|---|---|---|---|---|---|---|---|---|
| TensorFlow Serving | 9 | 8 | 7 | 7 | 7 | 8 | 7 | 7 | 7.5 |
| TorchServe | 8 | 7 | 7 | 7 | 8 | 7 | 7 | 7 | 7.3 |
| BentoML | 9 | 8 | 8 | 8 | 7 | 8 | 7 | 7 | 7.8 |
| TorchServe Enterprise | 9 | 9 | 9 | 8 | 7 | 8 | 8 | 8 | 8.3 |
| TorchServe Pro | 8 | 8 | 8 | 8 | 7 | 8 | 8 | 7 | 7.8 |
| Triton | 9 | 9 | 8 | 8 | 7 | 9 | 7 | 7 | 8.0 |
| Seldon Core | 9 | 8 | 8 | 8 | 7 | 8 | 8 | 7 | 7.9 |
| BentoML Enterprise | 9 | 9 | 9 | 9 | 7 | 8 | 9 | 8 | 8.5 |
| Vertex AI Serving | 9 | 9 | 9 | 9 | 8 | 8 | 9 | 8 | 8.6 |
| Azure ML Serving | 9 | 9 | 9 | 9 | 8 | 8 | 9 | 8 | 8.6 |
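The article does not state how the Total column is weighted. Assuming it is the unweighted mean of the eight criteria, rounded half‑up to one decimal, it can be recomputed from the table as follows; `Decimal` avoids binary floating‑point surprises at exact halves such as 7.25.

```python
from decimal import ROUND_HALF_UP, Decimal
from typing import Dict, List

# Criterion scores copied from the table, in column order:
# Core, Reliability, Guardrails, Integrations, Ease, Perf/Cost, Security/Admin, Support
SCORES: Dict[str, List[int]] = {
    "TensorFlow Serving": [9, 8, 7, 7, 7, 8, 7, 7],
    "TorchServe": [8, 7, 7, 7, 8, 7, 7, 7],
    "BentoML": [9, 8, 8, 8, 7, 8, 7, 7],
    "TorchServe Enterprise": [9, 9, 9, 8, 7, 8, 8, 8],
    "TorchServe Pro": [8, 8, 8, 8, 7, 8, 8, 7],
    "Triton": [9, 9, 8, 8, 7, 9, 7, 7],
    "Seldon Core": [9, 8, 8, 8, 7, 8, 8, 7],
    "BentoML Enterprise": [9, 9, 9, 9, 7, 8, 9, 8],
    "Vertex AI Serving": [9, 9, 9, 9, 8, 8, 9, 8],
    "Azure ML Serving": [9, 9, 9, 9, 8, 8, 9, 8],
}


def total(scores: List[int]) -> Decimal:
    """Unweighted mean of the criterion scores, rounded half-up to one decimal."""
    mean = Decimal(sum(scores)) / Decimal(len(scores))
    return mean.quantize(Decimal("0.1"), rounding=ROUND_HALF_UP)


if __name__ == "__main__":
    # Highest total first; ties keep the table's order.
    for tool, scores in sorted(SCORES.items(), key=lambda kv: total(kv[1]), reverse=True):
        print(f"{tool:<22} {total(scores)}")
```

A weighted total would only need a weight per column; the ranking at the top (the two managed clouds, then BentoML Enterprise) is what the Top 3 lists below rely on.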

Top 3 for Enterprise: Vertex AI Serving, Azure ML Serving, BentoML Enterprise

Top 3 for SMB: TensorFlow Serving, TorchServe, Seldon Core

Top 3 for Developers: BentoML, Triton, TorchServe Pro

Which Model Serving Platform Is Right for You

Solo / Freelancer

Use TensorFlow Serving or TorchServe for quick production setups.

SMB

BentoML or Seldon Core balance features and cost.

Mid‑Market

Triton or TorchServe Pro provide scalable performance.

Enterprise

Vertex AI Serving, Azure ML Serving, or BentoML Enterprise offer managed services and governance.

Regulated Industries

Enterprise cloud offerings provide compliance controls out of the box.

Budget vs Premium

Open‑source tools for budget teams; managed services for premium support.

Build vs Buy

Open‑source and DIY tools give flexibility; enterprise services reduce ops burden.

Implementation Playbook

30 Days: Pilot key models, test performance and scale.

60 Days: Harden security, integrate monitoring, automate deployment.

90 Days: Scale to multiple services, establish governance, optimize cost.

Common Mistakes & How to Avoid Them

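- Deploying without monitoring: instrument latency, throughput, and error metrics before go‑live

- No version control for models: register every model version and deploy by explicit version

- Ignoring latency requirements: define latency targets (e.g., p95) and benchmark against them

- Not planning auto‑scaling: set minimum and maximum replicas and a scaling metric up front

- Poor cost visibility: track cost per endpoint and per request

- Weak security controls: enforce TLS, authentication, and role‑based access

- No CI/CD integration: automate building, testing, and deploying model services

- Skipping drift detection: compare live input distributions against training data on a schedule

- Missing guardrails: add rate limits, input validation, and access policies

- Lack of rollback strategies: keep the previous version deployable and use canary releases

- Siloed deployments: standardize on shared serving infrastructure and APIs

- Ignoring multi‑cloud needs: check portability before committing to one provider

- Poor observability: centralize logs, metrics, and dashboards

- No performance benchmarking: load‑test each model before release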
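Drift detection does not require a platform feature to get started. One widely used check is the Population Stability Index (PSI), which compares how a feature's values are distributed across bins at training time and in live traffic. A minimal sketch with hypothetical data; the bin edges and the alert thresholds in the comment are conventional rules of thumb rather than fixed standards.

```python
import math
from bisect import bisect_right
from typing import List, Sequence


def psi(expected: Sequence[float], actual: Sequence[float], edges: Sequence[float],
        eps: float = 1e-6) -> float:
    """Population Stability Index: sum over bins of (a - e) * ln(a / e).

    e and a are the fractions of baseline and live values in each bin.
    `edges` are sorted inner bin boundaries; a value equal to an edge falls
    into the bin to its right.
    """
    def fractions(values: Sequence[float]) -> List[float]:
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[bisect_right(edges, v)] += 1
        # eps keeps empty bins from causing division by zero or log(0).
        return [max(c / len(values), eps) for c in counts]

    e, a = fractions(expected), fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    baseline = [0.5] * 50 + [1.5] * 50  # training-time feature values
    live = [0.5] * 10 + [1.5] * 90      # same feature as seen by the endpoint
    score = psi(baseline, live, edges=[1.0])
    # Common rule of thumb: < 0.1 stable, 0.1-0.25 moderate shift, > 0.25 investigate.
    print(f"PSI = {score:.3f}")
```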

FAQs

1. What is a Model Serving Platform?

It is a system that deploys models as scalable APIs with monitoring, versioning, and routing.

2. Do these platforms support real‑time inference?

Yes. All of the platforms listed serve real‑time requests, and several (such as Vertex AI and Azure ML) also offer dedicated batch inference.

3. Can I use open‑source models?

Yes, many platforms support BYO open‑source models.

4. How is security handled?

Through encryption, authentication, authorization, and access controls.

5. Do they integrate with CI/CD?

Yes, integration with CI/CD enables automated deployments.

6. What is auto‑scaling?

Automatically adjusting resources, such as the number of serving replicas, based on demand.
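On Kubernetes, which the self‑hosted options above commonly run on, auto‑scaling is often handled by the Horizontal Pod Autoscaler. Its core rule is desired replicas = ceil(current replicas × current metric / target metric), with no action when that ratio is within a tolerance of 1.0 (0.1 by default). A minimal sketch of that rule, clamped to a replica range; it leaves out the autoscaler's stabilization windows and missing‑metric handling, and managed services apply their own policies.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int, max_replicas: int, tolerance: float = 0.1) -> int:
    """Core Horizontal Pod Autoscaler rule, clamped to [min_replicas, max_replicas]."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas  # close enough to target: no scaling action
    else:
        desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # 4 replicas averaging 90% CPU against a 60% target.
    print(desired_replicas(4, current_metric=90, target_metric=60, min_replicas=1, max_replicas=10))
```

In that example the ratio is 1.5, so the deployment grows from 4 to ceil(4 × 1.5) = 6 replicas.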

7. Can I monitor latency and errors?

Most platforms provide monitoring dashboards and logs.
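Whatever dashboard a platform provides, the core numbers are usually latency percentiles and an error rate. A minimal sketch, assuming you already have per‑request (latency in ms, HTTP status) records and count 5xx responses as errors; it uses the nearest‑rank percentile definition, whereas systems such as Prometheus estimate percentiles from histogram buckets, so results can differ slightly.

```python
import math
from typing import Dict, Sequence, Tuple


def nearest_rank(sorted_values: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile of an ascending, non-empty sequence (0 < pct <= 100)."""
    rank = math.ceil(pct * len(sorted_values) / 100)
    return sorted_values[max(rank, 1) - 1]


def summarize(records: Sequence[Tuple[float, int]]) -> Dict[str, float]:
    """Turn (latency_ms, http_status) records into p50/p95/p99 latency and error rate."""
    latencies = sorted(latency for latency, _ in records)
    errors = sum(1 for _, status in records if status >= 500)  # count 5xx as errors
    return {
        "p50_ms": nearest_rank(latencies, 50),
        "p95_ms": nearest_rank(latencies, 95),
        "p99_ms": nearest_rank(latencies, 99),
        "error_rate": errors / len(records),
    }


if __name__ == "__main__":
    # Hypothetical log: 100 requests taking 1..100 ms, two of which returned 503.
    records = [(float(ms), 503 if ms in (40, 80) else 200) for ms in range(1, 101)]
    print(summarize(records))
```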

8. What deployment options exist?

Cloud, on‑prem, hybrid, and serverless, depending on the platform.

9. Are GPU‑based deployments supported?

Yes, platforms like Triton optimize GPU serving.

10. Do these tools support canary releases?

Some platforms support canary testing and A/B deployment.
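Platforms with canary support (Seldon Core, Vertex AI endpoints, and Azure ML online endpoints, for example) split traffic by percentage. The idea behind a sticky split can be sketched with deterministic hashing; this is a hypothetical router for illustration, not how any listed platform implements it, and the salt value is arbitrary.

```python
import hashlib


def route(request_key: str, canary_percent: float, salt: str = "release-42") -> str:
    """Deterministically assign a request key to "canary" or "stable".

    Hashing a stable key such as a user ID keeps each user on the same variant,
    which keeps A/B comparisons clean; changing the salt reshuffles assignments.
    """
    digest = hashlib.sha256(f"{salt}:{request_key}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # buckets of 0.01%
    return "canary" if bucket < canary_percent * 100 else "stable"


if __name__ == "__main__":
    share = sum(route(f"user-{i}", 10) == "canary" for i in range(10_000)) / 10_000
    print(f"canary share: {share:.1%}")  # close to 10%, not exact
```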

11. What is model versioning?

Tracking and managing multiple versions of the same model.
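Versioning pays off mainly at rollback time. A minimal sketch of the bookkeeping, using a hypothetical in‑memory class rather than any platform's registry: versions are registered once and never overwritten, and a rollback re‑promotes the previously live version. The artifact URIs are placeholders.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ModelVersions:
    """Hypothetical in-memory record of model versions and which one is live."""
    versions: Dict[int, str] = field(default_factory=dict)  # version -> artifact URI
    history: List[int] = field(default_factory=list)        # live versions, oldest first

    def register(self, version: int, artifact_uri: str) -> None:
        if version in self.versions:
            raise ValueError(f"version {version} already registered")  # never overwrite
        self.versions[version] = artifact_uri

    def promote(self, version: int) -> None:
        if version not in self.versions:
            raise KeyError(version)
        self.history.append(version)

    def rollback(self) -> int:
        """Make the previously live version live again and return it."""
        if len(self.history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self.history.pop()
        return self.history[-1]

    @property
    def live(self) -> Optional[int]:
        return self.history[-1] if self.history else None


if __name__ == "__main__":
    registry = ModelVersions()
    registry.register(1, "s3://models/fraud/1")
    registry.register(2, "s3://models/fraud/2")
    registry.promote(1)
    registry.promote(2)
    print("live:", registry.live, "after rollback:", registry.rollback())
```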

12. How do I control cost?

Through cost dashboards, usage tracking (requests, compute hours, or tokens for LLM endpoints), and auto‑scaling that releases idle capacity.
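A single number that makes cost comparable across platforms is cost per 1,000 requests. The sketch assumes always‑on replicas billed per hour at a steady request rate; the prices are hypothetical, and usage‑based managed services may bill differently.

```python
def cost_per_1k_requests(hourly_price: float, replicas: int, requests_per_second: float) -> float:
    """Cost of serving 1,000 requests with always-on replicas under steady load."""
    cost_per_hour = hourly_price * replicas
    requests_per_hour = requests_per_second * 3600
    return cost_per_hour / requests_per_hour * 1000


if __name__ == "__main__":
    # Hypothetical: $2.50 per instance-hour, 2 replicas, 50 requests/second.
    print(f"${cost_per_1k_requests(2.50, 2, 50):.4f} per 1,000 requests")
```

With these hypothetical numbers the result is about $0.028 per 1,000 requests; serving the same load with half the replicas halves it, which is the saving auto‑scaling aims for during quiet periods.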

Conclusion

Model Serving Platforms enable reliable, scalable, secure delivery of AI and ML models into production systems. Enterprises benefit from managed services like Vertex AI Serving and Azure ML Serving, while developers and smaller teams can use BentoML, TensorFlow Serving, TorchServe, and Seldon Core. Evaluate latency, cost control, governance, and observability when choosing a platform. Early pilots, integrated monitoring, and automated deployment pipelines help ensure robust and scalable model serving.
