Introduction

Data/Model Lineage for AI Pipelines helps teams track where data comes from, how it is transformed, which datasets and features were used for training, which experiments produced a model, and how that model moves into production. In AI systems, lineage is essential because a model's behavior is determined by its data, feature logic, and training configuration, and explaining that behavior later requires a record of the model version, evaluation results, and deployment history.

Modern AI pipelines are no longer simple training scripts. They include data warehouses, feature stores, notebooks, orchestration tools, model registries, vector databases, prompt pipelines, evaluation systems, monitoring platforms, and production endpoints. Without lineage, teams struggle to answer basic but critical questions: which dataset trained this model, which code version produced it, which features changed, which pipeline failed, and what downstream models are affected by a data issue.

Real-world use cases include root-cause analysis after model degradation, audit reporting for regulated AI, reproducibility of experiments, impact analysis before schema changes, model approval workflows, feature reuse governance, and tracking sensitive data across AI pipelines.
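Both root-cause analysis and impact analysis come down to walking a lineage graph in one direction or the other. The sketch below is a minimal illustration, assuming lineage is stored as a plain dictionary of upstream-to-downstream edges. The asset names and the `EDGES` structure are hypothetical and not taken from any specific tool.

```python
from collections import deque

# Hypothetical lineage edges: each asset maps to the assets built directly from it.
EDGES = {
    "raw.orders": ["features.order_stats"],
    "raw.customers": ["features.customer_profile"],
    "features.order_stats": ["model.churn_v3"],
    "features.customer_profile": ["model.churn_v3", "model.ltv_v1"],
    "model.churn_v3": ["endpoint.churn_api"],
}


def downstream(edges, start):
    """Impact analysis: every asset reachable from `start` (breadth-first)."""
    seen, queue = set(), deque([start])
    while queue:
        node = queue.popleft()
        for child in edges.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


def upstream(edges, target):
    """Root-cause analysis: every asset that `target` depends on."""
    reversed_edges = {}
    for parent, children in edges.items():
        for child in children:
            reversed_edges.setdefault(child, []).append(parent)
    return downstream(reversed_edges, target)


# Which assets are affected if raw.customers has a data issue?
print(sorted(downstream(EDGES, "raw.customers")))
# Which assets could explain a problem in model.ltv_v1?
print(sorted(upstream(EDGES, "model.ltv_v1")))
```

Real lineage platforms hold this graph in a metadata store and add run and version information to each edge, but the traversal idea is the same.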

Best for: MLOps teams, data engineering teams, AI governance teams, regulated enterprises, and organizations running production AI pipelines

Not ideal for: small experiments, single-notebook workflows, or teams that do not yet need reproducibility, governance, or production auditability

What’s Changed in Data/Model Lineage for AI Pipelines

- Lineage now covers models, features, prompts, embeddings, datasets, and deployment artifacts

- Open standards such as OpenLineage improved interoperability across tools

- AI governance teams now require lineage for audit and compliance workflows

- Model registries increasingly connect models back to notebooks, datasets, and experiments

- Data catalogs expanded into AI asset catalogs

- Column-level lineage became more important for sensitive data governance

- Pipeline orchestration tools now emit lineage metadata automatically

- LLM pipelines require tracing across prompts, retrieval data, model calls, and outputs

- Feature stores made feature-level lineage critical for production ML

- Observability platforms use lineage to support root-cause analysis

- Lineage is now connected with deployment approvals and rollback workflows

- Hybrid and multi-cloud AI systems increased the need for portable lineage standards

Quick Buyer Checklist

- Tracks data lineage across jobs, datasets, and pipelines

- Tracks model lineage across experiments, datasets, features, and artifacts

- Supports pipeline orchestration integrations

- Provides model registry or catalog integration

- Supports column-level or feature-level lineage

- Includes impact analysis and root-cause workflows

- Supports metadata search and discovery

- Offers governance, RBAC, and audit logs

- Works with cloud, hybrid, and open-source systems

- Supports ML and LLM pipeline workflows

- Provides APIs and extensibility

- Avoids vendor lock-in where portability matters

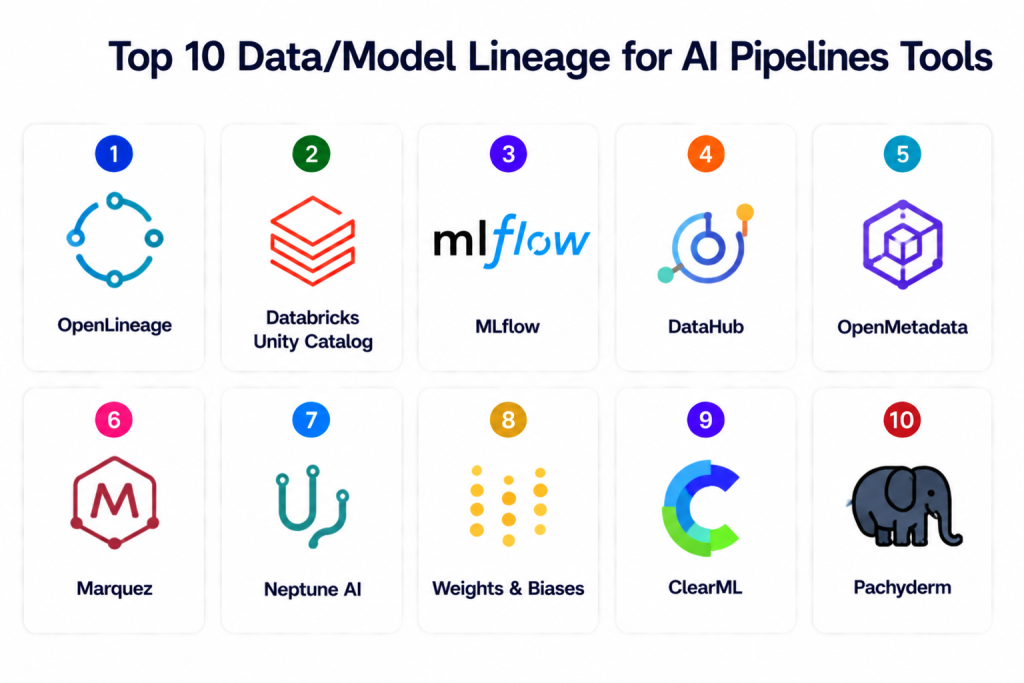

Top 10 Data/Model Lineage for AI Pipelines Tools

1 — OpenLineage

One-line verdict: Best open standard for collecting lineage metadata across data and AI pipelines.

Short description: OpenLineage is an open standard for lineage metadata collection that tracks metadata about datasets, jobs, and runs. It helps teams understand root cause, impact, and movement of data across complex pipelines.
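To make the dataset, job, and run model concrete, here is a simplified sketch of an OpenLineage-style run event built with the Python standard library. The field names follow the public specification as commonly documented, but the helper `build_run_event`, the namespace, the producer URL, and the job and dataset names are illustrative assumptions. Real events also carry a `schemaURL` and optional facets, and in practice an official client or integration would emit them.

```python
import json
import uuid
from datetime import datetime, timezone


def build_run_event(event_type, job_name, inputs, outputs, run_id=None,
                    namespace="example-namespace"):
    """Build a simplified OpenLineage-style run event as a plain dict."""
    return {
        "eventType": event_type,  # e.g. "START" or "COMPLETE"
        "eventTime": datetime.now(timezone.utc).isoformat(),
        "producer": "https://example.com/my-pipeline",  # placeholder identifier
        "run": {"runId": run_id or str(uuid.uuid4())},
        "job": {"namespace": namespace, "name": job_name},
        "inputs": [{"namespace": namespace, "name": n} for n in inputs],
        "outputs": [{"namespace": namespace, "name": n} for n in outputs],
    }


# Hypothetical training job that reads one feature table and writes one model.
event = build_run_event(
    "COMPLETE",
    job_name="train_churn_model",
    inputs=["features.order_stats"],
    outputs=["models.churn_v3"],
)
print(json.dumps(event, indent=2))
```

A backend such as Marquez receives events of this general shape and stitches the inputs and outputs of successive runs into a lineage graph.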

Standout Capabilities

- Open lineage metadata standard

- Tracks datasets, jobs, and runs

- Extensible metadata facets

- Works with pipeline tools

- Marquez serves as its reference implementation

- Useful for root-cause analysis

- Strong interoperability focus

AI-Specific Depth

- Model support: Indirect through pipeline metadata and integrations

- RAG / knowledge integration: Custom metadata extensions possible

- Evaluation: Pipeline-level lineage validation

- Guardrails: Governance through metadata and policies

- Observability: Lineage events and metadata tracking

Pros

- Open and interoperable

- Reduces vendor lock-in

- Strong fit for pipeline metadata

Cons

- Requires implementation effort

- Model-specific lineage needs customization

- Governance UI depends on connected tools

Security & Compliance

Security depends on deployment and backend systems. Certifications are not publicly stated.

Deployment & Platforms

Cloud, on-prem, hybrid.

Integrations & Ecosystem

OpenLineage works best as a standard layer across data and AI systems.

- Marquez

- Airflow

- Spark

- dbt

- Data pipelines

- Metadata systems

- Observability workflows

Pricing Model

Open-source.

Best-Fit Scenarios

- Open lineage architecture

- Pipeline metadata standardization

- Cross-tool interoperability

2 — Databricks Unity Catalog

One-line verdict: Best lakehouse-native governance platform for data and AI lineage.

Short description: Unity Catalog provides governance, cataloging, access control, and lineage for data and AI assets across Databricks environments. Databricks documentation describes lineage visualization through Catalog Explorer and lineage system tables.

Standout Capabilities

- Data and AI asset catalog

- Table and column lineage

- Model governance support

- Access control

- Audit workflows

- Lineage system tables

- Lakehouse-native governance

AI-Specific Depth

- Model support: Supports AI and ML assets in Databricks ecosystem

- RAG / knowledge integration: Supports governed data assets used in AI pipelines

- Evaluation: Works with Databricks ML workflows

- Guardrails: Fine-grained access controls

- Observability: Lineage visualization and system tables

Pros

- Strong governance inside Databricks

- Column-level lineage support

- Good fit for enterprise lakehouse teams

Cons

- Best within Databricks ecosystem

- Cloud/platform dependency

- May be heavy for smaller teams

Security & Compliance

Fine-grained access controls, auditability, governance policies, and catalog-level permissions. Certifications follow Databricks platform disclosures.

Deployment & Platforms

Cloud / Databricks lakehouse.

Integrations & Ecosystem

Unity Catalog works deeply across Databricks workloads.

- Databricks workflows

- Delta Lake

- MLflow

- Lakehouse tables

- SQL workloads

- Model governance workflows

Pricing Model

Platform-based subscription.

Best-Fit Scenarios

- Databricks-centric AI pipelines

- Lakehouse governance

- Enterprise lineage and auditability

3 — MLflow

One-line verdict: Best lightweight model lineage foundation for experiment tracking and model registry workflows.

Short description: MLflow supports experiment tracking, model registry workflows, artifact tracking, and model lineage. MLflow documentation describes tracking datasets, notebooks, experiments, and models used to create registered models.

Standout Capabilities

- Experiment tracking

- Model registry

- Artifact tracking

- Model versioning

- Metadata logging

- Reproducibility support

- Framework flexibility

AI-Specific Depth

- Model support: Multi-framework and BYO models

- RAG / knowledge integration: Custom integrations possible

- Evaluation: Experiment comparison and model evaluation workflows

- Guardrails: Approval patterns built on model registry aliases and tags (stage transitions in older versions)

- Observability: Experiment and model metadata dashboards

Pros

- Strong open-source adoption

- Simple model lineage workflows

- Easy integration with MLOps stacks

Cons

- Data lineage is limited without integrations

- Enterprise governance requires additional tooling

- Complex lineage graphs need external metadata systems

Security & Compliance

Access controls depend on deployment or managed provider. Certifications are not publicly stated.

Deployment & Platforms

Cloud, on-prem, hybrid.

Integrations & Ecosystem

MLflow integrates broadly with AI development and deployment workflows.

- Databricks

- Airflow

- Kubeflow

- Feature stores

- CI/CD systems

- Model serving tools

Pricing Model

Open-source with managed ecosystem offerings.

Best-Fit Scenarios

- Model lineage tracking

- Experiment reproducibility

- Model registry governance

4 — DataHub

One-line verdict: Best open-source metadata platform for cross-system data and AI lineage visibility.

Short description: DataHub is a metadata platform that helps teams catalog data assets, manage metadata, track lineage, and understand impact across data systems. It is widely used for enterprise data discovery and governance workflows.

Standout Capabilities

- Metadata catalog

- Dataset lineage

- Impact analysis

- Search and discovery

- Ownership tracking

- Schema metadata

- Extensible ingestion framework

AI-Specific Depth

- Model support: Metadata extensibility supports AI asset tracking

- RAG / knowledge integration: Tracks data sources used in RAG workflows through metadata modeling

- Evaluation: Governance and impact workflows

- Guardrails: Ownership and policy metadata

- Observability: Metadata graph and lineage views

Pros

- Strong metadata graph

- Open-source flexibility

- Good ecosystem coverage

Cons

- Model lineage needs customization

- Setup and ingestion can require effort

- Advanced governance may need enterprise support

Security & Compliance

RBAC, metadata policies, ownership controls, and audit workflows depending on deployment.

Deployment & Platforms

Cloud, on-prem, hybrid.

Integrations & Ecosystem

DataHub works across modern data and AI stacks.

- Data warehouses

- Data lakes

- dbt

- Airflow

- BI tools

- ML metadata systems

Pricing Model

Open-source with enterprise offerings.

Best-Fit Scenarios

- Enterprise metadata governance

- Cross-system lineage

- Data catalog modernization

5 — OpenMetadata

One-line verdict: Best open-source catalog for data lineage, metadata governance, and AI pipeline visibility.

Short description: OpenMetadata provides metadata management, lineage, data discovery, collaboration, and governance workflows. It is useful for teams connecting data assets with AI pipeline governance.

Standout Capabilities

- Data catalog

- Lineage tracking

- Metadata management

- Collaboration workflows

- Data quality integrations

- Ownership tracking

- Governance automation

AI-Specific Depth

- Model support: Metadata-driven model governance possible

- RAG / knowledge integration: Tracks governed source data for AI systems

- Evaluation: Data quality and metadata validation

- Guardrails: Ownership and access governance

- Observability: Lineage dashboards

Pros

- Open-source and flexible

- Strong metadata visibility

- Good governance foundation

Cons

- AI-specific model lineage requires integration

- Enterprise workflows may require customization

- Setup complexity for large systems

Security & Compliance

RBAC, metadata permissions, auditability, and governance controls depending on deployment.

Deployment & Platforms

Cloud, on-prem, hybrid.

Integrations & Ecosystem

OpenMetadata fits well into modern data governance and AI pipeline stacks.

- Data warehouses

- Data lakes

- Airflow

- dbt

- BI tools

- Data quality tools

Pricing Model

Open-source with managed options.

Best-Fit Scenarios

- Open metadata governance

- AI pipeline lineage foundations

- Data discovery and cataloging

6 — Marquez

One-line verdict: Best reference implementation for OpenLineage-based metadata and lineage collection.

Short description: Marquez is a metadata service that collects and visualizes lineage metadata using OpenLineage events. It helps teams implement lineage collection across pipelines with a practical backend and UI.

Standout Capabilities

- OpenLineage backend

- Dataset and job metadata

- Run tracking

- Pipeline lineage visualization

- API-based metadata collection

- Root-cause analysis support

- Open-source architecture

AI-Specific Depth

- Model support: Pipeline metadata; model tracking requires customization

- RAG / knowledge integration: Custom lineage extensions possible

- Evaluation: Pipeline-level validation

- Guardrails: Metadata governance via integrations

- Observability: Lineage graphs and run metadata

Pros

- OpenLineage-native

- Strong for pipeline lineage

- Useful for open-source stacks

Cons

- Less model-specific out of the box

- Requires integration work

- Enterprise governance is limited

Security & Compliance

Security depends on deployment and access controls. Certifications are not publicly stated.

Deployment & Platforms

Cloud, on-prem, hybrid.

Integrations & Ecosystem

Marquez is strongest in OpenLineage-based architectures.

- OpenLineage

- Airflow

- Spark

- Pipeline systems

- Metadata workflows

Pricing Model

Open-source.

Best-Fit Scenarios

- OpenLineage implementation

- Pipeline lineage collection

- Root-cause analysis workflows

7 — Neptune AI

One-line verdict: Best experiment metadata platform for model lineage and research reproducibility.

Short description: Neptune AI helps teams track experiments, model metadata, datasets, artifacts, metrics, and training runs. It is useful for connecting model lineage with experimentation workflows.

Standout Capabilities

- Experiment tracking

- Metadata logging

- Artifact tracking

- Dataset references

- Run comparison

- Collaboration dashboards

- Model version context

AI-Specific Depth

- Model support: Multi-framework and BYO models

- RAG / knowledge integration: Custom metadata logging possible

- Evaluation: Experiment comparison and metrics tracking

- Guardrails: Project-level access controls

- Observability: Experiment dashboards

Pros

- Strong experiment metadata

- Good for research reproducibility

- Easy collaboration

Cons

- Not a full data lineage platform

- Requires integration with data catalog tools

- Governance workflows are limited

Security & Compliance

RBAC and access controls vary by plan. Certifications are not publicly stated.

Deployment & Platforms

Cloud / Hybrid.

Integrations & Ecosystem

Neptune integrates with common ML and experiment workflows.

- Python ML frameworks

- Notebooks

- CI/CD workflows

- Model training pipelines

- Artifact stores

Pricing Model

Subscription-based.

Best-Fit Scenarios

- Experiment lineage

- Model development tracking

- Research reproducibility

8 — Weights & Biases

One-line verdict: Best for experiment, dataset, and model lineage in collaborative AI development.

Short description: Weights & Biases tracks experiments, artifacts, datasets, model versions, metrics, and training runs. It helps teams connect experimentation activity with model lineage and reproducibility.

Standout Capabilities

- Experiment tracking

- Artifact versioning

- Dataset tracking

- Model version metadata

- Collaboration dashboards

- Run comparison

- Evaluation tracking

AI-Specific Depth

- Model support: Multi-framework and BYO models

- RAG / knowledge integration: Custom tracking possible

- Evaluation: Model and LLM evaluation workflows

- Guardrails: Access controls and project permissions

- Observability: Experiment and artifact dashboards

Pros

- Excellent developer experience

- Strong experiment lineage

- Good collaboration workflows

Cons

- Not a full enterprise data lineage catalog

- Cost can grow with scale

- Governance requires additional systems

Security & Compliance

SSO, RBAC, private deployment options, and audit features vary by plan.

Deployment & Platforms

Cloud, hybrid, private deployment options.

Integrations & Ecosystem

Weights & Biases integrates broadly across AI development.

- PyTorch

- TensorFlow

- Hugging Face

- Notebooks

- CI/CD tools

- Evaluation workflows

Pricing Model

Subscription-based with enterprise options.

Best-Fit Scenarios

- Collaborative AI experimentation

- Artifact and model lineage

- Reproducible training workflows

9 — ClearML

One-line verdict: Best open-source MLOps platform for experiment, data, and model lineage in one workflow.

Short description: ClearML provides experiment tracking, data management, model management, orchestration, and automation. It helps teams connect experiments, datasets, models, and pipelines into a reproducible lineage workflow.

Standout Capabilities

- Experiment tracking

- Dataset versioning

- Model registry

- Pipeline automation

- Artifact tracking

- Reproducibility workflows

- Open-source deployment

AI-Specific Depth

- Model support: Multi-framework and BYO models

- RAG / knowledge integration: Custom pipeline tracking possible

- Evaluation: Experiment and model metrics tracking

- Guardrails: Project-level access controls

- Observability: Experiment and pipeline dashboards

Pros

- Strong all-in-one MLOps functionality

- Open-source option

- Good reproducibility workflows

Cons

- UI and operations can require learning

- Enterprise governance varies by edition

- Data lineage depth may need integrations

Security & Compliance

RBAC, access controls, and deployment security depend on edition and architecture.

Deployment & Platforms

Cloud, on-prem, hybrid.

Integrations & Ecosystem

ClearML works with common ML frameworks and automation systems.

- PyTorch

- TensorFlow

- Kubernetes

- CI/CD systems

- Data storage

- Model serving workflows

Pricing Model

Open-source with enterprise offerings.

Best-Fit Scenarios

- End-to-end MLOps lineage

- Experiment and dataset tracking

- Reproducible pipelines

10 — Pachyderm

One-line verdict: Best for data versioning and pipeline lineage in reproducible AI workflows.

Short description: Pachyderm provides data versioning, pipeline automation, and lineage tracking for data-centric machine learning workflows. It is especially useful for reproducible batch pipelines and data-heavy AI systems.

Standout Capabilities

- Data versioning

- Pipeline lineage

- Reproducible workflows

- Git-like data management

- Batch pipeline automation

- Dataset tracking

- Artifact reproducibility

AI-Specific Depth

- Model support: Framework agnostic through pipeline workflows

- RAG / knowledge integration: Data pipeline tracking possible

- Evaluation: Pipeline validation and reproducibility

- Guardrails: Access controls and version governance

- Observability: Pipeline lineage and run metadata

Pros

- Strong data reproducibility

- Good pipeline lineage

- Useful for batch AI workflows

Cons

- More data-centric than model-centric

- Setup complexity

- Enterprise features vary by offering

Security & Compliance

Access controls, data versioning governance, and infrastructure security depend on deployment.

Deployment & Platforms

Cloud, on-prem, hybrid.

Integrations & Ecosystem

Pachyderm works well with batch and data-heavy AI pipelines.

- Data lakes

- Kubernetes

- Batch workflows

- ML pipelines

- Artifact stores

Pricing Model

Open-source / enterprise subscription.

Best-Fit Scenarios

- Data-heavy AI pipelines

- Reproducible batch workflows

- Dataset lineage and versioning

Comparison Table

| Tool | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| OpenLineage | Open lineage standard | Cloud / Hybrid / On-prem | Metadata-based | Interoperability | Requires implementation | N/A |
| Unity Catalog | Lakehouse lineage | Cloud | Databricks ecosystem | Data and AI governance | Platform dependency | N/A |
| MLflow | Model lineage | Cloud / Hybrid / On-prem | Multi-framework | Experiment and registry tracking | Limited data lineage | N/A |
| DataHub | Metadata catalog | Cloud / Hybrid / On-prem | Extensible | Cross-system lineage | Setup effort | N/A |
| OpenMetadata | Open data governance | Cloud / Hybrid / On-prem | Metadata-based | Catalog and lineage | AI customization needed | N/A |
| Marquez | OpenLineage backend | Cloud / Hybrid / On-prem | Metadata-based | Pipeline lineage | Limited model features | N/A |
| Neptune AI | Experiment metadata | Cloud / Hybrid | Multi-framework | Research lineage | Not full data lineage | N/A |
| Weights & Biases | Experiment and artifact lineage | Cloud / Hybrid | Multi-framework | Developer experience | Cost at scale | N/A |
| ClearML | MLOps lineage | Cloud / Hybrid / On-prem | Multi-framework | All-in-one tracking | Learning curve | N/A |
| Pachyderm | Data versioning | Cloud / Hybrid / On-prem | Framework agnostic | Reproducibility | Data-centric focus | N/A |

Scoring & Evaluation

Scoring is comparative, not absolute. Data catalog and lineage platforms score higher for cross-system visibility, while experiment tracking tools score higher for model development workflows. Teams should choose based on whether the main requirement is data lineage, model lineage, experiment reproducibility, governance, or full pipeline observability.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| OpenLineage | 9 | 8 | 7 | 9 | 6 | 9 | 7 | 8 | 8.0 |
| Unity Catalog | 9 | 8 | 9 | 9 | 8 | 8 | 9 | 9 | 8.7 |
| MLflow | 8 | 8 | 7 | 9 | 8 | 9 | 7 | 8 | 8.0 |
| DataHub | 9 | 8 | 8 | 9 | 7 | 8 | 8 | 8 | 8.2 |
| OpenMetadata | 8 | 8 | 8 | 8 | 7 | 9 | 8 | 7 | 7.9 |
| Marquez | 8 | 7 | 7 | 8 | 7 | 9 | 7 | 7 | 7.6 |
| Neptune AI | 7 | 8 | 7 | 8 | 8 | 8 | 7 | 8 | 7.6 |
| Weights & Biases | 8 | 9 | 7 | 9 | 8 | 8 | 8 | 9 | 8.2 |
| ClearML | 8 | 8 | 7 | 8 | 7 | 9 | 7 | 8 | 7.8 |
| Pachyderm | 8 | 8 | 8 | 8 | 6 | 8 | 8 | 7 | 7.6 |

Top 3 for Enterprise: Unity Catalog, DataHub, Weights & Biases

Top 3 for SMB: MLflow, OpenMetadata, ClearML

Top 3 for Developers: OpenLineage, MLflow, Marquez

Which Data/Model Lineage for AI Pipelines Tool Is Right for You

Solo / Freelancer

MLflow, OpenLineage, and Marquez are practical starting points for lightweight experiment and pipeline lineage without heavy enterprise overhead.

SMB

MLflow, ClearML, and OpenMetadata provide useful lineage visibility, experiment tracking, and governance foundations for growing AI teams.

Mid-Market

DataHub, OpenMetadata, and Weights & Biases provide broader metadata coverage, collaboration, and cross-team lineage visibility.

Enterprise

Unity Catalog, DataHub, Weights & Biases, and OpenMetadata are strong options for organizations needing governance, auditability, cataloging, and AI asset visibility at scale.

Regulated Industries

Unity Catalog, DataHub, and OpenMetadata are strong choices when auditability, sensitive data tracking, ownership, and impact analysis are important.

Budget vs Premium

Open-source tools such as OpenLineage, Marquez, MLflow, OpenMetadata, and ClearML reduce licensing costs but require engineering ownership. Managed enterprise platforms reduce setup effort and add stronger governance.

Build vs Buy

Build with open standards and open-source metadata tools when portability and customization matter. Buy managed governance and lineage platforms when enterprise auditability, support, and scale are priorities.

Implementation Playbook

30 Days

- Identify critical AI pipelines

- Define lineage requirements

- Track datasets, jobs, and training runs

- Connect experiment tracking and model registry

- Establish ownership metadata
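One lightweight way to begin tracking datasets and training runs in the first 30 days is to fingerprint the exact data a run reads and store that hash with the run and its owner. The sketch below is an assumption-laden illustration: the helper names `fingerprint` and `record_training_run`, the record fields, and the sample rows are all hypothetical, and the hash is sensitive to row order by design.

```python
import hashlib
import json


def fingerprint(rows):
    """Content hash of a dataset given as a list of dicts (row order matters)."""
    digest = hashlib.sha256()
    for row in rows:
        # sort_keys makes the hash independent of key order within a row.
        digest.update(json.dumps(row, sort_keys=True).encode("utf-8"))
        digest.update(b"\n")
    return digest.hexdigest()


def record_training_run(run_id, dataset_name, rows, owner):
    """Return a lineage record linking a run to the exact data it read."""
    return {
        "run_id": run_id,
        "dataset": dataset_name,
        "dataset_sha256": fingerprint(rows),
        "row_count": len(rows),
        "owner": owner,
    }


rows = [{"id": 1, "spend": 20.0}, {"id": 2, "spend": 35.5}]
print(record_training_run("run-001", "features.order_stats", rows, "ml-platform"))
```

Two runs that report the same `dataset_sha256` read identical data, which makes it easier to tell whether a change in model behavior came from the data or from the code.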

60 Days

- Add feature and column-level lineage

- Integrate orchestration tools

- Add governance and access controls

- Build impact analysis workflows

- Connect lineage with monitoring alerts

90 Days

- Expand lineage across model serving and deployment

- Add LLM and RAG pipeline tracing

- Standardize metadata naming conventions

- Automate lineage capture

- Build audit and compliance reports

Common Mistakes to Avoid

- Tracking only models while ignoring datasets

- Missing feature-level lineage

- No connection between experiments and deployments

- Manual metadata entry without automation

- Poor naming conventions

- No ownership metadata

- Ignoring sensitive data lineage

- Lack of lineage for RAG and vector pipelines

- No impact analysis before schema changes

- Treating lineage as only a compliance task

- No integration with monitoring systems

- Missing artifact and code lineage

- Vendor lock-in without metadata portability

- Not validating lineage completeness
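Several of the mistakes above, such as missing ownership, missing code lineage, and unvalidated completeness, can be caught with an automated check over whatever lineage records a team already keeps. The following is a minimal sketch, assuming each model has a dictionary of lineage metadata. The `REQUIRED_FIELDS` policy and the sample records are hypothetical.

```python
# Assumed policy: every registered model must carry these lineage fields.
REQUIRED_FIELDS = ("dataset", "code_version", "owner", "features")


def missing_lineage(model_records):
    """Map each model name to the required lineage fields it lacks."""
    gaps = {}
    for name, record in model_records.items():
        # Empty strings and empty lists count as missing.
        missing = [f for f in REQUIRED_FIELDS if not record.get(f)]
        if missing:
            gaps[name] = missing
    return gaps


models = {
    "churn_v3": {"dataset": "orders_2024", "code_version": "a1b2c3d",
                 "owner": "ml-platform", "features": ["order_count"]},
    "ltv_v1": {"dataset": "customers", "code_version": "", "features": []},
}
print(missing_lineage(models))
```

Running a check like this in CI or before deployment approval turns lineage completeness into a gate rather than a periodic manual review.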

FAQs

1. What is data lineage in AI pipelines?

Data lineage tracks where data comes from, how it changes, and where it flows across AI pipelines.

2. What is model lineage?

Model lineage tracks which data, features, code, experiments, artifacts, and evaluations produced a model.

3. Why is lineage important for AI systems?

Lineage helps teams debug failures, reproduce models, assess impact, support audits, and improve governance.

4. What is OpenLineage?

OpenLineage is an open standard for collecting lineage metadata about datasets, jobs, and pipeline runs.

5. Does MLflow support model lineage?

Yes. MLflow supports experiment tracking, model registry workflows, artifacts, and model metadata useful for lineage.

6. What is column-level lineage?

Column-level lineage tracks how individual columns move and transform through pipelines.
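As a rough illustration, column-level lineage can be thought of as a mapping from each derived column to the columns it was computed from. The sketch below assumes that mapping is available as a dictionary and that it contains no cycles. The column names, the `SENSITIVE` tag set, and both helper functions are hypothetical.

```python
# Hypothetical column-level edges: derived column -> columns it is computed from.
COLUMN_LINEAGE = {
    "features.customer_profile.age_bucket": ["raw.customers.birth_date"],
    "features.customer_profile.region": ["raw.customers.postcode"],
    "features.order_stats.order_count": ["raw.orders.order_id"],
    "training.churn.age_bucket": ["features.customer_profile.age_bucket"],
    "training.churn.order_count": ["features.order_stats.order_count"],
}

# Assumed sensitivity tags on raw columns.
SENSITIVE = {"raw.customers.birth_date", "raw.customers.postcode"}


def source_columns(column, lineage=COLUMN_LINEAGE):
    """Resolve a column back to the raw columns it ultimately derives from."""
    parents = lineage.get(column)
    if not parents:
        return {column}  # no recorded parents: treat as a source column
    sources = set()
    for parent in parents:
        sources |= source_columns(parent, lineage)
    return sources


def touches_sensitive(column):
    """True if any raw source of `column` is tagged as sensitive."""
    return bool(source_columns(column) & SENSITIVE)


for col in ("training.churn.age_bucket", "training.churn.order_count"):
    print(col, sorted(source_columns(col)), touches_sensitive(col))
```

This is the kind of question column-level lineage answers for governance teams: which training columns are derived from personal data, even after several transformations.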

7. Do lineage tools support LLM pipelines?

Some tools support LLM tracing directly, while others require custom metadata for prompts, embeddings, retrieval data, and outputs.
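Where custom metadata is needed, one possible approach is to record a small trace per LLM call that captures the prompt template version, the retrieved documents, the models involved, and hashes of the rendered prompt and output. The sketch below is only an illustration. The `RagTrace` fields, the `trace_call` helper, and all identifiers in the example are assumptions rather than any tool's schema.

```python
import hashlib
from dataclasses import dataclass, field, asdict


def short_hash(text):
    """Short, stable fingerprint so raw prompts and outputs need not be stored."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]


@dataclass
class RagTrace:
    """One LLM call with the lineage needed to reproduce or audit it."""
    prompt_template_id: str
    prompt_template_version: str
    model_name: str
    embedding_model: str
    retrieved_doc_ids: list = field(default_factory=list)
    rendered_prompt_hash: str = ""
    output_hash: str = ""


def trace_call(template_id, template_version, model_name, embedding_model,
               retrieved_doc_ids, rendered_prompt, output):
    return RagTrace(
        prompt_template_id=template_id,
        prompt_template_version=template_version,
        model_name=model_name,
        embedding_model=embedding_model,
        retrieved_doc_ids=list(retrieved_doc_ids),  # keep retrieval rank order
        rendered_prompt_hash=short_hash(rendered_prompt),
        output_hash=short_hash(output),
    )


trace = trace_call(
    "support_answer", "v4", "example-llm", "example-embedder",
    ["kb/42", "kb/7"],
    rendered_prompt="Answer using: ...",
    output="Your order ships in 2 days.",
)
print(asdict(trace))
```

Storing hashes instead of raw text is a design choice that keeps sensitive content out of the lineage store while still letting teams detect when a prompt or output changed.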

8. How does lineage help with compliance?

Lineage provides audit trails showing how data and models were created, transformed, validated, and deployed.

9. What tools are best for open-source lineage?

OpenLineage, Marquez, MLflow, OpenMetadata, DataHub, ClearML, and Pachyderm are strong open-source options.

10. Can lineage tools prevent model failures?

They do not prevent failures directly, but they make root-cause analysis and impact assessment much faster.

11. What should teams track in model lineage?

Teams should track datasets, features, code version, parameters, metrics, artifacts, approvals, deployments, and monitoring results.
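The list above can be captured as a single structured record per model version, which also makes it easy to see what changed between two versions. The following is a minimal sketch, assuming a team defines its own schema. The `ModelLineage` fields, the `changed_fields` helper, and the sample values are hypothetical.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class ModelLineage:
    """Lineage record for one model version (illustrative schema)."""
    model_name: str
    model_version: str
    datasets: list
    features: list
    code_version: str
    parameters: dict = field(default_factory=dict)
    metrics: dict = field(default_factory=dict)
    artifacts: list = field(default_factory=list)
    approvals: list = field(default_factory=list)
    deployments: list = field(default_factory=list)

    def to_json(self):
        return json.dumps(asdict(self), sort_keys=True)


def changed_fields(old, new):
    """Names of the lineage fields that differ between two versions."""
    a, b = asdict(old), asdict(new)
    return sorted(k for k in a if a[k] != b[k])


v1 = ModelLineage("churn", "1", ["orders_2024_q1"], ["order_count"], "a1b2c3d",
                  parameters={"max_depth": 6}, metrics={"auc": 0.81})
v2 = ModelLineage("churn", "2", ["orders_2024_q2"], ["order_count"], "a1b2c3d",
                  parameters={"max_depth": 6}, metrics={"auc": 0.78})
print(changed_fields(v1, v2))
```

In this example the code and parameters are unchanged between versions, so the dataset is the first place to look when explaining the drop in the metric.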

12. How should teams start with lineage?

Start with one high-value AI pipeline, automate metadata capture, connect training runs to datasets and models, then expand gradually.

Conclusion

Data/Model Lineage for AI Pipelines is essential for reproducibility, governance, auditability, and operational reliability. Open standards like OpenLineage and tools like Marquez help teams avoid vendor lock-in, while platforms such as MLflow, Weights & Biases, Neptune AI, and ClearML provide strong experiment and model lineage for AI development workflows. Enterprise metadata and governance platforms like Unity Catalog, DataHub, and OpenMetadata extend lineage across datasets, features, models, and downstream systems. The best tool depends on whether your main need is model reproducibility, data governance, pipeline observability, or enterprise compliance. Start with one important AI pipeline, track datasets and model artifacts, connect lineage to monitoring and governance, then expand lineage coverage across your AI ecosystem.
