Introduction

Model explainability platforms help organizations understand how AI and machine learning systems make decisions. As AI systems become more complex, especially with deep learning models, LLMs, recommendation engines, and autonomous AI agents, explainability has become essential for trust, compliance, debugging, governance, and operational safety.

Explainable AI platforms provide tools for feature attribution, prediction analysis, bias detection, root-cause diagnostics, counterfactual explanations, model transparency, and production observability. These platforms help data science, compliance, security, and business teams understand why models behave the way they do and whether those decisions are reliable, fair, and compliant. Explainable AI is increasingly considered foundational for enterprise AI adoption, especially in regulated and high-risk environments.

Why It Matters

- Improves trust in AI systems

- Helps debug model behavior

- Supports compliance and audit requirements

- Detects hidden bias and unfair decisions

- Enables safer enterprise AI deployment

- Improves transparency in AI-driven decisions

Real-World Use Cases

- Explaining loan approval predictions

- Healthcare diagnosis transparency

- Fraud detection analysis

- LLM output explainability

- AI agent reasoning inspection

- Hiring algorithm audits

- Insurance risk scoring analysis

- Production AI debugging and monitoring

Evaluation Criteria for Buyers

- Explainability depth and visualization quality

- Support for black-box and deep learning models

- Production monitoring capabilities

- Fairness and bias analysis support

- Root-cause analysis functionality

- LLM explainability support

- Integration with MLOps pipelines

- Governance and audit workflows

- Scalability across enterprise AI systems

- Ease of use for technical and business teams

Best For

Organizations deploying production-grade AI systems that require transparency, explainability, auditability, and model diagnostics across enterprise workflows.

Not Ideal For

Simple ML experiments where interpretability, governance, and operational monitoring are not critical.

What’s Changing in Model Explainability Platforms

- Explainability is becoming mandatory for enterprise AI adoption

- Black-box AI models are increasingly viewed as operational risks

- Explainability is merging with AI observability and governance

- LLM and generative AI explainability is becoming a major focus

- Counterfactual explanations are gaining enterprise adoption

- Runtime explainability is replacing static analysis workflows

- Human-centered explainability design is becoming important in enterprise UX

- Explainability platforms are integrating fairness and bias testing

- AI copilots and autonomous agents now require transparent reasoning paths

- Production explainability monitoring is becoming standard in MLOps workflows

Quick Buyer Checklist

Before selecting a model explainability platform, confirm that it provides:

- Feature attribution and interpretability support

- Deep learning and black-box model compatibility

- Production monitoring capabilities

- Fairness and bias analysis

- Counterfactual explanation support

- Explainability visualization tools

- Audit and governance workflows

- Integration with ML pipelines

- Enterprise scalability and observability

- LLM and generative AI explainability support
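Counterfactual explanation support, one of the checklist items above, is easier to evaluate with a concrete picture in mind. A counterfactual answers the question: what is the smallest change to this input that would have flipped the decision? The sketch below is only an illustration under stated assumptions: the `approve` scoring rule, the feature names `income_k` and `open_debts`, and the one-feature-at-a-time search are all hypothetical and do not represent any vendor's method.

```python
def approve(applicant):
    """Hypothetical loan model: approve when the integer point score reaches 0."""
    score = 4 * applicant["income_k"] - 50 * applicant["open_debts"] - 100
    return score >= 0


def single_feature_counterfactual(model, applicant, feature, step, max_steps=100):
    """Return the nearest input, changing only `feature`, that flips the decision.

    The search moves in multiples of `step` and gives up after `max_steps`.
    """
    original = model(applicant)
    for k in range(1, max_steps + 1):
        candidate = dict(applicant)
        candidate[feature] = applicant[feature] + k * step
        if model(candidate) != original:
            return candidate
    return None


# Hypothetical rejected applicant: income in thousands, number of open debts.
applicant = {"income_k": 40, "open_debts": 2}
decision = approve(applicant)

# "What income would have led to approval?" and "How many fewer debts?"
cf_income = single_feature_counterfactual(approve, applicant, "income_k", step=1)
cf_debts = single_feature_counterfactual(approve, applicant, "open_debts", step=-1)

print(decision, cf_income, cf_debts)
```

Production platforms typically search over several features at once and restrict changes to ones a person could actually make, so this single-feature search is best read as the simplest possible case.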

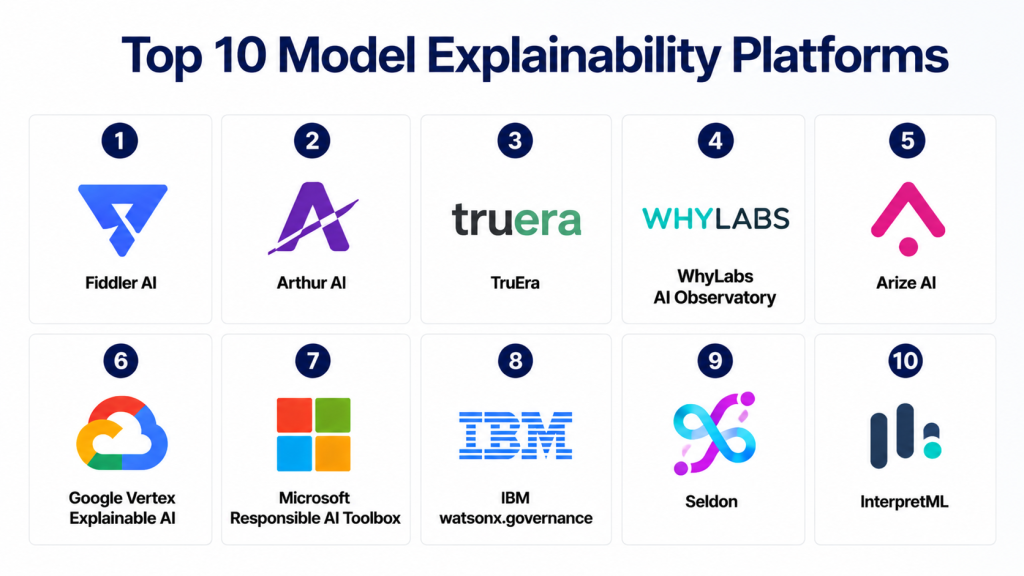

Top 10 Model Explainability Platforms

1. Fiddler AI

2. Arthur AI

3. TruEra

4. WhyLabs AI Observatory

5. Arize AI

6. Google Vertex Explainable AI

7. Microsoft Responsible AI Toolbox

8. IBM watsonx.governance

9. Seldon

10. InterpretML

1. Fiddler AI

One-line Verdict

Best enterprise explainability platform for production AI observability and model transparency.

Short Description

Fiddler AI provides explainability, fairness analysis, monitoring, and AI observability capabilities for enterprise AI systems. It helps organizations understand model decisions, identify drift, analyze bias, and explain predictions in production environments.

The platform is widely used for enterprise AI monitoring and explainable AI workflows. Review platforms highlight its strong visual explanation capabilities and production-focused explainability tooling.

Standout Capabilities

- Local and global explanations

- Feature attribution analysis

- Bias and fairness dashboards

- Drift monitoring

- Root-cause analysis

- Real-time observability

- Governance workflows

- Explainability visualizations

AI-Specific Depth

Fiddler enables teams to understand why models make specific predictions and how model behavior changes in production.

Pros

- Excellent explainability dashboards

- Strong production monitoring support

- Enterprise-ready observability tooling

Cons

- Enterprise pricing

- Requires integration effort

- Complex onboarding for smaller teams

Security & Compliance

Enterprise-grade governance and compliance support available.

Deployment & Platforms

- Cloud

- Hybrid enterprise environments

Integrations & Ecosystem

- ML pipelines

- Enterprise AI platforms

- Data engineering workflows

- Monitoring systems

Pricing Model

Enterprise subscription pricing.

Best-Fit Scenarios

- Production AI explainability

- Enterprise AI observability

- Responsible AI workflows

2. Arthur AI

One-line Verdict

Best explainability platform for enterprise AI monitoring and diagnostics.

Short Description

Arthur AI combines explainability, monitoring, fairness analysis, and performance tracking for enterprise AI systems operating in production environments.

Standout Capabilities

- Explainability dashboards

- Prediction diagnostics

- Fairness analysis

- Drift detection

- AI observability

- Alerting workflows

- Root-cause diagnostics

- Governance reporting

AI-Specific Depth

Arthur AI helps organizations understand why model performance changes and how predictions vary across user groups.
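To make "how predictions vary across user groups" concrete, one widely used fairness check compares the share of positive predictions each group receives. The sketch below is a plain-Python illustration, not Arthur AI's API; the function names and the sample data are hypothetical.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Return the share of positive predictions (1) within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(predictions, groups):
    """Gap between the highest and lowest group selection rates (0 = parity)."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical binary predictions (1 = approved) for two user groups.
preds = [1, 1, 0, 1, 0, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

rates = selection_rates(preds, groups)
gap = demographic_parity_difference(preds, groups)
print(rates, gap)
```

A large gap does not prove unfair treatment on its own, but it flags where a deeper review of the model and its training data is warranted.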

Pros

- Strong enterprise monitoring

- Good explainability tooling

- Production-ready architecture

Cons

- Enterprise pricing

- Requires ML integration

- Complex deployment for smaller teams

Security & Compliance

Enterprise governance support available.

Deployment & Platforms

- Cloud and hybrid deployments

Integrations & Ecosystem

- MLOps systems

- AI infrastructure

- Enterprise workflows

Pricing Model

Enterprise subscription pricing.

Best-Fit Scenarios

- Enterprise AI monitoring

- Explainability workflows

- AI risk analysis

3. TruEra

One-line Verdict

Best for explainability-driven model diagnostics and debugging.

Short Description

TruEra focuses on model explainability, diagnostics, performance analysis, and fairness evaluation to help organizations improve AI reliability and transparency.

Review platforms highlight TruEra’s strong root-cause analysis and diagnostic capabilities for production AI systems.

Standout Capabilities

- Model diagnostics

- Explainability analysis

- Root-cause investigation

- Drift monitoring

- Fairness testing

- Error analysis

- Feature impact evaluation

- Governance reporting

AI-Specific Depth

TruEra helps teams understand why models fail, drift, or generate unexpected outputs.

Pros

- Excellent diagnostics tooling

- Strong explainability features

- Useful for debugging complex models

Cons

- Requires ML expertise

- Enterprise-focused pricing

- Less policy management functionality

Security & Compliance

Enterprise-grade governance and compliance support.

Deployment & Platforms

- Cloud and hybrid

Integrations & Ecosystem

- ML pipelines

- Enterprise AI systems

- Data science workflows

Pricing Model

Enterprise licensing.

Best-Fit Scenarios

- AI debugging

- Explainability diagnostics

- Production model analysis

4. WhyLabs AI Observatory

One-line Verdict

Best for explainability combined with AI observability and drift analysis.

Short Description

WhyLabs AI Observatory focuses on monitoring, drift detection, observability, and explainability for production machine learning systems.

Standout Capabilities

- Drift monitoring

- Data quality analysis

- AI observability

- Explainability workflows

- Real-time monitoring

- Alerting systems

- Governance dashboards

- Production analytics

AI-Specific Depth

WhyLabs helps teams track changing model behavior while identifying explainability and reliability issues over time.
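Tracking changing behavior usually comes down to comparing a production distribution against a reference one. The sketch below uses the Population Stability Index (PSI), a common drift statistic chosen here purely for illustration; the article does not say which statistics WhyLabs computes, and the bin edges and sample scores are hypothetical. It assumes every value falls inside the bin edges.

```python
import math


def psi(expected, actual, edges, eps=1e-6):
    """Population Stability Index between two samples over shared bins.

    Empty bins are floored at `eps` so the logarithm stays defined.
    """
    def proportions(values):
        counts = [0] * (len(edges) - 1)
        for v in values:
            for i in range(len(edges) - 1):
                is_last = i == len(edges) - 2
                # Bins are half-open, except the last one includes its upper edge.
                if edges[i] <= v < edges[i + 1] or (is_last and v == edges[-1]):
                    counts[i] += 1
                    break
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical model scores: training-time baseline versus recent production.
edges = [0.0, 0.25, 0.5, 0.75, 1.0]
baseline = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
production = [0.5, 0.6, 0.6, 0.7, 0.7, 0.8, 0.8, 0.9]

no_drift = psi(baseline, baseline, edges)
drift = psi(baseline, production, edges)
print(no_drift, drift)
```

Identical distributions give a PSI of zero, and the value grows as the production scores move away from the baseline. Alert thresholds are a per-model judgment call and should be tuned rather than copied.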

Pros

- Strong observability platform

- Excellent drift detection

- Real-time monitoring support

Cons

- Enterprise pricing

- Requires setup effort

- Limited governance workflows

Security & Compliance

Enterprise monitoring and governance support.

Deployment & Platforms

- Cloud platform

Integrations & Ecosystem

- ML pipelines

- Data warehouses

- Production AI systems

Pricing Model

Usage-based enterprise pricing.

Best-Fit Scenarios

- AI observability

- Production monitoring

- Drift and explainability analysis

5. Arize AI

One-line Verdict

Best for explainability and production AI performance analysis.

Short Description

Arize AI provides model observability, explainability, monitoring, and performance analysis for enterprise AI deployments.

Industry reviews highlight its strong automated drift detection and production monitoring workflows.

Standout Capabilities

- Model observability

- Explainability workflows

- Drift analysis

- Prediction tracing

- Root-cause diagnostics

- Data quality monitoring

- Real-time alerting

- AI analytics dashboards

AI-Specific Depth

Arize helps organizations understand how model performance changes and why predictions behave unexpectedly.

Pros

- Strong observability features

- Scalable monitoring architecture

- Good enterprise integrations

Cons

- Enterprise pricing

- Requires integration setup

- Learning curve for advanced features

Security & Compliance

Enterprise-grade deployment and governance support.

Deployment & Platforms

- Cloud platform

Integrations & Ecosystem

- ML platforms

- AI workflows

- Data engineering systems

Pricing Model

Enterprise subscription pricing.

Best-Fit Scenarios

- Production AI observability

- Explainability monitoring

- Enterprise AI analytics

6. Google Vertex Explainable AI

One-line Verdict

Best explainability platform for Google Cloud AI ecosystems.

Short Description

Google Vertex Explainable AI provides feature attribution, prediction analysis, and explainability workflows integrated into Vertex AI environments.

Industry reviews highlight its support for techniques such as Integrated Gradients and Sampled Shapley explanations.

Standout Capabilities

- Feature attribution

- Integrated Gradients

- Sampled Shapley analysis

- Prediction explanation APIs

- TensorFlow integration

- Model analysis workflows

- Explainability visualizations

- Vertex AI integration

AI-Specific Depth

Vertex Explainable AI enables teams to understand which features most influence predictions across models and datasets.
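Integrated Gradients, one of the attribution techniques named above, assigns each feature the gradient of the model output accumulated along a straight path from a baseline input to the actual input. The sketch below approximates that idea in plain Python with finite-difference gradients and a midpoint sum. It is not the Vertex AI API: the `model` function, its coefficients, and the all-zero baseline are hypothetical.

```python
import math


def model(x):
    """Hypothetical differentiable scoring model (sigmoid of a weighted sum)."""
    z = 0.8 * x[0] - 0.5 * x[1] + 0.3 * x[0] * x[2]
    return 1.0 / (1.0 + math.exp(-z))


def gradient(f, x, h=1e-5):
    """Central finite-difference estimate of the gradient of f at x."""
    grads = []
    for i in range(len(x)):
        up = list(x)
        down = list(x)
        up[i] += h
        down[i] -= h
        grads.append((f(up) - f(down)) / (2 * h))
    return grads


def integrated_gradients(f, x, baseline, steps=200):
    """Average the gradient along the baseline-to-input path, then scale."""
    total = [0.0] * len(x)
    for k in range(steps):
        alpha = (k + 0.5) / steps  # midpoint of each path segment
        point = [b + alpha * (xi - b) for xi, b in zip(x, baseline)]
        for i, g in enumerate(gradient(f, point)):
            total[i] += g
    return [(xi - b) * t / steps for xi, b, t in zip(x, baseline, total)]


x = [1.0, 2.0, 0.5]
baseline = [0.0, 0.0, 0.0]
attributions = integrated_gradients(model, x, baseline)
output_change = model(x) - model(baseline)
print(attributions, sum(attributions), output_change)
```

A useful sanity check is that the attributions sum to approximately the difference between the model output at the input and at the baseline, which is why the choice of baseline matters so much in practice.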

Pros

- Strong GCP integration

- Scalable cloud architecture

- Good attribution techniques

Cons

- Google Cloud dependency

- Less cross-cloud flexibility

- Enterprise complexity

Security & Compliance

Google Cloud enterprise security and compliance support.

Deployment & Platforms

- Google Cloud

- Vertex AI workflows

Integrations & Ecosystem

- TensorFlow

- BigQuery

- Vertex AI pipelines

Pricing Model

Usage-based cloud pricing.

Best-Fit Scenarios

- GCP AI environments

- Feature attribution analysis

- Cloud-native explainability

7. Microsoft Responsible AI Toolbox

One-line Verdict

Best explainability toolkit for Azure ML and responsible AI workflows.

Short Description

Microsoft Responsible AI Toolbox combines fairness analysis, explainability, debugging, and interpretability workflows into an integrated responsible AI toolkit.

Standout Capabilities

- Explainability dashboards

- Error analysis

- Fairness assessment

- Model debugging

- Feature importance analysis

- Responsible AI workflows

- Azure ML integration

- Visualization tooling

AI-Specific Depth

The toolbox helps teams investigate why models make decisions and where model errors occur across groups and cohorts.

Pros

- Strong responsible AI workflows

- Excellent debugging support

- Azure-native integration

Cons

- Azure ecosystem dependency

- Requires ML expertise

- Limited standalone governance features

Security & Compliance

Enterprise-grade Azure security support.

Deployment & Platforms

- Azure ML

- Python workflows

Integrations & Ecosystem

- Azure AI

- ML pipelines

- Python ML frameworks

Pricing Model

Open-source toolkit; Azure usage pricing applies when run on Azure ML.

Best-Fit Scenarios

- Responsible AI workflows

- Azure ML explainability

- Model debugging

8. IBM watsonx.governance

One-line Verdict

Best enterprise explainability and governance platform for regulated industries.

Short Description

IBM watsonx.governance combines explainability, fairness analysis, governance, monitoring, and compliance workflows for enterprise AI systems.

Standout Capabilities

- Explainability dashboards

- Fairness monitoring

- Governance workflows

- Compliance automation

- Audit trails

- AI lifecycle tracking

- Monitoring tools

- Enterprise reporting

AI-Specific Depth

IBM helps organizations explain model behavior while maintaining governance and auditability across the AI lifecycle.

Pros

- Strong governance ecosystem

- Excellent compliance support

- Enterprise-scale deployment capabilities

Cons

- Higher implementation complexity

- Works best when aligned with the IBM ecosystem

- Enterprise pricing

Security & Compliance

Strong enterprise compliance and governance support.

Deployment & Platforms

- Hybrid cloud deployments

Integrations & Ecosystem

- IBM AI ecosystem

- Enterprise governance systems

- ML workflows

Pricing Model

Enterprise licensing.

Best-Fit Scenarios

- Regulated AI systems

- Enterprise explainability

- Compliance-focused AI programs

9. Seldon

One-line Verdict

Best framework-agnostic explainability platform for Kubernetes-based ML systems.

Short Description

Seldon provides deployment, monitoring, explainability, and governance tooling for machine learning models running in Kubernetes environments.

Industry reviews highlight its framework-agnostic architecture and operational flexibility.

Standout Capabilities

- Explainability APIs

- Kubernetes-native deployment

- Framework-agnostic support

- Monitoring workflows

- Drift analysis

- Governance integrations

- Real-time inference support

- Scalable deployment pipelines

AI-Specific Depth

Seldon helps organizations operationalize explainability and monitoring across distributed ML environments.

Pros

- Strong Kubernetes integration

- Flexible deployment support

- Framework-agnostic architecture

Cons

- Requires DevOps expertise

- Complex setup

- Less beginner-friendly

Security & Compliance

Enterprise infrastructure security depends on deployment configuration.

Deployment & Platforms

- Kubernetes

- Cloud and hybrid environments

Integrations & Ecosystem

- TensorFlow

- PyTorch

- Kubeflow

- ML pipelines

Pricing Model

Enterprise and open-source options.

Best-Fit Scenarios

- Kubernetes ML operations

- Explainable inference systems

- Enterprise MLOps

10. InterpretML

One-line Verdict

Best open-source explainability toolkit for interpretable machine learning.

Short Description

InterpretML is an open-source explainability toolkit focused on interpretable models and black-box explainability workflows.

Industry explainability reviews highlight it as a strong general-purpose explainability framework.

Standout Capabilities

- Glassbox interpretable models

- Black-box explainability

- Feature importance analysis

- SHAP integration

- Visualization support

- Python workflows

- Model diagnostics

- Open-source flexibility

AI-Specific Depth

InterpretML enables teams to build interpretable models while also explaining black-box model predictions.
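The appeal of a glassbox model is that the prediction itself is the explanation: the score is an intercept plus one readable term per feature. The sketch below illustrates that additive structure in plain Python. It deliberately does not use InterpretML's API, and the feature names, lookup tables, and intercept are hypothetical values chosen for illustration.

```python
# Hypothetical additive model: score = INTERCEPT + sum of per-feature terms.
INTERCEPT = -1.0

# Each feature has its own "shape function", stored here as a lookup table.
SHAPE_FUNCTIONS = {
    "age_band": {"18-30": -0.4, "31-50": 0.2, "51+": 0.5},
    "smoker": {"no": -0.3, "yes": 0.9},
}


def explain(instance):
    """Return the score and the exact contribution of every feature."""
    contributions = {
        feature: SHAPE_FUNCTIONS[feature][value]
        for feature, value in instance.items()
    }
    score = INTERCEPT + sum(contributions.values())
    return score, contributions


score, contributions = explain({"age_band": "51+", "smoker": "yes"})
print(score, contributions)
```

Because each term can be read directly, no post-hoc approximation is needed. Black-box explainers, by contrast, estimate such contributions after the fact.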

Pros

- Open-source flexibility

- Good explainability coverage

- Developer-friendly workflows

Cons

- Requires coding expertise

- Limited enterprise governance features

- Production monitoring must be added separately

Security & Compliance

Depends on deployment environment.

Deployment & Platforms

- Python environments

- Notebook workflows

Integrations & Ecosystem

- Scikit-learn

- SHAP

- Python ML stack

Pricing Model

Open-source.

Best-Fit Scenarios

- Explainable ML research

- Developer workflows

- Interpretable AI projects

Comparison Table

| Platform | Best For | Core Strength | Production Monitoring | Governance Support | Deployment |
|---|---|---|---|---|---|
| Fiddler AI | Enterprise observability | Explainability + monitoring | High | High | Cloud/Hybrid |
| Arthur AI | Enterprise diagnostics | Monitoring + explainability | High | Medium | Cloud/Hybrid |
| TruEra | Model debugging | Diagnostics | Medium | Medium | Cloud/Hybrid |
| WhyLabs | AI observability | Drift + explainability | High | Medium | Cloud |
| Arize AI | Production analytics | Observability | High | Medium | Cloud |
| Vertex Explainable AI | GCP ecosystems | Feature attribution | Medium | Medium | GCP |
| Microsoft RAI Toolbox | Azure ML | Debugging + fairness | Medium | Medium | Azure |
| IBM watsonx | Regulated industries | Governance + explainability | High | Very High | Hybrid |
| Seldon | Kubernetes AI | Explainable inference | High | Medium | Kubernetes |
| InterpretML | Open-source workflows | Interpretable ML | Low | Low | Python |

Scoring & Evaluation Table

| Platform | Core Features | Ease | Integrations | Security | Performance | Support | Value | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| Fiddler AI | 9.3 | 8.6 | 9.1 | 9.2 | 9.1 | 8.8 | 8.5 | 8.9 |
| Arthur AI | 9.1 | 8.4 | 8.9 | 9.0 | 9.0 | 8.6 | 8.4 | 8.8 |
| TruEra | 9.0 | 8.3 | 8.8 | 8.9 | 8.8 | 8.5 | 8.4 | 8.7 |
| WhyLabs | 8.9 | 8.7 | 8.8 | 8.8 | 9.0 | 8.4 | 8.7 | 8.8 |
| Arize AI | 9.1 | 8.5 | 9.0 | 8.9 | 9.1 | 8.6 | 8.5 | 8.8 |
| Vertex Explainable AI | 9.0 | 8.4 | 9.1 | 9.0 | 8.9 | 8.5 | 8.4 | 8.8 |
| Microsoft RAI Toolbox | 8.9 | 8.8 | 9.0 | 8.9 | 8.7 | 8.5 | 8.9 | 8.8 |
| IBM watsonx | 9.4 | 8.0 | 9.1 | 9.5 | 9.0 | 8.9 | 8.2 | 8.9 |
| Seldon | 8.8 | 7.9 | 9.2 | 8.8 | 9.1 | 8.3 | 8.5 | 8.6 |
| InterpretML | 8.7 | 8.5 | 8.4 | 8.0 | 8.5 | 8.2 | 9.0 | 8.5 |
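The table does not state how the Weighted Total column is derived, so readers who want to apply their own priorities can recompute it. The sketch below shows the arithmetic under a loudly stated assumption: equal weights across all seven criteria. The weights and the criterion keys are hypothetical, and the results need not reproduce every published total.

```python
CRITERIA = [
    "core", "ease", "integrations", "security", "performance", "support", "value",
]

# Assumption: equal weights. The article does not publish its weighting,
# so replace these values with weights that reflect your own priorities.
WEIGHTS = {name: 1.0 for name in CRITERIA}


def weighted_total(scores, weights):
    """Weighted average of criterion scores, normalised by the total weight."""
    total_weight = sum(weights.values())
    return sum(scores[c] * weights[c] for c in weights) / total_weight


# Criterion scores for Fiddler AI, copied from the table above.
fiddler = dict(zip(CRITERIA, [9.3, 8.6, 9.1, 9.2, 9.1, 8.8, 8.5]))
total = round(weighted_total(fiddler, WEIGHTS), 1)
print(total)
```

Raising the weight on, say, `security` and rerunning the calculation for each row is a quick way to see whether the ranking still holds for a compliance-driven purchase.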

Top 3 Recommendations

Best for Enterprise AI

- Fiddler AI

- IBM watsonx.governance

- Arthur AI

Best for Developers

- InterpretML

- Microsoft Responsible AI Toolbox

- Seldon

Best for Production Monitoring

- Arize AI

- WhyLabs AI Observatory

- Fiddler AI

Which Model Explainability Platform Is Right for You

For Solo Developers

InterpretML and Microsoft Responsible AI Toolbox are strong choices because they provide flexible explainability workflows without requiring large governance infrastructure.

For SMBs

WhyLabs and Arize AI provide scalable observability and explainability with relatively approachable deployment models.

For Mid-Market Organizations

Arthur AI and TruEra balance diagnostics, explainability, and monitoring without requiring massive governance programs.

For Enterprise AI Programs

Fiddler AI, IBM watsonx.governance, and Vertex Explainable AI are better suited for organizations that need governance, monitoring, compliance, and large-scale explainability workflows.

Budget vs Premium

Open-source explainability frameworks reduce cost but require engineering effort. Enterprise platforms provide monitoring, governance, dashboards, and compliance support at larger scale.

Feature Depth vs Ease of Use

Fiddler AI and Arize AI balance usability and enterprise depth, while Seldon offers more operational flexibility for Kubernetes-heavy environments.

Integrations & Scalability

Cloud-native explainability platforms integrate better into enterprise MLOps pipelines and production AI systems.

Security & Compliance Needs

Highly regulated industries should prioritize IBM watsonx.governance, Fiddler AI, and Vertex Explainable AI.

Implementation Playbook

First 30 Days

- Identify high-risk AI models

- Define explainability requirements

- Select explainability platform

- Configure baseline monitoring

- Enable feature attribution analysis

Days 31–60

- Add fairness and drift monitoring

- Create explainability dashboards

- Build audit workflows

- Integrate MLOps pipelines

- Validate explanations with domain experts

Days 61–90

- Scale explainability monitoring across production systems

- Automate reporting workflows

- Add human review checkpoints

- Optimize monitoring thresholds

- Improve governance and compliance reporting

Common Mistakes to Avoid

- Treating explainability as a one-time audit

- Ignoring runtime explainability monitoring

- Using accuracy as the only evaluation metric

- Overlooking fairness and bias analysis

- Poor visualization design for business users

- Lack of governance integration

- Weak production monitoring setup

- Ignoring LLM explainability requirements

- Not validating explanations with stakeholders

- Failing to document explanation workflows

- Missing root-cause diagnostics

- Treating explainability as only a technical requirement

Frequently Asked Questions

1. What are model explainability platforms?

Model explainability platforms help organizations understand how AI systems make decisions. They provide tools for feature attribution, prediction analysis, monitoring, diagnostics, and transparency across machine learning workflows.

2. Why is explainability important in AI?

Explainability improves trust, transparency, compliance, and debugging capabilities. It helps organizations understand why models make decisions and identify potential risks, bias, or operational issues.

3. What is explainable AI?

Explainable AI refers to methods and tools that make AI decisions understandable to humans. It includes feature attribution, interpretable models, visualizations, and decision analysis workflows.

4. What is the difference between explainable and interpretable AI?

Interpretable AI usually refers to models that are inherently understandable, while explainable AI often refers to methods that explain complex or black-box models after predictions are made.

5. Which platforms are best for enterprise explainability?

Fiddler AI, IBM watsonx.governance, Arthur AI, and Arize AI are strong enterprise-focused explainability platforms with monitoring and governance support.

6. What are SHAP and LIME?

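SHAP and LIME are popular explainability techniques for estimating feature importance and explaining individual predictions. SHAP (SHapley Additive exPlanations) attributes a prediction to its input features using Shapley values from cooperative game theory. LIME (Local Interpretable Model-agnostic Explanations) fits a simple, interpretable surrogate model around a single prediction to approximate how the original model behaves nearby.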
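The Shapley value behind SHAP can be computed exactly for a tiny model by averaging each feature's marginal contribution over every possible ordering of the features. The sketch below does that in plain Python. It is not the SHAP library's API: the `predict` function, the single all-zero baseline, and the instance are hypothetical, and the factorial cost makes this approach practical only for a handful of features.

```python
from itertools import permutations


def predict(x):
    """Hypothetical model with an interaction between the first two features."""
    return 10 * x[0] + 5 * x[1] + 2 * x[0] * x[1]


def shapley_values(f, instance, baseline):
    """Exact Shapley values relative to a single baseline input."""
    n = len(instance)
    totals = [0.0] * n
    orderings = list(permutations(range(n)))
    for order in orderings:
        current = list(baseline)
        previous = f(current)
        # Switch features from baseline to actual value one at a time.
        for i in order:
            current[i] = instance[i]
            value = f(current)
            totals[i] += value - previous
            previous = value
    return [t / len(orderings) for t in totals]


instance = [1, 1, 1]
baseline = [0, 0, 0]
phi = shapley_values(predict, instance, baseline)
print(phi)  # → [11.0, 6.0, 0.0]
```

The interaction term is split evenly between the two features that share it, the unused third feature receives zero, and the values sum to the difference between the prediction and the baseline prediction. Libraries such as SHAP rely on approximations and model-specific shortcuts to make this tractable for real models.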

7. Can explainability platforms monitor production AI systems?

Yes. Modern explainability platforms often include observability, drift detection, monitoring, and real-time analytics for production AI systems.

8. Why is explainability important for LLMs?

LLMs and AI agents can generate unpredictable outputs, so explainability helps organizations understand reasoning paths, reduce risk, and improve governance.

9. What industries need explainability most?

Finance, healthcare, insurance, government, cybersecurity, and enterprise SaaS are among the industries where explainability is critical.

10. What should buyers prioritize first?

Organizations should prioritize explainability depth, monitoring capabilities, governance integration, production scalability, and compatibility with existing ML workflows.

Conclusion

Model explainability platforms have become a foundational layer in modern enterprise AI systems as organizations increasingly rely on machine learning, LLMs, and autonomous AI agents for critical business decisions. These platforms help teams move beyond black-box AI by providing transparency, monitoring, diagnostics, fairness analysis, and governance workflows that improve trust and operational safety. Solutions such as Fiddler AI, Arthur AI, TruEra, Arize AI, and IBM watsonx.governance are leading the shift toward explainable, observable, and accountable AI systems at enterprise scale. As explainability becomes tightly connected with observability, governance, compliance, and responsible AI operations, organizations that invest early in strong explainability tooling will be better positioned to build scalable and trustworthy AI systems for the future.
