What is Site Scraping?

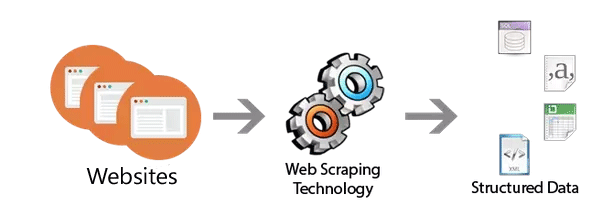

Site scraping, also known as web scraping, screen scraping, web data mining, web harvesting, or web data extraction, is a technique for automatically extracting large amounts of data from websites and saving it in a structured form, either locally or in a database. The data can be anything: product listings, images, videos, text, contact information, and so on. Scraping software can load multiple pages automatically and extract the data you need from them. It can also be run manually or on a schedule to fetch new or updated data and store it for easy access.

The image above illustrates the idea.

Why Site Scraping?

Websites are designed to be viewed in a web browser. When someone needs data from a website, they have to open it in a browser, and the only way to save that data is to copy and paste it or download it by hand, because most websites do not offer a way to save a copy of their data for personal use. When you need a large amount of information from many sites, doing all of this manually is not practical. This is where site scraping comes in: it gets the same job done in a fraction of the time.

So when does one need site scraping?

Nowadays, site scraping is used in many industries, including news, blogs, forums, tourism, e-commerce, social media, real estate, and financial reporting, and the list goes on. The purposes vary as well. Some of them are:

- Research for web content/business intelligence

- Price comparison

- Finding sales leads/conducting market research by crawling public data sources (e.g. Facebook, Twitter & other social networks)

- Sending product data from an e-commerce site to another online vendor (e.g. Google Shopping). This lets you automate the tedious process of updating your product details, which is difficult if your stock changes often.

- Data monitoring

- Data migration, when your data needs to be moved from one place to another.

Whether scraping is ethical or legal is a contradictory question, because search engines work in a similar way. To index and rank a page or website, a search engine crawls the information on all of its pages. Scraping likewise extracts information from web pages, after which the user puts that information to their own use.

From a technical point of view, one can argue that it may not be illegal, because a person who scrapes a site is simply taking information that is publicly available to them through a web browser. But that does not mean site scraping has no negative effect on website owners.

To understand this, let’s first look at how site scraping works.

Human copy and paste – Data is simply copied from the site and pasted into local storage by hand.

HTTP commands – HTTP requests are sent to the web server, which responds with the required resources.

HTML & DOM (Document Object Model) parsers – Software dissects the structure of a website’s front end (its HTML) or the underlying DOM, so that the required information can be obtained by locating the relevant elements and reading their contents.

Custom web-scraping software – There are also software tools that go beyond the techniques above. They can recognize the data structure of a page (with or without user intervention), or provide a recording interface that removes the need to write code by hand, and they can store the scraped data locally or in a database. Such software may include a built-in browser or capture your actions in any browser you have installed. Some web scraping software can also extract data from an API directly.
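To make the HTML parser approach more concrete, here is a minimal sketch in Python that uses only the standard library. The page structure, the `price` class name, and the `PriceExtractor` and `extract_prices` names are assumptions made up for illustration; a real scraper would first fetch the HTML over HTTP and would have to match the markup of the actual target page.

```python
from html.parser import HTMLParser


class PriceExtractor(HTMLParser):
    """Collects the text inside every <span class="price"> element."""

    def __init__(self):
        super().__init__()
        self.in_price = False
        self.prices = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs for the opening tag.
        if tag == "span" and ("class", "price") in attrs:
            self.in_price = True

    def handle_endtag(self, tag):
        if tag == "span":
            self.in_price = False

    def handle_data(self, data):
        # Only keep text that appears inside a matching <span>.
        if self.in_price:
            self.prices.append(data.strip())


def extract_prices(html):
    parser = PriceExtractor()
    parser.feed(html)
    return parser.prices


# Hypothetical page content; in practice this would come from an HTTP response.
SAMPLE_HTML = """
<ul>
  <li>Widget <span class="price">9.99</span></li>
  <li>Gadget <span class="price">24.50</span></li>
</ul>
"""

if __name__ == "__main__":
    print(extract_prices(SAMPLE_HTML))
```

The parser never renders the page; it only walks the tags and picks out the elements it was told to look for, which is why changes to the markup tend to break this kind of scraper.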

Now let’s see how scraping can hurt your website.

SEO ranking: The first and biggest problem with site scraping is that when attackers or scrapers extract data from your site and publish it on their own, search engine crawlers may find and rank their pages instead of yours. Duplicate content of this kind can also harm sites that already rank well.

Unresponsive site: Scraping bots and software send frequent requests in large numbers, which can cause a denial of service (DoS) situation in which your server and all the services hosted on it become unresponsive. This means your users cannot reach your website, and if your business depends on it, you lose business.

Slower page loads: Even if the large number of requests sent by bots and scrapers does not make your site unresponsive, it will certainly slow it down. Loading time is an important factor in ranking well in search engines. Studies have suggested that around 50% of web visitors expect a website to load in 2 seconds or less, and that about 70% will leave if a page takes more than 3 seconds to load.

So the next question is: how can you detect that your site is being scraped?

- Network bandwidth: Monitor your server’s bandwidth to find out whether unwanted bots are visiting your website.

- Keyword research: When you query a search engine for your keywords, new results appear that point to other, similar sites with the same content.

- Multiple requests: If you find many requests coming from the same IP address, your site may be under attack by scrapers.

- Large volume of requests: If you find a high request rate from a single IP address, scrapers may be attacking your site.

- Headless or weird user agent

- Same IP requests in a pattern: Check whether the same IP address is sending requests at predictable (equal) intervals.

- Particular files (CSS, images) are not requested: You may find that certain supporting files are never requested, e.g. favicon.ico and various CSS, image, and JavaScript files. This means the client is not a real browser.

- The client’s request sequence, e.g. the client accesses pages that are not directly reachable.
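Two of the signals above, a high request volume and requests arriving at equal intervals, can be checked from access-log data. The following is a rough sketch, assuming the log has already been parsed into `(ip, timestamp)` pairs; the function name `find_suspicious_ips` and the thresholds are illustrative guesses rather than recommended values.

```python
from collections import defaultdict
from statistics import pstdev


def find_suspicious_ips(requests, max_requests=100, max_jitter=0.05, min_samples=5):
    """requests: iterable of (ip, unix_timestamp) pairs from an access log.

    Returns {ip: reason} for IPs that either send too many requests or
    send requests at almost perfectly regular intervals.
    """
    by_ip = defaultdict(list)
    for ip, ts in requests:
        by_ip[ip].append(ts)

    suspicious = {}
    for ip, times in by_ip.items():
        times.sort()
        if len(times) > max_requests:
            suspicious[ip] = "high request volume"
            continue
        if len(times) >= min_samples:
            # Gaps between consecutive requests from the same IP.
            gaps = [b - a for a, b in zip(times, times[1:])]
            # A person's gaps vary a lot; a naive bot's gaps are nearly equal.
            if pstdev(gaps) <= max_jitter:
                suspicious[ip] = "regular request interval"
    return suspicious
```

Scrapers that add random delays or rotate IP addresses will slip past a check like this, so it is best treated as one signal among several.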

Having covered all of that, here is the main question: how can you protect your site from scraping?

Before going through the solutions, we need to understand that anything accessible to everyone cannot be protected completely, but we can make it difficult for scrapers to extract data from our site. It is also important to make things harder only for scrapers, not for search engine crawlers and real users.

Start monitoring your logs and traffic patterns, and whenever you find unusual activity from the same IP addresses, either block them or limit their access. Only allow users (and scrapers) a limited number of actions in a given period; for example, allow only a few searches per second from any IP address or user. You can also show captchas for subsequent requests.
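One common way to limit the number of actions per period is a sliding-window rate limiter. The sketch below is a minimal in-memory version; the class name `SlidingWindowLimiter`, the default limits, and the use of the IP address as the client key are assumptions for illustration. A production setup would more likely keep these counters in a shared store or rely on the web server's built-in rate limiting.

```python
import time
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allows at most max_requests per client within window_seconds."""

    def __init__(self, max_requests=5, window_seconds=1.0):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits = defaultdict(deque)  # client id -> recent request times

    def allow(self, client_id, now=None):
        # `now` can be injected for testing; otherwise use a monotonic clock.
        now = time.monotonic() if now is None else now
        hits = self._hits[client_id]
        # Drop timestamps that have fallen out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # over the limit: block, delay, or show a captcha
        hits.append(now)
        return True
```

A request handler would call `limiter.allow(client_ip)` before doing any work and respond with an error page or a captcha when it returns `False`.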

Require registration & login to see your content – This works well against scrapers but is inconvenient for real users. It lets you collect and track email addresses, and whenever you find unusual activity from an account, you can limit its access or ban it.

Block access from cloud hosting and scraping service IP addresses – Scrapers are often run from hosting services such as AWS, Google App Engine, or VPSes. In that case you can limit access, show a captcha, or block the IP addresses of requests that originate from such cloud hosts.
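Checking whether a request comes from a given set of IP ranges can be done with Python's standard `ipaddress` module. In this sketch the CIDR ranges are placeholders taken from the address blocks reserved for documentation, not real cloud provider ranges, and `is_from_blocked_network` is a hypothetical helper name; in practice you would load the ranges that the hosting providers publish.

```python
import ipaddress

# Placeholder ranges (reserved documentation blocks), NOT real cloud ranges.
BLOCKED_NETWORKS = [
    ipaddress.ip_network(cidr)
    for cidr in ("192.0.2.0/24", "198.51.100.0/24")
]


def is_from_blocked_network(ip, networks=BLOCKED_NETWORKS):
    """Return True if `ip` falls inside any of the listed networks."""
    address = ipaddress.ip_address(ip)
    return any(address in network for network in networks)
```

For example, `is_from_blocked_network("192.0.2.55")` matches the first placeholder range, while an address outside both ranges does not.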

Make your error message nondescript after blocking – After blocking or limiting access, don’t reveal the reason. Instead, show a user-friendly message such as: “Sorry, something went wrong. You can contact support via helpdesk@example.com, should the problem persist.”

Implement captchas – This is one of the best ways to stop scrapers. Whenever you suspect scraping on your website, you can show a captcha instead of blocking access outright, which is very effective. A quick tip: “Don’t roll your own, use something like Google’s reCaptcha.”

Don’t expose your complete dataset – Don’t give a script or bot a way to get your entire dataset. For instance, suppose you have a news portal with a large number of individual articles. You could make those articles reachable only through the on-site search. If you don’t list the articles and their URLs anywhere on the site, they can only be found through the search feature. This means a script that wants to get every article off your site has to search for every possible phrase that might appear in your articles in order to find them all, which is time-consuming, horribly inefficient, and will hopefully make the scraper give up.

Never expose your APIs, endpoints, and similar things

Frequently change your HTML – HTML parsers work by extracting content from known places in a page: all a scraper needs to do is hit the search URL with a query and extract the desired data from the returned HTML. Frequently changing your HTML, and leaving the old markup in place but hidden with CSS, can therefore easily break their efforts.

Insert fake, invisible honeypot data – This can also be effective. Put a message like the following in your HTML: “This search result is here to prevent scraping. If you’re a human and see this, please ignore it. If you’re a scraper, please click the link below. Note that clicking the link below will block access to this site for 24 hours.” Remember to hide this message with CSS and to disallow /scrapertrap/ in your robots.txt (don’t forget this!) so that real users and search engine crawlers never visit it.
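On the server side, the honeypot only needs to remember which clients followed the trap link and for how long they stay blocked. The sketch below is one simple way to do that in memory; the `HoneypotBlocker` class and its `check` method are hypothetical names, and keying the block on the IP address is an assumption. The `/scrapertrap/` path and the 24-hour duration come from the example message above.

```python
import time

TRAP_PREFIX = "/scrapertrap/"      # the path disallowed in robots.txt
BLOCK_SECONDS = 24 * 60 * 60       # block for 24 hours


class HoneypotBlocker:
    def __init__(self):
        self._blocked_until = {}   # ip -> time at which the block expires

    def check(self, ip, path, now=None):
        """Return True if the request may proceed, False if it is blocked."""
        now = time.time() if now is None else now
        if path.startswith(TRAP_PREFIX):
            # Only a bot that ignores robots.txt and hidden CSS gets here.
            self._blocked_until[ip] = now + BLOCK_SECONDS
            return False
        return now >= self._blocked_until.get(ip, 0)
```

Calling `check` on every incoming request keeps trapped clients out until their block expires, while clients that never touch the trap path are unaffected.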

User agent is empty/missing – All web browsers and search engine bots send a User Agent, so when you find requests where it is missing or empty, you can block them.

Don’t accept requests if the User Agent is a common scraper one; blacklist ones used by scrapers

In some cases scrapers use a User Agent that no real browser or search engine spider uses. Watch for signs such as:

“Mozilla” (Just that, nothing else.)

“Java 1.7.43_u43” (By default, Java’s HttpURLConnection uses something like this.)

“BIZCO EasyScraping Studio 2.0”

“wget”, “curl”, “libcurl”,.. (Wget and cURL are sometimes used for basic scraping)

If you find that a particular User Agent string is used by scrapers on your site, and it is not used by real browsers or legitimate spiders, you can also add it to your blacklist.
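The User Agent checks described above (missing or empty values, a bare “Mozilla”, and known scraper tools) can be combined into a single function. This is only a sketch: `is_suspicious_user_agent` and the token list are illustrative assumptions, and because the User Agent header is trivial to forge, a check like this mainly filters out unsophisticated scrapers.

```python
# Lowercase tokens seen in scraper User Agents; extend with your own findings.
# "curl" also matches "libcurl", so it does not need a separate entry.
BLOCKED_TOKENS = ("wget", "curl", "easyscraping")


def is_suspicious_user_agent(user_agent):
    """Return True if the User Agent is missing or looks like a scraper."""
    if user_agent is None or not user_agent.strip():
        return True                      # empty or missing User Agent
    ua = user_agent.strip().lower()
    if ua == "mozilla":
        return True                      # just "Mozilla", nothing else
    if ua.startswith("java"):
        return True                      # default Java HTTP client style
    return any(token in ua for token in BLOCKED_TOKENS)
```

A typical browser string such as `Mozilla/5.0 (Windows NT 10.0; Win64; x64) ...` passes, while `curl/8.4.0` or an empty header is flagged.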

Use and require cookies – You can require cookies for access to your site. This deters novice scraper authors, and if a scraper does send cookies, you can use them to track it and apply rate limiting, blocking, or captchas.

Use JavaScript + Ajax to load your content – Use JavaScript and Ajax to load your data after the page itself has loaded. This puts the content out of reach of HTML parsers that do not run JavaScript.
