Introduction

Synthetic data generation platforms are transforming how AI systems are trained by creating artificial datasets that statistically resemble real-world data without exposing sensitive or private information. These platforms are essential in modern machine learning workflows where real data is scarce, expensive, or restricted due to privacy regulations.

Instead of relying on manual collection or sensitive production data, synthetic data tools use generative models (such as GANs and diffusion models), statistical simulations, and rule-based systems to produce high-quality datasets for training AI models. This allows organizations to scale AI development faster while reducing compliance risks and improving data diversity.
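To make the statistical-simulation approach concrete, here is a minimal sketch that fits a two-column Gaussian model to a toy table and samples new rows from it. It is an illustration only, not how any platform in this list works internally. The function names, the made-up `(age, income)` data, and the choice of a bivariate normal are all assumptions for the example.

```python
import math
import random
import statistics

def fit_gaussian_pair(rows):
    """Estimate means, standard deviations, and correlation of two numeric columns."""
    xs = [r[0] for r in rows]
    ys = [r[1] for r in rows]
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sx, sy = statistics.stdev(xs), statistics.stdev(ys)
    cov = sum((x - mx) * (y - my) for x, y in rows) / (len(rows) - 1)
    return {"mx": mx, "my": my, "sx": sx, "sy": sy, "rho": cov / (sx * sy)}

def sample_synthetic(params, n, seed=0):
    """Draw n synthetic rows from the fitted bivariate normal distribution."""
    rng = random.Random(seed)
    rho = params["rho"]
    # Guard against tiny negative values caused by floating-point rounding.
    residual = math.sqrt(max(0.0, 1.0 - rho * rho))
    rows = []
    for _ in range(n):
        z1 = rng.gauss(0.0, 1.0)
        z2 = rng.gauss(0.0, 1.0)
        x = params["mx"] + params["sx"] * z1
        # Mixing z1 into the second column reproduces the fitted correlation.
        y = params["my"] + params["sy"] * (rho * z1 + residual * z2)
        rows.append((x, y))
    return rows

# Hypothetical "real" table: (age, income) pairs made up for this demo.
rng = random.Random(42)
real_rows = []
for _ in range(500):
    age = rng.uniform(20, 65)
    real_rows.append((age, 1000 * age + rng.gauss(0, 8000)))

params = fit_gaussian_pair(real_rows)
synthetic_rows = sample_synthetic(params, 5000)
refit = fit_gaussian_pair(synthetic_rows)
print(f"real rho={params['rho']:.2f}  synthetic rho={refit['rho']:.2f}")
```

The synthetic rows share the means and correlation of the toy table but contain none of its records. The model is deliberately crude: it treats the uniformly distributed ages as Gaussian, so some synthetic ages fall outside the original range, which is the kind of fidelity gap that learned generative models aim to close.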

Why It Matters

- Eliminates dependency on sensitive real-world data

- Reduces privacy and compliance risks

- Accelerates AI model training and testing

- Improves dataset diversity and balance

- Enables scalable AI development pipelines

- Supports multimodal AI training needs

Real-World Use Cases

- Autonomous driving simulation datasets

- Healthcare imaging and patient data modeling

- Financial fraud detection systems

- LLM pretraining and fine-tuning datasets

- Retail recommendation systems

- Cybersecurity anomaly detection

- Industrial defect detection models

- NLP and chatbot training datasets

Evaluation Criteria for Buyers

- Data realism and statistical accuracy

- Multimodal data support (text, image, tabular, video)

- Privacy preservation mechanisms

- Scalability of data generation

- AI/ML model integration

- Automation and API support

- Compliance and governance features

- Synthetic data quality evaluation tools

- Deployment flexibility (cloud/on-premise)

- Enterprise readiness

Best For

Organizations building AI systems that require large-scale, privacy-safe, and high-quality training datasets without relying on real sensitive data.

Not Ideal For

Small projects where dataset requirements are minimal or where real-world data is already sufficient and readily available.

What’s Changing in Synthetic Data Platforms

- GANs and diffusion models are improving data realism

- LLMs are generating high-quality synthetic text datasets

- Privacy-preserving synthetic data is increasingly expected in regulated settings

- Enterprise adoption is increasing rapidly across industries

- Multimodal synthetic generation is becoming standard

- Synthetic data is increasingly standing in for real data in regulated industries, especially for testing and analytics

- Hybrid real + synthetic training can outperform real-only training when real data is scarce or imbalanced

- Automated evaluation of synthetic quality is emerging

- Cloud-native generation platforms are expanding

- Synthetic data is powering RLHF and LLM training pipelines

Quick Buyer Checklist

Before selecting a synthetic data platform, ensure:

- High statistical fidelity of generated data

- Support for required data types

- Privacy and compliance guarantees

- Scalability for large datasets

- API and pipeline integration

- Model-based generation support

- Evaluation and validation tools

- On-premise or cloud flexibility

- Automation capabilities

- Enterprise governance features
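The "statistical fidelity" item in the checklist can be checked column by column before committing to a platform. The sketch below computes the two-sample Kolmogorov-Smirnov statistic, the largest gap between the empirical distributions of a real and a synthetic column, using only the standard library. The function name and the generated sample columns are assumptions for illustration. Real evaluations usually add multi-column and privacy metrics on top of a per-column check like this.

```python
import bisect
import random

def ks_statistic(real, synthetic):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between the two empirical CDFs."""
    real_sorted = sorted(real)
    synth_sorted = sorted(synthetic)
    gap = 0.0
    # Both CDFs are step functions, so the largest gap occurs at an observed value.
    for v in real_sorted + synth_sorted:
        cdf_real = bisect.bisect_right(real_sorted, v) / len(real_sorted)
        cdf_synth = bisect.bisect_right(synth_sorted, v) / len(synth_sorted)
        gap = max(gap, abs(cdf_real - cdf_synth))
    return gap

# Made-up columns: one faithful synthetic copy and one with a shifted mean.
rng = random.Random(7)
real_col = [rng.gauss(50, 10) for _ in range(2000)]
faithful_col = [rng.gauss(50, 10) for _ in range(2000)]
shifted_col = [rng.gauss(60, 10) for _ in range(2000)]

ks_faithful = ks_statistic(real_col, faithful_col)
ks_shifted = ks_statistic(real_col, shifted_col)
print(f"faithful: {ks_faithful:.3f}  shifted: {ks_shifted:.3f}")
```

A value near 0 means the two columns are distributed alike, and a value near 1 means they barely overlap. Any pass/fail threshold is a project decision rather than a fixed standard.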

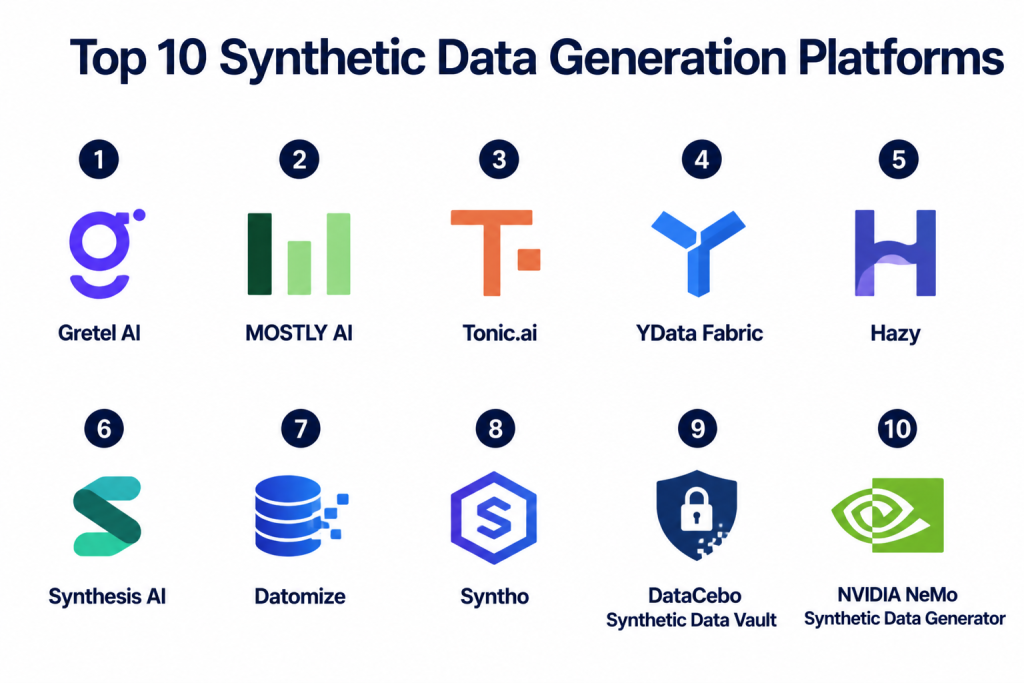

Top 10 Synthetic Data Generation Platforms

1- Gretel AI

2- MOSTLY AI

3- Tonic.ai

4- YData Fabric

5- Hazy

6- Synthesis AI

7- Datomize

8- Syntho

9- DataCebo Synthetic Data Vault

10- NVIDIA NeMo Synthetic Data Generator

1. Gretel AI

One-line Verdict

Best developer-friendly platform for privacy-preserving synthetic data generation.

Short Description

Gretel AI is a leading synthetic data platform designed for developers and ML teams that need secure, scalable, and privacy-safe datasets. It supports tabular, text, and image data generation using advanced generative AI models.

The platform is widely used for privacy-sensitive industries like healthcare, finance, and enterprise AI development.

Standout Capabilities

- API-first synthetic data generation

- Support for tabular, text, and image data

- Privacy-preserving model training

- Differential privacy techniques

- Real-time data synthesis

- Scalable cloud deployment

- Model fine-tuning capabilities

- Enterprise data pipelines

AI-Specific Depth

Gretel AI uses generative models to learn data distributions and create synthetic datasets that preserve statistical properties without exposing real-world sensitive data.

Pros

- Easy API integration

- Strong privacy protection

- Supports multiple data types

Cons

- Advanced features require setup

- Pricing scales with usage

- Limited offline usage flexibility

Security & Compliance

Built-in privacy engineering and enterprise-grade compliance support.

Deployment & Platforms

- Cloud-based API

- Enterprise deployment options

Integrations & Ecosystem

- ML pipelines

- Cloud data warehouses

- AI training frameworks

- Data engineering tools

Pricing Model

Usage-based enterprise pricing.

Best-Fit Scenarios

- Privacy-sensitive AI systems

- Developer-centric ML pipelines

- Multimodal dataset generation

2. MOSTLY AI

One-line Verdict

Best for enterprise-grade tabular synthetic data generation.

Short Description

MOSTLY AI is a powerful synthetic data platform focused on generating high-quality tabular datasets for enterprise use. It is widely used in regulated industries where privacy compliance and data realism are critical.

The platform is designed for scalable deployment in enterprise environments.

Standout Capabilities

- High-fidelity tabular data synthesis

- Privacy-preserving generation

- Kubernetes deployment support

- Enterprise scalability

- Data anonymization features

- Statistical accuracy preservation

- Automated data pipelines

- Cloud and on-premise support

AI-Specific Depth

MOSTLY AI uses deep generative models to replicate complex statistical relationships while ensuring no real data is exposed.

Pros

- Excellent enterprise scalability

- Strong privacy compliance

- High-quality tabular data

Cons

- Limited multimodal support

- Requires enterprise setup

- Not beginner-friendly

Security & Compliance

Strong GDPR and enterprise compliance support.

Deployment & Platforms

- Cloud

- On-premise

- Kubernetes

Integrations & Ecosystem

- Data warehouses

- ML pipelines

- Enterprise BI tools

Pricing Model

Enterprise contract pricing.

Best-Fit Scenarios

- Financial modeling

- Healthcare analytics

- Enterprise data simulation

3. Tonic.ai

One-line Verdict

Best for production-ready synthetic test data generation.

Short Description

Tonic.ai is a widely used synthetic data platform that focuses on creating realistic, production-like datasets for testing and development. It helps organizations safely use synthetic replicas of sensitive databases.

It is especially popular in software engineering and QA environments.

Standout Capabilities

- Database-level synthetic generation

- Data masking and anonymization

- Schema-preserving synthesis

- CI/CD pipeline integration

- API-driven automation

- Referential integrity support

- Test data provisioning

- Enterprise security features

AI-Specific Depth

Tonic.ai ensures synthetic datasets maintain relational structure and business logic while removing sensitive information.
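As a rough illustration of what preserving relational structure means, the sketch below replaces customer IDs with stable tokens so that foreign keys in a second table still resolve after masking. This is not Tonic.ai's API or algorithm. It is a generic deterministic-pseudonymization technique built on the standard library's `hmac` module, and the table contents, key, and function name are hypothetical.

```python
import hashlib
import hmac

# Hypothetical key for the demo; a real deployment would load it from a secret store.
SECRET_KEY = b"demo-only-key"

def pseudonymize(value, prefix):
    """Map an identifier to a stable token; equal inputs always give equal tokens."""
    digest = hmac.new(SECRET_KEY, str(value).encode("utf-8"), hashlib.sha256).hexdigest()
    return f"{prefix}_{digest[:12]}"

# Made-up source tables with a foreign key from orders to customers.
customers = [
    {"customer_id": 101, "name": "Alice Example"},
    {"customer_id": 102, "name": "Bob Example"},
]
orders = [
    {"order_id": 1, "customer_id": 101, "total": 40.0},
    {"order_id": 2, "customer_id": 102, "total": 15.5},
    {"order_id": 3, "customer_id": 101, "total": 99.0},
]

# Mask the primary key and drop the real name.
masked_customers = [
    {"customer_id": pseudonymize(c["customer_id"], "cust"), "name": f"Customer {i}"}
    for i, c in enumerate(customers, start=1)
]
# Apply the same mapping to the foreign key so joins still work.
masked_orders = [
    {**o, "customer_id": pseudonymize(o["customer_id"], "cust")}
    for o in orders
]

known_ids = {c["customer_id"] for c in masked_customers}
orphans = [o for o in masked_orders if o["customer_id"] not in known_ids]
print(f"orphaned orders after masking: {len(orphans)}")
```

Because the mapping is deterministic, every order still points at exactly one customer. Full synthesis goes further than this by also generating new non-key values, which the sketch leaves untouched.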

Pros

- Excellent database compatibility

- Strong enterprise adoption

- High data realism

Cons

- Focused more on structured data

- Limited AI-native features

- Requires infrastructure setup

Security & Compliance

Strong enterprise-grade compliance support.

Deployment & Platforms

- Cloud

- On-premise

Integrations & Ecosystem

- SQL databases

- DevOps pipelines

- Data engineering tools

Pricing Model

Enterprise subscription pricing.

Best-Fit Scenarios

- Software testing datasets

- Enterprise QA environments

- Database simulation

4. YData Fabric

One-line Verdict

Best for end-to-end data profiling and synthetic data pipelines.

Short Description

YData Fabric provides a complete data-centric AI platform combining data profiling, cleaning, and synthetic data generation. It helps ML teams prepare high-quality datasets for training and experimentation.

It is widely used in data science workflows for improving dataset quality.

Standout Capabilities

- Data profiling and analysis

- Synthetic data generation

- Tabular and time-series support

- Data augmentation tools

- Pipeline orchestration

- AI-driven data insights

- ML integration support

- Dataset optimization

AI-Specific Depth

YData improves ML training by generating synthetic data that preserves correlations and statistical structure in real datasets.

Pros

- Strong ML integration

- Supports time-series data

- End-to-end data pipeline

Cons

- Smaller ecosystem

- Newer platform

- Enterprise pricing required

Security & Compliance

Enterprise-level compliance support.

Deployment & Platforms

- Cloud

- Enterprise deployments

Integrations & Ecosystem

- ML pipelines

- Data engineering tools

- Cloud platforms

Pricing Model

Custom enterprise pricing.

Best-Fit Scenarios

- ML training pipelines

- Time-series modeling

- Data augmentation workflows

5. Hazy

One-line Verdict

Best for enterprise synthetic data with strong privacy compliance.

Short Description

Hazy is a synthetic data platform focused on privacy-first AI data generation for enterprise systems. It helps organizations safely create synthetic datasets that mimic real data structures while ensuring compliance with strict regulations.

It is widely used in banking and regulated industries.

Standout Capabilities

- Privacy-first synthetic data generation

- Enterprise data modeling

- Tabular data synthesis

- Compliance automation

- Secure data environments

- Scalable pipelines

- AI-driven modeling

- Data anonymization

AI-Specific Depth

Hazy generates statistically accurate synthetic datasets while ensuring no personal or sensitive data is retained.

Pros

- Strong privacy compliance

- Enterprise-ready

- High data fidelity

Cons

- Limited multimodal support

- Enterprise-focused pricing

- Requires setup time

Security & Compliance

Strong regulatory compliance support.

Deployment & Platforms

- Cloud

- Private deployment

Integrations & Ecosystem

- Banking systems

- Enterprise databases

- ML pipelines

Pricing Model

Enterprise subscription pricing.

Best-Fit Scenarios

- Financial services

- Healthcare datasets

- Regulated industries

6. Synthesis AI

One-line Verdict

Best for photorealistic synthetic image and vision datasets.

Short Description

Synthesis AI specializes in generating synthetic image and video datasets for computer vision models. It is widely used in facial recognition, AR/VR, and autonomous systems.

Standout Capabilities

- Photorealistic image generation

- Computer vision datasets

- 3D synthetic rendering

- Facial recognition data

- Environmental simulation

- Multimodal vision datasets

- AI-driven generation

- Custom dataset creation

AI-Specific Depth

Synthesis AI generates high-quality synthetic visual datasets used to train deep vision models without real-world data collection.

Pros

- Excellent vision dataset quality

- Strong realism

- Scalable generation

Cons

- Limited tabular support

- Specialized use case

- Enterprise pricing

Security & Compliance

Enterprise-grade support.

Deployment & Platforms

- Cloud-based

Integrations & Ecosystem

- Computer vision frameworks

- AI simulation tools

Pricing Model

Enterprise pricing.

Best-Fit Scenarios

- Autonomous vehicles

- Facial recognition systems

- Vision AI training

7. Datomize

One-line Verdict

Best for enterprise relational synthetic data generation.

Short Description

Datomize provides enterprise-grade synthetic data generation focused on relational databases. It ensures data consistency, privacy, and scalability for enterprise systems.

Standout Capabilities

- Relational data synthesis

- Data anonymization

- Schema preservation

- Enterprise integration

- Secure data generation

- Scalable pipelines

- Compliance features

- API automation

AI-Specific Depth

Datomize replicates relational structures while ensuring privacy-safe synthetic datasets for enterprise AI systems.

Pros

- Strong relational support

- Enterprise-ready

- High compliance

Cons

- Limited AI-native features

- Narrow data focus

- Requires setup

Security & Compliance

Enterprise compliance support available.

Deployment & Platforms

- Cloud

- On-premise

Integrations & Ecosystem

- Databases

- Enterprise BI systems

- ML pipelines

Pricing Model

Enterprise pricing.

Best-Fit Scenarios

- Enterprise databases

- Financial systems

- Data anonymization workflows

8. Syntho

One-line Verdict

Best for GDPR-compliant synthetic data generation.

Short Description

Syntho is a synthetic data platform designed for privacy-first organizations that need GDPR-compliant data generation for AI and analytics workflows.

Standout Capabilities

- GDPR-compliant synthesis

- Tabular data generation

- Data anonymization

- AI-driven modeling

- Enterprise workflows

- Secure data pipelines

- API integration

- Statistical preservation

AI-Specific Depth

Syntho ensures synthetic datasets preserve statistical patterns while maintaining strict privacy guarantees.

Pros

- Strong compliance focus

- Easy integration

- High-quality data generation

Cons

- Limited multimodal support

- Enterprise pricing

- Smaller ecosystem

Security & Compliance

Strong GDPR compliance features.

Deployment & Platforms

- Cloud

- Enterprise environments

Integrations & Ecosystem

- Data warehouses

- ML pipelines

Pricing Model

Enterprise subscription pricing.

Best-Fit Scenarios

- EU compliance systems

- Financial datasets

- Privacy-first AI

9. DataCebo Synthetic Data Vault

One-line Verdict

Best open-source synthetic data framework for research.

Short Description

Synthetic Data Vault (SDV) is an open-source framework for generating synthetic datasets using probabilistic modeling techniques. It is widely used in academic and research environments.

Standout Capabilities

- Open-source synthetic data generation

- Probabilistic modeling

- Tabular data synthesis

- Research-friendly tools

- Flexible architecture

- Python integration

- Data augmentation

- ML experimentation

AI-Specific Depth

SDV uses statistical models to generate synthetic datasets that preserve dependencies and relationships in real data.

Pros

- Free and open-source

- Highly flexible

- Strong research support

Cons

- Not enterprise-ready

- Requires coding expertise

- Limited UI tools

Security & Compliance

Depends on deployment setup.

Deployment & Platforms

- Python-based

Integrations & Ecosystem

- ML frameworks

- Data science tools

Pricing Model

Open-source.

Best-Fit Scenarios

- Research projects

- ML experimentation

- Data augmentation

10. NVIDIA NeMo Synthetic Data Generator

One-line Verdict

Best for GPU-accelerated synthetic data generation at scale.

Short Description

NVIDIA NeMo provides high-performance synthetic data generation capabilities optimized for large-scale AI training. It leverages GPU acceleration to generate high-quality datasets for LLMs and vision systems.

Standout Capabilities

- GPU-accelerated generation

- LLM training datasets

- Vision dataset synthesis

- Scalable AI pipelines

- Deep learning integration

- Multimodal generation

- Enterprise optimization

- AI infrastructure support

AI-Specific Depth

NVIDIA NeMo uses advanced generative models to create synthetic datasets for large-scale AI training workflows.

Pros

- Extremely fast generation

- High scalability

- Strong AI integration

Cons

- Requires NVIDIA ecosystem

- Infrastructure-heavy

- Enterprise-focused

Security & Compliance

Enterprise-grade GPU infrastructure security.

Deployment & Platforms

- Cloud GPU

- On-premise NVIDIA systems

Integrations & Ecosystem

- NVIDIA AI stack

- ML frameworks

- LLM training pipelines

Pricing Model

Enterprise licensing.

Best-Fit Scenarios

- LLM training

- Large-scale AI systems

- High-performance computing workloads

Comparison Table

| Tool | Best For | Deployment | Data Type | Privacy Focus | Enterprise Scale |
|---|---|---|---|---|---|
| Gretel AI | Developer-friendly synthetic data | Cloud | Multimodal | High | High |
| MOSTLY AI | Tabular enterprise data | Cloud/On-prem | Tabular | Very High | Very High |
| Tonic.ai | Test data generation | Hybrid | Structured | High | High |
| YData Fabric | ML pipelines | Cloud | Tabular/time-series | High | High |
| Hazy | Regulated industries | Private cloud | Tabular | Very High | High |
| Synthesis AI | Vision datasets | Cloud | Image/video | Medium | High |
| Datomize | Relational databases | Hybrid | Structured | High | High |
| Syntho | GDPR compliance | Cloud | Tabular | Very High | High |
| SDV | Research & open-source | Local | Tabular | Medium | Medium |
| NVIDIA NeMo | Large-scale AI training | GPU cloud | Multimodal | Medium | Very High |

Scoring & Evaluation Table

| Tool | Core Features | Ease | Integrations | Security | Performance | Support | Value | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| Gretel AI | 9.2 | 8.8 | 9.0 | 9.0 | 9.1 | 8.7 | 8.6 | 8.9 |
| MOSTLY AI | 9.3 | 8.2 | 8.9 | 9.5 | 9.0 | 8.6 | 8.4 | 8.9 |
| Tonic.ai | 9.0 | 8.5 | 9.1 | 9.2 | 8.9 | 8.7 | 8.5 | 8.8 |
| YData Fabric | 8.9 | 8.6 | 8.8 | 8.9 | 8.8 | 8.4 | 8.6 | 8.7 |
| Hazy | 8.8 | 8.3 | 8.7 | 9.4 | 8.7 | 8.5 | 8.2 | 8.7 |
| Synthesis AI | 9.0 | 8.4 | 8.5 | 8.6 | 9.2 | 8.3 | 8.3 | 8.7 |
| Datomize | 8.7 | 8.2 | 8.6 | 9.0 | 8.6 | 8.4 | 8.4 | 8.6 |
| Syntho | 8.8 | 8.5 | 8.7 | 9.3 | 8.6 | 8.5 | 8.3 | 8.7 |
| SDV | 8.5 | 9.0 | 8.2 | 8.0 | 8.4 | 7.8 | 9.2 | 8.4 |
| NVIDIA NeMo | 9.4 | 7.9 | 9.1 | 9.0 | 9.6 | 8.6 | 8.2 | 8.9 |
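The table does not state the weights behind the Weighted Total column, so readers who care more about some criteria than others may want to recompute it. The sketch below shows one way to do that. The weight values are assumptions chosen for illustration, not the weights used in the table, and the dictionary keys are shorthand for the column names.

```python
CRITERIA = ["core", "ease", "integrations", "security", "performance", "support", "value"]

def weighted_total(scores, weights):
    """Weighted average of criterion scores; weights are normalized by their sum."""
    total_weight = sum(weights[c] for c in CRITERIA)
    return sum(scores[c] * weights[c] for c in CRITERIA) / total_weight

# Gretel AI's row from the scoring table.
gretel = {
    "core": 9.2, "ease": 8.8, "integrations": 9.0, "security": 9.0,
    "performance": 9.1, "support": 8.7, "value": 8.6,
}

# Assumption: equal weights as a neutral starting point.
equal_weights = {c: 1.0 for c in CRITERIA}
# Assumption: a buyer in a regulated industry triples the weight on security.
security_heavy = {**equal_weights, "security": 3.0}

equal_total = weighted_total(gretel, equal_weights)
security_total = weighted_total(gretel, security_heavy)
print(round(equal_total, 1))  # 8.9 with equal weights
print(round(security_total, 2))
```

Re-running the same function over every row with your own weights gives a ranking that reflects your priorities rather than the table's.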

Top 3 Recommendations

Best for Enterprise

- MOSTLY AI

- NVIDIA NeMo

- Gretel AI

Best for SMBs

- Tonic.ai

- YData Fabric

- Syntho

Best for Developers

- SDV

- Gretel AI

- Datomize

Which Synthetic Data Platform Is Right for You

For Solo Developers

SDV and Gretel AI are ideal for experimentation and small-scale dataset generation.

For SMBs

Tonic.ai and YData Fabric provide balanced automation and scalability.

For Mid-Market Organizations

Hazy and Syntho offer compliance-focused, production-ready synthetic data pipelines.

For Enterprise AI Programs

MOSTLY AI, NVIDIA NeMo, and Gretel AI provide high-scale, enterprise-grade synthetic data generation.

Budget vs Premium

Open-source tools reduce cost but require engineering effort, while enterprise platforms provide scalability and compliance.

Feature Depth vs Ease of Use

Gretel AI and Tonic.ai balance usability and power, while NVIDIA NeMo focuses on high-performance infrastructure.

Integrations & Scalability

Cloud-native and GPU-accelerated platforms are best for large-scale AI systems.

Security & Compliance Needs

Highly regulated industries should prioritize MOSTLY AI, Hazy, and Syntho.

Implementation Playbook

First 30 Days

- Define data requirements

- Select synthetic data platform

- Test small-scale generation

- Validate data realism

- Set privacy constraints

Days 31–60

- Integrate ML pipelines

- Improve data fidelity

- Add automation workflows

- Optimize generation models

- Validate compliance rules

Days 61–90

- Scale dataset generation

- Deploy production pipelines

- Automate data validation

- Monitor synthetic quality

- Optimize cost-performance balance

Common Mistakes to Avoid

- Ignoring statistical fidelity

- Using synthetic-only training without validation

- Poor privacy configuration

- Not testing downstream model impact

- Over-reliance on GAN outputs

- Lack of dataset evaluation metrics

- Weak integration with ML pipelines

- Ignoring bias in synthetic generation

- Not validating edge cases

- Overcomplicating generation pipelines

- Skipping compliance checks

- Poor data distribution modeling
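Several of the mistakes above (synthetic-only training without validation, not testing downstream model impact) can be caught with a "train on synthetic, test on real" check: train the same model once on real data and once on synthetic data, then score both on held-out real data. The sketch below uses a toy nearest-centroid classifier and made-up two-class data. The helper names and the way the "synthetic" sets are produced are stand-ins for a real generator, not output from any platform.

```python
import random

def centroid_classifier(train):
    """Fit one centroid per label and return a predict(features) function."""
    sums, counts = {}, {}
    for features, label in train:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, f in enumerate(features):
            acc[i] += f
        counts[label] = counts.get(label, 0) + 1
    centroids = {lbl: [s / counts[lbl] for s in acc] for lbl, acc in sums.items()}

    def predict(features):
        # Pick the label whose centroid is closest in squared Euclidean distance.
        return min(
            centroids,
            key=lambda lbl: sum((f - c) ** 2 for f, c in zip(features, centroids[lbl])),
        )
    return predict

def accuracy(predict, test):
    """Fraction of held-out rows the classifier labels correctly."""
    return sum(1 for features, label in test if predict(features) == label) / len(test)

def make_rows(rng, n, shift):
    """Toy two-class data; `shift` sets where the class-1 cluster sits."""
    rows = []
    for _ in range(n):
        label = rng.randint(0, 1)
        center = shift if label else 0.0
        rows.append(([rng.gauss(center, 1.0), rng.gauss(center, 1.0)], label))
    return rows

rng = random.Random(3)
real_train = make_rows(rng, 1000, shift=3.0)
real_test = make_rows(rng, 1000, shift=3.0)
synthetic_train = make_rows(rng, 1000, shift=3.0)   # stand-in for a faithful generator
degraded_train = make_rows(rng, 1000, shift=-3.0)   # stand-in for a generator that misplaces class 1

baseline = accuracy(centroid_classifier(real_train), real_test)
tstr = accuracy(centroid_classifier(synthetic_train), real_test)
tstr_bad = accuracy(centroid_classifier(degraded_train), real_test)
print(f"real->real {baseline:.2f}  synthetic->real {tstr:.2f}  degraded->real {tstr_bad:.2f}")
```

A small gap between the real-trained and synthetic-trained scores suggests the synthetic data carries the signal the model needs. A large gap, as with the degraded set, is a cue to revisit the generator before scaling up.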

Frequently Asked Questions

1. What is synthetic data generation?

It is the process of creating artificial datasets that mimic real-world data without exposing sensitive information.

2. Why is synthetic data important?

It reduces privacy risks, lowers data dependency, and accelerates AI training.

3. What techniques are used in synthetic data generation?

GANs, statistical models, diffusion models, and rule-based systems.

4. Is synthetic data as good as real data?

It depends on fidelity and the task. In many cases, training on a hybrid of real and synthetic data performs better than training on real data alone.

5. Which industries use synthetic data?

Healthcare, finance, automotive, cybersecurity, and AI research.

6. What is privacy-preserving synthetic data?

It is synthetic data generated with safeguards, such as differential privacy, so that no real personal or sensitive information is exposed.
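One formal way to back up a privacy claim is differential privacy, which adds calibrated noise so that any single person's record has a bounded effect on what is released. The sketch below is a minimal illustration using the Laplace mechanism on a simple count. It is not any listed vendor's implementation. The function names, the sample ages, and the epsilon value are assumptions.

```python
import random

def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) noise as the difference of two exponential draws."""
    return scale * (rng.expovariate(1.0) - rng.expovariate(1.0))

def private_count(values, predicate, epsilon, rng):
    """Release a count with epsilon-differential privacy.

    Adding or removing one record changes a count by at most 1 (sensitivity 1),
    so the noise scale is 1 / epsilon.
    """
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)

# Made-up ages standing in for a sensitive column.
ages = [23, 35, 41, 52, 67, 29, 71, 45, 38, 60]
rng = random.Random(11)

true_over_50 = sum(1 for a in ages if a > 50)
releases = [private_count(ages, lambda a: a > 50, epsilon=1.0, rng=rng) for _ in range(2000)]
mean_release = sum(releases) / len(releases)
print(f"true count: {true_over_50}  mean of noisy releases: {mean_release:.2f}")
```

Smaller epsilon values mean more noise and stronger privacy. Synthetic data generators that offer differential privacy typically apply noise during model training rather than to a single count, but the trade-off between privacy and accuracy is the same.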

7. Can synthetic data replace real data?

Not fully, but it significantly reduces dependency on real data.

8. What is multimodal synthetic data?

It includes synthetic images, text, video, audio, and structured data.

9. What is the biggest risk in synthetic data?

Poor statistical quality leading to inaccurate AI training.

10. What should buyers prioritize?

Data quality, privacy compliance, scalability, and ML integration.

Conclusion

Synthetic data generation platforms are becoming a foundational pillar of modern AI development, enabling organizations to overcome data scarcity, privacy limitations, and scalability challenges. As AI models grow more complex and data-hungry, synthetic data is no longer optional but a core component of training pipelines across industries. Platforms like Gretel AI, MOSTLY AI, NVIDIA NeMo, and Tonic.ai are enabling enterprises to build privacy-safe, scalable, and high-quality datasets that accelerate machine learning innovation. The right platform depends on your data type, compliance needs, infrastructure maturity, and AI workload scale. Organizations that adopt synthetic data strategies early gain a significant advantage in building faster, safer, and more efficient AI systems.
