Introduction

Data deduplication for model training is a critical step in modern AI and machine learning pipelines, where large datasets often contain duplicate, near-duplicate, or semantically similar records. These redundancies can degrade model performance by causing overfitting, amplifying bias, and wasting training compute, and they can inflate evaluation metrics when copies of the same record land in both the training and test splits.

In large-scale AI systems such as LLM pretraining, retrieval-augmented generation, computer vision, and multimodal learning, deduplication ensures that models learn from diverse and high-quality data instead of repetitive or noisy samples. Modern deduplication platforms use hashing techniques, embedding similarity, clustering, and neural similarity detection to identify and remove redundant data efficiently.

Why It Matters

- Improves model generalization and accuracy

- Reduces training compute cost and time

- Prevents overfitting on repeated samples

- Enhances dataset diversity

- Improves evaluation reliability

- Supports scalable AI training pipelines

Real-World Use Cases

- LLM pretraining dataset cleaning

- Web-scale data filtering

- Duplicate image removal in vision datasets

- RAG knowledge base optimization

- Enterprise document dataset cleanup

- Fraud detection dataset preparation

- NLP corpus optimization

- Synthetic + real dataset merging

Evaluation Criteria for Buyers

- Exact and near-duplicate detection accuracy

- Scalability for large datasets

- Multimodal support (text, image, video)

- Embedding-based similarity detection

- Integration with ML pipelines

- Real-time vs batch processing capability

- Custom deduplication rules

- Performance on large-scale datasets

- Dataset versioning support

- Enterprise governance features

Best For

Organizations training large-scale AI models where dataset redundancy significantly impacts performance, cost, and model reliability.

Not Ideal For

Small datasets where manual cleaning is sufficient and duplication is minimal.

What’s Changing in Data Deduplication for Model Training

- Embedding-based deduplication is replacing hash-only methods

- LLM datasets require semantic duplicate detection

- Multimodal deduplication is becoming standard

- Near-duplicate detection is becoming more important than exact matching alone

- Real-time deduplication pipelines are emerging

- Web-scale filtering is becoming essential for LLM training

- Vector databases are used for similarity search

- Clustering-based deduplication is gaining adoption

- Synthetic data introduces new duplication challenges

- Deduplication is now integrated into MLOps workflows

Quick Buyer Checklist

Before selecting a deduplication tool, ensure:

- Exact and semantic duplicate detection support

- Scalability for large datasets

- Multimodal data handling capability

- Integration with ML pipelines

- Embedding-based similarity search

- Custom filtering rules

- Batch and streaming support

- Dataset version control

- Performance optimization for large corpora

- Enterprise governance support

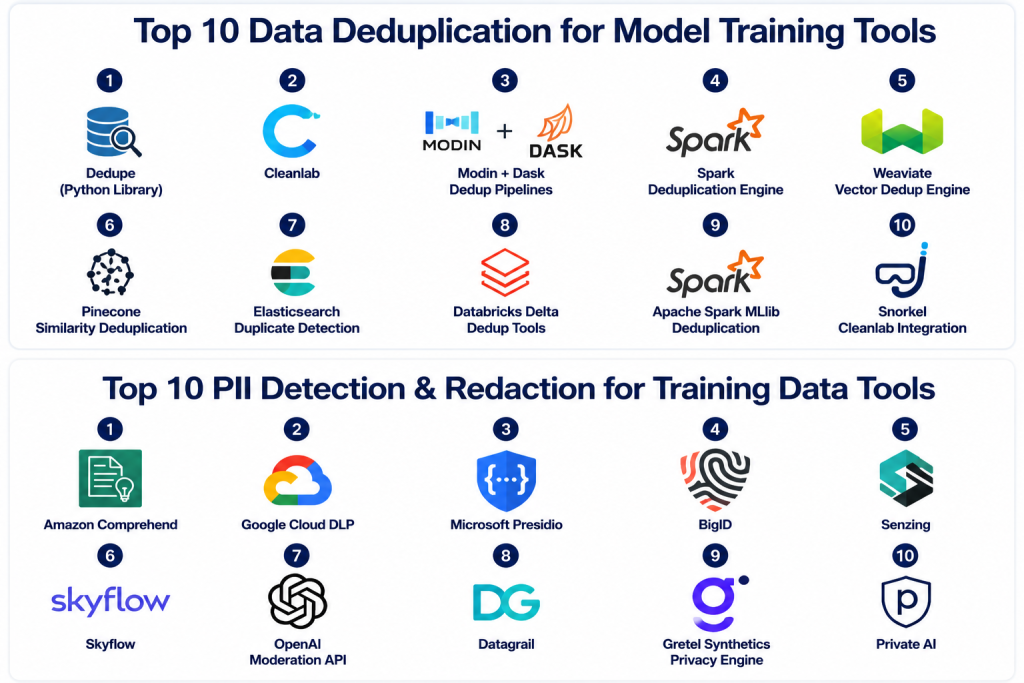

Top 10 Data Deduplication for Model Training Tools

1- Dedupe (Python Library)

2- Cleanlab

3- Modin + Dask Dedup Pipelines

4- Apache Spark Deduplication Engine

5- Weaviate Vector Dedup Engine

6- Pinecone Similarity Deduplication

7- Elasticsearch Duplicate Detection

8- Databricks Delta Dedup Tools

9- Apache Spark MLlib Deduplication

10- Snorkel + Cleanlab Integration

1. Dedupe (Python Library)

One-line Verdict

Best open-source library for structured data deduplication using machine learning.

Short Description

Dedupe is a Python-based open-source library designed for deduplicating structured datasets using machine learning techniques. It identifies duplicate records even when exact matches are not present by learning similarity patterns from labeled examples.

It is widely used in data cleaning, entity resolution, and dataset preparation workflows.

Standout Capabilities

- Machine learning-based deduplication

- Supervised training for matching rules

- Entity resolution support

- Scalable batch processing

- Flexible field matching

- Python API integration

- Active learning support

- Custom similarity functions

AI-Specific Depth

Dedupe learns similarity patterns from user-labeled examples, making it effective for identifying near-duplicate records in structured datasets used for AI training.
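To make the idea of field-level matching concrete, here is a minimal sketch that scores two structured records with a weighted average of per-field string similarities. It does not use the Dedupe library's API; the field names, the weights in `FIELD_WEIGHTS`, and the `0.85` threshold are all hypothetical and hand-picked, standing in for what a trained model would learn from labeled pairs.

```python
from difflib import SequenceMatcher

# Hypothetical, hand-picked weights. A learned matcher would fit these
# from user-labeled duplicate / non-duplicate pairs instead.
FIELD_WEIGHTS = {"name": 0.5, "email": 0.3, "city": 0.2}


def field_similarity(a: str, b: str) -> float:
    """Case-insensitive string similarity in [0, 1]."""
    return SequenceMatcher(None, a.strip().lower(), b.strip().lower()).ratio()


def record_similarity(r1: dict, r2: dict) -> float:
    """Weighted average of the per-field similarities."""
    return sum(w * field_similarity(r1[f], r2[f]) for f, w in FIELD_WEIGHTS.items())


def is_duplicate(r1: dict, r2: dict, threshold: float = 0.85) -> bool:
    """Flag a pair as a likely duplicate (threshold is an assumption)."""
    return record_similarity(r1, r2) >= threshold


a = {"name": "Jonathan Smith", "email": "jon.smith@example.com", "city": "Chicago"}
b = {"name": "Jonathon Smith", "email": "jon.smith@example.com", "city": "chicago"}
c = {"name": "Maria Garcia", "email": "mgarcia@example.org", "city": "Austin"}

print(is_duplicate(a, b))  # True: one-letter name variation, same email and city
print(is_duplicate(a, c))  # False: different person
```

In practice the weights and threshold are the part worth learning from labeled examples, since hand-tuned values rarely transfer between datasets.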

Pros

- Open-source and flexible

- Strong ML-based matching

- Easy Python integration

Cons

- Requires training data

- Not optimized for unstructured data

- Limited enterprise tooling

Security & Compliance

Depends on deployment environment.

Deployment & Platforms

- Python environments

- Self-hosted systems

Integrations & Ecosystem

- Pandas

- ML pipelines

- Data engineering tools

Pricing Model

Open-source.

Best-Fit Scenarios

- Structured dataset cleaning

- Entity resolution tasks

- Small to mid-scale ML pipelines

2. Cleanlab

One-line Verdict

Best for AI-driven duplicate detection and dataset quality improvement.

Short Description

Cleanlab is an open-source data quality library, with a commercial offering, that focuses on identifying mislabeled and duplicate data in machine learning datasets. It uses model predictions and uncertainty signals to detect problematic samples.

It is widely used in improving dataset integrity for AI training workflows.

Standout Capabilities

- AI-based duplicate detection

- Label error identification

- Data quality scoring

- Embedding-based similarity

- Dataset cleaning automation

- ML pipeline integration

- Uncertainty-based filtering

- Python API support

AI-Specific Depth

Cleanlab uses model confidence and embedding similarity to identify duplicates and low-quality samples in training datasets.

Pros

- Strong data quality focus

- Easy integration with ML models

- Improves dataset reliability

Cons

- Requires ML model outputs

- Limited UI features

- Not a full data platform

Security & Compliance

Depends on deployment setup.

Deployment & Platforms

- Python-based

- Cloud or local

Integrations & Ecosystem

- PyTorch

- TensorFlow

- Scikit-learn

Pricing Model

Open-source with enterprise options.

Best-Fit Scenarios

- ML dataset cleaning

- AI training pipelines

- Label error detection

3. Modin + Dask Dedup Pipelines

One-line Verdict

Best for scalable distributed deduplication on large datasets.

Short Description

Modin and Dask parallelize pandas-style dataframe operations across cores or clusters, which makes them usable for large-scale deduplication tasks. Datasets that do not fit on a single machine can be processed across a cluster, making these libraries suitable for web-scale AI training data.

Standout Capabilities

- Distributed data processing

- Scalable deduplication pipelines

- Parallel computation support

- Pandas-compatible API

- Cluster-based execution

- Large dataset handling

- Batch processing

- Dataframe optimization

AI-Specific Depth

These frameworks enable efficient deduplication of large AI training datasets by distributing similarity checks across compute clusters.

Pros

- Highly scalable

- Fast distributed processing

- Pandas-compatible

Cons

- Requires cluster setup

- Engineering complexity

- Not AI-specific out of the box

Security & Compliance

Depends on infrastructure.

Deployment & Platforms

- Cluster environments

- Cloud or on-premise

Integrations & Ecosystem

- Spark

- Hadoop

- ML pipelines

Pricing Model

Open-source.

Best-Fit Scenarios

- Large-scale dataset processing

- Web-scale AI training data

- Distributed ML pipelines

4. Apache Spark Deduplication Engine

One-line Verdict

Best enterprise-scale distributed deduplication framework.

Short Description

Apache Spark provides powerful distributed computing capabilities that can be used for deduplicating massive datasets. It is widely used in enterprise AI pipelines for processing terabytes of training data.

Standout Capabilities

- Distributed deduplication

- Cluster computing

- Scalable data processing

- SQL-based transformations

- MLlib integration

- Streaming support

- Fault tolerance

- Big data processing

AI-Specific Depth

Spark enables large-scale duplicate detection using distributed joins, hashing, and similarity computation across datasets.
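The hashing approach mentioned above can be sketched in plain Python without a cluster. The example simulates hash partitioning: every record is routed to a partition by the hash of its normalized text, so identical records always meet in the same partition and each partition can be deduplicated independently. The function names and the partition count are illustrative assumptions; in Spark itself, exact-match cases are usually handled with the DataFrame `dropDuplicates` method rather than hand-written code like this.

```python
import hashlib
from collections import defaultdict


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variants compare equal."""
    return " ".join(text.lower().split())


def stable_hash(text: str) -> int:
    """Deterministic hash; Python's built-in hash() is salted per process."""
    digest = hashlib.sha256(text.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big")


def partition_by_hash(records, num_partitions):
    """Route each record to a partition chosen by the hash of its key."""
    partitions = defaultdict(list)
    for rec in records:
        partitions[stable_hash(normalize(rec)) % num_partitions].append(rec)
    return partitions


def dedup_partition(records):
    """Keep the first record per normalized key within one partition."""
    seen, kept = set(), []
    for rec in records:
        key = normalize(rec)
        if key not in seen:
            seen.add(key)
            kept.append(rec)
    return kept


def distributed_dedup(records, num_partitions=4):
    """Simulated cluster run: partitions are processed in a loop here,
    but each one could be handed to a separate worker."""
    result = []
    for _, part in sorted(partition_by_hash(records, num_partitions).items()):
        result.extend(dedup_partition(part))
    return result


docs = ["Hello  World", "hello world", "Another doc", "another DOC", "Unique"]
unique_docs = distributed_dedup(docs)
print(len(unique_docs))  # 3
```

Because duplicates never cross partition boundaries, no worker needs to see the whole dataset. The output order follows partition order rather than input order.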

Pros

- Extremely scalable

- Enterprise-ready

- Highly reliable

Cons

- Complex setup

- Requires big data expertise

- Not AI-native

Security & Compliance

Depends on deployment; enterprise-grade controls are typically supplied by the hosting platform.

Deployment & Platforms

- Hadoop ecosystems

- Cloud clusters

Integrations & Ecosystem

- Databricks

- AWS EMR

- Azure Synapse

Pricing Model

Open-source with infrastructure cost.

Best-Fit Scenarios

- Big data AI pipelines

- Enterprise dataset processing

- Web-scale deduplication

5. Weaviate Vector Dedup Engine

One-line Verdict

Best for semantic deduplication using vector similarity search.

Short Description

Weaviate provides vector-based deduplication by comparing embeddings of data points to detect semantic duplicates. It is widely used in LLM datasets and semantic search systems.

Standout Capabilities

- Vector similarity search

- Semantic deduplication

- Embedding-based clustering

- Real-time indexing

- Hybrid search support

- Multimodal data support

- GraphQL query API

- Scalable architecture

AI-Specific Depth

Weaviate identifies semantically similar data points even when text or structure differs, making it ideal for LLM dataset cleaning.
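As a rough illustration of embedding-based deduplication, the sketch below compares toy vectors with cosine similarity and keeps an item only if it is not too close to anything already kept. It is not Weaviate's API: the three-dimensional "embeddings", the item IDs, and the `0.95` threshold are made up for the example, and a vector database would replace the inner loop with an approximate nearest-neighbor lookup.

```python
import math


def cosine(u, v):
    """Cosine similarity of two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def semantic_dedup(items, threshold=0.95):
    """Greedy pass over (id, embedding) pairs.

    An item is kept only if its similarity to every previously kept
    item is below the threshold. Cost is O(n^2) comparisons.
    """
    kept = []
    for item_id, vec in items:
        if all(cosine(vec, kept_vec) < threshold for _, kept_vec in kept):
            kept.append((item_id, vec))
    return [item_id for item_id, _ in kept]


# Toy embeddings; real ones would come from an embedding model.
items = [
    ("doc-a", [0.90, 0.10, 0.00]),
    ("doc-b", [0.88, 0.12, 0.01]),  # near-paraphrase of doc-a
    ("doc-c", [0.00, 0.20, 0.95]),
]

print(semantic_dedup(items))  # ['doc-a', 'doc-c']
```

The greedy pass is order-dependent: whichever member of a near-duplicate group appears first is the one that survives.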

Pros

- Strong semantic detection

- Real-time performance

- Multimodal support

Cons

- Requires embedding setup

- Infrastructure overhead

- Learning curve

Security & Compliance

Enterprise support available.

Deployment & Platforms

- Cloud

- Self-hosted

Integrations & Ecosystem

- LangChain

- LLM pipelines

- Vector databases

Pricing Model

Open-source core; usage-based pricing for the managed cloud.

Best-Fit Scenarios

- LLM dataset cleaning

- Semantic duplicate detection

- RAG pipelines

6. Pinecone Similarity Deduplication

One-line Verdict

Best managed vector database for similarity-based deduplication.

Short Description

Pinecone enables similarity-based deduplication using vector embeddings to identify near-duplicate data points in large-scale datasets.

Standout Capabilities

- Vector similarity search

- Real-time deduplication

- Scalable indexing

- Metadata filtering

- High-performance retrieval

- Embedding storage

- API-based workflows

- Cloud-native architecture

AI-Specific Depth

Pinecone uses nearest-neighbor search to identify duplicate or near-duplicate embeddings in AI datasets.

Pros

- Easy to scale

- Fast retrieval

- Managed service

Cons

- Requires embeddings

- Cost increases with scale

- Limited preprocessing tools

Security & Compliance

Enterprise-grade cloud security.

Deployment & Platforms

- Cloud only

Integrations & Ecosystem

- LangChain

- OpenAI

- LLM frameworks

Pricing Model

Usage-based pricing.

Best-Fit Scenarios

- LLM training data cleaning

- Semantic deduplication

- RAG systems

7. Elasticsearch Duplicate Detection

One-line Verdict

Best hybrid search engine for structured and unstructured deduplication.

Short Description

Elasticsearch provides powerful search and indexing capabilities that can be used for duplicate detection in large datasets using text similarity, hashing, and fuzzy matching.

Standout Capabilities

- Full-text search deduplication

- Fuzzy matching

- Scalability

- Distributed indexing

- Hybrid search support

- Query-based filtering

- Real-time processing

- Analytics integration

AI-Specific Depth

Elasticsearch enables flexible duplicate detection using both lexical and semantic search methods.
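On the lexical side, fuzzy matching is commonly defined in terms of Levenshtein edit distance, which is also what Elasticsearch's fuzzy queries are based on. The following is a standalone sketch of that distance and a simple match rule; the `max_edits=2` default is an assumption for illustration, not a recommended setting.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions,
    or substitutions needed to turn a into b."""
    if len(a) < len(b):
        a, b = b, a  # keep the shorter string in the inner loop
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            current.append(min(
                previous[j] + 1,               # delete ca
                current[j - 1] + 1,            # insert cb
                previous[j - 1] + (ca != cb),  # substitute (free if equal)
            ))
        previous = current
    return previous[-1]


def fuzzy_match(a: str, b: str, max_edits: int = 2) -> bool:
    """Case-insensitive match within an edit-distance budget."""
    return levenshtein(a.lower(), b.lower()) <= max_edits


print(levenshtein("kitten", "sitting"))               # 3
print(fuzzy_match("Deduplication", "deduplicaton"))   # True (one missing letter)
print(fuzzy_match("spark", "dask"))                   # False
```

Edit distance works well for typos in short fields such as names or titles. For long documents it is usually too slow and too strict, which is where shingling or embeddings take over.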

Pros

- Highly scalable

- Strong search capabilities

- Flexible querying

Cons

- Requires tuning

- Complex configuration

- Not AI-native

Security & Compliance

Enterprise security available.

Deployment & Platforms

- Cloud

- Self-hosted

Integrations & Ecosystem

- Kibana

- Data pipelines

- ML systems

Pricing Model

Subscription or free self-managed deployment.

Best-Fit Scenarios

- Enterprise data search

- Hybrid deduplication

- Log and document datasets

8. Databricks Delta Dedup Tools

One-line Verdict

Best unified platform for deduplication in lakehouse architectures.

Short Description

Databricks Delta Lake provides built-in deduplication capabilities for large-scale data pipelines within lakehouse architectures used in AI training systems.

Standout Capabilities

- Delta table deduplication

- Streaming support

- ACID transactions

- Scalable processing

- Data versioning

- ML pipeline integration

- Structured query support

- Enterprise analytics

AI-Specific Depth

Databricks ensures training datasets remain clean and deduplicated during continuous ingestion pipelines.
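Deduplicating during continuous ingestion generally means remembering recently seen keys while keeping memory bounded. The sketch below shows that trade-off with a plain Python generator and a fixed-size window of recent keys. It is not Databricks or Delta Lake code; the `key` function, the event shape, and the window size are assumptions chosen for illustration.

```python
from collections import OrderedDict


def streaming_dedup(stream, key, window_size=10_000):
    """Yield records whose key is not among the last `window_size`
    distinct keys seen.

    Memory stays bounded, at the cost of letting through duplicates
    that arrive further apart than the window.
    """
    recent = OrderedDict()
    for record in stream:
        k = key(record)
        if k in recent:
            recent.move_to_end(k)  # refresh recency and drop the duplicate
            continue
        recent[k] = None
        if len(recent) > window_size:
            recent.popitem(last=False)  # evict the oldest key
        yield record


events = [{"id": 1}, {"id": 2}, {"id": 1}, {"id": 3}, {"id": 2}]
deduped_ids = [e["id"] for e in streaming_dedup(events, key=lambda e: e["id"])]
print(deduped_ids)  # [1, 2, 3]
```

Streaming engines make a similar trade-off with time-based windows, so the window length should be chosen from how late duplicates can realistically arrive.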

Pros

- Strong enterprise platform

- Scalable architecture

- Integrated ML support

Cons

- Requires Databricks ecosystem

- Cost grows with usage

- Complex setup

Security & Compliance

Enterprise-grade governance support.

Deployment & Platforms

- Cloud lakehouse

Integrations & Ecosystem

- MLflow

- Spark

- Data engineering tools

Pricing Model

Enterprise pricing.

Best-Fit Scenarios

- AI lakehouse pipelines

- Enterprise data cleaning

- Streaming deduplication

9. Apache Spark MLlib Deduplication

One-line Verdict

Best for ML-based deduplication in distributed environments.

Short Description

Spark MLlib provides machine learning capabilities that can be used for deduplication through clustering, similarity scoring, and feature-based matching.

Standout Capabilities

- ML-based deduplication

- Distributed processing

- Feature engineering

- Clustering algorithms

- Scalable pipelines

- Batch processing

- Integration with Spark ecosystem

- Fault tolerance

AI-Specific Depth

MLlib supports similarity-based clustering for identifying duplicate or near-duplicate data in training datasets.
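One widely used building block for similarity-based near-duplicate detection at scale is MinHash, which Spark MLlib exposes as `MinHashLSH`. The sketch below is a pure-Python version of the signature idea only, without the locality-sensitive hashing step or any Spark code. The signature length of 128 and the word-level tokenization are assumptions made for the example.

```python
import hashlib

NUM_HASHES = 128  # assumed signature length; longer means less variance


def _hash(token: str, seed: int) -> int:
    """Deterministic 64-bit hash of a token under a given seed."""
    data = f"{seed}:{token}".encode("utf-8")
    return int.from_bytes(hashlib.blake2b(data, digest_size=8).digest(), "big")


def minhash_signature(tokens: set) -> list:
    """One minimum hash value per seed. Requires a non-empty token set."""
    return [min(_hash(t, seed) for t in tokens) for seed in range(NUM_HASHES)]


def estimated_jaccard(sig_a: list, sig_b: list) -> float:
    """Fraction of matching signature slots, which estimates the
    Jaccard similarity of the underlying token sets."""
    matches = sum(1 for x, y in zip(sig_a, sig_b) if x == y)
    return matches / len(sig_a)


def tokenize(text: str) -> set:
    return set(text.lower().split())


doc_a = tokenize("the quick brown fox jumps over the lazy dog")
doc_b = tokenize("the quick brown fox jumps over the lazy cat")  # one word changed
doc_c = tokenize("completely different words appear here")

sig_a, sig_b, sig_c = (minhash_signature(d) for d in (doc_a, doc_b, doc_c))
print(estimated_jaccard(sig_a, sig_b))  # high; true Jaccard is 7/9
print(estimated_jaccard(sig_a, sig_c))  # 0.0 for disjoint sets
```

Signatures are fixed-size regardless of document length, so they can be compared or bucketed cheaply. The estimate is statistical, so borderline pairs should still be verified with an exact comparison.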

Pros

- Highly scalable

- Strong ML integration

- Enterprise-ready

Cons

- Requires expertise

- Not purpose-built for deduplication

- Complex tuning

Security & Compliance

Depends on deployment environment.

Deployment & Platforms

- Spark clusters

Integrations & Ecosystem

- Hadoop

- Databricks

- Cloud systems

Pricing Model

Open-source.

Best-Fit Scenarios

- Big data ML pipelines

- Dataset clustering

- Enterprise AI systems

10. Snorkel + Cleanlab Integration

One-line Verdict

Best for combining labeling intelligence with deduplication workflows.

Short Description

The combination of Snorkel and Cleanlab enables intelligent dataset cleaning and deduplication using weak supervision and data quality scoring techniques.

Standout Capabilities

- Weak supervision

- Data quality scoring

- Duplicate detection

- Label correction

- Active learning support

- ML pipeline integration

- Dataset refinement

- AI-assisted workflows

AI-Specific Depth

This combination helps identify redundant or low-quality training samples and removes them using AI-driven scoring systems.

Pros

- Strong dataset quality focus

- Flexible workflows

- AI-assisted cleaning

Cons

- Requires engineering effort

- Not a standalone platform

- Complex integration

Security & Compliance

Depends on deployment setup.

Deployment & Platforms

- Python environments

- Cloud systems

Integrations & Ecosystem

- ML frameworks

- Data pipelines

Pricing Model

Open-source with enterprise options.

Best-Fit Scenarios

- ML dataset optimization

- AI training pipelines

- Data quality improvement

Comparison Table

| Tool | Best For | Method | Scale | Semantic Dedup | Real-time |
|---|---|---|---|---|---|
| Dedupe | Structured datasets | ML-based | Medium | Partial | No |
| Cleanlab | Data quality | ML + embeddings | High | Yes | Partial |
| Modin/Dask | Distributed pipelines | Cluster compute | Very High | No | No |
| Spark | Big data dedup | Distributed SQL | Very High | Partial | Partial |
| Weaviate | Semantic dedup | Vector search | High | Yes | Yes |
| Pinecone | LLM datasets | Vector DB | High | Yes | Yes |
| Elasticsearch | Search-based dedup | Hybrid search | Very High | Partial | Yes |
| Databricks | Lakehouse data | Streaming + batch | Very High | Partial | Yes |
| MLlib | ML pipelines | Clustering | Very High | Partial | No |
| Snorkel+Cleanlab | AI dataset cleaning | Hybrid ML | High | Yes | Partial |

Scoring & Evaluation Table

| Tool | Core Features | Ease of Use | Integrations | Security | Performance | Support | Value | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| Dedupe | 8.7 | 8.6 | 8.5 | 8.3 | 8.4 | 8.2 | 9.0 | 8.5 |
| Cleanlab | 9.0 | 8.5 | 8.8 | 8.6 | 8.7 | 8.4 | 8.9 | 8.7 |
| Modin/Dask | 8.8 | 8.3 | 8.7 | 8.5 | 9.0 | 8.4 | 9.0 | 8.7 |
| Spark | 9.2 | 7.8 | 9.0 | 9.0 | 9.3 | 8.8 | 8.5 | 8.8 |
| Weaviate | 9.0 | 8.6 | 8.9 | 8.8 | 9.1 | 8.5 | 8.7 | 8.8 |
| Pinecone | 8.9 | 9.0 | 9.0 | 8.8 | 9.3 | 8.7 | 8.4 | 8.9 |
| Elasticsearch | 9.1 | 8.2 | 9.2 | 9.0 | 9.2 | 8.9 | 8.6 | 8.9 |
| Databricks | 9.3 | 8.4 | 9.4 | 9.3 | 9.2 | 9.0 | 8.3 | 9.0 |
| MLlib | 8.8 | 7.9 | 8.6 | 8.7 | 9.0 | 8.4 | 9.0 | 8.6 |
| Snorkel+Cleanlab | 9.0 | 8.1 | 8.8 | 8.7 | 8.9 | 8.5 | 8.8 | 8.7 |

Top 3 Recommendations

Best for Enterprise

- Databricks Delta

- Spark

- Elasticsearch

Best for SMBs

- Cleanlab

- Pinecone

- Weaviate

Best for Developers

- Dedupe

- Cleanlab

- Modin/Dask

Which Data Deduplication Tool Is Right for You

For Solo Developers

Dedupe and Cleanlab are ideal for small-scale dataset cleaning and experimentation.

For SMBs

Weaviate and Pinecone offer strong semantic deduplication with scalable APIs.

For Mid-Market Organizations

Elasticsearch and Databricks provide hybrid deduplication across structured and unstructured data.

For Enterprise AI Programs

Spark, Databricks, and Elasticsearch are best suited for large-scale distributed deduplication pipelines.

Budget vs Premium

Open-source tools reduce cost but require engineering effort, while managed platforms provide scalability and automation.

Feature Depth vs Ease of Use

Cleanlab and Pinecone balance usability and power, while Spark offers deep scalability at higher complexity.

Integrations & Scalability

Cloud-native and distributed systems are best for large-scale AI training pipelines.

Security & Compliance Needs

Highly regulated industries should prioritize Databricks, Elasticsearch, and enterprise-grade platforms.

Implementation Playbook

First 30 Days

- Identify duplicate types

- Choose dedup strategy

- Test small datasets

- Define similarity thresholds

- Validate output quality
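Defining a similarity threshold and validating output quality usually come down to measuring precision and recall on a small hand-labeled set of pairs. The sketch below shows one way to run that sweep; the similarity scores and labels in `labeled` are invented for illustration, and the candidate thresholds are arbitrary.

```python
def precision_recall(scored_pairs, threshold):
    """scored_pairs: list of (similarity, is_true_duplicate) tuples.

    A pair is flagged as a duplicate when similarity >= threshold.
    """
    tp = sum(1 for s, dup in scored_pairs if s >= threshold and dup)
    fp = sum(1 for s, dup in scored_pairs if s >= threshold and not dup)
    fn = sum(1 for s, dup in scored_pairs if s < threshold and dup)
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 1.0
    return precision, recall


# Hypothetical hand-labeled sample of candidate pairs.
labeled = [
    (0.99, True), (0.93, True), (0.88, False),
    (0.86, True), (0.70, False), (0.40, False),
]

for t in (0.80, 0.90, 0.95):
    p, r = precision_recall(labeled, t)
    print(f"threshold={t:.2f} precision={p:.2f} recall={r:.2f}")
# threshold=0.80 precision=0.75 recall=1.00
# threshold=0.90 precision=1.00 recall=0.67
# threshold=0.95 precision=1.00 recall=0.33
```

Low precision means distinct records are being deleted (over-filtering), while low recall means duplicates are slipping through. Which error is cheaper depends on the dataset, so the threshold is a judgment call rather than a constant.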

Days 30–60

- Integrate pipelines

- Add semantic deduplication

- Improve clustering methods

- Optimize performance

- Test large datasets

Days 60–90

- Scale production pipelines

- Automate dedup workflows

- Monitor dataset quality

- Optimize cost efficiency

- Improve model performance impact

Common Mistakes and How to Avoid Them

- Relying only on exact matching

- Ignoring semantic duplicates

- Poor threshold tuning

- Not using embeddings for LLM data

- Ignoring multimodal duplicates

- Over-filtering datasets

- Not validating downstream model impact

- Weak pipeline integration

- Lack of scalability planning

- Skipping clustering strategies

- Poor dataset versioning

- Ignoring data distribution changes

Frequently Asked Questions

1. What is data deduplication in AI?

It is the process of removing duplicate or similar data points from training datasets.

2. Why is deduplication important?

It improves model performance and prevents overfitting on repeated data.

3. What are near duplicates?

They are data points that are not identical but semantically or structurally similar.

4. What is semantic deduplication?

It uses embeddings to identify similar meaning rather than exact matches.

5. Which tools are best for LLM datasets?

Weaviate, Pinecone, and Cleanlab are widely used.

6. What is exact deduplication?

It removes identical copies of data records.

7. Can deduplication improve model accuracy?

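Often, yes. Removing repeated samples tends to improve generalization and reduces the over-weighting of duplicated content, though the effect should be validated on downstream model performance.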

8. What is clustering-based deduplication?

It groups similar data points and removes redundant samples.
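A simple way to picture this: treat every pair judged similar as an edge, merge connected items into clusters, and keep one representative per cluster. The sketch below does that with a union-find structure. The item indices and the `similar_pairs` input are hypothetical; in a real pipeline the pairs would come from a hashing, fuzzy-matching, or embedding step.

```python
def cluster_duplicates(n_items, similar_pairs):
    """Group item indices 0..n_items-1 into clusters, where each
    (a, b) in similar_pairs links two items judged to be duplicates."""
    parent = list(range(n_items))

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    for a, b in similar_pairs:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            # The smaller index becomes the root of the merged cluster.
            parent[max(root_a, root_b)] = min(root_a, root_b)

    clusters = {}
    for i in range(n_items):
        clusters.setdefault(find(i), []).append(i)
    return list(clusters.values())


def representatives(clusters):
    """Keep the lowest-index item from each cluster."""
    return [min(cluster) for cluster in clusters]


similar_pairs = [(0, 1), (1, 2), (4, 5)]
clusters = cluster_duplicates(6, similar_pairs)
print(clusters)                   # [[0, 1, 2], [3], [4, 5]]
print(representatives(clusters))  # [0, 3, 4]
```

Note that merging is transitive: items 0 and 2 end up together even though they were never directly compared. With loose thresholds this chaining can produce oversized clusters, so cluster sizes are worth inspecting before deleting anything.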

9. Which industries use deduplication tools?

AI research, healthcare, finance, ecommerce, and cybersecurity.

10. What should buyers prioritize?

Scalability, accuracy, semantic matching, and ML integration.

Conclusion

Data deduplication is a foundational step in building high-quality AI training datasets, especially for large-scale machine learning and generative AI systems. As datasets grow in size and complexity, removing redundant and semantically similar data becomes essential for improving model accuracy, reducing training cost, and ensuring better generalization. Platforms like Weaviate, Pinecone, Databricks, and Cleanlab are enabling organizations to implement both exact and semantic deduplication at scale. The right solution depends on dataset type, infrastructure maturity, and AI workload requirements. Organizations that invest in robust deduplication pipelines will achieve more efficient training, higher model quality, and better AI system performance across real-world applications.
