Introduction

AI Model Cards & Documentation Tools help organizations create structured and standardized documentation for machine learning models, large language models, datasets, prompts, evaluations, risks, deployment workflows, and governance processes. These platforms improve AI transparency, accountability, explainability, operational governance, and compliance readiness across the entire AI lifecycle. As enterprises deploy more generative AI applications into production, maintaining accurate and auditable AI documentation has become a critical operational requirement rather than a simple compliance exercise.

Modern AI governance platforms now automate model lineage tracking, approval workflows, risk assessments, fairness reporting, explainability analysis, prompt documentation, policy enforcement, and audit reporting. Many tools also integrate directly into MLOps and LLMOps ecosystems, allowing teams to automatically capture metadata, evaluation metrics, experiments, datasets, retrieval pipelines, deployment records, and monitoring telemetry.

Without proper AI documentation, organizations often struggle with reproducibility, operational oversight, AI risk visibility, regulatory audits, and cross-functional collaboration. AI Model Cards address these challenges by providing a standardized, centralized record of how AI systems are built, evaluated, monitored, and governed throughout their lifecycle.

Why It Matters

- Improves AI transparency and accountability

- Helps maintain audit-ready governance records

- Supports responsible AI initiatives

- Reduces operational and compliance risks

- Simplifies model lifecycle tracking

- Standardizes AI documentation practices

- Improves collaboration across technical and business teams

- Enhances trust in enterprise AI systems

Real-World Use Cases

- Documenting LLM training and evaluation workflows

- Managing AI governance approval pipelines

- Tracking prompts, datasets, and model lineage

- Generating explainability and fairness reports

- Supporting regulated AI deployments

- Building centralized enterprise AI inventories

- Maintaining compliance and audit documentation

- Managing foundation model governance initiatives

Evaluation Criteria for Buyers

When evaluating AI Model Cards & Documentation Tools, buyers should focus on:

- Automated lineage and metadata capture

- Support for generative AI and LLM workflows

- Governance and approval automation

- Explainability and fairness reporting

- Integration with MLOps ecosystems

- Audit and compliance readiness

- Collaboration and workflow management

- Scalability for enterprise deployments

- Risk scoring and policy management

- Ease of implementation and usability

Best for: Enterprises, regulated industries, AI governance teams, responsible AI programs, MLOps organizations, and companies deploying AI systems at production scale.

Not ideal for: Lightweight experimentation projects, small academic environments, or organizations relying solely on manual AI documentation processes.

What’s Changing in AI Model Documentation

- AI governance is shifting from manual spreadsheets to automated lifecycle platforms

- LLM governance requirements are increasing rapidly

- Enterprises now require centralized AI inventories

- Prompt documentation is becoming part of standard governance workflows

- Regulatory pressure is driving stronger audit readiness requirements

- AI transparency expectations are increasing across industries

- Explainability reporting is becoming operationalized

- AI approval workflows are replacing informal governance processes

- Risk scoring is becoming integrated into AI deployment pipelines

- Foundation model governance is now a major enterprise priority
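To make the approval-workflow and risk-scoring trends above more concrete, here is a minimal sketch of a deployment gate that ties a risk tier to required sign-offs. Everything in it is a hypothetical illustration rather than any vendor's API: the `RiskLevel` tiers, the role names in `REQUIRED_APPROVERS`, and the `can_deploy` function are assumptions made for this example.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import IntEnum


class RiskLevel(IntEnum):
    """Hypothetical risk tiers assigned during a risk assessment."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Hypothetical policy: which roles must sign off at each risk tier.
REQUIRED_APPROVERS = {
    RiskLevel.LOW: {"model_owner"},
    RiskLevel.MEDIUM: {"model_owner", "risk_officer"},
    RiskLevel.HIGH: {"model_owner", "risk_officer", "compliance_lead"},
}


@dataclass
class DeploymentRequest:
    model_name: str
    risk_level: RiskLevel
    approvals: set[str] = field(default_factory=set)


def missing_approvals(request: DeploymentRequest) -> set[str]:
    """Return the roles that still need to approve this request."""
    return REQUIRED_APPROVERS[request.risk_level] - request.approvals


def can_deploy(request: DeploymentRequest) -> bool:
    """A request may deploy only when no required approval is missing."""
    return not missing_approvals(request)


# Usage: a high-risk model with only the owner's sign-off is blocked.
request = DeploymentRequest("credit-scoring-v2", RiskLevel.HIGH, {"model_owner"})
print(can_deploy(request))                 # False
print(sorted(missing_approvals(request)))  # ['compliance_lead', 'risk_officer']
```

Keeping the policy in a plain mapping means the gate can be audited and changed without touching pipeline code, which is the main point of moving from informal to explicit approval workflows.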

Quick Buyer Checklist

Before selecting a platform, verify:

- Does the platform support automated model lineage tracking?

- Can it document prompts, evaluations, and datasets?

- Does it integrate with your MLOps stack?

- Are governance workflows configurable?

- Can it support enterprise-scale deployments?

- Does it provide explainability reporting?

- Are audit exports and compliance reports available?

- Can multiple teams collaborate inside the platform?

- Does it support generative AI systems?

- Is risk management included?

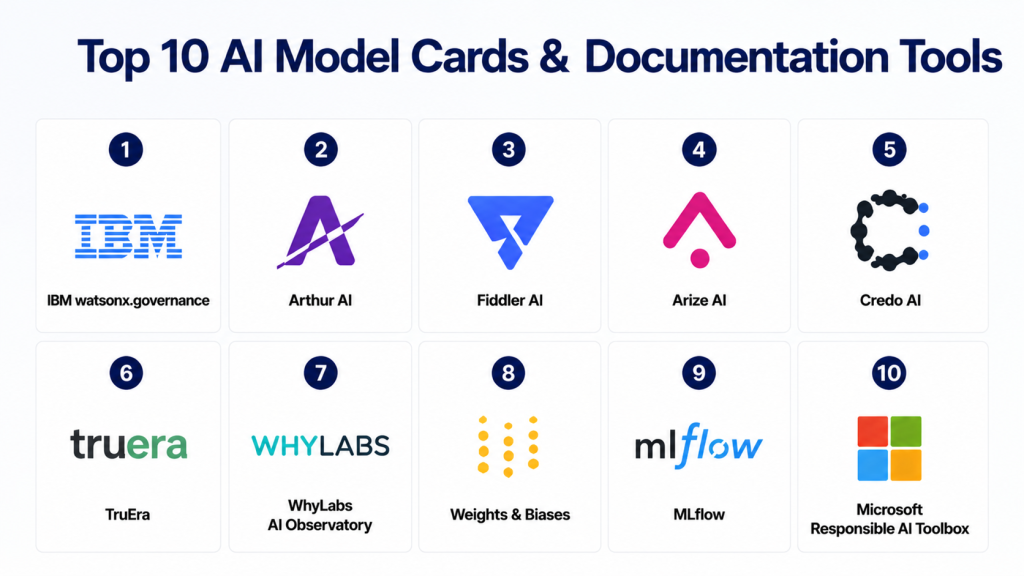

Top 10 AI Model Cards & Documentation Tools

1- IBM watsonx.governance

2- Arthur AI

3- Fiddler AI

4- Arize AI

5- Credo AI

6- TruEra

7- WhyLabs AI Observatory

8- Weights & Biases

9- MLflow

10- Microsoft Responsible AI Toolbox

1- IBM watsonx.governance

One-line Verdict

Comprehensive enterprise AI governance platform designed for large-scale, regulated AI operations.

Short Description

IBM watsonx.governance helps organizations manage AI transparency, governance, risk management, documentation workflows, and compliance operations from a centralized platform. The solution is built for enterprises that require structured governance across machine learning and generative AI systems.

The platform supports AI lifecycle management, model inventory tracking, approval workflows, audit reporting, policy enforcement, and explainability documentation. It is particularly valuable for highly regulated industries managing production AI environments.

Standout Capabilities

- AI governance automation

- Centralized model inventory

- Risk scoring workflows

- Audit-ready documentation

- Explainability reporting

- Approval management

- Policy enforcement

- AI lifecycle tracking

AI-Specific Depth

IBM watsonx.governance supports governance workflows for foundation models, prompt management, LLM risk analysis, generative AI oversight, and AI policy enforcement.

Pros

- Excellent enterprise governance depth

- Strong compliance capabilities

- Scalable for large AI programs

Cons

- Enterprise-focused complexity

- Requires governance maturity

- Premium implementation costs

Security & Compliance

Enterprise-grade governance and compliance controls.

Deployment & Platforms

- Cloud

- Hybrid

- Enterprise infrastructure

Integrations & Ecosystem

IBM integrates with enterprise AI systems, governance platforms, and cloud environments.

- IBM AI ecosystem

- Red Hat OpenShift

- AWS

- Azure

- Enterprise security platforms

Pricing Model

Enterprise custom pricing.

Best-Fit Scenarios

- Regulated industries

- Enterprise AI governance

- Large-scale AI deployments

2- Arthur AI

One-line Verdict

Advanced AI observability and governance platform with strong model documentation capabilities.

Short Description

Arthur AI combines AI monitoring, governance, documentation, explainability, and lifecycle management into a centralized operational platform. Enterprises use Arthur AI to improve visibility into AI systems while maintaining governance oversight.

The platform supports operational monitoring for both traditional machine learning and generative AI systems.

Standout Capabilities

- AI observability

- Governance dashboards

- Documentation workflows

- Drift monitoring

- Explainability analytics

- Bias detection

- AI inventory tracking

AI-Specific Depth

Arthur AI includes LLM observability, prompt analytics, hallucination tracking, and operational visibility for production generative AI environments.

Pros

- Strong observability features

- Excellent enterprise governance

- Good generative AI support

Cons

- Enterprise pricing

- Complex implementation for smaller teams

- Advanced setup requirements

Security & Compliance

Enterprise-grade access management and governance controls.

Deployment & Platforms

- SaaS

- Hybrid environments

- Enterprise cloud

Integrations & Ecosystem

- Databricks

- Kubernetes

- MLflow

- AWS

- Azure

- OpenAI ecosystems

Pricing Model

Custom enterprise pricing.

Best-Fit Scenarios

- Enterprise AI governance

- Production AI monitoring

- LLM observability initiatives

3- Fiddler AI

One-line Verdict

Strong responsible AI and explainability platform with enterprise governance capabilities.

Short Description

Fiddler AI helps organizations operationalize explainable and trustworthy AI systems. The platform combines model monitoring, governance workflows, fairness analysis, and explainability reporting within a unified environment.

It is widely used by enterprises requiring operational AI transparency and responsible AI reporting.

Standout Capabilities

- Explainability dashboards

- Fairness analysis

- Governance workflows

- AI monitoring

- Documentation automation

- Drift analysis

- Risk reporting

AI-Specific Depth

Fiddler AI supports prompt evaluation, LLM monitoring, AI explainability, and operational risk management for generative AI systems.

Pros

- Excellent explainability tooling

- Strong visualization capabilities

- Mature enterprise governance workflows

Cons

- Enterprise-oriented pricing

- Smaller teams may underutilize features

- Requires governance planning

Security & Compliance

Enterprise-grade security architecture.

Deployment & Platforms

- Cloud

- Enterprise SaaS

Integrations & Ecosystem

- Snowflake

- Databricks

- AWS

- Azure

- SageMaker

- MLflow

Pricing Model

Custom pricing.

Best-Fit Scenarios

- Responsible AI programs

- Explainability-focused teams

- Enterprise governance initiatives

4- Arize AI

One-line Verdict

Modern AI observability platform with strong support for LLM monitoring and lifecycle visibility.

Short Description

Arize AI focuses on AI observability, evaluation, monitoring, and operational transparency. The platform helps enterprises monitor AI quality while maintaining lineage visibility and governance documentation.

It is particularly effective for organizations deploying retrieval-augmented generation systems and LLM-powered applications.

Standout Capabilities

- AI observability

- LLM tracing

- Retrieval evaluation

- Drift monitoring

- Prompt analytics

- Evaluation workflows

- Data lineage

AI-Specific Depth

Arize AI includes strong telemetry and tracing support for generative AI pipelines, prompts, embeddings, and retrieval workflows.

Pros

- Excellent LLM observability

- Strong telemetry capabilities

- Modern user interface

Cons

- Governance depth still evolving

- Enterprise-focused feature set

- Advanced workflows may require customization

Security & Compliance

Varies by enterprise deployment.

Deployment & Platforms

- Cloud-native SaaS

- Enterprise deployments

Integrations & Ecosystem

- OpenAI

- LangChain

- Databricks

- AWS

- Kubernetes

- Snowflake

Pricing Model

Custom enterprise pricing.

Best-Fit Scenarios

- LLM operations

- Retrieval evaluation

- AI observability programs

5- Credo AI

One-line Verdict

Purpose-built AI governance platform focused on policy management and responsible AI operations.

Short Description

Credo AI enables enterprises to operationalize responsible AI governance using centralized policies, risk assessments, workflow automation, and compliance documentation.

The platform is designed for organizations implementing enterprise-wide AI governance frameworks.

Standout Capabilities

- AI policy management

- Governance workflows

- Risk scoring

- Compliance automation

- AI inventory tracking

- Audit documentation

- Approval management

AI-Specific Depth

Credo AI supports generative AI policy enforcement, organizational governance controls, and operational AI accountability workflows.

Pros

- Strong governance specialization

- Excellent policy management

- Good compliance workflows

Cons

- Enterprise-oriented focus

- Less technical observability depth

- Advanced governance maturity required

Security & Compliance

Enterprise governance controls available.

Deployment & Platforms

- SaaS

- Enterprise cloud deployments

Integrations & Ecosystem

- Enterprise governance platforms

- Cloud providers

- AI operations stacks

Pricing Model

Enterprise pricing.

Best-Fit Scenarios

- Responsible AI governance

- Enterprise compliance programs

- AI accountability initiatives

6- TruEra

One-line Verdict

AI quality and explainability platform with strong operational transparency capabilities.

Short Description

TruEra helps organizations improve AI quality, transparency, governance, and explainability. The platform supports model evaluation, fairness analysis, monitoring, and governance reporting.

It is widely used for explainable AI initiatives and operational AI oversight.

Standout Capabilities

- Explainability analytics

- AI quality evaluation

- Governance reporting

- Drift monitoring

- Transparency dashboards

- Evaluation workflows

AI-Specific Depth

TruEra supports explainable AI reporting and operational visibility for machine learning and generative AI systems.

Pros

- Strong explainability focus

- Good AI quality management

- Enterprise reporting capabilities

Cons

- Advanced deployment complexity

- Enterprise-focused pricing

- Smaller ecosystem compared to larger vendors

Security & Compliance

Enterprise-grade controls available.

Deployment & Platforms

- Cloud

- Enterprise deployments

Integrations & Ecosystem

- Databricks

- SageMaker

- MLflow

- AWS

- Azure

Pricing Model

Custom enterprise pricing.

Best-Fit Scenarios

- AI explainability initiatives

- AI quality programs

- Enterprise governance teams

7- WhyLabs AI Observatory

One-line Verdict

Operational AI monitoring platform with strong anomaly detection and governance visibility.

Short Description

WhyLabs focuses on AI monitoring, data quality management, anomaly detection, and operational transparency. The platform helps enterprises monitor production AI systems while improving governance visibility.

Its governance and documentation features continue expanding for enterprise AI environments.

Standout Capabilities

- Drift monitoring

- Data quality analysis

- AI observability

- Operational analytics

- Dataset tracking

- Governance visibility

- Alert automation

AI-Specific Depth

WhyLabs supports monitoring for generative AI pipelines, embeddings, and retrieval systems.

Pros

- Excellent anomaly detection

- Strong monitoring automation

- Good operational visibility

Cons

- Governance workflows less mature

- Enterprise setup complexity

- Documentation depth varies

Security & Compliance

Enterprise-grade controls available.

Deployment & Platforms

- SaaS

- Enterprise cloud

Integrations & Ecosystem

- MLflow

- AWS

- Azure

- Databricks

- Kubernetes

Pricing Model

Varies by deployment scale.

Best-Fit Scenarios

- AI monitoring operations

- Data quality governance

- Drift management programs

8- Weights & Biases

One-line Verdict

Popular AI development platform with strong experiment tracking and lifecycle documentation features.

Short Description

Weights & Biases helps AI teams manage experiments, collaboration, reproducibility, and model lifecycle tracking. The platform is highly popular among AI researchers and machine learning engineers.

Its artifact management and experiment documentation capabilities provide strong operational visibility for AI development pipelines.

Standout Capabilities

- Experiment tracking

- Artifact management

- Collaboration dashboards

- Evaluation reporting

- Model lineage

- Reproducibility tracking

AI-Specific Depth

Weights & Biases supports prompt experimentation, LLM evaluation tracking, and AI lifecycle visibility.

Pros

- Excellent developer experience

- Strong collaboration workflows

- Popular AI ecosystem adoption

Cons

- Governance depth lighter than enterprise suites

- Compliance tooling may require integrations

- Enterprise features vary by plan

Security & Compliance

Varies by deployment and enterprise configuration.

Deployment & Platforms

- SaaS

- Self-hosted

- Cloud deployments

Integrations & Ecosystem

- Hugging Face

- OpenAI

- TensorFlow

- PyTorch

- LangChain

Pricing Model

Free tier plus enterprise pricing.

Best-Fit Scenarios

- AI experimentation

- Research collaboration

- MLOps lifecycle tracking

9- MLflow

One-line Verdict

Widely adopted open-source ML lifecycle platform with strong lineage and tracking support.

Short Description

MLflow provides experiment tracking, model registry management, workflow tracking, and artifact management for machine learning pipelines. Many organizations use MLflow as a foundational component of broader AI governance ecosystems.

It is highly flexible and widely integrated across modern MLOps environments.

Standout Capabilities

- Experiment tracking

- Model registry

- Workflow management

- Artifact storage

- Metadata tracking

- Reproducibility support

AI-Specific Depth

MLflow supports LLM lifecycle tracking, metadata management, and AI evaluation workflows.

Pros

- Strong open-source ecosystem

- Flexible integrations

- Large community adoption

Cons

- Limited native governance workflows

- Requires integrations for compliance management

- Documentation automation less advanced

Security & Compliance

Depends on deployment architecture.

Deployment & Platforms

- Open-source

- Cloud

- Self-hosted

Integrations & Ecosystem

- Databricks

- Kubernetes

- AWS

- Azure

- TensorFlow

- PyTorch

Pricing Model

Open-source with enterprise ecosystem support.

Best-Fit Scenarios

- MLOps foundations

- Experiment management

- Flexible AI infrastructure

10- Microsoft Responsible AI Toolbox

One-line Verdict

Developer-focused responsible AI toolkit for explainability, fairness, and transparency workflows.

Short Description

Microsoft Responsible AI Toolbox provides open-source tools for fairness analysis, explainability, error analysis, and responsible AI workflows. It is often used alongside Azure AI environments and research initiatives.

The platform focuses primarily on technical responsible AI workflows rather than centralized enterprise governance.

Standout Capabilities

- Fairness analysis

- Explainability tooling

- Error diagnostics

- Responsible AI workflows

- Open-source ecosystem

- AI evaluation utilities

AI-Specific Depth

Supports responsible AI workflows for machine learning systems and selected generative AI evaluation scenarios.

Pros

- Strong open-source ecosystem

- Excellent fairness analysis tools

- Good developer accessibility

Cons

- Limited enterprise governance workflows

- Requires engineering expertise

- Less centralized management functionality

Security & Compliance

Depends on deployment architecture.

Deployment & Platforms

- Azure

- Open-source deployments

- Cloud-native environments

Integrations & Ecosystem

- Azure ML

- MLflow

- Python ecosystems

- Responsible AI libraries

Pricing Model

Open-source with Azure integrations.

Best-Fit Scenarios

- Responsible AI engineering

- Developer experimentation

- Explainability research

Comparison Table

| Tool | Best For | Deployment | Core Strength | LLM Support | Governance Depth | Public Rating |
|---|---|---|---|---|---|---|
| IBM watsonx.governance | Regulated enterprises | Hybrid | Governance | Strong | Very High | Varies / N/A |
| Arthur AI | Enterprise AI ops | Cloud | Observability | Strong | High | Varies / N/A |
| Fiddler AI | Explainability | SaaS | Responsible AI | Strong | High | Varies / N/A |
| Arize AI | LLM telemetry | Cloud | Observability | Strong | Medium | Varies / N/A |
| Credo AI | AI governance | SaaS | Policy workflows | Strong | High | Varies / N/A |
| TruEra | AI quality | Cloud | Explainability | Medium | Medium | Varies / N/A |
| WhyLabs | Monitoring | SaaS | Drift detection | Medium | Medium | Varies / N/A |
| Weights & Biases | Experimentation | SaaS | Tracking | Strong | Medium | Varies / N/A |
| MLflow | Open-source MLOps | Self-hosted | Lifecycle tracking | Strong | Low | Varies / N/A |
| Microsoft Responsible AI Toolbox | Responsible AI dev | Open-source | Fairness analysis | Medium | Low | Varies / N/A |

Scoring & Evaluation Table

| Tool | Core | Ease | Integrations | Security | Performance | Support | Value | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| IBM watsonx.governance | 9.7 | 7.6 | 9.0 | 9.8 | 9.2 | 9.1 | 7.8 | 9.00 |
| Arthur AI | 9.4 | 8.2 | 9.1 | 9.3 | 9.2 | 8.9 | 8.4 | 8.98 |
| Fiddler AI | 9.2 | 8.5 | 8.9 | 9.1 | 9.0 | 8.8 | 8.3 | 8.84 |
| Arize AI | 9.1 | 8.8 | 9.0 | 8.8 | 9.3 | 8.6 | 8.6 | 8.87 |
| Credo AI | 9.0 | 8.0 | 8.5 | 9.1 | 8.7 | 8.5 | 8.2 | 8.57 |
| TruEra | 8.9 | 8.1 | 8.6 | 8.8 | 8.8 | 8.3 | 8.1 | 8.50 |
| WhyLabs | 8.8 | 8.4 | 8.5 | 8.5 | 9.0 | 8.3 | 8.6 | 8.57 |
| Weights & Biases | 9.0 | 9.2 | 9.4 | 8.5 | 9.1 | 8.9 | 8.9 | 9.00 |
| MLflow | 8.8 | 8.9 | 9.5 | 8.2 | 8.9 | 8.4 | 9.3 | 8.88 |
| Microsoft Responsible AI Toolbox | 8.4 | 8.7 | 8.7 | 8.0 | 8.5 | 8.1 | 9.1 | 8.49 |
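Buyers who want to re-weight these criteria for their own priorities can recompute a total with a few lines of Python. The sketch below is illustrative only: the table does not state the weights behind its Weighted Total column, so the values in `WEIGHTS` are assumptions, and results computed with them need not match the published totals.

```python
from __future__ import annotations

# Hypothetical weights (summing to 1.0); the table does not publish its own.
WEIGHTS = {
    "core": 0.25,
    "ease": 0.15,
    "integrations": 0.15,
    "security": 0.10,
    "performance": 0.10,
    "support": 0.10,
    "value": 0.15,
}


def weighted_total(scores: dict[str, float],
                   weights: dict[str, float] = WEIGHTS) -> float:
    """Weighted average of per-criterion scores, normalized by total weight."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same criteria")
    total_weight = sum(weights.values())
    return sum(scores[name] * weights[name] for name in weights) / total_weight


# Per-criterion scores copied from the IBM watsonx.governance row.
ibm_scores = {
    "core": 9.7, "ease": 7.6, "integrations": 9.0, "security": 9.8,
    "performance": 9.2, "support": 9.1, "value": 7.8,
}
print(round(weighted_total(ibm_scores), 2))
```

Dividing by the sum of the weights keeps the result on the same 0 to 10 scale even if a team adjusts individual weights without renormalizing them.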

Top 3 Recommendations

Best for Enterprise Governance

- IBM watsonx.governance

- Arthur AI

- Credo AI

Best for AI Engineering Teams

- Weights & Biases

- MLflow

- Arize AI

Best for Responsible AI Programs

- Fiddler AI

- TruEra

- Microsoft Responsible AI Toolbox

Which Tool Is Right for You

Solo Developers

MLflow and Microsoft Responsible AI Toolbox provide strong flexibility and low-cost experimentation for independent developers and small engineering teams.

SMB Organizations

Weights & Biases and Arize AI offer excellent usability, observability, and collaboration without overwhelming governance complexity.

Mid-Market Enterprises

Fiddler AI and TruEra balance explainability, operational monitoring, and governance workflows for scaling AI programs.

Large Enterprises

IBM watsonx.governance, Arthur AI, and Credo AI are best suited for enterprises requiring centralized governance, compliance workflows, and audit readiness.

Budget vs Premium

Open-source tools reduce licensing costs but may require more engineering effort. Enterprise platforms provide governance automation and operational scalability.

Governance vs Flexibility

Developer-focused platforms prioritize experimentation flexibility, while governance suites emphasize policy enforcement and operational oversight.

Implementation Playbook

First 30 Days

- Inventory all AI systems

- Define governance standards

- Identify documentation gaps

- Select pilot governance workflows

Days 31–60

- Integrate governance into ML pipelines

- Configure approval workflows

- Enable metadata automation

- Standardize model card templates

Days 61–90

- Expand governance coverage

- Automate compliance reporting

- Improve cross-team collaboration

- Scale AI oversight organization-wide
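One way to approach the "standardize model card templates" step is a small check that runs in CI and rejects cards with missing or empty sections. The following is a minimal sketch under stated assumptions: the section names in `REQUIRED_SECTIONS` and the `validate_model_card` helper are hypothetical and should be replaced by whatever template an organization actually adopts.

```python
from __future__ import annotations

# Hypothetical required sections for an organization-wide template.
REQUIRED_SECTIONS = (
    "model_name",
    "intended_use",
    "datasets",
    "evaluation_metrics",
    "limitations",
    "risks",
    "owner",
)


def validate_model_card(card: dict) -> list[str]:
    """Return a list of problems; an empty list means the card passes."""
    problems = []
    for section in REQUIRED_SECTIONS:
        if section not in card:
            problems.append(f"missing section: {section}")
            continue
        value = card[section]
        if isinstance(value, str):
            value = value.strip()
        if not value:  # catches "", [], {}, and None
            problems.append(f"empty section: {section}")
    return problems


# Usage: an incomplete draft card (all values are made up for illustration).
draft = {
    "model_name": "support-chat-assistant",
    "intended_use": "Answer customer billing questions",
    "datasets": [],
    "evaluation_metrics": {"answer_accuracy": 0.91},
    "limitations": "   ",
}
for problem in validate_model_card(draft):
    print(problem)
```

Returning every problem at once, rather than failing on the first, gives authors a complete to-do list in a single pipeline run.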

Common Mistakes to Avoid

- Treating documentation as a manual task

- Ignoring prompt governance for LLMs

- Failing to track datasets and lineage

- Missing explainability documentation

- Delaying governance implementation

- Overlooking audit readiness

- Neglecting approval workflows

- Using inconsistent model card formats

- Ignoring operational monitoring

- Failing to standardize AI governance processes

Frequently Asked Questions

1. What are AI Model Cards?

AI Model Cards are structured documents that describe AI models, datasets, intended use cases, evaluation metrics, limitations, risks, and governance information for transparency and accountability.
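As an illustration of what "structured" can mean in practice, the sketch below models a card as a Python dataclass that serializes to JSON. The `ModelCard` class and its field names are assumptions chosen to mirror the elements listed in the answer above, not a standard schema, and the example values are made up.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelCard:
    """Hypothetical minimal model card; field names are illustrative."""
    model_name: str
    version: str
    intended_use: str
    datasets: list[str] = field(default_factory=list)
    evaluation_metrics: dict[str, float] = field(default_factory=dict)
    limitations: list[str] = field(default_factory=list)
    risks: list[str] = field(default_factory=list)
    governance: dict[str, str] = field(default_factory=dict)

    def to_json(self) -> str:
        """Serialize the card to stable, human-readable JSON."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


# Usage with made-up values.
card = ModelCard(
    model_name="loan-default-classifier",
    version="1.3.0",
    intended_use="Rank loan applications for manual review",
    datasets=["applications-2023-q4"],
    evaluation_metrics={"auc": 0.87},
    limitations=["Not validated for business loans"],
    risks=["Possible disparate impact across age groups"],
    governance={"owner": "credit-risk-team", "approval_status": "pending"},
)
print(card.to_json())
```

Sorting keys on output keeps diffs between card versions small, which helps when cards are stored in version control alongside the model code.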

2. Why are AI documentation tools important?

These tools help organizations maintain governance visibility, audit readiness, explainability reporting, operational oversight, and compliance documentation across AI systems.

3. Are AI Model Cards only for regulated industries?

No. Organizations across all industries benefit from standardized AI documentation because it improves reproducibility, collaboration, transparency, and operational governance.

4. Do these platforms support generative AI systems?

Yes. Most modern platforms now support LLM governance, prompt tracking, hallucination monitoring, retrieval evaluation, and foundation model oversight.

5. What is the difference between AI observability and AI documentation?

AI observability focuses on monitoring system behavior and performance, while AI documentation focuses on governance, transparency, lineage, and lifecycle reporting.

6. Which tools are best for open-source environments?

MLflow and Microsoft Responsible AI Toolbox are strong choices for teams seeking open-source flexibility and customizable AI governance workflows.

7. Are these platforms difficult to implement?

Implementation complexity depends on governance maturity. Enterprise platforms may require more planning, while developer-focused tools are often easier to adopt.

8. Can AI documentation workflows be automated?

Yes. Many modern platforms automatically capture metadata, lineage, experiments, prompts, datasets, and deployment history from MLOps pipelines.
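The idea behind automated capture can be sketched with the standard library alone: fingerprint the inputs and record them with the run parameters at training time, so the documentation is a by-product of the pipeline rather than a manual step. The `capture_run_metadata` function and its record layout below are hypothetical, not taken from any of the platforms in this list.

```python
from __future__ import annotations

import hashlib
import platform
from datetime import datetime, timezone


def fingerprint(data: bytes) -> str:
    """Content hash used to identify an exact dataset or prompt version."""
    return hashlib.sha256(data).hexdigest()


def capture_run_metadata(model_name: str,
                         params: dict,
                         dataset_bytes: bytes,
                         prompt_template: str | None = None) -> dict:
    """Build a lineage record for one training or evaluation run."""
    record = {
        "model_name": model_name,
        "captured_at": datetime.now(timezone.utc).isoformat(),
        "python_version": platform.python_version(),
        "params": dict(params),
        "dataset_sha256": fingerprint(dataset_bytes),
    }
    if prompt_template is not None:
        record["prompt_sha256"] = fingerprint(prompt_template.encode("utf-8"))
    return record


# Usage with made-up inputs; a real pipeline would read the dataset from storage.
record = capture_run_metadata(
    model_name="support-chat-assistant",
    params={"temperature": 0.2, "max_tokens": 512},
    dataset_bytes=b"question,answer\nhello,hi\n",
    prompt_template="Answer the customer politely: {question}",
)
print(record["dataset_sha256"][:12], record["captured_at"])
```

Because the hashes depend only on content, two runs that report the same `dataset_sha256` provably used the same data, which is the property auditors usually care about when they ask for lineage.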

9. Which industries benefit most from these tools?

Healthcare, finance, insurance, government, manufacturing, retail, and enterprise SaaS organizations commonly use AI governance and documentation platforms.

10. What should buyers prioritize first?

Organizations should prioritize governance automation, integration compatibility, LLM support, lineage visibility, scalability, and explainability reporting.

Conclusion

AI Model Cards & Documentation Tools are becoming essential building blocks for enterprise AI governance, operational transparency, and responsible AI deployment. As organizations expand their use of machine learning and generative AI systems, maintaining structured documentation, explainability reporting, lineage visibility, and audit-ready governance workflows becomes increasingly important. Platforms like IBM watsonx.governance, Arthur AI, and Credo AI lead the enterprise governance segment, while tools such as MLflow and Weights & Biases remain highly valuable for engineering-focused AI lifecycle management. The right platform depends on governance maturity, operational scale, regulatory requirements, and AI deployment complexity. Organizations should begin by shortlisting tools aligned with their governance objectives, running pilot implementations on selected AI workflows, and then scaling standardized AI documentation practices across the broader enterprise ecosystem to improve trust, accountability, and long-term operational resilience.
