Introduction

AI Red Teaming Platforms help organizations simulate adversarial attacks against artificial intelligence systems to identify vulnerabilities before attackers or real users can exploit them. These platforms test large language models, AI agents, copilots, RAG systems, chatbots, and machine learning applications for weaknesses such as prompt injection, jailbreaks, harmful outputs, sensitive data leakage, unsafe tool usage, hallucinations, model manipulation, and policy violations.

As enterprises rapidly deploy generative AI into customer support, internal operations, automation workflows, security systems, and business applications, AI red teaming has become a core part of responsible AI deployment and AI security strategy. Traditional application security testing is not enough for AI systems because LLMs interact through natural language and can behave unpredictably under adversarial conditions. Recent industry reporting highlights that AI-specific attacks such as prompt injection and unsafe agent behavior are becoming major enterprise concerns, pushing organizations toward continuous adversarial testing workflows.

Modern AI red teaming platforms automate attack simulation, adversarial testing, jailbreak generation, harmful scenario creation, and runtime evaluation. Many solutions also support policy testing, AI compliance workflows, observability, AI governance integration, and security reporting. Some platforms focus heavily on enterprise runtime defense, while others are developer-first frameworks designed for AI testing pipelines and CI/CD integration.

Why It Matters

- Helps identify vulnerabilities before production deployment

- Reduces risk of prompt injection and jailbreak attacks

- Improves trust and safety for AI systems

- Protects sensitive enterprise data

- Supports responsible AI and compliance initiatives

- Strengthens AI agent and RAG security

- Enables continuous AI security testing

- Helps validate runtime guardrails and governance policies

Real-World Use Cases

- Red teaming enterprise copilots

- Testing AI agents connected to tools and APIs

- Simulating jailbreak and prompt injection attacks

- Stress-testing RAG pipelines

- Evaluating hallucination and harmful outputs

- Detecting sensitive data leakage risks

- Running adversarial testing during CI/CD workflows

- Validating AI safety and governance controls

Evaluation Criteria for Buyers

When evaluating AI Red Teaming Platforms, buyers should focus on:

- Prompt injection and jailbreak testing depth

- Automated adversarial attack generation

- Coverage for LLMs, RAG, and AI agents

- Runtime testing and monitoring support

- Integration with AI pipelines and CI/CD

- Governance and reporting workflows

- Red team automation capabilities

- Support for compliance and security standards

- Ease of deployment and scalability

- Developer and security team collaboration

Best for: AI security teams, red teams, MLOps engineers, platform security groups, enterprises deploying AI agents, and organizations operating production generative AI systems.

Not ideal for: Lightweight AI prototypes, non-production experimentation, or teams without operational AI deployments.

What’s Changing in AI Red Teaming

- AI red teaming is moving from manual testing to continuous automation

- Enterprises are integrating AI red teaming into CI/CD pipelines

- Prompt injection attacks are becoming a major operational concern

- AI agents are increasing security complexity and attack surfaces

- Runtime AI security testing is becoming more important than static evaluations

- Regulatory pressure is increasing demand for adversarial testing

- AI observability and red teaming workflows are converging

- Organizations are expanding testing beyond prompts into tool usage and memory systems

- Multi-turn attack simulation is becoming a key requirement

- AI governance programs increasingly require formal red teaming processes

Quick Buyer Checklist

Before selecting a platform, verify:

- Does it support automated jailbreak generation?

- Can it test AI agents and RAG systems?

- Does it detect prompt injection vulnerabilities?

- Can it integrate with CI/CD pipelines?

- Does it provide reporting and governance workflows?

- Can it simulate multi-turn adversarial attacks?

- Does it support runtime testing?

- Can it test for sensitive data leakage?

- Does it support multiple model providers?

- Is it suitable for enterprise-scale AI deployments?

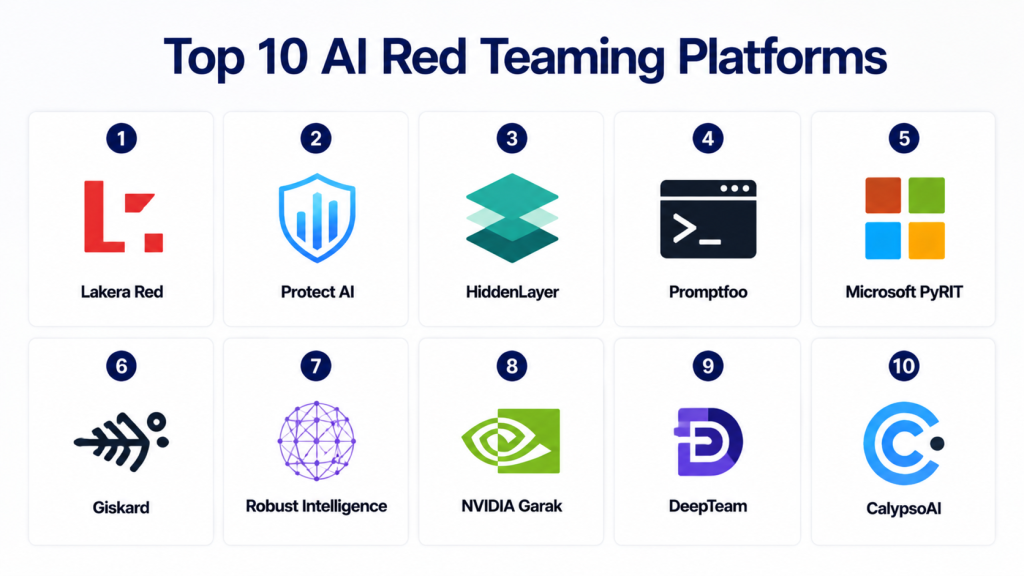

Top 10 AI Red Teaming Platforms

1- Lakera Red

2- Protect AI

3- HiddenLayer

4- Promptfoo

5- Microsoft PyRIT

6- Giskard

7- Robust Intelligence

8- NVIDIA Garak

9- DeepTeam

10- CalypsoAI

1- Lakera Red

One-line Verdict

One of the strongest enterprise AI red teaming platforms for adversarial testing and runtime AI security.

Short Description

Lakera Red helps organizations identify vulnerabilities in generative AI systems through automated adversarial testing, jailbreak simulation, prompt injection attacks, and runtime AI security assessments. The platform is built specifically for LLM applications, AI agents, copilots, and enterprise generative AI deployments.

Lakera combines red teaming automation with runtime protection capabilities, making it valuable for organizations that want both pre-deployment testing and operational AI defense.

Standout Capabilities

- Automated AI red teaming

- Prompt injection testing

- Jailbreak simulation

- Runtime AI security

- Data leakage testing

- AI agent testing

- Governance reporting

- Adversarial attack generation

AI-Specific Depth

Lakera supports testing for LLMs, AI agents, RAG systems, multimodal AI workflows, and enterprise copilots with runtime-aware security analysis.

Pros

- Strong enterprise AI security focus

- Excellent prompt attack coverage

- Useful runtime and pre-deployment workflows

Cons

- Enterprise-oriented pricing

- Advanced deployments may require security expertise

- Premium operational tooling

Security & Compliance

Enterprise-grade AI security architecture with governance-focused workflows.

Deployment & Platforms

- Cloud

- Enterprise AI environments

- Runtime AI workflows

Integrations & Ecosystem

- LLM applications

- AI agents

- RAG systems

- Enterprise copilots

- Cloud AI stacks

Pricing Model

Enterprise custom pricing.

Best-Fit Scenarios

- Enterprise AI red teaming

- Prompt injection testing

- AI agent security validation

2- Protect AI

One-line Verdict

Broad AI security platform with strong AI red teaming and AI supply chain protection capabilities.

Short Description

Protect AI provides security solutions for AI models, ML pipelines, LLM applications, and AI infrastructure. The platform supports adversarial testing, AI vulnerability management, red teaming automation, and model security analysis.

Its broader AI security coverage makes it suitable for organizations that want to combine AI red teaming with model lifecycle security and AI supply chain protection.

Standout Capabilities

- AI red teaming

- AI vulnerability scanning

- Model security analysis

- Runtime AI protection

- Supply chain risk management

- AI governance workflows

- Security posture management

AI-Specific Depth

Protect AI covers prompt attacks, unsafe outputs, adversarial testing, model risks, and deployment pipeline vulnerabilities.

Pros

- Broad AI security coverage

- Strong fit for enterprise security teams

- Useful beyond prompt-only testing

Cons

- Enterprise deployment complexity

- Requires security maturity

- Broad platform scope may exceed small-team needs

Security & Compliance

Enterprise AI security controls and governance integrations.

Deployment & Platforms

- Enterprise cloud

- AI infrastructure environments

- Security operations integrations

Integrations & Ecosystem

- MLOps pipelines

- Model repositories

- Cloud AI platforms

- Security workflows

- AI application stacks

Pricing Model

Enterprise pricing.

Best-Fit Scenarios

- AI security programs

- AI vulnerability management

- Enterprise red teaming

3- HiddenLayer

One-line Verdict

AI-native security platform focused on adversarial testing and runtime AI defense.

Short Description

HiddenLayer helps organizations secure AI systems against prompt injection, model abuse, adversarial attacks, unsafe outputs, and runtime AI threats. The platform includes automated AI red teaming capabilities that simulate realistic AI attacks against production systems.

It is particularly useful for enterprises that need operational AI security testing at scale.

Standout Capabilities

- Automated red teaming

- AI runtime protection

- Prompt attack simulation

- Adversarial AI testing

- AI monitoring

- Threat analytics

- Security reporting

AI-Specific Depth

HiddenLayer supports testing for LLMs, AI applications, RAG systems, and production AI deployments with adversarial simulation workflows.

Pros

- Strong runtime AI security

- Good enterprise scalability

- Mature AI threat focus

Cons

- Enterprise-oriented pricing

- Advanced setup requirements

- Security expertise recommended

Security & Compliance

Enterprise AI security architecture and operational threat defense.

Deployment & Platforms

- Cloud

- Enterprise AI environments

- Runtime workflows

Integrations & Ecosystem

- AI applications

- Security operations

- Cloud AI systems

- Model environments

- Enterprise governance stacks

Pricing Model

Custom enterprise pricing.

Best-Fit Scenarios

- Runtime AI defense

- AI threat simulation

- Enterprise adversarial testing

4- Promptfoo

One-line Verdict

Developer-first AI red teaming and evaluation platform with strong CI/CD integration.

Short Description

Promptfoo is a popular developer-focused framework for AI evaluations, prompt testing, adversarial attacks, and AI red teaming workflows. It supports automated attack generation, compliance mapping, model comparisons, and testing pipelines for LLM applications.

The platform is widely used by developers and AI engineering teams building secure LLM applications.

Standout Capabilities

- Automated adversarial testing

- Prompt attack generation

- CI/CD integration

- Model evaluations

- Compliance mapping

- Multi-turn attack simulation

- Developer workflows

AI-Specific Depth

Promptfoo supports testing for AI agents, RAG systems, jailbreaks, prompt injection, hallucinations, and unsafe model behavior.

Pros

- Excellent developer experience

- Strong automation workflows

- Good open ecosystem support

Cons

- Enterprise governance depth lighter than large commercial suites

- Requires engineering workflows

- Advanced enterprise monitoring may need integrations

Security & Compliance

Supports OWASP and AI governance testing workflows.

Deployment & Platforms

- Developer environments

- CI/CD pipelines

- Open-source workflows

Integrations & Ecosystem

- LLM APIs

- AI development stacks

- RAG workflows

- Security testing pipelines

- DevOps systems

Pricing Model

Open-source plus enterprise options.

Best-Fit Scenarios

- Developer-led AI red teaming

- CI/CD AI security testing

- Prompt attack automation

5- Microsoft PyRIT

One-line Verdict

Powerful open-source framework for automated AI red teaming and risk identification.

Short Description

PyRIT, short for Python Risk Identification Toolkit, is Microsoft’s open-source framework for red teaming generative AI systems. It helps teams probe models for harmful behavior, jailbreaks, prompt vulnerabilities, and unsafe outputs through automated workflows.

The framework is designed to be extensible and model-agnostic for enterprise AI security testing.

Standout Capabilities

- Automated AI red teaming

- Multi-turn attack testing

- Model-agnostic workflows

- Risk identification

- Adversarial prompt generation

- Extensible testing framework

- AI agent testing

AI-Specific Depth

PyRIT supports multimodal AI systems, LLMs, and AI agents with reusable adversarial testing components.

Pros

- Strong open-source flexibility

- Backed by Microsoft AI security research

- Good extensibility

Cons

- Requires engineering expertise

- Enterprise reporting may require extra tooling

- Less turnkey than commercial platforms

Security & Compliance

Depends on deployment and operational environment.

Deployment & Platforms

- Open-source

- Developer workflows

- Enterprise AI pipelines

Integrations & Ecosystem

- Python ecosystems

- LLM APIs

- AI pipelines

- Security testing environments

- AI development workflows

Pricing Model

Open-source.

Best-Fit Scenarios

- Custom AI red teaming

- Research-oriented security testing

- Enterprise AI experimentation

6- Giskard

One-line Verdict

AI testing and red teaming platform focused on evaluating AI vulnerabilities and model quality.

Short Description

Giskard helps organizations evaluate AI systems for vulnerabilities, hallucinations, prompt risks, and unsafe behavior using testing and red teaming workflows. It supports LLM applications, AI agents, and RAG systems through automated evaluation pipelines.

The platform combines AI quality evaluation with adversarial testing and governance-oriented workflows.

Standout Capabilities

- AI vulnerability testing

- Hallucination detection

- Adversarial prompt testing

- LLM evaluations

- AI quality reporting

- Governance workflows

- RAG testing

AI-Specific Depth

Giskard supports testing for prompt injection, hallucinations, bias, unsafe outputs, and adversarial AI behavior.

Pros

- Good balance of testing and governance

- Useful evaluation tooling

- Supports multiple AI workflows

Cons

- Enterprise monitoring depth varies

- Requires integration planning

- Advanced workflows may need customization

Security & Compliance

Supports governance and AI evaluation workflows.

Deployment & Platforms

- Cloud

- AI testing environments

- Enterprise AI workflows

Integrations & Ecosystem

- LLM APIs

- AI testing pipelines

- RAG systems

- Governance workflows

- AI observability stacks

Pricing Model

Varies by deployment.

Best-Fit Scenarios

- AI evaluations

- AI vulnerability testing

- Governance-oriented AI testing

7- Robust Intelligence

One-line Verdict

Enterprise AI firewall and adversarial testing platform focused on production AI resilience.

Short Description

Robust Intelligence helps organizations protect AI systems through adversarial testing, AI firewalls, runtime validation, and model robustness analysis. The platform is designed for enterprises deploying production AI systems across regulated and security-sensitive environments.

It combines red teaming workflows with runtime AI validation and governance-oriented security controls.

Standout Capabilities

- Adversarial AI testing

- AI firewall

- Runtime validation

- AI robustness analysis

- Prompt attack testing

- Security reporting

- Governance controls

AI-Specific Depth

Supports testing for prompt injection, adversarial inputs, unsafe outputs, and operational AI vulnerabilities.

Pros

- Strong enterprise AI security

- Good runtime validation

- Useful governance alignment

Cons

- Enterprise deployment complexity

- Premium operational tooling

- Smaller developer ecosystem than open-source tools

Security & Compliance

Enterprise AI governance and runtime protection controls.

Deployment & Platforms

- Enterprise cloud

- Runtime AI workflows

- AI governance environments

Integrations & Ecosystem

- AI deployment pipelines

- Governance workflows

- Security operations

- Enterprise AI applications

Pricing Model

Enterprise pricing.

Best-Fit Scenarios

- Production AI security

- Runtime AI resilience

- Enterprise adversarial testing

8- NVIDIA Garak

One-line Verdict

Open-source LLM vulnerability scanner designed for automated AI probing and adversarial testing.

Short Description

Garak is an open-source vulnerability scanner for LLMs that runs automated probes against AI systems to identify prompt weaknesses, hallucinations, and unsafe behavior. It is commonly used for AI security research and developer-led red teaming workflows.

The tool is useful for engineering teams that want lightweight automated vulnerability discovery.

Standout Capabilities

- Automated AI probes

- LLM vulnerability scanning

- Hallucination testing

- Prompt attack generation

- Open-source workflows

- Developer testing support

AI-Specific Depth

Garak focuses on identifying prompt-based weaknesses and unsafe model behavior in LLM systems.

Pros

- Open-source accessibility

- Useful automated scanning

- Good for developers and researchers

Cons

- Less enterprise workflow depth

- Requires engineering setup

- Monitoring and governance may need external tooling

Security & Compliance

Depends on deployment architecture.

Deployment & Platforms

- Open-source

- Developer workflows

- AI testing environments

Integrations & Ecosystem

- Python ecosystems

- AI testing pipelines

- LLM applications

- Research workflows

Pricing Model

Open-source.

Best-Fit Scenarios

- Lightweight AI red teaming

- Developer AI security testing

- Vulnerability scanning

9- DeepTeam

One-line Verdict

Open-source framework for stress-testing AI agents, RAG systems, and LLM workflows.

Short Description

DeepTeam is an open-source AI red teaming framework designed for testing AI agents, RAG systems, autonomous workflows, and conversational AI systems. It includes multiple adversarial attack strategies and vulnerability classes for evaluating AI behavior.

The framework is useful for organizations experimenting with advanced AI agent security testing.

Standout Capabilities

- AI agent testing

- Multi-turn jailbreak testing

- RAG pipeline testing

- Prompt injection testing

- Adversarial workflows

- Open-source extensibility

AI-Specific Depth

DeepTeam supports advanced adversarial simulation for AI agents and conversational workflows.

Pros

- Strong AI agent focus

- Open-source flexibility

- Useful advanced attack strategies

Cons

- Requires engineering expertise

- Enterprise tooling depth limited

- Monitoring integrations may require customization

Security & Compliance

Depends on implementation and deployment.

Deployment & Platforms

- Open-source

- AI testing workflows

- Developer environments

Integrations & Ecosystem

- AI agents

- RAG systems

- LLM applications

- Security testing workflows

Pricing Model

Open-source.

Best-Fit Scenarios

- AI agent red teaming

- RAG stress testing

- Advanced adversarial testing

10- CalypsoAI

One-line Verdict

Enterprise AI security and governance platform supporting secure AI adoption and adversarial testing.

Short Description

CalypsoAI helps enterprises secure generative AI deployments through policy enforcement, governance workflows, prompt security, and adversarial AI testing. It supports organizations adopting AI across business operations while maintaining operational oversight.

The platform combines governance, monitoring, and AI security controls into centralized enterprise workflows.

Standout Capabilities

- AI governance

- Prompt security

- Adversarial testing

- Data leakage protection

- Policy enforcement

- AI monitoring

- Governance reporting

AI-Specific Depth

CalypsoAI supports testing for unsafe outputs, prompt risks, policy violations, and operational AI vulnerabilities.

Pros

- Strong governance alignment

- Good enterprise visibility

- Useful AI policy controls

Cons

- Enterprise-focused deployments

- Less developer-oriented flexibility

- Pricing varies by organization size

Security & Compliance

Enterprise AI governance and operational security controls.

Deployment & Platforms

- SaaS

- Enterprise cloud

- Governance environments

Integrations & Ecosystem

- Enterprise AI applications

- Governance workflows

- Security operations

- AI policy systems

Pricing Model

Enterprise pricing.

Best-Fit Scenarios

- Secure AI adoption

- Governance-led AI programs

- Enterprise AI risk management

Comparison Table

| Tool | Best For | Deployment | Core Strength | AI Agent Testing | Enterprise Depth | Public Rating |

|---|---|---|---|---|---|---|

| Lakera Red | Enterprise AI security | Cloud | Prompt attack testing | Strong | Very High | Varies / N/A |

| Protect AI | Full AI security | Enterprise | AI lifecycle security | Strong | Very High | Varies / N/A |

| HiddenLayer | Runtime AI defense | Cloud | Adversarial testing | Strong | High | Varies / N/A |

| Promptfoo | Developer workflows | Open-source | CI/CD AI testing | Medium | Medium | Varies / N/A |

| Microsoft PyRIT | Open-source red teaming | Open-source | Automated AI attacks | Strong | Medium | Varies / N/A |

| Giskard | AI evaluation testing | Cloud | AI vulnerability testing | Medium | Medium | Varies / N/A |

| Robust Intelligence | AI runtime resilience | Enterprise | AI firewall | Medium | High | Varies / N/A |

| NVIDIA Garak | AI vulnerability scanning | Open-source | Automated probing | Low | Low | Varies / N/A |

| DeepTeam | AI agent testing | Open-source | Multi-turn attacks | Strong | Medium | Varies / N/A |

| CalypsoAI | Enterprise governance | SaaS | AI policy security | Medium | High | Varies / N/A |

Scoring & Evaluation Table

| Tool | Core | Ease | Integrations | Security | Performance | Support | Value | Weighted Total |

|---|---|---|---|---|---|---|---|---|

| Lakera Red | 9.5 | 8.5 | 8.8 | 9.6 | 9.2 | 8.9 | 8.3 | 9.02 |

| Protect AI | 9.4 | 8.1 | 9.0 | 9.6 | 9.1 | 8.9 | 8.2 | 8.98 |

| HiddenLayer | 9.2 | 8.2 | 8.7 | 9.5 | 9.0 | 8.7 | 8.1 | 8.83 |

| Promptfoo | 8.9 | 8.7 | 9.1 | 8.8 | 8.8 | 8.4 | 9.1 | 8.87 |

| Microsoft PyRIT | 8.8 | 7.9 | 8.7 | 8.9 | 8.7 | 8.2 | 9.2 | 8.63 |

| Giskard | 8.8 | 8.4 | 8.5 | 8.7 | 8.6 | 8.3 | 8.5 | 8.55 |

| Robust Intelligence | 9.0 | 8.0 | 8.6 | 9.3 | 8.9 | 8.5 | 8.1 | 8.66 |

| NVIDIA Garak | 8.2 | 7.8 | 8.1 | 8.4 | 8.5 | 7.9 | 9.0 | 8.21 |

| DeepTeam | 8.5 | 7.9 | 8.2 | 8.7 | 8.5 | 7.8 | 9.0 | 8.34 |

| CalypsoAI | 8.8 | 8.2 | 8.4 | 9.0 | 8.7 | 8.4 | 8.2 | 8.53 |

Top 3 Recommendations

Best for Enterprise AI Security

- Lakera Red

- Protect AI

- HiddenLayer

Best for Developers & Open-Source Workflows

- Promptfoo

- Microsoft PyRIT

- NVIDIA Garak

Best for AI Agents & Advanced Adversarial Testing

- Lakera Red

- DeepTeam

- Microsoft PyRIT

Which Tool Is Right for You

Solo Developers

Promptfoo, Garak, and PyRIT are strong choices for developers who want flexible and affordable AI red teaming workflows. These tools fit well into custom AI applications and developer-led testing environments.

SMB Organizations

Giskard and Promptfoo provide a balance between usability, automation, and AI security testing without requiring the complexity of massive enterprise security suites.

Mid-Market Enterprises

HiddenLayer, Robust Intelligence, and CalypsoAI provide stronger governance, runtime security, and operational monitoring for organizations scaling AI deployments.

Large Enterprises

Lakera Red and Protect AI are best suited for enterprises that need advanced AI threat simulation, runtime AI defense, governance integration, and operational scalability.

Budget vs Premium

Open-source platforms reduce licensing costs but require engineering effort. Enterprise suites provide stronger operational workflows, reporting, runtime protection, and governance integrations.

Feature Depth vs Ease of Use

Developer frameworks offer flexibility and customization, while enterprise platforms provide more turnkey governance, observability, and security operations workflows.

Integrations & Scalability

Choose platforms that fit your AI stack, cloud provider, CI/CD workflows, observability tools, and governance requirements. AI red teaming becomes more important as systems connect to tools, APIs, documents, and autonomous workflows.

Security & Compliance Needs

Regulated organizations should prioritize reporting, audit logs, governance integrations, runtime monitoring, and policy enforcement alongside adversarial testing.

Implementation Playbook

First 30 Days

- Inventory all AI applications and agents

- Identify high-risk AI workflows

- Define adversarial testing objectives

- Select pilot red teaming environments

- Build baseline attack scenarios

- Test for prompt injection and jailbreaks

- Document vulnerabilities and risky behaviors

Days 30–60

- Integrate red teaming into CI/CD pipelines

- Automate attack generation workflows

- Add AI runtime monitoring

- Expand testing for AI agents and RAG systems

- Configure governance reporting

- Train developers on AI security testing

Days 60–90

- Scale adversarial testing across production AI systems

- Automate reporting and incident workflows

- Add runtime validation controls

- Expand testing to multimodal AI workflows

- Improve remediation tracking

- Operationalize continuous AI security testing

Common Mistakes to Avoid

- Treating AI red teaming as a one-time test

- Ignoring multi-turn adversarial attacks

- Failing to test AI agents connected to tools

- Not validating retrieved documents in RAG systems

- Relying only on manual testing

- Ignoring runtime AI monitoring

- Skipping hallucination testing

- Failing to document vulnerabilities and fixes

- Not integrating red teaming into CI/CD

- Assuming traditional security testing is enough for LLMs

- Ignoring sensitive data leakage testing

- Forgetting governance and compliance reporting

- Not involving security teams early in AI deployment

- Underestimating prompt injection complexity

Frequently Asked Questions

1. What is AI red teaming?

AI red teaming is the process of simulating attacks against AI systems to identify vulnerabilities, unsafe behavior, prompt injection risks, jailbreaks, hallucinations, and security weaknesses before deployment.

2. Why is AI red teaming important?

AI systems behave differently from traditional software because they respond dynamically to natural language and external context. Red teaming helps identify risks that normal testing may miss.

3. What types of attacks do these platforms test?

Most platforms test prompt injection, jailbreaks, harmful outputs, hallucinations, unsafe tool usage, sensitive data leakage, and adversarial prompts.

4. Can these tools test AI agents?

Yes. Many modern AI red teaming platforms now support AI agents, autonomous workflows, tool calls, memory systems, and RAG pipelines.

5. What is the difference between AI observability and AI red teaming?

AI observability focuses on monitoring operational AI behavior, while AI red teaming focuses on actively attacking and stress-testing AI systems to uncover vulnerabilities.

6. Are open-source red teaming tools good enough?

Open-source tools are valuable for developers and smaller teams, but enterprises often need stronger governance, monitoring, runtime protection, and operational reporting.

7. Which platform is best for developers?

Promptfoo, PyRIT, and Garak are excellent developer-focused platforms because they support automation, extensibility, and CI/CD integration.

8. Which platform is best for enterprises?

Lakera Red, Protect AI, and HiddenLayer are strong choices for enterprises needing operational AI security and governance workflows.

9. Can AI red teaming help with compliance?

Yes. Many governance and regulatory frameworks increasingly expect organizations to perform adversarial testing and operational AI risk validation.

10. What should organizations prioritize first?

Organizations should first focus on high-risk AI systems involving sensitive data, AI agents, external tool usage, and customer-facing workflows.

Conclusion

AI Red Teaming Platforms are becoming essential components of enterprise AI security, governance, and responsible deployment strategies. As organizations expand the use of LLMs, copilots, AI agents, and RAG systems across business operations, adversarial testing is no longer optional. Platforms such as Lakera Red, Protect AI, and HiddenLayer provide strong enterprise-grade AI threat simulation and runtime security workflows, while developer-first tools like Promptfoo, PyRIT, and Garak help engineering teams integrate AI security testing directly into development pipelines. The right platform depends on operational maturity, deployment scale, governance requirements, and the complexity of AI systems being protected. Organizations should begin by identifying high-risk AI workflows, piloting automated red teaming on critical applications, validating runtime defenses, and then scaling continuous adversarial testing across the broader AI ecosystem to improve trust, resilience, and operational security.

Find Trusted Cardiac Hospitals

Compare heart hospitals by city and services — all in one place.

Explore Hospitals