This blog is still a work in progress. However, I have consolidated the comparisons available on the internet in one place, in image format. Please refer to the images below.

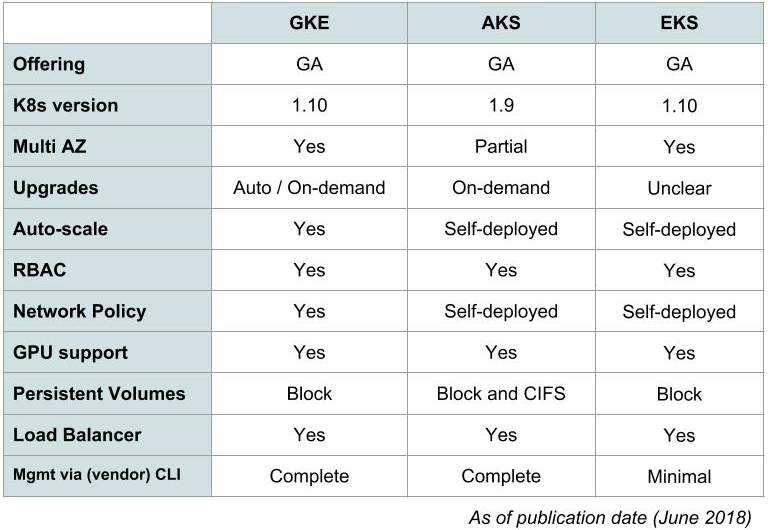

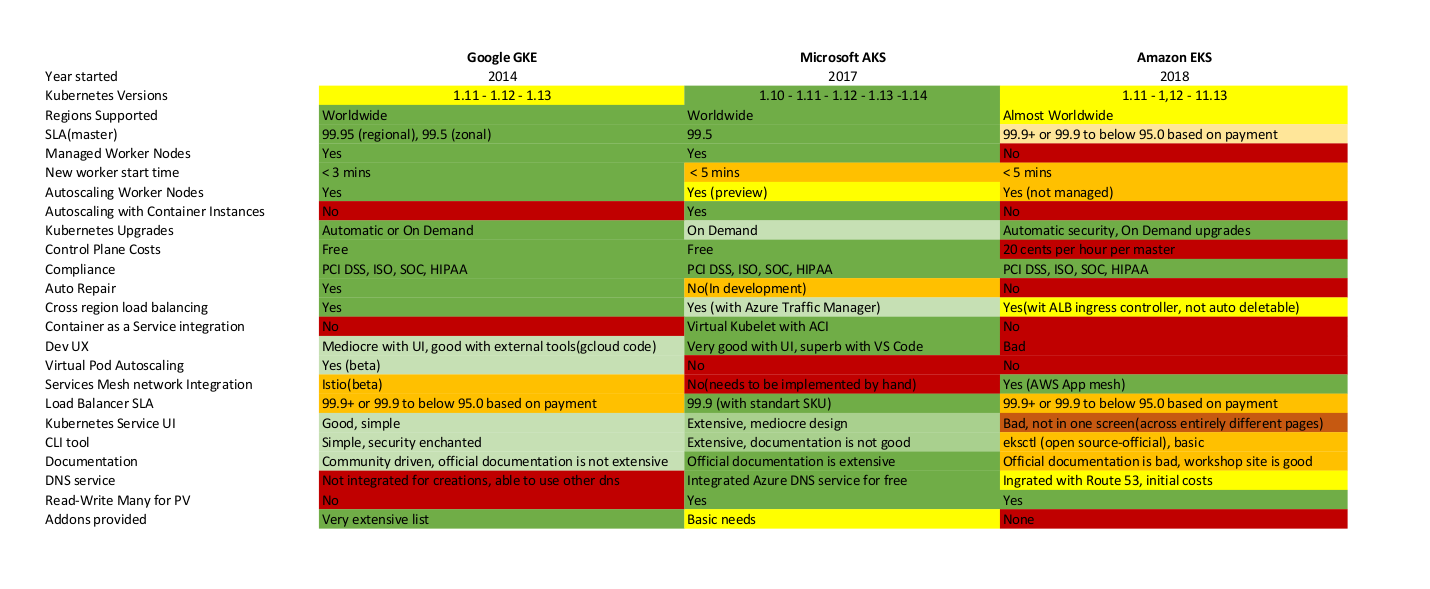

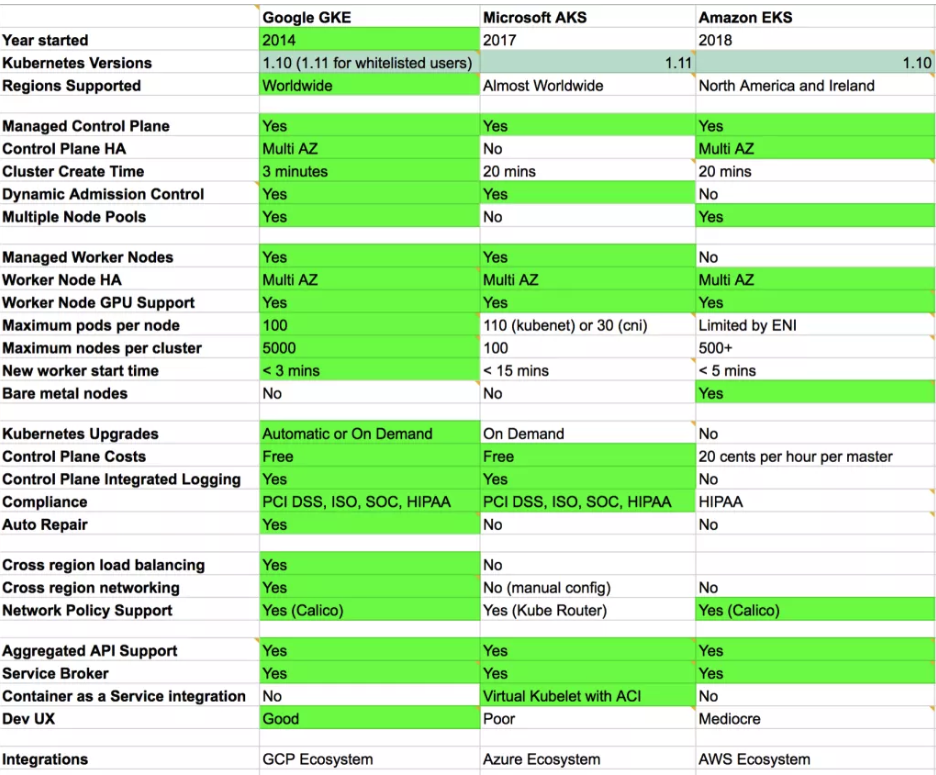

Feature Comparison

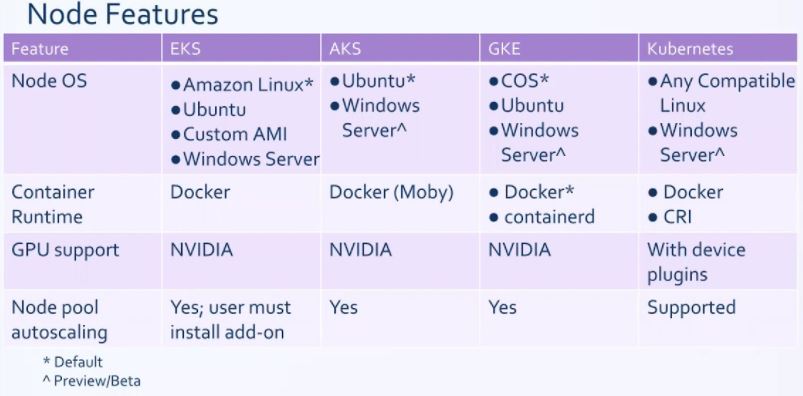

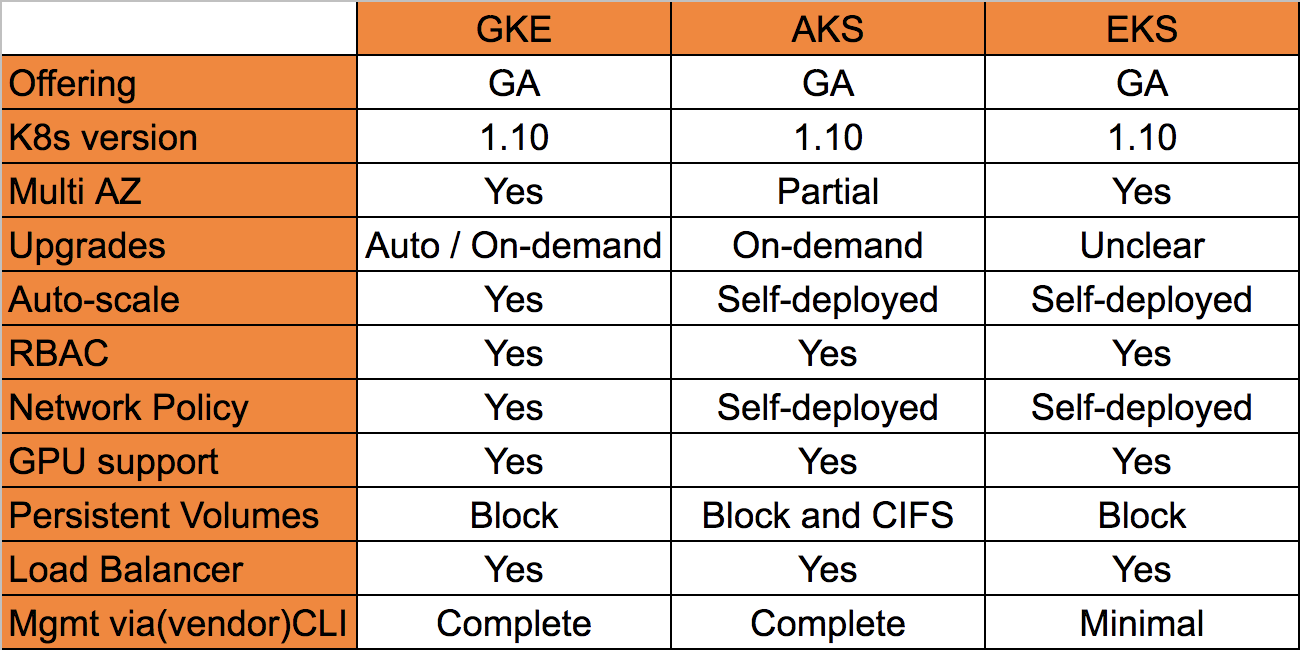

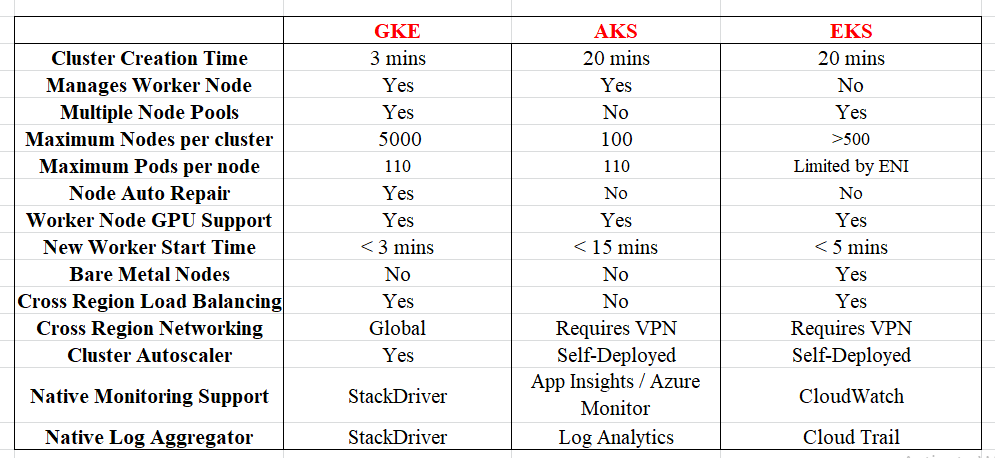

In this section, we compare the key features of the three providers. Following this table, we’ll provide a deeper analysis of each feature.

| Feature | Amazon EKS | Microsoft AKS | Google GKE |
|---|---|---|---|
| Supported Kubernetes version(s) | 1.18, 1.17, 1.16, 1.15 | 1.20, 1.19, 1.18, 1.17 | 1.17, 1.16, 1.15, 1.14 |
| Service Launch Date | June 2018 | June 2018 | August 2015 |
| CNCF Kubernetes Conformance | Yes | Yes | Yes |

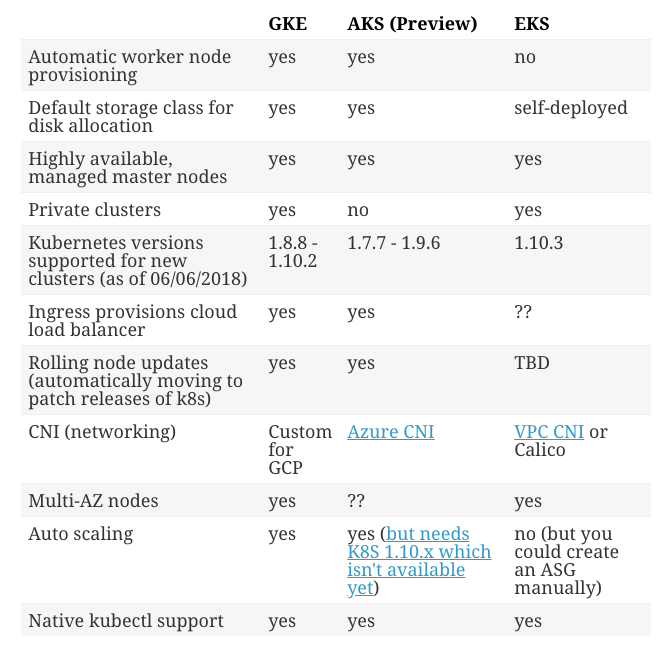

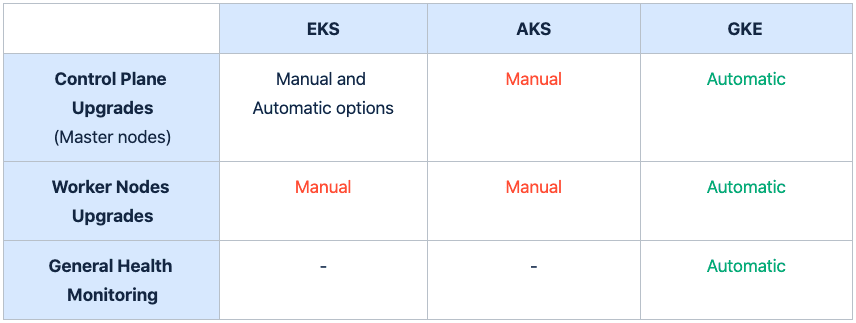

| Control-plane Upgrade | Manual. The user must also manually update the system services that run on nodes (e.g., kube-proxy, coredns, AWS VPC CNI) | Manual. All system components are updated when the cluster is upgraded | Automatic (default) or manual |
| Node Upgrade | Manual. EKS drains and replaces nodes | Automatic or manual. AKS drains and replaces nodes | Automatic (default). GKE drains and replaces nodes |
| Node OS | Linux: Amazon Linux 2 (default), Ubuntu (Partner AMI), Bottlerocket. Windows: Windows Server 2019 | Linux: Ubuntu. Windows: Windows Server 2019 | Linux: Container-Optimized OS (COS) (default), Ubuntu. Windows: Windows Server 2019, Windows Server version 1909 |
| Container Runtime | Docker (default), containerd (through Bottlerocket) | Docker (default), containerd | Docker (default), containerd, gVisor |
| High Availability Cluster | Control plane is deployed across multiple Availability Zones (default) | Control plane components are spread across the number of zones defined by the admin | Zonal clusters: single control plane. Regional clusters: quorum of three Kubernetes control planes |
| Control Plane SLA | 99.95% | 99.95% | 99.95% |

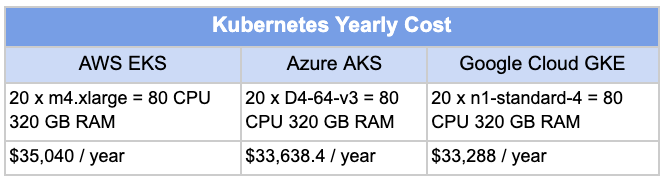

| Pricing | $0.10/hour (USD) per cluster + standard costs of EC2 instances and other resources | Pay-as-you-go: standard costs of node VMs and other resources | $0.10/hour (USD) per cluster + standard costs of GCE machines and other resources |
| GPU support | Yes (NVIDIA). Requires installing the device plugin | Yes (NVIDIA). Requires installing the device plugin | Yes (NVIDIA). Requires installing the device plugin. Compute Engine A2 VMs |
| RBAC | Yes | Yes | Yes |
| Control Plane: Log Collection | Optional. Default: off. Logs are sent to AWS CloudWatch | Optional. Default: off. Logs are sent to Azure Monitor | Optional. Default: off. Logs are sent to Stackdriver |
| Container Performance Metrics | Optional. Default: off. Metrics are sent to AWS CloudWatch Container Insights | Optional. Default: off. Metrics are sent to Azure Monitor | Optional. Default: off. Metrics are sent to Stackdriver |
| Node Health Monitoring | No Kubernetes-aware support. If a node instance fails, the AWS autoscaling group of the node pool replaces it | Auto-repair is now available. Node status monitoring is available. Use autoscaling rules to shift workloads | Node auto-repair enabled by default |

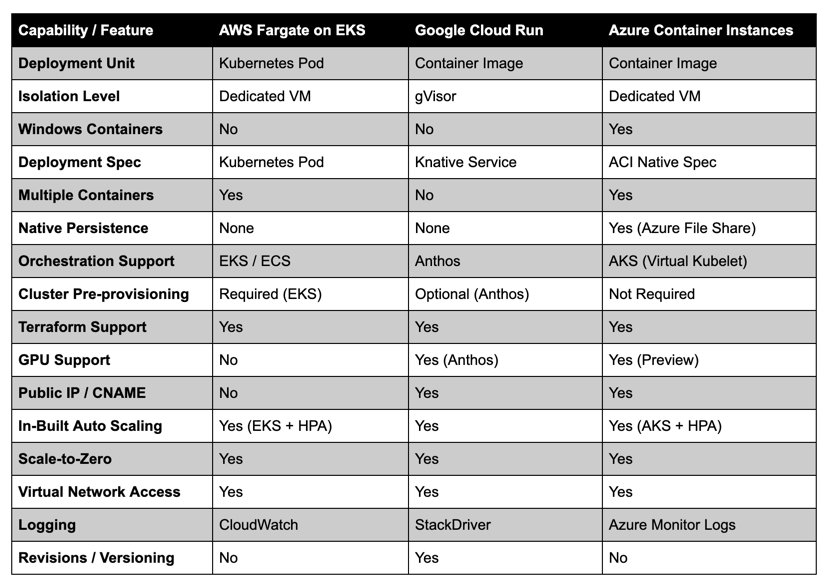

| Serverless Compute | AWS Fargate | Azure Container Instances | Cloud Run for Anthos |
| On-Prem Services | Via AWS Outposts | Yes | Via Anthos GKE On-Prem through Google’s Connect service for multicluster management, in a vSphere 6.5 or 6.7 environment |
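To make the Pricing and Control Plane SLA rows more concrete, here is a rough Python sketch of the arithmetic. It uses the hourly fees and the 99.95% SLA from the table. The 730-hour month, the 30-day SLA window, and the zero per-cluster fee for AKS (the table lists no cluster fee for it) are assumptions for illustration, and the function names are made up for this example.

```python
# Assumption: an "average" month of 730 hours (8760 hours / 12).
HOURS_PER_MONTH = 730

# Per-cluster control-plane fee in USD/hour, from the Pricing row.
# Assumption: AKS is 0.00 because the table lists no per-cluster fee for it.
CONTROL_PLANE_FEE = {"EKS": 0.10, "AKS": 0.00, "GKE": 0.10}

# Control-plane SLA from the table (the same for all three providers).
SLA = 0.9995


def monthly_control_plane_cost(provider: str, clusters: int = 1) -> float:
    """Control-plane fee only; node VMs and other resources are billed separately."""
    return round(CONTROL_PLANE_FEE[provider] * HOURS_PER_MONTH * clusters, 2)


def allowed_downtime_minutes(sla: float, days: int = 30) -> float:
    """Minutes of downtime in the period that would still meet the SLA."""
    return round((1 - sla) * days * 24 * 60, 1)


if __name__ == "__main__":
    for name in CONTROL_PLANE_FEE:
        print(name, monthly_control_plane_cost(name), "USD/month")
    print("Downtime budget:", allowed_downtime_minutes(SLA), "minutes per 30 days")
```

Under these assumptions, one EKS or GKE cluster costs about 73 USD per month for the control plane alone, and a 99.95% SLA leaves roughly 21.6 minutes of downtime in a 30-day window.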

Strengths and Weaknesses of AKS, EKS, GKE

In alphabetical order, here is a quick summary of AKS, EKS and GKE. The list is not exhaustive and is open to debate: something listed here as a weakness might be a strength for your particular application.

AKS Strengths

- If you are a .NET or Microsoft shop, AKS has first-class support for Windows.

- Configuring the virtual network and subnet is simple.

- Robust command-line support through the az CLI.

- Integrated logging and monitoring solution.

- Azure Active Directory integration for cluster authentication.

AKS Weaknesses

- AKS is relatively new compared to GKE and EKS. As a result, many features are still in alpha or beta.

- At Fairwinds, we are proponents of infrastructure as code and use Terraform heavily. The Azure Terraform provider doesn’t fully support all of the Azure APIs, so there are some gotchas. The az command-line tool can be used to supplement Terraform.

- Your selection of underlying operating systems is limited. The only choices are Linux (Ubuntu) and Windows Server. The virtual machines do not support customization directly, and there is no way to provide a cloud-init or user data script.

- You have to run a default node pool when deploying a cluster. It always has to be there, and you can’t change server types once it is deployed.

- Support for multiple node pools is a preview feature.

- Node updates are not automatic, compared to GKE auto-updates.

- Nodes do not automatically recover from kubelet failures, compared to GKE auto-recovery.

EKS Strengths

- AWS is very mature and tools like Terraform integrate nicely. Amazon has published and maintains a very robust set of EKS Terraform modules with many features. If you only need to interact with EKS, there is an official command line tool, eksctl.

- EKS nodes are more customizable. You can use your own machine images (AMIs). This allows you to customize your operating system and configure servers for your exact needs. You still have the option for using a pre-set AMI, but sometimes these AMIs will not be viable due to security compliance regulations.

- AWS is the most widely used and offers many additional cloud services. Most Kubernetes tooling (DNS management, certificate management, etc.) fully support AWS integration. Kubernetes on AWS is widely documented and offers a large community of users.

EKS Weaknesses

- While it is simple to create an EKS cluster, adding and customizing node pools can be complex.

- Node updates are not automatic, compared to GKE auto-updates.

- Nodes do not automatically recover from kubelet failures, compared to GKE auto-recovery.

- Pod density is limited by the CNI: the number of pods a node can run depends on the instance type, and the number of pod IPs available depends on subnet size.
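The pod density limit in the last point can be estimated with a small Python sketch. It assumes the default AWS VPC CNI behavior without prefix delegation, where each pod takes one secondary IP on an elastic network interface (ENI). The per-instance ENI and IP counts below are the figures AWS has published for those instance types as far as I know, so check the current AWS documentation before relying on them. The function names are made up for this example.

```python
def max_pods(enis: int, ipv4_per_eni: int) -> int:
    """Estimate the max pods per node for the default AWS VPC CNI mode.

    One IP per ENI is the ENI's own primary address, so it is not
    available to pods (the "- 1"). The "+ 2" covers pods that use host
    networking, such as kube-proxy and the CNI daemon itself.
    """
    return enis * (ipv4_per_eni - 1) + 2


def usable_subnet_ips(prefix_len: int) -> int:
    """Usable IPv4 addresses in a VPC subnet; AWS reserves 5 per subnet."""
    return 2 ** (32 - prefix_len) - 5


# Assumed instance limits as (ENIs, IPv4 addresses per ENI).
INSTANCE_LIMITS = {"t3.medium": (3, 6), "m5.large": (3, 10)}

if __name__ == "__main__":
    for instance_type, (enis, ips) in INSTANCE_LIMITS.items():
        print(instance_type, "max pods:", max_pods(enis, ips))
    print("/24 subnet usable IPs:", usable_subnet_ips(24))
```

With these figures an m5.large tops out at 29 pods and a t3.medium at 17, and a /24 subnet offers 251 addresses to share between nodes and pods, which is why small subnets run out of IPs quickly on EKS.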

GKE Strengths

- GKE makes it really easy to deploy a Kubernetes cluster. The command-line tool and the web console are both very friendly.

- Updating the cluster version and node pools is a simple one-click process. The version can also be set to update automatically when possible.

- Node pools can be configured to self heal, preventing manual intervention when the underlying kubelet has issues.

- GKE cherry-picks bug and CVE fixes into the version of Kubernetes it ships. The downside is that a user running GKE version 1.15.9 who wants to look at the Kubernetes code can’t be completely sure that the code wasn’t altered.
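To illustrate the last point: GKE version strings carry a `-gke.N` suffix on top of the upstream Kubernetes version, which is a visible hint that the build is not identical to the upstream release. Below is a minimal Python sketch that splits such a string. The sample string `1.15.9-gke.24` and the function name are only illustrative assumptions, not values taken from a real cluster.

```python
import re

# Matches strings such as "1.15.9-gke.24": upstream version, then GKE patch number.
GKE_VERSION = re.compile(r"^(\d+)\.(\d+)\.(\d+)-gke\.(\d+)$")


def split_gke_version(version: str) -> tuple[str, int]:
    """Return (upstream Kubernetes version, GKE patch number)."""
    match = GKE_VERSION.match(version)
    if match is None:
        raise ValueError(f"not a GKE-style version string: {version!r}")
    major, minor, patch, gke_patch = match.groups()
    return f"{major}.{minor}.{patch}", int(gke_patch)


if __name__ == "__main__":
    upstream, gke_patch = split_gke_version("1.15.9-gke.24")
    print("Upstream base:", upstream, "| GKE patch:", gke_patch)
```

The upstream part tells you which Kubernetes source tree to start from, while the GKE patch number signals that additional fixes may have been applied on top of it.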

GKE Weaknesses

- You cannot customize your server configuration. You have to use one of the two server types GKE offers: Container-Optimized OS or Ubuntu. You don’t get to pick the OS or kernel versions. If you want a deeper level of customization of your underlying nodes, you cannot get it with GKE.

- GKE runs managed cluster add-ons (kube-dns, ip-masq-agent, etc.) that offer the end user little configurability. They cannot be modified to use node selectors or tolerations. Any changes made to these add-ons are reverted.

- While not a huge weakness, GKE has the concept of Zonal vs. Regional clusters. By default, a cluster’s control plane (primary) and nodes all run in a single compute zone that you specify when you create the cluster. This cannot be changed after the cluster is created. If you need a production-ready GKE cluster, be sure to create a Regional cluster.

I’m a DevOps/SRE/DevSecOps/Cloud Expert passionate about sharing knowledge and experiences. I have worked at Cotocus. I share tech blog at DevOps School, travel stories at Holiday Landmark, stock market tips at Stocks Mantra, health and fitness guidance at My Medic Plus, product reviews at TrueReviewNow , and SEO strategies at Wizbrand.

My social handles are listed below:

Rajesh Kumar Personal Website

Rajesh Kumar at YOUTUBE

Rajesh Kumar at INSTAGRAM

Rajesh Kumar at X

Rajesh Kumar at FACEBOOK

Rajesh Kumar at LINKEDIN

Rajesh Kumar at WIZBRAND
