Introduction

Search relevance tuning for Retrieval-Augmented Generation systems has become one of the most critical layers in modern AI infrastructure. Even the best large language models fail when retrieval quality is poor, ranking is inaccurate, or semantic search pipelines return irrelevant chunks. Organizations building enterprise AI assistants, AI search engines, internal copilots, customer support bots, and knowledge automation systems now prioritize relevance optimization to improve answer accuracy, reduce hallucinations, and increase user trust.

Modern relevance tuning platforms help teams optimize ranking signals, hybrid search performance, vector retrieval, metadata filtering, reranking pipelines, query rewriting, personalization, contextual search, and retrieval evaluation. These systems are especially important for enterprises managing large-scale document repositories, multi-source knowledge bases, and domain-specific AI search experiences.

Why It Matters

- Improves retrieval precision and answer quality

- Reduces hallucinations in AI-generated responses

- Enhances semantic and hybrid search relevance

- Optimizes enterprise knowledge retrieval

- Supports scalable AI search operations

- Improves user trust and engagement

Real-World Use Cases

- Enterprise AI assistants

- Customer support copilots

- Internal document search

- AI-powered legal research

- Healthcare knowledge retrieval

- Ecommerce conversational search

- Developer documentation copilots

- Financial compliance search systems

Evaluation Criteria for Buyers

When evaluating search relevance tuning platforms for RAG, buyers should focus on:

- Retrieval quality optimization

- Hybrid search support

- Reranking capabilities

- Query understanding

- Metadata filtering flexibility

- Vector database compatibility

- Evaluation and benchmarking

- Scalability and latency

- AI observability

- Security and governance

Best For

Organizations building production-grade RAG pipelines that require accurate retrieval, scalable search relevance optimization, and enterprise AI search quality control.

Not Ideal For

Small teams experimenting with lightweight AI prototypes that do not yet require advanced retrieval optimization or enterprise-scale relevance tuning.

What’s Changing in Search Relevance Tuning for RAG

- Hybrid search is replacing pure vector search approaches

- Reranking models are becoming standard in enterprise RAG

- Query rewriting is improving conversational retrieval quality

- Context-aware ranking systems are growing rapidly

- Retrieval evaluation frameworks are becoming mandatory

- Enterprises are prioritizing grounded generation

- AI observability platforms now include retrieval metrics

- Multi-vector retrieval strategies are gaining adoption

- Metadata-aware search pipelines are becoming critical

- Real-time retrieval analytics are improving tuning workflows

Quick Buyer Checklist

Before selecting a relevance tuning platform, verify:

- Hybrid search compatibility

- Reranking model support

- Evaluation pipeline availability

- Enterprise scalability

- Multi-source retrieval support

- Vector database integrations

- API flexibility

- Security and governance controls

- AI monitoring features

- Latency optimization capabilities

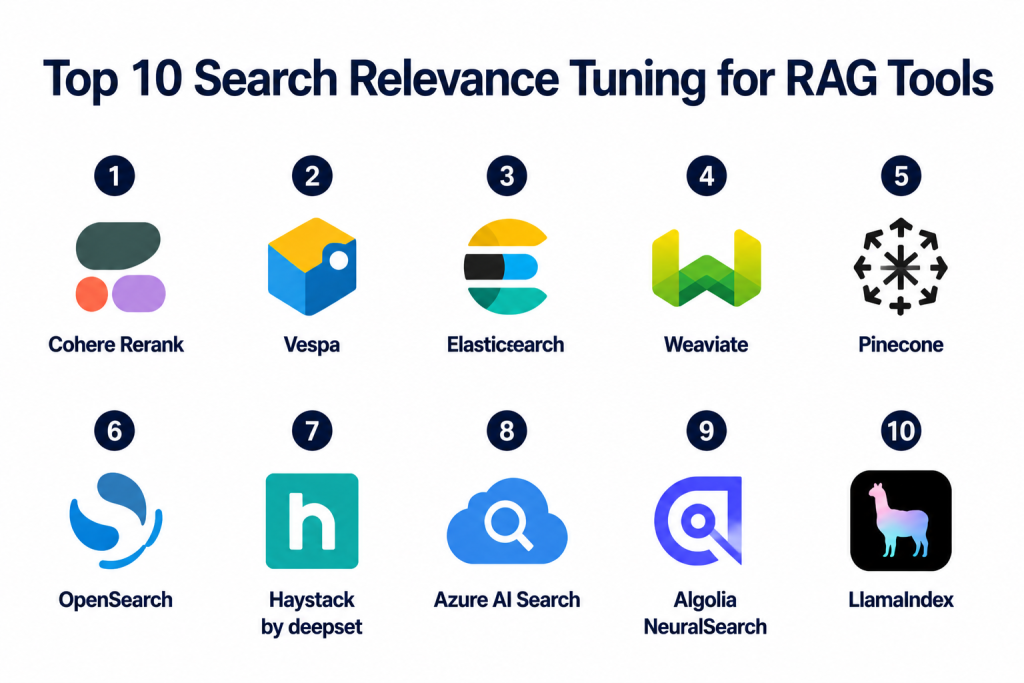

Top 10 Search Relevance Tuning Tools for RAG

1- Cohere Rerank

2- Vespa

3- Elasticsearch

4- Weaviate

5- Pinecone

6- OpenSearch

7- Haystack by deepset

8- Azure AI Search

9- Algolia NeuralSearch

10- LlamaIndex

1. Cohere Rerank

One-line Verdict

Best for high-quality semantic reranking in enterprise RAG pipelines.

Short Description

Cohere Rerank is one of the most widely adopted relevance optimization solutions for AI retrieval systems. It specializes in reranking retrieved search results using transformer-based semantic understanding to improve answer grounding and contextual relevance. Many enterprise RAG stacks integrate Cohere Rerank after vector retrieval to enhance final document selection before generation.

The platform is known for strong multilingual performance, fast inference, and production-grade ranking APIs suitable for AI assistants, enterprise search systems, and customer-facing AI applications.

Standout Capabilities

- Semantic reranking APIs

- Transformer-based relevance optimization

- Multilingual retrieval tuning

- Fast ranking inference

- Query-document relevance scoring

- Enterprise API deployment

- RAG-focused optimization

- Hybrid search enhancement

AI-Specific Depth

Cohere Rerank significantly improves retrieval quality in RAG systems by reordering retrieved passages based on semantic relevance. It works especially well in multi-stage retrieval pipelines where initial vector retrieval may contain noisy results.
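The reranking stage described above can be sketched in a few lines. This is a minimal illustration only: `token_overlap_score` is a hypothetical toy scorer standing in for a real reranking model, and `rerank` is an illustrative helper, not Cohere's API. In a real pipeline the scoring function would call whichever reranker you have chosen.

```python
import re
from typing import Callable, List, Tuple


def token_overlap_score(query: str, passage: str) -> float:
    """Toy relevance score: fraction of query tokens that appear in the passage.

    Hypothetical stand-in for a real reranking model.
    """
    query_tokens = set(re.findall(r"\w+", query.lower()))
    passage_tokens = set(re.findall(r"\w+", passage.lower()))
    if not query_tokens:
        return 0.0
    return len(query_tokens & passage_tokens) / len(query_tokens)


def rerank(
    query: str,
    candidates: List[str],
    score_fn: Callable[[str, str], float] = token_overlap_score,
    top_n: int = 3,
) -> List[Tuple[str, float]]:
    """Second-stage rerank: score every first-stage candidate, keep the best top_n."""
    scored = [(passage, score_fn(query, passage)) for passage in candidates]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_n]


# Pretend these passages came back from a noisy first-stage vector search.
candidates = [
    "Our office is closed on public holidays.",
    "To reset your password, open account settings and choose reset.",
    "Password rules require at least twelve characters.",
]
top = rerank("how do I reset my password", candidates, top_n=2)
for passage, score in top:
    print(f"{score:.2f}  {passage}")
```

Keeping the scorer behind a plain function argument makes it easy to swap the toy scorer for a hosted reranker and compare the two on the same candidate set.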

Pros

- Strong reranking quality

- Easy API integration

- Excellent multilingual support

Cons

- Additional inference cost

- Requires upstream retrieval pipeline

- Limited full-search stack functionality

Security & Compliance

Enterprise-grade deployment options available. Specific compliance varies by deployment model.

Deployment & Platforms

- Cloud API

- Enterprise deployment options

Integrations & Ecosystem

Cohere integrates with multiple vector databases, orchestration frameworks, and enterprise AI stacks.

- LangChain

- LlamaIndex

- Pinecone

- Weaviate

- Elasticsearch

- Azure AI ecosystems

Pricing Model

Usage-based API pricing.

Best-Fit Scenarios

- Enterprise RAG optimization

- AI copilots

- Semantic search reranking

2. Vespa

One-line Verdict

Best for large-scale hybrid search and advanced ranking customization.

Short Description

Vespa is a highly scalable search and recommendation engine designed for real-time large-scale AI retrieval workloads. It provides advanced ranking pipelines, customizable relevance tuning, vector search, keyword search, and low-latency retrieval capabilities for enterprise-grade AI systems.

Its flexibility makes it suitable for organizations requiring deep control over ranking signals, personalization, and hybrid retrieval optimization.

Standout Capabilities

- Hybrid retrieval

- Custom ranking pipelines

- Vector and lexical search

- Low-latency serving

- Real-time indexing

- Personalization support

- Distributed architecture

- Advanced ranking expressions

AI-Specific Depth

Vespa supports complex ranking logic that combines semantic similarity, metadata signals, user behavior, and contextual ranking for advanced RAG optimization.
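To make the idea of combining signals concrete, here is a minimal Python sketch of a weighted linear ranking function. It is not Vespa's ranking expression syntax. The `Candidate` fields, the `WEIGHTS` values, and the assumption that every signal is already normalized to the range 0 to 1 are all illustrative choices.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Candidate:
    doc_id: str
    semantic_similarity: float  # e.g. cosine similarity, assumed in [0, 1]
    freshness: float            # metadata signal, assumed in [0, 1]
    click_rate: float           # user behavior signal, assumed in [0, 1]


# Hypothetical weights; in practice these are tuned against an evaluation set.
WEIGHTS: Dict[str, float] = {
    "semantic_similarity": 0.6,
    "freshness": 0.2,
    "click_rate": 0.2,
}


def combined_score(c: Candidate, weights: Dict[str, float] = WEIGHTS) -> float:
    """Weighted sum of the three signals."""
    return (
        weights["semantic_similarity"] * c.semantic_similarity
        + weights["freshness"] * c.freshness
        + weights["click_rate"] * c.click_rate
    )


def rank(candidates: List[Candidate], weights: Dict[str, float] = WEIGHTS) -> List[Candidate]:
    """Order candidates from highest to lowest combined score."""
    return sorted(candidates, key=lambda c: combined_score(c, weights), reverse=True)


candidates = [
    Candidate("a", semantic_similarity=0.90, freshness=0.10, click_rate=0.10),
    Candidate("b", semantic_similarity=0.80, freshness=0.90, click_rate=0.50),
    Candidate("c", semantic_similarity=0.40, freshness=1.00, click_rate=0.90),
]
ranked_ids = [c.doc_id for c in rank(candidates)]
print(ranked_ids)
```

Note that document "a" has the highest semantic similarity yet ranks last here, which is the kind of trade-off that multi-signal ranking introduces and that needs to be validated against real relevance judgments.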

Pros

- Extremely scalable

- Deep ranking customization

- Strong hybrid retrieval support

Cons

- Complex deployment

- Steeper learning curve

- Requires infrastructure expertise

Security & Compliance

Enterprise deployment security controls available.

Deployment & Platforms

- Self-hosted

- Kubernetes

- Cloud environments

Integrations & Ecosystem

- TensorFlow

- PyTorch

- OpenSearch ecosystems

- AI orchestration tools

Pricing Model

Open-source with infrastructure costs.

Best-Fit Scenarios

- Enterprise AI search

- Large-scale retrieval systems

- Advanced ranking experimentation

3. Elasticsearch

One-line Verdict

Best for enterprises combining traditional search with semantic AI retrieval.

Short Description

Elasticsearch remains one of the most established enterprise search platforms and has evolved significantly for RAG workloads through vector search, semantic ranking, and hybrid retrieval support. Organizations use Elasticsearch to combine lexical precision with AI-driven semantic retrieval for high-quality enterprise search systems.

Its mature ecosystem and scalability make it highly attractive for production AI deployments.

Standout Capabilities

- Hybrid search

- Vector search support

- BM25 optimization

- Semantic retrieval

- Query boosting

- Metadata filtering

- Distributed indexing

- Analytics and observability

AI-Specific Depth

Elasticsearch supports retrieval tuning using hybrid ranking strategies that combine keyword relevance, semantic embeddings, and metadata scoring.
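One common way to merge a keyword ranking with a vector ranking is reciprocal rank fusion (RRF). The sketch below is a generic, engine-independent illustration rather than an Elasticsearch API call. The function name, the sample document IDs, and the constant `k=60` (a conventional default) are assumptions for the example.

```python
from collections import defaultdict
from typing import Dict, List


def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> List[str]:
    """Fuse several ranked lists of document IDs into one.

    Each document earns 1 / (k + rank) from every list it appears in,
    where rank starts at 1. Ties are broken by document ID so the
    output is deterministic.
    """
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


keyword_ranking = ["doc_a", "doc_b", "doc_c"]  # e.g. from BM25
vector_ranking = ["doc_b", "doc_d", "doc_a"]   # e.g. from embedding similarity
fused = reciprocal_rank_fusion([keyword_ranking, vector_ranking])
print(fused)
```

RRF uses only rank positions, so it avoids having to normalize BM25 scores and similarity scores onto a common scale before combining them.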

Pros

- Mature ecosystem

- Strong scalability

- Excellent analytics tooling

Cons

- Complex tuning process

- Infrastructure-heavy

- Advanced AI features may require expertise

Security & Compliance

Enterprise security features available.

Deployment & Platforms

- Cloud

- Self-hosted

- Kubernetes

Integrations & Ecosystem

- Kibana

- LangChain

- Vector embedding frameworks

- Observability ecosystems

Pricing Model

Subscription and infrastructure-based pricing.

Best-Fit Scenarios

- Enterprise document search

- Internal AI assistants

- Hybrid semantic search

4. Weaviate

One-line Verdict

Best for semantic-first RAG pipelines with flexible AI integrations.

Short Description

Weaviate is an AI-native vector database focused on semantic search, retrieval optimization, and contextual AI applications. Its modular architecture allows teams to build advanced retrieval systems with hybrid search, reranking, metadata filtering, and AI-powered semantic understanding.

The platform is popular among AI engineering teams building scalable RAG applications.

Standout Capabilities

- AI-native vector search

- Hybrid retrieval

- Semantic ranking

- Metadata filtering

- Modular AI integrations

- Generative search

- Multi-modal search

- Scalable clustering

AI-Specific Depth

Weaviate provides semantic-aware retrieval optimization with flexible reranking and embedding model integrations.

Pros

- AI-focused architecture

- Strong semantic retrieval

- Flexible integrations

Cons

- Smaller enterprise ecosystem

- Requires vector expertise

- Advanced tuning may require engineering effort

Security & Compliance

Enterprise security controls supported.

Deployment & Platforms

- Cloud

- Self-hosted

- Kubernetes

Integrations & Ecosystem

- OpenAI

- Cohere

- Hugging Face

- LangChain

- LlamaIndex

Pricing Model

Cloud subscription and infrastructure-based pricing.

Best-Fit Scenarios

- Semantic search systems

- AI assistants

- Multi-modal retrieval

5. Pinecone

One-line Verdict

Best for scalable managed vector retrieval optimization.

Short Description

Pinecone is a fully managed vector database platform designed for large-scale AI retrieval systems. It focuses on low-latency vector search, scalable indexing, and operational simplicity for production RAG deployments.

The platform is widely used in AI copilots, enterprise search applications, and semantic retrieval pipelines.

Standout Capabilities

- Managed vector search

- Scalable indexing

- Low-latency retrieval

- Metadata filtering

- Hybrid search support

- Multi-region scaling

- Serverless options

- AI retrieval optimization

AI-Specific Depth

Pinecone supports optimized semantic retrieval pipelines with scalable vector infrastructure suitable for enterprise RAG applications.

Pros

- Easy operational management

- Strong scalability

- Excellent performance

Cons

- Infrastructure cost at scale

- Limited custom ranking logic

- Primarily vector-focused

Security & Compliance

Enterprise-grade deployment options available.

Deployment & Platforms

- Managed cloud

- Serverless infrastructure

Integrations & Ecosystem

- LangChain

- LlamaIndex

- OpenAI

- Cohere

- Haystack

Pricing Model

Usage-based pricing.

Best-Fit Scenarios

- Production RAG systems

- AI copilots

- Semantic retrieval infrastructure

6. OpenSearch

One-line Verdict

Best open-source alternative for hybrid RAG relevance optimization.

Short Description

OpenSearch provides open-source search and analytics capabilities with support for vector retrieval, hybrid search, and AI-enhanced ranking. It is widely adopted by enterprises looking for cost-effective search relevance tuning infrastructure.

The platform supports extensive customization and scalability for enterprise AI search environments.

Standout Capabilities

- Open-source search engine

- Hybrid retrieval

- Vector search

- Query ranking

- Metadata filtering

- Distributed search

- AI plugins

- Search analytics

AI-Specific Depth

OpenSearch enables semantic retrieval optimization through hybrid ranking strategies and customizable AI pipelines.

Pros

- Open-source flexibility

- Strong scalability

- Enterprise customization

Cons

- Requires infrastructure management

- Advanced tuning complexity

- UI ecosystem less mature

Security & Compliance

Enterprise security controls supported.

Deployment & Platforms

- Self-hosted

- Cloud

- Kubernetes

Integrations & Ecosystem

- AWS ecosystems

- LangChain

- Observability tools

- AI frameworks

Pricing Model

Open-source with optional managed services.

Best-Fit Scenarios

- Cost-conscious enterprises

- Hybrid search systems

- AI retrieval infrastructure

7. Haystack by deepset

One-line Verdict

Best for customizable RAG orchestration and retrieval experimentation.

Short Description

Haystack is an open-source framework designed for building retrieval pipelines, question-answering systems, and AI search applications. It provides modular retrieval orchestration with support for ranking optimization, query pipelines, and advanced search experimentation.

AI engineering teams use Haystack extensively for custom RAG workflows.

Standout Capabilities

- Modular retrieval pipelines

- Hybrid search orchestration

- Reranking support

- Evaluation tools

- Query routing

- Multi-retriever workflows

- Open-source flexibility

- AI search experimentation

AI-Specific Depth

Haystack supports advanced retrieval evaluation and multi-stage search optimization for enterprise RAG architectures.

Pros

- Highly flexible

- Strong developer ecosystem

- Excellent experimentation support

Cons

- Requires engineering expertise

- Infrastructure management needed

- UI tooling limited

Security & Compliance

Varies based on deployment model.

Deployment & Platforms

- Self-hosted

- Cloud infrastructure

- Kubernetes

Integrations & Ecosystem

- Elasticsearch

- Weaviate

- Pinecone

- OpenAI

- Hugging Face

Pricing Model

Open-source with enterprise offerings.

Best-Fit Scenarios

- Custom AI retrieval systems

- Research-heavy RAG projects

- Advanced pipeline orchestration

8. Azure AI Search

One-line Verdict

Best for Microsoft-centric enterprise AI retrieval systems.

Short Description

Azure AI Search combines enterprise-grade search infrastructure with AI enrichment, semantic ranking, vector search, and hybrid retrieval capabilities. It is heavily adopted by organizations building RAG systems within Microsoft ecosystems.

The platform supports scalable enterprise search with strong governance and cloud integration.

Standout Capabilities

- Semantic ranking

- Hybrid search

- AI enrichment pipelines

- Vector search

- Enterprise governance

- Metadata filtering

- Scalable cloud deployment

- Azure ecosystem integration

AI-Specific Depth

Azure AI Search supports enterprise-grade retrieval tuning with semantic ranking and AI enrichment pipelines for grounded AI generation.

Pros

- Strong Microsoft integration

- Enterprise governance

- Managed cloud scalability

Cons

- Azure ecosystem dependency

- Pricing complexity

- Advanced customization limitations

Security & Compliance

Enterprise compliance and governance controls supported.

Deployment & Platforms

- Microsoft Azure cloud

Integrations & Ecosystem

- Azure OpenAI

- Microsoft Fabric

- Power Platform

- LangChain

Pricing Model

Consumption-based cloud pricing.

Best-Fit Scenarios

- Microsoft enterprise AI

- Internal knowledge assistants

- Enterprise search modernization

9. Algolia NeuralSearch

One-line Verdict

Best for AI-powered ecommerce and customer-facing search relevance.

Short Description

Algolia NeuralSearch combines semantic retrieval with traditional keyword search to improve customer-facing AI search experiences. It focuses heavily on speed, personalization, and relevance optimization for digital commerce and web applications.

The platform is especially useful for AI-enhanced product discovery and conversational search.

Standout Capabilities

- Neural search

- Hybrid ranking

- Personalization

- Real-time indexing

- Fast retrieval

- Query understanding

- Ecommerce optimization

- AI relevance tuning

AI-Specific Depth

Algolia enhances RAG retrieval quality through semantic ranking combined with real-time user behavior optimization.

Pros

- Excellent speed

- Strong personalization

- Easy deployment

Cons

- Ecommerce-focused orientation

- Limited deep infrastructure control

- Enterprise customization limitations

Security & Compliance

Enterprise security features supported.

Deployment & Platforms

- Managed cloud

Integrations & Ecosystem

- Shopify

- Salesforce

- Commerce platforms

- AI frameworks

Pricing Model

Usage-based SaaS pricing.

Best-Fit Scenarios

- Ecommerce AI search

- Customer-facing search

- Personalized retrieval systems

10. LlamaIndex

One-line Verdict

Best for flexible RAG indexing and retrieval optimization workflows.

Short Description

LlamaIndex provides a flexible framework for indexing, retrieval orchestration, query routing, and retrieval optimization in modern RAG systems. It simplifies integration across multiple data sources and search infrastructures.

The platform is widely used by developers building AI assistants and custom enterprise retrieval pipelines.

Standout Capabilities

- Retrieval orchestration

- Query routing

- Multi-source indexing

- Hybrid retrieval

- Reranking integration

- Metadata filtering

- Workflow flexibility

- AI-native architecture

AI-Specific Depth

LlamaIndex enables advanced RAG tuning workflows through configurable retrieval chains and contextual ranking integrations.

Pros

- Strong developer flexibility

- Broad integrations

- Excellent RAG tooling

Cons

- Requires engineering expertise

- Infrastructure depends on backend stack

- Enterprise governance varies

Security & Compliance

Depends on deployment architecture.

Deployment & Platforms

- Cloud

- Self-hosted

- Hybrid environments

Integrations & Ecosystem

- OpenAI

- Pinecone

- Weaviate

- LangChain

- Elasticsearch

Pricing Model

Open-source with enterprise offerings.

Best-Fit Scenarios

- AI assistants

- Custom RAG architectures

- Multi-source retrieval systems

Comparison Table

| Tool | Best For | Deployment | Core Strength | Hybrid Search | Enterprise Scale |
|---|---|---|---|---|---|
| Cohere Rerank | Semantic reranking | Cloud API | Reranking quality | Yes | High |
| Vespa | Large-scale retrieval | Self-hosted/Cloud | Custom ranking | Yes | Very High |
| Elasticsearch | Enterprise search | Cloud/Self-hosted | Mature ecosystem | Yes | Very High |
| Weaviate | AI-native retrieval | Cloud/Self-hosted | Semantic search | Yes | High |
| Pinecone | Managed vector search | Cloud | Scalability | Partial | High |
| OpenSearch | Open-source search | Cloud/Self-hosted | Flexibility | Yes | High |
| Haystack | Retrieval orchestration | Self-hosted | Pipeline customization | Yes | Medium |
| Azure AI Search | Microsoft AI search | Cloud | Enterprise governance | Yes | Very High |
| Algolia NeuralSearch | Ecommerce search | Cloud | Speed & personalization | Yes | High |
| LlamaIndex | RAG orchestration | Cloud/Self-hosted | Workflow flexibility | Yes | Medium |

Scoring & Evaluation Table

| Tool | Core Features | Ease of Use | Integrations | Security | Performance | Support | Value | Weighted Total |
|---|---|---|---|---|---|---|---|---|
| Cohere Rerank | 9.2 | 8.8 | 8.9 | 8.7 | 9.1 | 8.5 | 8.6 | 8.9 |
| Vespa | 9.5 | 7.2 | 8.7 | 8.9 | 9.6 | 8.3 | 8.8 | 8.9 |
| Elasticsearch | 9.3 | 7.9 | 9.5 | 9.2 | 9.1 | 9.0 | 8.5 | 9.0 |
| Weaviate | 8.9 | 8.4 | 8.8 | 8.5 | 8.8 | 8.3 | 8.7 | 8.7 |
| Pinecone | 8.8 | 9.0 | 8.7 | 8.5 | 9.3 | 8.6 | 8.2 | 8.8 |
| OpenSearch | 8.7 | 7.8 | 8.6 | 8.7 | 8.8 | 8.2 | 9.0 | 8.6 |
| Haystack | 8.8 | 7.9 | 8.9 | 8.2 | 8.5 | 8.1 | 8.8 | 8.5 |
| Azure AI Search | 9.0 | 8.6 | 9.1 | 9.3 | 8.9 | 8.8 | 8.1 | 8.8 |
| Algolia NeuralSearch | 8.6 | 9.1 | 8.4 | 8.2 | 9.2 | 8.5 | 8.0 | 8.5 |
| LlamaIndex | 8.9 | 8.3 | 9.4 | 8.1 | 8.6 | 8.4 | 8.9 | 8.7 |

Top 3 Recommendations

Best for Enterprise

- Elasticsearch

- Azure AI Search

- Vespa

Best for SMBs

- Pinecone

- Weaviate

- Algolia NeuralSearch

Best for Developers

- Haystack

- LlamaIndex

- OpenSearch

Which Search Relevance Tuning Tool Is Right for You

For Solo Developers

LlamaIndex and Haystack provide flexibility for experimentation and custom RAG architecture development without requiring massive enterprise infrastructure.

For SMBs

Pinecone and Weaviate offer easier operational management with scalable semantic retrieval suitable for growing AI applications.

For Mid-Market Organizations

Elasticsearch and OpenSearch provide strong hybrid search capabilities with scalable infrastructure and advanced relevance optimization.

For Enterprise AI Programs

Azure AI Search, Vespa, and Elasticsearch are ideal for large-scale deployments requiring governance, scalability, observability, and advanced ranking control.

Budget vs Premium

Open-source platforms like OpenSearch and Haystack reduce licensing costs but require more engineering investment. Managed platforms like Pinecone and Azure AI Search simplify operations but increase recurring cloud expenses.

Feature Depth vs Ease of Use

Vespa offers deep ranking customization but requires expertise, while Pinecone emphasizes operational simplicity and managed scalability.

Integrations & Scalability

Organizations with complex AI ecosystems should prioritize platforms with strong orchestration and vector database integrations.

Security & Compliance Needs

Highly regulated industries should focus on enterprise governance, auditability, and deployment flexibility.

Implementation Playbook

First 30 Days

- Define retrieval quality KPIs

- Benchmark existing RAG performance

- Identify hallucination sources

- Implement hybrid retrieval testing

- Establish evaluation datasets

Days 30–60

- Add reranking pipelines

- Optimize chunking strategies

- Tune metadata filters

- Deploy retrieval observability

- Improve semantic ranking workflows
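As one illustration of the chunking step in the list above, the sketch below splits text into fixed-size word windows that overlap, so a sentence cut at a chunk boundary still appears whole in a neighboring chunk. The function name and the default sizes are assumptions for the example. Production systems often chunk by tokens, sentences, or document structure instead.

```python
from typing import List


def chunk_words(text: str, chunk_size: int = 200, overlap: int = 40) -> List[str]:
    """Split text into chunks of up to chunk_size words.

    Consecutive chunks share `overlap` words.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")

    words = text.split()
    step = chunk_size - overlap
    chunks: List[str] = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        # Stop once this window has reached the end of the text.
        if start + chunk_size >= len(words):
            break
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
chunks = chunk_words(sample, chunk_size=4, overlap=1)
print(chunks)
```

Chunk size and overlap are tuning parameters in their own right, so it is worth re-running retrieval evaluation whenever they change.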

Days 60–90

- Scale production deployment

- Add personalization layers

- Automate retrieval evaluation

- Optimize latency and cost

- Continuously retrain ranking systems

Common Mistakes to Avoid

- Relying only on vector similarity

- Ignoring metadata filtering

- Skipping reranking stages

- Using poor chunking strategies

- Failing to benchmark retrieval quality

- Overlooking query rewriting

- Ignoring latency optimization

- Not monitoring hallucinations

- Underestimating governance requirements

- Poor indexing architecture

- Weak evaluation pipelines

- Inconsistent embedding strategies

Frequently Asked Questions

1. What is search relevance tuning in RAG systems?

Search relevance tuning improves the quality of retrieved documents before generation. It helps AI systems return more accurate, contextual, and grounded responses.

2. Why is hybrid search important for RAG?

Hybrid search combines semantic vector retrieval with keyword search. This improves precision and reduces irrelevant retrieval results.

3. What does reranking do in a RAG pipeline?

Reranking reorders retrieved results using deeper semantic understanding so the most relevant context is passed to the language model.

4. Which tool is best for enterprise-scale RAG?

Elasticsearch, Vespa, and Azure AI Search are strong enterprise options because of scalability, governance, and advanced ranking capabilities.

5. Is vector search enough for modern AI retrieval?

No. Pure vector search often misses exact keyword matches, such as product codes, names, and identifiers, and it does not enforce metadata constraints on its own. Most production systems now use hybrid retrieval.

6. What is the role of metadata filtering in relevance tuning?

Metadata filtering improves retrieval precision by narrowing search results using document attributes, permissions, timestamps, or categories.
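A minimal sketch of metadata pre-filtering follows, assuming each document carries a category, a last-updated date, and a set of groups allowed to read it. The `Document` fields, the `filter_documents` helper, and the sample data are hypothetical. In a real system this filter is usually pushed down into the search engine or vector database query.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional, Set


@dataclass
class Document:
    doc_id: str
    category: str
    updated: date
    allowed_groups: Set[str]


def filter_documents(
    docs: List[Document],
    user_groups: Set[str],
    category: Optional[str] = None,
    updated_after: Optional[date] = None,
) -> List[Document]:
    """Keep only documents the user may see and that match the optional filters."""
    kept = []
    for doc in docs:
        if not (doc.allowed_groups & user_groups):
            continue  # permission check: no shared group, so skip
        if category is not None and doc.category != category:
            continue
        if updated_after is not None and doc.updated < updated_after:
            continue
        kept.append(doc)
    return kept


docs = [
    Document("policy-1", "hr", date(2024, 3, 1), {"staff"}),
    Document("policy-2", "hr", date(2021, 6, 15), {"staff"}),
    Document("ledger-1", "finance", date(2024, 5, 20), {"finance"}),
]
visible = filter_documents(
    docs, user_groups={"staff"}, category="hr", updated_after=date(2023, 1, 1)
)
visible_ids = [d.doc_id for d in visible]
print(visible_ids)
```

Applying the permission check before ranking means restricted documents never reach the language model's context.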

7. Which platform is easiest for developers to start with?

LlamaIndex and Pinecone are often easier for developers due to simpler integrations and flexible APIs.

8. How do enterprises measure retrieval quality?

Teams use metrics like precision, recall, groundedness, hallucination rates, and answer relevance benchmarking.
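The retrieval-side metrics can be computed with a few lines of Python once you have a labeled set of relevant documents per query. The sketch below uses binary relevance labels. The function names and sample IDs are illustrative, and groundedness and hallucination rate are left out because they require judging the generated answer rather than the ranking.

```python
import math
from typing import List, Set


def precision_at_k(retrieved: List[str], relevant: Set[str], k: int) -> float:
    """Share of the top-k results that are relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    return sum(1 for d in retrieved[:k] if d in relevant) / k


def recall_at_k(retrieved: List[str], relevant: Set[str], k: int) -> float:
    """Share of all relevant documents that appear in the top-k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def reciprocal_rank(retrieved: List[str], relevant: Set[str]) -> float:
    """1 / rank of the first relevant result, or 0.0 if none is retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0


def ndcg_at_k(retrieved: List[str], relevant: Set[str], k: int) -> float:
    """Normalized discounted cumulative gain with binary relevance."""
    dcg = sum(
        1.0 / math.log2(rank + 1)
        for rank, doc_id in enumerate(retrieved[:k], start=1)
        if doc_id in relevant
    )
    ideal_hits = min(len(relevant), k)
    idcg = sum(1.0 / math.log2(rank + 1) for rank in range(1, ideal_hits + 1))
    return dcg / idcg if idcg > 0 else 0.0


retrieved = ["d3", "d1", "d7", "d2"]  # system output for one query
relevant = {"d1", "d2"}               # human-labeled relevant documents
print(precision_at_k(retrieved, relevant, 3))
print(recall_at_k(retrieved, relevant, 3))
print(reciprocal_rank(retrieved, relevant))
print(ndcg_at_k(retrieved, relevant, 3))
```

Averaging these values over a fixed query set gives a baseline that can be re-measured after each tuning change.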

9. What industries benefit most from relevance tuning?

Healthcare, finance, legal, ecommerce, education, and enterprise knowledge management benefit heavily from optimized AI retrieval.

10. What should buyers prioritize first?

Buyers should first evaluate retrieval accuracy, hybrid search quality, scalability, and integration compatibility with existing AI infrastructure.

Conclusion

Search relevance tuning has become one of the foundational pillars of successful Retrieval-Augmented Generation systems. As enterprises move from AI experimentation into production-scale deployment, retrieval quality now directly impacts trust, usability, hallucination reduction, and business outcomes. Modern organizations are increasingly adopting hybrid retrieval, semantic reranking, metadata-aware search, and retrieval observability to improve grounded AI responses and enterprise search accuracy. Platforms like Elasticsearch, Vespa, Pinecone, Cohere Rerank, and Azure AI Search are helping teams optimize AI retrieval pipelines across customer support, internal knowledge systems, legal research, and AI copilots. The right solution ultimately depends on your scalability needs, engineering maturity, governance requirements, and operational priorities. Start by shortlisting tools aligned with your RAG architecture, run controlled retrieval evaluations, benchmark relevance quality carefully, and scale gradually with strong monitoring and continuous optimization strategies.
