Introduction

Model Monitoring & Drift Detection Tools help organizations track machine learning model behavior in production environments. These platforms detect issues such as concept drift, data drift, prediction anomalies, feature instability, and performance degradation before they negatively impact business operations. As AI systems become deeply embedded in decision-making workflows, continuous monitoring has become essential for maintaining reliability, fairness, compliance, and operational stability.

Modern AI environments generate enormous volumes of inference data, requiring automated systems capable of identifying model degradation in real time. These tools provide observability dashboards, statistical drift analysis, alerting systems, root-cause analysis, and integration with MLOps pipelines. Real-world use cases include fraud detection monitoring, recommendation engine stability, healthcare prediction validation, supply chain forecasting, customer churn prediction, and LLM output reliability tracking.

Organizations evaluating these tools should focus on drift detection accuracy, monitoring scalability, explainability, alerting workflows, observability depth, model governance, integration flexibility, support for streaming and batch inference, cost optimization, and enterprise security controls.

Best for: enterprises deploying ML models in production, MLOps teams, AI platform engineers, regulated industries, and organizations requiring continuous AI observability

Not ideal for: teams running isolated experiments without production deployment or low-scale AI workloads with manual oversight

What’s Changed in Model Monitoring & Drift Detection Tools

- Real-time monitoring for streaming inference pipelines

- LLM observability and hallucination monitoring support

- Drift detection expanded beyond tabular ML into multimodal AI

- Built-in root-cause analysis for model failures

- Token, latency, and inference cost monitoring

- Automated retraining triggers and remediation workflows

- Guardrails for unsafe or policy-violating outputs

- Better observability dashboards with feature-level visibility

- Enterprise governance and auditability improvements

- Support for hybrid and multi-cloud monitoring architectures

- Integration with feature stores and experiment tracking systems

- AI-specific monitoring for embeddings and vector pipelines

Quick Buyer Checklist

- Real-time and batch monitoring support

- Data drift and concept drift detection

- Feature-level observability

- LLM and generative AI monitoring support

- Alerting and anomaly detection workflows

- Root-cause analysis capabilities

- Integration with MLOps and CI/CD pipelines

- Governance, RBAC, and audit controls

- Scalability for large inference volumes

- Cost and latency visibility

- Explainability and debugging support

- Hybrid cloud deployment flexibility

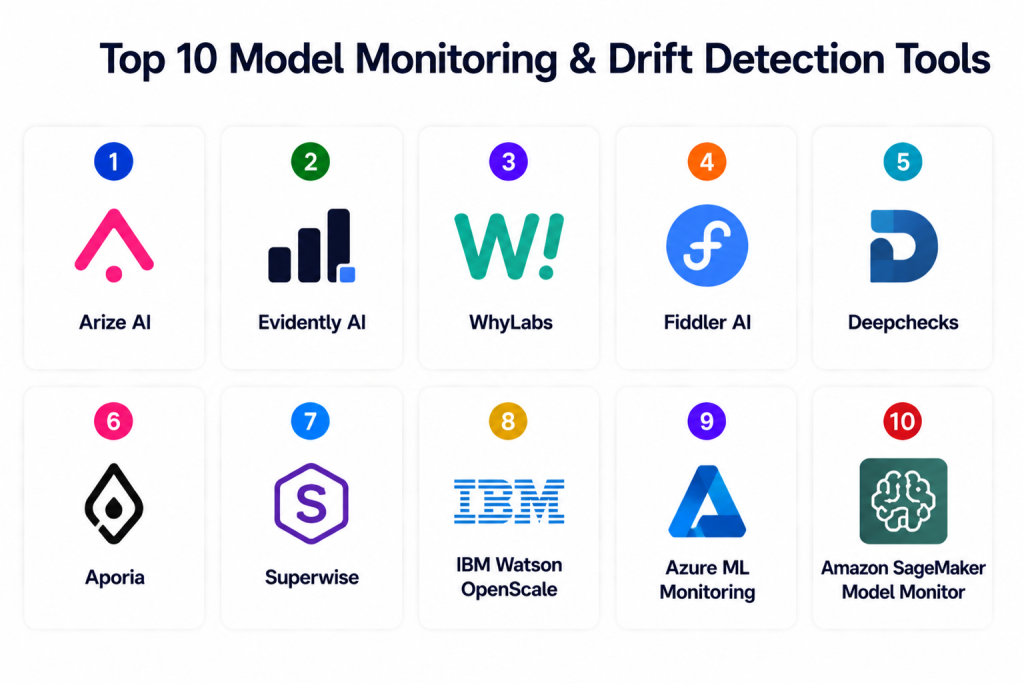

Top 10 Model Monitoring & Drift Detection Tools

1 — Arize AI

One-line verdict: Best overall platform for enterprise-grade ML observability and drift detection workflows.

Short description: Arize AI provides comprehensive monitoring for ML and LLM systems, including drift analysis, root-cause investigation, embeddings observability, and production performance monitoring. The platform is widely used for enterprise AI reliability and troubleshooting.

Standout Capabilities

- Real-time data and concept drift monitoring

- Embedding and vector observability

- Root-cause analysis workflows

- LLM monitoring and hallucination analysis

- Feature-level anomaly detection

- Automated alerts and dashboards

- Explainability and model debugging

AI-Specific Depth

- Model support: Hosted, BYO, multi-model

- RAG / knowledge integration: Vector and embedding observability

- Evaluation: Regression monitoring and performance analysis

- Guardrails: Alerting and anomaly policies

- Observability: Full-stack AI observability dashboards

Pros

- Excellent enterprise observability

- Strong LLM monitoring support

- Powerful root-cause analysis

Cons

- Premium enterprise pricing

- Learning curve for advanced workflows

- Requires mature MLOps practices

Security & Compliance

- RBAC, SSO, encryption, audit controls

- Certifications: Varies / Not publicly stated

Deployment & Platforms

- Cloud / Hybrid

Integrations & Ecosystem

- ML pipelines

- Feature stores

- Data warehouses

- Vector databases

- CI/CD workflows

Pricing Model

Enterprise subscription

Best-Fit Scenarios

- Enterprise ML observability

- LLM monitoring

- Large-scale AI reliability tracking

2 — Evidently AI

One-line verdict: Best open-source solution for drift detection, data quality monitoring, and ML observability.

Short description: Evidently AI is an open-source monitoring framework focused on detecting data drift, feature quality issues, and model degradation using dashboards and statistical analysis.

Standout Capabilities

- Open-source drift detection

- Interactive dashboards

- Statistical feature monitoring

- Batch and streaming support

- Regression testing

- Data quality analysis

- Flexible Python integration

AI-Specific Depth

- Model support: Framework agnostic

- RAG / knowledge integration: N/A

- Evaluation: Statistical testing and drift metrics

- Guardrails: Threshold-based alerts

- Observability: Dashboards and reports

Pros

- Open-source flexibility

- Strong statistical monitoring

- Easy integration into pipelines

Cons

- Requires engineering setup

- Limited enterprise workflows

- Advanced governance requires customization

Security & Compliance

- Depends on deployment

- Certifications: N/A

Deployment & Platforms

- Cloud / On-prem / Hybrid

Integrations & Ecosystem

- Python ML workflows

- Monitoring stacks

- Dashboards

- Data pipelines

Pricing Model

Open-source / enterprise support

Best-Fit Scenarios

- Open-source monitoring stacks

- Drift detection experiments

- Cost-conscious teams

3 — WhyLabs

One-line verdict: Strong option for scalable AI observability with automated anomaly detection.

Short description: WhyLabs focuses on monitoring AI and ML systems using statistical observability, feature analysis, anomaly detection, and production reliability tracking.

Standout Capabilities

- Real-time anomaly detection

- Data profiling and drift monitoring

- Observability dashboards

- LLM monitoring support

- Explainability integration

- Automated alerts

- Scalable telemetry collection

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Partial support

- Evaluation: Drift and anomaly analysis

- Guardrails: Safety policies and alerts

- Observability: Advanced dashboards

Pros

- Strong observability features

- Scalable architecture

- Automated monitoring workflows

Cons

- Enterprise-focused pricing

- Requires onboarding

- Complex initial configuration

Security & Compliance

- RBAC, encryption, SSO

- Certifications: Varies

Deployment & Platforms

- Cloud / Hybrid

Integrations & Ecosystem

- ML workflows

- Data warehouses

- Feature stores

- Monitoring tools

Pricing Model

Enterprise SaaS

Best-Fit Scenarios

- Large-scale AI observability

- Real-time anomaly detection

- Multi-model monitoring

4 — Fiddler AI

One-line verdict: Best for explainability-driven model monitoring and governance.

Short description: Fiddler AI combines monitoring, explainability, fairness analysis, and governance tools to help enterprises manage production AI responsibly.

Standout Capabilities

- Drift and anomaly detection

- Explainable AI workflows

- Fairness monitoring

- Root-cause analysis

- Real-time dashboards

- LLM monitoring support

- Regulatory reporting

AI-Specific Depth

- Model support: Multi-framework

- RAG / knowledge integration: Connectors available

- Evaluation: Performance and fairness evaluation

- Guardrails: Governance and policy controls

- Observability: Enterprise dashboards

Pros

- Excellent explainability features

- Strong governance support

- Enterprise-ready workflows

Cons

- Premium pricing

- Setup complexity

- Requires mature governance processes

Security & Compliance

- RBAC, SSO, encryption, audit trails

- Certifications: Varies

Deployment & Platforms

- Cloud / Hybrid

Integrations & Ecosystem

- ML platforms

- Governance systems

- Data pipelines

- Monitoring stacks

Pricing Model

Enterprise subscription

Best-Fit Scenarios

- Regulated industries

- Explainability-focused AI

- Enterprise governance workflows

5 — Deepchecks

One-line verdict: Best open-source-first platform for ML validation and drift testing.

Short description: Deepchecks provides monitoring, testing, validation, and drift detection capabilities for ML pipelines and production systems.

Standout Capabilities

- Drift and validation testing

- Data integrity monitoring

- CI/CD integration

- Batch and real-time support

- Automated checks

- Model quality analysis

- Open-source flexibility

AI-Specific Depth

- Model support: Framework agnostic

- RAG / knowledge integration: N/A

- Evaluation: Regression and validation tests

- Guardrails: Automated quality checks

- Observability: Reports and dashboards

Pros

- Strong open-source ecosystem

- CI/CD friendly

- Good validation workflows

Cons

- Enterprise governance limited

- UI less polished than enterprise tools

- Requires engineering setup

Security & Compliance

- Depends on deployment

- Certifications: N/A

Deployment & Platforms

- Cloud / On-prem

Integrations & Ecosystem

- Python pipelines

- ML workflows

- CI/CD systems

Pricing Model

Open-source / enterprise

Best-Fit Scenarios

- Validation-heavy ML workflows

- Open-source stacks

- CI/CD testing pipelines

6 — Aporia

One-line verdict: Enterprise-grade monitoring platform for mature ML operations teams.

Short description: Aporia delivers real-time drift detection, anomaly monitoring, and observability for production AI systems.

Standout Capabilities

- Real-time monitoring

- Feature drift analysis

- Alerting workflows

- Explainability dashboards

- Data quality monitoring

- Root-cause analysis

- Team collaboration

AI-Specific Depth

- Model support: Multi-framework

- RAG / knowledge integration: Connectors available

- Evaluation: Performance and anomaly monitoring

- Guardrails: Policy controls

- Observability: Full dashboards

Pros

- Mature enterprise workflows

- Real-time alerting

- Strong anomaly detection

Cons

- Premium cost

- Setup effort

- Overkill for smaller teams

Security & Compliance

- SSO, RBAC, encryption

- Certifications: Varies

Deployment & Platforms

- Cloud / Hybrid

Integrations & Ecosystem

- ML pipelines

- Data warehouses

- Monitoring stacks

Pricing Model

Subscription / usage-based

Best-Fit Scenarios

- Enterprise AI operations

- Real-time inference monitoring

- Multi-team observability

7 — Superwise

One-line verdict: Powerful enterprise monitoring suite with automated remediation workflows.

Short description: Superwise focuses on AI observability, drift detection, automated alerts, and operational workflows for production ML systems.

Standout Capabilities

- Automated anomaly alerts

- Real-time drift monitoring

- Operational dashboards

- Root-cause analysis

- Governance workflows

- Multi-model monitoring

- Automated remediation triggers

AI-Specific Depth

- Model support: Multi-framework

- RAG / knowledge integration: Partial support

- Evaluation: Drift and regression analysis

- Guardrails: Automated policy enforcement

- Observability: Enterprise dashboards

Pros

- Enterprise automation

- Strong operational workflows

- Good scalability

Cons

- Learning curve

- Expensive at scale

- Complex onboarding

Security & Compliance

- RBAC, SSO, audit logs

- Certifications: Varies

Deployment & Platforms

- Cloud / Hybrid

Integrations & Ecosystem

- MLOps pipelines

- Data systems

- Alerting platforms

Pricing Model

Enterprise SaaS

Best-Fit Scenarios

- Large AI operations teams

- Automated remediation

- Production monitoring

8 — IBM Watson OpenScale

One-line verdict: Best for enterprise governance and explainability in regulated industries.

Short description: IBM Watson OpenScale provides monitoring, explainability, fairness analysis, and drift detection for enterprise AI systems.

Standout Capabilities

- Drift and bias monitoring

- Explainability analysis

- Governance workflows

- Audit reporting

- Enterprise dashboards

- Regulatory support

- Performance tracking

AI-Specific Depth

- Model support: IBM ecosystem and external models

- RAG / knowledge integration: Enterprise connectors

- Evaluation: Fairness and performance analysis

- Guardrails: Governance and compliance policies

- Observability: Enterprise monitoring dashboards

Pros

- Excellent governance

- Regulatory-friendly

- Strong explainability

Cons

- IBM ecosystem focus

- Enterprise complexity

- Premium pricing

Security & Compliance

- Enterprise controls, encryption, RBAC

- Certifications: Varies

Deployment & Platforms

- Cloud / Hybrid / On-prem

Integrations & Ecosystem

- IBM AI stack

- Data governance tools

- ML workflows

Pricing Model

Enterprise licensing

Best-Fit Scenarios

- Regulated industries

- Governance-heavy AI deployments

- Enterprise monitoring

9 — Azure ML Monitoring

One-line verdict: Best for Azure-native monitoring and production ML observability.

Short description: Azure ML Monitoring provides model tracking, drift analysis, alerting, and operational observability within Azure ML workflows.

Standout Capabilities

- Azure-native monitoring

- Drift and anomaly analysis

- Alerting workflows

- Dashboard visualization

- Integration with Azure ML

- Feature monitoring

- Operational metrics

AI-Specific Depth

- Model support: Azure ecosystem and BYO

- RAG / knowledge integration: Azure data connectors

- Evaluation: Performance and drift metrics

- Guardrails: IAM and governance controls

- Observability: Azure dashboards

Pros

- Deep Azure integration

- Enterprise-ready

- Good scalability

Cons

- Azure lock-in

- Pricing complexity

- Limited portability

Security & Compliance

- Azure security controls, RBAC, encryption

- Certifications: Azure compliance ecosystem

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- Azure ML

- Data lakes

- Pipelines

- Monitoring systems

Pricing Model

Usage-based

Best-Fit Scenarios

- Azure-native enterprises

- Production ML monitoring

- Enterprise governance

10 — Amazon SageMaker Model Monitor

One-line verdict: Best for AWS-native drift detection and automated monitoring workflows.

Short description: SageMaker Model Monitor continuously tracks data quality, model quality, bias drift, and feature attribution drift in AWS ML deployments.

Standout Capabilities

- Automated drift detection

- Bias and feature monitoring

- Real-time alerting

- Integration with SageMaker pipelines

- CloudWatch monitoring

- Scheduled evaluations

- Auto-remediation support

AI-Specific Depth

- Model support: AWS ecosystem and BYO

- RAG / knowledge integration: AWS connectors

- Evaluation: Automated monitoring and validation

- Guardrails: IAM policies and monitoring rules

- Observability: CloudWatch dashboards

Pros

- Fully managed service

- Tight AWS integration

- Automated workflows

Cons

- AWS lock-in

- Cost scaling challenges

- Less flexible outside AWS

Security & Compliance

- IAM, encryption, audit controls

- Certifications: AWS compliance ecosystem

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- SageMaker

- AWS data services

- Monitoring pipelines

Pricing Model

Usage-based

Best-Fit Scenarios

- AWS-native ML teams

- Automated monitoring workflows

- Enterprise production AI

Comparison Table

| Tool | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| Arize AI | Enterprise observability | Cloud / Hybrid | Multi-model | Root-cause analysis | Premium pricing | N/A |
| Evidently AI | Open-source monitoring | Cloud / On-prem / Hybrid | Framework agnostic | Statistical drift | Requires setup | N/A |
| WhyLabs | AI observability | Cloud / Hybrid | Multi-model | Anomaly detection | Enterprise pricing | N/A |
| Fiddler AI | Explainability | Cloud / Hybrid | Multi-framework | Governance | Complex onboarding | N/A |
| Deepchecks | Validation testing | Cloud / On-prem | Framework agnostic | Open-source checks | Limited enterprise workflows | N/A |
| Aporia | Real-time monitoring | Cloud / Hybrid | Multi-framework | Anomaly monitoring | Cost | N/A |
| Superwise | Enterprise automation | Cloud / Hybrid | Multi-framework | Automated remediation | Learning curve | N/A |
| IBM OpenScale | Governance | Cloud / Hybrid / On-prem | IBM + external | Compliance | IBM-centric | N/A |
| Azure ML Monitoring | Azure ecosystems | Cloud | Azure + BYO | Cloud integration | Azure lock-in | N/A |
| SageMaker Model Monitor | AWS ecosystems | Cloud | AWS + BYO | Managed monitoring | AWS lock-in | N/A |

Scoring & Evaluation

Monitoring scores are comparative rather than absolute. Enterprise-focused tools typically score higher in governance, observability, and automation, while open-source platforms prioritize flexibility and developer control. Teams should evaluate tools based on infrastructure compatibility, scalability, governance requirements, and operational maturity. Open-source stacks may reduce costs but require engineering expertise, while managed platforms accelerate deployment and enterprise readiness.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| Arize AI | 9 | 9 | 8 | 9 | 8 | 8 | 9 | 8 | 8.6 |
| Evidently AI | 8 | 8 | 7 | 8 | 8 | 9 | 7 | 8 | 8.0 |
| WhyLabs | 8 | 8 | 8 | 8 | 7 | 8 | 8 | 7 | 7.9 |
| Fiddler AI | 9 | 9 | 9 | 8 | 7 | 7 | 9 | 8 | 8.4 |
| Deepchecks | 8 | 8 | 7 | 8 | 8 | 8 | 7 | 7 | 7.8 |
| Aporia | 8 | 8 | 8 | 8 | 7 | 8 | 8 | 7 | 7.9 |
| Superwise | 9 | 8 | 8 | 8 | 7 | 7 | 9 | 8 | 8.1 |
| IBM OpenScale | 9 | 9 | 9 | 8 | 6 | 7 | 9 | 8 | 8.3 |
| Azure ML Monitoring | 8 | 8 | 8 | 9 | 8 | 8 | 9 | 8 | 8.2 |
| SageMaker Model Monitor | 8 | 8 | 8 | 9 | 8 | 8 | 9 | 8 | 8.2 |
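The weights behind the Weighted Total column are not published here, so teams may want to recompute totals with weights that reflect their own priorities. The sketch below shows one way to do that. The function `weighted_total` and both weightings are hypothetical illustrations, not the method used to produce the table, and the results need not match the published totals. With equal weights, for instance, the Arize AI row averages 8.5 rather than the listed 8.6.

```python
from typing import Dict, List

# Criterion names taken from the scoring table's column headers.
CRITERIA: List[str] = [
    "Core", "Reliability/Eval", "Guardrails", "Integrations",
    "Ease", "Perf/Cost", "Security/Admin", "Support",
]


def weighted_total(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of per-criterion scores, normalized by total weight."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[name] * weight for name, weight in weights.items()) / total_weight


# Row copied from the scoring table above.
arize_scores = dict(zip(CRITERIA, [9, 9, 8, 9, 8, 8, 9, 8]))

# Hypothetical weightings (assumptions, not the article's weights).
equal_weights = {name: 1.0 for name in CRITERIA}
governance_heavy = {**equal_weights, "Guardrails": 2.0, "Security/Admin": 3.0}

print(round(weighted_total(arize_scores, equal_weights), 2))
print(round(weighted_total(arize_scores, governance_heavy), 2))
```

Normalizing by the sum of the weights keeps the result on the same scale as the individual scores, so differently weighted totals stay comparable across tools.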

Top 3 for Enterprise: Arize AI, Fiddler AI, IBM OpenScale

Top 3 for SMB: Evidently AI, Deepchecks, WhyLabs

Top 3 for Developers: Evidently AI, Deepchecks, Arize AI

Which Model Monitoring & Drift Detection Tool Is Right for You

Solo / Freelancer

Evidently AI and Deepchecks are excellent choices for developers wanting lightweight monitoring and open-source flexibility. These tools integrate easily into Python-based workflows and reduce infrastructure costs.

SMB

WhyLabs, Deepchecks, and Aporia provide balanced observability, anomaly detection, and scalability without requiring massive enterprise infrastructure investments.

Mid-Market

Arize AI and Superwise offer stronger dashboards, automated remediation workflows, and enterprise observability while remaining flexible enough for growing AI teams.

Enterprise

Fiddler AI, IBM OpenScale, Arize AI, Azure ML Monitoring, and SageMaker Model Monitor provide governance, explainability, compliance, and operational scalability required for production-grade AI environments.

Regulated Industries

IBM OpenScale and Fiddler AI stand out for governance, explainability, auditability, and compliance workflows critical for finance, healthcare, and public sector deployments.

Budget vs Premium

Open-source tools like Evidently AI and Deepchecks minimize software costs but require engineering investment. Enterprise platforms provide automation, governance, and operational support at premium pricing.

Build vs Buy

Organizations with strong engineering teams can build custom monitoring using open-source libraries. Enterprises needing governance, dashboards, compliance, and scalability often benefit from managed commercial platforms.

Implementation Playbook

30 Days

- Identify critical production models

- Define baseline monitoring metrics

- Configure data and concept drift checks

- Build alerting workflows

- Establish ownership and escalation processes
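The first-month steps above (baseline, drift checks, alerting, ownership) can be prototyped in a few lines before committing to a platform. The following is a minimal sketch under stated assumptions: the names `Baseline`, `build_baseline`, and `check_batch`, the thresholds, and the owner label are all hypothetical, and the mean-shift check is a crude heuristic rather than a formal statistical test.

```python
from dataclasses import dataclass
from statistics import mean, pstdev
from typing import List, Optional, Sequence


@dataclass(frozen=True)
class Baseline:
    """Reference statistics for one numeric feature, captured before rollout."""
    mean_value: float
    std_value: float
    null_rate: float


def build_baseline(values: Sequence[Optional[float]]) -> Baseline:
    present = [v for v in values if v is not None]
    return Baseline(
        mean_value=mean(present),
        std_value=pstdev(present),
        null_rate=1 - len(present) / len(values),
    )


def check_batch(
    feature: str,
    baseline: Baseline,
    batch: Sequence[Optional[float]],
    owner: str,
    max_shift_in_std: float = 3.0,      # assumed threshold
    max_null_increase: float = 0.05,    # assumed threshold
) -> List[str]:
    """Return alert messages, each tagged with the owning team for escalation."""
    if not batch:
        return []
    alerts: List[str] = []
    present = [v for v in batch if v is not None]
    null_rate = 1 - len(present) / len(batch)
    if null_rate - baseline.null_rate > max_null_increase:
        alerts.append(f"[{owner}] {feature}: null rate rose to {null_rate:.2f}")
    if present and baseline.std_value > 0:
        shift = abs(mean(present) - baseline.mean_value) / baseline.std_value
        if shift > max_shift_in_std:
            alerts.append(f"[{owner}] {feature}: mean shifted by {shift:.1f} baseline std")
    return alerts


baseline = build_baseline([10, 12, 11, 9, 10, None, 11, 10, 9, 8])
print(check_batch("order_value", baseline, [10, 11, 9, 10], owner="ml-oncall"))
print(check_batch("order_value", baseline, [30, 31, None, None], owner="ml-oncall"))
```

Attaching an owner to every alert at creation time is one simple way to make the escalation step explicit rather than leaving routing to whoever notices the message.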

60 Days

- Integrate observability dashboards

- Add root-cause analysis workflows

- Configure governance and RBAC

- Implement regression monitoring

- Validate alert thresholds and anomaly policies

90 Days

- Automate remediation and retraining triggers

- Scale monitoring across all production models

- Integrate cost and latency optimization

- Expand monitoring to LLM and vector workflows

- Conduct governance and compliance reviews
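Automated retraining triggers are easier to trust when a single noisy window cannot start a retraining job on its own. The sketch below illustrates one possible policy: an isolated breach asks for human review, and only a sustained run of breaches triggers retraining. The names `DriftWindow`, `Action`, and `decide`, along with every threshold, are hypothetical and would need tuning for a real system.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Action(Enum):
    NONE = "none"
    REVIEW = "request_human_review"
    RETRAIN = "trigger_retraining"


@dataclass(frozen=True)
class DriftWindow:
    """Summary of one monitoring window (for example, one day of traffic)."""
    drift_score: float     # any drift metric, such as PSI
    accuracy_drop: float   # baseline accuracy minus current accuracy


def decide(
    windows: List[DriftWindow],
    drift_limit: float = 0.2,      # assumed threshold
    drop_limit: float = 0.05,      # assumed threshold
    consecutive: int = 3,          # breaches in a row required to retrain
) -> Action:
    recent = windows[-consecutive:]
    breaches = [
        w for w in recent
        if w.drift_score > drift_limit or w.accuracy_drop > drop_limit
    ]
    # Sustained degradation across the whole lookback: retrain.
    if len(recent) == consecutive and len(breaches) == consecutive:
        return Action.RETRAIN
    # Isolated or intermittent breaches: keep a human in the loop.
    if breaches:
        return Action.REVIEW
    return Action.NONE


healthy = DriftWindow(drift_score=0.05, accuracy_drop=0.0)
drifted = DriftWindow(drift_score=0.35, accuracy_drop=0.08)
print(decide([healthy, healthy, drifted]))
print(decide([drifted, drifted, drifted]))
```

Requiring consecutive breaches trades detection speed for fewer false retraining runs, which fits the warning later in this article about over-automation without human review.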

Common Mistakes & How to Avoid Them

- Monitoring only accuracy while ignoring feature drift

- No baseline metrics before deployment

- Missing observability for embeddings and vectors

- Ignoring cost and latency monitoring

- Weak alert escalation workflows

- No retraining strategy after drift detection

- Over-automation without human review

- Missing governance and audit controls

- Lack of explainability for model failures

- No integration with CI/CD and MLOps pipelines

- Monitoring only batch workloads and ignoring streaming inference

- Poor visibility into feature-level anomalies

- Vendor lock-in without portability planning

- Failure to monitor LLM hallucinations and unsafe outputs

FAQs

1. What is model drift?

Model drift is the gradual degradation of prediction quality that occurs when production data patterns, or the relationships between inputs and outcomes, diverge from what the model learned during training.

2. What is the difference between data drift and concept drift?

Data drift refers to changes in input data distributions, while concept drift occurs when relationships between inputs and outputs change.
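To make the distinction concrete, the following minimal sketch uses a toy threshold "model" and synthetic data. The names `model` and `accuracy` and all of the numbers are illustrative assumptions, not taken from any tool in this list. In the data drift case the inputs shift but the labeling rule does not; in the concept drift case the inputs look the same but the rule behind the labels has moved.

```python
from statistics import mean
from typing import List, Sequence


def model(x: float) -> int:
    """Toy model fixed at training time: predicts 1 when x exceeds 5."""
    return 1 if x > 5 else 0


def accuracy(xs: Sequence[float], labels: Sequence[int]) -> float:
    return mean(1.0 if model(x) == y else 0.0 for x, y in zip(xs, labels))


# Reference period: inputs 0.0 .. 9.9, true rule matches the model.
reference_x: List[float] = [i / 10 for i in range(100)]
reference_y: List[int] = [1 if x > 5 else 0 for x in reference_x]

# Data drift: the input distribution shifts, the true rule is unchanged.
data_drift_x = [x + 4 for x in reference_x]
data_drift_y = [1 if x > 5 else 0 for x in data_drift_x]

# Concept drift: same inputs, but the true rule has moved to x > 7.
concept_drift_x = list(reference_x)
concept_drift_y = [1 if x > 7 else 0 for x in concept_drift_x]

for name, xs, ys in [
    ("reference", reference_x, reference_y),
    ("data drift", data_drift_x, data_drift_y),
    ("concept drift", concept_drift_x, concept_drift_y),
]:
    print(f"{name:14s} input mean={mean(xs):5.2f}  accuracy={accuracy(xs, ys):.2f}")
```

In this toy setup the input mean moves by 4 under data drift while accuracy stays at 1.0, whereas under concept drift the input mean is unchanged and accuracy falls to 0.8. That is why input-distribution checks alone can miss concept drift, which usually needs ground-truth labels or a proxy for them.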

3. Why is model monitoring important?

Monitoring helps detect failures, anomalies, and degraded performance before business impact becomes severe.

4. Can these tools monitor LLMs?

Yes. Modern platforms increasingly support LLM observability, hallucination analysis, embeddings monitoring, and vector workflows.

5. Are open-source options available?

Yes. Evidently AI and Deepchecks are popular open-source monitoring solutions.

6. Do these tools support real-time inference monitoring?

Most enterprise platforms support streaming and real-time monitoring workflows.

7. How do monitoring platforms detect drift?

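They compare recent production data against a reference window using statistical tests and distance measures (for example, the Kolmogorov-Smirnov test or the Population Stability Index), alongside anomaly detection, feature-level comparisons, and analysis of prediction behavior.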
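As one illustration of a statistical drift check, the sketch below computes the Population Stability Index (PSI) for a single numeric feature using only the Python standard library. The function name `psi`, the bin count, and the epsilon floor are assumptions made for this example; commercial and open-source tools each have their own implementations and defaults.

```python
import math
from typing import List, Sequence


def psi(reference: Sequence[float], current: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a reference and a current sample."""
    lo, hi = min(reference), max(reference)
    # Equal-width bins over the reference range; fall back to 1.0 if constant.
    width = (hi - lo) / bins or 1.0

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp values outside the reference range into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        eps = 1e-6  # floor so log() stays defined for empty bins
        return [max(c / len(values), eps) for c in counts]

    ref_p = proportions(reference)
    cur_p = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


reference = [i / 100 for i in range(1000)]   # roughly uniform on 0 .. 10
shifted = [x + 3 for x in reference]         # same shape, shifted by 3

print(f"no drift: {psi(reference, reference):.3f}")
print(f"shifted:  {psi(reference, shifted):.3f}")
```

PSI is zero when the two binned distributions match and grows as they diverge. A commonly cited rule of thumb treats values above roughly 0.2 as a meaningful shift, but that cutoff is a convention to validate against your own data rather than a guarantee.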

8. Can monitoring systems trigger retraining automatically?

Some enterprise platforms support automated retraining and remediation workflows.

9. Are these tools cloud-only?

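No. Several tools in this list support hybrid or on-prem deployment, although Azure ML Monitoring and SageMaker Model Monitor are tied to their respective clouds.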

10. What industries benefit most from model monitoring?

Finance, healthcare, e-commerce, cybersecurity, manufacturing, and any organization deploying production AI systems.

11. Do monitoring tools replace MLOps platforms?

No. They complement MLOps platforms by providing post-deployment observability and reliability tracking.

12. What should teams monitor besides accuracy?

Feature distributions, latency, cost, embeddings, hallucinations, fairness, and prediction stability are all important.

Conclusion

Model Monitoring & Drift Detection Tools have become essential for maintaining reliable, scalable, and trustworthy AI systems in production. Open-source solutions like Evidently AI and Deepchecks provide flexibility for developers and smaller teams, while enterprise platforms such as Arize AI, Fiddler AI, and IBM OpenScale deliver governance, observability, explainability, and operational scalability for complex environments. As AI systems evolve toward LLMs, multimodal pipelines, and autonomous workflows, monitoring capabilities must expand beyond traditional metrics into embeddings, hallucinations, latency, and governance controls. The best platform depends on infrastructure maturity, compliance requirements, operational scale, and monitoring complexity. Start with clear monitoring baselines, pilot observability workflows, validate governance and alerting, and then scale across production AI systems for long-term reliability and performance.
