Introduction

Model Latency & Cost Optimization Tools help organizations reduce inference costs, improve response times, optimize token usage, and maximize infrastructure efficiency across AI and LLM workloads. As enterprises scale generative AI systems, inference and operational expenses often become the largest component of AI spending. These platforms help teams optimize throughput, reduce latency bottlenecks, manage GPU utilization, route requests intelligently, cache prompts, and monitor token-level costs without sacrificing output quality.

Modern AI systems must balance three competing priorities: speed, accuracy, and cost. Optimization tools now combine model routing, semantic caching, observability, batching, quantization, autoscaling, inference orchestration, and token analysis into unified optimization workflows. Real-world use cases include reducing chatbot response latency, optimizing agentic AI pipelines, minimizing GPU costs, controlling LLM token usage, improving streaming responsiveness, and scaling enterprise AI workloads efficiently.

When evaluating these platforms, buyers should focus on model routing flexibility, token optimization, caching support, observability, GPU orchestration, autoscaling, inference acceleration, governance, deployment flexibility, throughput efficiency, and integration with AI infrastructure.

Best for: LLMOps teams, AI platform engineers, enterprises deploying production AI systems, cloud infrastructure teams, and organizations managing large-scale inference workloads

Not ideal for: lightweight prototypes, small experimental AI projects, or organizations without production inference pipelines

What’s Changed in Model Latency & Cost Optimization Tools

- Intelligent model routing became standard for balancing quality, latency, and cost

- Prompt caching significantly reduced repeated inference costs and latency

- Semantic caching and proxy models improved response efficiency dramatically

- Quantization and speculative decoding became mainstream optimization techniques

- Continuous batching improved throughput and reduced queue latency

- Token-level observability became critical for AI FinOps

- GPU orchestration platforms optimized idle compute utilization

- Multi-model orchestration improved workload efficiency

- Streaming response architectures reduced perceived latency

- AI-specific FinOps tooling emerged for token and inference visibility

- Cost-aware agent orchestration gained importance for multi-agent systems

- Infrastructure optimization increasingly focused on inference rather than training
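To make the streaming point concrete: perceived latency is usually tracked as time to first token (TTFT), separately from total response time. The sketch below is a minimal illustration, not any vendor's API. `measure_stream` and its injectable `clock` parameter are hypothetical names chosen for this example, and `chunks` stands in for whatever iterator a streaming client returns.

```python
import time
from typing import Callable, Iterable


def measure_stream(chunks: Iterable[str],
                   clock: Callable[[], float] = time.perf_counter) -> dict:
    """Consume a stream of text chunks and record TTFT and total time."""
    start = clock()
    first_token_at = None
    parts = []
    for chunk in chunks:
        if first_token_at is None:
            # The moment the first chunk arrives is what the user perceives.
            first_token_at = clock()
        parts.append(chunk)
    end = clock()
    ttft = first_token_at - start if first_token_at is not None else None
    return {"text": "".join(parts), "ttft_s": ttft, "total_s": end - start}
```

With streaming, `ttft_s` can stay low even when `total_s` is long, which is why the two are worth reporting separately.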

Quick Buyer Checklist

- Token usage analytics and optimization

- Intelligent model routing

- Semantic caching support

- GPU orchestration and autoscaling

- Inference batching capabilities

- Cost and latency observability dashboards

- Quantization and model compression support

- Multi-model orchestration

- Cloud and hybrid deployment support

- Alerting and anomaly detection

- AI-specific FinOps workflows

- CI/CD and LLMOps integration

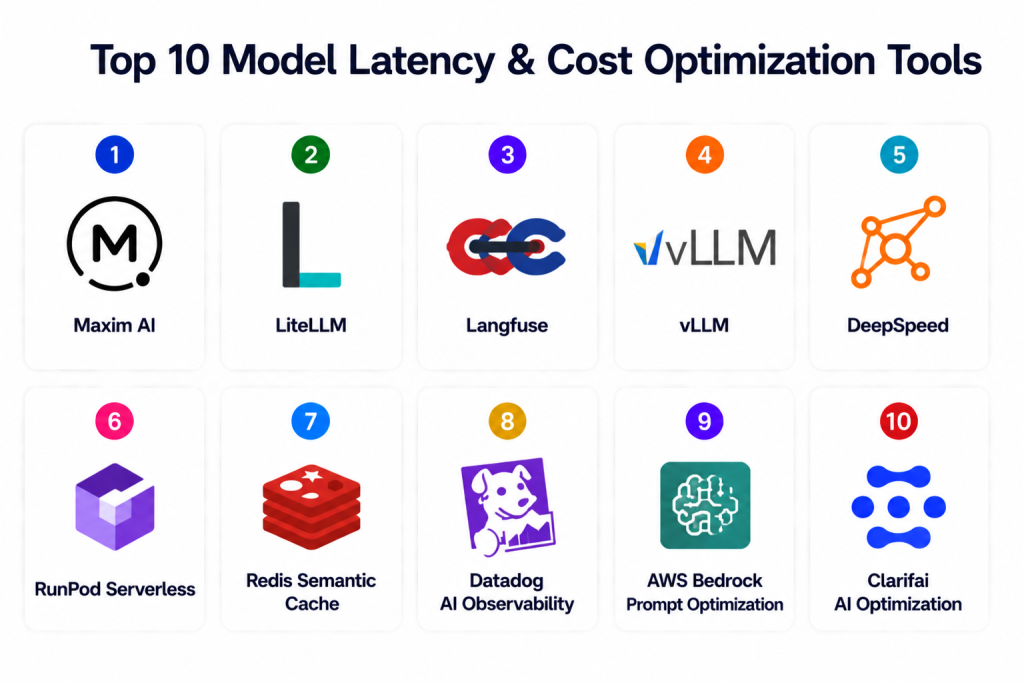

Top 10 Model Latency & Cost Optimization Tools

1 — Maxim AI

One-line verdict: Best overall platform for enterprise AI cost, latency, and observability optimization.

Short description: Maxim AI combines evaluation, observability, simulation, and optimization workflows for reducing AI infrastructure costs and latency while preserving output quality. It supports intelligent routing, tracing, and token analytics for production AI systems.

Standout Capabilities

- Intelligent model routing

- Real-time token and latency analytics

- Distributed tracing

- Quality-cost tradeoff analysis

- Prompt optimization workflows

- Simulation testing

- Semantic caching integrations

AI-Specific Depth

- Model support: Hosted / BYO / multi-model

- RAG / knowledge integration: Workflow connectors

- Evaluation: Quality and latency evaluation

- Guardrails: Cost and performance thresholds

- Observability: Full-stack AI dashboards

Pros

- Excellent observability stack

- Strong AI FinOps workflows

- Advanced routing optimization

Cons

- Enterprise pricing

- Advanced setup required

- Mature AI operations needed

Security & Compliance

- RBAC, encryption, audit workflows

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud / Hybrid

Integrations & Ecosystem

- LLM APIs

- AI gateways

- CI/CD pipelines

- Observability stacks

Pricing Model

Enterprise subscription

Best-Fit Scenarios

- Enterprise LLMOps

- AI FinOps optimization

- Large-scale inference systems

2 — LiteLLM

One-line verdict: Best lightweight open-source gateway for model routing and cost optimization.

Short description: LiteLLM provides unified API management, routing, caching, and token tracking across multiple LLM providers to reduce operational complexity and optimize AI costs.

Standout Capabilities

- Multi-model routing

- Unified API abstraction

- Token usage monitoring

- Budget controls

- Load balancing

- Failover support

- Open-source deployment

AI-Specific Depth

- Model support: Multi-provider / BYO

- RAG / knowledge integration: Gateway integrations

- Evaluation: Basic usage analytics

- Guardrails: Budget limits and rate controls

- Observability: Token and request metrics

Pros

- Lightweight deployment

- Strong open-source adoption

- Excellent routing flexibility

Cons

- Limited enterprise governance

- Basic dashboards

- Requires engineering management

Security & Compliance

- Depends on deployment

- Certifications: N/A

Deployment & Platforms

- Cloud / On-prem / Hybrid

Integrations & Ecosystem

- OpenAI-compatible APIs

- AI gateways

- Monitoring systems

Pricing Model

Open-source

Best-Fit Scenarios

- Multi-model routing

- AI API abstraction

- Cost-conscious teams

3 — Langfuse

One-line verdict: Ideal for token-level observability and LLM cost analytics.

Short description: Langfuse provides tracing, observability, prompt analytics, token tracking, and latency monitoring for production LLM applications.

Standout Capabilities

- Token-level tracing

- Cost attribution

- Latency dashboards

- Prompt analytics

- Request lineage

- Multi-model observability

- Session tracing

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Trace integration

- Evaluation: Prompt analytics

- Guardrails: Threshold alerts

- Observability: Full tracing dashboards

Pros

- Excellent observability

- Strong developer workflows

- Detailed tracing support

Cons

- Limited optimization automation

- Requires infrastructure integration

- Enterprise governance still maturing

Security & Compliance

- RBAC and encryption

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud / Hybrid / On-prem

Integrations & Ecosystem

- LLM frameworks

- AI pipelines

- Monitoring stacks

Pricing Model

Open-source / enterprise

Best-Fit Scenarios

- AI observability

- Token cost analytics

- Prompt tracing

4 — vLLM

One-line verdict: Best inference engine for high-throughput and low-latency LLM serving.

Short description: vLLM is an optimized inference framework designed for efficient serving of large language models with advanced batching and memory management.

Standout Capabilities

- Continuous batching

- High-throughput inference

- GPU memory optimization

- KV cache optimization

- Efficient token serving

- Open-source flexibility

- Low-latency serving

AI-Specific Depth

- Model support: Open-source models

- RAG / knowledge integration: Framework compatible

- Evaluation: Performance benchmarking

- Guardrails: Infrastructure controls

- Observability: Metrics integrations

Pros

- Exceptional throughput

- Strong GPU efficiency

- Widely adopted open-source ecosystem

Cons

- Engineering expertise required

- Infrastructure complexity

- Limited governance features

Security & Compliance

- Depends on deployment

- Certifications: N/A

Deployment & Platforms

- On-prem / Cloud / Hybrid

Integrations & Ecosystem

- Hugging Face

- Kubernetes

- GPU orchestration stacks

Pricing Model

Open-source

Best-Fit Scenarios

- Large-scale inference serving

- GPU optimization

- Low-latency AI systems

5 — DeepSpeed

One-line verdict: Best for large-scale model optimization and efficient distributed inference.

Short description: DeepSpeed provides distributed optimization, inference acceleration, quantization, and memory efficiency for large AI workloads.

Standout Capabilities

- ZeRO optimization

- Quantization support

- Distributed inference

- Memory optimization

- Mixed precision serving

- Tensor parallelism

- GPU acceleration

AI-Specific Depth

- Model support: PyTorch ecosystems

- RAG / knowledge integration: Framework integrations

- Evaluation: Performance optimization metrics

- Guardrails: Infrastructure controls

- Observability: External integrations

Pros

- Excellent large-model optimization

- Strong distributed inference

- Open-source ecosystem

Cons

- Complex deployment

- Requires infrastructure expertise

- Limited UI and dashboards

Security & Compliance

- Depends on deployment

- Certifications: N/A

Deployment & Platforms

- On-prem / Cloud / Hybrid

Integrations & Ecosystem

- PyTorch

- Kubernetes

- GPU clusters

Pricing Model

Open-source

Best-Fit Scenarios

- Large-scale inference

- Distributed AI workloads

- GPU efficiency optimization

6 — RunPod Serverless

One-line verdict: Best for GPU-efficient inference infrastructure and cost-efficient scaling.

Short description: RunPod provides optimized GPU infrastructure for AI inference with autoscaling, batching, and low-cost compute orchestration.

Standout Capabilities

- Serverless GPU inference

- Autoscaling

- Low-cost GPU provisioning

- Quantization workflows

- vLLM integrations

- Throughput optimization

- Flexible compute orchestration

AI-Specific Depth

- Model support: Open-source and custom models

- RAG / knowledge integration: Infrastructure compatible

- Evaluation: Infrastructure metrics

- Guardrails: Scaling controls

- Observability: Compute dashboards

Pros

- Cost-efficient GPUs

- Flexible scaling

- Strong inference performance

Cons

- Infrastructure-focused

- Limited governance tooling

- Requires deployment expertise

Security & Compliance

- Infrastructure security controls

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- vLLM

- Kubernetes

- AI frameworks

Pricing Model

Usage-based infrastructure pricing

Best-Fit Scenarios

- GPU inference optimization

- Scalable AI serving

- Budget-conscious AI infrastructure

7 — Redis Semantic Cache

One-line verdict: Best for reducing repeated LLM calls using semantic caching.

Short description: Redis semantic caching reduces repeated inference workloads by storing and retrieving semantically similar responses.

Standout Capabilities

- Semantic caching

- Vector similarity search

- Token reduction

- Low-latency retrieval

- Reduced API calls

- Embedding-based caching

- Scalable infrastructure

AI-Specific Depth

- Model support: Framework agnostic

- RAG / knowledge integration: Strong vector support

- Evaluation: Cache hit analytics

- Guardrails: Expiration policies

- Observability: Cache performance metrics

Pros

- Significant cost reduction

- Lower response latency

- Easy integration

Cons

- Requires embedding workflows

- Cache tuning needed

- Limited governance features

Security & Compliance

- Redis access controls and encryption

- Certifications: Varies

Deployment & Platforms

- Cloud / On-prem / Hybrid

Integrations & Ecosystem

- Vector DBs

- AI gateways

- LLM frameworks

Pricing Model

Infrastructure subscription

Best-Fit Scenarios

- Repeated query optimization

- RAG systems

- High-volume AI traffic

8 — Datadog AI Observability

One-line verdict: Best for unified AI infrastructure, latency, and cost observability.

Short description: Datadog extends infrastructure monitoring into AI observability with token tracking, latency dashboards, tracing, and AI telemetry.

Standout Capabilities

- AI telemetry

- Latency monitoring

- Cost tracking

- Distributed tracing

- Token observability

- Infrastructure dashboards

- Alerting workflows

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Trace support

- Evaluation: Performance analytics

- Guardrails: Alert policies

- Observability: Unified telemetry

Pros

- Enterprise observability

- Unified infrastructure monitoring

- Strong scalability

Cons

- Expensive at scale

- Datadog ecosystem focus

- Complex onboarding

Security & Compliance

- Enterprise RBAC and encryption

- Certifications: Varies

Deployment & Platforms

- Cloud / Hybrid

Integrations & Ecosystem

- Infrastructure monitoring

- AI pipelines

- Cloud ecosystems

Pricing Model

Usage-based enterprise pricing

Best-Fit Scenarios

- Enterprise observability

- AI infrastructure telemetry

- Unified monitoring

9 — AWS Bedrock Prompt Optimization

One-line verdict: Best AWS-native platform for prompt caching and token optimization.

Short description: AWS Bedrock provides prompt optimization, caching, and orchestration features to reduce token costs and improve inference responsiveness.

Standout Capabilities

- Prompt caching

- Prompt optimization

- Token reduction workflows

- Managed orchestration

- Low-latency serving

- Cloud-native integrations

- AI workflow management

AI-Specific Depth

- Model support: AWS ecosystem and external models

- RAG / knowledge integration: AWS connectors

- Evaluation: Prompt optimization metrics

- Guardrails: IAM and governance controls

- Observability: AWS dashboards

Pros

- Deep AWS integration

- Managed optimization features

- Strong scalability

Cons

- AWS lock-in

- Pricing complexity

- Limited portability

Security & Compliance

- IAM, encryption, audit controls

- Certifications: AWS compliance ecosystem

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- AWS AI stack

- CloudWatch

- Bedrock services

Pricing Model

Usage-based

Best-Fit Scenarios

- AWS-native AI systems

- Prompt caching optimization

- Enterprise cloud AI

10 — Clarifai AI Optimization

One-line verdict: Best for AI infrastructure orchestration and compute optimization.

Short description: Clarifai combines AI orchestration, compute optimization, inference scaling, and governance workflows for reducing infrastructure costs.

Standout Capabilities

- AI compute orchestration

- GPU efficiency optimization

- Inference scaling

- Resource utilization analysis

- Cost governance

- AI workflow orchestration

- Multi-model infrastructure management

AI-Specific Depth

- Model support: Multi-framework

- RAG / knowledge integration: AI workflow support

- Evaluation: Infrastructure analytics

- Guardrails: Governance and controls

- Observability: Infrastructure dashboards

Pros

- Strong orchestration capabilities

- Good GPU optimization

- Enterprise AI workflows

Cons

- Enterprise complexity

- Infrastructure-focused learning curve

- Premium pricing

Security & Compliance

- Enterprise RBAC and governance

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud / Hybrid / On-prem

Integrations & Ecosystem

- AI infrastructure

- GPU orchestration

- Model serving systems

Pricing Model

Enterprise subscription

Best-Fit Scenarios

- Enterprise AI infrastructure

- GPU optimization

- Multi-model orchestration

Comparison Table

| Tool | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| Maxim AI | Enterprise optimization | Cloud/Hybrid | Multi-model | Full-stack optimization | Premium pricing | N/A |
| LiteLLM | Open-source routing | Cloud/On-prem/Hybrid | Multi-provider | API abstraction | Limited governance | N/A |
| Langfuse | Token observability | Cloud/Hybrid/On-prem | Multi-model | Tracing analytics | Less automation | N/A |
| vLLM | High-throughput serving | On-prem/Cloud/Hybrid | Open-source | GPU efficiency | Engineering complexity | N/A |
| DeepSpeed | Distributed inference | On-prem/Cloud/Hybrid | PyTorch ecosystems | Model optimization | Complex setup | N/A |
| RunPod | GPU infrastructure | Cloud | Custom/open-source | Cost-efficient compute | Infra expertise needed | N/A |
| Redis Semantic Cache | Semantic caching | Cloud/On-prem/Hybrid | Framework agnostic | Cost reduction | Cache tuning | N/A |
| Datadog AI Observability | Enterprise telemetry | Cloud/Hybrid | Multi-model | Unified monitoring | Expensive at scale | N/A |
| AWS Bedrock | AWS optimization | Cloud | AWS + external | Prompt caching | AWS lock-in | N/A |
| Clarifai | AI orchestration | Cloud/Hybrid/On-prem | Multi-framework | Infrastructure optimization | Enterprise complexity | N/A |

Scoring & Evaluation

These scores are comparative rather than absolute. Enterprise-focused platforms generally score higher in governance, orchestration, and observability, while open-source frameworks prioritize flexibility and infrastructure efficiency. Teams should evaluate optimization tools based on workload scale, cloud ecosystem alignment, latency requirements, operational maturity, and governance needs.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| Maxim AI | 9 | 9 | 8 | 9 | 7 | 9 | 9 | 8 | 8.6 |
| LiteLLM | 8 | 8 | 7 | 9 | 8 | 9 | 7 | 7 | 8.0 |
| Langfuse | 8 | 8 | 7 | 8 | 8 | 8 | 8 | 8 | 7.9 |
| vLLM | 9 | 8 | 7 | 8 | 6 | 10 | 7 | 8 | 8.1 |
| DeepSpeed | 9 | 8 | 7 | 8 | 6 | 10 | 7 | 8 | 8.1 |
| RunPod | 8 | 8 | 7 | 8 | 8 | 9 | 7 | 7 | 7.9 |
| Redis Semantic Cache | 8 | 8 | 7 | 9 | 8 | 9 | 8 | 8 | 8.2 |
| Datadog AI Observability | 9 | 8 | 8 | 9 | 7 | 8 | 9 | 8 | 8.3 |
| AWS Bedrock | 8 | 8 | 8 | 9 | 8 | 9 | 9 | 8 | 8.4 |
| Clarifai | 8 | 8 | 8 | 8 | 7 | 9 | 9 | 8 | 8.1 |
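The weights behind the Weighted Total column are not stated, so the totals above cannot be recomputed exactly. As a rough illustration of how a column like this is usually derived, the sketch below computes a weighted average over the eight criteria. The equal weights and the `weighted_total` helper are assumptions made for this example, not the weighting used in the table.

```python
CRITERIA = ["core", "reliability_eval", "guardrails", "integrations",
            "ease", "perf_cost", "security_admin", "support"]

# Assumption: equal weights. Swap in weights that reflect your own priorities.
EQUAL_WEIGHTS = {name: 1 / len(CRITERIA) for name in CRITERIA}


def weighted_total(scores: dict, weights: dict = EQUAL_WEIGHTS) -> float:
    """Weighted average of per-criterion scores, normalized by total weight."""
    total_weight = sum(weights.values())
    return sum(scores[name] * weights[name] for name in weights) / total_weight


# Scores copied from the Maxim AI row above.
maxim_ai = dict(zip(CRITERIA, [9, 9, 8, 9, 7, 9, 9, 8]))
print(round(weighted_total(maxim_ai), 1))  # 8.5 under equal weights
```

Teams that care most about cost could, for example, double the `perf_cost` weight and re-rank the tools against their own priorities.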

Top 3 for Enterprise: Maxim AI, Datadog AI Observability, AWS Bedrock

Top 3 for SMB: LiteLLM, Langfuse, Redis Semantic Cache

Top 3 for Developers: vLLM, DeepSpeed, LiteLLM

Which Model Latency & Cost Optimization Tool Is Right for You

Solo / Freelancer

LiteLLM, Langfuse, and Redis Semantic Cache provide lightweight deployment, affordable optimization, and strong developer flexibility.

SMB

RunPod, LiteLLM, and Langfuse balance observability, routing, and infrastructure efficiency without requiring massive enterprise investments.

Mid-Market

Maxim AI, Clarifai, and Datadog AI Observability provide stronger orchestration, tracing, and AI FinOps workflows for scaling AI operations.

Enterprise

AWS Bedrock, Maxim AI, Datadog AI Observability, and Clarifai provide enterprise governance, observability, orchestration, and infrastructure optimization.

Regulated Industries

Datadog AI Observability and Maxim AI provide governance, auditability, and observability features needed for compliance-heavy environments.

Budget vs Premium

Open-source frameworks like LiteLLM, vLLM, and DeepSpeed minimize licensing costs but require engineering investment. Managed enterprise platforms accelerate deployment and governance readiness.

Build vs Buy

Organizations with strong platform engineering teams may benefit from building custom optimization stacks using open-source tooling. Enterprises needing governance, dashboards, and support often benefit from managed commercial platforms.

Implementation Playbook

30 Days

- Establish latency and cost baselines

- Identify expensive inference workflows

- Implement token monitoring and tracing

- Pilot caching and routing workflows

- Define optimization KPIs

60 Days

- Deploy semantic caching

- Optimize prompts and token usage

- Implement autoscaling and batching

- Configure governance and alerts

- Validate latency improvements

90 Days

- Automate optimization workflows

- Expand observability across AI systems

- Optimize GPU utilization

- Implement AI FinOps governance

- Scale multi-model orchestration

Common Mistakes & How to Avoid Them

- Ignoring token-level visibility

- Overusing large models for simple tasks

- No caching for repeated requests

- Weak autoscaling configuration

- Missing GPU utilization analysis

- No prompt optimization workflows

- Overlooking latency introduced by agent orchestration

- Lack of observability and tracing

- No governance or cost ownership

- Vendor lock-in without portability planning

- Missing throughput optimization

- Over-optimization reducing output quality

- No alerting for cost spikes

- Ignoring semantic cache hit rates
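As one example of avoiding the "no alerting for cost spikes" mistake, a basic check can compare today's spend against a recent average. This is a minimal sketch under simple assumptions: `is_cost_spike`, the 7-day window, and the 2x factor are illustrative choices, not settings from any of the tools above.

```python
from statistics import mean


def is_cost_spike(daily_costs: list, today: float,
                  window: int = 7, factor: float = 2.0) -> bool:
    """Flag today's spend if it exceeds `factor` times the recent average."""
    recent = daily_costs[-window:]
    if not recent:
        # No history yet, so there is nothing to compare against.
        return False
    return today > factor * mean(recent)
```

In practice the same comparison can be run per team, per model, or per endpoint so that a spike can be traced to an owner.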

FAQs

1. What are model latency and cost optimization tools?

These platforms help reduce AI inference latency, token costs, GPU expenses, and operational inefficiencies across AI systems.

2. Why is inference optimization important?

For many organizations, inference is now the largest share of AI operating costs, so per-request savings in tokens, latency, and GPU time add up quickly at production volume.

3. What is semantic caching?

Semantic caching stores responses for semantically similar requests to avoid repeated expensive inference calls.
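A minimal sketch of the idea, assuming a toy embedding: `embed` here is a bag-of-words stand-in for a real embedding model, and `SemanticCache`, `answer`, and the 0.8 threshold are hypothetical names and values chosen for illustration. A production cache would use learned embeddings and a vector index rather than a linear scan.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: word-count vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


class SemanticCache:
    def __init__(self, threshold: float = 0.8):
        self.threshold = threshold
        self.entries = []  # list of (embedding, response) pairs
        self.hits = 0
        self.misses = 0

    def get(self, prompt: str):
        """Return the closest cached response if it is similar enough."""
        query = embed(prompt)
        best_score, best_response = 0.0, None
        for vector, response in self.entries:
            score = cosine(query, vector)
            if score > best_score:
                best_score, best_response = score, response
        if best_response is not None and best_score >= self.threshold:
            self.hits += 1
            return best_response
        self.misses += 1
        return None

    def put(self, prompt: str, response: str) -> None:
        self.entries.append((embed(prompt), response))


def answer(prompt: str, cache: SemanticCache, call_model) -> str:
    """Serve from cache when possible; otherwise call the model and store."""
    cached = cache.get(prompt)
    if cached is not None:
        return cached
    response = call_model(prompt)
    cache.put(prompt, response)
    return response
```

The threshold is the main tuning knob: set it too low and users get answers to a different question, set it too high and the hit rate drops toward that of an exact-match cache.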

4. How does model routing reduce costs?

Routing systems send simple requests to cheaper models and complex tasks to stronger models automatically.
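As a rough sketch of that decision, the router below uses a simple heuristic (prompt length plus a few keywords) to pick a tier. The model names, prices, keyword list, and word limit are all hypothetical placeholders; real routers often rely on a trained classifier or quality feedback instead of fixed rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    usd_per_1k_tokens: float


# Hypothetical tiers and prices, for illustration only.
CHEAP = ModelTier("small-model", 0.0005)
STRONG = ModelTier("large-model", 0.0100)

# Assumed hints that a request needs deeper reasoning.
COMPLEX_HINTS = ("prove", "analyze", "refactor", "step by step", "compare")


def route(prompt: str, max_simple_words: int = 40) -> ModelTier:
    """Send short, plain requests to the cheap tier and the rest to the strong one."""
    text = prompt.lower()
    looks_complex = (len(text.split()) > max_simple_words
                     or any(hint in text for hint in COMPLEX_HINTS))
    return STRONG if looks_complex else CHEAP
```

Whatever rule is used, routed traffic should be evaluated for quality, since a misrouted complex request saves money but returns a worse answer.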

5. What is continuous batching?

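Continuous batching admits new requests into the running batch as soon as earlier requests finish generating, instead of waiting for the whole batch to complete. This keeps GPU slots busy, which raises throughput and shortens queue time.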
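The effect is easiest to see in a toy simulation. The sketch below counts decoding steps for a fixed set of requests under static batching and under continuous batching. It is a simplified model, not how any particular serving engine is implemented: each request needs a known number of decode steps (at least one), every step costs the same, and `batch_size` is the number of concurrent slots.

```python
from collections import deque


def static_batching_steps(lengths: list, batch_size: int) -> int:
    """Each batch runs until its longest request finishes."""
    total = 0
    for i in range(0, len(lengths), batch_size):
        total += max(lengths[i:i + batch_size])
    return total


def continuous_batching_steps(lengths: list, batch_size: int) -> int:
    """Free slots are refilled from the queue before every decode step."""
    queue = deque(lengths)
    active = []  # remaining decode steps for each in-flight request
    steps = 0
    while queue or active:
        while queue and len(active) < batch_size:
            active.append(queue.popleft())
        # One decode step: every active request emits a token;
        # requests with no steps left release their slot.
        active = [remaining - 1 for remaining in active if remaining - 1 > 0]
        steps += 1
    return steps


requests = [8, 2, 2, 2]  # decode steps needed per request
print(static_batching_steps(requests, batch_size=2))      # 10
print(continuous_batching_steps(requests, batch_size=2))  # 8
```

In the static case the short request in the first batch leaves its slot idle while the long request finishes; the continuous scheduler reuses that slot for queued work.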

6. Do optimization tools affect output quality?

Poor optimization can reduce quality, which is why evaluation and observability are important alongside optimization.

7. Are open-source optimization frameworks available?

Yes. LiteLLM, vLLM, and DeepSpeed are widely used open-source optimization tools, and Langfuse is available in open-source and enterprise editions.

8. What industries benefit most from these tools?

Finance, customer support, healthcare, AI SaaS, coding copilots, and large-scale AI platforms benefit significantly.

9. Can optimization tools reduce GPU costs?

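Yes. Quantization lowers memory requirements, batching raises throughput per GPU, autoscaling trims idle capacity, and routing diverts simple requests to cheaper models. Together these can significantly reduce GPU costs.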
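For the quantization part, a back-of-the-envelope estimate shows where the savings come from. The sketch below covers model weights only and ignores the KV cache, activations, and runtime overhead, so real memory use will be higher. `weight_memory_gb` is a hypothetical helper, and the 7-billion-parameter model is just an example size.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, dtype: str) -> float:
    """Approximate memory for model weights alone, in gigabytes (1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


for dtype in ("fp16", "int8", "int4"):
    print(dtype, weight_memory_gb(7e9, dtype))
# fp16 14.0
# int8 7.0
# int4 3.5
```

A smaller footprint can mean fitting the model on a cheaper GPU or leaving more memory for batching, though lower precision should always be checked against output quality.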

10. Are these tools cloud-specific?

Some are tied to a single cloud provider (for example, AWS Bedrock), while others support hybrid and multi-cloud environments.

11. What metrics should teams monitor?

Latency percentiles, token usage, cache hit rates, GPU utilization, throughput, and cost-per-request are all important.
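A few of these can be computed directly from request logs. The sketch below assumes each log record is a dictionary with `latency_ms`, `input_tokens`, and `output_tokens` fields, and that input and output tokens are priced per 1,000 tokens. The field names, the `baseline` helper, and the prices passed to it are assumptions for illustration.

```python
import math


def percentile(values: list, pct: float):
    """Nearest-rank percentile: smallest value with at least pct% at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def baseline(requests: list, usd_per_1k_input: float,
             usd_per_1k_output: float) -> dict:
    """Summarize latency percentiles and average cost per request."""
    latencies = [r["latency_ms"] for r in requests]
    total_cost = sum(
        r["input_tokens"] / 1000 * usd_per_1k_input
        + r["output_tokens"] / 1000 * usd_per_1k_output
        for r in requests
    )
    return {
        "p50_ms": percentile(latencies, 50),
        "p95_ms": percentile(latencies, 95),
        "cost_per_request_usd": total_cost / len(requests),
    }
```

Percentiles matter more than averages here because a small number of slow requests can dominate user experience while barely moving the mean.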

12. Do optimization tools replace observability systems?

No. They complement broader observability and AI monitoring platforms.

Conclusion

Model Latency & Cost Optimization Tools have become essential infrastructure for scalable AI and LLM operations. Open-source frameworks like LiteLLM, vLLM, and DeepSpeed provide flexible optimization for engineering-focused teams, while enterprise platforms such as Maxim AI, Datadog AI Observability, AWS Bedrock, and Clarifai deliver governance, orchestration, observability, and operational scalability for production AI environments. As inference workloads continue to dominate AI spending, organizations must optimize not only model quality but also throughput, GPU efficiency, token usage, and latency. The best platform depends on your infrastructure ecosystem, operational maturity, governance requirements, and workload scale. Start with token and latency observability, pilot routing and caching workflows, validate performance and quality tradeoffs, and then scale optimization across all production AI systems.
