Introduction

Retrieval-Augmented Generation (RAG) frameworks help organizations connect large language models (LLMs) with external knowledge systems so that AI responses are grounded in trusted, up-to-date information. Instead of relying only on knowledge acquired during pre-training, RAG frameworks retrieve relevant content at inference time from documents, databases, APIs, vector indexes, and other enterprise sources, which improves factual accuracy and reduces hallucinations.

Modern enterprises are rapidly adopting RAG architectures for AI copilots, enterprise search, customer support automation, legal assistants, healthcare knowledge systems, financial research, internal documentation assistants, and AI-driven analytics. As AI systems become more production-critical, RAG frameworks now include observability, evaluation pipelines, guardrails, vector orchestration, caching, governance, and multi-agent retrieval workflows.

Organizations evaluating RAG frameworks should focus on retrieval quality, vector database support, orchestration flexibility, latency optimization, hallucination control, observability, governance, scalability, prompt management, evaluation tooling, deployment portability, and enterprise integrations.

Best for: AI engineers, LLMOps teams, enterprise AI platform teams, AI product developers, customer support automation teams, and organizations building production-grade generative AI systems.

Not ideal for: simple standalone chatbots with static prompts, lightweight hobby projects, or organizations without external knowledge retrieval requirements.

What’s Changed in Retrieval-Augmented Generation (RAG) Frameworks

- Multi-agent retrieval workflows became more common

- Hybrid retrieval (dense plus sparse search) improved retrieval accuracy

- Vector databases became core infrastructure for enterprise AI

- Hallucination detection workflows became tightly integrated with RAG pipelines

- Prompt injection defense and retrieval security became critical priorities

- Retrieval observability and tracing gained enterprise adoption

- Long-context LLMs changed chunking and retrieval strategies

- Structured data retrieval from SQL and APIs became more common

- Multi-modal RAG expanded into image and audio retrieval

- Enterprises increasingly adopted private and hybrid RAG deployments

- Real-time retrieval and streaming generation improved significantly

- Evaluation frameworks became essential for measuring retrieval quality

Quick Buyer Checklist

- Vector database compatibility

- Hybrid retrieval support

- Multi-model and BYO model support

- Observability and tracing

- Hallucination mitigation workflows

- Evaluation and regression testing

- Guardrails and prompt injection defense

- Enterprise access controls

- Latency optimization and caching

- API extensibility and orchestration flexibility

- Multi-cloud or hybrid deployment support

- Cost visibility and token monitoring

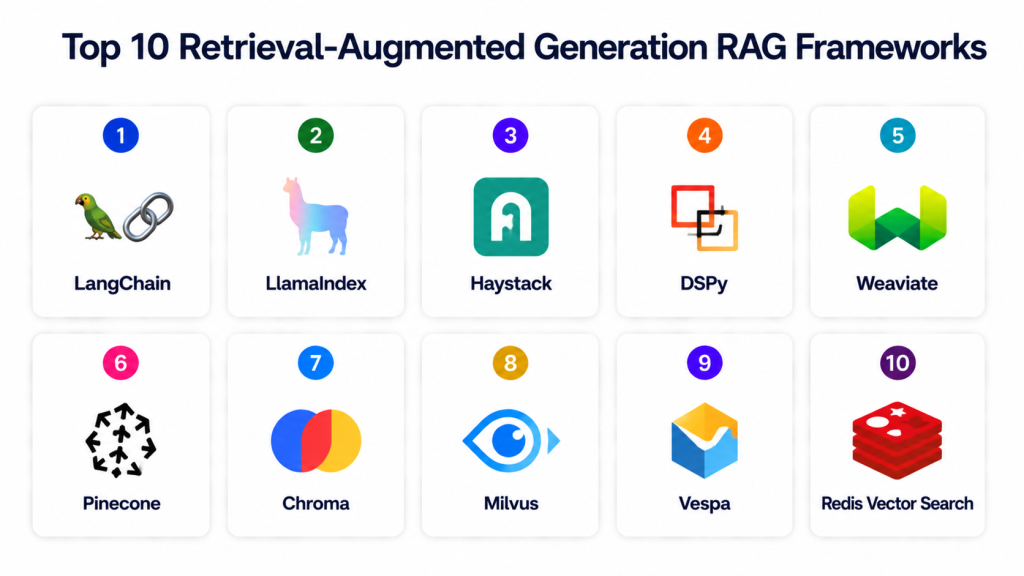

Top 10 Retrieval-Augmented Generation (RAG) Frameworks

1 — LangChain

One-line verdict: Best overall RAG orchestration framework for enterprise AI applications and multi-step LLM workflows.

Short description: LangChain is one of the most widely adopted frameworks for building retrieval-augmented applications using LLMs, vector stores, APIs, tools, and multi-step reasoning pipelines. It provides orchestration for retrieval, prompts, memory, agents, and generation workflows.

Standout Capabilities

- Multi-step agent orchestration

- Vector database integrations

- Prompt and memory management

- Retrieval chains and pipelines

- Tool calling workflows

- Large ecosystem of connectors

- LLM and API integrations

AI-Specific Depth

- Model support: Hosted, open-source, BYO models, multi-model routing

- RAG / knowledge integration: Pinecone, Weaviate, Chroma, FAISS, SQL, APIs

- Evaluation: Prompt testing and retrieval evaluation workflows

- Guardrails: Prompt injection mitigation and policy workflows

- Observability: LangSmith tracing and token monitoring

Pros

- Extremely flexible architecture

- Massive ecosystem and adoption

- Strong support for complex RAG systems

Cons

- Workflow complexity can grow quickly

- Rapid ecosystem changes require maintenance

- Performance tuning may require engineering effort

Security & Compliance

Depends on deployment architecture and connected services. Certifications are not publicly stated.

Deployment & Platforms

Cloud, hybrid, on-prem, Python, JavaScript.

Integrations & Ecosystem

LangChain integrates broadly with modern AI ecosystems.

- OpenAI

- Anthropic

- Hugging Face

- Pinecone

- Weaviate

- Chroma

- SQL databases

Pricing Model

Open-source with optional enterprise tooling.

Best-Fit Scenarios

- Enterprise AI copilots

- Multi-step AI agents

- Complex RAG orchestration workflows

2 — LlamaIndex

One-line verdict: Best framework for structured data retrieval and enterprise knowledge indexing workflows.

Short description: LlamaIndex focuses on connecting enterprise data sources to LLMs using indexing, retrieval, embeddings, and structured query workflows. It is widely used for document retrieval and enterprise AI assistants.

Standout Capabilities

- Enterprise data connectors

- Structured retrieval pipelines

- Indexing workflows

- Query engines

- Retrieval optimization

- Multi-source knowledge access

- LLM orchestration support

AI-Specific Depth

- Model support: Hosted, open-source, BYO models

- RAG / knowledge integration: SQL, vector stores, APIs, documents

- Evaluation: Retrieval validation and ranking workflows

- Guardrails: Query filtering and retrieval constraints

- Observability: Query tracing and metadata visibility

Pros

- Excellent enterprise data integrations

- Strong indexing workflows

- Good structured retrieval support

Cons

- Less orchestration flexibility than LangChain

- Scaling advanced workflows requires engineering

- Ecosystem smaller than LangChain

Security & Compliance

Depends on deployment architecture and enterprise integrations.

Deployment & Platforms

Cloud, hybrid, on-prem, Python.

Integrations & Ecosystem

LlamaIndex integrates with enterprise retrieval ecosystems.

- Pinecone

- Chroma

- Weaviate

- OpenAI

- SQL systems

- APIs

- Document stores

Pricing Model

Open-source with enterprise offerings.

Best-Fit Scenarios

- Enterprise knowledge assistants

- Document retrieval workflows

- Structured enterprise search

3 — Haystack

One-line verdict: Best modular framework for search-centric RAG applications and enterprise document retrieval.

Short description: Haystack provides retrieval pipelines, semantic search, question answering, and document indexing workflows optimized for enterprise search and retrieval systems.

Standout Capabilities

- Hybrid retrieval workflows

- Dense and sparse search

- Search pipeline orchestration

- Semantic retrieval

- Document preprocessing

- Multi-language support

- Enterprise search workflows

AI-Specific Depth

- Model support: Hosted, open-source, BYO models

- RAG / knowledge integration: Elasticsearch, FAISS, Pinecone, APIs

- Evaluation: Retrieval relevance evaluation

- Guardrails: Retrieval filtering workflows

- Observability: Pipeline and search telemetry

Pros

- Strong search-oriented architecture

- Modular retrieval workflows

- Good enterprise retrieval support

Cons

- More retrieval-focused than orchestration-focused

- Complex scaling workflows may require tuning

- Smaller ecosystem than LangChain

Security & Compliance

Depends on deployment architecture.

Deployment & Platforms

Cloud, on-prem, hybrid, Python.

Integrations & Ecosystem

Haystack integrates with enterprise search ecosystems.

- Elasticsearch

- OpenSearch

- Pinecone

- FAISS

- Hugging Face

- OpenAI

Pricing Model

Open-source with enterprise support.

Best-Fit Scenarios

- Enterprise semantic search

- Knowledge retrieval systems

- Search-heavy AI applications

4 — DSPy

One-line verdict: Best framework for optimizing prompts and retrieval pipelines programmatically.

Short description: DSPy focuses on declarative optimization of prompts, retrieval workflows, and reasoning pipelines for AI systems. It helps teams systematically improve retrieval quality and LLM behavior.

Standout Capabilities

- Declarative prompt programming

- Retrieval optimization

- Pipeline optimization

- Automated prompt tuning

- Programmatic LLM workflows

- Research-oriented flexibility

- Advanced evaluation workflows

AI-Specific Depth

- Model support: Multi-model and BYO models

- RAG / knowledge integration: Flexible retrieval integrations

- Evaluation: Automated optimization and scoring workflows

- Guardrails: Logic-driven constraints and validations

- Observability: Experiment telemetry and optimization tracking

Pros

- Strong optimization capabilities

- Research-friendly workflows

- Flexible programmatic architecture

Cons

- Steeper learning curve

- Less enterprise tooling maturity

- Smaller operational ecosystem

Security & Compliance

Varies based on deployment.

Deployment & Platforms

Cloud, local, hybrid, Python.

Integrations & Ecosystem

DSPy integrates with AI experimentation and orchestration workflows.

- OpenAI

- Anthropic

- Retrieval systems

- Python ML ecosystems

- Experiment tracking tools

Pricing Model

Open-source.

Best-Fit Scenarios

- Prompt optimization

- Advanced RAG experimentation

- Research-focused AI systems

5 — Weaviate

One-line verdict: Best open-source vector database and semantic retrieval platform for scalable RAG systems.

Short description: Weaviate combines vector search, semantic retrieval, hybrid search, and AI-native indexing workflows into a scalable platform for enterprise retrieval systems.

Standout Capabilities

- Vector search

- Hybrid retrieval

- Semantic indexing

- Multi-modal retrieval

- Graph-style relationships

- Open-source deployment

- API-first architecture

AI-Specific Depth

- Model support: Hosted and BYO models

- RAG / knowledge integration: Semantic vector retrieval

- Evaluation: Retrieval quality and ranking analysis

- Guardrails: Access controls and query filtering

- Observability: Search telemetry and retrieval metrics

Pros

- Strong semantic retrieval

- Open-source flexibility

- Scalable architecture

Cons

- Requires operational management

- Not a full orchestration framework

- Advanced workflows require integrations

Security & Compliance

RBAC and deployment-level security controls available.

Deployment & Platforms

Cloud, hybrid, on-prem.

Integrations & Ecosystem

Weaviate integrates broadly with RAG ecosystems.

- LangChain

- LlamaIndex

- OpenAI

- Hugging Face

- Python APIs

- Vector workflows

Pricing Model

Open-source with enterprise cloud offerings.

Best-Fit Scenarios

- Enterprise vector search

- Multi-modal retrieval

- Semantic knowledge systems

6 — Pinecone

One-line verdict: Best managed vector database platform for production-grade enterprise RAG pipelines.

Short description: Pinecone is a managed vector database optimized for scalable embedding retrieval and enterprise-grade RAG applications.

Standout Capabilities

- Managed vector infrastructure

- Low-latency retrieval

- High scalability

- Multi-tenant architecture

- Embedding optimization

- API-first workflows

- Enterprise operational simplicity

AI-Specific Depth

- Model support: Open-source and hosted models

- RAG / knowledge integration: Embedding and vector retrieval

- Evaluation: Retrieval performance metrics

- Guardrails: API-level access policies

- Observability: Latency and query telemetry

Pros

- Operational simplicity

- High scalability

- Strong production reliability

Cons

- Vendor lock-in concerns

- Usage-based cost growth

- Less orchestration flexibility

Security & Compliance

Enterprise security features, encryption, and RBAC available.

Deployment & Platforms

Managed cloud service.

Integrations & Ecosystem

Pinecone integrates broadly with enterprise RAG ecosystems.

- LangChain

- LlamaIndex

- OpenAI

- Anthropic

- Hugging Face

- AI orchestration systems

Pricing Model

Usage-based managed service.

Best-Fit Scenarios

- Production RAG pipelines

- Enterprise vector retrieval

- Scalable embedding search

7 — Chroma

One-line verdict: Best lightweight open-source vector store for fast RAG prototyping and development.

Short description: Chroma is a lightweight vector database optimized for embedding search and developer-friendly RAG workflows.

Standout Capabilities

- Lightweight vector search

- Fast prototyping

- Simple developer experience

- Open-source architecture

- Embedding management

- Python SDK support

- Quick deployment workflows

AI-Specific Depth

- Model support: Open-source and BYO models

- RAG / knowledge integration: Vector retrieval support

- Evaluation: Basic retrieval validation workflows

- Guardrails: Query filtering support

- Observability: Lightweight telemetry and metrics

Pros

- Easy onboarding

- Lightweight architecture

- Open-source flexibility

Cons

- Less scalable than enterprise systems

- Limited orchestration support

- Advanced governance requires integrations

Security & Compliance

Varies based on deployment.

Deployment & Platforms

Cloud, local, hybrid.

Integrations & Ecosystem

Chroma integrates with modern RAG development ecosystems.

- LangChain

- LlamaIndex

- Python AI frameworks

- Embedding systems

Pricing Model

Open-source.

Best-Fit Scenarios

- RAG prototyping

- Lightweight AI assistants

- Developer experimentation

8 — Milvus

One-line verdict: Best high-performance distributed vector database for large-scale enterprise retrieval.

Short description: Milvus provides distributed vector search infrastructure optimized for scalable retrieval, embeddings, and high-throughput RAG systems.

Standout Capabilities

- Distributed vector search

- GPU acceleration support

- High-throughput retrieval

- Multi-tenant architecture

- Scalable indexing workflows

- Real-time retrieval

- Enterprise infrastructure support

AI-Specific Depth

- Model support: Open-source and hosted models

- RAG / knowledge integration: Embedding retrieval workflows

- Evaluation: Search quality and retrieval analysis

- Guardrails: Access and deployment controls

- Observability: Retrieval performance telemetry

Pros

- High scalability

- Strong distributed architecture

- GPU-aware performance optimization

Cons

- Operational complexity

- Requires infrastructure expertise

- Less orchestration tooling

Security & Compliance

Deployment-level RBAC and access controls supported.

Deployment & Platforms

Cloud, hybrid, on-prem.

Integrations & Ecosystem

Milvus integrates with enterprise retrieval systems.

- LangChain

- LlamaIndex

- AI orchestration platforms

- Python SDKs

- Vector retrieval systems

Pricing Model

Open-source with enterprise offerings.

Best-Fit Scenarios

- Enterprise-scale vector search

- Distributed retrieval systems

- High-throughput AI applications

9 — Vespa

One-line verdict: Best real-time retrieval engine for large-scale search and generative AI systems.

Short description: Vespa combines search, ranking, retrieval, and AI serving infrastructure into a scalable platform for real-time AI systems.

Standout Capabilities

- Real-time retrieval

- Large-scale search infrastructure

- Multi-modal ranking

- Semantic retrieval

- AI serving integration

- Distributed architecture

- High-performance query handling

AI-Specific Depth

- Model support: Hosted and BYO models

- RAG / knowledge integration: Search and ranking workflows

- Evaluation: Retrieval ranking evaluation

- Guardrails: Query and ranking controls

- Observability: Search telemetry and operational monitoring

Pros

- Extremely scalable architecture

- Strong real-time retrieval

- Enterprise-grade performance

Cons

- Steeper operational complexity

- Smaller ecosystem than LangChain

- Requires infrastructure engineering expertise

Security & Compliance

Enterprise deployment controls and security workflows supported.

Deployment & Platforms

Cloud, hybrid, on-prem.

Integrations & Ecosystem

Vespa integrates with large-scale retrieval and AI systems.

- Search systems

- AI serving infrastructure

- Embedding workflows

- Enterprise APIs

Pricing Model

Open-source with enterprise support.

Best-Fit Scenarios

- Real-time AI retrieval

- Large-scale enterprise search

- High-performance AI serving

10 — Redis Vector Search

One-line verdict: Best ultra-low-latency retrieval platform for lightweight production RAG systems.

Short description: Redis Vector Search extends Redis with embedding search and retrieval workflows optimized for fast and lightweight AI applications.

Standout Capabilities

- In-memory vector retrieval

- Ultra-low-latency search

- Lightweight architecture

- Hybrid search support

- Multi-tenant workflows

- API-driven integrations

- Fast deployment support

AI-Specific Depth

- Model support: Open-source and hosted models

- RAG / knowledge integration: Embedding retrieval support

- Evaluation: Search latency monitoring

- Guardrails: Access policies and filtering

- Observability: Query telemetry and monitoring

Pros

- Very low latency

- Easy operational model

- Good for lightweight production systems

Cons

- Less scalable for massive datasets

- Limited orchestration capabilities

- Enterprise workflows may require integrations

Security & Compliance

Authentication, RBAC, and deployment-level controls available.

Deployment & Platforms

Cloud, hybrid, on-prem.

Integrations & Ecosystem

Redis integrates broadly with AI ecosystems.

- LangChain

- LlamaIndex

- Python AI frameworks

- Embedding workflows

- Search APIs

Pricing Model

Open-source and enterprise subscription options.

Best-Fit Scenarios

- Lightweight production RAG

- Low-latency AI retrieval

- Fast inference workflows

Comparison Table

| Tool | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| LangChain | AI orchestration | Cloud / Hybrid / On-prem | Multi-model | Workflow flexibility | Complexity growth | N/A |
| LlamaIndex | Enterprise indexing | Cloud / Hybrid / On-prem | BYO and hosted | Structured retrieval | Smaller ecosystem | N/A |
| Haystack | Search-heavy RAG | Cloud / Hybrid / On-prem | Multi-model | Hybrid retrieval | Scaling complexity | N/A |
| DSPy | Retrieval optimization | Cloud / Local / Hybrid | Multi-model | Programmatic optimization | Learning curve | N/A |
| Weaviate | Semantic vector search | Cloud / Hybrid / On-prem | Multi-model | Open-source retrieval | Operational management | N/A |
| Pinecone | Managed vector retrieval | Cloud | Hosted and BYO | Scalability | Vendor lock-in | N/A |
| Chroma | Lightweight RAG | Cloud / Local / Hybrid | BYO models | Simplicity | Limited scalability | N/A |
| Milvus | Distributed vector retrieval | Cloud / Hybrid / On-prem | Multi-model | High performance | Operational complexity | N/A |
| Vespa | Real-time enterprise retrieval | Cloud / Hybrid / On-prem | BYO models | Real-time scalability | Infrastructure complexity | N/A |
| Redis Vector Search | Low-latency retrieval | Cloud / Hybrid / On-prem | Multi-model | Fast retrieval | Limited orchestration | N/A |

Scoring & Evaluation

These scores are comparative rather than absolute. Enterprise orchestration platforms score highly for flexibility and scalability, while vector databases score higher for retrieval performance and operational specialization. Teams should evaluate based on orchestration complexity, retrieval quality, latency requirements, governance maturity, and infrastructure scale.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| LangChain | 9 | 8 | 8 | 10 | 7 | 8 | 7 | 9 | 8.4 |
| LlamaIndex | 8 | 8 | 7 | 9 | 8 | 8 | 7 | 8 | 8.0 |
| Haystack | 8 | 8 | 7 | 8 | 7 | 8 | 7 | 8 | 7.8 |
| DSPy | 8 | 9 | 7 | 7 | 6 | 8 | 7 | 7 | 7.6 |
| Weaviate | 8 | 8 | 8 | 8 | 7 | 8 | 8 | 8 | 7.9 |
| Pinecone | 9 | 8 | 8 | 9 | 8 | 8 | 8 | 9 | 8.4 |
| Chroma | 7 | 7 | 6 | 8 | 9 | 9 | 6 | 7 | 7.5 |
| Milvus | 9 | 8 | 8 | 8 | 6 | 9 | 8 | 8 | 8.1 |
| Vespa | 9 | 9 | 8 | 8 | 6 | 9 | 8 | 8 | 8.2 |
| Redis Vector Search | 8 | 7 | 7 | 8 | 8 | 9 | 7 | 8 | 7.9 |

Top 3 for Enterprise: LangChain, Pinecone, Vespa

Top 3 for SMB: LlamaIndex, Haystack, Chroma

Top 3 for Developers: LangChain, DSPy, Chroma

Which Retrieval-Augmented Generation (RAG) Framework Is Right for You?

Solo / Freelancer

Chroma, Redis Vector Search, and lightweight LangChain workflows are good for rapid experimentation and low-cost AI applications.

SMB

LlamaIndex, Haystack, and Chroma balance retrieval flexibility, operational simplicity, and manageable infrastructure requirements.

Mid-Market

LangChain, Weaviate, and Pinecone provide scalable orchestration and retrieval capabilities for growing AI workloads.

Enterprise

LangChain, Pinecone, Milvus, and Vespa provide enterprise scalability, observability, governance, and high-throughput retrieval systems.

Regulated Industries

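LlamaIndex, Haystack, Pinecone, and LangChain are reasonable candidates for compliance-heavy AI systems because they emphasize governance, structured retrieval, and observability. Security for most of these tools depends on the deployment architecture, so validate the relevant controls in your own environment.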

Budget vs Premium

Open-source systems reduce licensing cost but increase infrastructure responsibility. Managed services simplify operations while increasing long-term platform dependency.

Build vs Buy

Organizations with strong engineering teams can build custom RAG systems using open-source frameworks. Enterprises prioritizing operational simplicity often choose managed vector infrastructure and enterprise orchestration tooling.

Implementation Playbook

30 Days

- Identify enterprise knowledge sources

- Implement vector indexing workflows

- Connect retrieval with LLM inference

- Define retrieval quality metrics

- Establish baseline observability

60 Days

- Add hybrid retrieval workflows

- Configure evaluation pipelines

- Implement prompt injection defenses

- Add caching and latency optimization

- Connect observability and tracing systems

90 Days

- Scale retrieval infrastructure

- Implement governance workflows

- Add multi-agent retrieval orchestration

- Optimize cost and model routing

- Standardize enterprise retrieval patterns

Common Mistakes & How to Avoid Them

- Using poor chunking strategies

- Ignoring retrieval evaluation workflows

- No hallucination monitoring

- Missing prompt injection defenses

- Weak metadata and lineage tracking

- Over-retrieving irrelevant context

- No caching or latency optimization

- Vendor lock-in without portability planning

- Missing governance workflows

- Poor embedding model selection

- Weak observability and tracing

- No structured retrieval for enterprise systems

- Ignoring cost visibility and token usage

- Treating retrieval quality as static
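To make the first mistake in the list above more concrete, the sketch below shows one simple chunking approach: fixed-size word windows with overlap, so that a sentence falling on a boundary still appears intact in at least one chunk. The function name and the default sizes are illustrative assumptions, not recommendations from any framework; real pipelines often chunk by tokens, sentences, or document structure instead.

```python
def chunk_words(text: str, chunk_size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into word windows of chunk_size that share `overlap` words."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be at least 0 and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the window already reached the end of the text
    return chunks

# Twelve placeholder words: w0 ... w11
sample = " ".join(f"w{i}" for i in range(12))
chunks = chunk_words(sample, chunk_size=5, overlap=2)
```

With these settings, each chunk repeats the last two words of the previous one, which trades some index size for better boundary coverage.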

FAQs

1. What is a RAG framework?

A RAG framework combines retrieval systems with LLMs to generate grounded responses using external knowledge sources.
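The retrieve-then-generate flow described in this answer can be sketched in a few lines of plain Python. Everything here is a simplifying assumption: the in-memory `DOCUMENTS` dictionary stands in for a real knowledge source, the token-overlap scoring stands in for semantic retrieval, and the final LLM call is omitted, so the sketch stops at building the grounded prompt.

```python
from collections import Counter

# Hypothetical in-memory knowledge base; real systems would query a vector store.
DOCUMENTS = {
    "refunds": "Refunds are issued within 14 days of a return request.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
    "warranty": "Hardware products carry a two year limited warranty.",
}

def tokenize(text: str) -> list[str]:
    """Lowercase words with surrounding punctuation stripped."""
    return [t.strip(".,?!").lower() for t in text.split() if t.strip(".,?!")]

def retrieve(question: str, k: int = 2) -> list[str]:
    """Rank documents by shared tokens with the question (toy scoring)."""
    q = Counter(tokenize(question))
    scored = []
    for doc_id, text in DOCUMENTS.items():
        overlap = sum((q & Counter(tokenize(text))).values())
        scored.append((overlap, doc_id))
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    # Drop documents with no overlap at all rather than padding the context.
    return [doc_id for overlap, doc_id in scored[:k] if overlap > 0]

def build_prompt(question: str, doc_ids: list[str]) -> str:
    """Assemble the grounded prompt that would be sent to an LLM."""
    context = "\n".join(f"[{d}] {DOCUMENTS[d]}" for d in doc_ids)
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )

question = "How long does standard shipping take?"
doc_ids = retrieve(question)
prompt = build_prompt(question, doc_ids)
```

In a real deployment, `prompt` would be passed to whichever model the framework is configured to use.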

2. Why are RAG systems important?

They reduce hallucinations, improve factual accuracy, and allow AI systems to access real-time enterprise information.

3. What is the difference between LangChain and LlamaIndex?

LangChain focuses more on orchestration and agents, while LlamaIndex focuses more on structured retrieval and indexing workflows.

4. What are vector databases used for in RAG?

Vector databases store embeddings and enable semantic similarity search for retrieval workflows.
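As a rough illustration of semantic similarity search, the sketch below ranks stored vectors by cosine similarity to a query vector. The three-dimensional embeddings and document names are made up for readability; real embeddings have hundreds or thousands of dimensions, and vector databases typically use approximate nearest-neighbor indexes rather than the brute-force scan shown here.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

# Hypothetical toy embeddings keyed by document ID.
INDEX = {
    "doc_pricing": [0.9, 0.1, 0.0],
    "doc_security": [0.0, 0.8, 0.6],
    "doc_billing": [0.8, 0.3, 0.1],
}

def top_k(query_vec: list[float], k: int = 2) -> list[tuple[str, float]]:
    """Brute-force scan: score every stored vector, return the k most similar."""
    scored = [(cosine_similarity(query_vec, vec), doc_id) for doc_id, vec in INDEX.items()]
    scored.sort(reverse=True)
    return [(doc_id, score) for score, doc_id in scored[:k]]

# A query vector that points mostly along the first dimension.
results = top_k([1.0, 0.2, 0.0])
```

Here the pricing and billing vectors rank above the security vector because they point in nearly the same direction as the query.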

5. Which vector databases are most popular for RAG?

Pinecone, Weaviate, Milvus, Chroma, and Redis Vector Search are widely used.

6. Can RAG frameworks work with open-source models?

Yes. Most modern RAG frameworks support hosted, open-source, and BYO models.

7. What is hybrid retrieval?

Hybrid retrieval combines semantic vector search with keyword or sparse search to improve retrieval quality.
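One common way to combine the two result lists is reciprocal rank fusion (RRF), sketched below. This is only one possible fusion method, and nothing here implies that a particular framework uses it; the document IDs are hypothetical, and the constant `k=60` is a conventional default rather than a tuned value.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists: each document scores 1 / (k + rank) per list it appears in."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken alphabetically for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))

# Hypothetical outputs of a dense (vector) retriever and a sparse (keyword) retriever.
dense_ranking = ["doc_a", "doc_b", "doc_c"]
sparse_ranking = ["doc_c", "doc_a", "doc_d"]

fused = reciprocal_rank_fusion([dense_ranking, sparse_ranking])
```

Documents that appear in both lists (`doc_a` and `doc_c`) rise above those found by only one retriever, which is the main effect hybrid retrieval aims for.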

8. How do organizations evaluate RAG quality?

They measure retrieval relevance, hallucination rate, latency, answer quality, and grounding accuracy.
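Retrieval relevance, the first item in this answer, is often tracked with metrics such as recall@k and mean reciprocal rank (MRR). The sketch below computes both over a tiny hand-labeled evaluation set; the document IDs and relevance labels are invented for illustration, and a real evaluation set would be much larger and drawn from actual user queries.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top k results."""
    if not relevant:
        return 0.0
    hits = len(set(retrieved[:k]) & relevant)
    return hits / len(relevant)

def reciprocal_rank(retrieved: list[str], relevant: set[str]) -> float:
    """1 / rank of the first relevant result, or 0.0 if none was retrieved."""
    for rank, doc_id in enumerate(retrieved, start=1):
        if doc_id in relevant:
            return 1.0 / rank
    return 0.0

# Hypothetical labeled queries: what the retriever returned vs. what was relevant.
EVAL_SET = [
    {"retrieved": ["d1", "d4", "d2"], "relevant": {"d1", "d2"}},
    {"retrieved": ["d7", "d3", "d9"], "relevant": {"d3"}},
]

mean_recall_at_2 = sum(
    recall_at_k(e["retrieved"], e["relevant"], k=2) for e in EVAL_SET
) / len(EVAL_SET)

mrr = sum(
    reciprocal_rank(e["retrieved"], e["relevant"]) for e in EVAL_SET
) / len(EVAL_SET)
```

Tracking these numbers across index or embedding changes turns retrieval quality into a regression test rather than a one-time check.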

9. Are RAG frameworks production-ready?

Yes. LangChain, Haystack, Pinecone, Weaviate, and Vespa are commonly used in production AI systems.

10. What are common RAG security risks?

Prompt injection, data leakage, unauthorized retrieval, and weak access controls are major risks.
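One way to reduce unauthorized retrieval is to filter candidate chunks against the caller's permissions before anything reaches the prompt. The sketch below assumes each chunk carries an `allowed_roles` metadata field; that field, the `Chunk` class, and the role names are hypothetical, and many vector stores can apply an equivalent metadata filter at query time instead.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class Chunk:
    chunk_id: str
    text: str
    # Hypothetical metadata: roles permitted to see this chunk.
    allowed_roles: frozenset = field(default_factory=frozenset)

CHUNKS = [
    Chunk("hr-001", "Salary bands by level.", frozenset({"hr"})),
    Chunk("kb-001", "How to reset your password.", frozenset({"hr", "support", "engineering"})),
    Chunk("eng-001", "Production deploy runbook.", frozenset({"engineering"})),
]

def authorized_chunks(user_roles: set[str], candidates: list[Chunk]) -> list[Chunk]:
    """Keep only chunks that share at least one role with the user.

    Chunks with no allowed roles are treated as visible to nobody (deny by default).
    """
    return [c for c in candidates if user_roles & c.allowed_roles]

# A support agent should see the shared knowledge base article and nothing else.
visible = authorized_chunks({"support"}, CHUNKS)
```

Filtering before prompt assembly matters because once restricted text is in the context window, the model may repeat it regardless of later instructions.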

11. What observability features matter most for RAG?

Tracing, retrieval telemetry, latency metrics, token usage, and hallucination monitoring are critical.

12. How should organizations start building a RAG system?

Start with one trusted knowledge source, implement retrieval evaluation, add observability, then scale gradually.

Conclusion

Retrieval-Augmented Generation (RAG) frameworks have become foundational infrastructure for enterprise generative AI systems. LangChain and LlamaIndex are widely adopted for orchestration and structured retrieval workflows, while vector platforms such as Pinecone, Weaviate, Milvus, Chroma, and Redis Vector Search provide scalable semantic retrieval infrastructure. Enterprise-scale platforms like Vespa support high-throughput, real-time retrieval for complex AI systems. As organizations increasingly deploy AI copilots, enterprise assistants, and knowledge-grounded generative applications, RAG systems must balance retrieval quality, latency, governance, observability, and operational scalability. The best framework depends on orchestration complexity, infrastructure maturity, retrieval requirements, and governance needs. Start with one focused retrieval workflow, evaluate grounding quality carefully, add tracing and guardrails, and then expand toward enterprise-scale RAG operations.
