Introduction

Model Canary & A/B Deployment Tools help teams release machine learning models safely by gradually exposing new versions to selected traffic, comparing performance against existing versions, and rolling back quickly if problems appear. These tools reduce production risk by allowing teams to test models with real users, controlled traffic percentages, shadow traffic, champion-challenger setups, and experiment-based routing before full rollout.

In production AI systems, even a small model change can impact latency, accuracy, user experience, compliance, or business metrics. Canary and A/B deployment tools make model releases more measurable and reversible. Real-world use cases include testing a new recommendation model on limited traffic, validating a fraud model before full rollout, comparing LLM prompt/model variants, releasing computer vision models safely, routing users between model versions, and monitoring model quality before promotion.

Buyers should evaluate traffic splitting, rollback controls, experiment tracking, monitoring integrations, deployment automation, governance workflows, Kubernetes support, cloud ecosystem fit, model registry integration, and support for real-time and batch inference.

Best for: MLOps teams, AI platform engineers, product experimentation teams, enterprises deploying production ML models, and organizations needing controlled model rollout workflows

Not ideal for: offline-only experimentation, early prototypes, or teams without production deployment pipelines

What’s Changed in Model Canary & A/B Deployment Tools

- Canary releases are now standard for production AI model deployment

- A/B testing has expanded from product experiments into model quality evaluation

- Shadow deployment is increasingly used for high-risk AI systems

- LLM deployment workflows now require prompt, model, and routing experimentation

- Kubernetes-native model serving platforms now include traffic splitting

- Managed cloud AI platforms provide built-in model version rollout controls

- Observability is now tied directly to rollout decisions

- Model registries increasingly integrate with deployment approvals

- Feature flags are used to control model exposure by user segment

- Drift monitoring is used during canary rollout validation

- Automated rollback is becoming more common in AI deployment workflows

- Experiment metrics increasingly combine business, latency, quality, and safety signals

Quick Buyer Checklist

- Traffic splitting and percentage-based rollout

- Canary deployment support

- A/B testing and experiment tracking

- Shadow deployment support

- Fast rollback workflows

- Model registry integration

- Monitoring and alerting integration

- CI/CD pipeline compatibility

- Kubernetes or cloud-native support

- Governance and approval workflows

- Support for LLM and traditional ML models

- Cost and latency observability

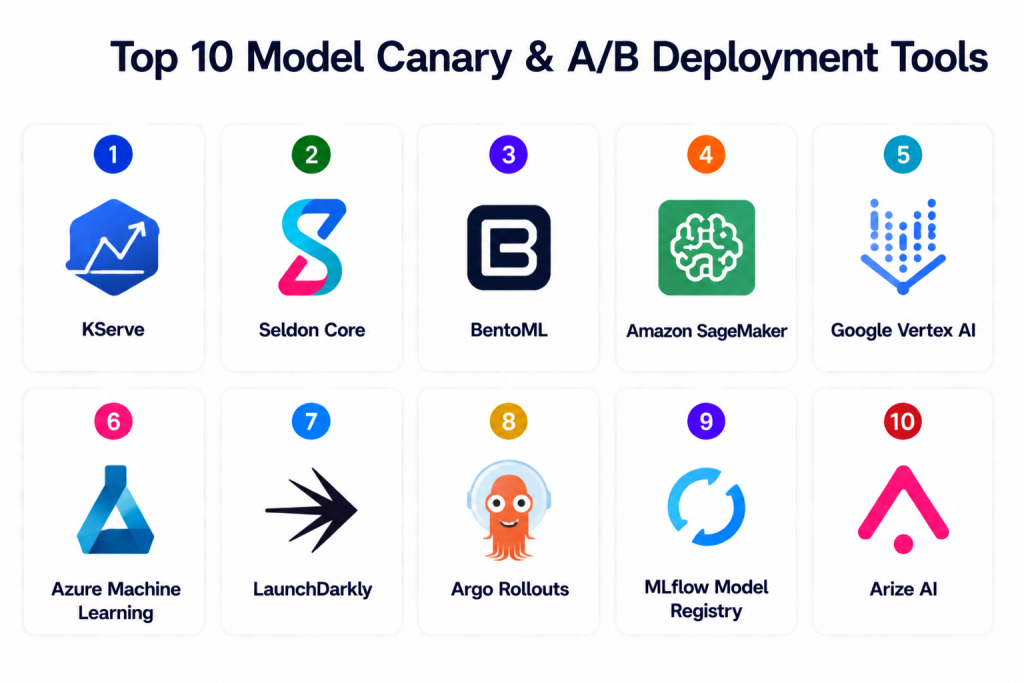

Top 10 Model Canary & A/B Deployment Tools

1 — KServe

One-line verdict: Best Kubernetes-native tool for controlled model rollout, canary deployment, and traffic splitting.

Short description: KServe provides Kubernetes-native model serving with support for canary rollouts, traffic splitting, autoscaling, and multiple ML runtimes. It is a strong choice for platform teams building portable inference infrastructure across cloud and on-prem environments.

Standout Capabilities

- Canary deployment support

- Traffic splitting between model versions

- Kubernetes-native serving

- Autoscaling with Knative

- Multi-framework model support

- Custom predictor support

- Integration with Kubeflow workflows

AI-Specific Depth

- Model support: Multi-framework and BYO models

- RAG / knowledge integration: Works with custom RAG serving stacks

- Evaluation: External evaluation integration

- Guardrails: Kubernetes policies and routing controls

- Observability: Prometheus and Kubernetes metrics

Pros

- Strong cloud portability

- Good for enterprise MLOps platforms

- Supports production rollout controls

Cons

- Requires Kubernetes expertise

- Setup can be complex

- Experiment analytics need external tools

Security & Compliance

RBAC, namespace isolation, ingress controls, service mesh security, and Kubernetes policy enforcement. Certifications are not publicly stated.

Deployment & Platforms

Cloud, on-prem, hybrid, Kubernetes.

Integrations & Ecosystem

KServe integrates well with Kubernetes-based AI stacks and deployment workflows.

- Kubernetes

- Kubeflow

- Knative

- Istio

- Prometheus

- Grafana

- CI/CD systems

Pricing Model

Open-source.

Best-Fit Scenarios

- Kubernetes model serving

- Canary rollout workflows

- Platform teams needing portability
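As a rough illustration of the canary support described for KServe, the sketch below builds an InferenceService manifest as a Python dictionary. The `canaryTrafficPercent` field belongs to KServe's v1beta1 predictor spec; the service name, model format, and storage URI are placeholder assumptions, not values from any real deployment.

```python
import json


def canary_inference_service(name: str, storage_uri: str, canary_percent: int) -> dict:
    """Build a KServe InferenceService manifest that routes a share of traffic to the newest revision."""
    if not 0 <= canary_percent <= 100:
        raise ValueError("canary_percent must be between 0 and 100")
    return {
        "apiVersion": "serving.kserve.io/v1beta1",
        "kind": "InferenceService",
        "metadata": {"name": name},
        "spec": {
            "predictor": {
                # Share of traffic sent to the latest revision; the rest stays on the previous one.
                "canaryTrafficPercent": canary_percent,
                "model": {
                    "modelFormat": {"name": "sklearn"},  # assumed model format
                    "storageUri": storage_uri,           # hypothetical artifact location
                },
            }
        },
    }


manifest = canary_inference_service("fraud-model", "gs://example-bucket/fraud/v2", 10)
print(json.dumps(manifest, indent=2))
```

The printed JSON can be saved to a file and applied with `kubectl apply -f`, since Kubernetes accepts JSON manifests as well as YAML.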

2 — Seldon Core

One-line verdict: Best enterprise Kubernetes platform for advanced canary, A/B, and model graph deployments.

Short description: Seldon Core enables Kubernetes-native deployment of machine learning models with canary releases, A/B testing, model graphs, explainability integrations, and enterprise-grade rollout patterns.

Standout Capabilities

- Canary deployment support

- A/B testing workflows

- Model graph orchestration

- Traffic routing policies

- Explainer integration

- Monitoring support

- Multi-framework inference serving

AI-Specific Depth

- Model support: Multi-framework and BYO models

- RAG / knowledge integration: N/A

- Evaluation: External evaluation and monitoring workflows

- Guardrails: Traffic policies and Kubernetes controls

- Observability: Prometheus and Grafana integrations

Pros

- Strong deployment control

- Good for complex model graphs

- Enterprise-ready Kubernetes architecture

Cons

- Requires Kubernetes knowledge

- Advanced configuration can be complex

- Some governance workflows require add-ons

Security & Compliance

RBAC, service mesh security, ingress controls, audit logging through Kubernetes, and encryption through infrastructure. Certifications are not publicly stated.

Deployment & Platforms

Cloud, on-prem, hybrid, Kubernetes.

Integrations & Ecosystem

Seldon Core fits well into modern Kubernetes and MLOps environments.

- Kubernetes

- Istio

- Prometheus

- Grafana

- CI/CD tools

- Model registries

- Monitoring platforms

Pricing Model

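Source-available with enterprise offerings. Earlier releases were open-source; recent releases are distributed under a Business Source License, so verify the current terms before production use.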

Best-Fit Scenarios

- Enterprise model rollout

- A/B testing for inference endpoints

- Complex multi-model deployments

3 — BentoML

One-line verdict: Best developer-friendly platform for packaging models and supporting controlled rollout workflows.

Short description: BentoML helps teams package models into production APIs and deploy them across cloud, containers, Kubernetes, and serverless environments. Canary and A/B workflows can be implemented through deployment targets and traffic management layers.

Standout Capabilities

- Model packaging

- API-first serving

- Multi-framework support

- Containerized deployment

- Versioned services

- Deployment automation

- Cloud and Kubernetes support

AI-Specific Depth

- Model support: Multi-framework and BYO models

- RAG / knowledge integration: Custom connector support

- Evaluation: External testing integration

- Guardrails: API-level controls and deployment policies

- Observability: Logs and metrics through deployment stack

Pros

- Excellent developer experience

- Flexible deployment targets

- Good model packaging workflow

Cons

- Canary routing depends on deployment layer

- Enterprise governance needs additional setup

- Complex rollout analytics require integrations

Security & Compliance

Authentication, encryption, RBAC, audit controls, and security depend on deployment environment. Certifications are not publicly stated.

Deployment & Platforms

Cloud, on-prem, hybrid, containers, Kubernetes, serverless.

Integrations & Ecosystem

BentoML works well with CI/CD pipelines and modern AI API deployments.

- Docker

- Kubernetes

- CI/CD tools

- ML frameworks

- API gateways

- Monitoring tools

- Model registries

Pricing Model

Open-source with enterprise and managed options.

Best-Fit Scenarios

- AI API deployment

- Developer-led model releases

- Multi-framework serving workflows

4 — Amazon SageMaker

One-line verdict: Best AWS-native platform for managed canary, shadow, and A/B model deployment.

Short description: Amazon SageMaker provides managed model deployment, endpoint variants, shadow testing, traffic shifting, monitoring, and integration with AWS MLOps workflows.

Standout Capabilities

- Production variant traffic splitting

- Shadow testing

- Managed endpoints

- Automatic rollback triggered by CloudWatch alarms during traffic shifting

- Model registry integration

- Endpoint monitoring

- CI/CD integration

AI-Specific Depth

- Model support: AWS ecosystem and BYO models

- RAG / knowledge integration: AWS data ecosystem support

- Evaluation: SageMaker evaluation and monitoring workflows

- Guardrails: IAM and policy controls

- Observability: CloudWatch and SageMaker dashboards

Pros

- Fully managed deployment workflow

- Strong AWS integration

- Good enterprise security controls

Cons

- AWS lock-in

- Pricing complexity

- Less portable than open-source stacks

Security & Compliance

IAM, encryption, audit logging, network isolation, and AWS governance controls. Certifications follow AWS compliance programs.

Deployment & Platforms

AWS cloud.

Integrations & Ecosystem

SageMaker integrates deeply with AWS AI, data, and deployment services.

- SageMaker Pipelines

- SageMaker Model Registry

- CloudWatch

- S3

- IAM

- Lambda

- CI/CD services

Pricing Model

Usage-based.

Best-Fit Scenarios

- AWS-native model rollout

- Shadow testing production models

- Managed enterprise MLOps
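SageMaker splits traffic across production variants in proportion to each variant's weight divided by the sum of all weights. The sketch below shows that arithmetic on a payload shaped like the `ProductionVariants` list passed when creating an endpoint configuration. The variant names, model names, and instance settings are illustrative assumptions, and nothing here calls AWS.

```python
def traffic_shares(production_variants: list[dict]) -> dict[str, float]:
    """Return the fraction of requests each variant receives, given its relative weight."""
    total = sum(v["InitialVariantWeight"] for v in production_variants)
    if total <= 0:
        raise ValueError("at least one variant needs a positive weight")
    return {v["VariantName"]: v["InitialVariantWeight"] / total for v in production_variants}


# Hypothetical champion/challenger pair with a 90/10 split.
variants = [
    {
        "VariantName": "champion",
        "ModelName": "churn-v1",
        "InstanceType": "ml.m5.large",
        "InitialInstanceCount": 2,
        "InitialVariantWeight": 9.0,
    },
    {
        "VariantName": "challenger",
        "ModelName": "churn-v2",
        "InstanceType": "ml.m5.large",
        "InitialInstanceCount": 1,
        "InitialVariantWeight": 1.0,
    },
]

shares = traffic_shares(variants)
print(shares)
```

Because weights are relative, 9 and 1 produce the same split as 90 and 10; checking the computed shares before deploying helps catch a mistyped weight.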

5 — Google Vertex AI

One-line verdict: Best Google Cloud platform for managed model version rollout and traffic splitting.

Short description: Google Vertex AI supports managed model deployment, endpoint traffic splitting, model monitoring, and integrated MLOps workflows for cloud-native AI teams.

Standout Capabilities

- Endpoint traffic splitting

- Model version deployment

- Managed prediction endpoints

- Model monitoring integration

- Custom container support

- CI/CD compatibility

- Cloud-native governance

AI-Specific Depth

- Model support: Google models and BYO models

- RAG / knowledge integration: Google Cloud data ecosystem support

- Evaluation: Vertex evaluation workflows

- Guardrails: IAM and governance policies

- Observability: Cloud Monitoring dashboards

Pros

- Managed rollout workflows

- Strong Google Cloud integration

- Good support for custom containers

Cons

- Google Cloud lock-in

- Cost depends on traffic scale

- Less flexible outside cloud

Security & Compliance

IAM, encryption, audit logging, network controls, and Google Cloud governance. Certifications follow Google Cloud compliance programs.

Deployment & Platforms

Google Cloud.

Integrations & Ecosystem

Vertex AI connects model deployment with broader Google Cloud AI workflows.

- Vertex AI Pipelines

- Model Registry

- BigQuery

- Cloud Storage

- Cloud Monitoring

- CI/CD tools

Pricing Model

Usage-based.

Best-Fit Scenarios

- Google Cloud AI deployment

- Managed A/B traffic routing

- Enterprise model rollout governance

6 — Azure Machine Learning

One-line verdict: Best Azure-native model deployment platform for managed endpoints and safe rollout workflows.

Short description: Azure Machine Learning supports model deployment through managed online endpoints with traffic allocation, deployment versioning, monitoring, and governance controls.

Standout Capabilities

- Managed online endpoints

- Traffic allocation between deployments

- Model versioning

- Monitoring and logging

- CI/CD integration

- Enterprise identity controls

- Rollback workflows

AI-Specific Depth

- Model support: Azure ecosystem and BYO models

- RAG / knowledge integration: Azure data ecosystem support

- Evaluation: Azure ML evaluation workflows

- Guardrails: Azure RBAC and policy controls

- Observability: Azure Monitor dashboards

Pros

- Strong enterprise security

- Good endpoint rollout controls

- Deep Azure ecosystem integration

Cons

- Azure lock-in

- Cost can scale quickly

- Requires Azure ML knowledge

Security & Compliance

Azure RBAC, encryption, audit controls, private networking, and enterprise governance. Certifications follow Azure compliance programs.

Deployment & Platforms

Azure cloud.

Integrations & Ecosystem

Azure ML integrates with common enterprise cloud and MLOps workflows.

- Azure ML Registry

- Azure Monitor

- Azure DevOps

- GitHub Actions

- Data Lake

- Key Vault

- CI/CD pipelines

Pricing Model

Usage-based.

Best-Fit Scenarios

- Azure-native AI deployment

- Enterprise endpoint governance

- Safe traffic allocation workflows

7 — LaunchDarkly

One-line verdict: Best feature flag platform for controlling model exposure by segment and rollout percentage.

Short description: LaunchDarkly is a feature management platform that can control which users see specific model versions, prompts, or AI-powered features. It is useful when model releases need product-level segmentation and experimentation controls.

Standout Capabilities

- Feature flags

- Gradual rollout

- User segmentation

- Experimentation support

- Kill switches

- Audit logs

- Governance controls

AI-Specific Depth

- Model support: Model agnostic

- RAG / knowledge integration: N/A

- Evaluation: Business metric experimentation

- Guardrails: Kill switches and rollout policies

- Observability: Experiment and flag dashboards

Pros

- Excellent rollout control

- Strong user targeting

- Fast rollback through flags

Cons

- Not a model serving platform

- Requires integration with AI application layer

- Model metrics need external systems

Security & Compliance

RBAC, audit logs, SSO, approval workflows, and enterprise governance controls. Certifications vary by plan and vendor disclosure.

Deployment & Platforms

Cloud / SaaS.

Integrations & Ecosystem

LaunchDarkly integrates well with application delivery and experiment workflows.

- CI/CD tools

- Application frameworks

- Analytics platforms

- Observability systems

- Product experimentation tools

Pricing Model

Subscription-based.

Best-Fit Scenarios

- AI feature rollouts

- User-segmented model experiments

- Fast rollback for AI features

8 — Argo Rollouts

One-line verdict: Best Kubernetes-native rollout controller for canary and blue-green AI deployments.

Short description: Argo Rollouts extends Kubernetes deployment strategies with canary, blue-green, metric-based promotion, and automated rollback workflows.

Standout Capabilities

- Canary deployments

- Blue-green deployments

- Metric-based rollout promotion

- Automated rollback

- Kubernetes-native workflows

- GitOps compatibility

- Traffic routing integrations

AI-Specific Depth

- Model support: Framework agnostic through Kubernetes workloads

- RAG / knowledge integration: N/A

- Evaluation: Metric-based promotion through integrations

- Guardrails: Rollout policies and rollback controls

- Observability: Integrates with monitoring systems

Pros

- Strong Kubernetes rollout control

- GitOps-friendly

- Flexible metric-based promotion

Cons

- Not model-specific

- Requires Kubernetes expertise

- Needs external model monitoring

Security & Compliance

Uses Kubernetes RBAC, audit logs, namespace controls, and GitOps governance.

Deployment & Platforms

Cloud, on-prem, hybrid, Kubernetes.

Integrations & Ecosystem

Argo Rollouts fits well in GitOps and platform engineering workflows.

- Kubernetes

- Argo CD

- Istio

- NGINX

- Prometheus

- Datadog

- CI/CD systems

Pricing Model

Open-source.

Best-Fit Scenarios

- Kubernetes canary deployment

- GitOps model rollout

- Metric-driven production promotion
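To make the stepwise canary pattern concrete, the sketch below generates a list of canary steps in the shape used by an Argo Rollouts `strategy.canary.steps` section. `setWeight` and `pause` are step types in the Rollout spec; the specific weights and pause duration are illustrative assumptions.

```python
def canary_steps(weights: list[int], pause: str = "10m") -> list[dict]:
    """Alternate setWeight and pause steps, pausing after every weight below 100."""
    steps: list[dict] = []
    for weight in weights:
        if not 0 < weight <= 100:
            raise ValueError("each weight must be in the range 1..100")
        steps.append({"setWeight": weight})
        if weight < 100:
            # Pause so metrics can be reviewed (or analyzed automatically) before the next increase.
            steps.append({"pause": {"duration": pause}})
    return steps


strategy = {"canary": {"steps": canary_steps([10, 50, 100])}}
print(strategy)
```

Generating the steps from a short list of weights keeps rollout templates consistent across teams, with only the schedule changing per model.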

9 — MLflow Model Registry

One-line verdict: Best lightweight model registry foundation for version promotion and deployment governance.

Short description: MLflow Model Registry helps teams manage model versions, lifecycle stages (superseded by aliases and tags in recent MLflow releases), approvals, metadata, and deployment promotion workflows. It is often paired with serving platforms for canary and A/B deployment.

Standout Capabilities

- Model versioning

- Stage transitions

- Approval workflows

- Artifact tracking

- Deployment metadata

- Experiment linkage

- API-based automation

AI-Specific Depth

- Model support: Multi-framework

- RAG / knowledge integration: N/A

- Evaluation: Experiment and metric comparison

- Guardrails: Approval policies through workflow design

- Observability: Registry metadata and experiment logs

Pros

- Simple model lifecycle control

- Strong open-source adoption

- Good for deployment governance

Cons

- Not a traffic router

- Needs serving platform integration

- Enterprise governance is limited

Security & Compliance

Access control depends on deployment. Enterprise distributions may add stronger governance. Certifications are not publicly stated.

Deployment & Platforms

Cloud, on-prem, hybrid.

Integrations & Ecosystem

MLflow works across common MLOps and serving systems.

- MLflow Tracking

- CI/CD pipelines

- Model serving platforms

- Data platforms

- Experiment workflows

Pricing Model

Open-source with managed options through ecosystem providers.

Best-Fit Scenarios

- Model version governance

- Approval-based deployment promotion

- Lightweight MLOps workflows

10 — Arize AI

One-line verdict: Best observability platform for validating model canaries and production A/B experiments.

Short description: Arize AI monitors deployed model performance, drift, prediction quality, and production behavior. It helps teams compare canary and baseline model performance before full rollout.

Standout Capabilities

- Model performance monitoring

- Drift detection

- Production comparison workflows

- Root-cause analysis

- Feature-level observability

- Alerts and dashboards

- LLM observability support

AI-Specific Depth

- Model support: Multi-model and BYO

- RAG / knowledge integration: Embedding and vector observability

- Evaluation: Production performance comparison

- Guardrails: Alerting and anomaly policies

- Observability: Full AI monitoring dashboards

Pros

- Strong production validation

- Excellent observability

- Useful for canary decision-making

Cons

- Not a deployment tool itself

- Requires integration with serving stack

- Premium pricing

Security & Compliance

RBAC, encryption, audit controls, and enterprise governance features. Certifications are not publicly stated.

Deployment & Platforms

Cloud / Hybrid.

Integrations & Ecosystem

Arize AI complements model serving, deployment, and monitoring workflows.

- Model serving systems

- Feature stores

- Data warehouses

- LLM pipelines

- Alerting tools

- MLOps platforms

Pricing Model

Enterprise subscription.

Best-Fit Scenarios

- Canary validation

- Production model monitoring

- A/B performance comparison

Comparison Table

| Tool | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| KServe | Kubernetes canary serving | Cloud / Hybrid / On-prem | Multi-framework | Traffic splitting | Kubernetes complexity | N/A |
| Seldon Core | Enterprise A/B deployments | Cloud / Hybrid / On-prem | Multi-framework | Model graph rollout | Setup effort | N/A |
| BentoML | Developer-led model APIs | Cloud / Hybrid | Multi-framework | Packaging workflow | Needs routing layer | N/A |
| SageMaker | AWS managed rollout | Cloud | AWS + BYO | Shadow testing | AWS lock-in | N/A |
| Vertex AI | Google managed rollout | Cloud | Google + BYO | Traffic splitting | GCP lock-in | N/A |
| Azure ML | Azure managed endpoints | Cloud | Azure + BYO | Traffic allocation | Azure lock-in | N/A |
| LaunchDarkly | Feature-level AI rollout | Cloud | Model agnostic | User targeting | Not model serving | N/A |
| Argo Rollouts | Kubernetes rollout control | Cloud / Hybrid / On-prem | Framework agnostic | Metric-based canary | Not ML-specific | N/A |
| MLflow Registry | Model version governance | Cloud / Hybrid / On-prem | Multi-framework | Lifecycle tracking | No traffic routing | N/A |
| Arize AI | Canary validation | Cloud / Hybrid | Multi-model | Observability | Not deployment tool | N/A |

Scoring & Evaluation

Scoring is comparative, not absolute. Deployment platforms score higher in rollout execution, while monitoring and registry tools score higher in governance and validation. Teams should evaluate tools based on where the biggest gap exists: traffic routing, experiment management, model governance, user segmentation, or production validation.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| KServe | 9 | 8 | 8 | 9 | 6 | 8 | 8 | 8 | 8.0 |
| Seldon Core | 9 | 8 | 8 | 8 | 6 | 8 | 8 | 8 | 7.9 |
| BentoML | 8 | 7 | 7 | 8 | 8 | 8 | 7 | 8 | 7.6 |
| SageMaker | 9 | 8 | 9 | 9 | 8 | 8 | 9 | 9 | 8.6 |
| Vertex AI | 9 | 8 | 9 | 9 | 8 | 8 | 9 | 9 | 8.6 |
| Azure ML | 9 | 8 | 9 | 9 | 8 | 8 | 9 | 9 | 8.6 |
| LaunchDarkly | 8 | 8 | 9 | 9 | 9 | 8 | 9 | 9 | 8.6 |
| Argo Rollouts | 8 | 8 | 8 | 9 | 7 | 8 | 8 | 8 | 8.0 |
| MLflow Registry | 7 | 8 | 7 | 8 | 8 | 8 | 7 | 8 | 7.6 |
| Arize AI | 8 | 9 | 8 | 9 | 8 | 8 | 9 | 8 | 8.4 |
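No category weights are stated for the table, and the totals shown match the unweighted mean of the eight category scores rounded to one decimal place. The short check below reproduces three rows copied from the table; a team with different priorities could replace the plain mean with its own weights.

```python
# Category scores copied from the scoring table (Core ... Support).
scores = {
    "KServe": [9, 8, 8, 9, 6, 8, 8, 8],
    "SageMaker": [9, 8, 9, 9, 8, 8, 9, 9],
    "Arize AI": [8, 9, 8, 9, 8, 8, 9, 8],
}


def total(category_scores: list[int]) -> float:
    """Unweighted mean of the category scores, rounded to one decimal place."""
    return round(sum(category_scores) / len(category_scores), 1)


totals = {tool: total(values) for tool, values in scores.items()}
print(totals)
```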

Top 3 for Enterprise: SageMaker, Vertex AI, Azure ML

Top 3 for SMB: BentoML, MLflow Registry, LaunchDarkly

Top 3 for Developers: KServe, Argo Rollouts, BentoML

Which Model Canary & A/B Deployment Tool Is Right for You

Solo / Freelancer

BentoML and MLflow Registry are practical choices for lightweight model versioning and API deployment workflows. They give developers control without requiring large platform teams.

SMB

BentoML, LaunchDarkly, and Argo Rollouts work well for smaller teams that need controlled rollout, fast rollback, and flexible deployment patterns.

Mid-Market

KServe, Seldon Core, and Arize AI provide stronger production workflows for model rollout, traffic routing, and validation.

Enterprise

SageMaker, Vertex AI, Azure ML, and LaunchDarkly are strong options for enterprise teams that need governance, auditability, user targeting, and managed deployment workflows.

Regulated Industries

Managed cloud platforms and tools with strong audit controls are better for regulated environments. Arize AI also helps validate model performance during rollout.

Budget vs Premium

Open-source tools reduce licensing costs but require engineering expertise. Managed cloud services and enterprise feature flag platforms simplify operations but may increase long-term cost.

Build vs Buy

Build with Kubernetes-native tools if your team has platform engineering maturity. Buy managed services when speed, governance, and operational simplicity matter more.

Implementation Playbook

30 Days

- Identify one production model for controlled rollout

- Define baseline model metrics

- Set canary traffic percentage

- Configure rollback conditions

- Connect monitoring dashboards

60 Days

- Add A/B experiment metrics

- Integrate model registry and CI/CD workflows

- Add feature flag controls if needed

- Configure alerting and anomaly detection

- Validate performance against baseline

90 Days

- Standardize rollout templates

- Add approval workflows

- Expand canary deployment across model teams

- Automate rollback and promotion rules

- Build governance reports for production releases
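The rollback conditions and promotion rules in the playbook can be sketched as a simple gate that compares canary metrics with the baseline. The metric names, thresholds, and minimum sample size below are assumptions chosen for illustration; real values should come from the baseline metrics defined in the first 30 days.

```python
from dataclasses import dataclass


@dataclass
class Metrics:
    requests: int
    error_rate: float      # fraction of failed requests, 0..1
    p95_latency_ms: float


def rollout_decision(
    baseline: Metrics,
    canary: Metrics,
    min_requests: int = 500,
    max_error_increase: float = 0.01,
    max_latency_ratio: float = 1.2,
) -> str:
    """Return 'hold', 'rollback', or 'promote' for the current canary step."""
    if canary.requests < min_requests:
        return "hold"  # not enough traffic yet to judge the canary
    if canary.error_rate > baseline.error_rate + max_error_increase:
        return "rollback"
    if canary.p95_latency_ms > baseline.p95_latency_ms * max_latency_ratio:
        return "rollback"
    return "promote"


baseline = Metrics(requests=10_000, error_rate=0.020, p95_latency_ms=120.0)
canary = Metrics(requests=1_000, error_rate=0.021, p95_latency_ms=130.0)
print(rollout_decision(baseline, canary))
```

Keeping the rule in one small function makes it easy to review in an approval workflow and to reuse across model teams.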

Common Mistakes to Avoid

- Deploying new models directly to full traffic

- Running A/B tests without statistical confidence

- Ignoring latency and cost during canary testing

- Not connecting monitoring to rollout decisions

- No rollback plan

- Mixing model changes with product changes without tracking

- Missing segment-level performance analysis

- Ignoring drift during rollout

- No model registry integration

- Weak approval workflows

- Not validating fairness and safety metrics

- Using feature flags without model observability

- Poor experiment documentation

- Vendor lock-in without portability planning

FAQs

1. What is model canary deployment?

Model canary deployment gradually exposes a new model version to a small percentage of traffic before full rollout.
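One common way to implement the percentage split is to hash a stable identifier into a bucket, so that the same user always sees the same version. This is a minimal sketch of that idea, not the routing logic of any tool listed above; the `salt` value and user ID format are assumptions.

```python
import hashlib


def assign_version(user_id: str, canary_percent: float, salt: str = "rollout-1") -> str:
    """Deterministically map a user to 'canary' or 'stable' based on a hashed bucket."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode()).hexdigest()
    bucket = int(digest[:8], 16) % 10_000  # bucket in 0..9999, i.e. 0.01% resolution
    return "canary" if bucket < canary_percent * 100 else "stable"


print(assign_version("user-42", 10))
```

Because each user's bucket is fixed for a given salt, raising the percentage only adds users to the canary group and never moves existing canary users back to the stable version.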

2. What is A/B testing for models?

A/B testing compares two or more model versions using live traffic and defined metrics such as accuracy, conversion, latency, or business impact.
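For a binary metric such as conversion, one standard way to check whether a difference between two variants is more than noise is a two-proportion z-test. The sketch below uses only the standard library; the counts are made-up example data, and other metrics such as latency need different tests.

```python
import math


def two_proportion_z_test(success_a: int, n_a: int, success_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in success rates between B and A."""
    p_a = success_a / n_a
    p_b = success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; rates are all 0 or all 1")
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail of the standard normal
    return z, p_value


# Hypothetical experiment: model A converts 200 of 5000 users, model B converts 260 of 5000.
z, p_value = two_proportion_z_test(200, 5000, 260, 5000)
print(f"z={z:.2f}, p={p_value:.4f}")
```

A small p-value suggests the gap is unlikely to be chance alone, but sample size and test duration should be fixed before the experiment starts rather than after looking at results.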

3. What is shadow deployment?

Shadow deployment sends production-like traffic to a new model without affecting users, allowing teams to evaluate performance safely.
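The pattern can be sketched as a wrapper that always returns the current model's answer while recording what the new model would have said. The function and field names are hypothetical, and a production version would usually run the challenger asynchronously so it adds no latency to the user request.

```python
from typing import Any, Callable


def shadow_predict(
    champion: Callable[[Any], Any],
    challenger: Callable[[Any], Any],
    features: Any,
    log: list,
) -> Any:
    """Serve the champion's prediction; record the challenger's output for offline comparison."""
    response = champion(features)
    try:
        shadow = challenger(features)
        log.append({"features": features, "champion": response, "challenger": shadow})
    except Exception as exc:  # a failing challenger must never affect the user
        log.append({"features": features, "champion": response, "challenger_error": repr(exc)})
    return response


comparison_log: list = []
result = shadow_predict(lambda x: x * 2, lambda x: x * 3, 5, comparison_log)
print(result, comparison_log)
```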

4. Which tools support Kubernetes canary deployment?

KServe, Seldon Core, and Argo Rollouts are strong Kubernetes-native options.

5. Which cloud tools support managed model rollout?

Amazon SageMaker, Google Vertex AI, and Azure Machine Learning provide managed model deployment and rollout features.

6. Do feature flags help with model deployment?

Yes. Feature flags help control which users or segments receive a model version or AI-powered feature.
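A stripped-down version of the idea looks like the sketch below: a flag with a kill switch and a set of targeted segments decides which model version a user gets. This is a generic illustration, not the LaunchDarkly SDK; the class, field names, and segment labels are all assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class ModelFlag:
    enabled: bool = True                      # kill switch: False sends everyone to the default
    allowed_segments: set = field(default_factory=set)
    default_version: str = "v1"
    target_version: str = "v2"

    def version_for(self, user: dict) -> str:
        """Return the model version this user should be served."""
        if not self.enabled:
            return self.default_version
        if user.get("segment") in self.allowed_segments:
            return self.target_version
        return self.default_version


flag = ModelFlag(allowed_segments={"beta-testers"})
print(flag.version_for({"id": "u1", "segment": "beta-testers"}))
print(flag.version_for({"id": "u2", "segment": "general"}))
```

Setting `enabled` to `False` acts as the fast rollback path: every user returns to the default version without a redeploy.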

7. Can model canary deployment reduce risk?

Yes. Canary rollout limits exposure and allows fast rollback if performance, safety, or latency issues appear.

8. What metrics should be monitored during canary rollout?

Teams should monitor accuracy, drift, latency, error rates, cost, fairness, business metrics, and user-level outcomes.
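For the drift part of that list, one widely used measure is the Population Stability Index (PSI), which compares how a feature or score is distributed in baseline traffic versus canary traffic. The sketch below is a minimal standard-library version; the bin count and the small floor used for empty bins are assumptions, and thresholds for "significant" drift are rules of thumb that vary by team.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a new sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid a zero width when all baseline values are equal

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp values outside the baseline range
        eps = 1e-6  # floor so empty bins do not produce log(0)
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline_scores = [i / 100 for i in range(100)]
shifted_scores = [0.5 + i / 200 for i in range(100)]
print(psi(baseline_scores, baseline_scores), psi(baseline_scores, shifted_scores))
```

Identical samples give a PSI of zero, and the value grows as the canary's distribution moves away from the baseline.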

9. Is A/B deployment only for real-time models?

No. It is most common for real-time models but can also be used for batch workflows with controlled evaluation groups.

10. Do model registries replace deployment tools?

No. Model registries manage versions and approvals, while deployment tools handle traffic routing and serving.

11. What is champion-challenger deployment?

Champion-challenger compares a current production model against one or more candidate models before promotion.

12. How should teams choose a canary deployment tool?

Choose based on infrastructure, cloud ecosystem, traffic routing needs, governance requirements, and monitoring maturity.

Conclusion

Model Canary & A/B Deployment Tools help teams release AI models safely, compare real-world performance, and reduce production risk. Kubernetes-native platforms like KServe, Seldon Core, and Argo Rollouts offer strong flexibility for platform teams, while managed services such as SageMaker, Vertex AI, and Azure ML simplify rollout workflows for cloud-native enterprises. Feature flag platforms like LaunchDarkly provide user-level exposure control, while MLflow Registry and Arize AI help with governance and validation. The right tool depends on your infrastructure, experiment maturity, model risk level, and monitoring depth. Start with one high-value model, define rollout metrics, test canary rules, validate rollback workflows, and then standardize deployment practices across your AI teams.
